AI titans Microsoft and Nvidia reportedly had a standoff over Microsoft's use of B200 AI GPUs in its own server rooms

Microsoft wanted to use its own custom server racks that can also support GPUs from other vendors.

Nvidia is well known for its high-performance gaming GPUs and industry-leading AI GPUs. However, the trillion-dollar GPU manufacturer is also known for controlling how its GPUs are used beyond company walls. For example, it can be quite restrictive with its AIB partners' graphics card designs. Perhaps not surprisingly, this level of control also appears to extend beyond card partners over to AI customers, including Microsoft. The Information reports that there was a standoff between Microsoft and Nvidia over how Nvidia's new Blackwell B200 GPUs were to be installed in Microsoft's server rooms.

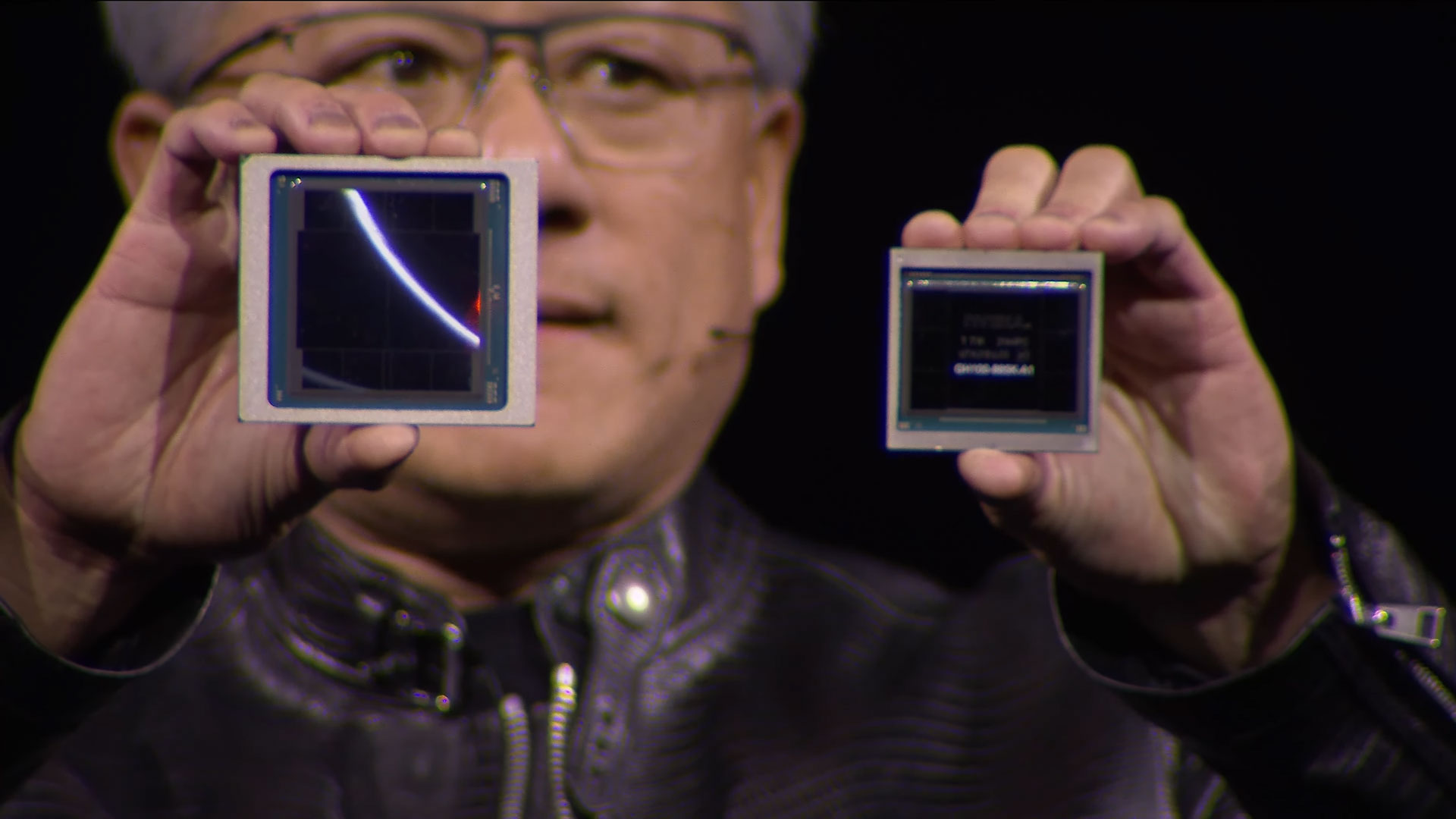

Nvidia has been aggressively pursuing ever larger pieces of the data center pie, which is immediately clear if you look at how it announced the Blackwell B200 parts. Multiple times during the presentation, Jensen Huang indicated that he doesn't think about individual GPUs any more — he thinks of the entire NVL72 rack as a GPU. It's a rather transparent effort to gain additional revenue from its AI offerings, and that extends to influencing how customers install their new B200 GPUs.

Previously, the customer was responsible for buying and building appropriate server racks to house the hardware. Now, Nvidia is pushing customers to buy individual racks and even entire SuperPods — all coming direct from Nvidia. Nvidia claims this will boost GPU performance, and there's merit to such talk considering all the interlinks between the various GPUs, servers, racks, and even SuperPods. But there's also a lot of dollar bills changing hands when you're building data centers at scale.

Nvidia's smaller customers might be ok with the company's offerings, but Microsoft wasn't. VP of Nvidia Andrew Bell reportedly asked Microsoft to buy a server rack design specifically for its new B200 GPUs that boasted a form factor a few inches different from Microsoft's existing server racks that are actively used in its data centers.

Microsoft pushed back on Nvidia's recommendation, revealing that the new server racks would prevent Microsoft from easily switching between Nvidia's AI GPUs and competing offerings such as AMD's MI300X GPUs. Nvidia eventually backed down and allowed Microsoft to use its own custom server racks for its B200 AI GPUs, but it's probably not the last such disagreement we'll see between the two megacorps.

To be clear, Nvidia supports both OCP's Open Rack (21-inch) and standard 19-inch EIA racks for MGX. Microsoft appears to use OCP racks, which are newer and should be better for density. Microsoft likely has a lot of existing infrastructure that continues to work well, and upgrading all of that would be quite expensive.

A dispute like this this is a sign of how large and valuable Nvidia has become over the span of just a year. Nvidia became the most valuable company earlier this week (briefly), and that title will likely change hands many times in the coming months and years. Server racks aren't the only area Nvidia wants to control, as the tech giant also controls how much GPU inventory gets allocated to each customer to maintain demand, and it's using its dominant position in the AI space to push its own software and networking systems to maintain its position as a market leader.

Nvidia is benefiting massively from the AI boom, which started when ChatGPT exploded in popularity one and a half years ago. Over that same timespan, Nvidia's AI-focused GPUs have become the GPU manufacturer's most in-demand and highest income generating products, leading to incredible financial success. Stock prices for Nvidia have soared and are currently over eight times higher than they were at the beginning of 2023, and over 2.5 times higher than at the start of 2024.

Nvidia continues to use all of this extra income to great effect. Its latest AI GPU, the Blackwell B200, will be the fastest graphics processing unit in the world for AI workloads. A "single" GB200 Superchip delivers up to a whopping 20 petaflops of compute performance (for sparse FP8) and is theoretically five times faster than its H200 predecessor. Of course GB200 is actually two B200 GPUs plus a Grace CPU all on a large daughterboard, and the B200 uses two large dies linked together for good measure. The 'regular' B200 GPU on the other hand only offers up to 9 petaflops FP4 (with sparsity), but even so that's a lot of number crunching prowess. It also delivers up to 2.25 petaflops of dense compute for FP16/BF16, which is what's used in AI training workloads.

As of June 20th, 2024, Nvidia stock has dropped slightly from its $140.76 high and closed at $126.57. We'll have to see what happens once Blackwell B200 begins shipping en masse. In the meantime, Nvidia continues to go all-in on AI.

Get Tom's Hardware's best news and in-depth reviews, straight to your inbox.

Aaron Klotz is a contributing writer for Tom’s Hardware, covering news related to computer hardware such as CPUs, and graphics cards.

-

Reply

" .....had a standoff over use of Microsoft's B200 AI GPUs"

Didn't know MS also made or had their own AI GPUs. :rolleyes:

Its latest AI GPU, the Blackwell B200, will be the fastest graphics processing unit in the world for AI workloads A "single" GPU delivers up to a whopping 20 petaflops of compute performance (for FP8) and is four times faster than its H200 predecessor.

Not correct, that is 20 petaflops of FP4 horsepower. So 20 petaflops of FP4 is for a single B200.

But if you want to just stick with FP8 format, then the B200 offers only 2.5x more theoretical FP8 compute than H100 (with sparsity, of course), and that too comes from having two chips in total.

So the FP8 throughput is half the FP4 throughput at 10 petaflops, and the FP16/BF16 throughput is again half the FP8 value at 5 petaflops.

Of course it's actually two large chips for such a GPU,

Then that would be GB200, not B200 as you mentioned in the article. Because the 20 petaflops claim of FP4 is for a single B200. Half of a GB200 superchip.

Of course, the Blackwell B200 is also not a single GPU in the traditional sense, but the petaflops figure match that of the GB200 superchip instead. -

JarredWaltonGPU Reply

Just an error in word order. We were workshopping the title and accidentally put two words in the wrong spot. Instead of "over use of Microsoft's B200 AI GPUs in its own server rooms" it's supposed to be "over Microsoft's use of B200 AI GPUs in its own server rooms" — I've fixed it now.Metal Messiah. said:Didn't know MS also made or had their own AI GPUs. :rolleyes:

Yeah, it depends on which "GPU" we're talking about. It's up to 40 petaflops of FP4 with sparsity, and up to 20 petaflops FP8 with sparsity... for GB200. But that's two B200 at higher clocks; a 'regular' B200 is only 18 petaflops FP4 and 9 petaflops FP8. I looked at the wrong column when editing, apparently. :\Metal Messiah. said:Not correct, that is 20 petaflops of FP4 horsepower. So 20 petaflops of FP4 is for a single B200.

Again, technically that's half of a GB200 that's 10 petaflops sparse FP8, and if you want a 'normal' B200 then it's down to 2.25X. But Nvidia likes to use GB200 for comparisons because it shows a bigger delta, naturally. I've corrected that paragraph to make sure we clearly indicate what's being discussed.Metal Messiah. said:But if you want to just stick with FP8 format, then the B200 offers only 2.5x more theoretical FP8 compute than H100 (with sparsity, of course), and that too comes from having two chips in total. -

ReplyJarredWaltonGPU said:Just an error in word order. We were workshopping the title and accidentally put two words in the wrong spot. Instead of "over use of Microsoft's B200 AI GPUs in its own server rooms" it's supposed to be "over Microsoft's use of B200 AI GPUs in its own server rooms" — I've fixed it now.

Yeah, I know that. I was just being sarcastic ! Thanks for the correction, btw ! :)

JarredWaltonGPU said:Again, technically that's half of a GB200 that's 10 petaflops sparse FP8, and if you want a 'normal' B200 then it's down to 2.25X.

I suppose that should be dense for GB200, no ? 10 petaflops FP8. Sparse FP8 value should be 20 petaflops, imo. Or are you counting half of these values here, to compare it with the B200 ?

Anyway, let me check the specs again though. Will edit this post accordingly ! -

JarredWaltonGPU Reply

https://www.tomshardware.com/pc-components/gpus/nvidias-next-gen-ai-gpu-revealed-blackwell-b200-gpu-delivers-up-to-20-petaflops-of-compute-and-massive-improvements-over-hopper-h100Metal Messiah. said:I suppose that should be dense for GB200, no ? 10 petaflops FP8. Sparse FP8 value should be 20 petaflops, imo. Or are you counting half of these values here, to compare it with the B200 ?

Anyway, let me check the specs again though. Will edit this post accordingly !

Though if any of those specs in the table are wrong, do let me know! -

d0x360 Microsoft angered that they funded a significant part of nVidia'a meteoric rise in valuation wants revenge for nVidia daring to temporarily pass them... They spent over a decade chasing God damn apple and they won't stand for this again!Reply

In other news..

Brought to you from the future! The AI bubble has burst and Microsoft has acquired nVidia who ran out of money due to all the expensed hyper cars (we counted 184 Bugatti Veyron Super Sports parked at nVidia by 6am and the office doesn't even open until 9!

A ridiculous number of black leather jackets was also discovered buried until various offices, why does every employee need 400 jackets!? An odd obsession with using the same GPU control panel for 24 years was also unearthed but wait this is the future so we meant 35 years.

Our last nVidia news item is a doozy.. Oddly one day every engineer at nVidia went to the Bahamas and forgot to come back which allowed AMD to finally take the GPU performance crown.

In other news Intel GPU's still suck and their CPU division is hurting because they pretended arm didn't exist until 2032. Their fab gets little business as everyone (including Intel) says screw it and uses tsmc. Despite warnings from basically everyone that splitting fab off to operate like a separate entity wouldn't work Pat Gelsinger decided to do it anyways... He may be a Chinese spy working in the cpu design arm of Huawei but eee.MarsTechnica.spaceX was unable to verify that so pretend we didn't say it.

Future news brought to you by your friends at..2pYLpY5

Pudding CO! ®1964

Serving the best future news since jello.

and...

Lack of Sleep LLC! -

TechyIT223 We can't read the original link posted in the article. The source link "The information" requires a subscription to read the full news.Reply -

vanadiel007 Sounds to me like unfair competition practices, trying to exclude other companies by designing the equipment in such a way it can only be used with the equipment of 1 specific vendor.Reply

Could be a sign of things to come, if let's say Nvidia decides all it's video cards need a custom connection to the motherboard PCB that is "needed" to ensure the video cards "AI" operates properly. -

Reply

Hey, the specs table seems correct to me. No issues there. But I think the 10 petaflops FP8 value which you mentioned above should be for 'dense'. But never mind, this gets so confusing sometimes, that I just mug up these values for future reference.JarredWaltonGPU said:https://www.tomshardware.com/pc-components/gpus/nvidias-next-gen-ai-gpu-revealed-blackwell-b200-gpu-delivers-up-to-20-petaflops-of-compute-and-massive-improvements-over-hopper-h100

Though if any of those specs in the table are wrong, do let me know!

:D -

TechyIT223 Replyvanadiel007 said:Sounds to me like unfair competition practices, trying to exclude other companies by designing the equipment in such a way it can only be used with the equipment of 1 specific vendor.

Could be a sign of things to come, if let's say Nvidia decides all it's video cards need a custom connection to the motherboard PCB that is "needed" to ensure the video cards "AI" operates properly.

If nvidia tries to monopolize here then most probably they will lose the market share, clientele, and goodwill as well.