Nvidia sold nearly half a million A100 and H100 AI GPUs in Q3 thanks to Meta, Microsoft; lead times stretch up to 52 weeks: Report

Join the queue for Nvidia's top AI/HPC GPU.

Having earned $14.5 billion on datacenter hardware in the third quarter of fiscal 2024, Nvidia clearly sold a boatload of its H100 GPUs for artificial intelligence (AI) and high-performance computing (HPC). Omdia says that Nvidia sold nearly half a million A100 and H100 GPUs, and demand for these products is so high that lead times for H100-based servers range from 36 to 52 weeks.

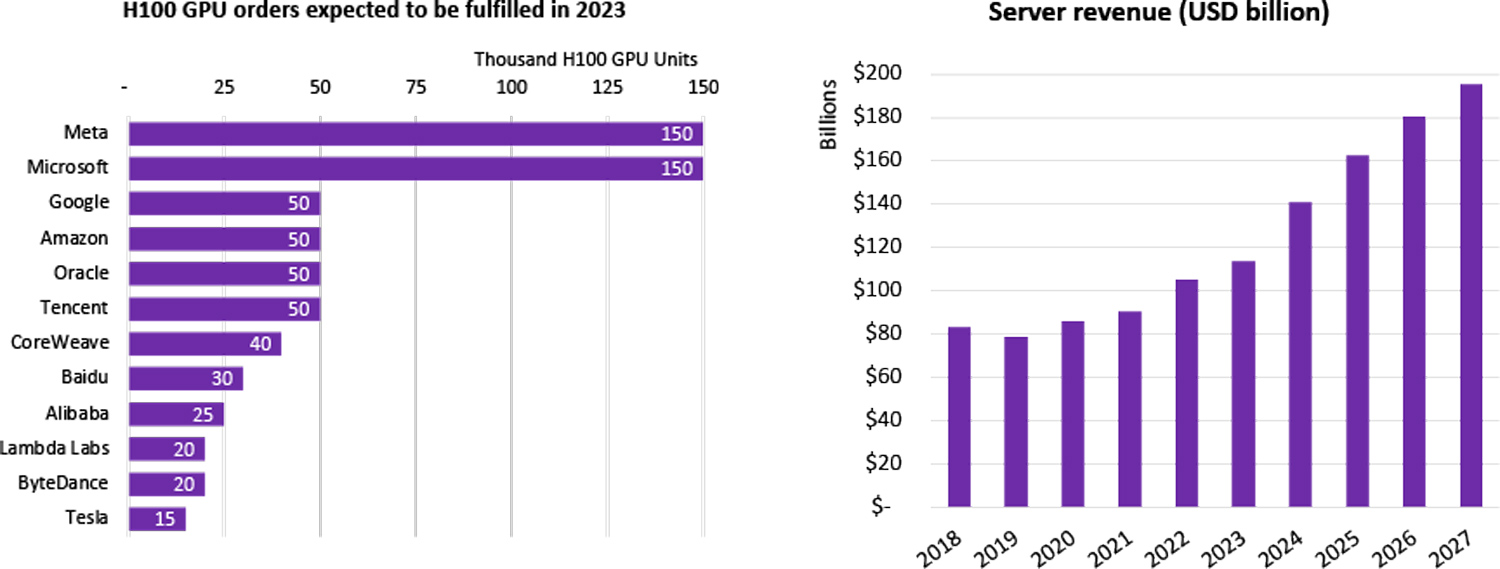

Omdia, a market tracking company, believes that Meta and Microsoft are the largest purchasers of Nvidia's H100 GPUs. Each procured as many as 150,000 H100 GPUs, considerably more than Google, Amazon, Oracle, and Tencent (50,000 each). The majority of server GPUs go to hyperscale cloud service providers, and server OEMs (Dell, Lenovo, HPE) still cannot get enough AI and HPC GPUs to fulfill their server orders, Omdia claims.

The analyst firm believes that sales of Nvidia's H100 and A100 compute GPUs will exceed half a million units in Q4 2023. Demand for the H100 and A100 is so strong that lead times for GPU servers run up to 52 weeks. At the same time, Omdia says that server shipments for 2023 are trending 17% to 20% lower year-over-year, whereas server revenue for 2023 is trending 6% to 8% higher year-over-year.
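Taken together, falling shipments and rising revenue imply that the average revenue per server shipped went up. The short Python sketch below works through that arithmetic using only the ranges quoted above. The function name is hypothetical. Pairing the range endpoints to get a low and a high bound is an assumption made for illustration, not a figure Omdia reported.

```python
def implied_revenue_per_server_change(shipment_change, revenue_change):
    """Implied year-over-year change in average revenue per server.

    Both arguments are fractional changes, e.g. -0.17 for -17%.
    """
    return (1 + revenue_change) / (1 + shipment_change) - 1


# Omdia's 2023 trend ranges as quoted in the article.
shipment_range = (-0.20, -0.17)  # server shipments, year-over-year
revenue_range = (0.06, 0.08)     # server revenue, year-over-year

# Assumption: the smallest implied increase pairs the mildest shipment
# decline with the weakest revenue growth, and the largest pairs the extremes.
low = implied_revenue_per_server_change(shipment_range[1], revenue_range[0])
high = implied_revenue_per_server_change(shipment_range[0], revenue_range[1])

print(f"Implied change in revenue per server: {low:+.1%} to {high:+.1%}")
```

Under these assumptions, the implied increase in revenue per server is roughly 28% to 35%, which is consistent with buyers shifting spending toward fewer but more expensive GPU-equipped machines.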

Virtually all companies that purchase Nvidia's H100 GPUs in large quantities are also developing custom silicon for AI, HPC, and video workloads. As a result, their purchases of Nvidia hardware will likely decline over time as they transition to their own chips.

Looking ahead to 2027, the server market's value is estimated at a staggering $195.6 billion. This growth trajectory is fueled by the shift towards servers tailored for specific applications and featuring a diverse array of co-processors. Examples include Amazon's servers dedicated to AI inference, which boast 16 Inferentia 2 co-processors, and Google's video transcoding servers, outfitted with 20 custom VCUs. Meta has followed suit with servers equipped with 12 custom processors for video processing.

This shift towards custom, application-optimized server configurations is set to become the norm as companies realize the cost-efficiency of building specialized processors. Media and AI are the current front runners, with other sectors such as database management and web services expected to join the movement.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.