Intel Demos Discrete Arc GPUs, Still Coming in Q1

The GPU space gets another competitor, sort of

We've been hearing about Intel's Arc Alchemist in various forms since Raja Koduri left AMD's Radeon group for Intel all the way back in November 2017. It's no secret that he's been working to build a new graphics architecture and team in the ensuing years. It's also proof of how difficult it can be to break into the graphics industry. But now, after four years of work, Intel Arc is taxiing down the runway and preparing for takeoff. Will AMD and Nvidia finally have some serious competition in the market for the best graphics cards? We're about to find out. Maybe.

The difficult thing about building a GPU isn't just the hardware. Drivers matter. On paper, GPUs just do a whole lot of math calculations and texturing, but we've seen numerous theoretically capable designs never get far. Intel's DG1 was clearly more of a proof of concept than a product that would actually compete with AMD or Nvidia, but it did pave the way with some needed driver updates — several of the issues we noted with our DG1 testing have since been fixed.

Still, the hardware definitely plays a role, and we have little idea of what to expect from Intel Arc. Architecture matters, and we'll need to actually taste the proverbial pudding before we can render judgement. Look at AMD and Nvidia as an example: RX 6900 XT 'only' delivers 23 TFLOPS of compute with 512GBps of bandwidth, while the RTX 3090 pushes 35.6 TFLOPS and 936GBps of bandwidth. You'd think Nvidia would run away with the performance crown, but across our GPU benchmarks test suite, the RTX 3090 only leads the RX 6900 XT by 3%. That's largely thanks to AMD's Infinity Cache, but other architectural design decisions also come into play.
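
To put numbers on that gap between paper specs and measured results, here is a minimal sketch that uses only the figures quoted above. The `percent_lead` helper is a hypothetical name for illustration, and the 3% figure is simply the benchmark result cited in this article.

```python
def percent_lead(a: float, b: float) -> float:
    """Return how far a leads b, as a percentage of b."""
    return (a / b - 1.0) * 100.0


# Figures quoted in the article: RTX 3090 vs. RX 6900 XT.
compute_lead = percent_lead(35.6, 23.0)      # theoretical TFLOPS
bandwidth_lead = percent_lead(936.0, 512.0)  # memory bandwidth in GBps
measured_lead = 3.0                          # lead in the benchmark suite, per the article

print(f"Compute lead on paper:   {compute_lead:.1f}%")
print(f"Bandwidth lead on paper: {bandwidth_lead:.1f}%")
print(f"Measured lead:           {measured_lead:.1f}%")
```

On paper the RTX 3090 has roughly 55% more compute and 83% more bandwidth, yet the measured lead is a small fraction of either number.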

All we really know about Intel Arc Alchemist consists of potential theoretical specs. We've seen leaks suggesting Arc could run at up to 2.45GHz, but even with 512 Vector Engines (the new nomenclature for Intel's Execution Units of previous GPUs), that's only a theoretical maximum of 20.1 TFLOPS. Leaks of the lower spec A380 with 128 Vector Engines would check in at 5 TFLOPS. That could be competitive with AMD's RX 6500 XT and Nvidia's RTX 3050, or it might fall well short.
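
The theoretical figures above can be reproduced with a short calculation. This is a sketch under stated assumptions: it assumes each Vector Engine has 8 FP32 lanes and that each lane completes one fused multiply-add (counted as 2 floating-point operations) per clock. Neither number appears in the leaks themselves, and the A380 is assumed to hit the same leaked 2.45GHz clock. The function name is hypothetical.

```python
def theoretical_tflops(
    vector_engines: int,
    clock_ghz: float,
    lanes_per_engine: int = 8,          # assumption: 8 FP32 lanes per Vector Engine
    flops_per_lane_per_clock: int = 2,  # assumption: one FMA = 2 FLOPs per clock
) -> float:
    """Peak FP32 throughput in TFLOPS for a given engine count and clock."""
    gflops = vector_engines * lanes_per_engine * flops_per_lane_per_clock * clock_ghz
    return gflops / 1000.0


# Leaked configurations mentioned in the article, both at the leaked 2.45GHz clock.
for label, engines in (("512 Vector Engines", 512), ("128 Vector Engines (A380)", 128)):
    print(f"{label}: {theoretical_tflops(engines, 2.45):.1f} TFLOPS")
```

These are peak numbers only. As the RX 6900 XT and RTX 3090 comparison shows, real-world performance depends heavily on the rest of the architecture and the drivers.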

To help assuage our fears about lackluster performance, Intel today demonstrated… video encoding, spread across both the integrated and discrete Intel GPUs in an upcoming laptop. Sigh. Arc gaming performance discussions consisted of talk about Death Stranding Director's Cut getting support for Intel's XeSS, an alternative to Nvidia's DLSS, and not much else. I sure hope Intel is sandbagging, because right now the lack of real-world gaming demonstrations of Arc has me worried.

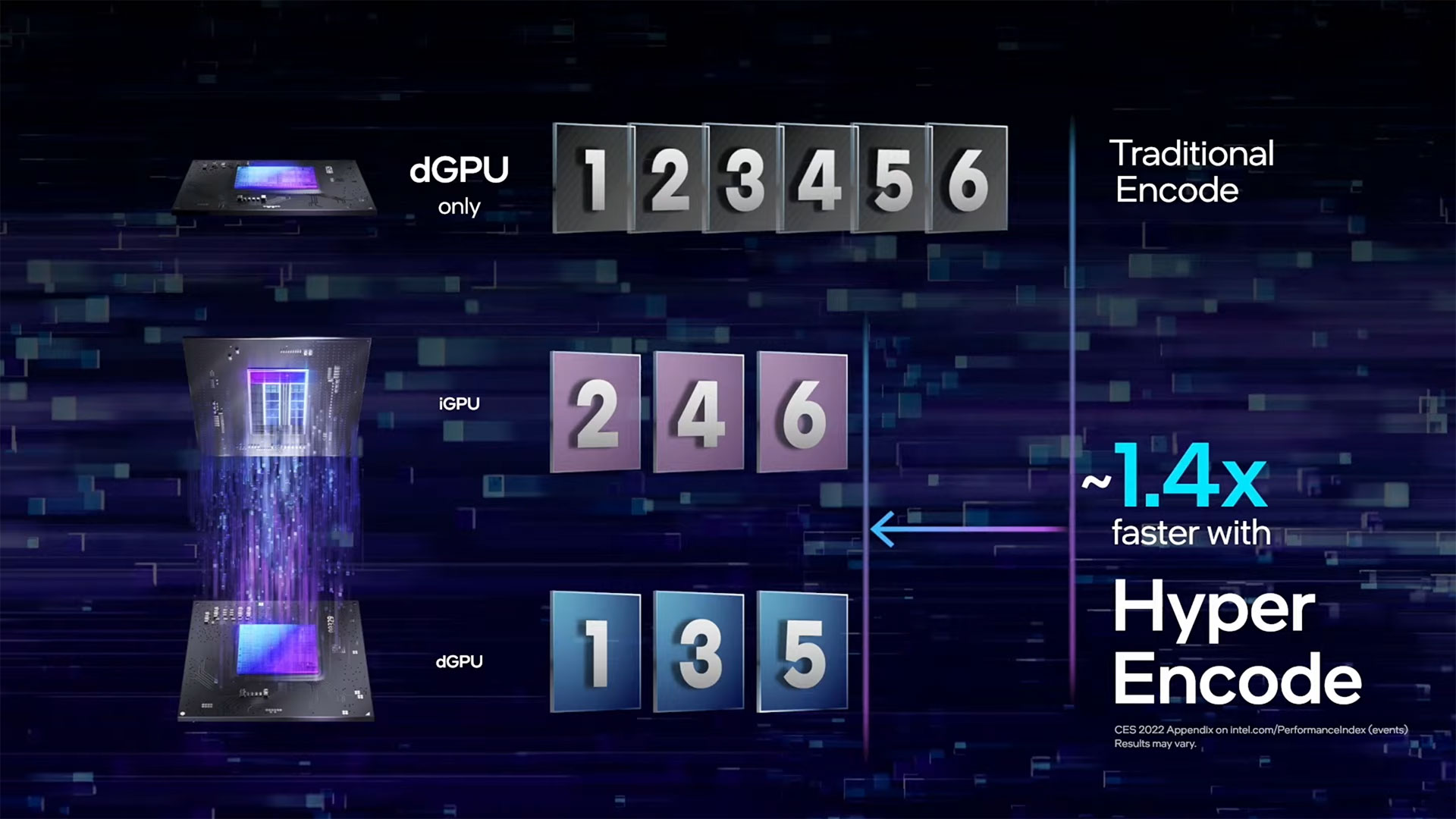

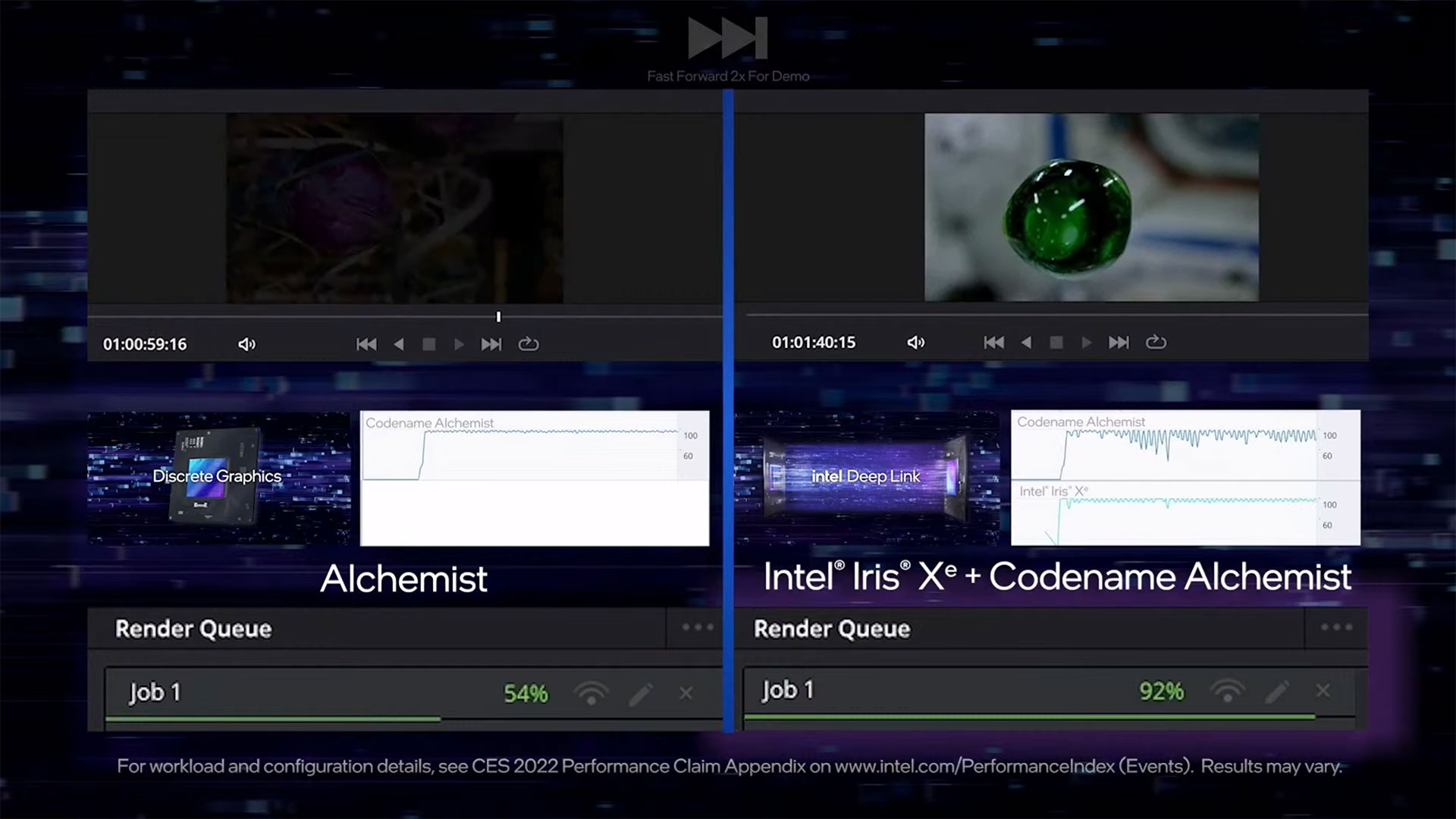

It makes plenty of sense for Intel to go after the mobile market first. Intel sells more laptop chips than desktop chips, and there's more of a chance to differentiate through power and platform optimizations. However, the Intel Deep Link and Hyper Encode demonstration doesn't feel like something most people are worried about. Intel showed a DaVinci Resolve video encode running 40% faster using Hyper Encode compared to just using the discrete GPU for encoding, which is great, but that 40% boost won't extend to traditional gaming.

AMD and Nvidia have basically abandoned CrossFire and SLI multi-GPU rendering. It was too much work and effort for inconsistent rewards. With Intel's integrated Xe Graphics solutions using a different architecture than the Arc dedicated GPUs, we can't see general purpose multi-GPU rendering being likely. We might get XeSS running on the integrated graphics, or other tech like multi-GPU encoding, but we want something more.

Ultimately, the Arc information so far revealed at CES 2022 has been underwhelming. Intel still has up to three months before the stated retail launch, and perhaps we'll still end up impressed, but don't hold your breath. We need to see performance, power use, and compatibility with a large suite of games. Until then, as Intel's presenter put it, "Stay tuned, more excitement is ahead." Alternatively, as a friend put it, this is the ideal time for a newcomer to the GPU space. Intel could drop a turd in a box and it would still sell, since the current GPU prices are enough to make gamers weep.


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


gggplaya

Intel is smart to not reveal performance before launch. I remember when AMD did that with the RX480, then Nvidia answered back with the GTX1060, stealing all their thunder before launch. Then AMD did it with the Vega 56 and 64, so Nvidia answered back with a cut down version of the GTX 1080 and called it a GTX 1070 FTW or something like that.

It's best to launch and have at least several weeks of glory and excellent online reviews before Nvidia cuts down an existing product, to match the performance to price ratio of your launching product.

But then again, this ARC GPU could be total garbage, which is why they aren't releasing performance numbers. In which case, they shouldn't sell to laptops first, because they are in decent supply. Instead they should make desktop GPUs because they'll sell out even if they do underperform, due to the current situation with GPU availability.

jkflipflop98

This won't do much to help gamers in all honesty. It isn't going to get better until Intel starts cranking these out in their own fabs. TSMC can't come close to the volume that Intel can produce.

shady28

jkflipflop98 said: This won't do much to help gamers in all honesty. It isn't going to get better until Intel starts cranking these out in their own fabs. TSMC can't come close to the volume that Intel can produce.

Yeah, this has been my thinking for a while too. Nobody can pump volume like Intel it seems.

However, Intel has a big % of TSMC's allocation. Almost as much as AMD has, I believe it is like 7% Intel and 9.5% AMD. I just don't know exactly how much of Intel's allocation is for ARC, and how much is going into their HPC parts for the Aurora supercomputer. However, at this point I would think fabbing chips for Aurora would be winding down.

renz496

gggplaya said: Then AMD did it with the Vega 56 and 64, so Nvidia Answered back with a cut down version of the GTX 1080 and called it a GTX 1070 FTW or something like that.

Vega 56 is so very close to Vega 64. the gap between 1070 and 1080 is something like 20%. nvidia did that so even an OCed 1070 cannot reach 1080 performance. 1070Ti was a response to Vega 56. but back then Vega was touted as nvidia killer. this makes nvidia drop GTX1080 price and then introduce 1080Ti in march 2017. in the end AMD only releasing Vega in june with the top Vega only matching GTX1080 performance.

jkflipflop98 said:TSMC can't come close to the volume that Intel can produce.

i don't think this is the case. volume wise TSMC most likely bigger. the thing is intel have the fab for themselves only while TSMC have to serve over 450+ client. also intel is one of TSMC top ten customer.

JarredWaltonGPU

shady28 said: Yeah, this has been my thinking for a while too. Nobody can pump volume like Intel it seems. However, Intel has a big % of TSMCs allocation. Almost as much as AMD has, I believe it is like 7% Intel and 9.5% AMD. I just don't know exactly how much of Intel's allocation is for ARC, and how much is going into their HPC parts for the Aurora supercomputer. However, at this point I would think fabbing chips for Aurora would be winding down.

If AMD is really only 9.5% of TSMC's production, nearly all of that would be going into the consoles. Those are relatively big chips and are selling in the millions. AMD CPUs would take second priority, and the GPUs are a distant third. TSMC is also ramping up capacity, not sure how much things have improved (can improve) without actual new fabs, though. Anyway, Intel's TSMC use would likely be nearly pure GPU production, though future CPUs might also be there as well.

shady28

JarredWaltonGPU said: If AMD is really only 9.5% of TSMC's production, nearly all of that would be going into the consoles. Those are relatively big chips and are selling in the millions. AMD CPUs would take second priority, and the GPUs are a distant third. TSMC is also ramping up capacity, not sure how much things have improved (can improve) without actual new fabs, though. Anyway, Intel's TSMC use would likely be nearly pure GPU production, though future CPUs might also be there as well.

Well, have to keep in mind there is AMD allocation / revenue (9.5%) which is across all TSMC nodes, and then there is allocation by node.

AMD reportedly is putting 80% of its 7nm allocation into consoles > Ref Link

This only leaves 20% for current gen Zen 3. So, AMD appears to me to be ramping down Zen 3, probably in anticipation of ramping up Zen 4.

This follows the same pattern they had in late 2020 :

1 Million Ryzen 5000 CPUs were Sold in Q4 2020: That’s Just 10-12% of AMD’s 7nm Capacity at TSMC

This is why I expect Zen 3D to be more of a process node technology demonstration & probably to iron out any mass production issues with 3D stacking. This isn't new tech but using it in relatively high power parts hasn't been done before AFAIK, so they have to be careful they don't run into a bunch of quality issues. Working through that with a limited Zen 3D run before going all out on Zen 4 is probably a smart move.

However there's another piece in the allocation puzzle, which is the 5nm Zen 4. Based on the leak about long term 5nm allocation and allowing that TSMC fell behind this schedule a bit, I would bet AMDs 5nm allocation for 2022 is going to be mostly Zen 4. <- Ref Link

If we factor in revenue at TSMC by customer, Intel was 7.2% of TSMC's revenue and AMD was 9.2%. This isn't directly related to 'fab allocation' but it should be in the ballpark (there are multiple sources for this including Statista and theinformationnet and a number of financial analysts).

However, Intel's allocation and TSMC revenue is actually split between GPUs and ASICs on 5nm, and a lot of those 'GPUs' may be for HPC, not consumers.

AMDs is of course as you said, split up across multiple nodes and products including Zen 3, Zen 3D, console 7nm SoCs, GPUs, and server CPUs.

My general take has been that, given limited allocations, AMD has focused on server / console, desktop / laptop CPUs 2nd, and GPUs last.

jkflipflop98

renz496 said: i don't think this is the case. volume wise TSMC most likely bigger. the thing is intel have the fab for themselves only while TSMC have to serve over 450+ client. also intel is one of TSMC top ten customer.

TSMC only has four 12-inch fabs.

Intel's D1X facility can outproduce all of TSMC's company by itself.

saltweaver

No information about Intel ARC supporting Octane renderer or Cycles. This could interest some customers.

TerryLaze

jkflipflop98 said: This won't do much to help gamers in all honesty. It isn't going to get better until Intel starts cranking these out in their own fabs. TSMC can't come close to the volume that Intel can produce.

Yeah, the thing with that is that intel has released like 22 skus right now just for mainstream CPUs, it's not like they can reduce CPU manufacturing to start making GPUs, I mean they could but it would hurt them hugely not being able to supply OEMs with CPUs.

I don't know if they are but they would have to make new FABs just for GPUs.

jkflipflop98

TerryLaze said: Yeah, the thing with that is that intel has released like 22 skus right now just for mainstream CPUs, it's not like they can reduce CPU manufacturing to start making GPUs, I mean they could but it would hurt them hugely not being able to supply OEMs with CPUs. I don't know if they are but they would have to make new FABs just for GPUs.

Normally we convert a fab that's running an older process over to the new stuff. Like for 14nm we'll do Israel and Arizona. Then for 10nm we'll do Ireland and New Mexico. Then when 7nm hits Israel and Arizona will get upgraded again.

D1, of course, is always running the new hotness.