Nvidia Shares RTX 2080 Test Results: 35 - 125% Faster Than GTX 1080

Get Tom's Hardware's best news and in-depth reviews, straight to your inbox.


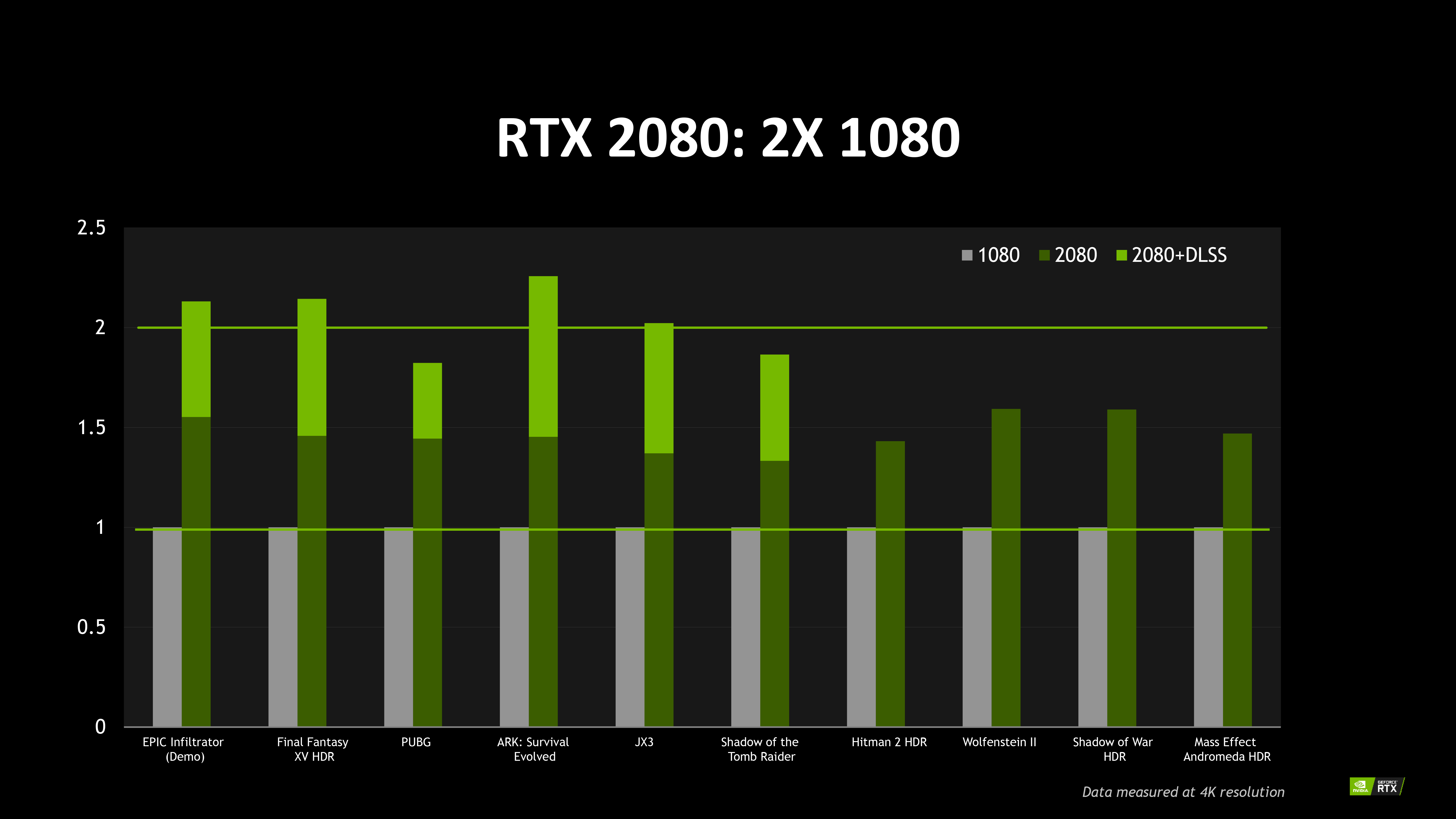

Two days after Nvidia CEO Jensen Huang introduced GeForce RTX 2080 Ti, 2080, and 2070 with a deafening emphasis on real-time ray tracing, the company fed Tom's Hardware early performance data showing GeForce RTX 2080 outperforming GeForce GTX 1080 by anywhere from ~35 percent to ~125 percent at 3840x2160, depending on the workload. Its comparison necessitates a bit of analysis, though.

Right out of the gate, we see that six of the 10 tested games include results with Deep Learning Super-Sampling enabled. DLSS is a technology under the RTX umbrella requiring developer support. It purportedly improves image quality through a neural network trained by 64 jittered samples of a very high-quality ground truth image. This capability is accelerated by the Turing architecture’s tensor cores and not yet available to the general public (although Tom’s Hardware had the opportunity to experience DLSS, and it was quite compelling in the Epic Infiltrator demo Nvidia had on display).

The only way for performance to increase using DLSS is if Nvidia’s baseline was established with some form of anti-aliasing applied at 3840x2160. By turning AA off and using DLSS instead, the company achieves similar image quality, but benefits greatly from hardware acceleration to improve performance. Thus, in those six games, Nvidia demonstrates one big boost over Pascal from undisclosed Turing architectural enhancements, and a second speed-up from turning AA off and DLSS on. Shadow of the Tomb Raider, for instance, appears to get a ~35 percent boost from Turing's tweaks, plus another ~50 percent after switching from AA to DLSS.
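The two-step gain described above can be read in two ways, and the article's wording does not say which one Nvidia's chart implies. Below is a minimal sketch of both readings, using only the approximate Shadow of the Tomb Raider figures quoted in the text. The function names are hypothetical helpers written for illustration, not anything from Nvidia's materials.

```python
def speedup_from_percent(gain_percent: float) -> float:
    """Convert a 'percent faster' figure into a multiplicative speed-up factor."""
    return 1.0 + gain_percent / 100.0


def percent_from_speedup(factor: float) -> float:
    """Convert a speed-up factor back into a 'percent faster' figure."""
    return (factor - 1.0) * 100.0


def combine_additive(first_percent: float, second_percent: float) -> float:
    """Reading 1 (assumption): both gains are measured against the same
    GTX 1080 baseline, so the percentage points simply add."""
    return first_percent + second_percent


def combine_compounded(first_percent: float, second_percent: float) -> float:
    """Reading 2 (assumption): the second gain is measured against the
    already-faster RTX 2080 result, so the speed-up factors multiply."""
    factor = speedup_from_percent(first_percent) * speedup_from_percent(second_percent)
    return percent_from_speedup(factor)


# Approximate figures quoted in the article for Shadow of the Tomb Raider.
turing_gain = 35.0  # ~35 percent from architectural changes
dlss_gain = 50.0    # ~50 percent more after switching from AA to DLSS

additive_total = combine_additive(turing_gain, dlss_gain)
compounded_total = combine_compounded(turing_gain, dlss_gain)

print(f"Additive reading:   ~{additive_total:.1f} percent over GTX 1080")
print(f"Compounded reading: ~{compounded_total:.1f} percent over GTX 1080")
```

Under the additive reading the total is about 85 percent over GeForce GTX 1080. Under the compounded reading it is about 102.5 percent (1.35 × 1.5 = 2.025). Both fall inside the ~35 to ~125 percent range Nvidia quotes.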

In the other four games, improvements to the Turing architecture are wholly responsible for gains ranging between ~40 percent and ~60 percent. Without question, those are hand-picked results. We’re not expecting to average 50%-higher frame rates across our benchmark suite. However, enthusiasts who previously speculated that Turing wouldn’t be much faster than Pascal due to its relatively lower CUDA core count weren’t taking underlying architecture into account. There’s more going on under the hood than the specification sheet suggests.

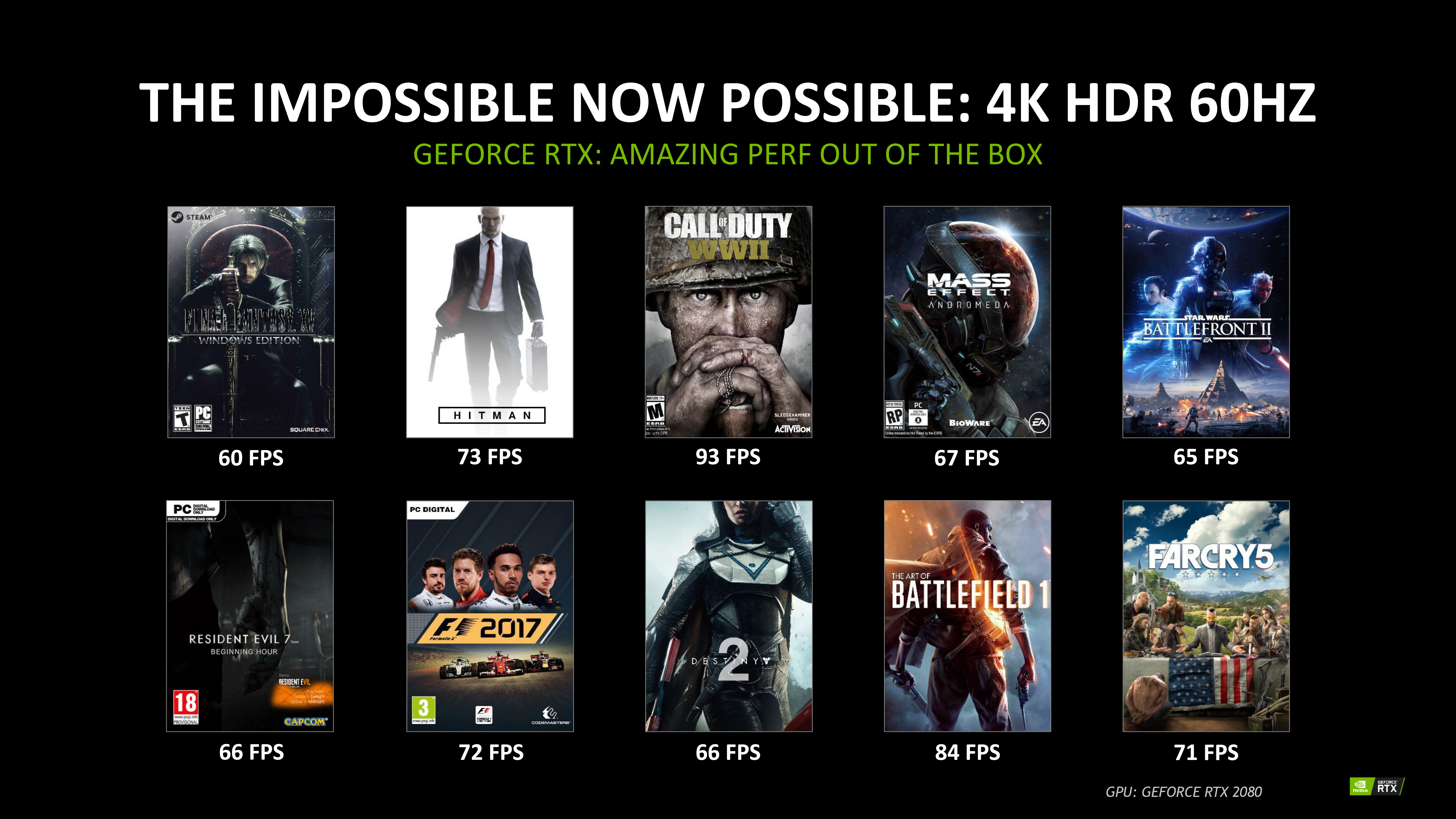

A second slide calls out explicit performance data in a number of games at 4K HDR, indicating that those titles will average more than 60 FPS under GeForce RTX 2080.

Nvidia doesn’t list the detail settings used for each game. However, we’ve already run a handful of these titles for our upcoming reviews, and can say that these numbers would represent a gain over even GeForce GTX 1080 Ti if the company used similar quality presets.

Of course, we’ll have to wait for final hardware, retail drivers, and our own controlled environment before drawing any concrete conclusions. But Nvidia’s own benchmarks at least suggest that Turing-based cards improve on their predecessors in a big way, even in existing rasterized games.



Patrick_1966 If I can't get 60 - 144fps in 4k then RTX is a total failure for me and not worth the outrageous price. Ray tracing really doesn't help me play my FPS games better. Most of the games I play don't have photo realistic imaging anyways. I am not doing engineering design of living room furniture where the final render would benefit from ray tracing to enhance coffee table reflections of the lamp sitting on it to make it look photo real. The new tomb raider benchmark shows 40fps as it is now. First thing I would do would be to turn off the ray tracing since it slows me down. For a majority of the gaming and mining market the RTX is a complete failure and should be ignored.

Patrick_1966 NVidia has decided that gamers have no value and has added nothing after spending two full years to the value of its graphics cards. There is no value here for gamers. Maybe in another two years after all the games have been re-written and are able to take advantage of the secret tweaks that are currently un-released it might improve. But that will make a total of four years without significant improvements over the 1080Ti. The pricing is simply ridiculous as well. Starting gamers simply do not have the money and can not justify thousands of dollars for a graphics card. This is a total failure on the part of NVidia and its partners.

TechyInAZ I think we need to see this whole RTX thing from Nvidia's perspective. What does the average/casual gamer want? Image quality. They don't care diddly about frame rate, all they want is that fancy cinematic experience. Unfortunately for all of us hardware enthusiasts/hardcore gamers, we are not exactly that large of a community vs the average consumer.

Nvidia is always going to target the largest consumer market first, hence Ray Tracing taking full priority.

I'm not saying I like this decision one bit, but Nvidia is a company and companies do what gives them the best $$$ for their work.

I wish Nvidia would have launched Volta AND Turing, have Turing be the premium GPU for those consumers who want to get into Ray Tracing fast, then have Volta be the standard card that specifically replaces Pascal.

Mousemonkey 21255517 said: How about we wait on independent reviews before we judge the 2000 series?

Dude, the haters are always gonna hate. It wouldn't matter if Nvidia released a card that could produce hard light holograms, turn lead into gold, cure cancer and bring about an end to world hunger and poverty.

MCMunroe First of all, I have to disclose that I am an Enthusiast PC Hardware guy that is into maximum visual quality and tend to turn up the graphics until the 45-60 FPS range. I own a water cooled GTX 1080 Ti and play all my games in 4K.

I also think that people who think they need over 100FPS to enjoy a game are morons. … if I read one more forum post of "Help I can't get 500FPS in CS:GO"...

Guys. Let us wait for the real reviews and benchmarks. Ray Tracing may be too slow to turn on right now. But all these effect upgrades start as too slow, like HairFX and even Anti-aliasing.

Ray Tracing is a holy grail of rendering as it is what all the Animated Movies ever made are using. It is good that they are pushing it, even if it will take a few generations of hardware to be broadly useable.

Giroro @Techyinaz

The "average/casual" gamer isn't out there spending $800 on a graphics card.

The "largest consumer market" is the 85% of gamers who spend less than $300, and Nvidia has not announced any plans whatsoever to bring ray tracing to that market segment. Even if they do, the performance hit on the lower end hardware will be so dire that most games will become totally unplayable, regardless.

Of course, I would argue that Nvidia doesn't care about ray tracing or AI in the high-end gaming segment either. They are just trying to figure out a sales pitch to convince gamers to pay a markup for the enterprise features left over in these factory-reject Quadro chips.

The GeForce RTX series has ray tracing because Disney/Pixar/Autodesk/Adobe (Nvidia even uses those logos in their marketing) are willing to pay 5 figures for a fully featured Turing card. The AI features are similarly there for Google, Amazon, and auto makers.

Gamers are just being served leftovers that are being re-purposed as a marketing gimmick. This isn't a big deal in itself, that's what they always do... but I don't think Nvidia actually has much motivation to ensure it catches on with developers, or even to fully flesh out drivers to ensure that the tech can be used effectively in games.

There's a good chance that Nvidia will drop ray tracing and DLSS when they start trying to get the die size down for low/mid range gaming. Of course that's assuming they don't just slap 2060/2050 labels on their overstock 1080/1070 chips like AMD would.

Geef **Think of it like this**: When Anti-Aliasing first came out how often were you using it to play games? Not much. We had to wait for new cards with more power to show up and allow it to be used without slowing things down.

It will be similar with Ray Tracing.

tran.bronstein While I am equally outraged at the high RTX prices as other enthusiasts, I think it was disingenuous to actually believe there would be no improvement over current GTX 1000 era cards. There will be some for sure. It's just a matter of whether we consider that improvement worth the price. I sense people are hoping for the drastic 56% increases in FPS the 1000 series brought over the 900 series, and NVIDIA to be fair has been saying all along that ray-tracing is what they implemented to accomplish just that. I agree with their pushing the technology forward if not the exorbitant price they want to charge for it.