Intel Silvermont Architecture: Does This Atom Change It All?


The Silvermont Architecture

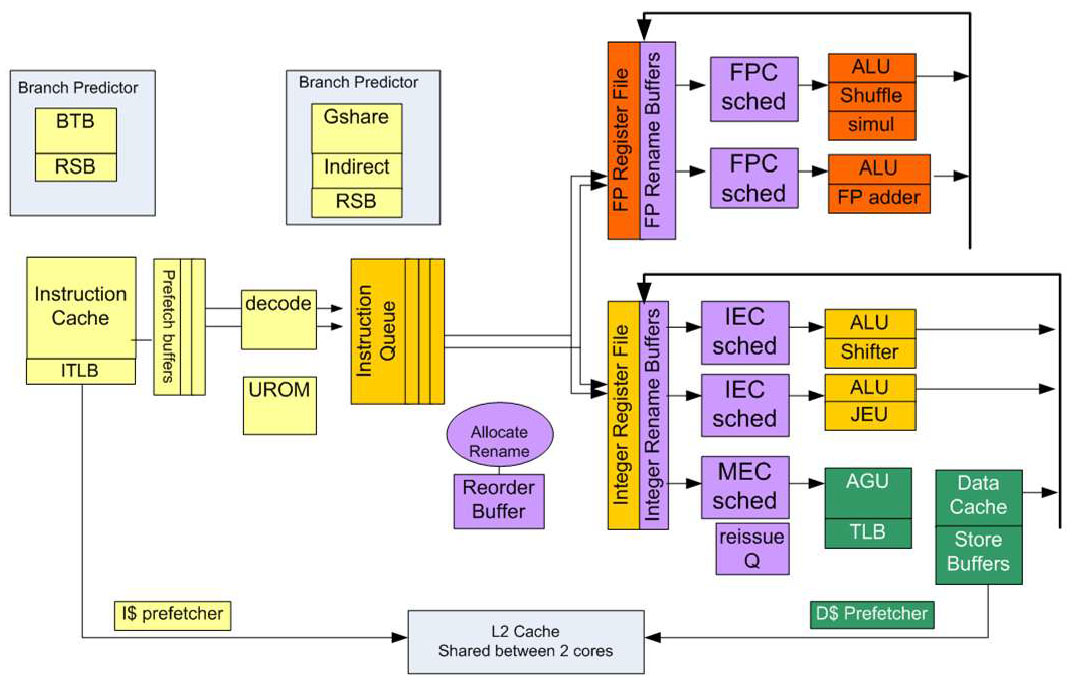

So again, we know that Silvermont is based on an out-of-order execution engine, which has huge ramifications for performance compared to Saltwell (remember, that design is already competitive with other SoCs available today). Intel continues to lean on macro-op execution for more efficient handling of certain x86 instruction combinations, though.

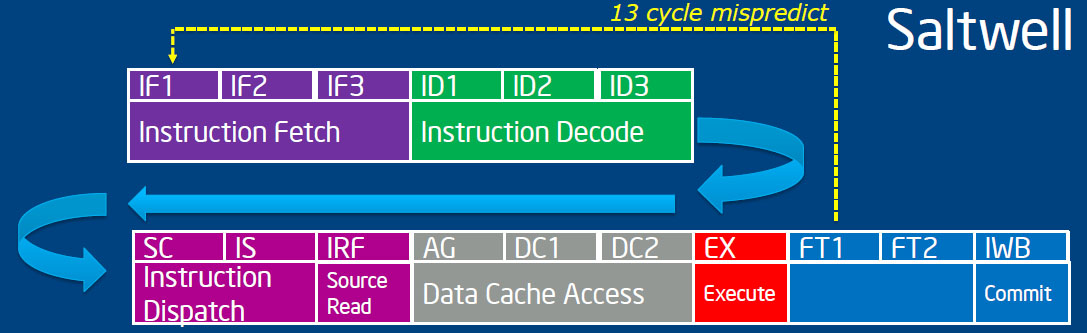

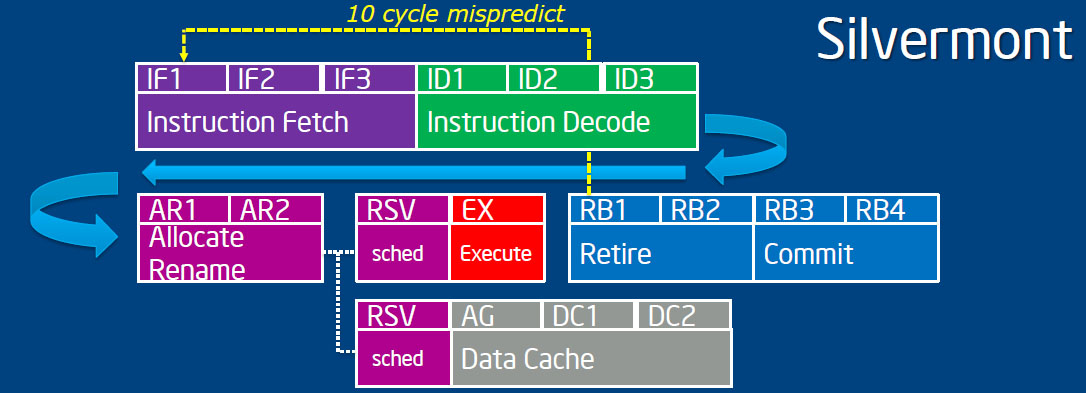

The 32 nm Saltwell execution pipeline is 16 stages long, and because it’s in-order, macro ops have to go through the whole thing, even if they don’t need the cache access stages. As a result, branch mispredicts waste 13 cycles. In Silvermont, the op can bypass the access stages and execute if cache isn’t needed. Mispredicts consequently only burn 10 cycles.
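To put those mispredict penalties in perspective, here is a rough back-of-the-envelope sketch. The 13- and 10-cycle penalties come from the text above; the branch frequency and mispredict rate are purely illustrative assumptions, not Intel figures, and the function name is made up for this example.

```python
def mispredict_cycles_per_kilo_instr(penalty_cycles, branch_fraction, mispredict_rate):
    """Cycles lost to branch mispredicts per 1,000 instructions executed."""
    mispredicts = 1000 * branch_fraction * mispredict_rate
    return mispredicts * penalty_cycles


# Illustrative assumptions only (not measured, not from Intel).
BRANCH_FRACTION = 0.20   # assume one in five instructions is a branch
MISPREDICT_RATE = 0.05   # assume 5% of branches are mispredicted

# Penalties quoted in the article: 13 cycles (Saltwell), 10 cycles (Silvermont).
saltwell_loss = mispredict_cycles_per_kilo_instr(13, BRANCH_FRACTION, MISPREDICT_RATE)
silvermont_loss = mispredict_cycles_per_kilo_instr(10, BRANCH_FRACTION, MISPREDICT_RATE)
saving = 1 - silvermont_loss / saltwell_loss

print(f"Saltwell:   {saltwell_loss:.0f} cycles lost per 1,000 instructions")
print(f"Silvermont: {silvermont_loss:.0f} cycles lost per 1,000 instructions")
print(f"Reduction:  {saving:.0%}")
```

Whatever rates you plug in, the relative saving works out to 3/13, or roughly 23%, because it depends only on the two penalties. This simple model also assumes both designs mispredict equally often, which the larger branch predictors in Silvermont would likely improve on.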

Each Silvermont core receives a number of tweaks and improvements, from larger branch predictors to reworked execution units and bigger caches. A lot of effort went into identifying instructions that were on the slower side in Intel’s Bonnell design. Silvermont improves many of them, reducing latency and increasing throughput. Floating-point add operations are down several cycles each, packed SIMD double results are achieved in four clocks (instead of nine), and signed multiplies are sped up significantly. All told, Intel claims that its per-core IPC is about 50% higher across a wide swath of workloads. Consider the jump from Sandy Bridge to Ivy Bridge, where we saw single-digit percentage IPC gains comparing two CPUs running at the same frequency. A 50% boost is outright massive.
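As a simplified illustration of why a 50% IPC gain matters, throughput can be modeled as instructions per clock multiplied by clock rate. The sketch below uses that first-order model; the 2.0 GHz baseline clock is a hypothetical value chosen for the arithmetic, and both function names are invented for this example.

```python
def relative_performance(ipc_ratio, clock_ratio=1.0):
    """Throughput relative to a baseline, modeled as IPC ratio times clock ratio."""
    return ipc_ratio * clock_ratio


def equivalent_clock_ghz(baseline_clock_ghz, ipc_ratio):
    """Clock the baseline core would need to match a core with the given IPC ratio."""
    return baseline_clock_ghz * ipc_ratio


# Intel's claimed ~50% per-core IPC improvement, at the same frequency.
same_clock_gain = relative_performance(1.5)

# Hypothetical 2.0 GHz baseline: the clock the older core would need to keep up.
needed_clock = equivalent_clock_ghz(2.0, 1.5)

print(f"Relative throughput at equal clocks: {same_clock_gain:.2f}x")
print(f"Older core would need about {needed_clock:.1f} GHz to match")
```

Real workloads are also bound by memory and I/O, so treat this as an upper-level intuition rather than a prediction.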

But of course, Atom typically shows up in multi-core configurations. When the processor family first launched, it was a single-core chip. Not long after, Intel introduced a dual-core model, also manufactured at 45 nm. When it came time to adopt 32 nm, only dual-core versions surfaced. And as the company advances its process technology, more parallelized configurations become viable. In fact, Silvermont can scale as high as eight physical cores.

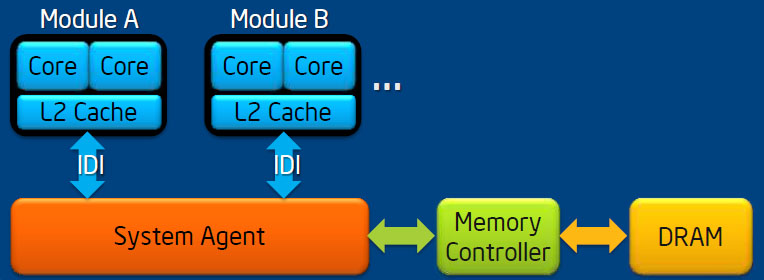

Now, the L2 cache is tightly coupled to the cores, yielding low latency and high bandwidth. Intel’s architects didn’t want to share that cache across more than two cores, though. So they went with a module-based approach. Each little building block includes a pair of cores and 1 MB of L2 cache shared between them (previous Atom processors had 512 KB of L2 per core). Individual cores, the L2 cache, and the interface between the cores and cache can all be power-gated. The cores in a module can even run at different frequencies, though they’ll operate symmetrically by default.
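The module arithmetic can be sketched in a few lines. The two-cores-per-module and 1 MB-per-module figures, along with the eight-core ceiling, come from the article; the `SocLayout` type, the function name, and the restriction to fully populated modules are assumptions made for this illustration.

```python
from dataclasses import dataclass

CORES_PER_MODULE = 2      # from the article: a pair of cores per module
L2_PER_MODULE_KB = 1024   # from the article: 1 MB of shared L2 per module
MAX_CORES = 8             # from the article: scales up to eight physical cores


@dataclass(frozen=True)
class SocLayout:
    cores: int
    modules: int
    total_l2_kb: int


def silvermont_layout(cores):
    """Modules and total L2 for a core count, assuming fully populated modules."""
    if cores < CORES_PER_MODULE or cores > MAX_CORES or cores % CORES_PER_MODULE:
        raise ValueError("this sketch only models 2, 4, 6, or 8 cores")
    modules = cores // CORES_PER_MODULE
    return SocLayout(cores, modules, modules * L2_PER_MODULE_KB)


for n in (2, 4, 8):
    layout = silvermont_layout(n)
    print(f"{layout.cores} cores -> {layout.modules} modules, {layout.total_l2_kb} KB L2")
```

Note that 1 MB shared by two cores matches the earlier 512 KB per core in aggregate; what changes is that the capacity is pooled within the module rather than split into private halves.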

Modules communicate over a point-to-point in-die interface with independent read and write channels, replacing the front-side bus topology altogether. Incidentally, Intel identifies its IDI as one of the keys to the modularity of the Nehalem/Westmere generation, and it’d seem that a lot of work from the “big” core space is affecting Atom here today.

Intel took a look at its core architecture, optimized for single-threaded performance, along with its modular approach to scalability, and chose to drop Hyper-Threading. Including the technology would have increased power use in single-threaded workloads. So the company bypassed SMT altogether, favoring more cores to boost performance in parallelized tasks.


At the same time, Intel’s engineers advanced the instruction set architecture to the 2010 Westmere class, up four years from the original Atom design’s Merom-compatible ISA. SSE4.1, SSE4.2, and POPCNT (which operates on integer registers) are part of this ISA update, augmenting Atom’s performance picture. AES-NI acceleration and Secure Key (including the RDRAND instruction and Digital Random Number Generator) also make it in.
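Software typically discovers these extensions through the CPUID instruction, where leaf 1 reports them as bits in the ECX register. The sketch below decodes such a value using the bit positions documented in Intel's Software Developer's Manual. Python cannot execute CPUID directly, so the sample ECX value is constructed by hand and is hypothetical; `decode_features` and `popcount` are names invented for this example.

```python
# CPUID leaf 1, ECX feature bits (per Intel's Software Developer's Manual).
FEATURE_BITS = {
    "SSE4.1": 19,
    "SSE4.2": 20,
    "POPCNT": 23,
    "AES-NI": 25,
    "RDRAND": 30,
}


def decode_features(ecx):
    """Map each feature name to True/False based on its bit in an ECX value."""
    return {name: bool((ecx >> bit) & 1) for name, bit in FEATURE_BITS.items()}


def popcount(value):
    """Software equivalent of what POPCNT computes: the number of set bits."""
    return bin(value).count("1")


# Hypothetical ECX value with all five feature bits set (not read from hardware).
sample_ecx = sum(1 << bit for bit in FEATURE_BITS.values())

for name, present in decode_features(sample_ecx).items():
    print(f"{name}: {'yes' if present else 'no'}")

print("Set bits in 0b10110010:", popcount(0b10110010))
```

The `popcount` helper shows what the hardware instruction replaces: a bit count that would otherwise need a loop or lookup table is done in a single operation on an integer register.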

Virtualization acceleration evolves from VT-x support to the technology’s second generation, introduced with Nehalem, supporting Extended Page Tables. Virtual Processor IDs in the TLBs and Unrestricted Guest (allowing KVM guests to run real and unpaged mode code natively when EPT is turned on) are part of that same evolution.
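On a Linux system, one way to see whether a given processor exposes these virtualization features is to look at the flags the kernel reports in `/proc/cpuinfo`. The sketch below parses text in that format; the sample text is hand-written for illustration, and which line the `ept` and `vpid` flags appear on (`flags` or `vmx flags`) is assumed to vary with kernel version, so both are checked.

```python
def cpu_flags(cpuinfo_text):
    """Collect feature flags from /proc/cpuinfo-style text into a set."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # Assumption: flags may be listed under "flags" or "vmx flags".
        if sep and key.strip() in ("flags", "vmx flags"):
            flags.update(value.split())
    return flags


# Hand-written sample, not captured from a real Silvermont system.
SAMPLE_CPUINFO = (
    "processor\t: 0\n"
    "model name\t: Example CPU\n"
    "flags\t\t: fpu sse4_1 sse4_2 popcnt aes rdrand vmx ept vpid\n"
)

found = cpu_flags(SAMPLE_CPUINFO)
for flag in ("vmx", "ept", "vpid"):
    print(f"{flag}: {'present' if flag in found else 'absent'}")
```

To try it on a real machine, pass the contents of `/proc/cpuinfo` (for example, `open("/proc/cpuinfo").read()`) instead of the sample string.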


hero1: Nice article as always, C.A. I would really like to see this chip in a smartphone. If the performance and power utilisation are as good as they look, then Qualcomm will really feel the heat. Intel has the money and R&D to pull off a big move and compete. Time will tell.

SchizoFrog: I wonder if there are any plans to release Windows Phone 8 smartphones with these SoCs over the next 12-24 months? That would really solidify the ecosystem for both Intel and Microsoft in one fell swoop.

hannibal: Much-needed upgrades in here. Hopefully they also deliver what they promise in these slides. Will any devices be out this year, or do we have to wait until 2014 to see something based on these? But very promising indeed! A Windows Pro tablet based on these at a decent price would be the first candidate to start a good move to Windows-based tablets. Then there would be three good alternatives in tablets.

de5_Roy: bulldozer!

.. is the first thing that came to my mind when i started reading about the cores. but it's not exactly like bd, it's different. still.. it made me chuckle. amd deserves the credit.

i wonder if future intel cpus ($330+ core i7) will have the same core system instead of htt.... :whistle: :ange: :lol:

edit2: rodney dangerfield FTW! \o/

tipoo (replying to de5_Roy's "bulldozer!" comment): Well, it's just the cache that's shared in this one, no actual execution resources.

esrever: Finally Intel is getting serious. Ditching hyperthreading is the best thing they could have possibly done. Now with OoO and real cores these Atoms look pretty powerful. They will probably beat Kabini no problem with higher clocks with slightly less IPC. The 22nm trigate will drop power consumption, especially without the shitty hyperthreading in the way.

de5_Roy (replying to esrever's comment above): i noticed the lack of information on the integrated graphics part. having a powerful cpu isn't enough for atom. the gpu part has always been the weakest point for intel. kabini otoh, will have gcn-based, hsa enabled, low power igpu.

4745454b: So they still have an off-die memory controller. I would have thought they would have moved that on die by now.

Any more info on this "system agent" and IDI? I'm also surprised the cores can't talk directly to each other. If you want to use many small cores to tackle a problem together that's fine. But give them the ability to do it quickly.

It seems Intel is getting the ball rolling on their smaller chips. I just hope that when they finally do, they ditch the Atom name. Bad chips; get a new name for those that aren't.

esrever (replying to de5_Roy's comment on integrated graphics): Too true. Not a single mention of it probably means it won't be anything to brag about. Intel isn't really the type of company that likes to hide breakthroughs anywhere. I'm expecting them to finally be able to do 1080p tablets and that's about it.

jerryblack: No, it won't, regardless of what Intel's press release says. If I've learned anything in the past few years, it's to never take what Intel says in its PR at face value, because it never turns out to be true.

Silvermont may arrive a few months before the 20nm process for ARM chips is ready, but will that be enough, considering Intel's chips cost 2-3x more than the ARM equivalent? Probably not.