What Does DirectCompute Really Mean For Gamers?

We've been bugging AMD for years now, literally, to show us what GPU-accelerated software can do. Finally, the company is ready to put us in touch with ISVs in nine different segments to demonstrate how its hardware can benefit optimized applications.


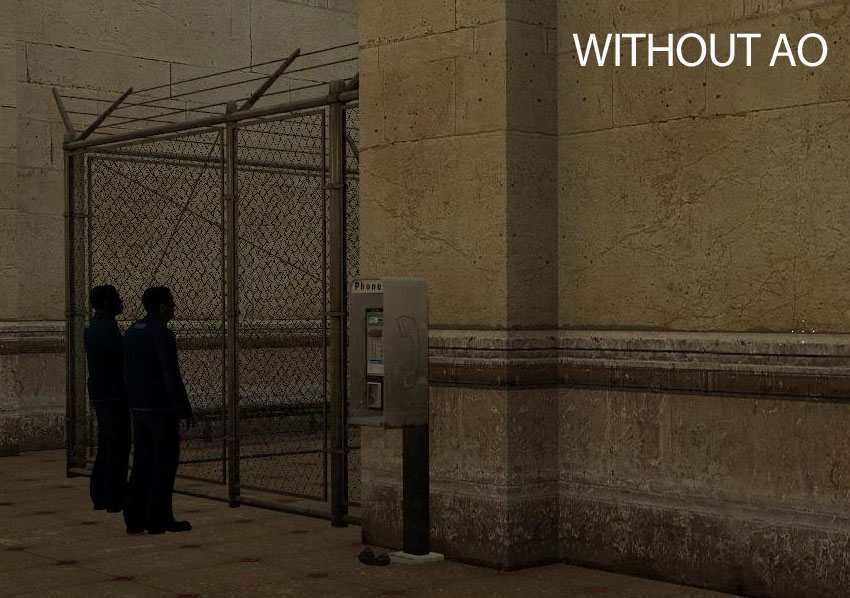

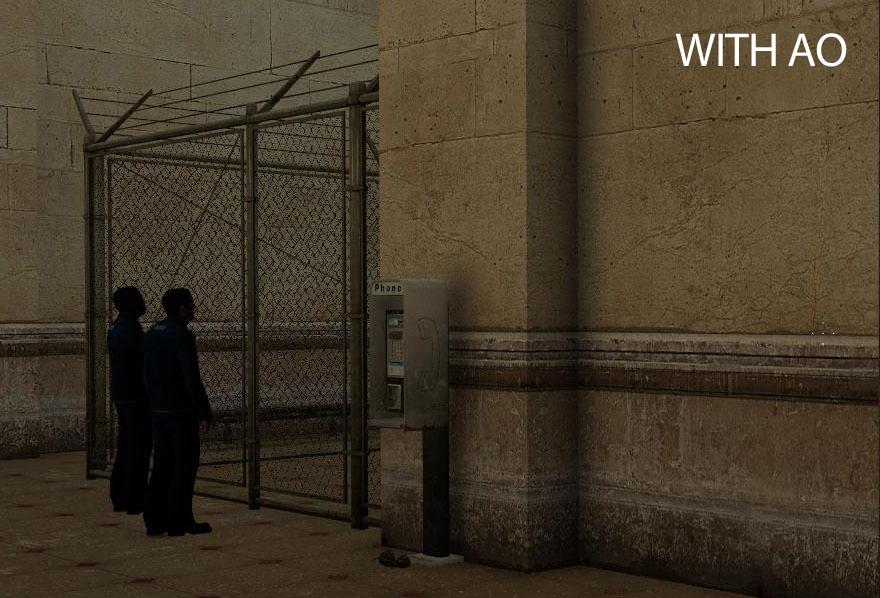

Ambient Occlusion, Continued

Ambient occlusion can also be performed via pixel shaders. Developers can choose either method and, going into this article, we were a bit in the dark about why DirectCompute might be preferable. After all, we’d seen enough early benchmarks showing that DC-enabled effects could significantly impact graphics performance (and not in a positive way). Using compute resources to achieve a feature that couldn’t be done otherwise was one thing, but why pick DirectCompute when shaders were already getting the job done? Well, for starters, DirectCompute has no more of an impact on performance than pixel shaders.

“For each pixel the occlusion term is calculated for, multiple reads of the depth texture are required,” says Codemasters’ Thomas. “In a pixel shader, each texture read costs cycles. In a compute shader, the LDS (local data share) is filled with the nearby depth information from the depth texture, and subsequent reads are significantly cheaper compared to a texture fetch.”
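To make Thomas' point concrete, here is a rough back-of-the-envelope sketch in Python that counts depth-texture fetches for one tile of pixels under each approach. The tile size, sampling radius, and samples-per-pixel figures are illustrative assumptions, not values taken from Codemasters' HDAO implementation, and the function names are hypothetical.

```python
def pixel_shader_fetches(tile: int, samples_per_pixel: int) -> int:
    """Texture fetches when every pixel reads its own depth samples."""
    return tile * tile * samples_per_pixel


def compute_shader_fetches(tile: int, radius: int) -> int:
    """Texture fetches when a thread group first copies its tile, plus a
    border of `radius` texels on each side, into the local data share.
    Later reads hit the LDS and are not counted as texture fetches."""
    side = tile + 2 * radius
    return side * side


# Assumed (hypothetical) parameters: a 16x16 thread group,
# an 8-texel sampling radius, and 32 depth samples per pixel.
TILE, RADIUS, SAMPLES = 16, 8, 32

ps = pixel_shader_fetches(TILE, SAMPLES)
cs = compute_shader_fetches(TILE, RADIUS)
print(f"pixel shader:   {ps} texture fetches per tile")
print(f"compute shader: {cs} texture fetches per tile")
print(f"ratio:          {ps / cs:.1f}x")
```

Under these assumed numbers, the compute path issues one-eighth as many texture fetches per tile. The real saving depends on the sampling pattern, the tile size, and how well the texture cache already serves the pixel shader path.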

In this series, we want to keep returning to the question of heterogeneous computing and how adept today's hardware is at handling the tasks discussed. How do APUs compare to discrete graphics and host processors operating over PCI Express? If texture fetches are coming from memory, and APUs are relying on a shared system RAM architecture, does this inherently handicap an APU's ability to execute this task efficiently, or is its proximity to the host processing resources a boon instead?

“HDAO only requires the depth of the scene as an input,” says Thomas. “This has to be rendered first, but in practice most games already have this information hanging around from either the g-buffer or a depth pre-pass. The depth buffer is a video memory resource and the implementation of HDAO would be no different on an APU compared to a GPU. The technique is very memory efficient since the only extra memory requirement is for the output texture. This is another reason why the technique is becoming increasingly popular.”

This is borne out in our test results, and it’s an important point to make up front. You're going to look at our upcoming Battlefield 3 results and see that the APU only manages an average of 14 FPS with horizon-based ambient occlusion (HBAO) enabled, a clearly unplayable rate. With the Radeon HD 7970 card pulling in results 8.5x greater, it'd be natural to assume that the APU simply can’t handle the DirectCompute load. But don’t let the article’s context mislead you. Even with ambient occlusion disabled, the APU system only averages 16.6 FPS.

Battlefield 3's load is such that the APU's graphics muscle is unable to keep up. It's not the chip's heterogeneous architecture killing performance. We simply need hardware with more horsepower.
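The arithmetic behind that conclusion can be sketched in a few lines of Python. The 14 FPS and 16.6 FPS figures come from the text above. Applying the 8.5x multiplier to the 14 FPS result is our reading of the comparison, so treat the Radeon number as an estimate, not a measured value.

```python
def frame_time_ms(fps: float) -> float:
    """Convert an average frame rate into milliseconds per frame."""
    return 1000.0 / fps


apu_hbao_on = 14.0    # APU average FPS with HBAO enabled (from the article)
apu_hbao_off = 16.6   # APU average FPS with ambient occlusion disabled
radeon_est = apu_hbao_on * 8.5  # assumption: 8.5x applies to the HBAO-on result

# Cost of HBAO on the APU, expressed two ways.
hbao_cost_ms = frame_time_ms(apu_hbao_on) - frame_time_ms(apu_hbao_off)
fps_drop_pct = (apu_hbao_off - apu_hbao_on) / apu_hbao_off * 100

print(f"Estimated Radeon HD 7970 average: {radeon_est:.0f} FPS")
print(f"HBAO cost on the APU: {hbao_cost_ms:.1f} ms per frame")
print(f"FPS lost to HBAO on the APU: {fps_drop_pct:.1f}%")
```

Enabling HBAO costs the APU roughly 16% of its frame rate, while the gap to the discrete card is 8.5x. The ambient occlusion pass accounts for a small share of the shortfall; the base rendering load accounts for the rest.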


de5_Roy would pcie 3.0 and 2x pcie 3.0 cards in cfx/sli improve direct compute performance for gaming? -

hunshiki, quoting hotsacoman: Ha. Are those HL2 screenshots on page 3 lol?

THAT. F.... FENCE. :D

Every, single, time. With every, single Source game. HL2, CSS, MODS, CSGO. It's everywhere. -

Quoting hunshiki: THAT. F.... FENCE. Every, single, time. With every, single Source game. HL2, CSS, MODS, CSGO. It's everywhere.

Ha. Seriously! The source engine is what I like to call a polished turd. Somehow even though its ugly as f%$#, they still make it look acceptable...except for the fence XD -

theuniquegamer Developers need to improve the compatibility of the API for the gpus. Because the consoles used very low power outdated gpus can play latest games at good fps . But our pcs have the top notch hardware but the games are playing as almost same quality as the consoles. The GPUs in our pc has a lot horse power but we can utilize even half of it(i don't what our pc gpus are capable of) -

marraco I hate depth of field. Really hate it. I hate Metro 2033 with its DirectCompute-based depth of field filter.

It’s unnecessary for games to emulate camera flaws, and depth of field is a limitation of cameras. The human eye is able to focus everywhere, and free to do that. Depth of field does not allow to focus where the user wants to focus, so is just an annoyance, and worse, it costs FPS.

This chart is great. Thanks for showing it.

It shows something out of many video cards reviews: the 7970 frequently falls under 50, 40, and even 20 FPS. That ruins the user experience. Meanwhile is hard to tell the difference between 70 and 80 FPS, is easy to spot those moments on which the card falls under 20 FPS. It’s a show stopper, and utter annoyance to spend a lot of money on the most expensive cards and then see those 20 FPS moments.

That’s why I prefer TechPowerup.com reviews. They show frame by frame benchmarks, and not just a meaningless FPS. TechPowerup.com is a floor over TomsHardware because of this.

Yet that way to show GPU performance is hard to understand for humans, so that data needs to be sorted, to make it easy understandable, like this figure shows:

Both charts show the same data, but the lower has the data sorted.

Here we see that card B has higher lags, and FPS, and Card A is more consistent even when it haves lower FPS.

It shows on how many frames Card B is worse that Card A, and is more intuitive and readable that the bar charts, who lose a lot of information.

Unfortunately, no web site offers this kind of analysis for GPUs, so there is a way to get an advantage over competition.

-

hunshiki I don't think you owned a modern console Theuniquegamer. Games that run fast there, would run fast on PCs (if not blazing fast), hence PCs are faster. Consoles are quite limited by hardware. Games that are demanding and slow... or they just got awesome graphics (BF3 for example), are slow on consoles too. They can rarely squeeze out 20-25 FPS usually. This happened with Crysis too. On PC? We benchmark FullHD graphics, and go for 91 fps. NINETY-ONE. Not 20. Not 25. Not even 30. And FullHD. Not 1280x720 like XBOX. (Also, on PC you have a tons of other visual improvements, that you can turn on/off. Unlike consoles.)

So .. in short: Consoles are cheap and easy to use. You pop in the CD, you play your game. You won't be a professional FPS gamer (hence the stick), or it won't amaze you, hence the graphics. But it's easy and simple. -

kettu, quoting marraco: I hate depth of field. Really hate it. I hate Metro 2033 with its DirectCompute-based depth of field filter. It’s unnecessary for games to emulate camera flaws, and depth of field is a limitation of cameras. The human eye is able to focus everywhere, and free to do that. Depth of field does not allow to focus where the user wants to focus, so is just an annoyance, and worse, it costs FPS.

'Hate' is a bit strong word but you do have a point there. It's much more natural to focus my eyes on a certain game objects rather than my hand (i.e. turn the camera with my mouse). And you're right that it's unnecessary because I get the depth of field effect for free with my eyes already when they're focused on a point on the screen. -

npyrhone Somehow I don't find it plausible that Tom's Hardware has *literally* been bugging AMD for years - to any end (no pun intended). Figuratively, perhaps?