Intel Plays Defense: Inside Its EPYC Slide Deck

Intel's Take On EPYC

Intel covered the broad Purley strokes, then dove deep into architecture, performance, high-performance computing (HPC) applications, communication service provider use-cases, artificial intelligence, and storage, among other topics. Of course, the company also outlined what it feels are its competitive advantages relative to AMD's processors. Intel worked with the data it had at the time, but AMD released far more information after the fact, so a few of Intel's projections are dated.

We'll present every slide in the presentation, so nothing is taken out of context. We also include slides from AMD's EPYC tech day to clarify the company's features. Intel's slides have a white background (except the first one), while AMD's have a blue background.

We've covered the Zen architecture, AMD's EPYC implementation, Intel's mesh topology, and the new Skylake architecture. Head to those pieces for more in-depth analysis.


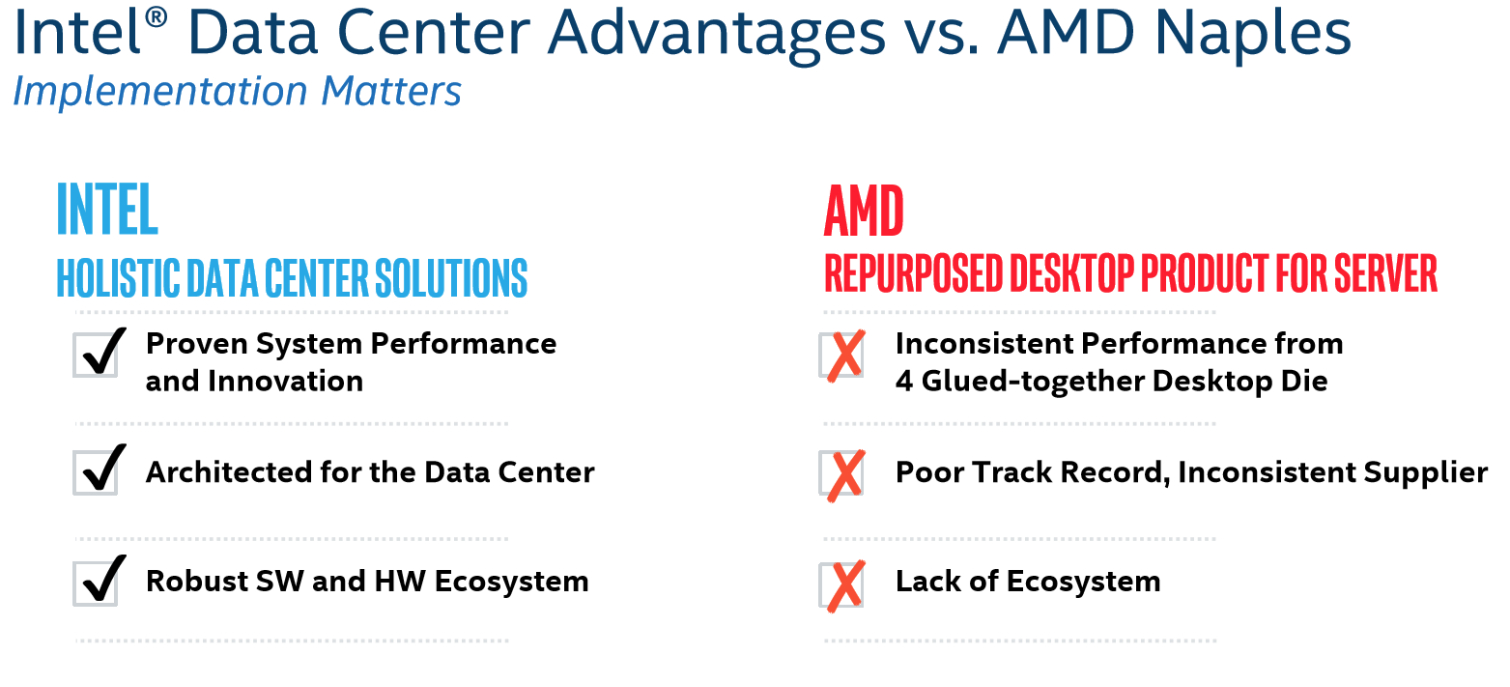

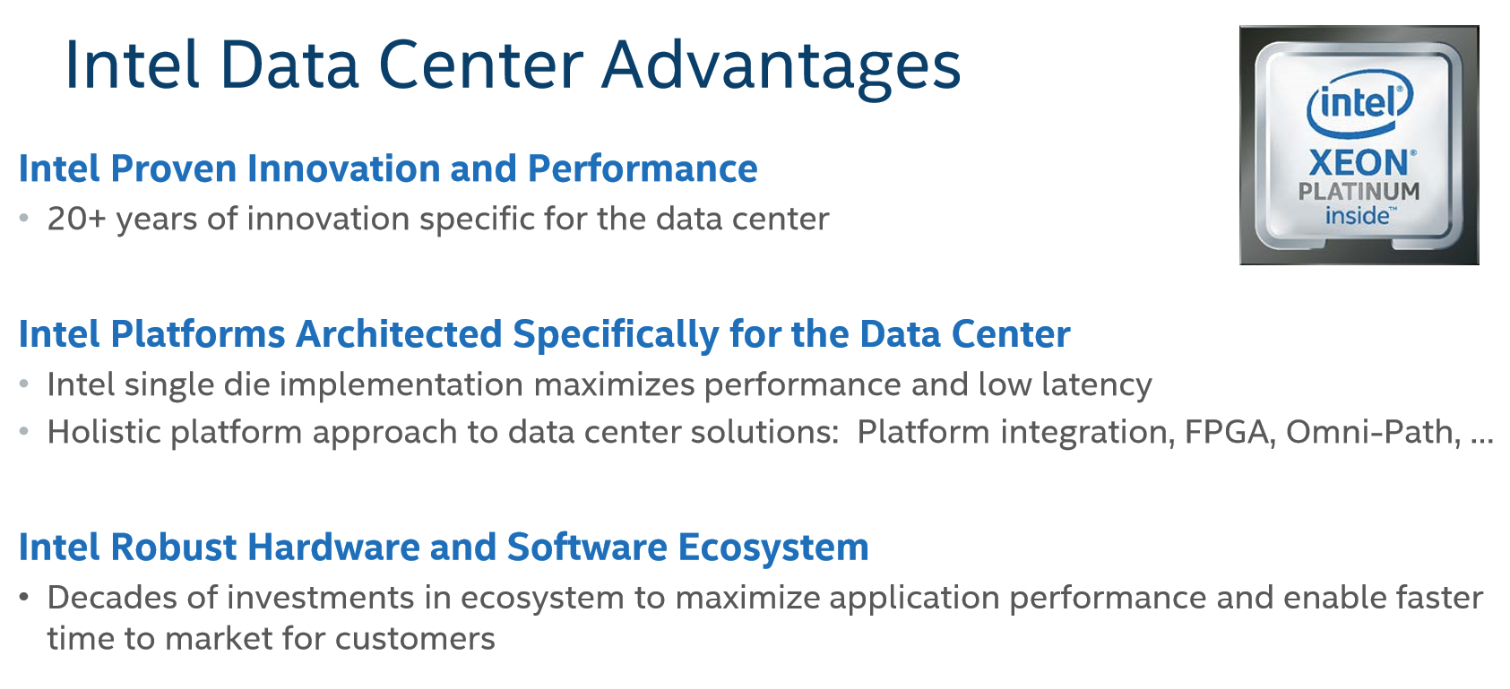

Intel's "advantages" slide outlines what the company feels gives Xeon an edge in the data center market. Representatives began the presentation by earnestly claiming they take all competition seriously, but pointed out that AMD has been notably absent from this space for several years. They also mentioned they're strictly working from projections.

The Intel crew touted its work over the last 20 years to help optimize operating systems and other applications for its hardware, including in the open source community. Big emphasis was placed on the fact that Purley "just works" in today's data center ecosystem.

While most of the points on the third slide above count as strengths, some are arguable. Intel lists current and future platform integration, such as FPGAs and networking, as an advantage. But built-in features can be viewed as either a holistic platform or vendor lock-in (a big fear in the data center), depending on your perspective. Intel does wall off certain capabilities behind proprietary interconnects, while AMD embraces open interfaces. A slew of new technologies, such as Gen-Z, OpenCAPI, and CCIX, have emerged over the last year. AMD is one of the many industry giants pushing these into the limelight.

Intel claimed that AMD's absence from the data center over the last several years eliminated its competitor's once-established ecosystem. It also contended that the EPYC architecture isn't as tightly integrated because it uses four "glued-together desktop die." That's bound to generate controversy. After all, Intel also uses the same basic die for Xeons and its HEDT models. However, Intel contends that the uncore and focus are entirely different on its mainstream desktop CPUs.


A mention that the Ryzen ecosystem hasn't been entirely "up to snuff" likely referred to the gaming performance issues AMD faced when Ryzen first launched. Given the chance, AMD would likely counter that collaboration with a number of developers has changed much of what we saw back then. Moreover, a steady stream of AGESA code updates and other optimizations has had a profound effect on performance.

We received no elaboration from Intel on the "Poor track record, inconsistent supplier" check-box in its slide.

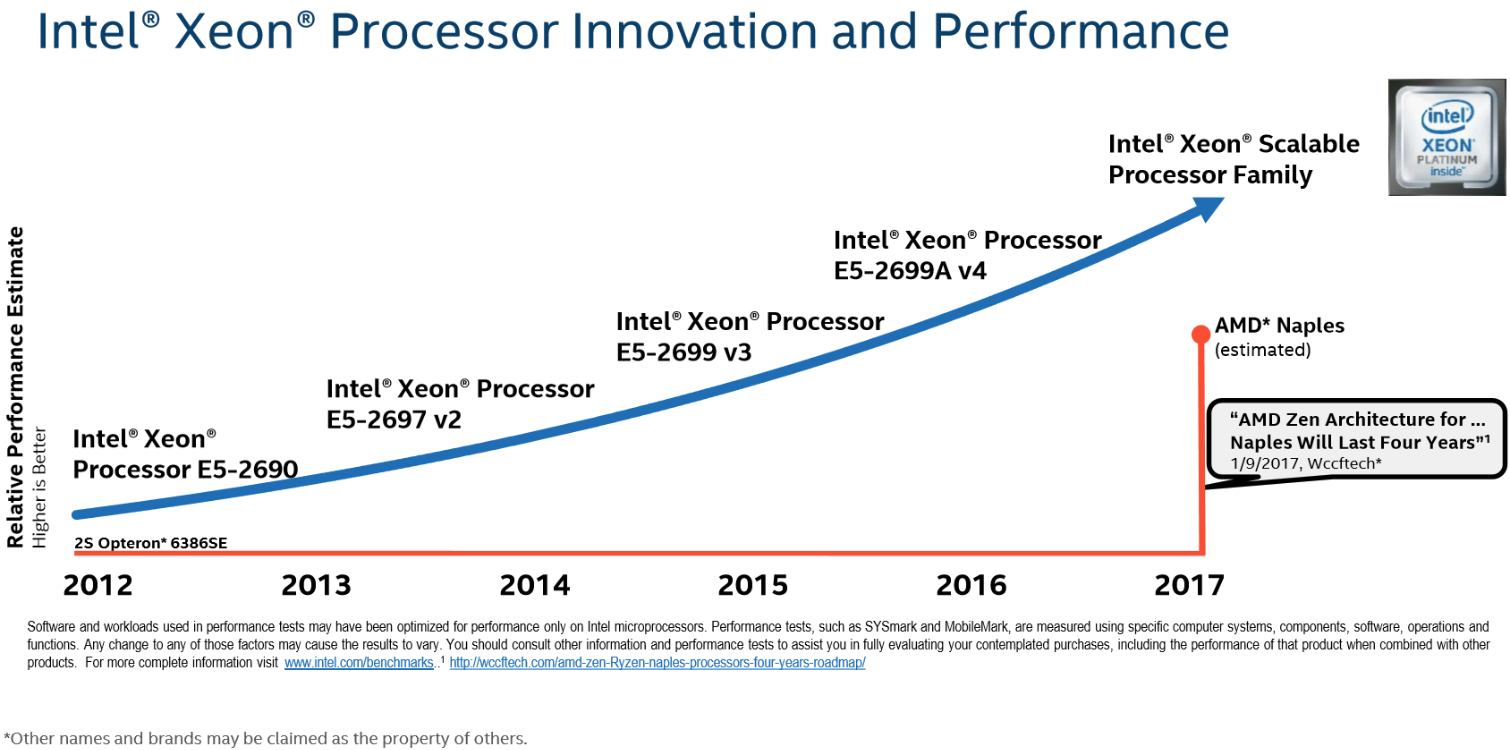

Intel quoted Wccftech as a source reporting that "AMD Zen Architecture for...Naples will last four years." Upon checking the link at the bottom, it appears Intel referenced the article's headline, which reads: "AMD Zen Architecture For Ryzen and Naples Processors Will Last Four Years on 14nm – Future Zen+ Revisions To Improve Architecture." Intel did not mention Zen+ during the presentation, but stated that it wouldn't wait four years before introducing a new architecture. Instead, the company will continue to update its line-up on a steady cadence. The vertical axis represents Intel's performance projections.

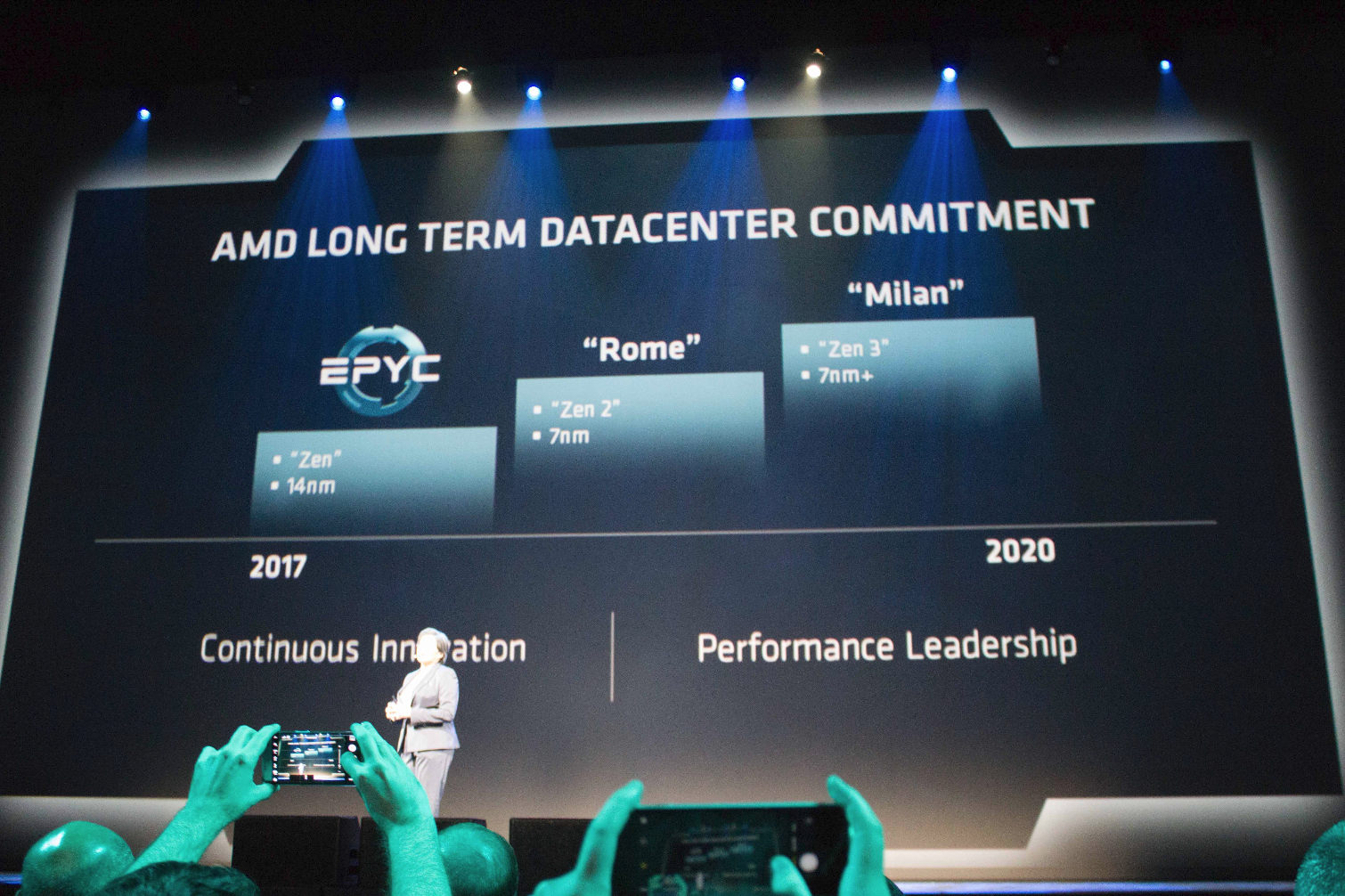

AMD later disclosed that it has the 7nm Zen 2 and Zen 3, code-named Rome and Milan, respectively, scheduled for release before 2020.

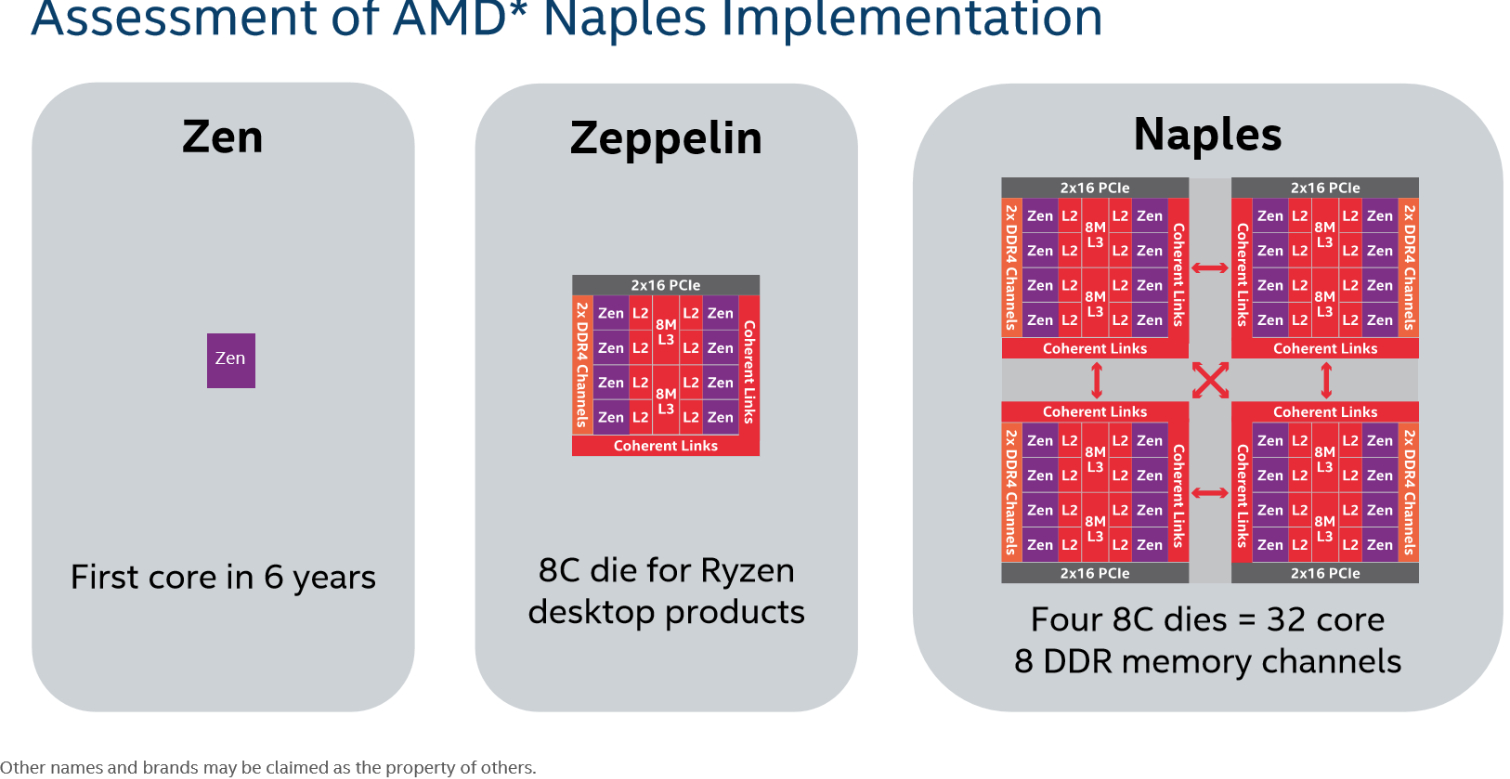

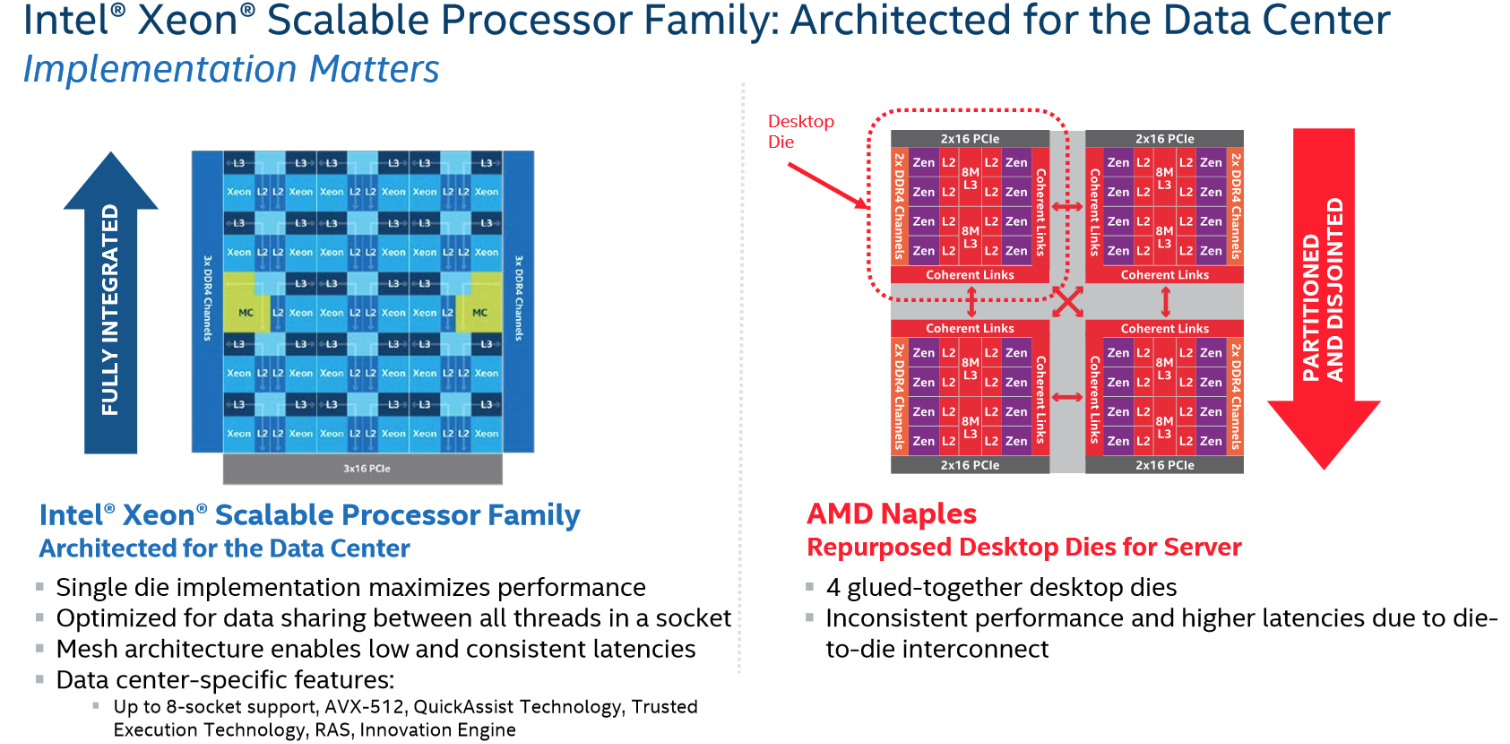

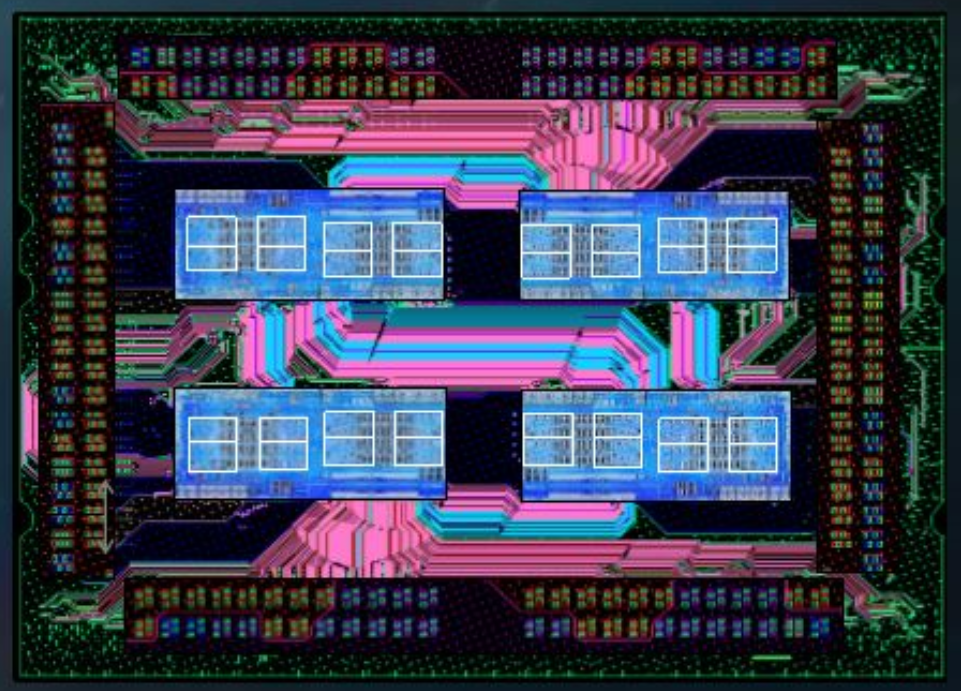

This slide outlines Intel's interpretation of the Zen architecture. It uses AMD's name for the server-oriented implementation, Naples, though we now refer to this as EPYC.

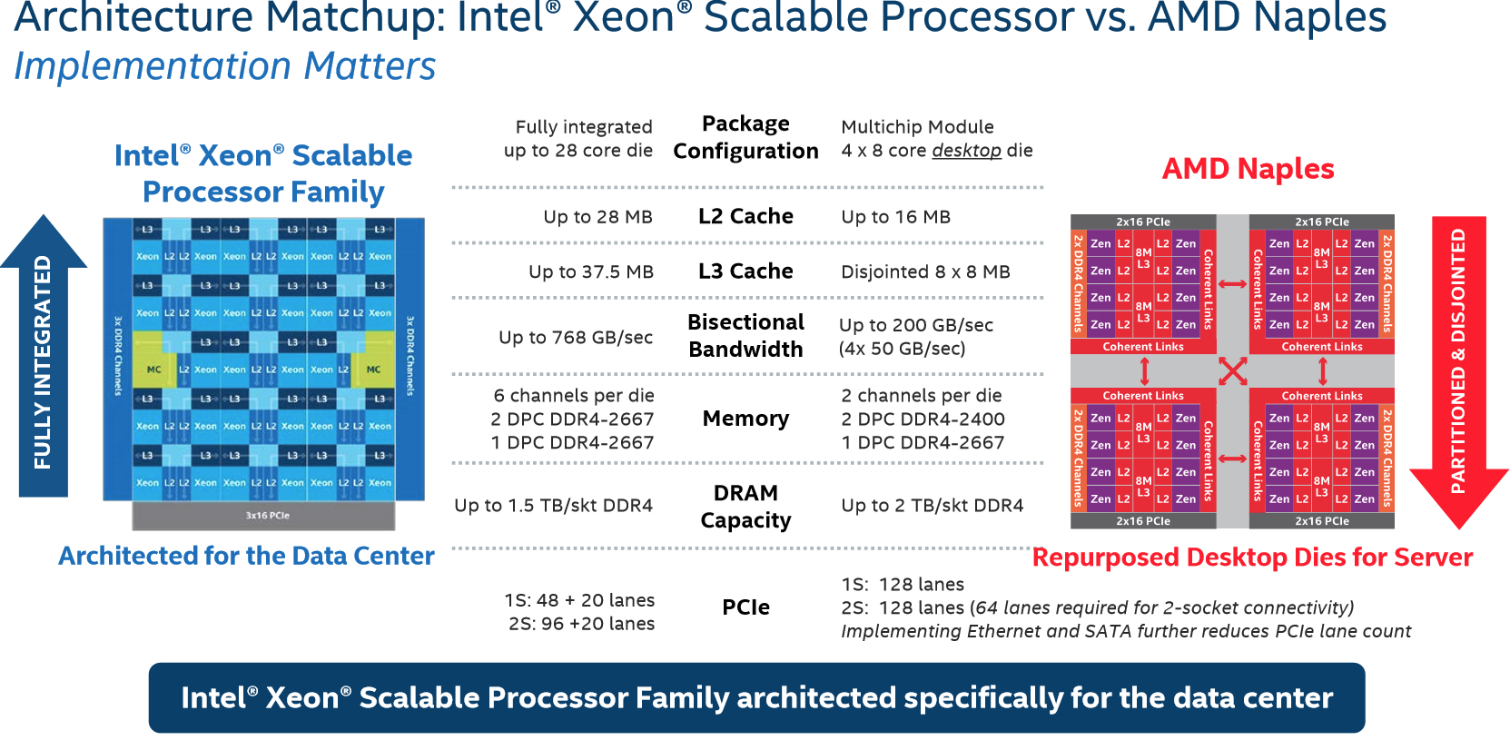

Intel noted that Purley employs a single-die design (covered in depth here) as opposed to AMD's quad-die approach. Consequently, it enjoys lower latency, along with consistent cache, memory, and PCIe accesses. The company believes that AMD's multi-die architecture will result in less predictable performance between those components due to the separate Zeppelin dies and Infinity Fabric interconnect. Intel acknowledged that slower access isn't important to all workloads, but it critically affects some.

We were reminded that Intel sells two-, four-, and eight-socket systems, whereas AMD's EPYC only scales to two sockets. AMD is currently focused on the one- and two-socket servers that address the largest segment of the market. The company has not announced any plans to support four- and eight-socket servers with EPYC.

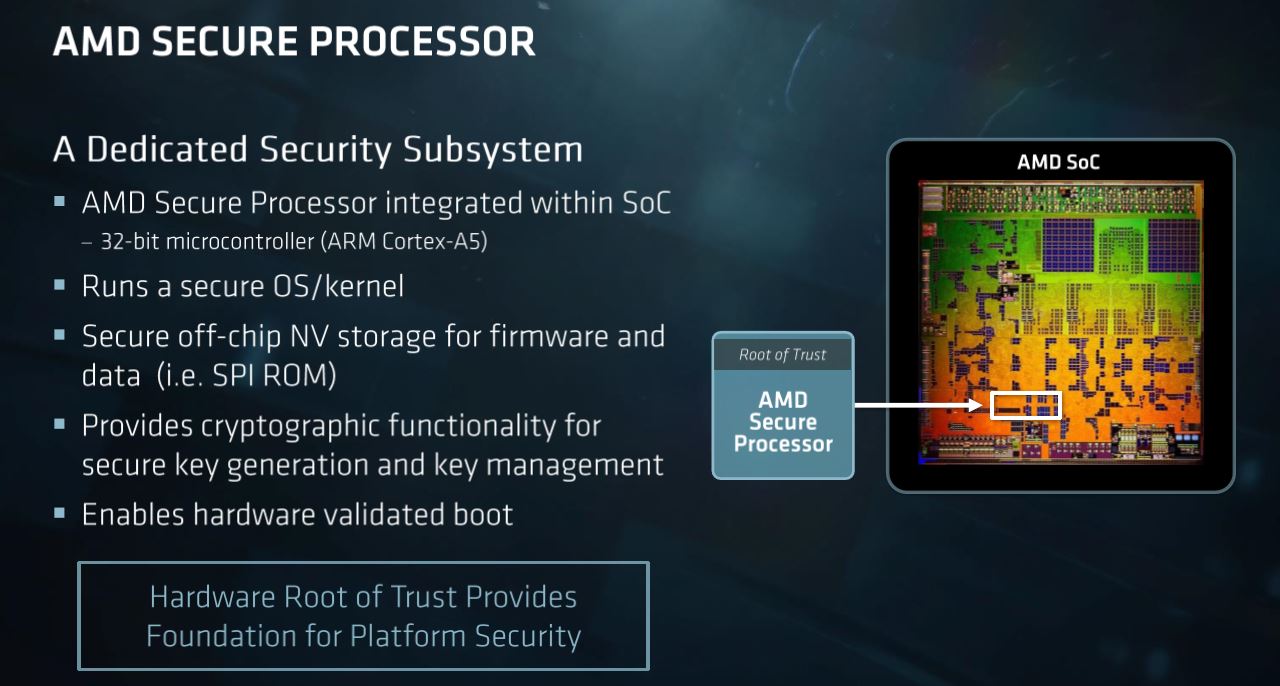

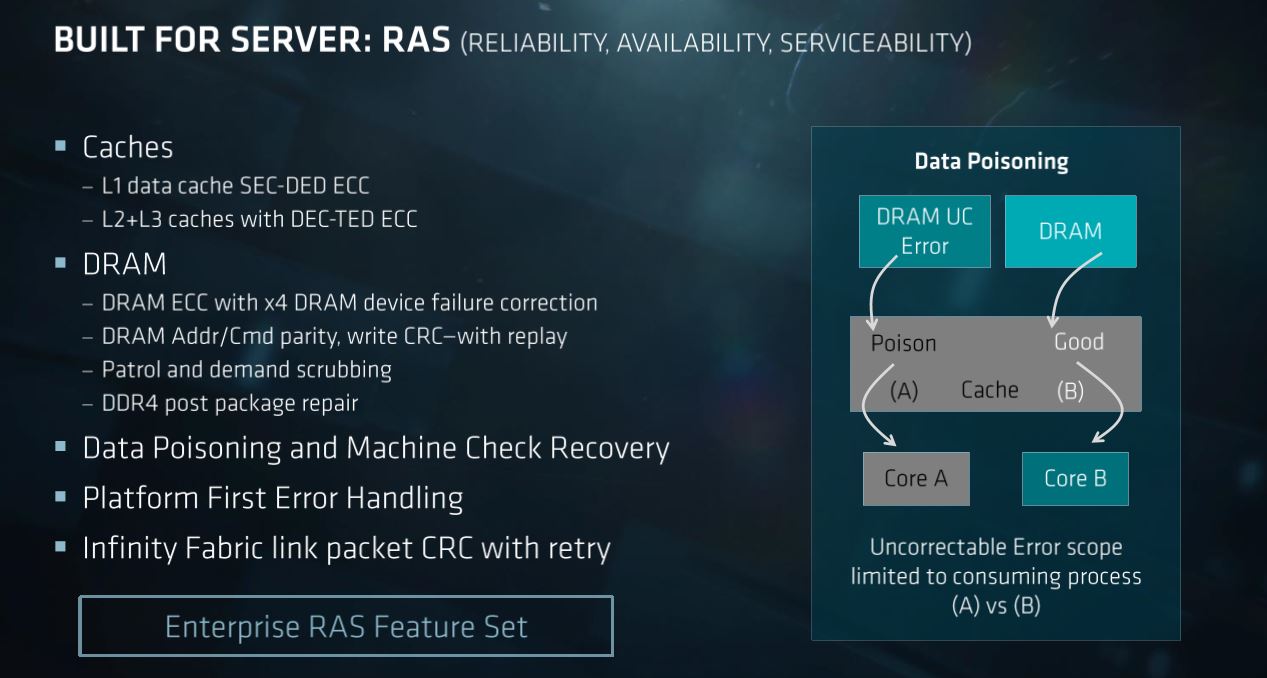

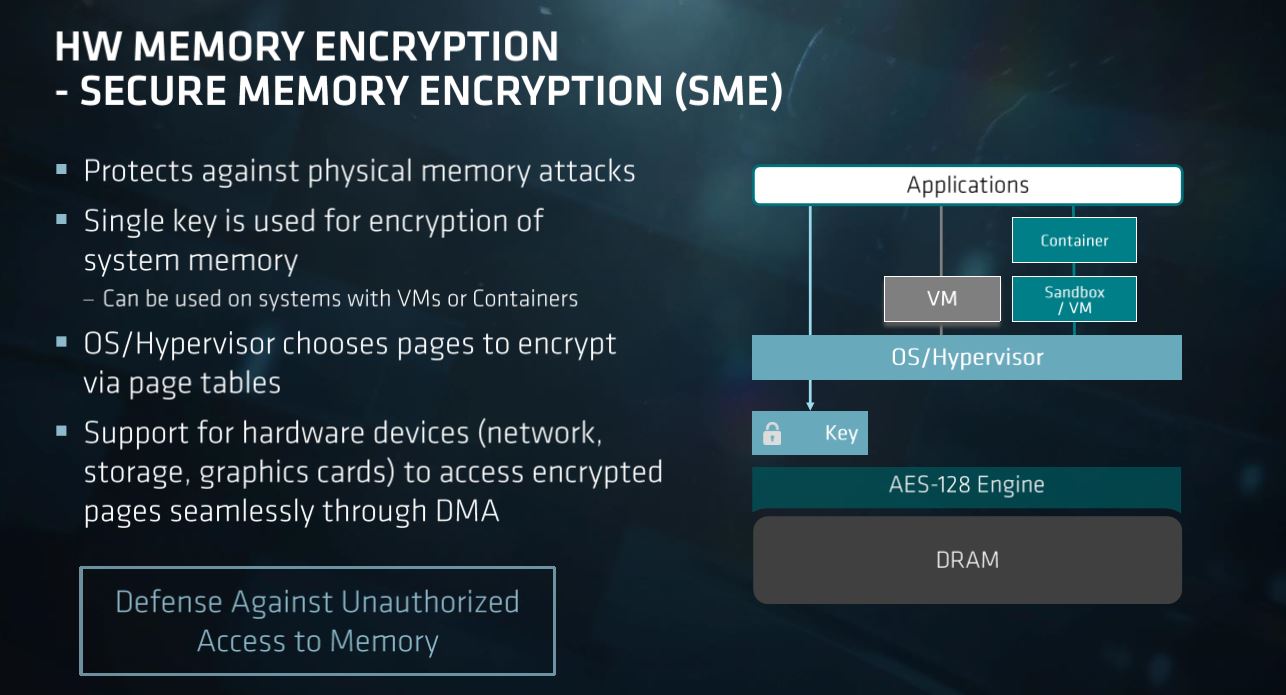

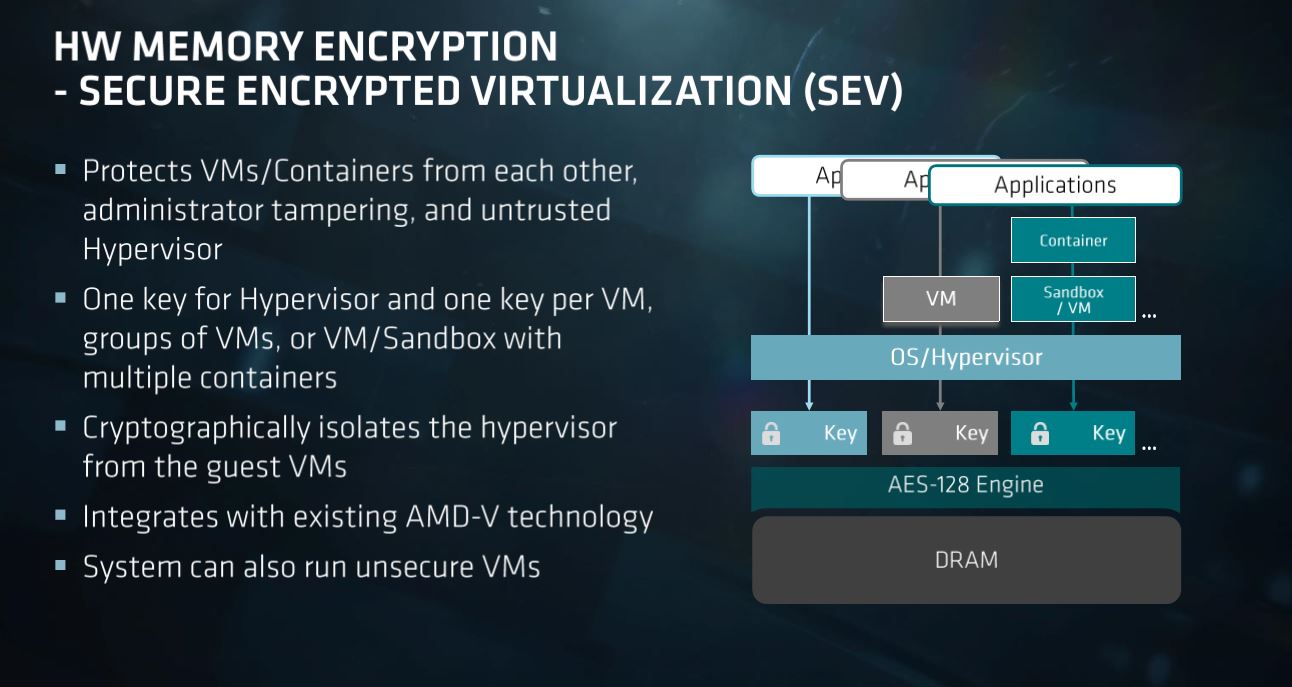

QuickAssist technology, RAS features, Trusted Execution, and the Innovation Engine are all listed by Intel as advantages. The company predicted that AMD wouldn't offer a sandboxed management solution. Later, AMD disclosed its secure processor, a sandboxed ARM processor on the SoC package. AMD also has its own suite of RAS features.

Intel underlines the word desktop on this slide, again pointing out that AMD uses the same Zeppelin die for EPYC as it uses on Ryzen. Whether EPYC's multi-chip module design leads to higher latency, hurting performance in sensitive applications, remains to be seen, though.

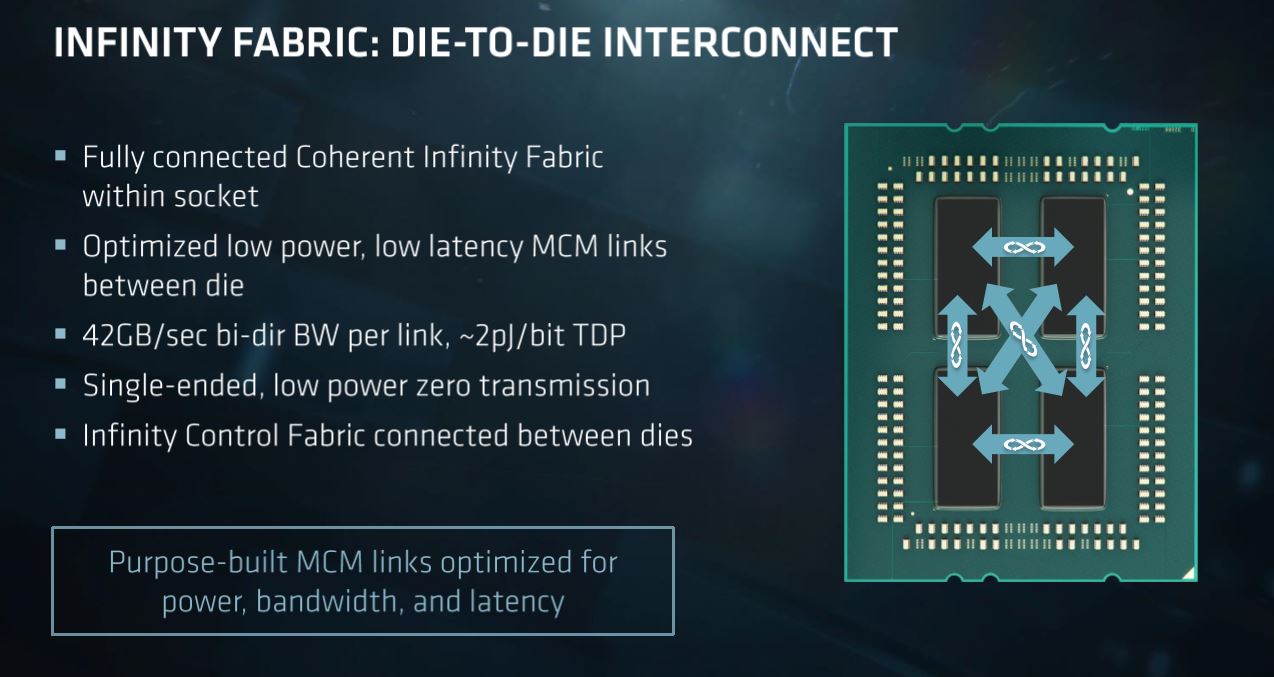

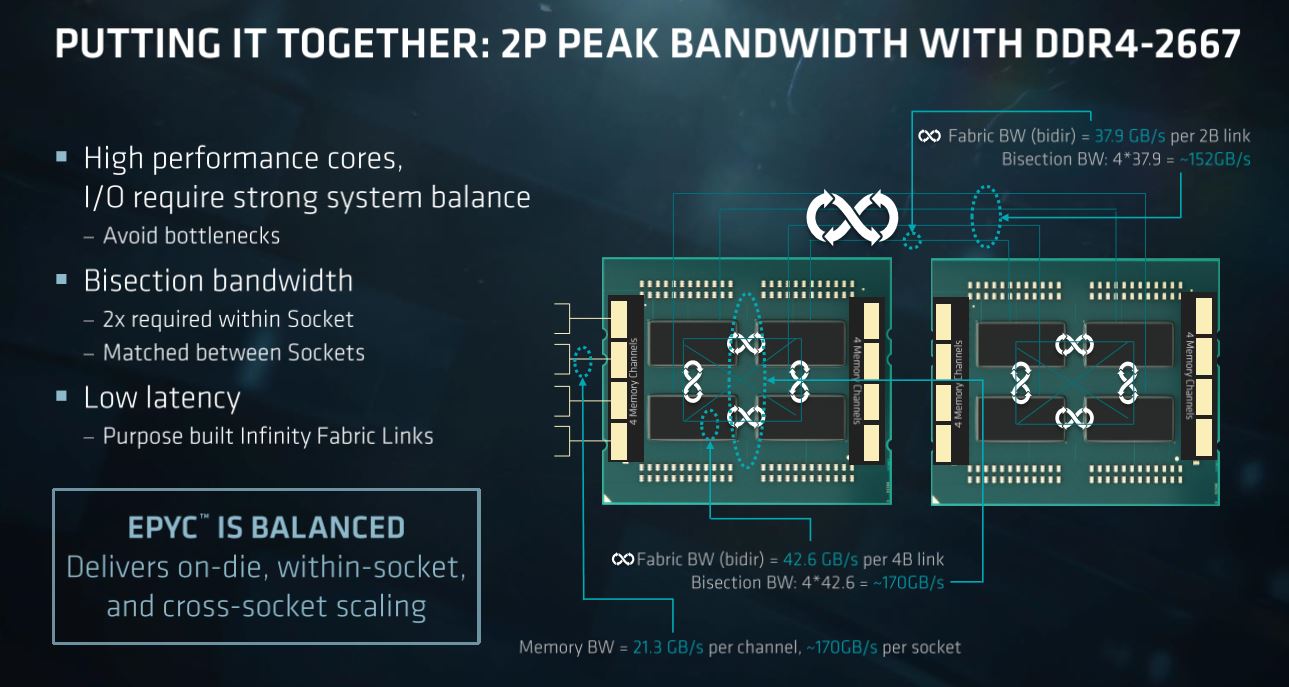

According to Intel's projections, AMD offers up to 200 GB/s of bisection bandwidth between its dies (four 50 GB/s links). However, AMD later disclosed that it provides four 42.6 GB/s links, for a total of roughly 170 GB/s. Intel believes this could create memory and I/O bottlenecks compared to Purley's 768 GB/s of bisection bandwidth.
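To put those figures side by side, here is a minimal Python sketch of the arithmetic. The helper name `aggregate_gbs` is hypothetical, and the inputs are simply the numbers quoted from Intel's slide and AMD's later disclosure; nothing here is measured.

```python
def aggregate_gbs(links: int, per_link_gbs: float) -> float:
    """Total bandwidth when `links` identical links each carry `per_link_gbs` GB/s."""
    return links * per_link_gbs


# Figures as quoted in the article (vendor claims, not measurements).
intel_projection = aggregate_gbs(4, 50.0)  # Intel's projection for EPYC
amd_disclosed = aggregate_gbs(4, 42.6)     # AMD's later disclosure
purley_claimed = 768.0                     # Intel's figure for Purley

# How far Purley's claimed figure exceeds AMD's disclosed total.
purley_to_epyc = purley_claimed / amd_disclosed

print(f"Intel's projection for EPYC: {intel_projection:.1f} GB/s")
print(f"AMD's disclosed total:       {amd_disclosed:.1f} GB/s")
print(f"Purley claim vs. EPYC:       {purley_to_epyc:.1f}x")
```

On these numbers, Intel's projection overstated AMD's disclosed total by about 30 GB/s, and Purley's claimed figure works out to roughly 4.5 times AMD's.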

The Purley platform supports DDR4-2666 with either one or two DIMMs per channel, whereas AMD drops to DDR4-2400 when you add a second DIMM per channel. That's similar to Intel's previous-generation Broadwell-EP.
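As a rough illustration of what that speed step means, the sketch below computes theoretical peak bandwidth for a single memory channel. It assumes the standard 64-bit (8-byte) DDR4 data path and the nominal transfer rates in the speed-grade names; the function name is hypothetical, and real-world throughput will be lower than these peaks.

```python
BYTES_PER_TRANSFER = 8  # assumption: standard 64-bit DDR4 channel


def peak_channel_gbs(mega_transfers_per_s: int) -> float:
    """Theoretical peak bandwidth of one memory channel in GB/s."""
    return mega_transfers_per_s * BYTES_PER_TRANSFER / 1000


ddr4_2666 = peak_channel_gbs(2666)  # one DIMM per channel on EPYC
ddr4_2400 = peak_channel_gbs(2400)  # two DIMMs per channel on EPYC

# Percentage of per-channel peak bandwidth given up at the lower speed.
loss_pct = (1 - ddr4_2400 / ddr4_2666) * 100

print(f"DDR4-2666: {ddr4_2666:.1f} GB/s per channel")
print(f"DDR4-2400: {ddr4_2400:.1f} GB/s per channel")
print(f"Peak bandwidth given up: {loss_pct:.1f}%")
```

Under those assumptions, stepping down to DDR4-2400 costs about a tenth of each channel's theoretical peak.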

AMD holds the unequivocal lead in PCIe connectivity for a single-socket server with 128 lanes. That far outweighs Purley's single-socket maximum of 48+20 (CPU+chipset). Intel admits it has a "lane deficiency," but claims this won't impact the average consumer. AMD naturally thinks differently, contending it has a big advantage for customers with big I/O needs.

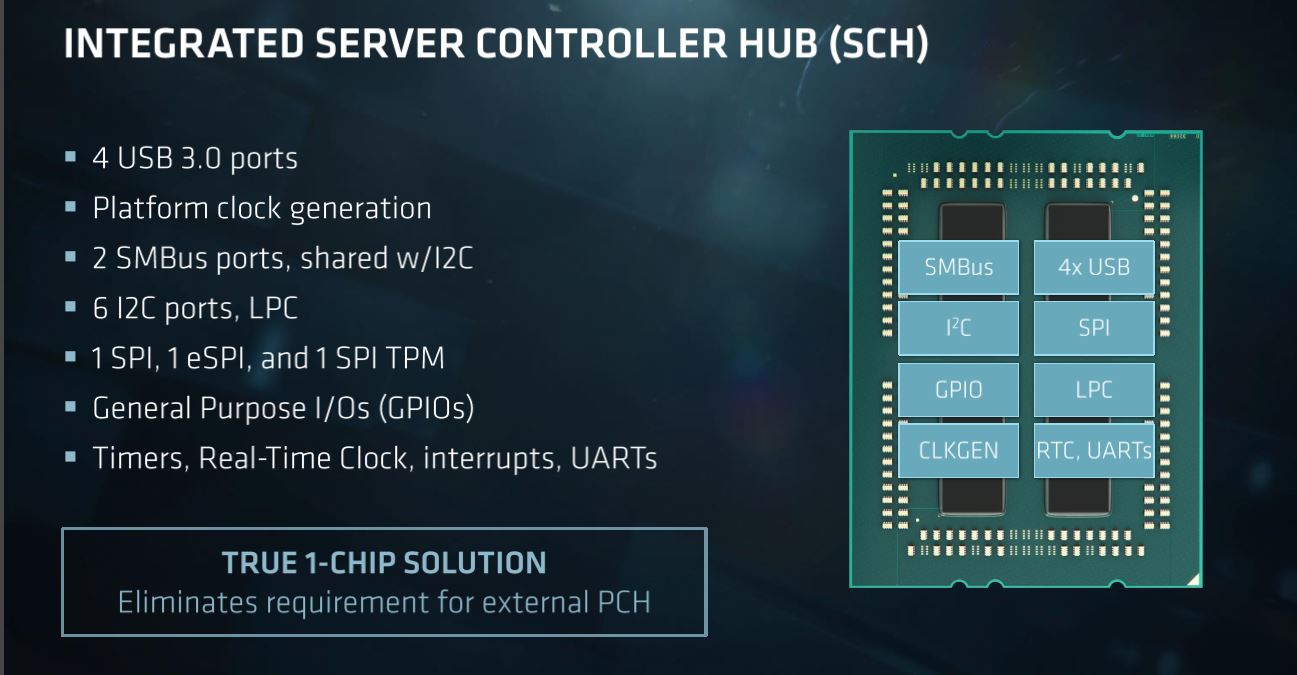

Zooming out to dual-socket systems erodes some of AMD's PCIe advantage, as EPYC loses 64 lanes to inter-socket communication. That allows Intel to almost catch up when you take chipset-based lanes into account. Just bear in mind that chipset lanes are designed with connectivity in mind, not throughput. Intel coalesces its 20 extra lanes behind a four-lane DMI link at PCIe 3.0 transfer rates. This severely restricts total bandwidth. It's also noteworthy that EPYC doesn't have a PCH on the motherboard. Instead, it uses an Integrated Server Controller Hub. That reduces motherboard cost and complexity, but means you subtract lanes for SATA and Ethernet from the 128-lane allotment.
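The lane math and the DMI bottleneck can be sketched in a few lines of Python. Two things here are assumptions rather than statements from the slides: the dual-socket Purley total assumes a single chipset shared by both sockets, and the EPYC total reads "loses 64 lanes" as 64 lanes per socket. The bandwidth helper uses PCIe 3.0's 8 GT/s per-lane rate and 128b/130b encoding; all names are hypothetical.

```python
PCIE3_GT_PER_S = 8.0        # PCIe 3.0 raw signaling rate per lane
PCIE3_ENCODING = 128 / 130  # 128b/130b line-encoding efficiency


def pcie3_gbs(lanes: int) -> float:
    """Approximate one-direction PCIe 3.0 bandwidth in GB/s."""
    return lanes * PCIE3_GT_PER_S * PCIE3_ENCODING / 8


# Lane counts quoted in the article.
epyc_1s = 128
purley_1s = 48 + 20  # CPU lanes + chipset lanes

# Dual socket. Assumptions: each EPYC socket gives up 64 lanes to the
# inter-socket link, and the Purley system has one chipset.
epyc_2s = 2 * (128 - 64)
purley_2s = 2 * 48 + 20

# The 20 chipset lanes all funnel through a four-lane DMI uplink.
dmi_ceiling = pcie3_gbs(4)
chipset_demand = pcie3_gbs(20)
oversubscription = chipset_demand / dmi_ceiling

print(f"Single socket: EPYC {epyc_1s} vs. Purley {purley_1s} lanes")
print(f"Dual socket:   EPYC {epyc_2s} vs. Purley {purley_2s} lanes")
print(f"DMI uplink ceiling: {dmi_ceiling:.2f} GB/s per direction")
print(f"Chipset oversubscription: {oversubscription:.0f}:1")
```

Under these assumptions, the chipset lanes close most of the dual-socket gap on paper, but they share an uplink worth only about four lanes of throughput.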

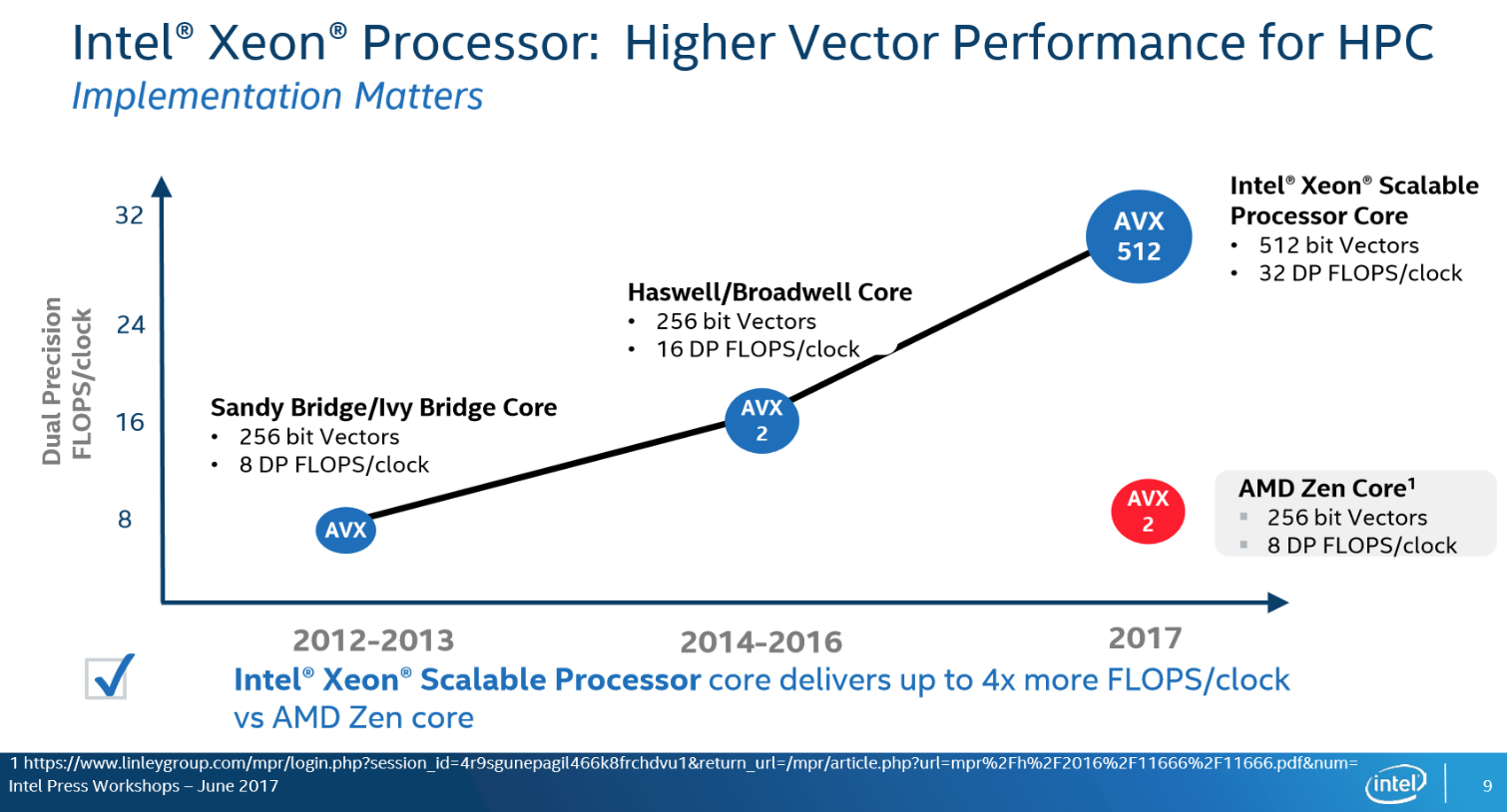

Intel touts its AVX-512 advantage, born of its most recent architectural improvements, while AMD acknowledges its deficiency in compute. But AMD also has a powerful line of GPUs to complement its server processors. That might also tie into broader Infinity Fabric ambitions, where the fabric could serve as an end-to-end interconnect tying GPUs together with the host processor.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


Aspiring techie This is something I'd expect from some run-of-the-mill company, not Chipzilla. Shame on you Intel.

bloodroses Just like every political race, here comes the mudslinging. It very well could be true that Intel's Data Center is better than AMD's Naples, but there's no fact from what this article shows. Instead of trying to use buzzwords only like shown in the image, back it up. Until then, it sounds like AMD actually is onto something and Intel actually is scared. If AMD is onto something, then try to innovate to compete instead of just slamming.

redgarl LOL... seriously... track record...? Track record of what? Track record of ripping off your customers Intel?

Phhh, your platform is getting trash in floating point calculation... 50%. And thanks for the thermal paste on your high end chips... no thermal problems involved.

InvalidError In reply to "All I see is cheap-shots, kind of low for Intel.":

To be fair, many of those "cheap shots" were fired before AMD announced or clarified the features Intel pointed fingers at.

That said, the number of features EPYC mysteriously gained over Ryzen and ThreadRipper show how much extra stuff got packed into the Zeppelin die. That explains why the CCXs only account for ~2/3 of the die size.

redgarl To Intel, PCIe Lanes are important in today technology push... why?... because of discrete GPUs... something you don't do. AMD knows it, they knows that multi-GPU is the goal for AI, crypto and Neural Network. This is what happening when you don't expend your horizon.

It's taking us back to the old A64.

-Fran- It's funny...

- They quote WTFBBQTech.

- Use the word "desktop die" all over the place without batting an eye on their own "extreme" platform being handicapped Xeons.

- No word on security features. I guess omission is also a "pass" in this case.

This reads more like a scare threat to all their customers out there instead of trying to sell a product. Miss Lisa Su is doing a good job it seems.

Cheers!

InvalidError In reply to -Fran-, who said: Use the word "desktop die" all over the place without batting an eye on their own "extreme" platform being handicapped Xeons.

The extra server-centric stuff (crypto superviser, the ability for PCIe lane to also handle SATA and die-to-die interconnect, the 16 extra PCIe lanes per die, etc.) in Zeppelin didn't magically appear when AMD put EPYC together... so technically, Ryzen chips are crippled EPYC/ThreadRipper dies.

-Fran- In reply to InvalidError's comment above:

I don't know if you're agreeing or not... LOL.

Cheers!