Intel Core i9-7900X Review: Meet Skylake-X

Weaving The Fabric

Again, as you'll see in our benchmarks, we ran into some strange performance trends that didn't add up. Given Skylake-X's frequency advantage, reworked cache, and 2D mesh topology, we didn't expect Broadwell-E to stand a chance. But in some cases, the previous-gen flagship outperformed Core i9-7900X. Asked about these anomalies, Intel responded:

...we have noticed that there are a handful of applications where the Broadwell-E part is comparable or faster than the Skylake-X part. These inversions are a result of the “mesh” architecture on Skylake-X vs. the “ring” architecture of Broadwell-E. Every new architecture implementation requires architects to make engineering tradeoffs with the goal of improving the overall performance of the platform. The “mesh” architecture on Skylake-X is no different. While these tradeoffs impact a handful of applications; overall, the new Skylake-X processors offer excellent IPC execution and significant performance gains across a variety of applications.

We covered Skylake-X's mesh architecture in Intel Introduces New Mesh Architecture For Xeon And Skylake-X Processors. Check that piece out for more detail. Of course, there's a lot more to this story, and much of it remains under embargo. But this is a huge change to an already effective design, so it comes as no surprise that the mesh topology doesn't yield extra performance in all of our metrics.

The Background

Interconnects are pathways for moving data between key components inside of a processor, including cores, caches, and PCIe and memory controllers. They affect latency and power consumption, which in turn affect performance and thermal design power.

Intel's ring bus debuted in 2007 with Nehalem, and AMD's HyperTransport was introduced in 2001. Both technologies evolved, but higher processor core counts, more cache, and greater I/O throughput have strained the interconnects. There are a number of ways to improve their performance, though this often requires bumping up data rates, and thus voltage, in order to realize large performance gains.

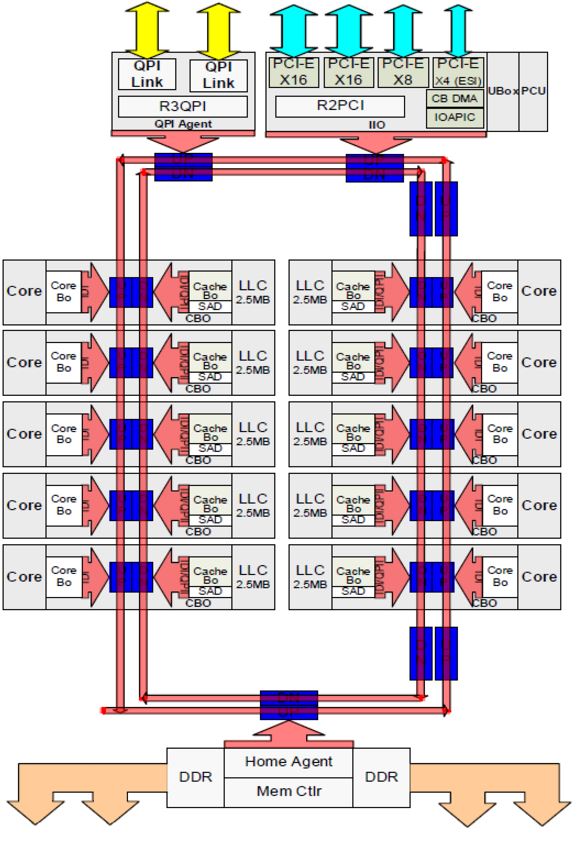

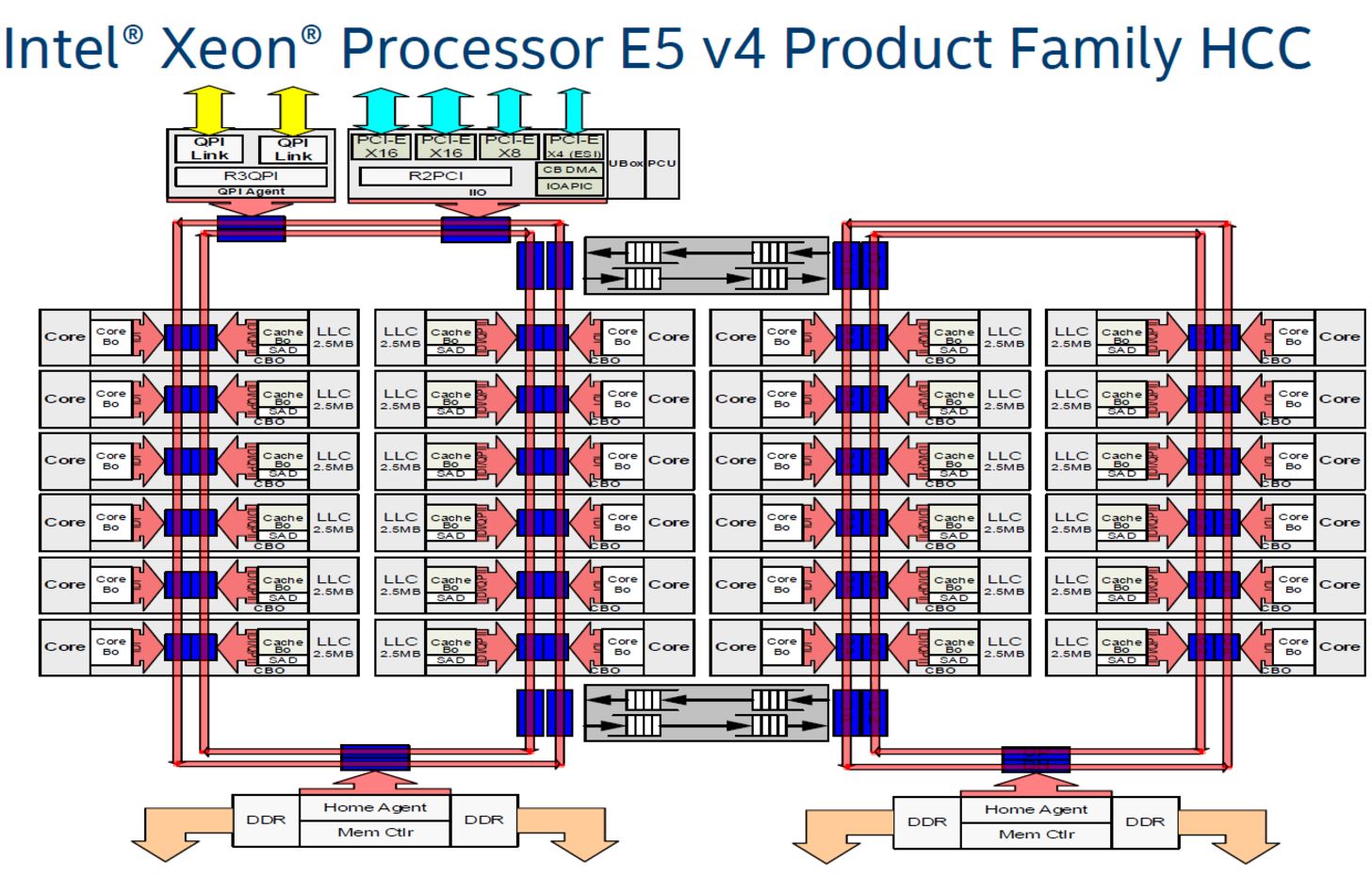

Intel's bi-directional ring bus, pictured above in red on a Broadwell low core-count die, serves as a good example of the challenge. Data travels a circuitous route to reach the components, and latency amplifies as core count increases. The second image shows the Broadwell high core-count die with 24 cores. Aligning the building blocks into a monolithic bus imposes penalties that make it impractical, so Intel divided the larger die into two separate ring buses. This increases scheduling complexity, and the buffered switches that facilitate communication between the rings add a five-cycle penalty, limiting scalability.
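To illustrate why latency grows with core count on a ring, here is a minimal sketch that counts hops on an idealized bi-directional ring. It assumes one stop per core and a uniform cost per hop, which ignores the cache, memory, and I/O stops on a real die as well as the buffered switches between dual rings. The function names are ours for illustration, not Intel's.

```python
def ring_hops(src: int, dst: int, stops: int) -> int:
    """Hops between two stops on a bi-directional ring, taking the shorter direction."""
    clockwise = (dst - src) % stops
    return min(clockwise, stops - clockwise)


def average_ring_hops(stops: int) -> float:
    """Mean hop count from one stop to every other stop.

    The ring is symmetric, so the average from stop 0 equals the
    average over all pairs of distinct stops.
    """
    total = sum(ring_hops(0, dst, stops) for dst in range(1, stops))
    return total / (stops - 1)


# Stop counts are illustrative only (assumption: one stop per core).
for stops in (4, 10, 24):
    print(f"{stops:>2} stops: {average_ring_hops(stops):.2f} average hops")
```

In this simplified model the average distance grows roughly linearly with the number of stops, which matches the scaling problem described above.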

In contrast, AMD introduced its Infinity Fabric with the Zen microarchitecture, currently implemented as two quad-core processor complexes (CCXes) communicating over a 256-bit bi-directional crossbar that also handles northbridge and PCIe traffic. The two CCXes also share a memory controller. The trip across the Infinity Fabric to the other quad-core CCX and its accompanying cache results in increased communication latency. We detailed the design and measured its latency in our AMD Ryzen 5 1600X Review. We also found that higher memory frequencies can improve the Infinity Fabric's latency characteristics, which is likely one of the key reasons that Ryzen's performance increases with faster memory data transfer rates.

AMD contends that software and platform optimizations can defray some of the performance oddities we've noticed in our testing, and from what we've seen, that is true. AMD's efforts, and an unrelenting string of BIOS, chipset, and software updates, have led to much better performance than we recorded in our inaugural Ryzen 7 review.

AMD's work continues. And now Intel faces the same challenge.

What A Mesh

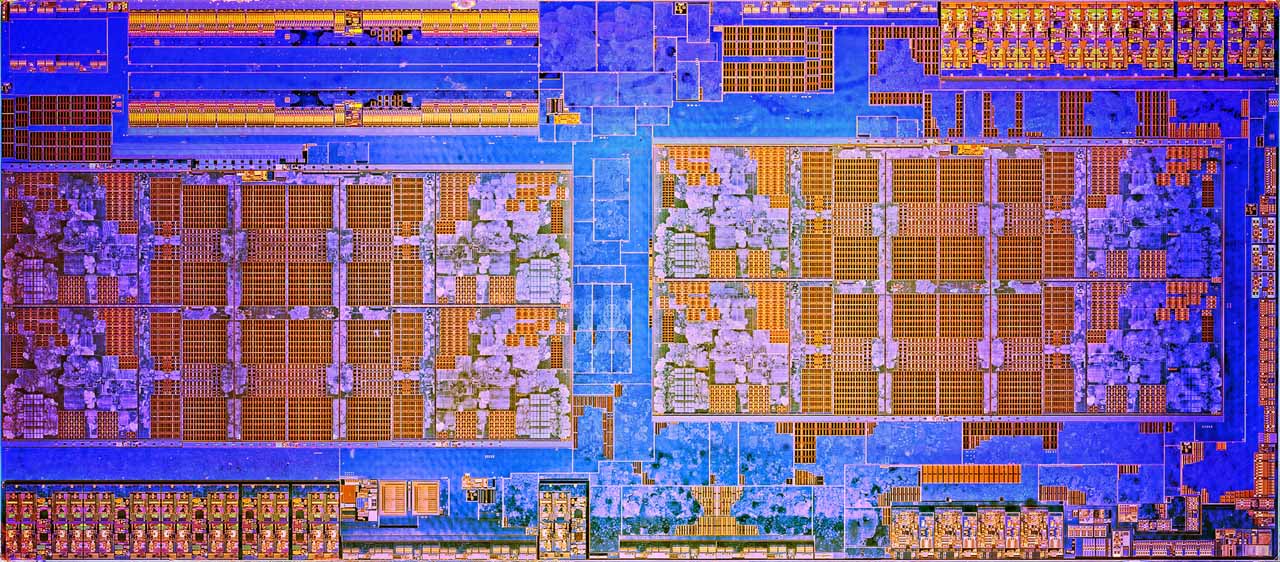

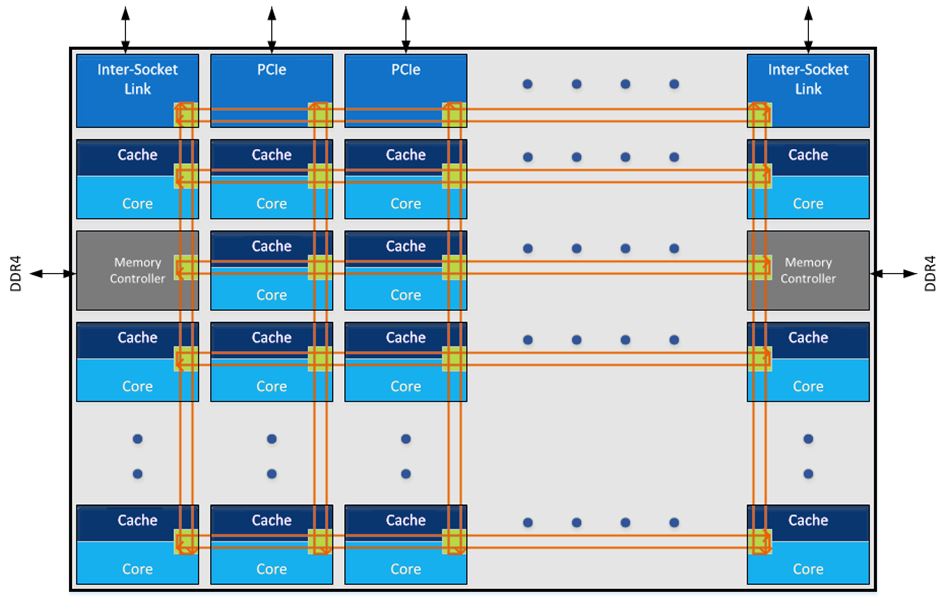

Intel's 2D mesh architecture made its debut on the company's Knights Landing products. The mesh consists of rows and columns of interconnects between the cores, caches, and I/O controllers. As you can see, the latency-killing buffered switches are absent. The ability to 'stair-step' data through the cores allows for much more complex, and purportedly efficient, routing. Intel claims its 2D mesh runs at a lower voltage and frequency than the ring bus, yet still provides higher bandwidth and lower latency.
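As a rough illustration of the routing advantage, the sketch below compares average hop counts on an idealized mesh against a single ring with the same number of stops. The 4x6 grid, the shortest-path (Manhattan) routing, and the function names are assumptions made for the example. They don't describe Intel's actual die layout or routing algorithm.

```python
from itertools import product


def mesh_hops(src, dst):
    """Hops between two (row, col) stops, moving only along rows and columns."""
    return abs(src[0] - dst[0]) + abs(src[1] - dst[1])


def average_mesh_hops(rows, cols):
    """Mean hop count over all ordered pairs of distinct stops in the grid."""
    stops = list(product(range(rows), range(cols)))
    hops = [mesh_hops(a, b) for a in stops for b in stops if a != b]
    return sum(hops) / len(hops)


def average_ring_hops(stops):
    """Mean shortest-direction hop count on a bi-directional ring."""
    hops = [min(d, stops - d) for d in range(1, stops)]
    return sum(hops) / len(hops)


rows, cols = 4, 6  # hypothetical 24-stop grid, not a real die layout
print(f"mesh {rows}x{cols}: {average_mesh_hops(rows, cols):.2f} average hops")
print(f"ring of {rows * cols}: {average_ring_hops(rows * cols):.2f} average hops")
```

Fewer hops in a model like this don't guarantee lower measured latency, since per-hop cost, clock rates, and contention all matter.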

Intel moved the DDR4 controllers to the left and the right sides of the 18-core high core-count die, similar to its Knights Landing design. Previously, they were at the bottom of the ring bus-based designs. The Skylake-X die shot suggests there are six memory controllers (second row down on the right and left columns), so it appears Intel disabled two controllers by default. The company likely uses its smaller LCC die for the Core i9-7900X, though representatives won't say for sure.

Things Get Meshy

Intel designed the mesh to increase scalability. There are trade-offs, however. We turned to SiSoftware Sandra's Processor Multi-Core Efficiency test, which measures inter-core, inter-module, and inter-package latency. The software offers Multi-Threaded, Multi-Core Only, and Single-Threaded metrics. We use the Multi-Threaded test with the "best pair match" setting (lowest latency).

The test measures performance between cores with all possible thread pairs, and for Intel's Core i9-7900X, that results in 189 separate results. We employ a data parser to boil the measurements down into average values.
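A parser of this kind can be quite small. The following is a minimal sketch rather than our actual tool: the sample values are made up, the tuple layout is hypothetical, and it assumes that logical threads 2k and 2k+1 share physical core k.

```python
from statistics import mean

# Hypothetical (thread_a, thread_b, latency_ns) samples; not measured data.
samples = [
    (0, 1, 15.0),
    (0, 2, 70.0),
    (0, 3, 80.0),
    (2, 3, 16.0),
]


def split_by_core(samples, threads_per_core=2):
    """Separate same-core pairs from pairs that span two physical cores.

    Assumes threads are numbered so that consecutive IDs share a core.
    """
    intra, inter = [], []
    for a, b, ns in samples:
        same_core = a // threads_per_core == b // threads_per_core
        (intra if same_core else inter).append(ns)
    return intra, inter


def summarize(latencies):
    """Reduce a list of latencies to the range and average reported in a table."""
    return {"min": min(latencies), "max": max(latencies), "avg": mean(latencies)}


intra, inter = split_by_core(samples)
print("intra-core:", summarize(intra))
print("core-to-core:", summarize(inter))
```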

| Processor | Intra-Core Latency | Core-To-Core Latency | Core-To-Core Average Latency | Average Transfer Bandwidth |
| --- | --- | --- | --- | --- |
| Core i9-7900X | 14.5 - 16ns | 69.3 - 82.3ns | 75.56ns | 83.21 GB/s |
| Core i9-7900X @ 3200 MT/s | 16 - 16.1ns | 76.8 - 91.3ns | 83.93ns | 87.31 GB/s |
| Core i7-6950X | 13.5 - 15.4ns | 54.5 - 70.3ns | 64.64ns | 65.67 GB/s |
| Core i7-7700K | 14.7 - 14.9ns | 36.8 - 45.1ns | 42.63ns | 35.84 GB/s |
| Core i7-6700K | 16 - 16.4ns | 41.7 - 51.4ns | 46.71ns | 32.38 GB/s |

The intra-core measurement quantifies latency between threads that are resident on the same physical core, while the core-to-core numbers reflect thread-to-thread latency between two physical cores. Core i9-7900X is most comparable to the 10-core Core i7-6950X, but we included the four-core models as a reference point.

We recorded slightly higher intra-core latency and a larger 10.92ns average latency delta between the Skylake-X and Broadwell-E models. Despite Core i9-7900X's increased latency, we recorded a 17.54 GB/s advantage in average transfer bandwidth. That's a solid 26.7% increase. After generating our first set of -7900X results with DDR4-2666, we followed up with several DDR4-3200 tests and noticed an increase in mesh latency. But we also recorded higher average transfer bandwidth. These results are preliminary, and we are conducting further latency and game testing with different memory transfer rates and timings to provide a more in-depth analysis.
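For readers who want to check the math, the deltas quoted above follow directly from the averages in the table. This short sketch reproduces them; the dictionary layout and variable names are ours, chosen only for the example.

```python
# Averages taken from the Core i9-7900X (DDR4-2666) and Core i7-6950X table rows.
skylake_x = {"latency_ns": 75.56, "bandwidth_gbs": 83.21}
broadwell_e = {"latency_ns": 64.64, "bandwidth_gbs": 65.67}

latency_delta = skylake_x["latency_ns"] - broadwell_e["latency_ns"]
bandwidth_delta = skylake_x["bandwidth_gbs"] - broadwell_e["bandwidth_gbs"]
bandwidth_gain_pct = bandwidth_delta / broadwell_e["bandwidth_gbs"] * 100

print(f"latency delta:   {latency_delta:.2f} ns")       # 10.92 ns
print(f"bandwidth delta: {bandwidth_delta:.2f} GB/s")   # 17.54 GB/s
print(f"bandwidth gain:  {bandwidth_gain_pct:.1f}%")    # 26.7%
```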

| Processor | Intra-Core Latency | Intra-CCX Core-to-Core Latency | Cross-CCX Core-to-Core Latency | Cross-CCX Average Latency | Average Transfer Bandwidth |
| --- | --- | --- | --- | --- | --- |
| Ryzen 7 1800X | 14.8ns | 40.5 - 82.8ns | 120.9 - 126.2ns | 122.96ns | 48.1 GB/s |
| Ryzen 5 1600X | 14.7 - 14.8ns | 40.6 - 82.8ns | 121.5 - 128.2ns | 123.48ns | 43.88 GB/s |

AMD's Ryzen processors employ a vastly different architecture that yields different measurements. The intra-core latency measurements represent communication between two logical threads resident on the same physical core, and they're unaffected by memory speed. Intra-CCX measurements quantify latency between threads on the same CCX that are not resident on the same core. In the past, we observed slight variances, but intra-CCX latency is also largely unaffected by memory speed. However, we've seen up to a 50% decrease in cross-CCX latency, which denotes latency between threads located on two separate CCXes, by increasing the memory data transfer rate from DDR4-1333 to DDR4-3200.
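The three categories can be expressed as a simple classification of thread pairs. The sketch below models an eight-core part such as Ryzen 7 1800X and rests on a hypothetical numbering scheme: logical threads 2k and 2k+1 share core k, and cores 0-3 sit on one CCX while cores 4-7 sit on the other. Real operating systems may enumerate threads differently.

```python
THREADS_PER_CORE = 2  # SMT: two logical threads per physical core
CORES_PER_CCX = 4     # quad-core CCX (assumed fully enabled)


def classify_pair(thread_a: int, thread_b: int) -> str:
    """Label a thread pair by where its two threads live on the die."""
    core_a = thread_a // THREADS_PER_CORE
    core_b = thread_b // THREADS_PER_CORE
    if core_a == core_b:
        return "intra-core"
    if core_a // CORES_PER_CCX == core_b // CORES_PER_CCX:
        return "intra-CCX"
    return "cross-CCX"


for pair in ((0, 1), (0, 2), (0, 8)):
    print(pair, classify_pair(*pair))
```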

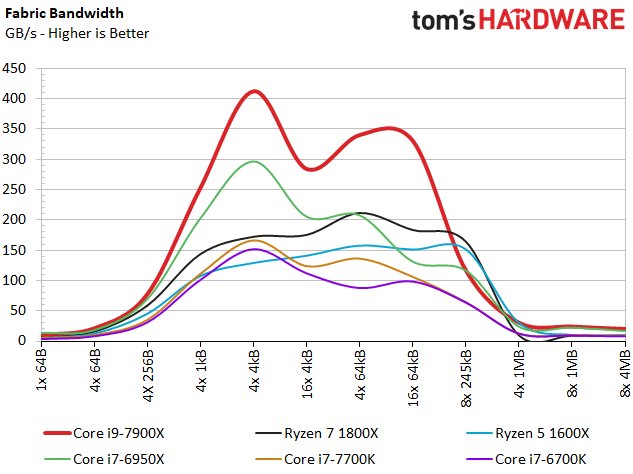

Fabric Bandwidth

We also plotted the fabric bandwidth results from our tests. Core i9-7900X establishes a large advantage over its Broadwell-E predecessor. The Ryzen processors dwarf Intel's quad-core models, but provide far less average bandwidth than the 10-core Intel CPUs.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

-

Pros: 10/20 cost now $999

Cons: Everything else

My biggest problem with this Intel lineup is that if you want 44 PCIe you have to pay $999. No, thanks. My money goes to AMD ThreadRipper.

Good review! -

rantoc Doubt many who purchase such high end cpu for gaming runs at a low full hd 1080p resolution, i know its more cpu taxing to run lower res at higher fps but that's for the sake of benchmarking the cpu itself.

I would like to see 1440p + 2160p resolutions on a suitable high end card (1080ti or equalent) benchmarked with the cpu as well as it would represent real scenarios for the peeps considering such cpu.

Thanks for a good review! -

James Mason So it seems like de-lidding the x299 processors is gonna be a standard thing now to replace the TIM? -

elbert Meet netburst 2.0 that not only can hit 100c at only (4.7Ghz)1.2v on good water cooler but only barely beats a 7700k not overclocked in games. All this is yours for the low low price of 3X. Its slower than the old 6950x in a few tests with was odd. -

James Mason The differences would be less noticeable at higher res than 1080p, so.... you'd just see less dissimilar numbers.

19835717 said: Doubt many who purchase such high end cpu for gaming runs at a low full hd 1080p resolution, i know its more cpu taxing to run lower res at higher fps but that's for the sake of benchmarking the cpu itself.

I would like to see 1440p + 2160p resolutions on a suitable high end card (1080ti or equalent) benchmarked with the cpu as well as it would represent real scenarios for the peeps considering such cpu.

Thanks for a good review! -

Dawg__Cester Hmmmmm. I bought a Ryzen 1700, a water cooler, Asrock B350 MB, 16gb ram 3200Mhz for $590 plus tax. I live in New Jersey. I was very nervous about making the purchase as I knew this was coming out this week but the sale prices got me. Unless you all think I got ripped off, (DON'T TELL ME). But in all honesty I have not regretted the purchase one bit!! I even managed to save enough to get a GTX 1080 FE GPU. I did have a few bumps in the road getting the system stable (about 3 hours configuring after assembly) but I am VERY happy. I used Intel primarily and never really considered AMD other than for Video adapters and SSDs.

After reading this along with other articles and YT videos, I have no regerts as I enjoy my Milky Way and play my games among other things.

Just my experience. I am not seeking positive reinforcement nor advice.

I just feel very satisfied that I did not wait and cough up 3oo more fore something I could have for less. I know, I know it makes no sense.

But come on fellas, its the computer game!! -

James Mason 19835862 said: Hmmmmm. I bought a Ryzen 1700, a water cooler, Asrock B350 MB, 16gb ram 3200Mhz for $590 plus tax. I live in New Jersey. I was very nervous about making the purchase as I knew this was coming out this week but the sale prices got me. Unless you all think I got ripped off, (DON'T TELL ME). But in all honesty I have not regretted the purchase one bit!! I even managed to save enough to get a GTX 1080 FE GPU. I did have a few bumps in the road getting the system stable (about 3 hours configuring after assembly) but I am VERY happy. I used Intel primarily and never really considered AMD other than for Video adapters and SSDs.

After reading this along with other articles and YT videos, I have no regerts as I enjoy my Milky Way and play my games among other things.

Just my experience. I am not seeking positive reinforcement nor advice.

I just feel very satisfied that I did not wait and cough up 3oo more fore something I could have for less. I know, I know it makes no sense.

But come on fellas, its the computer game!!

PCPartPicker part list / Price breakdown by merchant

CPU: AMD - Ryzen 7 1700 3.0GHz 8-Core Processor ($299.39 @ SuperBiiz)

Motherboard: ASRock - AB350M Micro ATX AM4 Motherboard ($65.98 @ Newegg)

Memory: G.Skill - Ripjaws V Series 16GB (2 x 8GB) DDR4-3200 Memory ($124.99 @ Newegg)

Total: $490.36

Prices include shipping, taxes, and discounts when available

Generated by PCPartPicker 2017-06-19 10:47 EDT-0400

Depends on which watercooler and which ram, but not really. -

Jakko_ Wow, compared to the Ryzen 1800X, the Intel Core i9-7900X:

is about 25-30% faster

costs 105% more

uses 35-40% more power

Ryzen looks really good here, and together with the temperature problems, Intel seems to be in some deep shit. -

HardwareExtreme Too little, too late. Does Intel really think that just because it has "Intel" written on it that it must be worth $200-$300 than AMD?