Four High-End Quad-Channel DDR3 Memory Kits For X79, Reviewed

You just bought the fastest (and most expensive) desktop platform on the planet. Which company's memory will you use to populate Intel's quad-channel controller? We tested four purportedly high-end kits in order to find out which set is the best.

Test Setup And Benchmarks

| Test System Configuration | |

|---|---|

| CPU | Intel Core i7-3960X (Sandy Bridge-E), 32 nm, 3.3 GHz base clock, 3.9 GHz maximum Turbo Boost, 15 MB Cache, LGA 2011 |

| CPU Cooler | Swiftech Apogee GTX, MCP 655b, Triple-Fan Radiator Kit |

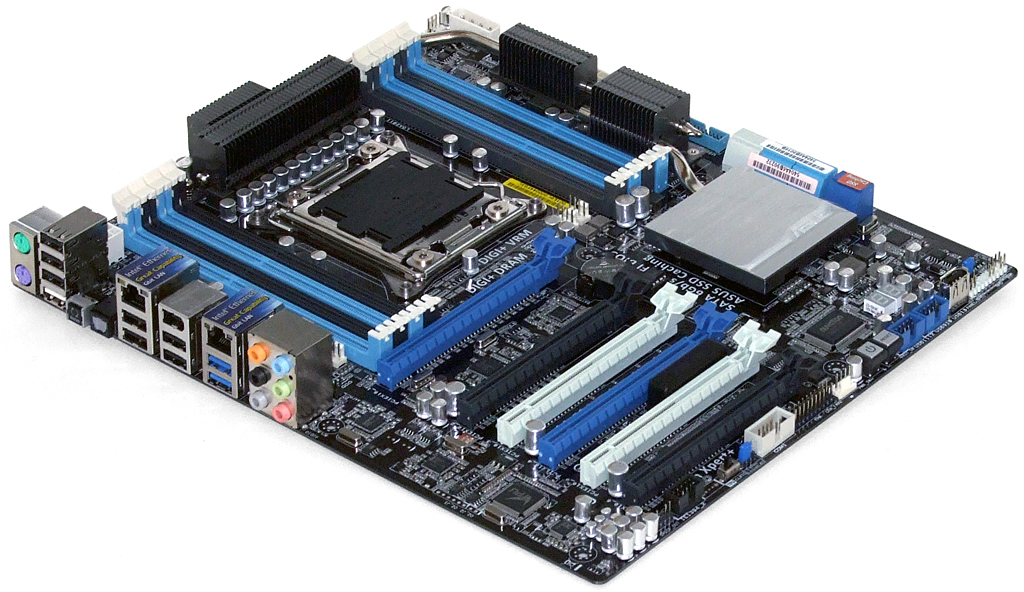

| Motherboard | Asus P9X79 WS, Firmware 0603 (11-11-2011) |

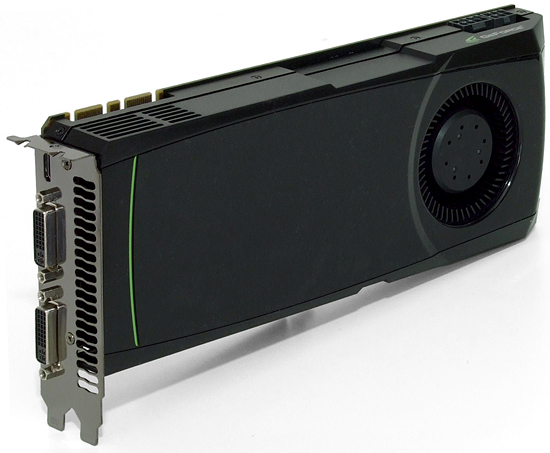

| Graphics | Nvidia GeForce GTX 580: 772 MHz GPU, GDDR5-4008 |

| Hard Drive | Samsung 470 Series MZ5PA256HMDR, 256 GB SSD |

| Sound | Integrated HD Audio |

| Network | Integrated Gigabit Networking |

| Power | Seasonic X760 SS-760KM: ATX12V v2.3, EPS12V, 80 PLUS Gold |

| Software | |

| OS | Microsoft Windows 7 Ultimate x64 |

| Graphics | Nvidia GeForce 285.62 |

| Chipset | Intel INF 9.2.3.1020 |

Asus' P9X79 WS won the memory overclocking portion of our recent high-end X79 motherboard comparison, and by doing so earned its place on this test bench.

We wanted to see what effect various memory speeds might have on application performance, and games are one type of program that occasionally shows this difference. Nvidia’s GeForce GTX 580 is fast enough to keep the pressure on our CPU and memory.

Intel’s Core i7-3960X was locked at 34x throughout testing to keep its clock frequency stable at non-reference base clocks.
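With the multiplier fixed, both the core clock and the memory data rate scale with the base clock (BCLK). The sketch below walks through that arithmetic. The memory-ratio model (data rate = BCLK × 4/3 × n, with n = 6, 8, ..., 18) is our assumption, picked because it reproduces the DDR3-800 through DDR3-2400 settings at a 100 MHz base clock; it does not model the CPU straps, and the function names are hypothetical.

```python
# Sketch of base-clock arithmetic for a Core i7-3960X held at a fixed 34x multiplier.
# Assumption (not from the review): memory data rate = BCLK * 4/3 * n, n = 6, 8, ..., 18,
# which yields DDR3-800 through DDR3-2400 at a 100 MHz base clock. CPU straps are ignored.

CPU_MULTIPLIER = 34              # multiplier used throughout testing
MEMORY_STEPS = range(6, 20, 2)   # hypothetical ratio steps n = 6..18


def core_clock_mhz(bclk_mhz: float) -> float:
    """Core frequency is the fixed multiplier times the base clock."""
    return CPU_MULTIPLIER * bclk_mhz


def data_rates_mts(bclk_mhz: float) -> list[float]:
    """DDR3 data rates (MT/s) available at a given base clock under the assumed ratios."""
    return [bclk_mhz * n * 4 / 3 for n in MEMORY_STEPS]


def bclk_for_target(target_mts: float, n: int) -> float:
    """Base clock (MHz) needed to reach a target data rate on ratio step n."""
    return target_mts * 3 / (4 * n)


if __name__ == "__main__":
    for bclk in (100.0, 105.0):
        rates = ", ".join(f"{r:.0f}" for r in data_rates_mts(bclk))
        print(f"BCLK {bclk:.1f} MHz: core {core_clock_mhz(bclk):.0f} MHz, DDR3 {rates}")
    # Filling the gap above the DDR3-2133 ratio (n = 16) by raising BCLK:
    bclk = bclk_for_target(2400, 16)
    print(f"DDR3-2400 on the n=16 ratio needs BCLK {bclk:.1f} MHz "
          f"(core {core_clock_mhz(bclk):.0f} MHz)")
```

For example, reaching DDR3-2400 from the DDR3-2133 ratio would take a 112.5 MHz base clock under this model, which at 34x also raises the core to 3825 MHz.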

The lowest-possible game settings would show the biggest impact of memory performance on frames per second, but nobody actually games at those settings. Instead, we selected the lowest settings that high-end buyers would likely use (if forced to do so), along with a couple of other applications that have shown sensitivity to memory performance in the past.

| Benchmark Configuration | |
|---|---|
| Stability Test | Memtest86+ v1.70, single pass (~45 minutes); maximum speed at CAS 9; minimum latency at DDR3-1600, -1333, and -1066 |
| Bandwidth Test | SiSoftware Sandra 2011 SP4 Bandwidth Benchmark |
| DiRT 3 | 1680x1050, High Quality Preset, No AA |
| Metro 2033 | 1680x1050, DX11, High, AAA, 4xAF, no PhysX/DOF |
| 3ds Max 2012 | Version 14.0 x64: Space Flyby Mentalray, 248 Frames, 1440x1080 |
| WinRAR | Version 4.01: THG-Workload (464 MB) to RAR, command line switches "winrar a -r -m3" |
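The Sandra test reports measured throughput. For context, a theoretical ceiling follows from the data rate, the 64-bit (8-byte) width of each DDR3 channel, and the channel count. The minimal sketch below applies that formula; measured results should land below these peaks, and the decimal GB/s convention (1 GB/s = 1000 MB/s) is an assumption of this sketch.

```python
# Theoretical peak DRAM bandwidth: transfers per second x bytes per transfer x channels.
# Decimal units (1 GB/s = 1000 MB/s) are an assumption of this sketch.

BYTES_PER_TRANSFER = 8  # each DDR3 channel is 64 bits wide


def peak_bandwidth_gbs(data_rate_mts: float, channels: int = 4) -> float:
    """Peak bandwidth in GB/s for a DDR3 data rate (MT/s) and channel count."""
    megabytes_per_s = data_rate_mts * BYTES_PER_TRANSFER * channels
    return megabytes_per_s / 1000


if __name__ == "__main__":
    for rate in (1333, 1600, 2133, 2400):
        quad = peak_bandwidth_gbs(rate)
        dual = peak_bandwidth_gbs(rate, channels=2)
        print(f"DDR3-{rate}: quad-channel {quad:.1f} GB/s, dual-channel {dual:.1f} GB/s")
```

At DDR3-1600, for instance, four channels give a 51.2 GB/s ceiling versus 25.6 GB/s for two, which is the on-paper headroom a quad-channel controller adds.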


a4mula: The article seems to support the same basic premise we've seen since at least the BCLK-limited LGA 1155. Even with the 125 and 166 straps added to LGA 2011, there is still too little base clock manipulation available to push memory very far.

Performance gains via memory, even when given a favorable playing field (reduced graphics), are pretty small. The reference CAS 9 DDR3-1600 appeared to hold its own at a fraction of the cost. As was alluded to, I think kits like this are really only aimed at the small crowd of super-enthusiasts who want to squeeze every last drop out of a system regardless of price.

Nice article, and one that I think illustrates both the benefits (ease of overclocking) and disadvantages (less fine-tuning) of the multiplier-friendly yet BCLK-limited LGA 1155 and LGA 2011 platforms.

panderaamon: I'll take 8 GB-per-slot RAM for my X79, thank you very much.

Also, it would have been nice to add some RAM disk benchmarks to the review as well.

bauboni: It would be nice to compare these 2.4 GHz quad-channel kits with the usual 1.6 GHz dual-channel kits, especially in gaming scenarios.

Crashman:
> a4mula: The article seems to support the same basic premise we've seen since at least the BCLK-limited LGA 1155. Even with the 125 and 166 straps added to LGA 2011, there is still too little base clock manipulation available to push memory very far.

The board supports the DDR3-2400 data rate, but limitations of this CPU's memory controller made it impossible for most modules to reach that setting. You can underclock or overclock the base clock by a wide enough margin to fill the holes between 2133 and 2400, etc.

> bauboni: It would be nice to compare these 2.4 GHz quad-channel kits with the usual 1.6 GHz dual-channel kits, especially in gaming scenarios.

That's why there's a DDR3-1600 reference data set on each chart. Of course it's quad-channel, because that's what the CPU is designed to run, and we wouldn't want to artificially handicap it...would we?

noob2222: Where is AMD's memory testing? Since SB-E is mostly SB, memory wasn't expected to improve much anyway. http://www.anandtech.com/show/4503/sandy-bridge-memory-scaling-choosing-the-best-ddr3/6

SB-E hasn't changed much here: at most a ~1% boost.

bauboni:
> Crashman: The board supports the DDR3-2400 data rate, but limitations of this CPU's memory controller made it impossible for most modules to reach that setting. You can underclock or overclock the base clock by a wide enough margin to fill the holes between 2133 and 2400, etc.
>
> That's why there's a DDR3-1600 reference data set on each chart. Of course it's quad-channel, because that's what the CPU is designed to run, and we wouldn't want to artificially handicap it...would we?

Well, I really wanted to see the practical difference between dual- and quad-channel in gaming =P

Crashman:
> panderaamon: I'll take 8 GB-per-slot RAM for my X79, thank you very much. Also, it would have been nice to add some RAM disk benchmarks to the review as well.

The reason you didn't see 16 GB kits in the past is that Tom's Hardware has always had trouble finding "widespread" applications that could benefit from more than 8 GB. A RAM disk is an interesting option for eight-DIMM motherboards because 64 GB can be employed. That would be really handy for a 48 GB RAM disk and 16 GB of free memory!

Of course, we'd like to gauge the marketability of this concept before putting money behind it, so perhaps you can start a thread in the Forums to gauge its popularity? On a platform limited to $500 to $1,000 CPUs, would any readers really spend that much a second time for memory?

CaedenV: So... while there are differences in synthetic tests, there is no practical difference between DDR3-1600 and DDR3-2133 (and in some cases a negative effect). A bit disappointing, but it does match previous test results.

Just wondering, but does this mean there is a bottleneck in the CPU? Is OCing the RAM worth it when paired with a 5 GHz processor? It is just hard to recommend any of these products when there is so little difference between them and the stock version. Good article, though.

CaedenV:
> Crashman: That would be really handy for a 48 GB RAM disk and 16 GB of free memory! Of course, we'd like to gauge the marketability of this concept before putting money behind it, so perhaps you can start a thread in the Forums to gauge its popularity? On a platform limited to $500 to $1,000 CPUs, would any readers really spend that much a second time for memory?

I have always wanted a RAM disk simply due to the slow seek speed of HDDs, but now, with SSDs available (and dropping in price like a rock), it simply makes more sense (and is easier) to use an SSD or SSD RAID instead. Sure, system RAM is still faster, but SSDs take the cake for speed/size/performance in most applications where a RAM disk would previously have had a sizable advantage. RAM disks still have a home in servers, but for video/audio/3D work on a workstation, I think the money would be better spent elsewhere.

All the same, I would love to be proved wrong and see some real-world tests on the subject!

theuniquegamer: I didn't expect quad-channel RAM kits to carry such a premium price, like the G.Skill Ripjaws.