Samsung 950 Pro 256GB RAID Report


What Is RAID And Initial Performance Testing

A Redundant Array of Independent (formerly Inexpensive) Disks, or RAID, is often used to combine multiple physical drives into one logical volume in an effort to increase performance, capacity and/or data integrity. A case was first made for the technology in a June 1988 white paper called "A Case for Redundant Arrays of Inexpensive Disks (RAID)," presented at the SIGMOD conference. In that paper, the authors suggested the highest-performance mainframe disks could be outperformed by an array of inexpensive drives more commonly found in the PC space. Although failures would rise in proportion to the number of physical devices, by configuring for redundancy, the reliability of an array could far exceed that of any single repository. Prior to this paper, five levels of RAID were already in use at various companies, but the technology had yet to be standardized.

The level we're using today is RAID 0. This joins two or more drives to increase performance and storage capacity. The downside to RAID 0 is that it multiplies the risk of a failure. If one drive in a two-disk array dies, the data on both disks is lost.
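To put a rough number on that risk, here is a minimal sketch. It assumes drive failures are independent, and the 2% per-drive failure probability is a made-up figure for illustration, not a measured rate for the 950 Pro.

```python
def raid0_failure_probability(per_drive_failure: float, drives: int) -> float:
    """Chance that at least one member drive fails.

    In RAID 0, losing any one member loses the whole array.
    Assumes drive failures are independent of each other.
    """
    return 1.0 - (1.0 - per_drive_failure) ** drives


# Hypothetical 2% chance that any one drive fails over some period.
p = 0.02
single = raid0_failure_probability(p, 1)
pair = raid0_failure_probability(p, 2)
print(f"single drive: {single:.4f}, two-drive RAID 0: {pair:.4f}")
```

With small per-drive probabilities, the array's risk comes out at very nearly the per-drive risk multiplied by the number of drives.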

RAID In The Home PC

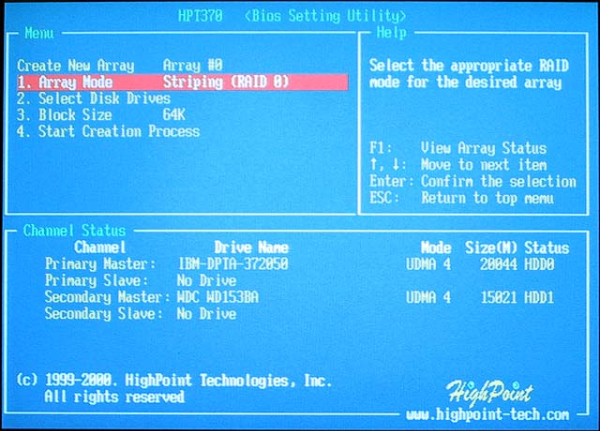

The year 2000 was an interesting one for PC enthusiasts. The Parallel ATA (PATA) bus' ceiling rose from 33 MB/s to 66 MB/s. Intel's wildly popular 440BX chipset didn't support the newer specification, so add-in card manufacturers scrambled to support it through discrete controllers. Promise was one such company, and it built two corresponding products: the Ultra66 (an HBA without RAID support) and the FastTrak66 (a PATA RAID controller). Other than a single resistor, the two were identical. Their firmware was unique, but that didn't stop power users from soldering on the missing surface-mount component and flashing the lower-cost card with the flagship's RAID firmware. You can read more about that procedure from Tom's Hardware founder Tom Pabst.

RAID Goes Mainstream

Shortly after the Promise Ultra66 to FastTrak66 mod became popular, manufacturers added RAID support to overclocking-friendly motherboards like Abit's BE6-II. By then, high-end copper-based air coolers and liquid-cooling kits had emerged to take Intel's highly tweakable Coppermine CPUs to new heights. The $300 Pentium III 500MHz could hit 750MHz right out of the box with minimal effort. That 750MHz model should have cost three times more than the 500MHz version. It was a good time to be an enthusiast. Increasing storage performance through RAID was just the next logical step.

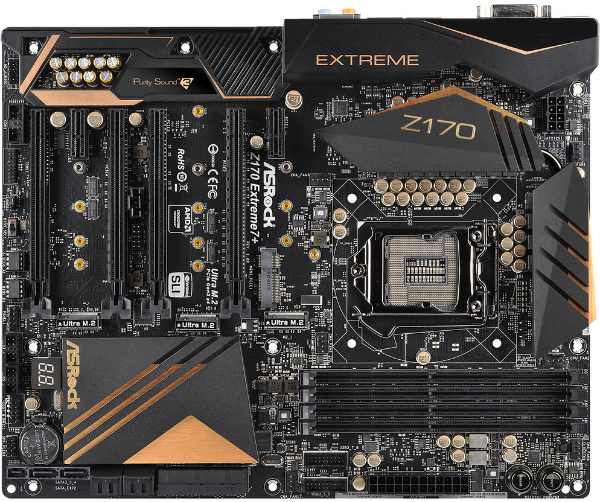

We've come a long way since the 440BX. Most modern motherboards have RAID support built right into the chipset. RAID for PCIe-based devices is another matter entirely. It's a newer idea that builds on concepts standardized long ago.

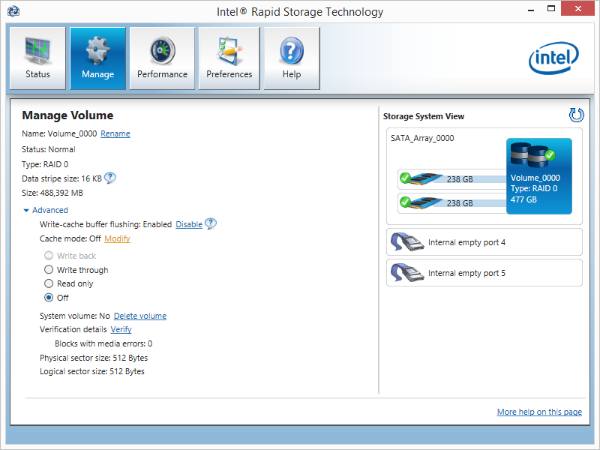

Today, only Intel's Z170 chipset supports booting an operating system from an array of PCIe-attached drives. But not all Z170-based platforms enable the feature. ASRock's Z170 Extreme 7+ goes a step further than most. It supports three M.2 drives for RAID 0 (two-drive performance increase), RAID 1 (two-drive mirroring) and RAID 5 (three-drive performance and parity protection). In this review, we're using the Z170 Extreme 7+ with two 950 Pros in RAID 0 to compare against a single, larger 950 Pro. Our array settings are shown in the image above; we're testing with a 16KB stripe size.
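For readers unfamiliar with striping, the sketch below shows how a 16KB stripe size spreads data across two drives. It uses the textbook round-robin RAID 0 layout; the function name `locate` is our own, and we are not claiming this is exactly how Intel's RAID driver arranges data on disk.

```python
STRIPE_SIZE = 16 * 1024  # 16KB stripe size, as used in this review
NUM_DRIVES = 2           # two 950 Pros in RAID 0


def locate(logical_offset: int,
           stripe_size: int = STRIPE_SIZE,
           num_drives: int = NUM_DRIVES) -> tuple[int, int]:
    """Map a logical byte offset to (drive index, byte offset on that drive)."""
    stripe_index = logical_offset // stripe_size
    drive = stripe_index % num_drives
    # Each drive holds every num_drives-th stripe, packed back to back.
    offset_on_drive = (stripe_index // num_drives) * stripe_size \
        + logical_offset % stripe_size
    return drive, offset_on_drive


for offset in (0, 16 * 1024, 32 * 1024):
    print(offset, locate(offset))
```

Consecutive 16KB chunks alternate between the two drives, which is why a large sequential transfer can keep both drives busy at once while a small request touches only one.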

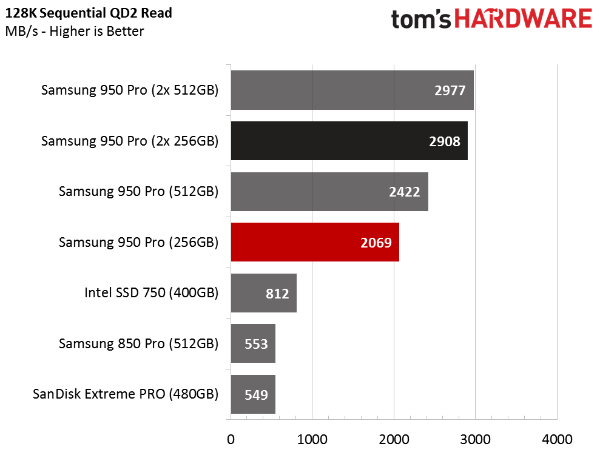

Sequential Read Performance

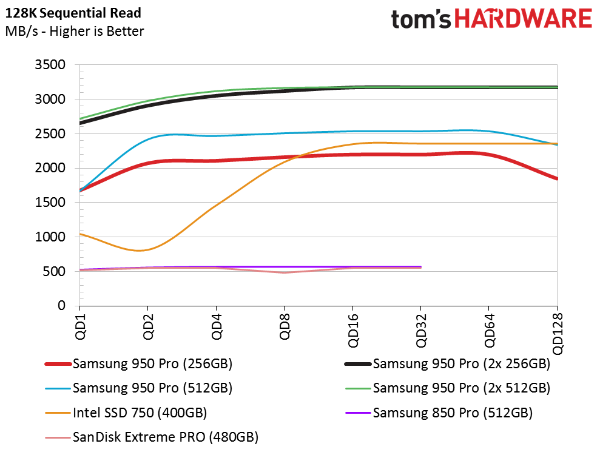

We're including a few alternatives in these charts, including Samsung's 850 Pro and SanDisk's Extreme Pro, the fastest SATA-based client SSDs out there. SATA only scales to a queue depth of 32, so we can't generate data out to 128 commands deep like the PCIe-based drives (they can actually go to 256 with another 256 commands per queue).


Samsung's 256GB 950 Pro delivers just over 2000 MB/s sequential read performance. The Z170's storage controller communicates with the CPU through the Direct Media Interface (DMI), which shares bandwidth with several other devices. The DMI just isn't capable of doubling the speed of a single 950 Pro because it's limited to less than 4 GB/s between the PCH and CPU.
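The back-of-the-envelope math behind that ceiling can be sketched as follows. It assumes the Z170's DMI 3.0 link behaves like four PCIe 3.0 lanes (8 GT/s each with 128b/130b encoding) and uses 2000 MB/s as a round figure for one drive; packet and protocol overhead, which we ignore here, push the usable number lower still.

```python
LANES = 4                          # DMI 3.0 is equivalent to a x4 PCIe 3.0 link
TRANSFERS_PER_SECOND = 8e9         # 8 GT/s per lane
ENCODING_EFFICIENCY = 128 / 130    # 128b/130b line encoding


def link_ceiling_mb_s(lanes: int = LANES,
                      rate: float = TRANSFERS_PER_SECOND,
                      efficiency: float = ENCODING_EFFICIENCY) -> float:
    """Raw link bandwidth in MB/s, before packet and protocol overhead."""
    bits_per_second = lanes * rate * efficiency
    return bits_per_second / 8 / 1e6


single_drive_mb_s = 2000           # round figure for one 256GB 950 Pro
ideal_raid0_mb_s = 2 * single_drive_mb_s
ceiling = link_ceiling_mb_s()

print(f"ideal two-drive read: {ideal_raid0_mb_s} MB/s")
print(f"DMI ceiling:          {ceiling:.0f} MB/s")
```

Perfect scaling would need more than the link can carry, and that is before any other device behind the PCH takes its share.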

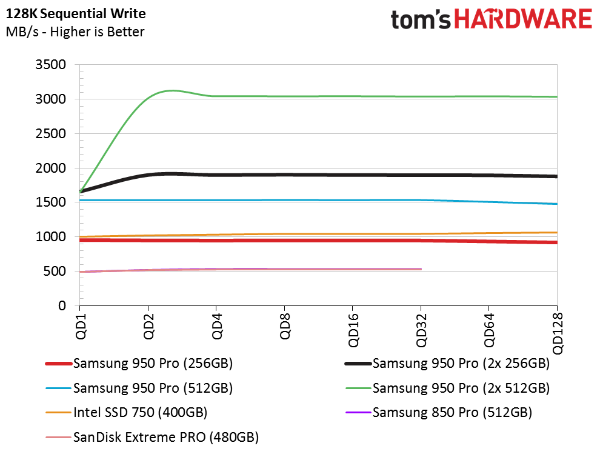

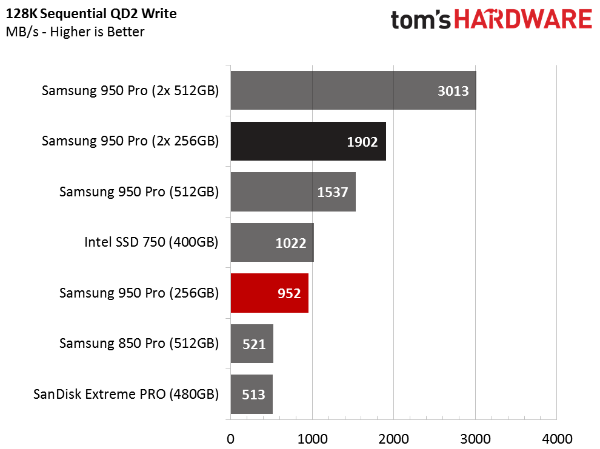

Sequential Write Performance

The 256GB 950 Pro's sequential write speeds differ from the 512GB model. The higher-capacity model can write at 1500 MB/s, while the smaller drive is limited to 900 MB/s, per Samsung's specifications. Both of the striped 950 Pro arrays double the performance of a single drive, and the two 256GB drives outperform one 512GB 950 Pro by nearly 400 MB/s.

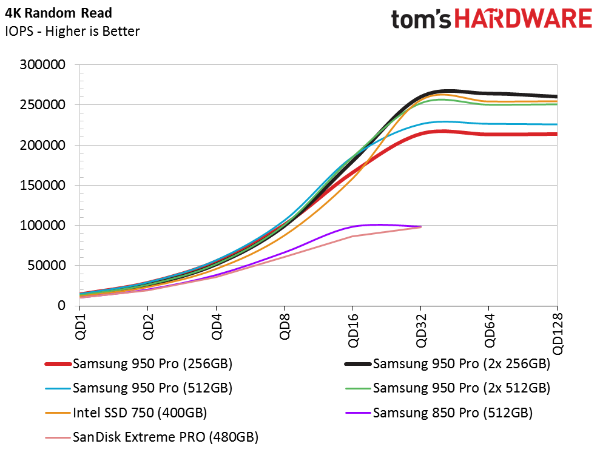

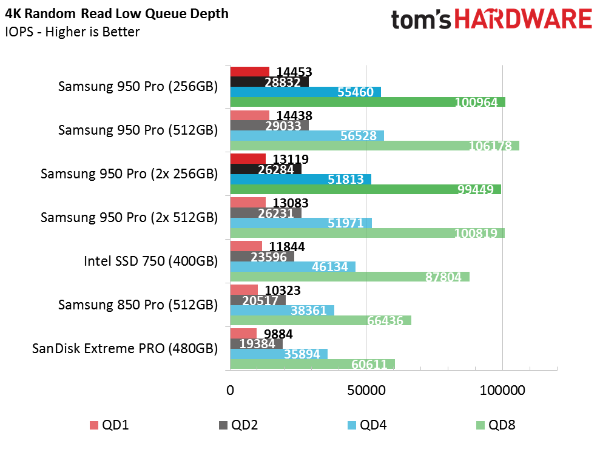

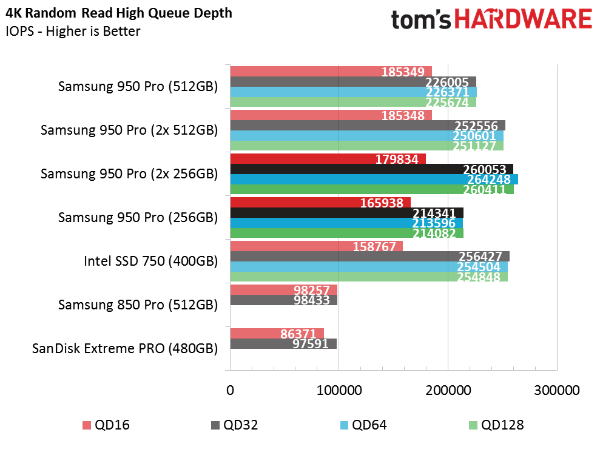

Random Read Performance

The array of 256GB 950 Pros achieves higher random read performance than a single drive, but the increase is only measurable at high queue depths. In a normal desktop environment, you simply won't see the benefit. In fact, at low queue depths, where most desktop workloads operate, the RAID array is actually a little slower than a single drive.

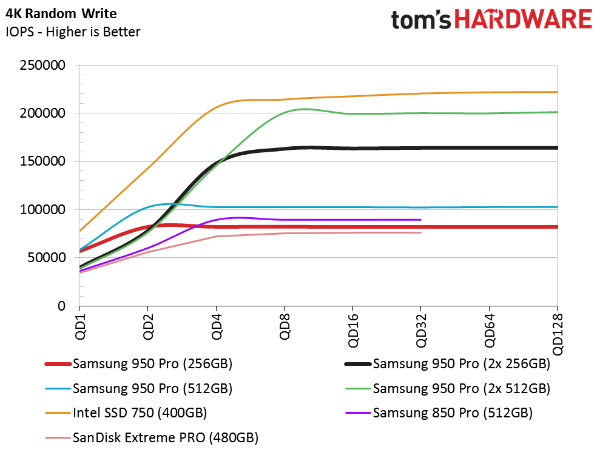

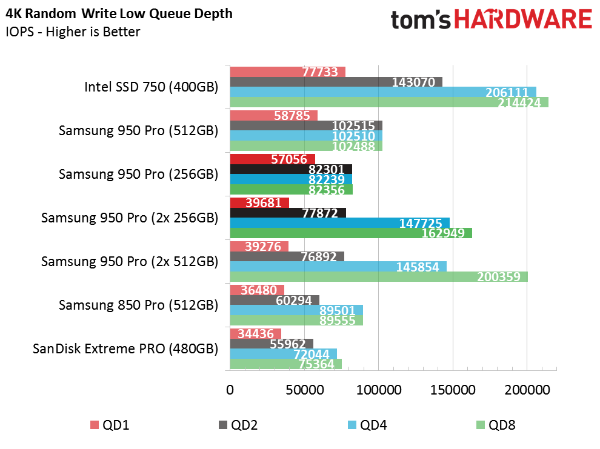

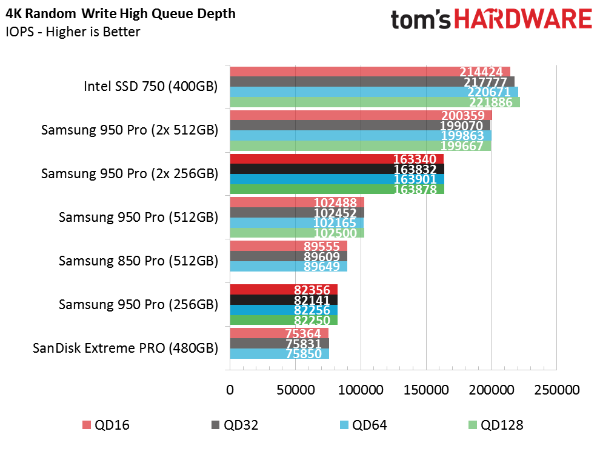

Random Write Performance

We also observe lower random write performance at low queue depths in RAID 0. Configuring the DMI for RAID adds latency, which cuts into measurable IOPS. At queue depths of four and up, the array can use the extra device bandwidth.
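The link between latency and IOPS follows from Little's Law: throughput is roughly the number of outstanding commands divided by the time each one takes. The sketch below uses invented latency figures (100 microseconds for a single drive, 20 microseconds of added RAID overhead) purely to show the shape of the effect; they are not measurements from our testing.

```python
def iops(queue_depth: int, latency_seconds: float) -> float:
    """Little's Law: throughput = commands in flight / time per command."""
    return queue_depth / latency_seconds


# Hypothetical latencies, chosen only for illustration.
single_drive_latency = 100e-6   # 100 microseconds per command
raid_overhead = 20e-6           # extra latency added by the RAID layer

single_qd1 = iops(1, single_drive_latency)
array_qd1 = iops(1, single_drive_latency + raid_overhead)
print(f"QD1 single drive: {single_qd1:.0f} IOPS")
print(f"QD1 RAID 0 array: {array_qd1:.0f} IOPS")
```

At a queue depth of one there is only ever a single command in flight, so the second drive sits idle and the added latency is pure loss. This simple model does not capture what happens at deeper queues, where commands spread across both drives.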


Chris Ramseyer was a senior contributing editor for Tom's Hardware. He tested and reviewed consumer storage.

USAFRet, replying to "Why do you expect gains in video games when everything relevant is loaded in RAM?":

A lot of people do.

Assuming that the performance gains we saw with spinning disks in RAID 0 automagically does the same with SSD's. It does not.

Tibeardius: Did it have any sort of thermal throttling occur? These pcie m.2 drives can get pretty hot.

Integr8d: "Once upon a time, you could sling a couple of Western Digital Raptors together, fire up a level in Battlefield 2 before anyone else, get the plane and dominate the map."

Someone just explained 24 months of my life:)

HT, replying to "Why do you expect gains in video games when everything relevant is loaded in RAM?":

that's the point of faster drives, loading it all in ram. do you think it magically appears there by itself ?

HT: good article Chris, i'm intrigued by your statement of the samsung driver vs the M$ one, i would've liked to see some numbers comparing the two.

Virtual_Singularity: Interesting article. Also: am a lil' dumbfounded at how much the price of the 850 pro series has dropped since the holidays, a mere 3+ months ago...