AMD FX-4170 Vs. Intel Core i3-3220: Which ~$125 CPU Should You Buy?

AMD has the clock rate on its side. But Intel's Ivy Bridge architecture boasts superior IPC throughput. We pit the 4.2 GHz FX-4170 against Intel's new 3.3 GHz Core i3-3220 in an effort to determine which CPU is the better buy for $125.

A Close Race Today, But Tomorrow Shows More Promise For AMD

We're already intimately familiar with the Bulldozer and Ivy Bridge architectures, so nothing that we saw today is particularly surprising. Single-threaded applications are going to hum on Intel's chip, while applications able to tax the FX's four integer clusters are going to treat two Bulldozer modules more like a quad-core processor (not quite, though, as the Core i5's stellar performance demonstrates).

The real questions, then, are these: what does a dramatically higher clock rate do for AMD's offering? More specifically, what does the bump up to a 125 W TDP do for power consumption? And how does Intel compare, given its dual-core implementation?

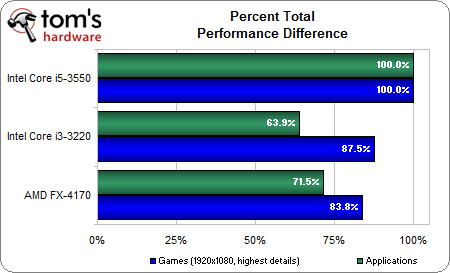

Despite proof that some of the games we tested do take advantage of quad-core CPUs, the dual-core Core i3-3220 takes the lead in gaming, mostly as a result of Skyrim. The FX-4170, on the other hand, serves up better application performance, and by a larger margin. Considering the way people use their PCs, we're inclined to put more value on the larger productivity win favoring AMD, particularly since the apps where an FX excels are threaded. Those are the workloads that require more processing power.

If we were to make our judgement on performance alone, AMD's FX-4170 would have the edge.

But there's another side to this story. It starts with power consumption, and ends with efficiency.

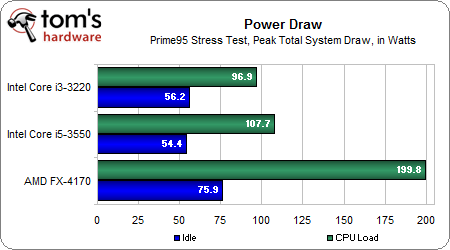

At idle, the FX-4170-based machine uses almost 20 W more than the Core i3-3220-based box. Under load, that gap grows to a staggering 103 W. The dual-module FX almost doubles the consumption of a quad-core Core i5, in fact.

Yes, the load comes from a largely synthetic Prime95 run, and yes, it's unlikely you'll ever see such nasty power numbers on a day-to-day basis. Nevertheless, two times the power consumption really puts AMD's small performance advantage into context. Efficient, this CPU is not. Whether or not that matters to you is a personal decision.
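For readers who want to weigh that decision in terms of a power bill, here is a rough sketch in Python. The two wattage gaps are the figures quoted above; the hours of use per day and the electricity price are hypothetical placeholders, not measurements from our testing. Because the load figure comes from Prime95, treat the result as an upper bound rather than a typical cost.

```python
# Power gaps quoted in the article (FX-4170 system minus Core i3-3220 system).
IDLE_GAP_W = 20    # "almost 20 W" at idle, rounded up
LOAD_GAP_W = 103   # under the Prime95 load

def extra_energy_kwh(idle_hours, load_hours, days=365):
    """Extra energy in kWh over `days` days, given hours per day in each state."""
    daily_wh = IDLE_GAP_W * idle_hours + LOAD_GAP_W * load_hours
    return daily_wh * days / 1000

def extra_cost(idle_hours, load_hours, price_per_kwh, days=365):
    """Extra running cost over `days` days at a flat electricity price."""
    return extra_energy_kwh(idle_hours, load_hours, days) * price_per_kwh

# Hypothetical usage pattern: 6 h idle and 2 h full load per day at $0.12/kWh.
kwh = extra_energy_kwh(idle_hours=6, load_hours=2)
cost = extra_cost(idle_hours=6, load_hours=2, price_per_kwh=0.12)
print(f"{kwh:.1f} kWh/year extra, about ${cost:.2f}/year")
```

Swap in your own hours and local rate. A machine that spends most of its time idle is dominated by the 20 W gap, while one that runs threaded workloads all day moves much closer to the 103 W figure.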


What we're really looking forward to, though, is Vishera. Intel put its cards on the table earlier this year with its Ivy Bridge architecture, and Haswell-based chips won't show up until the second quarter of next year. More immediately, we're expecting FX CPUs based on AMD's Piledriver architecture this month. We've seen evidence that Piledriver may add up to 15% more performance in the same thermal envelope as Bulldozer. If that holds true, then a processor with two Piledriver modules in the same $125 range should help the company claw back some of the performance/watt deficit it currently suffers.

You can bet we'll revisit this topic when those chips start showing up.

Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

EzioAs Nice review as always. Nothing really too surprising but I guess it was quite necessary to compare the 2 CPUs at the same price point (not everybody prefers Intel). If Piledriver pulls through (I hope it does), then maybe AMD will have the slight edge at performance per dollar against the i3 Ivy Bridge.

jerm1027 At $125, they should have included the FX-6100. That's $120 on Newegg, plus an additional $15 off on sale.

Soma42 Nothing too surprising I suppose. AMD is looking to cut 20% of its workforce and I think I know why. Performance is hit or miss and at twice the power of Intel's offerings. Intel is getting close to competing with ARM with their mainstream lineup and AMD's dual module is still at 125W. What's wrong here?

Hope Piledriver is all that it promises and more.

theabsinthehare This hurts. I've been an AMD fan for a long time and I was really excited for Bulldozer. However, after seeing the lackluster performance, I put off upgrading until Piledriver. I'm not so sure about Piledriver now, though, and I think it might be time to throw in the towel and finally buy an Intel chip for once.

abitoms One thing in the review's last page's title really piqued me:

"...but Tomorrow Shows More Promise for AMD".

Tomorrow...as in ... Oct 16, 2012 or is it only figurative?

abhijitkalyane Hope Vishera changes this - a $125 chip that can beat i3 at stock (apps & games) and can ALSO be overclocked would be a great thing for budget enthusiasts, who right now are stuck with either locked Intel CPU or not-so-great-performing AMD CPU. Power numbers still don't look good. Probably due to the 32nm process (and also due to architectural differences I suppose). Is Vishera 32nm or 22? Fingers crossed for healthy competition :)

cleeve (replying to jerm1027: "At $125, they should have included the FX-6100. That's $120 on Newegg, plus an additional $15 off on sale.")

At 3.3 GHz, the 6100 doesn't fare well. It's easily out-gamed by the FX-4170, and only gets a bit of a break in highly threaded apps.

user32123 Why not compare with Trinity? It has higher performance, lower power and modern chipset.