Graphics Beginners' Guide, Part 1: Graphics Cards

The Basic Parts Of A Graphics Card

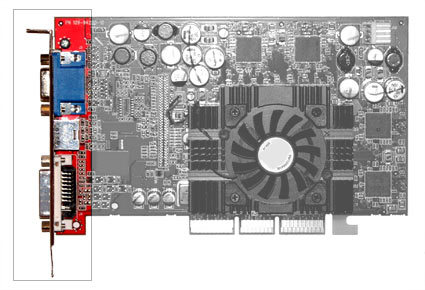

Outputs

This is where the graphics card's outputs are located. One edge of almost every expansion card is accessible from the back of a PC, and that edge carries a metal bracket holding the card's connectors.

Once a graphics card is installed in a PC, only the connectors at the back of the case remain visible. This is the part of the graphics card that the display cable plugs into. Many graphics cards have two outputs, so more than one display device can be used at a time. There are many kinds of display interfaces, but the main PC display interfaces are either digital or analog.

PCs are digital machines that process binary zeros and ones, so digital is the native output of a graphics card. The modern display, however, descends from a long lineage of cathode ray tubes (CRTs). A CRT display uses an electron gun to strike three different materials on the inside of the tube, which emit red, green and blue light when excited. These early devices were analog by nature, so a component called a digital-to-analog converter (DAC) made its way into graphics outputs to convert the digital signal to analog. With the advent of digital displays such as liquid crystal displays (LCDs), the DAC is becoming unnecessary, but the component is still included for analog support.
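To make the DAC's job concrete, here is a minimal sketch of an idealized conversion from a digital color value to an analog level. It assumes 8 bits per color channel and a full-scale level of 0.7 V, the nominal peak video level on VGA color lines; neither figure comes from this article, and the function names are made up for illustration. A real DAC also has to deal with timing, noise and output impedance, which this ignores.

```python
# Idealized DAC sketch. Assumptions (not from the article):
# 8 bits per color channel, 0.7 V full-scale analog level.
FULL_SCALE_VOLTS = 0.7
MAX_CODE = 255  # largest value an 8-bit channel can hold


def dac_level(code: int) -> float:
    """Map one 8-bit digital channel value to an analog voltage."""
    if not 0 <= code <= MAX_CODE:
        raise ValueError("code must be in the range 0..255")
    return FULL_SCALE_VOLTS * code / MAX_CODE


def pixel_to_volts(red: int, green: int, blue: int) -> tuple:
    """Convert one digital RGB pixel to three analog channel levels."""
    return tuple(dac_level(channel) for channel in (red, green, blue))


if __name__ == "__main__":
    # A pure white pixel drives all three channels to full scale.
    print(pixel_to_volts(255, 255, 255))
```

The point of the sketch is only that each step in the digital value becomes a small step in voltage, which is why the quality of the analog output stage affects how crisp the picture looks.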

VGA Outputs (D-Sub)

The analog display connector has 15 pins and can be identified by its blue color.

When VGA refers to a resolution, it stands for "video graphics array" (a fixed number of horizontal and vertical pixels). In the graphics hardware sector, however, it is used to mean "video graphics adapter." The corresponding connector is called D-Sub 15, and it carries an analog display signal whose quality may vary depending on the particular product. Most higher-end graphics cards should be capable of delivering clean signals that support a crisp display at high resolutions.

This interface was the standard output before the digital DVI (Digital Visual Interface) came along, and it is still very common. D-Sub VGA outputs will connect to most classic CRT (tube-style) monitors. They will also connect to most digital projectors and even some HDTVs (high-definition televisions), although we advise against using them with any type of digital display for the sake of image quality.


DVI Outputs

DVI stands for Digital Visual Interface.

DVI is the standard digital output for graphics cards and flat panel displays (with the exception of low-budget models). If your model dates from 2004 or later, chances are it has a DVI output. Most graphics cards with DVI outputs come with an adapter that converts DVI to VGA/D-Sub in case you don't have a DVI display. All state-of-the-art graphics cards feature dual DVI ports, so you can attach two displays and extend your Windows desktop across both. However, any two outputs, whether one D-Sub and one DVI or two of the same type, can support dual display modes. New digital displays such as the Dell and Apple 30" displays require a dual-link graphics card output and cable to display their native resolution of 2560x1600.
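The dual-link requirement can be checked with some rough arithmetic. The sketch below assumes a 60 Hz refresh rate and the 165 MHz pixel clock ceiling of a single DVI link; both figures are outside this article, and the function names are hypothetical. It counts only visible pixels and ignores blanking intervals, so it gives a lower bound on the pixel rate: a result above the limit means a single link cannot carry the mode, while a result below it is not a guarantee that it can.

```python
# Rough bandwidth check. Assumptions (not from the article):
# 60 Hz refresh, 165 MHz single-link DVI pixel clock limit.
SINGLE_LINK_MAX_HZ = 165_000_000


def active_pixel_rate(width: int, height: int, refresh_hz: int = 60) -> int:
    """Visible pixels per second, ignoring blanking (a lower bound)."""
    return width * height * refresh_hz


def exceeds_single_link(width: int, height: int, refresh_hz: int = 60) -> bool:
    """True when even the visible pixels alone overrun a single link."""
    return active_pixel_rate(width, height, refresh_hz) > SINGLE_LINK_MAX_HZ


if __name__ == "__main__":
    for width, height in [(1920, 1200), (2560, 1600)]:
        rate_mhz = active_pixel_rate(width, height) / 1e6
        print(width, height, rate_mhz, exceeds_single_link(width, height))
```

Under these assumptions, 2560x1600 needs about 246 million visible pixels per second, well past what one link carries, while 1920x1200 stays under the limit.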


Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.


srinivasgtl: Is it possible to get different output on different output ports on the graphics card? I want to play two different streams and use the two ports of the same graphics card to display these streams on two different TVs. Is this possible?