Early Review Shows DLSS 3 Amplification Works Best With Lower Native Frame Rates

DLSS 3 shows promise as well as input lag

Digital Foundry recently released an exclusive first look at DLSS 3 performance on Nvidia's new RTX 40 series Ada Lovelace GPUs. The video showed impressive FPS gains on an RTX 4090, with frame rate boosts going up as high as 500%. However, the tech outlet also demonstrated DLSS 3's flaws, such as increased input lag and artifacting in the AI-generated frames, which can create some unwanted side effects in the gameplay experience.

DLSS 3 is an evolution of Nvidia's Deep Learning Super Sampling, and now handles both image upscaling and frame generation. This means DLSS 3 will upscale an image (like DLSS 2) and also generate entire AI-created frames by itself, substantially increasing frame rates without the help of traditional 3D rendering.

This doesn't mean DLSS 2 is going away — rather, DLSS 2 and DLSS 3 will exist in tandem, depending on what game developers want to implement. DLSS 3 is simply the frame-amplified version of DLSS 2.

As a result, you'll still be able to choose DLSS 2's image quality presets — such as Quality, Balanced and Performance — with DLSS 3 as well.

The technology works by rendering two conventional frames (generated by the GPU cores and upscaled by DLSS) and then inserting an AI-generated frame directly in the middle of those two frames. As a result, frame rates get boosted immensely, but input lag increases significantly, because both rendered frames have to be held back while the AI frame is generated.
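To make the latency cost concrete, here is a minimal timing sketch of frame interpolation in general. It is a simplified model and not Nvidia's actual pipeline: it assumes rendered frames arrive at a fixed interval, that the in-between frame takes a fixed `gen_cost_ms` to generate, and that output frames are paced half a frame time apart. The function names and the 3 ms generation cost are hypothetical.

```python
def added_latency_ms(frame_time_ms, gen_cost_ms):
    """Extra delay before a rendered frame reaches the display in this model."""
    return gen_cost_ms + frame_time_ms / 2


def present_times(frame_time_ms, gen_cost_ms, n_rendered=3):
    """List (label, present_time_ms) for frames shown after interpolation."""
    events = []
    for i in range(1, n_rendered):
        ready = i * frame_time_ms      # rendered frame i is finished
        shown = ready + gen_cost_ms    # the in-between frame is shown first
        events.append((f"generated {i - 1}.5", shown))
        # The rendered frame is held back so the output stays evenly paced.
        events.append((f"rendered {i}", shown + frame_time_ms / 2))
    return events


if __name__ == "__main__":
    # Assumed example: 50 FPS rendering (20 ms per frame), 3 ms generation cost.
    for label, t in present_times(frame_time_ms=20.0, gen_cost_ms=3.0):
        print(f"{t:6.1f} ms  {label}")
    print("added latency:", added_latency_ms(20.0, 3.0), "ms")
```

In this model, each rendered frame reaches the screen half a frame time plus the generation cost later than it would without interpolation, which is the kind of delay Reflex is meant to offset.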

To counter this, Nvidia is requiring all DLSS 3-enabled games to feature Nvidia Reflex technology, to keep input lag as low as possible.

For now, DLSS 3 only supports RTX 40 series GPUs, since the technology requires a more powerful optical flow accelerator that currently exists only in the Ada Lovelace GPU microarchitecture. Nvidia does say that DLSS 3 can technically run on previous generations of RTX GPUs, but these older GPUs aren't powerful enough to run it well.


As a result, there is technically a chance some variation of DLSS 3 might make its way to RTX 20 and 30 series GPUs — but it's highly unlikely. It would take a miracle, from a software optimization standpoint, to get it to run well.

Performance Characteristics

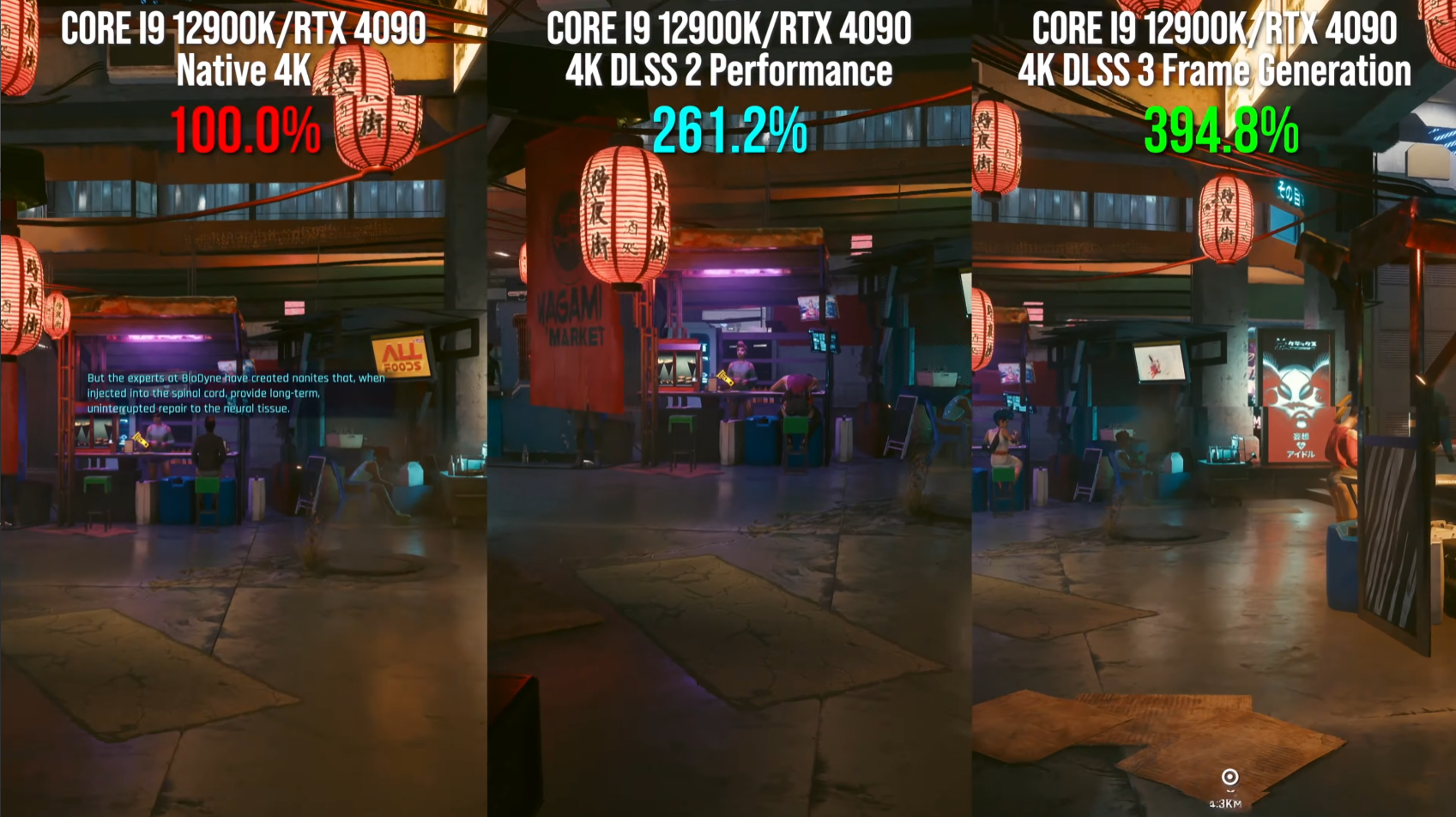

Digital Foundry was given three different titles featuring DLSS 3 integration to look at: test builds of Cyberpunk 2077, Portal RTX, and Spider-Man Remastered, all running on a GeForce RTX 4090. Due to embargo limitations, Digital Foundry was only able to provide percentage-based uplifts and not absolute frame rate results.

In Cyberpunk 2077, Digital Foundry saw a 3.9x performance multiplier with DLSS 3 enabled, compared to running the game at a native 4k resolution. DLSS 2 in performance mode was slower, at "just" 2.5x faster than native resolution.

Spider-Man Remastered showed a significantly smaller performance boost, with DLSS 3 only providing a 2x FPS multiplier over native 4k resolution. But it was still much faster than DLSS 2 performance mode, which only offers a 9% frame rate boost over native. (The reason for DLSS 2's low performance results is CPU bottlenecking.)

Portal RTX showed the biggest performance leap with DLSS 3, with a whopping 5.5x frame rate boost over native 4k rendering. That puts DLSS 3's multiplier 2.2 points above DLSS 2 in performance mode (roughly 1.7x as fast), though DLSS 2 still provides a 3.3x performance improvement over native resolution. (It's worth noting that Portal RTX can be seen running at around 20 FPS at 4k native in the YouTube video, so the native frame rate for this game is incredibly low to begin with, especially for an RTX 4090.)
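Because the figures above are all multipliers over native 4k, the gain of DLSS 3 over DLSS 2 performance mode can be worked out by dividing one by the other. The short sketch below does that with the numbers reported in this article; the 1.09 entry is the 9% Spider-Man Remastered boost expressed as a multiplier, and the variable and function names are only illustrative.

```python
# Multipliers over native 4k as reported in this article:
# (DLSS 3, DLSS 2 performance mode)
REPORTED = {
    "Cyberpunk 2077": (3.9, 2.5),
    "Spider-Man Remastered": (2.0, 1.09),  # 9% boost written as 1.09x
    "Portal RTX": (5.5, 3.3),
}


def dlss3_over_dlss2(dlss3_mult, dlss2_mult):
    """Relative speed of DLSS 3 versus DLSS 2, both measured against native."""
    return dlss3_mult / dlss2_mult


if __name__ == "__main__":
    for game, (d3, d2) in REPORTED.items():
        print(f"{game}: DLSS 3 is {dlss3_over_dlss2(d3, d2):.2f}x DLSS 2")
```

Dividing the multipliers gives roughly 1.56x for Cyberpunk 2077, 1.83x for Spider-Man Remastered, and 1.67x for Portal RTX. For Portal RTX, 2.2 is the difference between the two multipliers (5.5 minus 3.3), not their ratio.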

DLSS 3 Performance Scales Better At Lower Native Frame Rates

Digital Foundry's analysis shows that DLSS 3 works best when native frame rates are very low, and that its performance multiplier shrinks as native frame rates increase. This is apparent with Portal RTX, where you can actually see the frame rate differences between native and the DLSS 2 and DLSS 3 versions.

Compare this to Spider-Man Remastered, which isn't that difficult a game to run at max settings on flagship hardware, and can easily hit frame rates well over 60 FPS as long as you aren't CPU bottlenecked. This is why we see the lowest DLSS 3 multiplication gains in this title.

This side effect makes a lot of sense given how DLSS 3 works: after rendering two complete frames, DLSS 3 has to wait several milliseconds while it generates the AI frame, before releasing all three frames to your display. This additional wait time takes a bigger and bigger bite out of DLSS 3's frame rate gains as overall frame rates increase.
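The reasoning above can be illustrated with a toy model. It assumes, purely for illustration, that generating the AI frame adds a fixed cost to each rendered frame, so two frames are delivered every `frame_time + gen_cost` milliseconds. The 3 ms cost is an assumed placeholder, not a figure from Nvidia or Digital Foundry, and the model covers only the frame generation step, not the upscaling gains.

```python
def frame_gen_multiplier(base_fps, gen_cost_ms):
    """FPS multiplier from frame generation alone in this toy model.

    Two frames are shown for every (frame_time + gen_cost) milliseconds,
    instead of one frame every frame_time milliseconds.
    """
    frame_time_ms = 1000.0 / base_fps
    return 2 * frame_time_ms / (frame_time_ms + gen_cost_ms)


if __name__ == "__main__":
    GEN_COST_MS = 3.0  # assumed fixed generation cost, hypothetical value
    for fps in (20, 60, 120, 240, 480):
        mult = frame_gen_multiplier(fps, GEN_COST_MS)
        print(f"{fps:4d} FPS before generation -> {mult:.2f}x")
```

With a fixed cost, the multiplier approaches 2x when frames are slow to render and falls toward 1x once the generation cost is comparable to the frame time, which matches the trend described above.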

However, even in high FPS environments DLSS 3 still provides bigger FPS boosts than DLSS 2 does in performance mode, so we suspect this will only be a problem at ultra high frame rates, perhaps beyond 700 FPS.

It does mean that DLSS 3 won't be great for competitive shooters, such as Overwatch and Apex Legends, where input lag is just as important as high FPS.

Input Lag Analysis

Digital Foundry shows that DLSS 3's combination with Nvidia Reflex is what makes the technology really shine. In Portal RTX, DLSS 3's input lag was cut nearly in half at 56ms, compared to native 4k rendering with Reflex enabled at 95ms (it was 129ms with it off).

In Cyberpunk 2077, the gains are different but still good for the DLSS 3/Nvidia Reflex combination. At native 4k with Reflex on, Digital Foundry saw a 62ms average (108ms with it off). With DLSS 3 and Reflex, this went down to 54ms. But input lag was lowest — 31ms with Reflex enabled and 42ms with it disabled — with DLSS 2 in performance mode.

This same behavior can be seen in Spider-Man Remastered, with a 36ms input delay with Reflex on at native 4k (39ms with it off). DLSS 3 and Reflex fell in between at 38ms. And DLSS 2 in performance mode showed the lowest input delay: 23ms with Reflex on, and 24ms with it off.
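To put the latency figures side by side, the sketch below computes how DLSS 3 with Reflex compares with native 4k with Reflex enabled, using only the numbers quoted in this section. The dictionary layout and function name are illustrative.

```python
# Input lag in milliseconds as quoted above, all with Reflex enabled:
# (native 4k, DLSS 3)
LATENCY_MS = {
    "Portal RTX": (95, 56),
    "Cyberpunk 2077": (62, 54),
    "Spider-Man Remastered": (36, 38),
}


def pct_change(before_ms, after_ms):
    """Percentage change in latency; negative means lower input lag."""
    return (after_ms - before_ms) / before_ms * 100


if __name__ == "__main__":
    for game, (native, dlss3) in LATENCY_MS.items():
        print(f"{game}: {pct_change(native, dlss3):+.1f}% vs native with Reflex")
```

The change ranges from about 41% lower in Portal RTX, to about 13% lower in Cyberpunk 2077, to about 6% higher in Spider-Man Remastered, so the latency benefit shrinks in the same titles where the native frame rate is higher.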

It's too bad DLSS 3 can't be used without Reflex, but the DLSS 2 performance mode results show us that Nvidia Reflex technology is doing a lot of the heavy lifting when DLSS 3 is enabled, heavily reducing input lag to playable levels.

Image Quality

Perhaps one of the most interesting topics surrounding DLSS 3 is the image quality of the AI-generated frames. Digital Foundry also tested this, and found DLSS 3 to be surprisingly good (though not perfect).

It looks like DLSS 3's weakness lies in hidden geometry, where information is missing between two frames because one piece of geometry overlaps another while in motion. This can cause DLSS 3 to "stutter" and output ugly artifacts as it tries to fill in the void of missing detail.

But everything else about the images looks solid, with very few issues.

Digital Foundry says they'll have to test DLSS 3 more thoroughly once they have a more rigorous testing methodology figured out. They haven't figured out a way to demonstrate whether or not DLSS 3's geometry issues can be seen in real time, since frame rates are so high with DLSS 3 anyway.

From what we could tell, DLSS 3's occasional artefacting is not visible in real-time — but we don't know what the game with DLSS 3 will look like in real life, due to compression and frame rate limitations on YouTube. Stay tuned for our own DLSS 3 testing in the near future.

Aaron Klotz is a contributing writer for Tom’s Hardware, covering news related to computer hardware such as CPUs and graphics cards.


GasLighterHavoc: Frame interpolation is not free in terms of latency. Easier to make fake frames if the frame rate is low.

digitalgriffin, quoting Admin: "An early review of DLSS 3 showed an impressive 5x performance improvement on the RTX 4090 in some situations, but sacrifices in image quality and input lag need to be made to get these ultra high frame rates."

Frame interpolation not available on 20 and 30 series is because money.

The hardware is most certainly there. If it's there in low power 55" 4K televisions, it's there on the 20 series. The hardware is not that technical. It's just money reasons they don't want to implement it. My 1080p 46" TV from 12 years ago has bloody frame interpolation. Are you telling me the 20 series and 30 series is less powerful than that?

It's like saying their broadcast software required tensor cores. Well they got caught in that lie didn't they?

God I hate their Horse Manure. Just tell the truth.