Intel's ATX v3.0 PSU Standard Has More Power for GPUs

There is a new PSU standard from Intel, leading to huge changes in the IT market!

Added ALL of Chapter 3 – PCIe Add-in Card Consideration

This new chapter contains all the big changes in the ATX spec that concern PCIe add-in cards, in other words graphics cards. Revision 5.0 of the PCIe spec introduced four major updates that affect power supplies.

- A Power Excursion allowance was introduced to support brief, high current demands on power beyond the rated TDP.

- The maximum power consumption for a single Add-in Card was doubled to 600 W. This is the per-card limit from all sources combined.

- A new 48V (nominal) power rail was added.

- Two new Auxiliary Power Connectors were introduced to provide up to 600 W on a single cable connector. The new 12VHPWR connector supports 600W on the 12V rail, while the 48VHPWR provides 600W on the 48V rail. Four new sideband signal conductors permit simple signaling between the Add-in Card and power supply.

By "Power Excursion," the PCIe spec means power spikes. The maximum power consumption for a single PCIe card has doubled to 600W, and two new power connectors have been introduced that can deliver 600W each. The 12VHPWR is for the 12V rail, and the 48VHPWR is for the new 48V rail that the PCIe CEM 5.0 spec added. The 48VHPWR is not relevant to desktop PSUs, so the ATX spec doesn't refer to it at all.

A significant point of interest is that power spikes for PCIe cards rated from 300 to 600W are only allowed when the 12VHPWR is used, not with the legacy 6 pin and 6+2 pin PCIe connectors. This key phrase signifies the importance of the new PCIe connector and the PSUs' design requirements. You cannot just take an old PSU platform, mount a 12VHPWR connector, and state that you are ATX v3.0 compatible, and we will see below why.

Moreover, Intel is thinking of adding power spike limits for PCIe cards with up to 300W power consumption, again using the 12VHPWR connector.

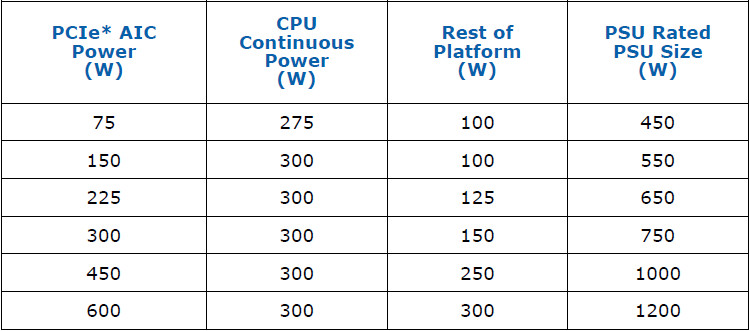

To set the maximum power requirement of the PSU for a PC system, Intel takes into account three major factors:

- CPU power consumption

- Graphics card power consumption

- The power consumption of the rest of the system's parts

As you can see in the table above, a 600W graphics card and a 300W CPU, along with 300W for the rest of the system (which is too high), call for a power supply of at least 1200W. We should note here that 600W graphics cards need a liquid cooling system, since the limit for air cooling solutions is 450W.
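To make the arithmetic concrete, here is a minimal Python sketch of that sizing rule. It assumes the recommendation is simply the sum of the three budgets, which matches the 600W + 300W + 300W example above. The function names and the constant are ours, not Intel's.

```python
# Air cooling limit for graphics cards quoted in the article.
AIR_COOLING_LIMIT_W = 450


def minimum_psu_watts(cpu_w: int, gpu_w: int, rest_w: int) -> int:
    """Assumption: the minimum PSU rating is the plain sum of the three budgets."""
    return cpu_w + gpu_w + rest_w


def needs_liquid_cooling(gpu_w: int) -> bool:
    """A card above the air cooling limit needs a liquid cooling system."""
    return gpu_w > AIR_COOLING_LIMIT_W


print(minimum_psu_watts(cpu_w=300, gpu_w=600, rest_w=300))  # 1200
print(needs_liquid_cooling(600))                            # True
```

A real build would add headroom on top of this figure. The sketch only reproduces the table's worst-case example.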

PSU Power Spike Tolerance

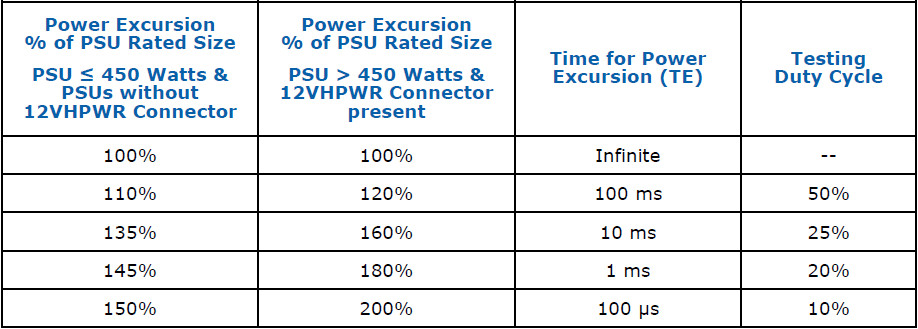

This is the largest change in the new ATX spec, and it goes hand in hand with the new 12+4 pin connector (12VHPWR). The table below lists the power spikes that the PSU has to withstand, according to its capacity. For PSUs rated at 450W or less, the spikes that must be tolerated without problems are notably lower than for PSUs with over 450W capacity.


The power spike period, which Intel calls Power Excursion time (TE), is the time for which the PSU must keep operating without problems. If a spike lasts longer than this, the PSU has to shut down to prevent failure.

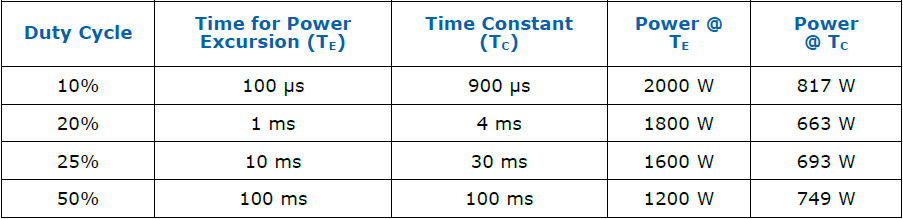

Very briefly, if the PSU has over 450W capacity, it should be able to deliver 200% of its rated power for 100μs with a testing duty cycle of 10%. This means that a 1000W PSU should be able to deliver 2000W for 100μs, return to a lower power level for 900μs, and then do the same cycle again.
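The timing of that test cycle follows directly from the duty cycle. The short Python sketch below works it out with integer math. The function name and parameters are our own, and it only covers PSUs above 450W, since the text gives no figures for smaller units.

```python
def excursion_cycle(rated_w: int, peak_percent: int = 200,
                    spike_us: int = 100, duty_percent: int = 10):
    """Return (peak power in W, cycle period in us, time at lower power in us)."""
    if rated_w <= 450:
        # The 200% figure applies only to PSUs with over 450W capacity.
        raise ValueError("limits for PSUs of 450W or less are not covered here")
    peak_w = rated_w * peak_percent // 100
    # The spike occupies duty_percent of the full cycle.
    period_us = spike_us * 100 // duty_percent
    rest_us = period_us - spike_us
    return peak_w, period_us, rest_us


print(excursion_cycle(1000))  # (2000, 1000, 900)
```

For the 1000W example this gives a 2000W peak, a 1000μs cycle, and 900μs at the lower power level, as described above.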

The table below is provided by Intel and shows a 1000W PSU example. What worries us is not the 2000W power spike for 100μs but the intermediate power spikes at 1800W and 1600W, which last longer. Most supervisor ICs are not fast enough to catch a 100μs power spike, but they might have a problem with power spikes that last 1ms and 10ms, meaning that a significant platform update is required.

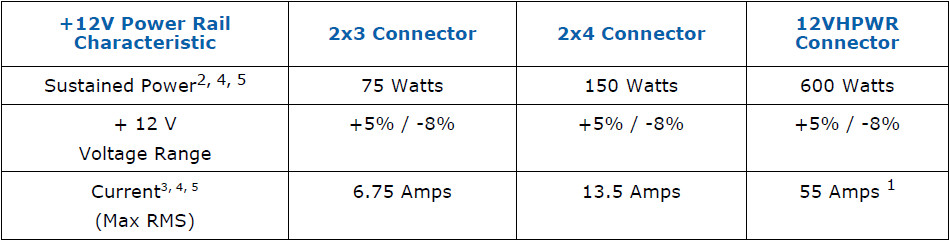
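The 1000W example can be turned into a simple check. The Python sketch below assumes the three levels scale with rated power as 200%, 180%, and 160%, and pairs 1800W with 1ms and 1600W with 10ms, which is our reading of the text. The names are hypothetical, and any other rows of Intel's table are left out.

```python
# (percent of rated power, longest spike in microseconds).
# Assumption: pairing taken from the 1000W example in the article.
EXCURSION_ENVELOPE = (
    (200, 100),
    (180, 1_000),
    (160, 10_000),
)


def within_excursion_envelope(rated_w: int, spike_w: int, duration_us: int) -> bool:
    """True if the spike fits under at least one row of the envelope above."""
    return any(
        spike_w * 100 <= rated_w * percent and duration_us <= max_us
        for percent, max_us in EXCURSION_ENVELOPE
    )


print(within_excursion_envelope(1000, 2000, 100))    # True
print(within_excursion_envelope(1000, 2000, 1_000))  # False
print(within_excursion_envelope(1000, 1800, 1_000))  # True
```

A result of False only means the spike falls outside the three rows listed here, so a supervisor IC would be expected to react to it.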

New Load Regulation for +12V

There are no changes in the upper limit in +12V load regulation, but there is a notable change in the lower limit from -5% to -8% on all PCIe connectors. Moreover, Intel states that the slew rate for the 12VHPWR connector should not exceed 5A/μs.
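In absolute terms, the new limits work out as follows. This Python sketch assumes the unchanged upper limit is +5%, a value the text above does not state, and the helper names are ours.

```python
def regulation_window(nominal_v: float = 12.0, lower_pct: float = -8.0,
                      upper_pct: float = 5.0):
    """Return (min, max) voltage. The +5% upper limit is an assumption."""
    low = nominal_v * (100 + lower_pct) / 100
    high = nominal_v * (100 + upper_pct) / 100
    return low, high


def slew_rate_ok(delta_a: float, delta_us: float,
                 limit_a_per_us: float = 5.0) -> bool:
    """Check a load step against the 5A/us limit for the 12VHPWR connector."""
    return abs(delta_a) / delta_us <= limit_a_per_us


low, high = regulation_window()
print(f"{low:.2f} V to {high:.2f} V")  # 11.04 V to 12.60 V
print(slew_rate_ok(delta_a=40, delta_us=10))  # True (4 A/us)
print(slew_rate_ok(delta_a=40, delta_us=5))   # False (8 A/us)
```

With the old -5% limit the floor was 11.40V, so the PCIe connectors may now sag a further 0.36V.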

What Do the Extra Four Sense Pins Do?

Compared to Nvidia's 12-pin connector, the new one has four extra pins, which are sense pins and don't deliver current. So what exactly do these pins do? Let's start with the easy part: only two of the four sideband signals are required. The ones coming from the power supply are required, while the ones coming from the PCIe card are optional.

- SENSE0 (Required)

- SENSE1 (Required)

- CARD_PWR_STABLE

- CARD_CBL_PRES#

SENSE0 & SENSE1 (Required)

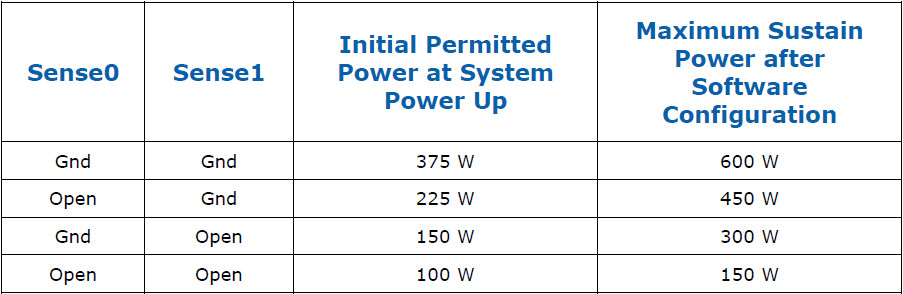

The Sense 0 and 1 sideband signals, as the ATX spec calls them, provide important information from the PSU to the graphics card. They state how much power the GPU can draw from the PSU, both during the power-up phase and afterwards. Do note that once the system boots, these sense signals must not change their state.

So the PSU informs the GPU how strong it is, and according to the report the GPU sets its power limit. For the full 600W power, which is only possible with a liquid cooling system on the graphics card, both Sense 0 and 1 should be grounded. Moreover, in the worst possible scenario the maximum power that the GPU can draw from the PSU during start-up is no more than 375W, helping to keep a smooth turn-on transient at +12V.

CARD_PWR_STABLE (Optional)

This is a power good signal from the graphics card to the PSU. When the signal is asserted, the PCIe card informs the PSU that everything is fine with its power rails. If something goes wrong, the PCIe card sends a bad power ok signal, allowing the PSU to enable its protection circuits and save the day!

CARD_CBL_PRES# (Optional)

This signal has two functions, a primary and a secondary one.

The primary function is to provide a signal from the PCIe card to the PSU that the card is present and properly connected to the 12VHPWR connector. All PCIe Gen 5.0 cards require this function.

The secondary function of this signal is to inform the PSU that the PCIe card is alive and kicking and can be included in the "Power Budgeting Sense Detect Registers." So the PSU is aware of the installed PCIe cards and the power cables they use. If more than one PCIe card using a 12VHPWR connector is attached to the PSU, this is crucial information. By knowing the number of PCIe cards, the PSU can set its SENSE0 and SENSE1 signals so that the cards cannot draw more power than it can support. Say you have a 1200W PSU, which usually supports a single 600W GPU. If you connect two, the PSU will adjust the power level on each of the 12VHPWR connectors accordingly, so that it does not get overloaded.

The SENSE 0/1 signals cannot be dynamically adjusted, though, when the system is in operation, but this is not so important since no one installs new PCIe cards while the system is in operation!

Here is an example of how this signal can help the PSU cope with multiple graphics cards. Say we have a PSU capable of supporting a 600W graphics card. If we install two GPUs, each card gets 300W, and with three or four PCIe cards, power drops to 150W for each.

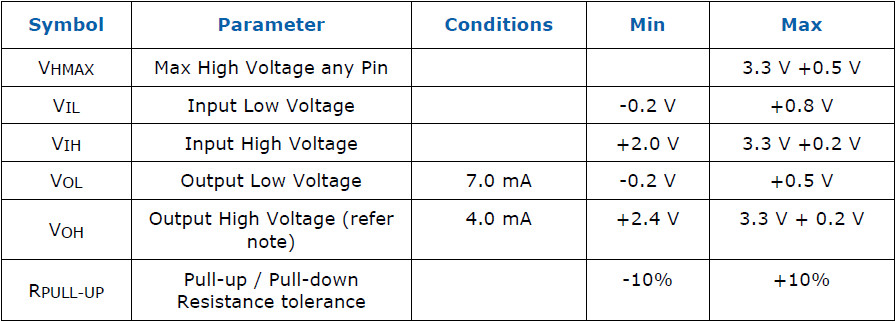
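Note that three cards get 150W each rather than 200W, which suggests the PSU can only advertise a few fixed power levels. The Python sketch below reproduces the example under that assumption. The list of levels (600, 450, 300, and 150W) and the function name are ours, not taken from the text above.

```python
# Assumption: the sense pins can only signal these discrete power levels.
POWER_LEVELS_W = (600, 450, 300, 150)


def per_card_limit(gpu_budget_w: int, cards: int) -> int:
    """Largest advertised level that fits into an equal share of the budget."""
    share = gpu_budget_w / cards
    for level in POWER_LEVELS_W:  # ordered from highest to lowest
        if level <= share:
            return level
    raise ValueError("budget too small for even the lowest power level")


for cards in (1, 2, 3, 4):
    print(cards, per_card_limit(600, cards))
# 1 600
# 2 300
# 3 150
# 4 150
```

Rounding each share down to the next level keeps the total at or below the PSU's budget, at the cost of leaving some capacity unused with three cards.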

Sideband Signals DC Specifications (Required)

We provide the following table only for educational reasons, since it is of no interest to the average user.


Aris Mpitziopoulos is a contributing editor at Tom's Hardware, covering PSUs.

Elisis: It seems the link to Page 6 of the article is bugged, as it just redirects you to the 1st.

2Be_or_Not2Be: Were there any estimates on when we might see these new PSUs hit the market, and/or info on the GPUs that require them?

digitalgriffin: Good article. But the ICs to catch the power spikes and duty cycle time will require a lot of re-engineering. I expect a significant premium on these new models.

jeremyj_83, replying to 2Be_or_Not2Be: My guess is that the PSU manufacturers have had the final specs for a good 6 months now to be able to make their new products. Same with AMD/nVidia for their GPUs. I suspect that we will see these PSUs come out with the next GPU and CPU releases with PCIe 5.0 support.

I do like that the new spec includes the CPU continuous power for HEDT CPUs. Makes the decision on a PSU much easier for people who need those CPUs. Also helps Intel as their CPUs are quite power hungry during boosting.

digitalgriffin, replying to jeremyj_83: The over power safety IC logic is a lot more complicated and has to be a lot more accurate. 200% power for 100us 10% duty is a nebulous one as I don't think there's anything that fast out there. But also what happens when you start getting mixed spikes like 150% mixed with 200% and 110%?

Power = V*V/R. So you cut the resistance in half and your amps shoot through the roof. The cables in the spec will heat up QUICKLY. As the power grows, the amount of power lost to heat exponentially grows. So you are dealing with an exponential heat problem with mixed amperages and duty cycles. It will require a total waste heat power table that tracks over time.

2Be_or_Not2Be, replying to digitalgriffin: Yeah, maybe since I don't see any announced in conjunction with the spec finalization, it's probably a bigger task for the PSU OEMs along with little need for it now. That, combined with parts shortages, probably means we won't see these new PSUs until late 2022 or even 2023.

Co BIY: The binary specification of the "Sense" pins seems over-simplified to me. Allowing these to carry a "bit" more data would make more "sense".

wavewrangler: Peace! Thanks for readin'.

I wish we could get a proper write up on this like igorslab did (igorslab insight into atx 3.0) I am really... doubly so, shocked...that this is being championed, like more electricity for seemingly no real good reason is a good thing (along with 600watt cards), not to mention increased costs, increased complexity, complete redesigns, more faulty DOA components, list goes on. Those tables Intel put out are far out.

At the very least, I would have liked to have seen some questioning as to why this is a necessity and what some of the ramifications are going to be at the consumer level. Some of these things I have no idea how manufacturers will implement, and how they do it is the only thing I look forward to seeing. I mean, 200%. Why would I need 200% power for any amount of time, regardless of boost?? How do the components handle that? That's cutting amps in half. I feel like this is pretty much just starting over, not building upon.

Moore's Law has now become a doubling of power every 2 years. We'll call it More's Law. This is kind of what happens after you break physics, it collapses in on itself and goes inverse... Intel will soon get into the nuclear energy business to complement their CPUs needs. There are some things I like, but...what I would have liked more is some transparency and communication as to why the world needs this. Nvidia, Intel, AMD, et. al., always could have created 600-watt CPUs/GPUs. Actually, Pentium comes to mind... The real story here, I'm guessing, is that microarchitecture design and innovation has plateaued, and they all know more power will soon be needed to achieve any meaningful performance gain. Meanwhile, look at what DLSS and co. and a little (a lot) of hardware-software complimented innovation did for performance. Christopher Walken would approve, at least. Moar. Moar Pow-uh!

Never thought I'd need more than 1KW, what with the whole "efficiency" thing. Why not just make SLI, CrossFire, SMP work better at this point?! This is wardsback. Hello, a few thoughts on this. Mostly personal rambles.

digitalgriffin, replying to wavewrangler: Power gating is a technique that powers down part of the GPU to save power. This allows it to run cooler. But reinitiating those stages of circuits often creates a massive power inrush. A GPU hardware scheduler can see certain circuits are needed and flip the switch to power up those sections well before the instruction is dispatched. But a high inrush current is needed to make sure said circuit is initialized in time.

Second, some MIMD matrix operations / tensor ops are massive power hogs.

These two things together result in current influx. When you have a massive inrush of energy, resistance drops to zero. V = IR. That means voltage drops to zero, which is why you have all these capacitors, which store up energy to reduce voltage swings. Voltage swings are what create instability and, in rare cases, damage.

Now these huge currents are nothing new. They have been around for a decade. However, the sudden burst though and sensitivity to them is new.

As circuits get smaller, the transistor threshold for operating voltage is smaller. Also the number of transistors firing goes exponentially up. Thus lower more sensitive voltage but more current.