Intel Kills Off Xeon Phi 7200 Coprocessor

Intel's Xeon Phi 7200 Coprocessors, the descendants of Intel's failed Larrabee GPU project, are apparently being retired. Intel made the announcement in a rather low-key manner via a forum post, as spotted by the Linux-loving Phoronix. Posters complained that Intel had apparently scrubbed all references to the coprocessors from its website, along with the MPSS 4.0 software stack. An Intel representative responded:

Intel continually evaluates the markets for our products in order to provide the best possible solutions to our customer’s challenges. As part of this on-going evaluation process Intel has decided to not offer Intel Xeon Phi 7200 Coprocessor (codenamed Knights Landing Coprocessor) products to the market. Given the rapid adoption of Intel Xeon Phi 7200 processors, Intel has decided to not deploy the Knights Landing Coprocessor to the general market. Intel Xeon Phi Processors remain a key element of our solution portfolio for providing customers the most compelling and competitive solutions possible.

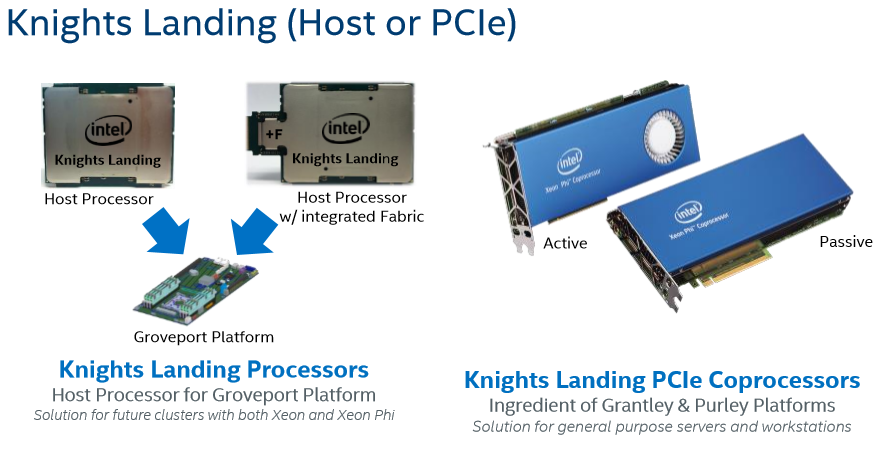

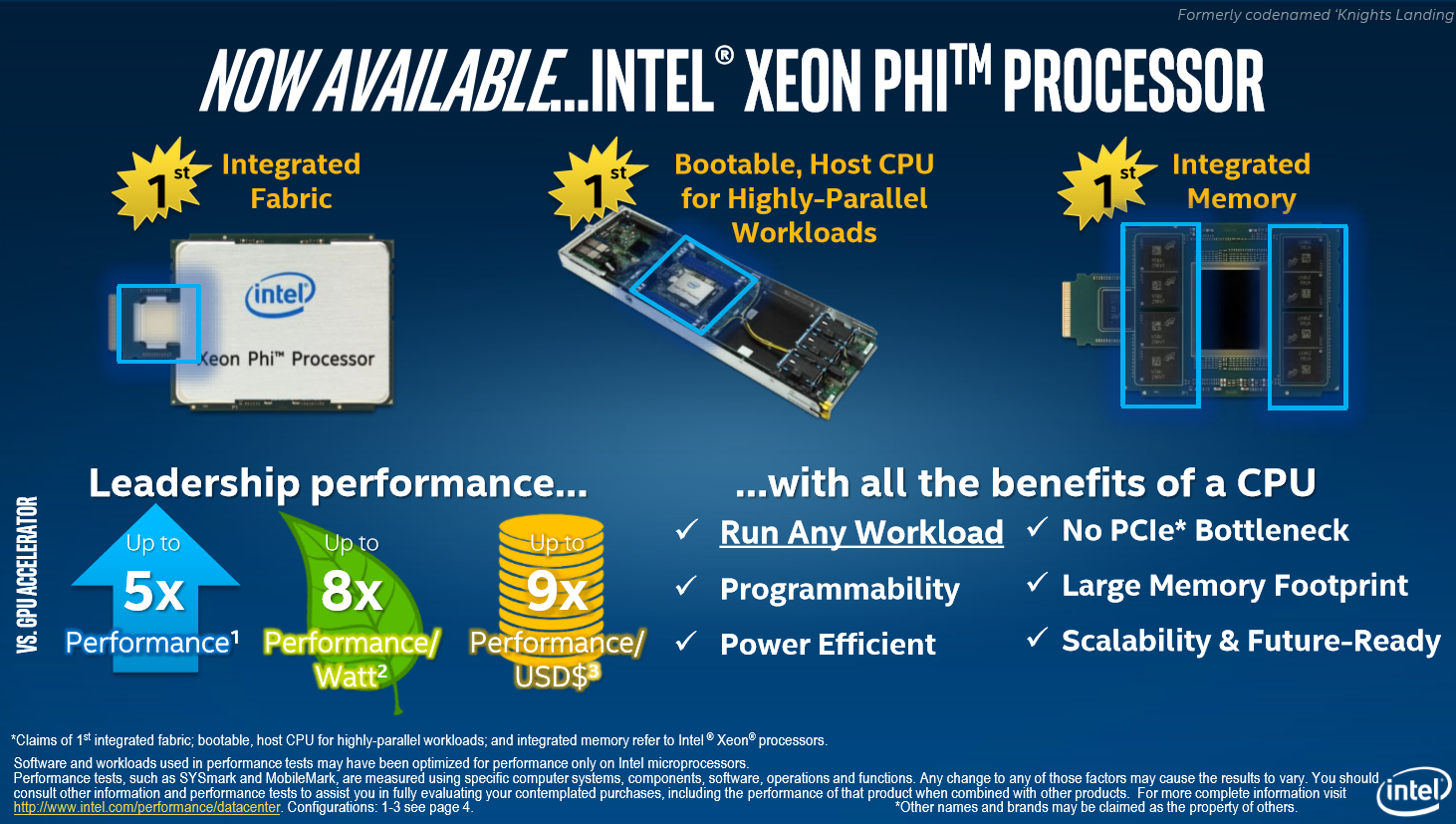

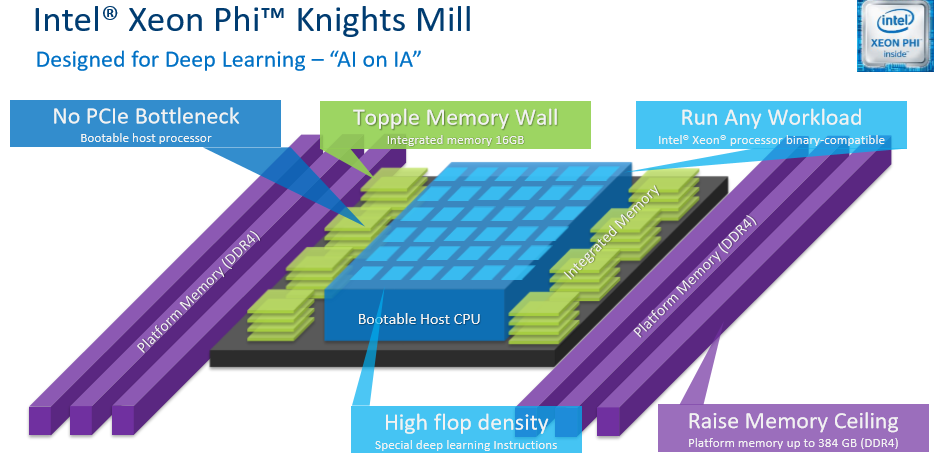

The Xeon Phi Knights Landing processors come in two flavors: a socketed LGA 3647 host processor and a PCIe-attached Add-In Card (AIC). Intel designed the Knights Landing products to address a wide variety of AI and HPC workloads.

The development isn't entirely unexpected; Intel predicted during the initial Knights Landing launch that more than 50% of its customers would prefer the host processor variant. The socketed processors have a few notable advantages over the AIC models, such as bootability and the option for integrated 100Gb/s Omni-Path fabric.

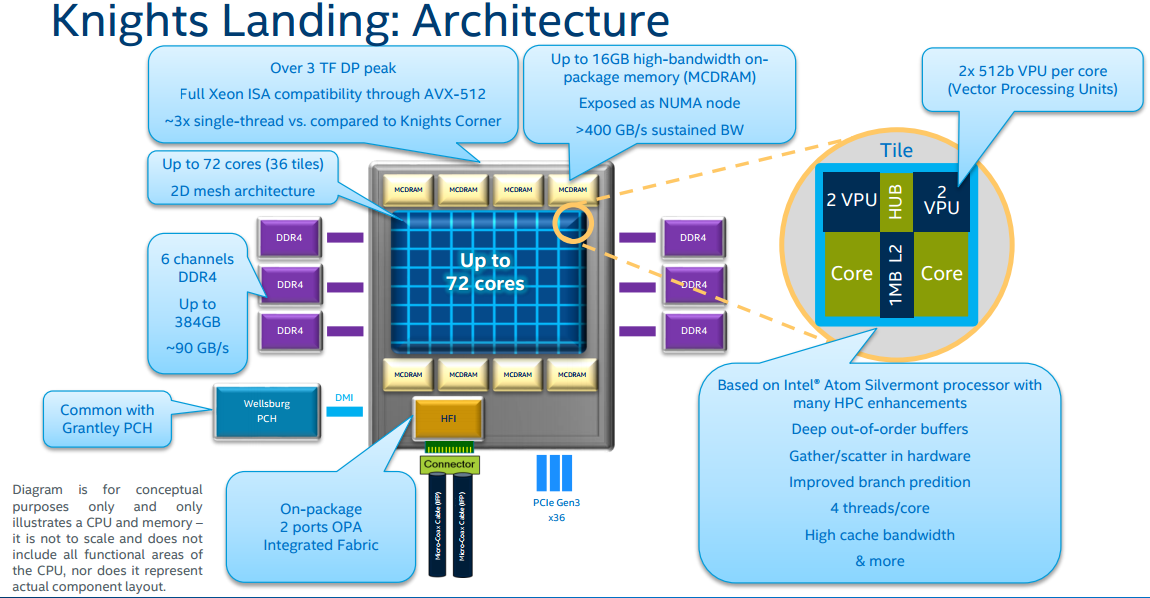

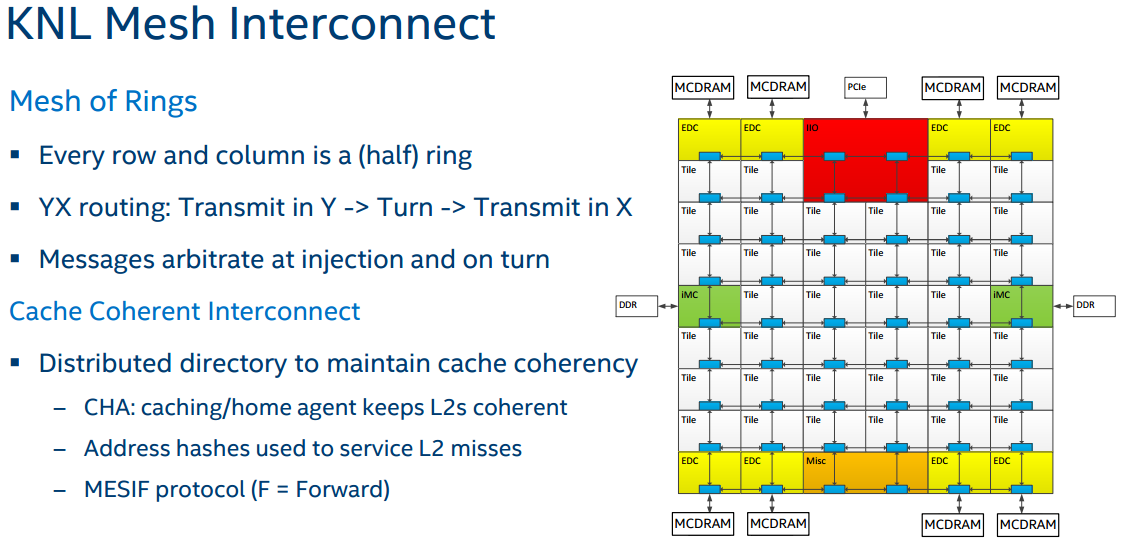

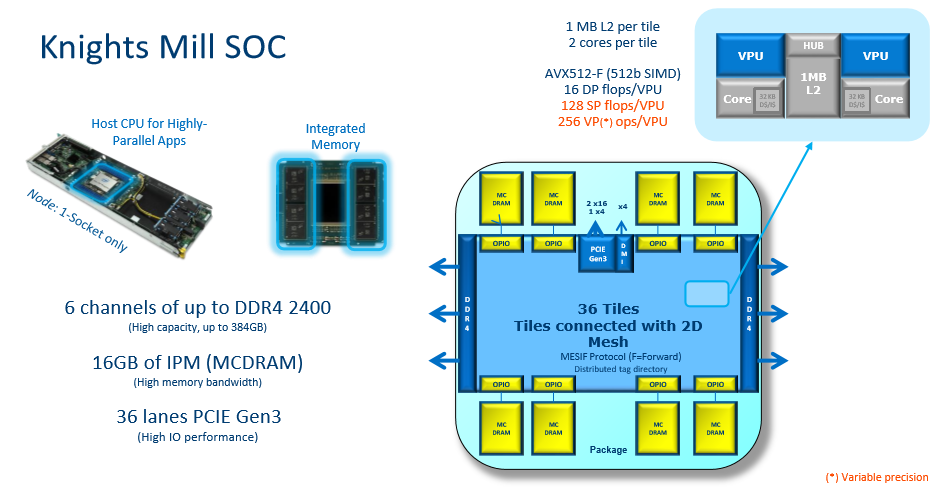

Knights Landing was the first product to come to market wielding Intel's new mesh topology, which connects up to 72 quad-threaded Silvermont-derived cores into one cohesive unit. The new mesh topology has now filtered down into Intel's Purley and Skylake-X processors.

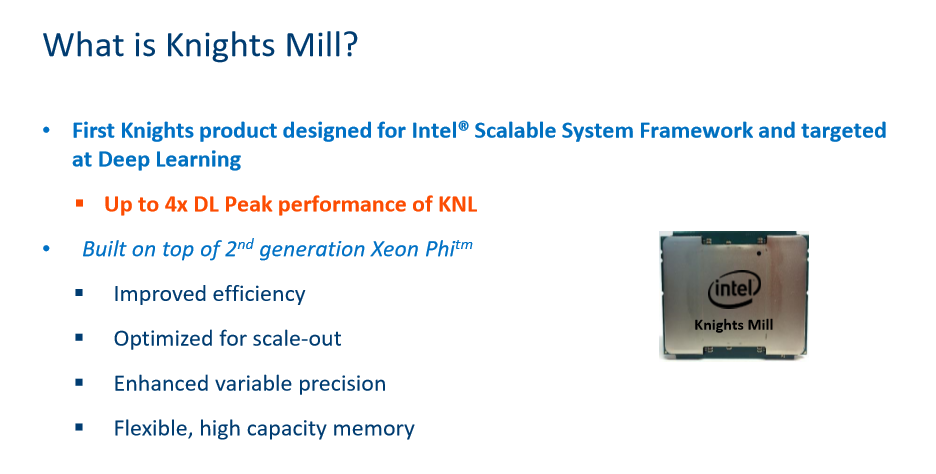

Meanwhile, Intel has its new Knights Mill products coming to market, but Intel has not revealed the core count yet (the slide references the Knights Landing). Tellingly, the Knights Mill family only comes as a host processor, and not in the AIC form factor.

Intel has plenty of options for machine learning tasks, including its Altera-derived FPGAs, Nervana ASICs, and Knights Landing host processors. It appears that the company is narrowing its focus to host processors for the Xeon Phi Knights Landing and Knights Mill families, but the statement assures us that Xeon Phi processors remain a key part of Intel's portfolio. We've reached out to Intel for more information and will update as necessary.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

-

Vatharian: Curious move. Is it possible that they are afraid of people buying EPYC systems and deploying multiple coprocessors with them?

knowom: If they are smart, they are ditching it in favor of a similar approach with FPGAs instead, which are far more multipurpose and can be repurposed, which is great.

bit_user: It was indeed a forum post, but on Intel's own site:

https://software.intel.com/en-us/forums/intel-many-integrated-core/topic/738422

And just to clarify, they killed the PCIe card - not the socketed version that's also the host processor.

Regarding Knights Mill: that's specifically aimed at the deep learning market. It has half the fp64 performance of Knights Landing, making it less suitable for HPC workloads. So, I expect we'll continue to see both being sold until Knights Landing has a proper replacement.

-

Vatharian:

knowom said: "If they are smart they are ditching it in favor doing a similar approach with FPGA's instead which are way more multipurpose and can be re-purposed which is great."

Integrating FPGAs has a different target: tighter integration. Btw, they have very old FPGAs integrated; it's more of a proof of concept so far, while KNL is a real product.

My problem is that you can stick only so many KNLs in a motherboard (I'm not convinced they support 4 sockets), so in 7U of space you could probably fit 28 chips given 4S support. In PCIe mode, I've personally built a system that contains 18x 7120 (watercooled) cards in a 2U chassis on a PCIe backplane. The host system supported three of those backplanes, allowing a total of 54 Xeon Phis in 7U of space.

The situation looks a little better if you use narrow sleds, but they are pricey. PCIe is just so much more universal.

Of course socketed, Omni-Path or not, is a brilliant idea, but forcibly moving the solution to another form factor 'just because'? Not very fun, oh no.
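The density comparison in this comment comes down to simple arithmetic. A minimal sketch, assuming hypothetical 1U nodes for the socketed case and one 1U host plus three 2U backplane chassis for the PCIe build (the post gives only the totals, so these layouts and all function names are assumptions):

```python
# Rough density arithmetic for the 7U comparison in the comment above.
# Assumed (not stated in the post): socketed nodes are 1U each, and the
# PCIe build is one 1U host plus three 2U backplane chassis.

RACK_UNITS = 7


def socketed_chips(rack_units, sockets_per_node, node_height_u=1):
    """Chips that fit when every node is a self-booting socketed server."""
    return (rack_units // node_height_u) * sockets_per_node


def pcie_cards(backplanes, cards_per_backplane):
    """Cards hosted by one system driving several PCIe backplanes."""
    return backplanes * cards_per_backplane


def pcie_height_u(backplanes, backplane_height_u=2, host_height_u=1):
    """Total rack height of the backplanes plus the single host."""
    return backplanes * backplane_height_u + host_height_u


four_socket = socketed_chips(RACK_UNITS, sockets_per_node=4)    # hypothetical 4S support
single_socket = socketed_chips(RACK_UNITS, sockets_per_node=1)  # one chip per 1U node
cards = pcie_cards(backplanes=3, cards_per_backplane=18)

print(four_socket, single_socket, cards, pcie_height_u(3))
```

Under those assumptions the PCIe build holds roughly twice as many chips as even a hypothetical four-socket layout in the same 7U.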

bit_user:

Vatharian said: "Curious move - It is probable, that they are afraid of people buying EPYC systems and deploying multiple coprocessors with this?"

Why would they be afraid of selling more product?

No, I think they'd be glad to sell 4 Xeon Phi co-processors per system instead of a single socketed processor, regardless of whether the host is a Xeon or Epyc.

My guess is that most people buying the PCIe version were probably more interested in Knights Mill. Either that, or there were technical problems with the PCIe Knights Landing.

-

bit_user:

Vatharian said: "My problem is that you can stick only so many KNLs in motherboard (I'm not convinced they support 4 sockets),"

They only support single-socket.

Vatharian said: "so in 7U space you could fit probably 28 chips given 4S support."

I think the solution to this is to put them on blades, in a blade server.

Vatharian said: "In PCIe mode, I've personally built a system that contains 18x 7120 (watercooled) cards in 2U chassis on PCIe backplane. Host system supported three of those backplanes, allowing total 54 Xeon Phis in 7U space."

That's impressive, but only useful for workloads that don't require a lot of cross-communication.

Vatharian said: "PCIe is just so much more universal."

For scale, they're going with Omni-Path, instead.

-

Is Knights Mill even similar? I don't think it is, that's why they don't list core count, it's not a similar product.

-

bit_user:

Previous poster said: "Is Knights Mill even similar? I don't think it is, that's why they don't list core count, it's not a similar product."

Announcing Knights Mill, building on top of Knights Landing

Source: http://www.anandtech.com/show/11741/hot-chips-intel-knights-mill-live-blog-445pm-pt-1145pm-utc

...

Builds directly on top of KNL

...

Same core config of KNL: 2 cores sharing 1MB of L2, two VPUs per core

...

In KNL, two units do SP and DP

In KNM, remove one DP port to give space for four SP VNNI units

So 0.5x DP, 2x SP, 4x VNNI

Pitching KNM for DL but with tradeoffs, same generation as KNL

Core counts were not stated because it's not yet a shipping product. By all accounts (and based on what Intel has previously said about it), it's a variation of KNL tweaked to better target deep learning (i.e. because they were non-competitive with Nvidia's P100).
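As a rough illustration of the trade-off in those notes, the quoted ratios can be applied to a normalized baseline. A minimal sketch, assuming equal core counts and clocks for both chips (neither was announced for Knights Mill) and treating every KNL figure as a placeholder 1.0, since the notes do not say what the 4x VNNI figure is measured against:

```python
# Relative per-core vector throughput, Knights Mill vs. Knights Landing,
# using only the ratios quoted above (0.5x DP, 2x SP, 4x VNNI).
# Assumption: same core count and clock speed for both chips.

KNM_VS_KNL = {"DP": 0.5, "SP": 2.0, "VNNI": 4.0}


def knm_relative(knl_baseline):
    """Scale a KNL baseline by the quoted KNM ratios."""
    return {kind: knl_baseline[kind] * ratio for kind, ratio in KNM_VS_KNL.items()}


# Normalized baseline: KNL = 1.0 in every category (illustrative, not measured).
knl = {"DP": 1.0, "SP": 1.0, "VNNI": 1.0}
knm = knm_relative(knl)

for kind, value in knm.items():
    print(f"{kind}: KNM delivers {value:g}x the KNL baseline")
```

Read this way, Knights Mill gives up half of its double-precision rate in exchange for the single-precision and VNNI gains, which matches the point that it is less suited to HPC workloads.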

-

SockPuppet: The people that run Intel are complete idiots. I don't think the senior management could set the clock on their microwave without the help of their children.

GPUs are a BIG business and only getting bigger every day. Intel is 2nd to none at etching circuits into silicon. But management "doesn't see any value in making discrete GPU parts". Imbeciles.

iLLz: To be honest, with the recent announcement of Knights Mill, it makes sense not to put out Knights Landing since they have a newer version in the works. Knights Mill is supposed to have 4x the deep learning performance, according to Intel.