RAID Scaling Charts, Part 3: 4-128 kB Stripes Compared

RAID 0 I/O Performance

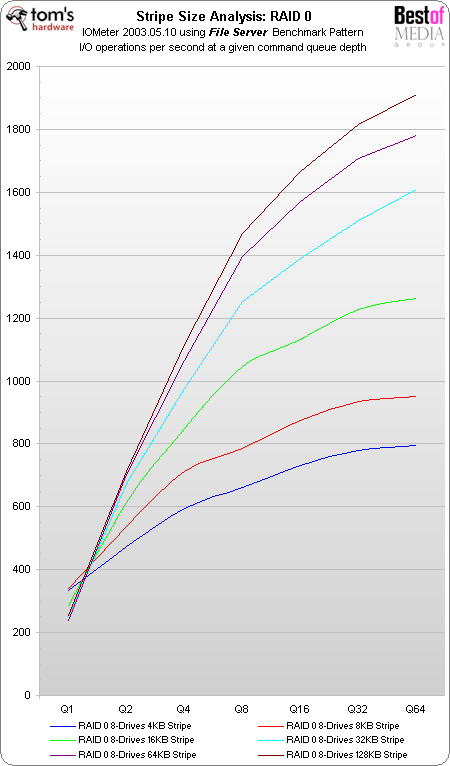

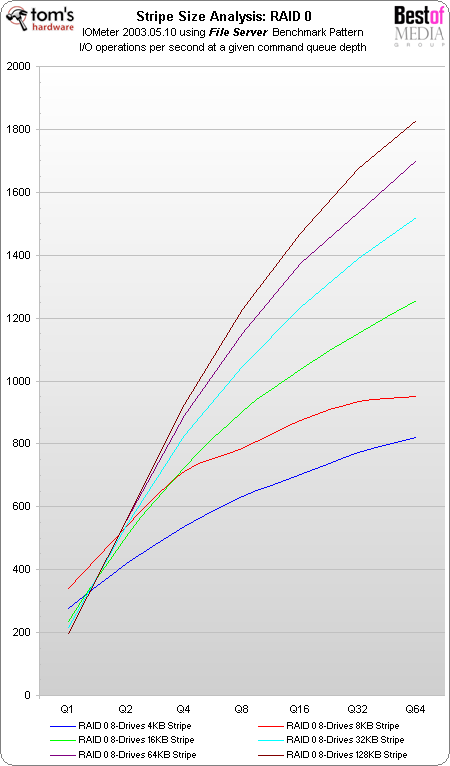

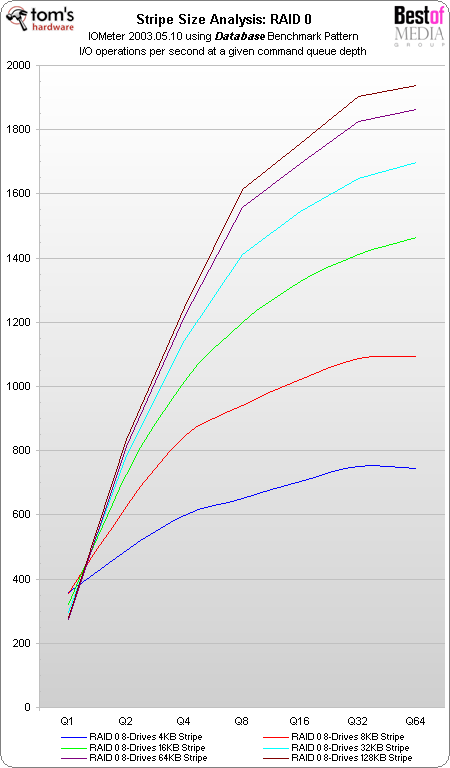

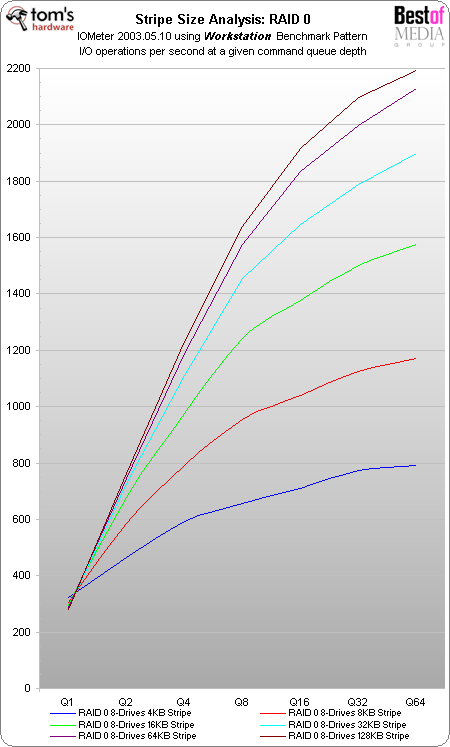

RAID 0 performance scales beautifully because no parity calculation is involved, which could otherwise hinder performance. 128 kB and 64 kB stripes again provide the best performance, but not in all scenarios: in RAID 0, the smallest stripe sizes of 4 kB and 8 kB perform better when no commands are queued. For tough server workloads, which involve multiple simultaneous requests, the larger stripe sizes are faster again.
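To see how stripe size changes which drives a single request touches, here is a minimal sketch of RAID 0 address mapping. The drive count (4) and request size (64 kB) are assumptions chosen for illustration, not the configuration used in these tests, and `disks_touched` is a hypothetical helper name.

```python
KIB = 1024

def disks_touched(offset, length, stripe_size, num_disks):
    """Return the sorted drive indices a request touches in a RAID 0 array.

    Stripe units are laid out round-robin: unit k lives on drive k % num_disks.
    """
    first_unit = offset // stripe_size
    last_unit = (offset + length - 1) // stripe_size
    return sorted({unit % num_disks for unit in range(first_unit, last_unit + 1)})

# Assumed example: one 64 kB request at offset 0 on a 4-drive array.
for stripe_kib in (4, 8, 64, 128):
    drives = disks_touched(0, 64 * KIB, stripe_kib * KIB, 4)
    print(f"{stripe_kib:>3} kB stripe -> drives {drives}")
```

With small stripes a single request is spread over every drive, while with large stripes it stays on one drive and leaves the others free to serve other queued requests.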

Patrick Schmid was the editor-in-chief for Tom's Hardware from 2005 to 2006. He wrote numerous articles on a wide range of hardware topics, including storage, CPUs, and system builds.

-

alanmeck I've found conflicting opinions re stripe size, so I did my own tests (though I don't have precision measuring tools like you guys). My RAID 0 is for gaming only, so all I cared about was loading time. So I used a stopwatch to measure the difference in loading times on Left 4 Dead when using 64kb and 128kb stripe sizes. Results, by map:

| Map | Level | 64kb (s) | 128kb (s) |
| --- | --- | --- | --- |
| No Mercy | 1 | 9.15 | 9.08 |
| No Mercy | 2 | 8.31 | 8.38 |
| No Mercy | 3 | 8.24 | 8.31 |
| No Mercy | 4 | 8.45 | 8.45 |
| No Mercy | 5 | 6.56 | 6.63 |
| Death Toll | 1 | 7.75 | 7.89 |
| Death Toll | 2 | 7.19 | 7.26 |
| Death Toll | 3 | 9.01 | 8.94 |
| Death Toll | 4 | 9.36 | 9.36 |
| Death Toll | 5 | 9.5 | 9.64 |
| Dead Air | 1 | 7.68 | 7.47 |
| Dead Air | 2 | 7.96 | 8.03 |
| Dead Air | 3 | 9.08 | 8.87 |
| Dead Air | 4 | 8.17 | 8.17 |
| Dead Air | 5 | 6.98 | 6.84 |
| Blood Harvest | 1 | 8.24 | 8.17 |
| Blood Harvest | 2 | 7.33 | 7.33 |
| Blood Harvest | 3 | 7.68 | 7.68 |
| Blood Harvest | 4 | 8.45 | 8.31 |
| Blood Harvest | 5 | 7.89 | 8.1 |
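The per-level times listed above can be totalled with a short script. This is only a sketch over the figures as posted: the times are converted to whole hundredths of a second to avoid floating-point rounding noise, and the names `TIMES` and `to_hundredths` are made up for this example.

```python
# Stopwatch load times in seconds, copied from the figures above.
# Each tuple is (64kb time, 128kb time) for levels 1-5 of a campaign.
TIMES = {
    "No Mercy": [(9.15, 9.08), (8.31, 8.38), (8.24, 8.31), (8.45, 8.45), (6.56, 6.63)],
    "Death Toll": [(7.75, 7.89), (7.19, 7.26), (9.01, 8.94), (9.36, 9.36), (9.5, 9.64)],
    "Dead Air": [(7.68, 7.47), (7.96, 8.03), (9.08, 8.87), (8.17, 8.17), (6.98, 6.84)],
    "Blood Harvest": [(8.24, 8.17), (7.33, 7.33), (7.68, 7.68), (8.45, 8.31), (7.89, 8.1)],
}

def to_hundredths(seconds):
    """Convert a time in seconds to an integer number of hundredths."""
    return round(seconds * 100)

# Flatten all campaigns into one list of (64kb, 128kb) pairs in hundredths.
pairs = [(to_hundredths(a), to_hundredths(b))
         for levels in TIMES.values() for a, b in levels]

total_64 = sum(a for a, _ in pairs)
total_128 = sum(b for _, b in pairs)
diffs = [a - b for a, b in pairs]

print(f"64kb total:  {total_64 / 100:.2f} s")
print(f"128kb total: {total_128 / 100:.2f} s")
print(f"difference:  {(total_64 - total_128) / 100:.2f} s")
print("every gap a multiple of 0.07 s:", all(d % 7 == 0 for d in diffs))
```

A gap this small over twenty hand-timed loads is well inside what a stopwatch can resolve, so the totals are best read as "no measurable difference".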

I'm using software RAID 0 on my GA-870A-UD3 mobo. The results for me were almost identical (128kb was faster by .07 seconds total). That being the case, I erred on the side of 128kb in order to reduce the potential for write amplification (I'm using 3x OCZ Vertex 2's). What's remarkable is that, despite using the stopwatch to measure times manually, the results were always either identical or separated by multiples of .07 seconds. Weird, huh? Btw thanks to Tom's Hardware, you guys give a lot of helpful info.

-

Does anyone want a slower system? Why do we have to choose? Why do we not just get the fastest option without having to do this? Or is that too simple!

-

I wish we could see what 256 does. Or even 1024, but that just sounds like a waste of space unless you're doing video or music. Maybe gaming, but RAM and bandwidth will always give you an edge if no one has hacked the game.

-

Shomare I agree... can you please look into getting one of the new Areca 1882 controllers with 1+ GB of memory on it and a dual-core 800 MHz processor? We would like to see the results with the larger stripe sizes, the larger processor, and the larger memory footprint! :)

-

dermoth There is a misconception in this article. The point about capacity used: "For example, if you selected a 64 kB stripe size and you store a 2 kB text file, this file will occupy 64 kB." This is totally wrong.

The only thing that affects the space used is the FILESYSTEM block size, since every stored file is rounded up to a whole number of blocks (the last block being only partially filled). To the OS, the RAID array still looks like any other storage device, and multiple filesystem blocks can be stored within a single stripe element.
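A rough sketch of that rounding, using the 2 kB file from the quoted example. It assumes a 4 kB filesystem block and ignores metadata and any filesystem-specific tricks for tiny files; `allocated_bytes` is a hypothetical helper, not a real filesystem API.

```python
KIB = 1024

def allocated_bytes(file_size, fs_block_size=4 * KIB):
    """Space a file's data occupies once rounded up to whole filesystem blocks."""
    blocks = (file_size + fs_block_size - 1) // fs_block_size  # integer ceiling
    return blocks * fs_block_size

stripe_element = 64 * KIB  # RAID stripe size; deliberately NOT an input to the rounding
fs_block = 4 * KIB         # assumed filesystem block size

for size in (2 * KIB, 4 * KIB, 5 * KIB, 100 * KIB):
    used = allocated_bytes(size, fs_block)
    print(f"{size // KIB:>3} kB file occupies {used // KIB:>3} kB")

# Several filesystem blocks fit inside one stripe element.
print("filesystem blocks per stripe element:", stripe_element // fs_block)
```

Under these assumptions the 2 kB file occupies 4 kB, not 64 kB, and sixteen filesystem blocks share each 64 kB stripe element.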

Also note that on RAID 5 & 6, the full stripe size is the stripe element size × the number of data disks, and writes are fastest when full stripes are written at once. If you write only 4k into a 384k stripe (e.g. a 64k stripe element on an 8-disk RAID 6), the controller has to read the other sectors from disk before it can write out the 4k block and the updated parity data.
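The arithmetic in that example can be checked with a small sketch. The parity-disk counts (one for RAID 5, two for RAID 6) are the standard ones; the function names are invented for illustration, and real controllers may handle partial-stripe writes in more than one way.

```python
KIB = 1024
PARITY_DISKS = {"raid0": 0, "raid5": 1, "raid6": 2}

def full_stripe_bytes(element_size, total_disks, level):
    """Full stripe size = stripe element size x number of data disks."""
    data_disks = total_disks - PARITY_DISKS[level]
    if data_disks < 1:
        raise ValueError("not enough disks for this RAID level")
    return element_size * data_disks

def is_full_stripe_write(offset, length, stripe_bytes):
    """True if the write covers only whole stripes, so no old data must be read."""
    return length > 0 and offset % stripe_bytes == 0 and length % stripe_bytes == 0

stripe = full_stripe_bytes(64 * KIB, 8, "raid6")
print("full stripe:", stripe // KIB, "kB")
print("4 kB write is full-stripe:", is_full_stripe_write(0, 4 * KIB, stripe))
print("384 kB write is full-stripe:", is_full_stripe_write(0, 384 * KIB, stripe))
```

Only the aligned 384 kB write lets the controller compute parity purely from the data it was handed.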

You will get better performance if you manage to match the filesystem block size to the FULL RAID stripe size, and only in that case is the statement above true. Many filesystems offer other means of tuning their I/O access patterns to match the underlying RAID geometry without resorting to excessively large block sizes. Most filesystems default to a 4k block size, which is also what most standalone rotational drives have used internally for many years (even when they report 512-byte sectors for compatibility).
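One example of such tuning is ext4, whose `mke2fs` accepts stride and stripe-width hints expressed in filesystem blocks. The sketch below only computes those two numbers for the 8-disk RAID 6 example with a 4k block size; `raid_hints` is a made-up helper, and the exact option spelling should be checked against the `mke2fs` man page for your version.

```python
KIB = 1024

def raid_hints(element_size, data_disks, fs_block_size=4 * KIB):
    """Return (stride, stripe_width) in filesystem blocks.

    stride       = filesystem blocks per stripe element (one disk's share)
    stripe_width = filesystem blocks per full stripe (all data disks)
    """
    if element_size % fs_block_size != 0:
        raise ValueError("stripe element must be a multiple of the block size")
    stride = element_size // fs_block_size
    return stride, stride * data_disks

# 64k stripe element on an 8-disk RAID 6 -> 6 data disks, 4k filesystem blocks.
stride, stripe_width = raid_hints(64 * KIB, data_disks=6)
print(f"stride={stride} blocks, stripe width={stripe_width} blocks")
```

The filesystem keeps its 4k blocks and simply uses the hints to lay out allocations along stripe boundaries.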