AMD Ryzen Threadripper 1920X Review

2D & 3D Workstation Performance

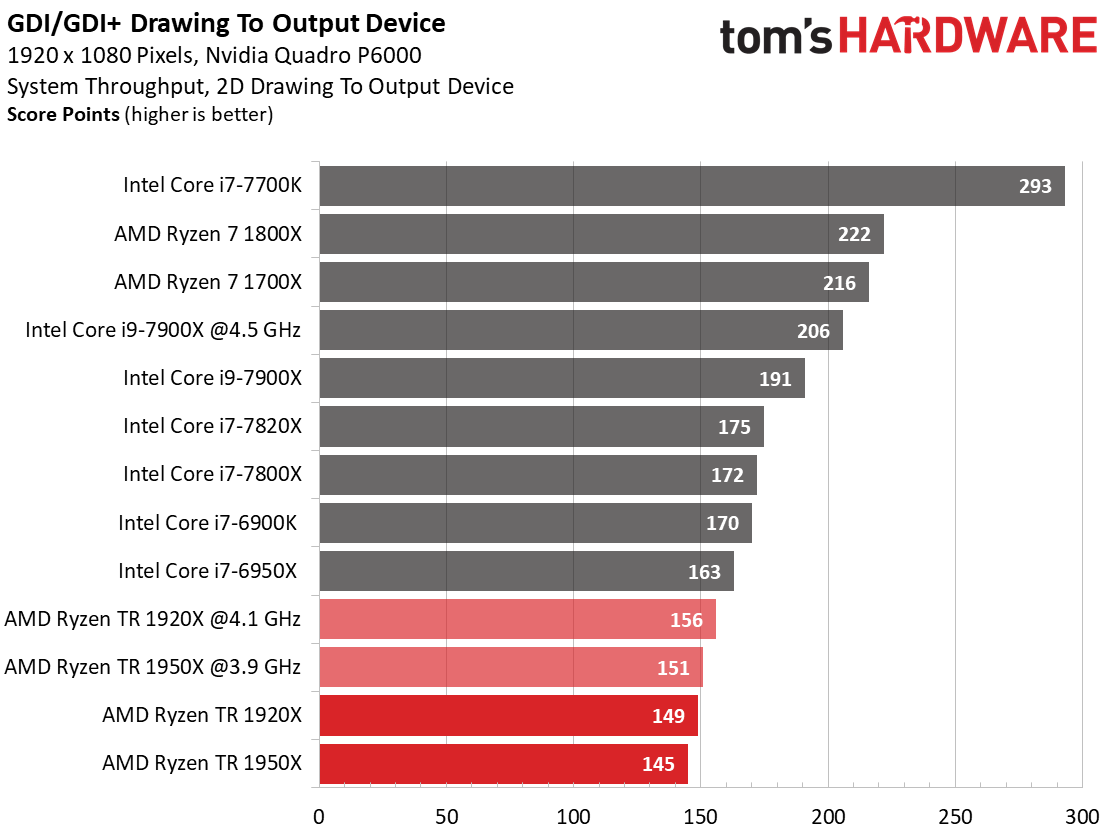

2D Workstation Performance

Our GDI/GDI+ tests exercise two output methods found in older applications and printing tasks. Today, these methods, or at least modified versions of them, are commonly used to draw the graphical user interface (GUI). They are also great benchmarks for direct device write throughput and memory performance when handling gigantic device-independent bitmap (DIB) files.

Tom’s Hardware Synthetic 2D Benchmarks

We take a look at direct device write throughput first. There hasn’t been true 2D hardware acceleration since the introduction of the unified shader architecture, and Microsoft's Windows driver model complicates 2D hardware acceleration as well.

The graphics test is lightly threaded, so AMD's Threadripper processors struggle while Intel's Core i7-7700K enjoys a massive advantage.

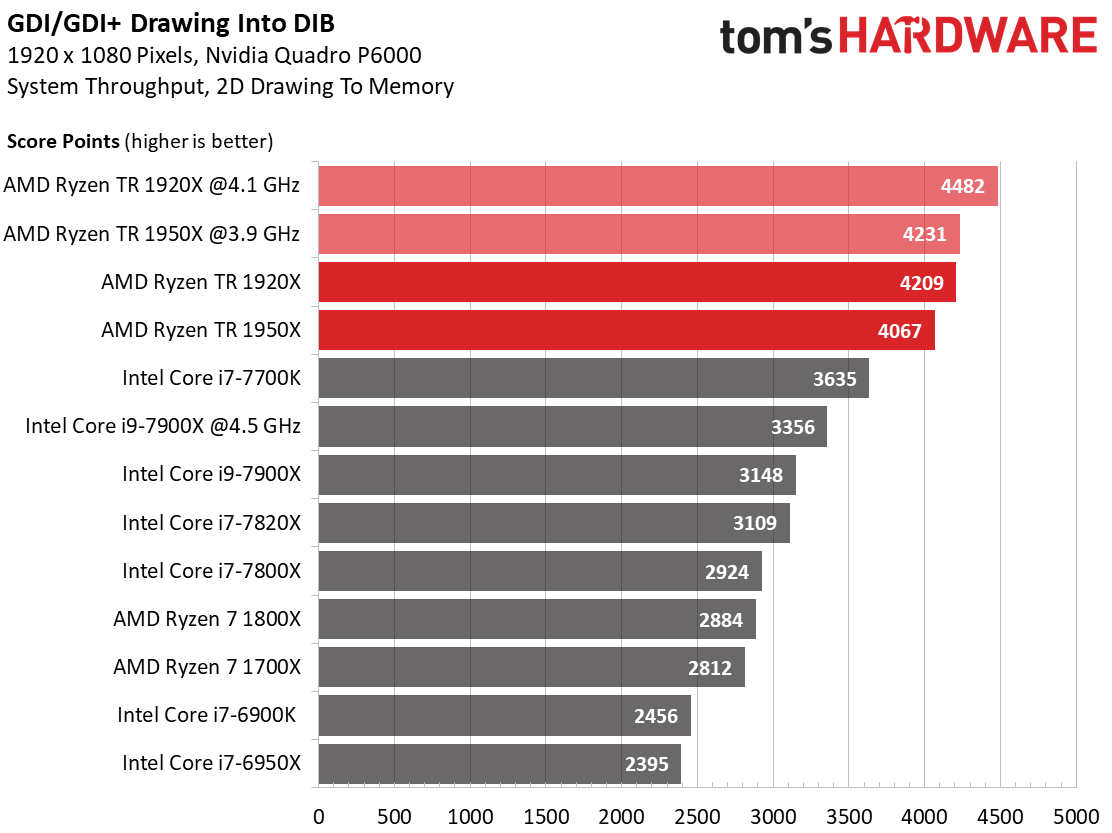

Next, we generate the graphics output in memory, using the only remaining 2D hardware function. The benchmark is the same as before, but we plot a bitmap in memory rather than send the information directly to the monitor. The bitmap is only copied once it's complete. This pushes the CPUs, since they’re no longer platform-bound.
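To make the difference between the two output paths concrete, here is a minimal Python sketch. It is not the benchmark's code: the `Device` class, the line pattern, and the surface size are all hypothetical stand-ins, and a real GDI path would go through the Windows driver stack rather than a byte array. The point is only that the direct path touches the output surface once per pixel, while the in-memory path touches it once per finished bitmap.

```python
WIDTH, HEIGHT = 64, 48  # hypothetical surface size, chosen only for illustration


class Device:
    """Stand-in for a display surface that counts how often it is written to."""

    def __init__(self, width, height):
        self.width = width
        self.pixels = bytearray(width * height)
        self.writes = 0

    def set_pixel(self, x, y, value):
        # Direct path: every pixel is its own device write.
        self.pixels[y * self.width + x] = value
        self.writes += 1

    def blit(self, bitmap):
        # Buffered path: the finished bitmap is copied in a single operation.
        self.pixels[:] = bitmap
        self.writes += 1


def horizontal_lines(width, height):
    """Yield (x, y, value) for a simple test pattern: one line every 4 rows."""
    for y in range(0, height, 4):
        for x in range(width):
            yield x, y, 255


def draw_direct(device):
    for x, y, value in horizontal_lines(WIDTH, HEIGHT):
        device.set_pixel(x, y, value)


def draw_buffered(device):
    bitmap = bytearray(WIDTH * HEIGHT)  # plot in ordinary memory first
    for x, y, value in horizontal_lines(WIDTH, HEIGHT):
        bitmap[y * WIDTH + x] = value
    device.blit(bitmap)  # copy only once the bitmap is complete


direct, buffered = Device(WIDTH, HEIGHT), Device(WIDTH, HEIGHT)
draw_direct(direct)
draw_buffered(buffered)
print(direct.writes, buffered.writes)
```

Both surfaces end up with identical contents. In the buffered case, nearly all of the work is plain memory writes done by the CPU, which is consistent with the test no longer being platform-bound.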

Ryzen Threadripper processors dominate the field, with AMD's 1920X landing in first place.

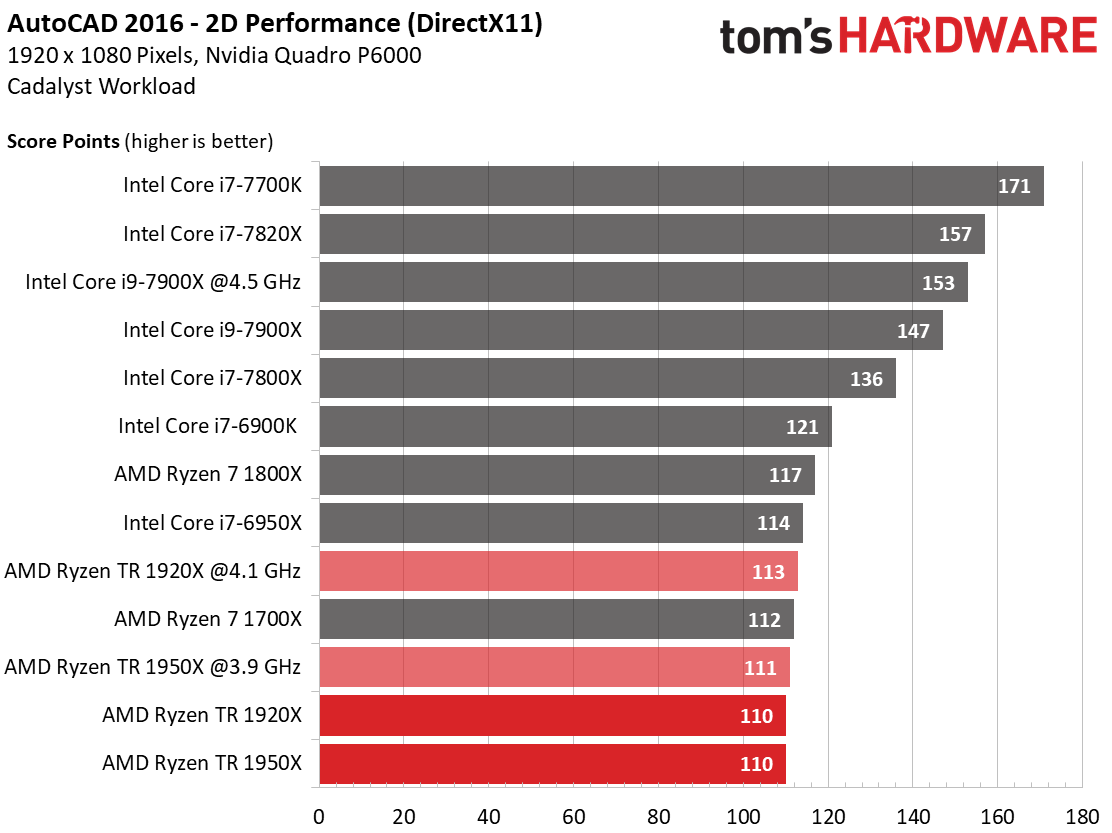

AutoCAD 2016 (2D)

The AutoCAD 2D benchmark doesn't scale well with additional cores. That shifts the focus to IPC throughput, where Intel's processors shine.

Surprisingly, Ryzen 7 1800X beats the tuned Threadripper 1920X. Die-to-die latency may come into play during this test.
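Why a lightly threaded workload favors fewer, faster cores can be sketched with Amdahl's law. The snippet below is a rough illustration, not a model of AutoCAD or of the chips tested here: the two configurations, their clock rates, and the parallel fractions are assumed values, and the model ignores IPC differences, memory behavior, and die-to-die latency entirely.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Amdahl's law: speedup over one core when only part of the work scales."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def relative_throughput(clock_ghz: float, cores: int, parallel_fraction: float) -> float:
    """Crude score: clock rate times Amdahl speedup (equal IPC assumed)."""
    return clock_ghz * amdahl_speedup(parallel_fraction, cores)


# Hypothetical configurations, chosen only to illustrate the trade-off.
few_fast = {"clock_ghz": 4.5, "cores": 4}
many_slow = {"clock_ghz": 4.0, "cores": 12}

for p in (0.10, 0.95):  # assumed parallel fractions: lightly vs heavily threaded
    a = relative_throughput(parallel_fraction=p, **few_fast)
    b = relative_throughput(parallel_fraction=p, **many_slow)
    print(f"parallel fraction {p:.2f}: 4 fast cores {a:.2f}, 12 slower cores {b:.2f}")
```

Under these assumptions, the four-core configuration comes out ahead when only 10% of the work is parallel, and the twelve-core configuration wins comfortably at 95%.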

3D Workstation Performance

Most professional development applications have been optimized and compiled with Intel CPUs in mind. This is reflected in their performance numbers. Still, we include them in order to motivate developers to focus their efforts on AMD’s Ryzen processors as well. This would give users more than one choice. The same goes for an emphasis on multi-core processors, at least where that’s feasible and makes sense.

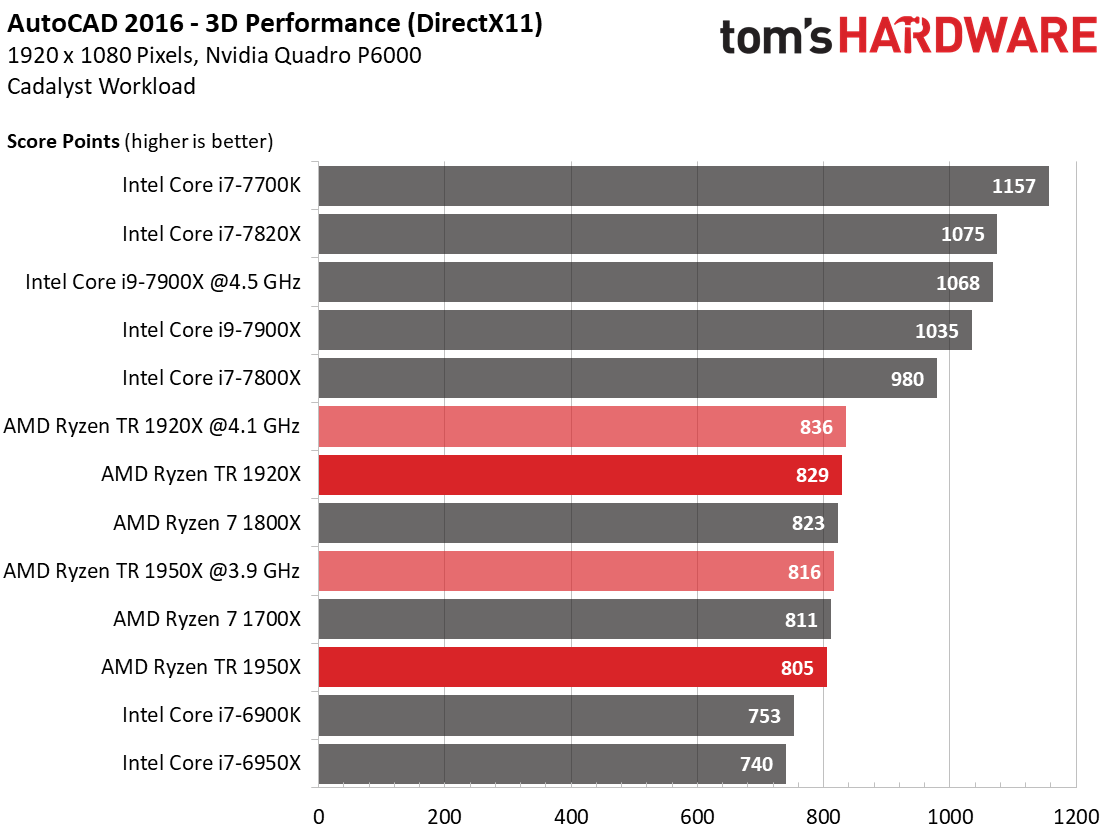

AutoCAD 2016 (3D)

AMD’s Ryzen family lands within a narrow range during this frequency-sensitive application. The Core i7-7700K takes an easy lead, and the -7820X's second-place finish confirms that the workload isn't optimized for parallelism. In fact, AutoCAD’s performance resembles older games because it uses DirectX and doesn't leverage multiple cores effectively.

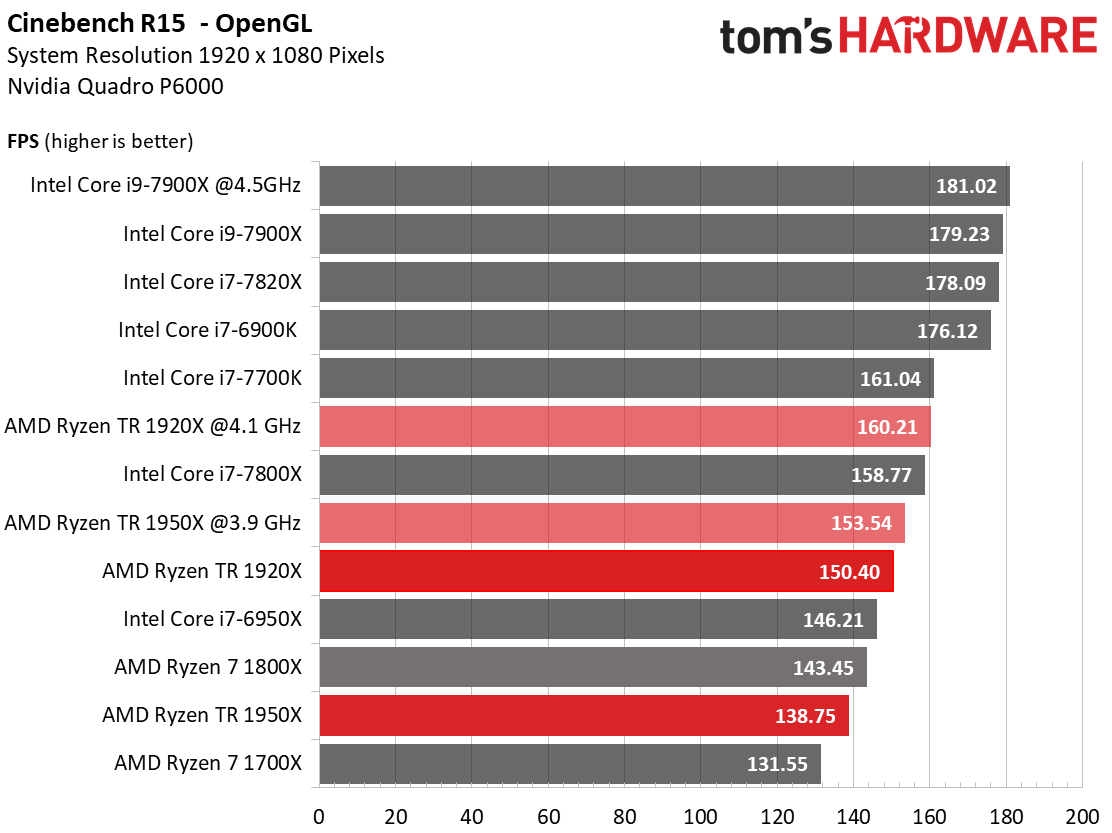

Cinebench R15 OpenGL

Clock rate tends to influence the Cinebench R15 OpenGL benchmark results most. Our numbers indicate that the application could benefit from Ryzen-specific optimizations.

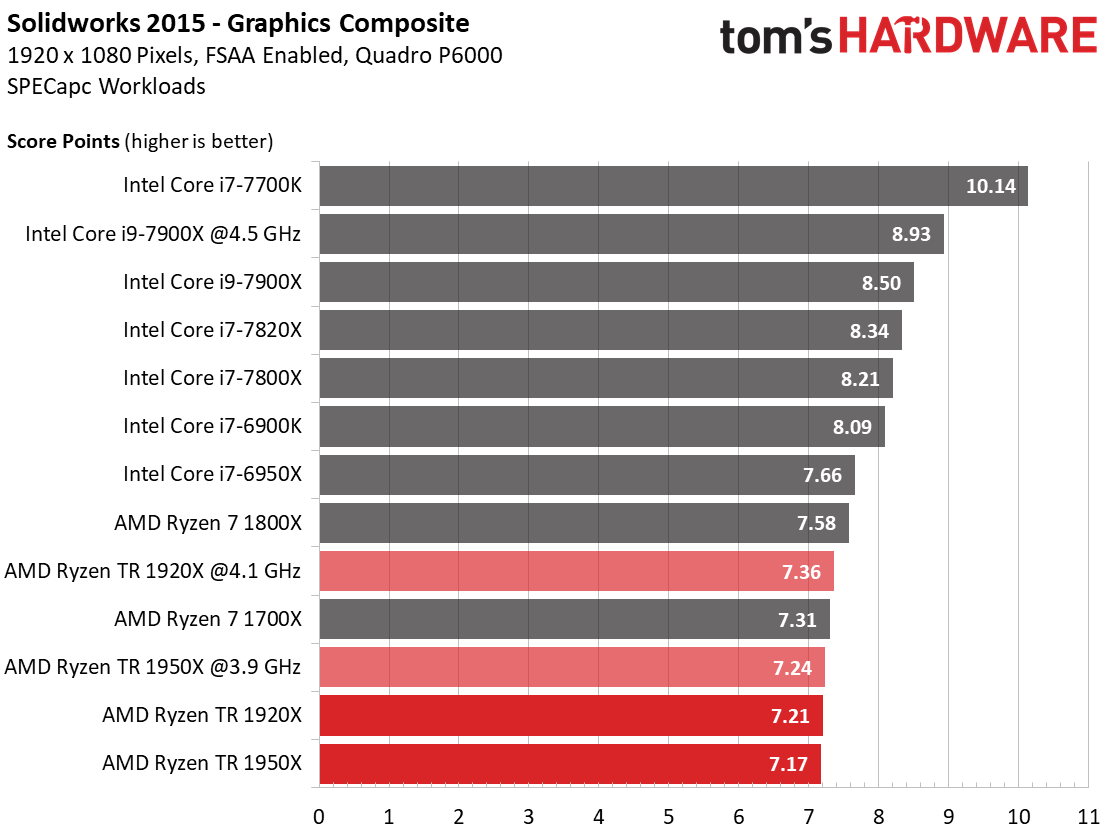

SolidWorks 2015

SolidWorks 2015 tells a similar tale. Even an overclocked Ryzen Threadripper 1920X loses to Ryzen 7 1800X. Switching to NUMA mode could help improve Threadripper's placement, but that'd also have an impact on other applications. You'll have to choose the settings that yield the best experience or face a steady stream of reboots to optimize AMD's platform for whatever you're running at the moment.

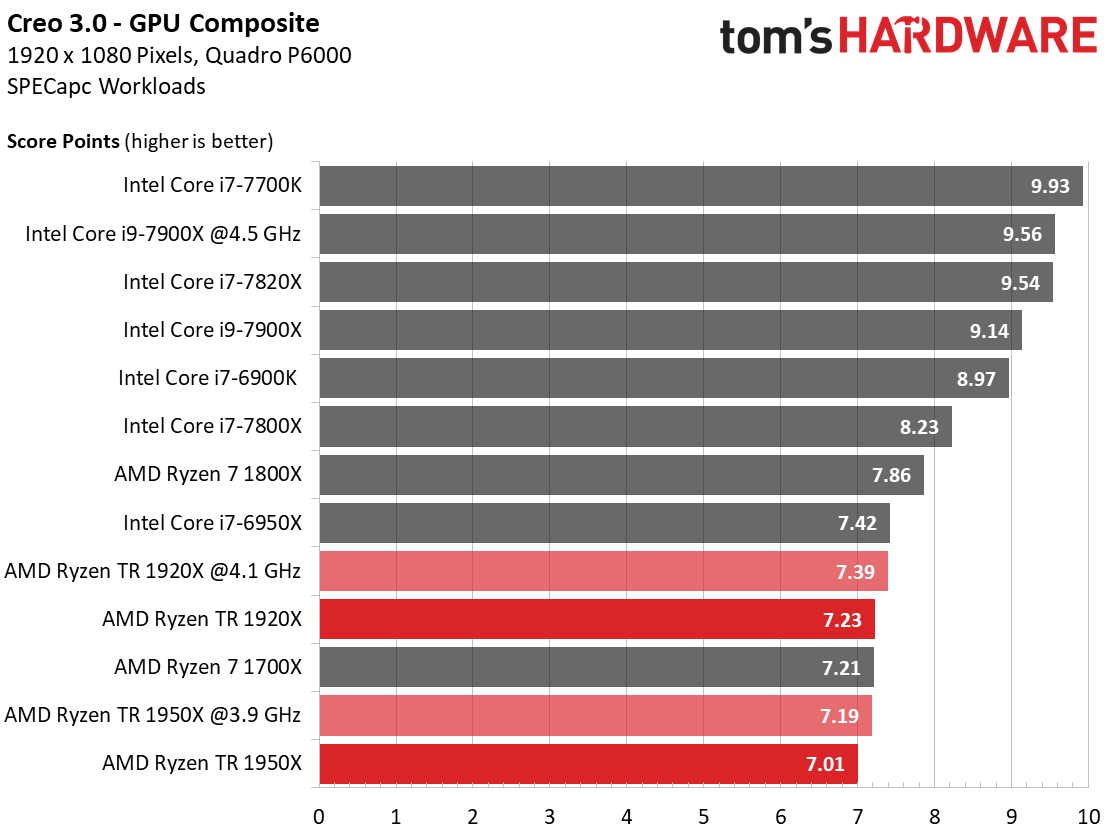

Creo 3.0

The 1920X's lead over the 1950X tells us that frequency is more important than core count during this benchmark.

Moreover, Ryzen 7 1800X's performance advantage over the Threadripper processors suggests the unique MCM design can be problematic in some workloads. Optimized BIOS settings could push those processors up in our field, but they'd also negatively impact Creo's CPU composite score.

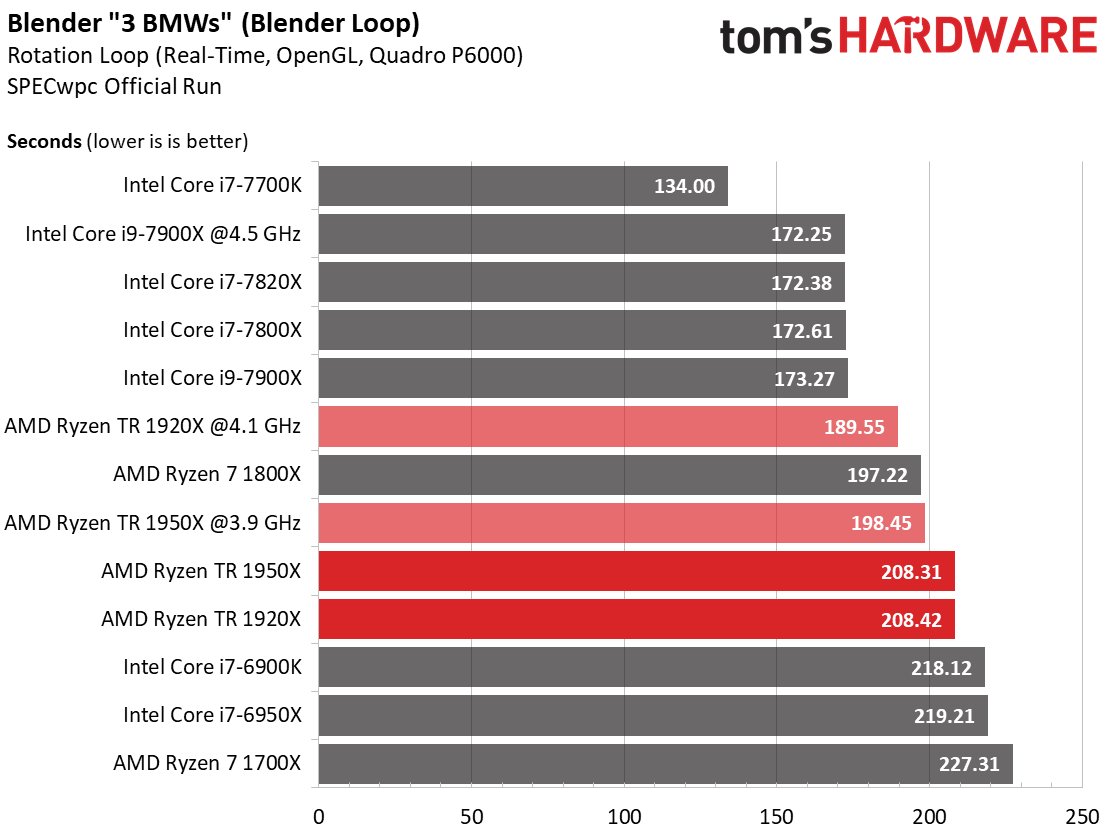

Blender (Real-time 3D Preview)

Threadripper's Blender benchmark results are acceptable for a high-end processor, but the -7700K is a fly in the ointment for both Intel's and AMD's priciest chips. The overclocked 1920X leads AMD's line-up, illustrating the advantages of extra clock rate in workloads not well-optimized for high core counts. Given the great rendering results you'll see on the next page, Threadripper 1920X provides a potent balance of performance in all types of applications.

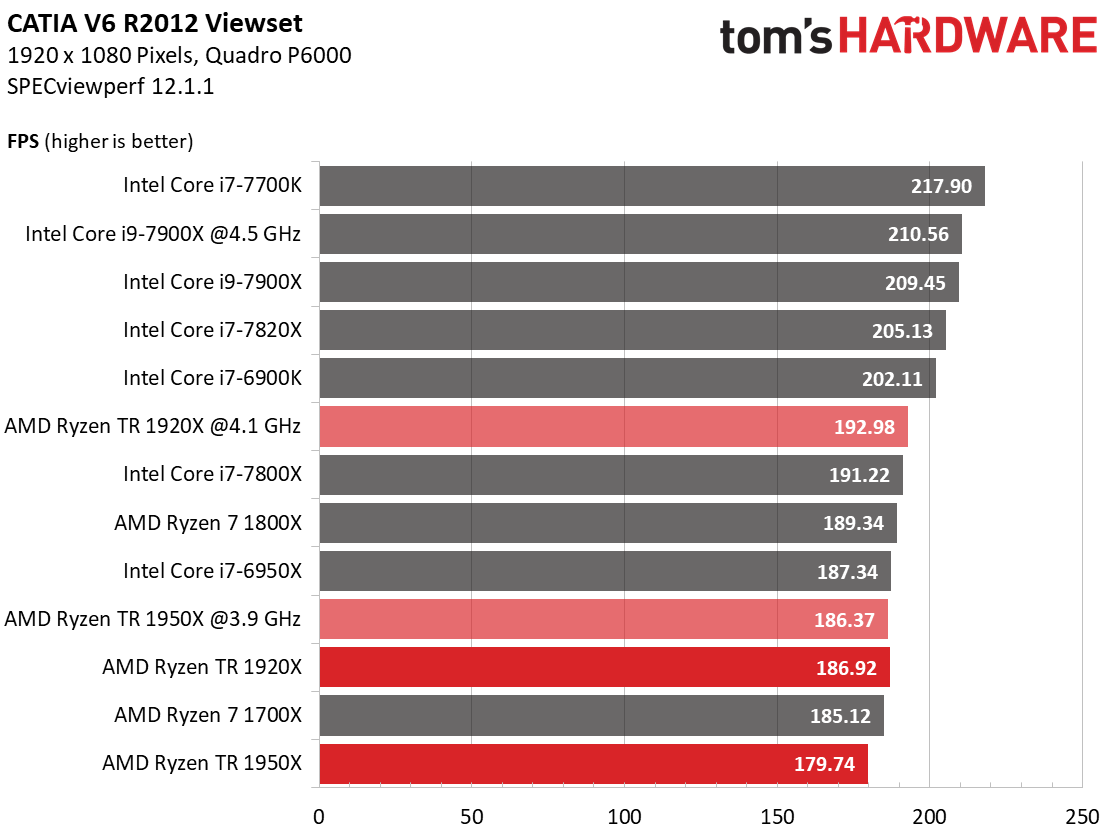

Catia V6 R2012

This graphics benchmark is well-optimized (it’s part of the free SPECviewperf 12 suite, after all). We can see that frequency is particularly important in determining performance.

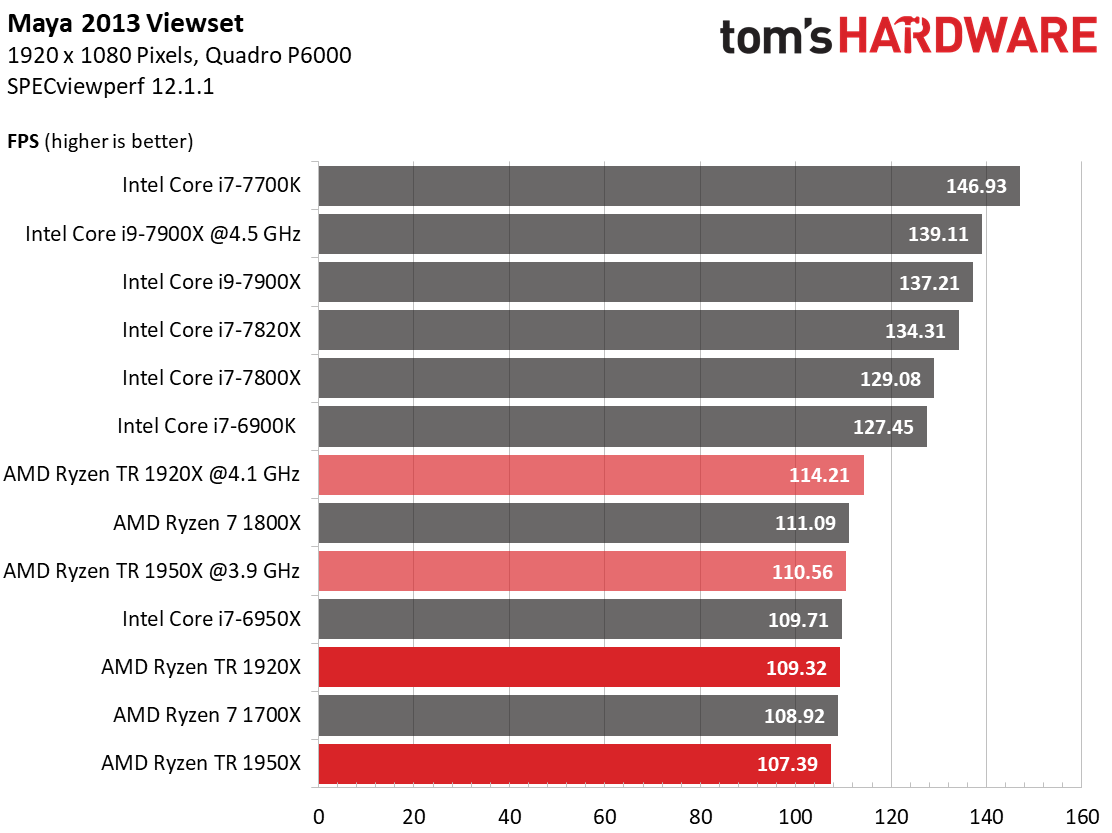

Maya 2013

Maya 2013 also leans heavily on clock rate. But you have to remember that real-time 3D output benchmarks don’t tell the whole story. AMD’s Threadripper processors are much more competitive during final rendering, which we'll cover on the next page.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

Aldain Great review as always, but on the power consumption front, given that the 1950x has six more cores and the 1920 has two more, they are more power efficient than the 7900x in every regard relative to the high stock clocks of the TR

derekullo "Ryzen Threadripper 1920X comes arms with 12 physical cores and SMT"

...

Judging from Threadripper 1950X versus the Threadripper 1900X we can infer that a difference of 400 megahertz is worth the tdp of 16 whole threads.

I never realized HT / SMT was that efficient or is AMD holding something back with the Threadripper 1900x?

jeremyj_83 Your sister site Anandtech did a retest of Threadripper a while back and found that their original form of game mode was more effective than the one supplied by AMD. What they had done is disable SMT and have a 16c/16t CPU instead of the 8c/16t that AMD's game mode does. http://www.anandtech.com/show/11726/retesting-amd-ryzen-threadrippers-game-mode-halving-cores-for-more-performance/16

Wisecracker Hats-off to AMD and Intel. The quantity (and quality) of processing power is simply amazing these days. Long gone are the times of taking days off (literally) for "rasterizing and rendering" of work flows

...or is AMD holding something back with the Threadripper 1900x?

I think the better question is, "Where is AMD going from here?"

The first revision Socket SP3r2/TR4 mobos are simply amazing, and AMD has traditionally maintained (and improved!) their high-end stuff. I can't wait to see how they use those 4094 landings and massive bandwidth over the next few years. The next iteration of the 'Ripper already has me salivating :ouch:

I'll take 4X Summit Ridge 'glued' together, please !!

RomeoReject This was a great article. While there's no way in hell I'll ever be able to afford something this high-end, it's cool to see AMD trading punches once again.

ibjeepr I'm confused.

"We maintained a 4.1 GHz overclock"

Per chart "Threadripper 1920X - Boost Frequency (GHz) 4.0 (4.2 XFR)"

So you couldn't get the XFR to 4.2?

If I understand correctly manually overclocking disables XFR.

So your chip was just a lotto loser at 4.1 or am I missing something?

EDIT: Oh, you mean 4.1 All core OC I bet.

sion126 actually the view should be you cannot afford not to go this way. You save a lot of time with gear like this my two 1950X rigs are killing my workload like no tomorrow... pretty impressive...for just gaming, maybe......but then again....its a solid investment that will run a long time...

AgentLozen redgarl said: Now this at 7nm...

A big die shrink like that would be helpful but I think that Ryzen suffers from other architectural limitations.

Ryzen has a clock speed ceiling of roughly 4.2Ghz. It's difficult to get it past there regardless of your cooling method.

Also, Ryzen experiences nasty latency when data is being shared over the Infinity Fabric. Highly threaded work loads are being artificially limited when passing between dies.

Lastly, the Ryzen's IPC lags behind Intel's a little bit. Coupled with the relatively low clock speed ceiling, Ryzen isn't the most ideal CPU for gaming (it holds up well in higher resolutions to be fair).

Threadripper and Ryzen only look as good as they do because Intel hasn't focused on improving their desktop chips in the last few years. Imagine if Ivy Bridge wasn't a minor upgrade. If Haswell, Broadwell, Skylake, and Kabylake weren't tiny 5% improvements. What if Skylake X wasn't a concentrated fiery inferno? Zen wouldn't be a big deal if all of Intel's latest chips were as impressive as the Core 2 Duo was back in 2006.

AMD has done an amazing job transitioning from crappy Bulldozer to Zen. They're in a position to really put the hurt on Intel but they can't lose the momentum they've built. If AMD were to address all of these problems in their next architecture update, they would really have a monster on their hands.

redgarl Sure Billy Gates, at 1080p with an 800$ CPU and an 800$ GPU made by a competitor... sure...

At 1440p and 2160p the gaming performance is the same; however, your multi-threading performance is still better than the overpriced Intel chips.