BenQ BL3200PT Review: A 32-Inch AMVA Monitor At 2560x1440

The extra pixel density of a 27-inch monitor sporting a native 2560x1440 resolution can make small text difficult to read. BenQ solves the problem by adding five extra inches to its BL3200PT. Today we test this jumbo 32-inch AMVA-based panel in our lab.
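The density difference behind that claim is simple arithmetic: the number of pixels along the diagonal divided by the diagonal size in inches. The short Python sketch below compares a 27-inch and a 32-inch panel at 2560x1440; the function name `pixels_per_inch` is ours, chosen for illustration only.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density: diagonal length in pixels divided by diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# Same 2560x1440 resolution on two different panel sizes.
for size in (27, 32):
    print(f"{size}-inch QHD: {pixels_per_inch(2560, 1440, size):.0f} ppi")
```

The results round to roughly 109 ppi at 27 inches and 92 ppi at 32 inches, so the larger panel renders the same text about 18 percent bigger.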

Results: Grayscale Tracking and Gamma Response

The majority of monitors, especially newer models, display excellent grayscale tracking (even at stock settings). It’s important that the color of white be consistently neutral at all light levels from darkest to brightest. Grayscale performance impacts color accuracy with regard to the secondary colors: cyan, magenta, and yellow. Since computer monitors typically have no color or tint adjustment, accurate grayscale is key.

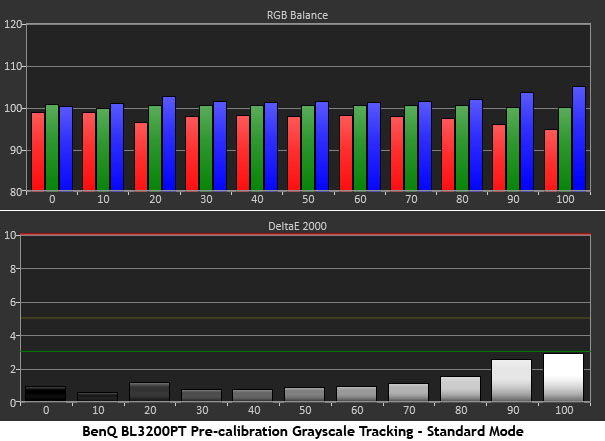

The BL3200PT comes out of the box set to the Standard picture mode, which, as you can see, has pretty good grayscale accuracy. None of the errors are visible, even at 100-percent brightness. In fact, reducing the Contrast control a couple of clicks cleans up that level.

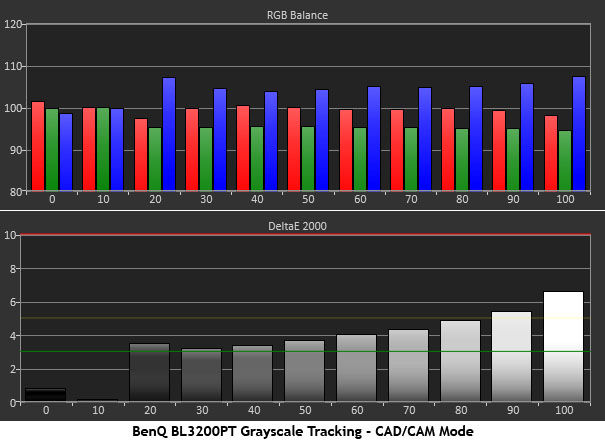

BenQ markets this display as a CAD/CAM monitor, and it has a picture preset with a corresponding designation. We’re including it in our results so you can compare the differences. Obviously, the color temperature is a bit cooler than D65, though not significantly so. It gives the impression of a little extra brightness without actually increasing the contrast or backlight levels. In this preset, the color temp and gamma settings cannot be changed. The average error is 3.68 Delta E.

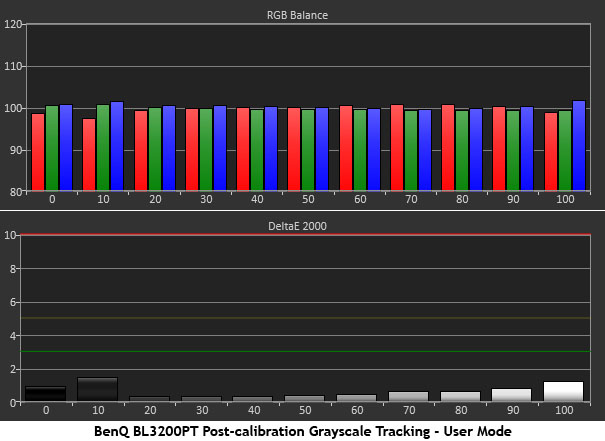

For the most accurate grayscale performance, select the User mode and adjust the RGB sliders. We also lowered the Contrast to 45 to achieve almost perfect results across the board.

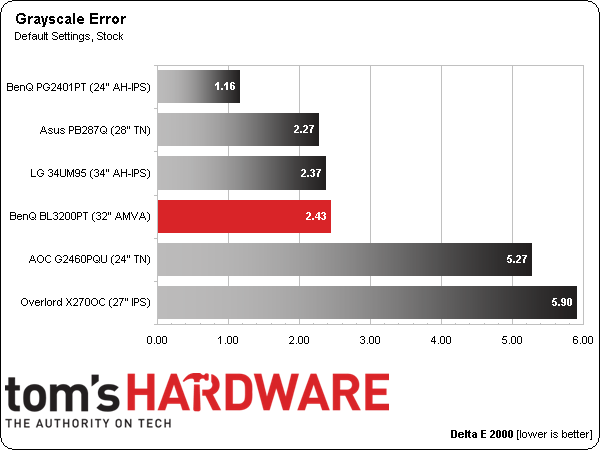

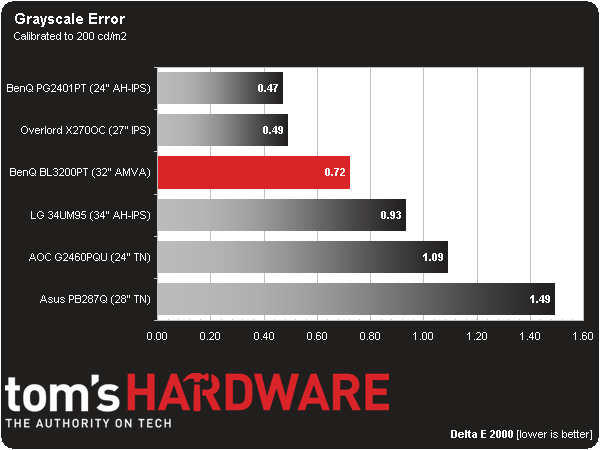

Here is our comparison group:

The BL3200PT’s out-of-box grayscale accuracy in Standard mode is comfortably under the visible level of three Delta E. That bodes well for the majority of users who won’t be calibrating their monitor.

If you plan to use this display for color-critical work, an OSD calibration produces professional-quality results. An average error of less than one Delta E puts the BenQ in elite territory.


Gamma Response

Gamma is the measurement of luminance levels at every step in the brightness range from 0 to 100 percent. It's important because poor gamma can either crush detail at various points or wash it out, making the entire picture appear flat and dull. Correct gamma produces a more three-dimensional image, with a greater sense of depth and realism. Meanwhile, incorrect gamma negatively affects image quality, even in monitors with high contrast ratios.
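As a rough illustration of why the gamma value matters, displayed luminance is often modeled as a simple power law of the input signal. The sketch below assumes that idealized model (it ignores black level and any panel-specific behavior), and the function name `relative_luminance` is hypothetical.

```python
def relative_luminance(signal: float, gamma: float) -> float:
    """Idealized power-law response: a signal in 0..1 maps to luminance in 0..1."""
    if not 0.0 <= signal <= 1.0:
        raise ValueError("signal must be between 0 and 1")
    return signal ** gamma


# Compare the 2.2 standard with a much higher gamma at 10-percent steps.
for gamma in (2.2, 3.0):
    steps = [relative_luminance(level / 10, gamma) for level in range(11)]
    print(gamma, [f"{value:.3f}" for value in steps])
```

Under this model, a 50-percent signal produces 12.5 percent of peak luminance at a gamma of 3.0, versus about 22 percent at 2.2, which is why higher gamma values look darker in the midtones.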

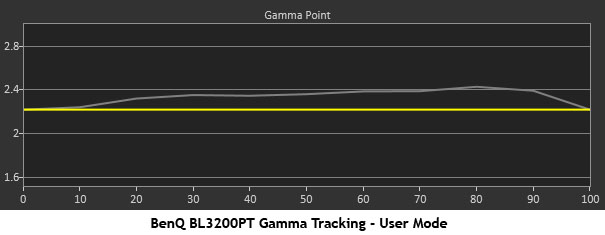

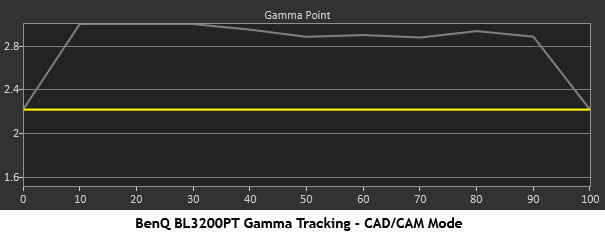

In the gamma charts below, the yellow line represents 2.2, which is the most widely used standard for television, film, and computer graphics production. The closer the white measurement trace comes to 2.2, the better.

Gamma is the only area where the BL3200PT could use improvement. The default Gamma 3 setting results in an average value of over 3.0, which is much too dark for typical content and puts the trace off our chart. Video and games look flat and dull, with poor detail. Changing to Gamma 1 improves image quality significantly. The trace is still a little darker than 2.2, but it’s pretty close.

High gamma values can make a monitor appear to have greater contrast, but this display doesn’t need any help in that department.

If you use the CAD/CAM preset, the gamma control is locked to a very high setting. The graph above shows what you end up with. While this may work fine for industrial design and CAD applications, it is not practical for use with typical computing tasks or entertainment.

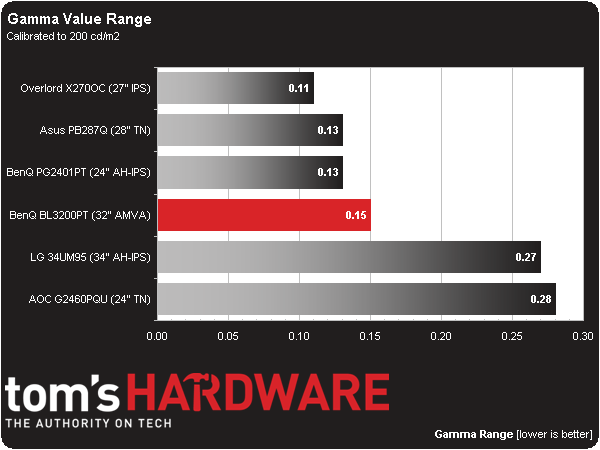

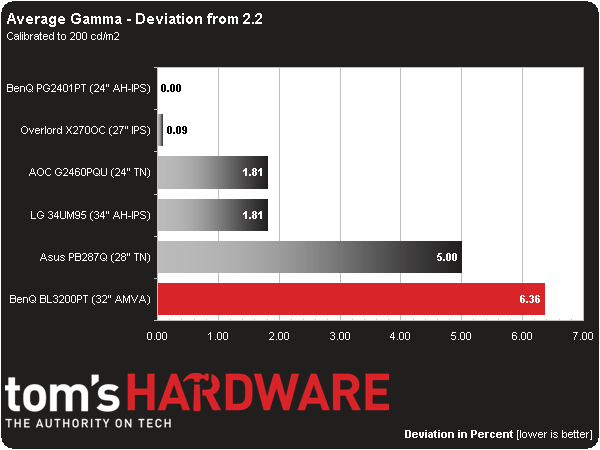

Here is our comparison group again:

Even though the gamma runs a tad dark, it tracks reasonably well. Thanks to a tremendous amount of available contrast, the BL3200PT’s image pops better than the TN or IPS displays we’ve seen run through our labs lately.

We calculate gamma deviation by simply expressing the difference from 2.2 as a percentage.

The average gamma value is 2.34, a little darker than the 2.2 standard. The error is fairly consistent from 10- to 100-percent brightness and runs to a maximum of 5.5 cd/m². We still love the BL3200PT's image quality, but wish there were one more gamma preset to match the 2.2 standard.
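The deviation figure described above can be reproduced with a few lines of Python. This is a sketch under one assumption: that the percentage is taken relative to the 2.2 target. The function and constant names are ours, not part of any test software.

```python
TARGET_GAMMA = 2.2


def gamma_deviation_percent(measured: float, target: float = TARGET_GAMMA) -> float:
    """Absolute difference from the target gamma, as a percentage of the target.

    Assumption: the deviation is normalized by the target value (2.2).
    """
    return abs(measured - target) / target * 100


# The average gamma measured for the BL3200PT.
print(f"{gamma_deviation_percent(2.34):.2f}%")
```

With the measured average of 2.34, this works out to a deviation of about 6.4 percent.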


Christian Eberle is a Contributing Editor for Tom's Hardware US. He's a veteran reviewer of A/V equipment, specializing in monitors. Christian began his obsession with tech when he built his first PC in 1991, a 286 running DOS 3.0 at a blazing 12MHz. In 2006, he undertook training from the Imaging Science Foundation in video calibration and testing and thus started a passion for precise imaging that persists to this day. He is also a professional musician with a degree from the New England Conservatory as a classical bassoonist which he used to good effect as a performer with the West Point Army Band from 1987 to 2013. He enjoys watching movies and listening to high-end audio in his custom-built home theater and can be seen riding trails near his home on a race-ready ICE VTX recumbent trike. Christian enjoys the endless summer in Florida where he lives with his wife and Chihuahua and plays with orchestras around the state.

-

npyrhone "Remember that 92 ppi number we mentioned at the beginning of today's story? That seems to be a sweet spot. It works fine at 24 inches if your screen is FHD. You won’t discern individual pixels, but you’ll be quickly wishing for more screen real estate. Moving up to 2560x1440 at 27 inches increases density to 109 ppi. That’s great for gaming and photo work. However, text and small objects become difficult to see for many users."

I can't understand why I would need a monitor with lower pixel density? Why not just zoom the text a notch in your word processor or whatever software you are using? Of two otherwise similar monitors I would always choose the one with higher PPI, even if I used it only for word processing. -

kid-mid I rather have the 27" QNIX Evo II 1440p for $300 or the ROG Swift for $600.

The days of 60Hz are almost over with.. -

moogleslam "I rather have the 27" QNIX Evo II 1440p for $300 or the ROG Swift for $600. The days of 60Hz are almost over with.."

Except that the Swift cost $800 -

Merry_Blind "The only complaint we’ve registered along the way involves font size. With a pixel density of 109 ppi, text in most Windows applications becomes pretty small."

That's why I don't understand people saying 1080p is crap and has to go away. I've always find that even at 1080p, the fonts are really small, and icons and interfaces in general are very tiny. In my case, it's not even a case of not being able to read, it's just that everything looks so out of place and hideous, like, Windows wasn't meant for such resolutions.

I can't imagine 1440p. Must be ridiculous to look at. It's just aesthetically not nice.

Bring on the downvotes... -

animalosity Why in God's green earth would you pay $1000 for a 1440p display at 60hz when you can get a 4K for way less than that now. Rather have UHD.... -

Bondfc11 I agree with npyrhone - there are ways to enlarge everything on your screen if the density is too low. Having said that - this is an interesting panel. However, I cannot wait for the days when not TNs, but also IPS and VA panels (in large formats) become standard at 120Hz. The hertz do make a noticeable difference in everything you do on the screen. -

ohim I`ll wait to see what Active Sync monitors will be able to do , an IPS with Active sync over a TN with 144hz. -

Merry_Blind "I`ll wait to see what Active Sync monitors will be able to do , an IPS with Active sync over a TN with 144hz."

What is Active Sync? -

Merry_Blind "Why in God's green earth would you pay $1000 for a 1440p display at 60hz when you can get a 4K for way less than that now. Rather have UHD...."

It's not 1000$ though...