Tom's Hardware Verdict

GeForce GTX 1650 has potential. It needs to be less expensive, for starters. We also want to see a card that gets all of its power from the PCIe slot. For the time being, AMD's Radeon RX 570 8GB is faster, less expensive, and better able to handle games with big memory requirements.

Pros

- Some versions do not require auxiliary power
- Great performance per watt

Cons

- Expensive relative to Radeon RX 570
- Some versions do require auxiliary power
- Not quite capable of smooth performance at max-quality 1080p
- Missing newest-generation NVENC encoder hardware


Nvidia GeForce GTX 1650 4GB Review

AMD's Radeon RX 570 launched almost exactly two years ago. Back then, nobody could have anticipated that the card, based on an even older Ellesmere GPU, would score a fresh win in 2019. But here we are, benchmarking Nvidia’s new GeForce GTX 1650 4GB against the Radeon RX 570 8GB and finding AMD’s board to be not only faster, but in some cases less expensive as well.

Surely, Nvidia has some advantage in this competition. Right?

Well, the GeForce GTX 1650 and its TU117 processor are technically rated for 75W of power consumption, putting them in that rare category of gaming graphics cards capable of pulling all the current they need from a PCI Express slot. Except the sample we’re testing has a six-pin auxiliary connector along its top edge. And if you don’t use it, “PLEASE POWER DOWN AND CONNECT THE PCIe POWER CABLE(S) FOR THIS GRAPHICS CARD” appears as soon as you boot up.

Of course, it’s not all doom and gloom for the GeForce GTX 1650. We were able to confirm the existence of multiple models that don’t require external power. Even those that do should use about half the power of AMD’s Radeon RX 570 under load, making them immensely more efficient. How does Nvidia achieve such an advantage? It’s all in the Turing architecture…

TU117: A New GPU With Familiar Tricks

The GPU at the heart of GeForce GTX 1650 is called TU117-300-A1, and it’s trimmed down even more than GeForce GTX 1660’s TU116 processor. Not surprisingly, TU117 is quite a bit smaller than TU116: it comprises 4.7 billion transistors in a 200 mm² die. The chip is still manufactured using TSMC’s 12nm FinFET process and naturally lacks the RT and Tensor cores so commonly associated with Turing.

Some of the architecture’s other features do rub off on TU117, though. Like the higher-end GeForce RTX 20-series cards, GeForce GTX 1650 supports simultaneous execution of FP32 arithmetic instructions, which constitute most shader workloads, and INT32 operations (for addressing/fetching data, floating-point min/max, compare, etc.).

Turing’s Streaming Multiprocessors are composed of fewer CUDA cores than Pascal’s, but the design compensates in part by spreading more SMs across each GPU. The newer architecture assigns one scheduler to each set of 16 CUDA cores (2x Pascal), along with one dispatch unit per 16 CUDA cores (same as Pascal). Four of those 16-core groupings comprise the SM, along with 96KB of cache that can be configured as 64KB L1/32KB shared memory or vice versa, and four texture units. Because Turing doubles up on schedulers, it only needs to issue an instruction to the CUDA cores every other clock cycle to keep them full. In between, it's free to issue a different instruction to any other unit, including the INT32 cores.
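The issue cadence described above can be sketched with a little arithmetic. This is only an illustrative model, not a description of Nvidia's actual scheduler: the warp size of 32 threads is an assumption not stated in the text, and `issue_interval` and `free_issue_slots` are hypothetical helper names.

```python
import math

WARP_SIZE = 32   # assumption: threads per warp (not stated in the article)
FP32_LANES = 16  # CUDA cores served by one scheduler, per the text


def issue_interval(warp_size: int = WARP_SIZE, lanes: int = FP32_LANES) -> int:
    """Cycles one warp-wide FP32 instruction keeps the lanes busy."""
    return math.ceil(warp_size / lanes)


def free_issue_slots(cycles: int, interval: int) -> int:
    """Issue slots left for other units (such as INT32) over `cycles` cycles,
    assuming the scheduler can issue one instruction per cycle."""
    fp32_issues = math.ceil(cycles / interval)
    return cycles - fp32_issues


interval = issue_interval()
print(interval)                       # 2
print(free_issue_slots(8, interval))  # 4
```

Under these assumptions, a 32-thread instruction occupies 16 lanes for two cycles, which is why the FP32 cores only need a new instruction every other clock and the alternate slots are available for other work.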


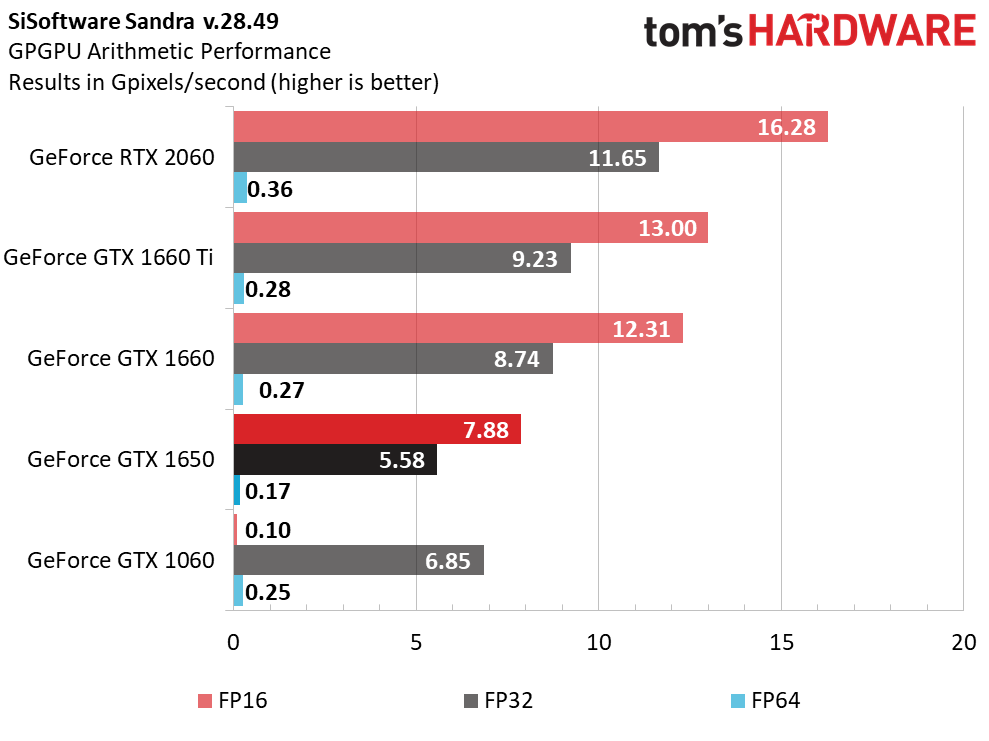

In TU117, Nvidia replaces Turing’s Tensor cores with 128 dedicated FP16 cores per SM, which allow GeForce GTX 1650 to process half-precision operations at 2x the rate of FP32. TU106, TU104, and TU102 boast double-rate FP16 as well through their Tensor cores, so TU117’s configuration serves to maintain that standard through hardware put in place specifically for this GPU. The following chart is an updated version of the one published in our GeForce GTX 1660 review, which illustrates TU117’s massive improvement to half-precision throughput compared to GeForce GTX 1060 and its Pascal-based GP106 chip.

In addition to the Turing architecture’s shaders and unified cache, TU117 also supports a pair of algorithms called Content Adaptive Shading and Motion Adaptive Shading, together referred to as Variable Rate Shading. We covered this technology in Nvidia’s Turing Architecture Explored: Inside the GeForce RTX 2080. That story also introduced Turing's accelerated video encode capabilities, which carried over to GeForce GTX 1660, but did not make it into GeForce GTX 1650. Check out the following screen capture from Nvidia’s website, referring to the 1650’s NVENC engine as Volta-equivalent, making it similar to Pascal.

That means support for H.265 8K encode at 30 FPS is gone, along with the 25% bitrate savings for HEVC and up to 15% bitrate savings for H.264 that Nvidia touted when Turing launched.

Putting It All Together…

Whereas GeForce GTX 1660 is armed with 22 Streaming Multiprocessors, the 1650 features just 14 SMs spread across two Graphics Processing Clusters. One GPC hosts four Texture Processing Clusters and the other has three. With 64 FP32 cores per SM, we end up with 896 active CUDA cores and 56 usable texture units.
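The resource totals follow directly from the cluster layout. The sketch below reproduces them; the `GpuConfig` class is hypothetical, and the figure of two SMs per TPC is an assumption inferred from the seven TPCs and 14 SMs quoted above rather than something the text states outright.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class GpuConfig:
    tpcs_per_gpc: Tuple[int, ...]  # active TPCs in each GPC
    sms_per_tpc: int = 2           # assumption: inferred from 7 TPCs -> 14 SMs
    fp32_per_sm: int = 64          # FP32 CUDA cores per SM, per the text
    tex_per_sm: int = 4            # texture units per SM, per the text

    @property
    def sm_count(self) -> int:
        return sum(self.tpcs_per_gpc) * self.sms_per_tpc

    @property
    def cuda_cores(self) -> int:
        return self.sm_count * self.fp32_per_sm

    @property
    def texture_units(self) -> int:
        return self.sm_count * self.tex_per_sm


tu117 = GpuConfig(tpcs_per_gpc=(4, 3))
print(tu117.sm_count, tu117.cuda_cores, tu117.texture_units)  # 14 896 56
```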

Board partners will undoubtedly target a range of frequencies to differentiate their cards. However, the official base clock rate is 1,485 MHz with a GPU Boost specification of 1,665 MHz. Both of those numbers trail GeForce GTX 1660’s clocks, so in addition to losing on-die resources, GeForce GTX 1650 operates at lower frequencies, too.

Since Gigabyte doesn’t seem entirely content with those specs, we’re testing a GeForce GTX 1650 Gaming OC 4G with its GPU Boost clock set to 1,815 MHz. The card had no trouble maintaining a range between 1,890 and 1,920 MHz through three runs of Metro: Last Light.

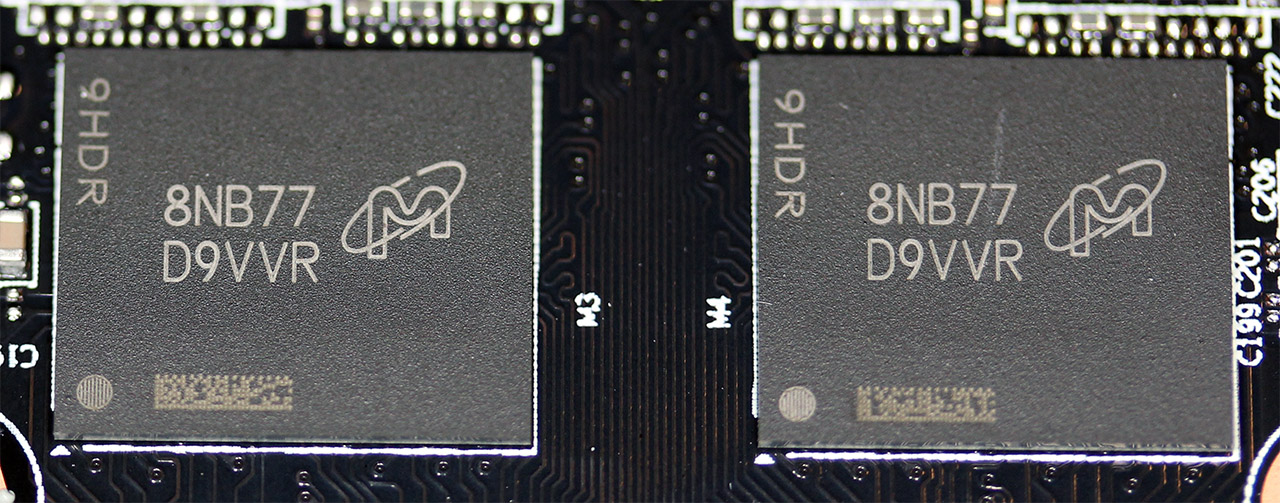

Four 32-bit memory controllers give TU117 an aggregate 128-bit bus, which is populated by 8 Gb/s GDDR5 modules pushing up to 128 GB/s. That’s a mere 14% improvement over the 112 GB/s of GeForce GTX 1050/1050 Ti.

Each memory controller is associated with eight ROPs and a 256KB slice of L2 cache, totaling 32 ROPs and 1MB of L2 across TU117. Similar to TU116, this chip’s L2 cache slices are half as large compared to TU106.
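As a quick check on the memory subsystem figures, the totals can be derived from the per-controller numbers in the text. This is a minimal sketch; `memory_bandwidth_gbs` is a hypothetical helper, and it assumes the usual peak-bandwidth formula of bus width times per-pin data rate divided by eight bits per byte.

```python
def memory_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus width (bits) x per-pin rate (Gb/s) / 8."""
    return bus_width_bits * data_rate_gbps / 8


CONTROLLERS = 4            # 32-bit memory controllers on TU117
BITS_PER_CONTROLLER = 32
ROPS_PER_CONTROLLER = 8
L2_KB_PER_CONTROLLER = 256

bus_width = CONTROLLERS * BITS_PER_CONTROLLER   # 128-bit
bandwidth = memory_bandwidth_gbs(bus_width, 8)  # 8 Gb/s GDDR5
rops = CONTROLLERS * ROPS_PER_CONTROLLER
l2_kb = CONTROLLERS * L2_KB_PER_CONTROLLER

print(bus_width, bandwidth, rops, l2_kb)  # 128 128.0 32 1024
```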

All of the cutting is good for a 45W reduction in power consumption compared to GeForce GTX 1660 and 1660 Ti. By hitting the magic 75W threshold, Nvidia can claim that GeForce GTX 1650 doesn’t need an auxiliary power connector. But pay close attention as you shop—some implementations lack a six-pin connector, while others (like ours) require one. If your card needs external power, attaching that connector won’t be optional.
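The power figures can be laid out in the same way. The sketch below only compares rated TDP against the 75W slot threshold mentioned above; `needs_aux_power_by_rating` is a hypothetical helper, and, as our Gigabyte sample shows, a board partner may still fit and require a six-pin connector regardless of what the rating allows.

```python
PCIE_SLOT_LIMIT_W = 75  # the threshold referred to in the text

# Rated TDPs from the specification table.
TDP_W = {
    "GeForce GTX 1650": 75,
    "GeForce GTX 1660": 120,
    "GeForce GTX 1660 Ti": 120,
}


def needs_aux_power_by_rating(tdp_w: int, slot_limit_w: int = PCIE_SLOT_LIMIT_W) -> bool:
    """True if the rated TDP exceeds what the slot alone can supply.

    This reflects the rating only; an individual board design may
    require an auxiliary connector anyway.
    """
    return tdp_w > slot_limit_w


reduction = TDP_W["GeForce GTX 1660"] - TDP_W["GeForce GTX 1650"]
print(reduction)  # 45
for name, tdp in TDP_W.items():
    print(name, needs_aux_power_by_rating(tdp))
```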

| | GeForce GTX 1650 | GeForce GTX 1660 | GeForce GTX 1660 Ti | GeForce GTX 1060 FE |
| --- | --- | --- | --- | --- |
| Architecture (GPU) | Turing (TU117) | Turing (TU116) | Turing (TU116) | Pascal (GP106) |
| CUDA Cores | 896 | 1408 | 1536 | 1280 |
| Peak FP32 Compute | 3 TFLOPS | 5 TFLOPS | 5.4 TFLOPS | 4.4 TFLOPS |
| Tensor Cores | N/A | N/A | N/A | N/A |
| RT Cores | N/A | N/A | N/A | N/A |
| Texture Units | 56 | 88 | 96 | 80 |
| Base Clock Rate | 1485 MHz | 1530 MHz | 1500 MHz | 1506 MHz |
| GPU Boost Rate | 1665 MHz | 1785 MHz | 1770 MHz | 1708 MHz |
| Memory Capacity | 4GB GDDR5 | 6GB GDDR5 | 6GB GDDR6 | 6GB GDDR5 |
| Memory Bus | 128-bit | 192-bit | 192-bit | 192-bit |
| Memory Bandwidth | 128 GB/s | 192 GB/s | 288 GB/s | 192 GB/s |
| ROPs | 32 | 48 | 48 | 48 |
| L2 Cache | 1MB | 1.5MB | 1.5MB | 1.5MB |
| TDP | 75W | 120W | 120W | 120W |
| Transistor Count | 4.7 billion | 6.6 billion | 6.6 billion | 4.4 billion |
| Die Size | 200 mm² | 284 mm² | 284 mm² | 200 mm² |
| SLI Support | No | No | No | No |
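The Peak FP32 Compute row can be reproduced from the core counts and boost clocks in the table. This sketch assumes each CUDA core retires one fused multiply-add (counted as two floating-point operations) per clock at the rated GPU Boost frequency; that convention is not spelled out in the article, and `peak_fp32_tflops` is a hypothetical helper.

```python
FLOPS_PER_CORE_PER_CLOCK = 2  # assumption: one FMA = 2 floating-point ops


def peak_fp32_tflops(cuda_cores: int, boost_mhz: int) -> float:
    """Peak FP32 throughput in TFLOPS at the rated boost clock."""
    return cuda_cores * FLOPS_PER_CORE_PER_CLOCK * boost_mhz / 1_000_000


# (CUDA cores, GPU Boost MHz) taken from the specification table.
CARDS = {
    "GeForce GTX 1650": (896, 1665),
    "GeForce GTX 1660": (1408, 1785),
    "GeForce GTX 1660 Ti": (1536, 1770),
    "GeForce GTX 1060 FE": (1280, 1708),
}

for name, (cores, boost) in CARDS.items():
    print(f"{name}: {peak_fp32_tflops(cores, boost):.1f} TFLOPS")
```

Rounded to one decimal place, the results line up with the table: 3.0, 5.0, 5.4, and 4.4 TFLOPS. Factory-overclocked boards such as the one tested here will land somewhat higher.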


Thanks for the review. I think the RX 570 is still going strong and ahead of the GTX 1650. This Turing entry-level GPU seems to have a poor price/performance ratio, though.

EDIT: Btw, you are correct about all the points you mentioned under Cons, including the last one. The GTX 1650 lacks the Turing NVENC encoder and packs Volta's multimedia engine.

https://www.techpowerup.com/254861/nvidia-gtx-1650-lacks-turing-nvenc-encoder-packs-voltas-multimedia-engine

renz496

Nvidia most likely did not intend this card to be the price/performance king. They are most likely banking on its power efficiency to gain momentum, in a similar way to the GTX 750 Ti and GTX 1050 Ti before it. Right now this is the fastest sub-75W GPU. Nvidia probably could be more aggressive on pricing, but this generation their attention is mostly on the mid-range, hence the much more aggressive pricing we saw with the GTX 1660 Ti and GTX 1660.

TMTOWTSAC

I think the GPU itself makes sense, but this partner board doesn't. For users who would otherwise have to purchase a new PSU you can charge a price premium. Take that away and actually charge more for it, while offering less performance per dollar? I've never understood the partner board mentality of tricking out a lower tier model until it costs more than a higher tier while still falling short of its performance.

cryoburner

Diabl0 said: Waiting for 1650Ti

I wouldn't expect all that much more from it. The 1650 is already pushing the limits of what this graphics chip can do within a 75 watt power envelope, and even this $180 factory overclocked model that requires an external power connector performs around 10% below an RX 570 on average. The 1650 Ti will probably manage to outperform the RX 570 and 1060 3GB, but that card is expected to start around $180 for the base models.

And that's the biggest problem with these cards. They are terribly priced. 2 1/2 years ago, the 1050 launched with a $109 MSRP and the 1050 Ti launched with a $139 MSRP. The 1650 is already launching for a higher base price than the 1050 Ti launched for, and the 1650 Ti will be launching for a price not far below what the 1060 3GB and RX 480 4GB were back in 2016, for performance that will likely not be much better. If you wanted that level of performance for that price, you could have had it years ago. And at this point, you can get that level of performance for around $130 with an RX 570. If these cards were priced closer to what the previous generation hardware launched for, perhaps starting around $120 for the 1650, and $150 for the 1650 Ti, they would have been decent options. They're priced at least 20% higher than they should be though.

About the only real advantage these cards hold is that they have low power draw, allowing them to run on low-end 300-350 watt power supplies found in some pre-built systems, at least assuming you get a card that doesn't require a PCIe power cable, but those will likely perform slightly behind what's shown here.

Loadedaxe

I had high hopes for this card. I almost bought an RX 580 4GB on sale for $149 last month. Now I found an RX 580 4GB on sale for $169.

Sorry, Nvidia. You will have to do better in this price range. Maybe if it cost $129, but not $179.

salata

I am looking for a GPU for my OEM Dell. This card does not perform well enough to justify its price right now. For me it is better to consider a replacement, including modding my MT case to fit an ATX PSU and an RX 580 or 1660. This card would have to be 30-40 bucks cheaper for me to consider buying it. I'm also fine waiting for a Navi 12-based 75W GPU.

How did this review thread/topic land here, under the Graphics Cards sub-forum? Shouldn't it be here:

https://forums.tomshardware.com/forums/reviews-comments.67/

escksu

This card isn't really a fail.... It may be sucky as a normal desktop card but it's the fastest low profile GPU out there and it doesn't require additional power. For anyone who has a small case and needs additional juice, this is the best card for them!!

cryoburner

escksu said: This card isn't really a fail.... It may be sucky as a normal desktop card but it's the fastest low profile GPU out there and it doesn't require additional power. Anyone who has a small casing and need additional juice, this is the best card for them!!

Except it's not a low-profile card. Looking on PCPartPicker, every 1650 that's available right now is a full-width card. There is apparently one low-profile Zotac 1650, but it's not stocked by any US retailers tracked by PCPartPicker at this time...

https://pcpartpicker.com/products/video-card/#c=443&sort=price&page=1

Further, a majority of them DO require external power, including the one tested for this review, and the ones that don't tend to perform a little slower.

That's not to say the card is useless though, and for someone looking for a budget card for a pre-built system with something like a 350 watt PSU, it could make sense (provided they are not trying to fit it in a slim case).