GeForce 9600 GT/GTS 250/GTX 260 Non-Reference Roundup


Gigabyte’s GV-N96TSL-1GI And GV-N96TZL-1GI: Different Personalities

We’ll start with the GeForce 9600 GT-based cards from Gigabyte. While these products are based on identical printed circuit boards (PCBs), their value-added features set them apart in practice.

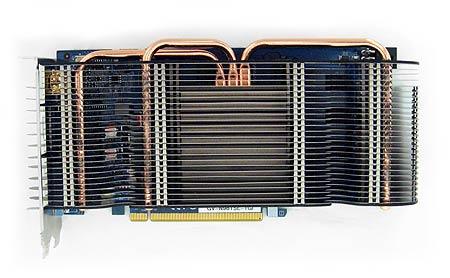

Gigabyte’s GV-N96TSL-1GI: Silent running for home-theater PC (HTPC) users who want to get their game on

The GV-N96TSL-1GI's most salient selling point is silence, thanks to a large, dual-slot Silent Cell cooler that does without a fan. Unlike most dual-slot coolers, this one does not push GPU-heated air out of the back of the case. Instead, the GV-N96TSL-1GI draws cool air in from behind your chassis, which lowers the temperature of the graphics card. The heated air then rises and is exhausted by the case's fans and the PSU. Gigabyte claims the Silent Cell 3 cooler can run up to 18 degrees Celsius cooler than the active fan heat sink of the GeForce 9600 GT reference design. Of course, this passive heat sink relies completely on the case fans in your PC, so if your chassis has poor airflow, you might want to address that before installing the GV-N96TSL-1GI.

The GV-N96TSL-1GI's GPU and memory clock speeds are identical to those of the reference board: 650 MHz for the GPU core, 1,625 MHz for the shader clock, and 900 MHz (1,800 MHz DDR) for the memory. Performance should therefore be very close to that of the reference GeForce 9600 GT, although the 1 GB of RAM (twice the reference card's 512 MB) might help a bit in certain situations.

Gigabyte’s GV-N96TZL-1GI: A low-priced, overclocked gaming card with 1 GB of RAM

The GV-N96TZL-1GI is a straightforward improvement over the reference GeForce 9600 GT: it is quieter, cooler, and overclocked. Its thermal and acoustic enhancements come courtesy of a Zalman heat sink and fan thick enough to make the card occupy two slots, although, like the GV-N96TSL-1GI, this card doesn't exhaust heated air out of your case.

With regard to overclocking, the card's 700 MHz core clock is a mild 50 MHz increase over the reference design's 650 MHz, and its 1,800 MHz shader clock is 175 MHz higher than the reference 1,625 MHz. Memory speed is identical to the reference at 900 MHz (1,800 MHz DDR).
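To put the GV-N96TZL-1GI's factory overclock in relative terms, the percentages can be worked out from the clock speeds quoted above. The Python sketch below uses only those figures; the dictionary and function names are hypothetical, chosen for illustration, and are not part of any Gigabyte or Nvidia tool. It also shows the DDR doubling behind the "900 MHz (1,800 MHz DDR)" memory figure.

```python
# Clock speeds (MHz) as quoted in this review.
# REFERENCE and GV_N96TZL are hypothetical names used only for this illustration.
REFERENCE = {"core": 650, "shader": 1625, "memory": 900}
GV_N96TZL = {"core": 700, "shader": 1800, "memory": 900}


def overclock(card, reference):
    """Return {domain: (delta_mhz, percent_increase)} relative to the reference clocks."""
    result = {}
    for domain, ref_mhz in reference.items():
        delta = card[domain] - ref_mhz
        # Percentage increase over the reference clock, rounded to one decimal place.
        result[domain] = (delta, round(100 * delta / ref_mhz, 1))
    return result


def ddr_effective(mhz):
    """DDR memory transfers data on both clock edges, so the effective rate is twice the clock."""
    return 2 * mhz


if __name__ == "__main__":
    for domain, (delta, pct) in overclock(GV_N96TZL, REFERENCE).items():
        print(f"{domain}: +{delta} MHz ({pct}%)")
    print("effective memory rate:", ddr_effective(GV_N96TZL["memory"]), "MHz")
```

Run as a script, it reports roughly a 7.7% core increase and a 10.8% shader increase, with memory unchanged at an effective 1,800 MHz.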


Packaging and Bundle

Our samples didn’t include a retail box or bundle, but we're told the cards will ship with a Molex-to-PCI Express (PCIe) power adapter cable and an audio cable. Note that these GeForce cards don’t natively support audio over HDMI; the audio cable is a workaround. A manual and a driver/utility CD are also included. Gigabyte’s Gamer HUD Lite utility is on the CD and can also be downloaded from the Gigabyte Web site.

The only difference between the two models' bundles is that the GV-N96TZL-1GI comes with a DVI-to-VGA dongle, while the GV-N96TSL-1GI includes a DVI-to-HDMI dongle. This emphasizes that the silent card is aimed at HTPC users, who are more likely to need another HDMI connection than another VGA one.


Don Woligroski is a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.


Mottamort I was rather disappointed with this article. Not with the article itself, but with the slightly misleading title/intro. When clicking the article I thought I was going to find a massive battle between these vendors on different tiers; instead you show us different instances of two slightly different cards of the same type from one vendor... if that makes sense.

I mean you have Gigabyte vs Gigabyte in the 9600gt section, Asus vs Asus in the 250 section and so on.

:-/

dragonsprayer Great article!

I wish it had more cards; I think you need four parts. Try some back cards like the 4870x2 darkknight? Good stuff as always!

thx!

crisisavatar Wow, how is the GTS 250 performing so close to the GTX 260? Wasn't the GTX 260 20% faster?

enterco It's not clear to me why you are comparing the '3DMark score' when you should post the 'GPU score'. It's a graphics card comparison, not a platform comparison.

randomizer Quoting enterco: "It's not clear to me why you are comparing the '3DMark score' when you should post the 'GPU score'. It's a graphics card comparison, not a platform comparison." Nothing but the cards is changed, so you're not comparing platforms.

acasel We can't clearly see the bang-for-the-buck card there if we aren't seeing some ATI cards like the 4770 and others...

The drop-down menu sure is fast... :-)

enterco Quoting randomizer: "Nothing but the cards is changed, so you're not comparing platforms." Sure. All the more reason to show the GPU score. The 3DMark score is too heavily influenced by CPU power, and it's no longer as relevant as it once was...

By using a quad core and a low-performing GPU you can achieve the same 3DMark score as with a dual core combined with a considerably stronger GPU; 3DMark Vantage gives too much credit to the CPU. But the overall FPS in games is often higher in the second case: dual core + better GPU.

marraco A recent review showed the 260 neck and neck with the 4870; in both price and performance, those cards are at the same point.

Since my 8800GT should be between the 9600 and the 250, I guess that the best upgrade path is to buy a second 8800GT, reaching probably 260/4870 performance.

I searched the web for 8800GT SLI benchmarks running on an i7 920, but didn't find a single review...

I think that Tom's Hardware should review older cards such as the 8800 and the ATI equivalents in CrossFire/SLI, since for many users it might make more sense to buy a second card than to upgrade to a 260/4870.

Older reviews of those cards did not account for scalability on the i7 X58 platform, and ATI and Nvidia probably spent more time tweaking drivers for newer cards, so maybe the 8800GT does not perform as well today (SLI on Core 2/Quad did not work very well in the past).