GeForce GTX 750 Ti Review: Maxwell Adds Performance Using Less Power

Introducing The GM107 GPU, Based On Maxwell

These days, gamers like their graphics cards beefy. Double-slot coolers and fancy fan shrouds are typically what elicit Tim Allen-style grunts and knowing nods of approval. After all, high frame rates require complex GPUs. Billions of transistors cranking away at Battlefield 4 get hot. And all of that heat needs to go somewhere.

So if you're coming to the table with a short, naked PCB, it'd better have a trick or two up its figurative sleeve.

Yet, Nvidia, perhaps trying to prove a point, shipped out its reference GeForce GTX 750 Ti on a less than six-inch board. With no auxiliary power connector. Sporting a little bolted-on orb-style heat sink and fan. It's pretty much the same size as GeForce GTX 650 Ti. But without the big cooler, GTX 750 Ti is daintier than even a lot of sound cards we've tested.

Nevertheless, Nvidia claims that its first Maxwell architecture-based product targets gaming at 1920x1080 in the latest titles using some pretty demanding settings. Could this be the graphics world’s Prius?

Maxwell In The Middle

Maxwell’s story is intriguing, partly because of what it means to the company’s design approach moving forward, but also because Nvidia is keeping more architectural details to itself than usual. Let’s start with the design.

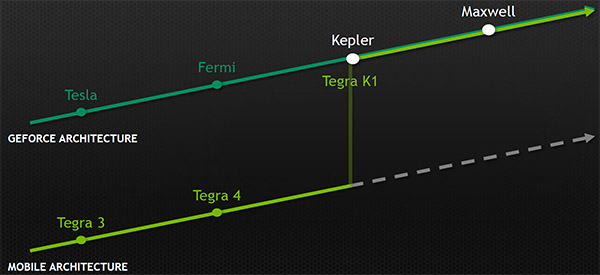

Back in December of last year, we were in Santa Clara learning about Nvidia’s Tegra K1 SoC. We already knew that K1’s graphics engine was Kepler-based, essentially a single SMX with notable changes to the structures connecting various subsystems in a bid to optimize for power. But Jonah Alben, senior vice president of GPU engineering, also made it clear that every new architecture, from Maxwell onward, would be built with mobile in mind. Engineers would optimize the fabrics between GPU components based on performance targets and power budgets. However, the fundamental building blocks would stay common between segments, and efficiency would guide the important decisions.

Tegra: Whence It Came


The impetus for Maxwell comes from Nvidia's effort in the smartphone and tablet space. Read Nvidia Tegra K1 In-Depth: The Power Of An Xbox In A Mobile SoC? to learn more about that architecture.

This is clearly good news for the Tegra family, which continues clawing around for a more meaningful slice of market share. K1-based devices aren't even here yet and we're already thinking about Nvidia's claim that Maxwell offers two times the performance-per-watt of Kepler, and what such sizable improvements could mean to mobile gaming.

A renewed emphasis on efficiency should be good on the desktop too, though, provided the company's retooled architecture continues scaling up from single- to double- and triple-digit power ceilings.

Fortunately, you won't have to wait long for an answer. The GeForce GTX 750 Ti launching today should demonstrate what Maxwell can do (at least at a 60 W TDP). Nvidia says its more effective design pulls power consumption way down and nudges performance up, even in a GPU featuring fewer CUDA cores. Knowing that it wouldn’t have a new process technology node to lean on, Nvidia had to make its improvements to Maxwell with 28 nm manufacturing in mind. In other words, it needed to get its GPUs working smarter, since simply tacking on more resources wouldn’t be an option.

The Maxwell Streaming Multiprocessor

Company representatives tell us that Maxwell’s biggest gains come from a redesign of the Streaming Multiprocessor, now abbreviated as SMM.

In Kepler, each SMX plays host to 192 CUDA cores, four warp schedulers, and a 256 KB register file. There’s also 64 KB serving as shared memory and L1 cache, a separate texture cache, and a uniform cache, plus 16 texture units. The big jump in CUDA core count and control logic helped Nvidia overcome losing Fermi’s doubled shader frequency. But the SMX apparently proved difficult to fully utilize in this configuration.

Maxwell attempts to address that by partitioning the multiprocessor into four blocks, each with its own instruction buffer, warp scheduler, and pair of dispatch units. Kepler’s 256 KB register file now gets split into four 64 KB slices. And the blocks have 32 CUDA cores each, totaling 128 across the SMM (down from Kepler’s 192). The previous architecture’s 32 load/store and 32 special function units carry over to Maxwell. However, double-precision math is further pared back to 1/32 the rate of FP32; that was 1/24 in the mainstream Kepler-based GPUs.

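GM107 SMM (Left) Versus GK106 SMX (Right)

| Per SM | GM107 | GK106 | Ratio |
|---|---|---|---|
| CUDA Cores | 128 | 192 | 2/3x |
| Special Function Units | 32 | 32 | 1x |
| Load/Store | 32 | 32 | 1x |
| Texture Units | 8 | 16 | 1/2x |
| Warp Schedulers | 4 | 4 | 1x |
| Geometry Engines | 1 | 1 | 1x |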

Every pair of blocks is tied to a 12 KB texture and L1 cache, adding up to 24 KB per SMM. Block pairs are also associated with four texture units, meaning SMMs come armed with eight. That’s half as many texture units compared to Kepler’s SMX. And the table above makes it look like GM107 actually gives up some ground to GK106. But don’t freak out about bottlenecks quite yet. Remember, the architecture is supposed to get more done using fewer resources.

Lastly, there’s a 64 KB shared memory space for the SMM, which carries over from Fermi and then Kepler, but is no longer called out as L1 cache for compute tasks. It used to be that this space could be configured as 48 KB of shared space and 16 KB of L1, or vice versa. Now that's not necessary, so all 64 KB is used as a shared address space for GPU compute.

As you might imagine, cutting 64 CUDA cores and eight texture units from the SMM means that each building block consumes significantly less die area. Meanwhile, Nvidia claims that it’s able to hold onto ~90% of the multiprocessor’s performance by keeping cores busy in a sustained way. If you’re contemplating what that might mean to a tablet, you’re not alone. But in a desktop application, Nvidia’s simply able to pack more SMMs into a set amount of space. The GeForce GTX 650 Ti this card replaces employed four SMX blocks, while GeForce GTX 750 Ti incorporates five SMMs.
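
To make the trade-off concrete, here is a rough back-of-the-envelope sketch in Python. It takes Nvidia's ~90% claim at face value, treats it as an exact 0.90, and ignores clock-rate differences between the two cards, so the results are illustrative rather than measured. The function and variable names are hypothetical.

```python
# Per-SM figures quoted in the article.
KEPLER_SMX_CORES = 192
MAXWELL_SMM_CORES = 128
# Assumption: Nvidia's "~90%" claim treated as exactly 0.90 of one SMX.
SMM_RELATIVE_PERF = 0.90


def sm_summary(sm_count, cores_per_sm, perf_per_sm):
    """Return (total CUDA cores, throughput in SMX-equivalents).

    Clock speeds are deliberately ignored; this only scales the
    per-multiprocessor figures by the number of multiprocessors.
    """
    return sm_count * cores_per_sm, sm_count * perf_per_sm


gtx_650_ti = sm_summary(4, KEPLER_SMX_CORES, 1.0)
gtx_750_ti = sm_summary(5, MAXWELL_SMM_CORES, SMM_RELATIVE_PERF)

# Implied per-core throughput gain: 90% of the work from 2/3 of the cores.
per_core_gain = SMM_RELATIVE_PERF / (MAXWELL_SMM_CORES / KEPLER_SMX_CORES)

print(f"GTX 650 Ti: {gtx_650_ti[0]} cores, {gtx_650_ti[1]:.2f} SMX-equivalents")
print(f"GTX 750 Ti: {gtx_750_ti[0]} cores, {gtx_750_ti[1]:.2f} SMX-equivalents")
print(f"Implied per-core gain: {per_core_gain:.2f}x")
```

Under those assumptions, five SMMs land at roughly 4.5 SMX-equivalents against the GTX 650 Ti's four, despite having 128 fewer CUDA cores in total.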

Constructing GM107

This is the first time we’ve seen Nvidia introduce a new architecture on a decidedly mid-range graphics card. With Fermi, it was the full-force GF100. Even the Kepler-based GK104 was an impressively fast way to meet that architecture. So, the messaging is quite a bit different with GM107 leading the charge. Of course, that’s because GeForce GTX 750 Ti has to slot into a portfolio still dominated by Kepler, rather than simply ascending a throne.

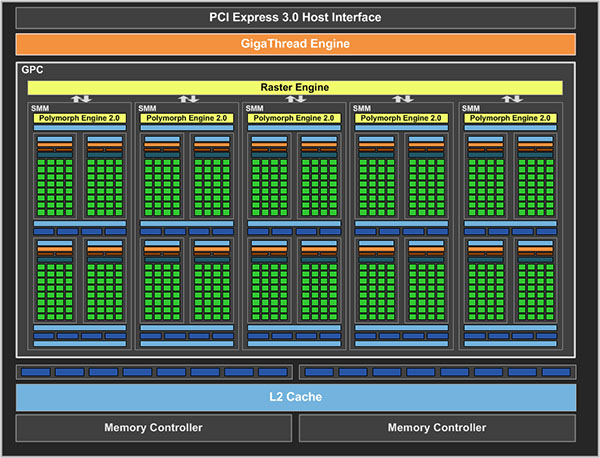

And it does so using a fully enabled implementation of GM107, composed of five SMMs in a single Graphics Processing Cluster with its own Raster Engine. GM107 can set up one visible primitive per clock cycle, which is just behind GK106's primitive rate of 1.25 prim/clock and double GK107's 0.5 prim/clock.
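
Per-clock primitive rates only tell part of the story, because the three GPUs run at different frequencies. The sketch below multiplies each rate by the base core clock listed in this article's specification table (GTX 750 Ti for GM107, GTX 650 Ti for GK106, GTX 650 for GK107). Pairing each GPU with that particular card, and ignoring GPU Boost, are assumptions made for illustration; these are theoretical peaks, not measurements.

```python
def peak_primitive_rate_mprims(prims_per_clock, core_clock_mhz):
    """Theoretical peak setup rate in millions of primitives per second."""
    return prims_per_clock * core_clock_mhz


# (primitives per clock, base core clock in MHz) as quoted in the article.
gpus = {
    "GM107 (GTX 750 Ti)": (1.0, 1020),
    "GK106 (GTX 650 Ti)": (1.25, 925),
    "GK107 (GTX 650)": (0.5, 1058),
}

rates = {
    name: peak_primitive_rate_mprims(ppc, mhz)
    for name, (ppc, mhz) in gpus.items()
}

for name, rate in rates.items():
    print(f"{name}: {rate:.2f} Mprims/s")
```

With base clocks factored in, GM107's higher frequency narrows the per-clock gap to GK106 somewhat, though GK106 still comes out ahead on paper.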

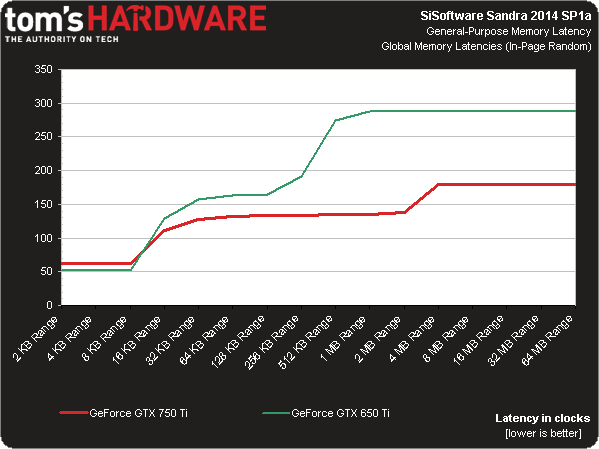

As in prior Nvidia architectures, ROP partitions and L2 cache slices are aligned. And like the GeForce GTX 650 Ti’s GK106 processor, GM107 sports two partitions with eight units each, giving you up to 16 32-bit integer pixels per clock. Where the two GPUs really diverge is their L2 cache capacity. In GK106, you were looking at 128 KB per slice, adding up to 256 KB in an implementation with two ROP partitions. GM107 appears to wield 1 MB per slice, yielding 2 MB of memory used for servicing load, store, and texture requests. According to Nvidia, this translates to a significant load shifted away from the external memory system, along with notable power savings.

Going easy on memory bandwidth is smart, since GM107 exposes a pair of 64-bit memory controllers to which 1 or 2 GB of 1350 MHz GDDR5 DRAM is attached. Peak throughput is, interestingly, exactly what we got from GeForce GTX 650 Ti: 86.4 GB/s. The memory is feeding fewer CUDA cores, but they’re managed more efficiently. So, the big L2 is supposed to play an instrumental role in preventing a bottleneck.
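
The 86.4 GB/s figure follows directly from the bus width and memory clock. A minimal Python sketch of that arithmetic is below; it assumes the quoted GDDR5 clock corresponds to four data transfers per pin per cycle (so 1350 MHz means 5.4 GT/s) and that 1 GB is 10^9 bytes. The function name is hypothetical.

```python
def gddr5_bandwidth_gbs(memory_clock_mhz, bus_width_bits):
    """Peak memory bandwidth in GB/s (1 GB = 1e9 bytes).

    Assumes GDDR5 moves four bits per pin per quoted clock cycle.
    """
    transfers_per_second = memory_clock_mhz * 1e6 * 4
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e9


# GM107: two 64-bit controllers form a 128-bit aggregate bus.
gm107_bus_bits = 2 * 64
gtx_750_ti_bw = gddr5_bandwidth_gbs(1350, gm107_bus_bits)

# The same formula applied to the GTX 650 (1250 MHz, 128-bit).
gtx_650_bw = gddr5_bandwidth_gbs(1250, 128)

print(f"GTX 750 Ti: {gtx_750_ti_bw:.1f} GB/s")
print(f"GTX 650:    {gtx_650_bw:.1f} GB/s")
```

Because GeForce GTX 650 Ti pairs the same 1350 MHz memory with the same 128-bit bus, the two cards end up with identical peak throughput.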

Indeed, a look at global in-page random cache latencies helps illustrate how Maxwell's memory hierarchy keeps the GPU busy more consistently.

Beyond the pieces of GM107 devoted to gaming and compute tasks, Nvidia also says it improved the fixed-function NVEnc block. That’s the bit of logic responsible for letting ShadowPlay encode your frag fest with minimal performance impact. It’s what enables streaming to the Shield. And it accelerates a few transcoding apps to get big movies onto your portable devices quickly. Whereas Kepler was capable of encoding H.264-based content ~4x faster than real-time, Maxwell purportedly achieves 6-8x real-time. H.264 decode performance is also said to be eight to 10 times quicker than it was before. Nvidia achieves those gains, it says, by simply speeding up the fixed-function blocks.

| | GeForce GTX 650 | GeForce GTX 650 Ti | GeForce GTX 750 Ti | GeForce GTX 660 |
|---|---|---|---|---|
| GPU | GK107 | GK106 | GM107 | GK106 |
| Architecture | Kepler | Kepler | Maxwell | Kepler |
| SMs | 2 | 4 | 5 | 5 |
| GPCs | 1 | 2 | 1 | 3 |
| Shader Cores | 384 | 768 | 640 | 960 |
| Texture Units | 32 | 64 | 40 | 80 |
| ROP Units | 16 | 16 | 16 | 24 |
| Process Node | 28 nm | 28 nm | 28 nm | 28 nm |
| Core/Boost Clock | 1058 MHz | 925 MHz | 1020 / 1085 MHz | 980 / 1033 MHz |
| Memory Clock | 1250 MHz | 1350 MHz | 1350 MHz | 1502 MHz |
| Memory Bus | 128-bit | 128-bit | 128-bit | 192-bit |
| Memory Bandwidth | 80 GB/s | 86.4 GB/s | 86.4 GB/s | 144.2 GB/s |
| Graphics RAM (GDDR5) | 1 or 2 GB | 1 or 2 GB | 1 or 2 GB | 2 GB |
| Power Connectors | 1 x 6-pin | 1 x 6-pin | None | 1 x 6-pin |
| Maximum TDP | 64 W | 110 W | 60 W | 140 W |
| Price | $130 (2 GB) | $150 (2 GB) | $150 (2 GB) | $190 (2 GB) |
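
The shader core and texture unit counts in the table can be derived from the SM counts and the per-multiprocessor figures given earlier (192 cores and 16 texture units per Kepler SMX, 128 and 8 per Maxwell SMM). The short Python sketch below runs that cross-check; the dictionary layout and function name are hypothetical, and the numbers are copied from this article.

```python
# (CUDA cores, texture units) per streaming multiprocessor.
PER_SM = {"Kepler": (192, 16), "Maxwell": (128, 8)}

# name: (architecture, SM count, listed shader cores, listed texture units)
cards = {
    "GeForce GTX 650": ("Kepler", 2, 384, 32),
    "GeForce GTX 650 Ti": ("Kepler", 4, 768, 64),
    "GeForce GTX 750 Ti": ("Maxwell", 5, 640, 40),
    "GeForce GTX 660": ("Kepler", 5, 960, 80),
}


def expected_units(architecture, sm_count):
    """Scale the per-SM resources by the number of SMs."""
    cores, tmus = PER_SM[architecture]
    return sm_count * cores, sm_count * tmus


mismatches = []
for name, (arch, sms, listed_cores, listed_tmus) in cards.items():
    if expected_units(arch, sms) != (listed_cores, listed_tmus):
        mismatches.append(name)

print("All rows consistent" if not mismatches else f"Check: {mismatches}")
```

It also highlights that GeForce GTX 750 Ti and GeForce GTX 660 both use five multiprocessors, yet the Maxwell card carries two-thirds the shader cores and half the texture units.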

All told, the GM107 GPU ends up with 1.87 billion transistors in a 148 mm² die. If you keep the comparison to GeForce GTX 650 Ti going, then the first Maxwell-based processor replaces GK106, which packs 2.54 billion transistors into a 221 mm² die. Before we get to our performance results, we have to assume Nvidia’s emphasis on efficiency is significant enough to let the company use fewer transistors on a smaller die, cut out a lot of CUDA cores and texture units, and still improve performance overall. At least, that’s what we’ll be looking for…

Alternatively, you can put GM107 up against the 1.3 billion-transistor, 118 mm² GK107, if your preference is a face-off of thermal ceilings. In that case, the Maxwell-based processor is more complex, larger, significantly faster, and yet it should still use less power.
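
Since all three GPUs are built at 28 nm, it can be instructive to compare how densely each one packs its transistors. The Python sketch below divides the transistor counts by the die areas quoted above; the names are hypothetical, and the output is only as precise as the rounded figures in this article.

```python
# name: (transistor count, die area in mm^2), as quoted in the article.
dies = {
    "GK107": (1.30e9, 118),
    "GK106": (2.54e9, 221),
    "GM107": (1.87e9, 148),
}


def density_mtr_per_mm2(transistors, area_mm2):
    """Transistor density in millions of transistors per square millimeter."""
    return transistors / area_mm2 / 1e6


densities = {
    name: density_mtr_per_mm2(count, area)
    for name, (count, area) in dies.items()
}

for name, value in densities.items():
    print(f"{name}: {value:.2f} MTr/mm^2")
```

By this rough measure, GM107 comes out somewhat denser than either Kepler part on the same process node, which would be consistent with its much larger L2 cache, though the article does not break down where the transistors go.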


meluvcookies on performance, I'll take the extra frames of the 265, but damn, for 60w, I'm totally impressed by this card. both the 750Ti and the R7 265 would be decent upgrades from my aging GTX460.

s3anister

> But without the big cooler, GTX 750 Ti is daintier than a lot of sound cards we've tested.

I'm pretty sure you meant to type "video cards" on page one there. Cheers.

Bloob Also:

> It’s difficult to make this story all about frame rates when we’re comparing a 60 W GPU to a 150 W processor

Is a bit confusing.

cangelini

> But without the big cooler, GTX 750 Ti is daintier than a lot of sound cards we've tested.
>
> I'm pretty sure you meant to type "video cards" on page one there. Cheers.

Actually meant sound card :) It's definitely smaller than a small video card, but I even have sound cards here that are larger.

Sangeet Khatri Well.. there is not a lot of performance in it, but I love it for a reason that it is a 60W card. I mean for 60W Nvidia has seriously nailed it. The only competition is way behind, the 7750 performs a lot less for similar wattage. Let's see how AMD replies to this because after the launch of 750Ti, the 7750 is no longer the best card for upgrading for people who have a 350W PSU. I don't generally say this, but Nvidia well done! Take a bow.

houldendub Nice little card, awesome! I feel like this would be an absolutely awesome test bed for a dual chip version, great performance with minimal power usage.

thdarkshadow The whole time I was reading the review I was like it isn't beating the 650ti boost... :( but then I remembered it uses less than half the power lol. I am impressed nvidia. While I make purchases more on performance than power consumption I can still appreciate what nvidia is doing

houldendub

> Anybody else notice the lesser shaders and TMUs on the Zotac card in GPU-Z?

Don't take this as fact, but the drivers look newer for the Zotac card than the others, possibly just a bug with the older drivers? The cards are advertised as having 640 shaders anyway.

Also weird, the GPU-Z screenshot is taken with Windows 8, whereas the Gigabyte and MSI cards are on Windows 7. The mystery continues...