Challenging FPS: Testing SLI And CrossFire Using Video Capture

What if the performance data you used for deciding which graphics card to buy was flawed? We're taking a deeper look at some of the problems with benchmarking multi-GPU configs using conventional tools. Nvidia's new FCAT suite helps us collect more info.

Results: Far Cry 3

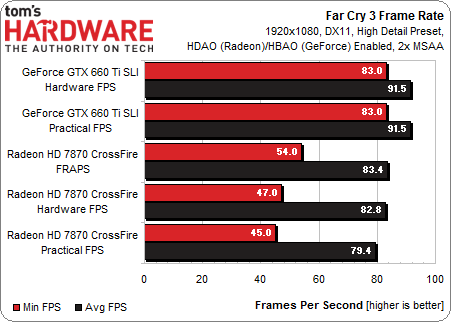

Performance-wise, these settings favor Nvidia's cards, which yield identical numbers for our actual and practical frame rates. The Radeon boards see a 3.4 FPS gap between those same two measurements. Correlating with Fraps gives us a result that, predictably, comes close to what the Radeon cards are actually rendering.
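To make the difference between the actual and practical frame rates concrete, here is a minimal Python sketch. It assumes each rendered frame is described by the number of scan lines it occupied in the captured video, with 0 standing for a dropped frame. The function name, the input layout, and the 21-scan-line runt cutoff are illustrative assumptions, not FCAT's own scripts or settings.

```python
def frame_rates(scanlines_per_frame, duration_s, runt_threshold=21):
    """Return (actual_fps, practical_fps) for one capture.

    scanlines_per_frame: scan lines each rendered frame occupied on screen;
        0 means the frame never reached the display (a dropped frame).
    duration_s: length of the capture in seconds.
    runt_threshold: frames shorter than this many scan lines count as runts
        (an assumed cutoff, chosen here only for illustration).
    """
    if duration_s <= 0:
        raise ValueError("duration_s must be positive")

    # Actual: every frame the card rendered, including runts and drops.
    actual = len(scanlines_per_frame)
    # Practical: only frames that took up a meaningful part of the screen.
    practical = sum(1 for n in scanlines_per_frame if n >= runt_threshold)

    return actual / duration_s, practical / duration_s


if __name__ == "__main__":
    # Hypothetical capture: eight rendered frames in 0.125 s,
    # one runt (10 scan lines) and one dropped frame (0 scan lines).
    capture = [540, 540, 10, 1070, 0, 1080, 540, 540]
    print(frame_rates(capture, 0.125))  # (64.0, 48.0)
```

When no frame falls below the cutoff, the two numbers come out identical, which is the pattern the Nvidia cards show here.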

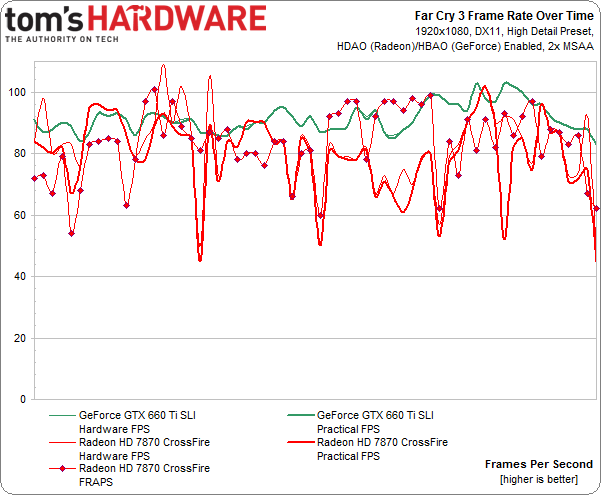

As before, we see spikes from what AMD's cards are actually rendering, which includes the dropped and runt frames.

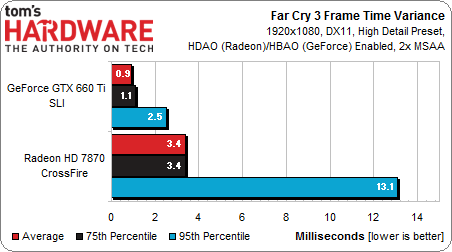

The spikiness from AMD's Radeon cards can be demonstrated by looking at frame time variance. At the 95th percentile, we can see that frames aren't being delivered as consistently. I did notice the game felt a little laggier, too.
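As a rough illustration of how a 95th-percentile figure like this can be derived, the sketch below treats frame time variance as the absolute difference between consecutive frame times and uses a nearest-rank percentile. Both choices, along with the function names and sample data, are assumptions made for the example rather than a description of the exact method behind the chart.

```python
import math


def frame_time_variance(frame_times_ms):
    """Absolute change in frame time from one frame to the next (ms)."""
    return [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of
    the samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


if __name__ == "__main__":
    # Hypothetical frame times in milliseconds, with one long frame
    # followed by a very short one in the middle of the run.
    frame_times = [16.0, 17.0, 16.0, 30.0, 5.0, 16.0, 17.0]
    deltas = frame_time_variance(frame_times)
    print(deltas)                  # [1.0, 1.0, 14.0, 25.0, 11.0, 1.0]
    print(percentile(deltas, 95))  # 25.0
```

A card that delivers frames at an even pace keeps this number low even at the 95th percentile; a long frame followed by a very short one pushes it up.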

Don Woligroski is a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

kajunchicken Hopefully someone besides Nvidia develops this technology. If no one does, Nvidia can charge whatever they want...

cleeve (replying to kajunchicken)

FCAT isn't for end users, it's for review sites. The tech is supplied by hardware manufacturers, Nvidia just makes the scripts. They gave them to us for testing.

cangelini (replying to kajunchicken) And actually, it'd be nice to see someone like Beepa incorporate the overlay functionality, taking Nvidia out of the equation.

cravin I wish there were an easy way to make my frame rates not dip and spike so much. A lot of times it can go up to like 120 FPS but then dips down to 60-70. It makes it look super choppy and ugly. I know I could limit it at 60 frames a second, but wouldn't that just be like vsync? Would that have input lag?

DarkMantle Good review, but honestly I wouldn't use a tool touched by Nvidia to test AMD hardware. Nvidia has a track record of crippling the competition's hardware every chance they have. Also, I was checking prices on Newegg and, to be honest, the HD7870 is much cheaper than the GTX660Ti. Why didn't you use the 7870LE (Tahiti core) for this test? The price is much closer.

The problem I have with the hardware you picked for this review is that, even though raw FPS is not the main idea behind the review, you are giving a tool to every troll on the net to say AMD hardware or drivers are crap. The idea behind the review is good though.

krneki_05 Vsync would only cut out the frames above 60 FPS, so you would still have the FPS drops. But 60 or 70 FPS is more than you need (unless you are using a 120 Hz monitor and you have super awesome eyes to see the difference between 60 and 120 FPS, which some do). No, the choppy feeling you have must be something else, not the frames.

BS Marketing Does it really matter? Over 60 FPS there will be screen tearing. So why this sudden fuss? I guess the Nvidia marketing engine is in full flow. The only explanation is that Nvidia is really scared now, trying everything in their power to deceive people.

rojodogg I enjoyed the article and it was very informative. I look forward to more testing of other GPUs in the future.

bystander Great article.

But as great as the review is, I feel one thing that review sites have dropped the ball on is the lack of v-sync comparisons. A lot of people play with v-sync, and while a 60hz monitor is going to limit what you can test, you could get a 120hz or 144hz monitor and see how they behave with v-sync on.

And the toughest thing of all, is how can microstutter be more accurately quantified. Not counting the runt frames gives a more accurate representation of FPS, but does not quantify microstutter that may be happening as a result.

It seems the more info we get, the more questions I have.

rene13cross @DarkMantle, exactly my thinking. I don't want to sound like a paranoid goof who thinks everything is a conspiracy but a test suite created by Nvidia to test AMD hardware doesn't sound like a very trustworthy test. I'm not saying that the results here are all false but Nvidia has had a slight history in the past with attempting to present the competition in an unfair light.