Early Verdict

The Intel SSD 750 400GB is the lowest-priced product in this new ultra-high-performance SSD category. The $400 price point brings the cost of entry down, but NVMe still has compatibility limitations that need to be considered. Workstation users will find this model a bargain compared to the 1.2TB model while enjoying nearly identical performance.

Pros

- Extremely high performance at a lower cost than the flagship SSD 750 1.2TB and Samsung SM951 512GB.

Cons

- At $1 per GB, the SSD 750 400GB is still expensive. NVMe boot compatibility is limited to the latest motherboards, and the 400GB capacity is more than 100GB smaller than that of the Samsung SM951 512GB.

Introduction

Native PCIe-based drives are to SSDs what SSDs are to hard disks. With a direct link to the CPU, PCIe-based storage further reduces latency, increases available throughput and gives enthusiasts the best experience money can buy. Unchained from the limitations of SATA, the products we're testing deliver up to 4x the performance of previous-gen SSDs that slammed into a ceiling at 6 Gb/s.
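As a rough illustration of where those interface ceilings come from, the sketch below estimates usable link bandwidth after line-encoding overhead. It assumes a PCIe 3.0 x4 link for the PCIe drive, which this article does not state; the function name is hypothetical, and protocol overhead beyond line encoding is ignored. These are link ceilings, not measured drive performance, so the ratio should not be read as the "up to 4x" drive figure.

```python
def usable_mb_per_s(gigatransfers_per_s: float, payload_bits: int,
                    line_bits: int, lanes: int = 1) -> float:
    """Approximate usable bandwidth in MB/s (1 MB = 10**6 bytes).

    Only line-encoding overhead is modeled; packet and protocol
    overhead are ignored, so real-world numbers will be lower.
    """
    bits_per_s = gigatransfers_per_s * 1e9 * payload_bits / line_bits * lanes
    return bits_per_s / 8 / 1e6


# SATA 6 Gb/s uses 8b/10b encoding: 8 payload bits per 10 line bits.
sata3 = usable_mb_per_s(6.0, 8, 10)

# Assumption: PCIe 3.0 (8 GT/s per lane, 128b/130b encoding), four lanes.
pcie3_x4 = usable_mb_per_s(8.0, 128, 130, lanes=4)

print(f"SATA 6 Gb/s ceiling:  {sata3:.0f} MB/s")
print(f"PCIe 3.0 x4 ceiling:  {pcie3_x4:.0f} MB/s")
print(f"Link ratio:           {pcie3_x4 / sata3:.1f}x")
```

Under these assumptions, the SATA link tops out at 600 MB/s, which is the ceiling that previous-generation SSDs ran into, while the assumed PCIe link leaves several times more headroom.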

PCIe storage isn't a new concept. In the past, solutions like OCZ's RevoDrive tapped the PCIe bus as well. Until recently, the golden example was actually from Fusion-io, which made one of the first native PCIe-to-flash controllers. The RevoDrive sought to serve up Fusion-io-like performance. But in order to keep costs low, it placed a PCIe-based SATA/SAS RAID controller in front of multiple SATA SSDs. It delivered high throughput for fast sequential reads and writes, but lacked Fusion-io's low latency for the quickest random data accesses.

Native PCIe is the key. You can think of the SSD controller as a bridge. In this case, the controller connects the CPU's PCIe connectivity to the NAND flash, which holds the data. All-in-one RAID products like the RevoDrive and G.Skill Phoenix Blade take PCIe to a RAID controller, and then through several SATA interfaces to the solid-state storage. The extra hops increase latency, add cost and reduce efficiency.

In order to remain focused, we're limiting the number of products compared today. Right now, Intel's SSD 750 series and Samsung's SM951 are in a unique position. This is the story that power users and enthusiasts have asked for, a showdown devoid of fluff and filler.

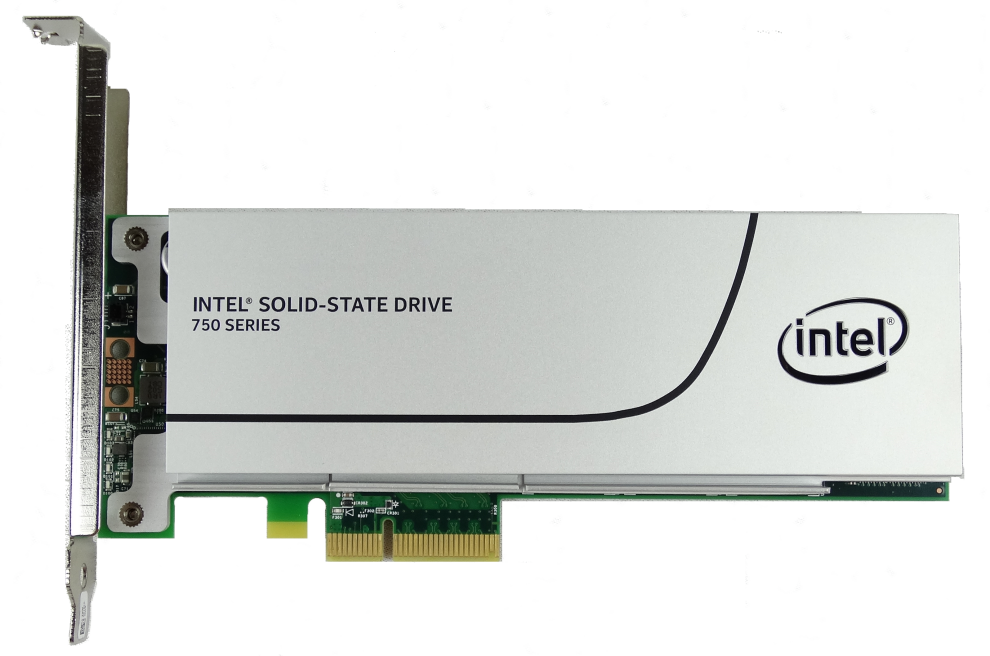

Intel recently released its SSD 750 series in two capacities. But the launch didn't go as smoothly as it could have. Reviewers tested the 1.2TB model, which sells for more than $1000. We called it The Extreme Edition of SSDs. If you need lots of capacity and can afford the high cost of procurement, then rest assured that Intel's big SSD 750 is the largest drive in this performance class. For the rest of us, Intel introduced a 400GB model priced just over $400. At over $1/GB, that's still nowhere near cheap.

The only direct competitor to Intel's native NVMe-capable SSD is Samsung's SM951 512GB, which used the AHCI standard when it was released, but will leverage NVMe in the near future. For now, the AHCI models in 128, 256 and 512GB capacities are all you can get. Despite the difference, we've been inundated by readers asking which of the two SSDs is better. Today we'll present an answer.

Chris Ramseyer was a senior contributing editor for Tom's Hardware. He tested and reviewed consumer storage.

BoredErica: Hey, I would love to see a new article about trace-based analysis of hard drive load. I've got some money to splurge on storage I don't strictly NEED, but actually I have no idea what to buy. Just a thought. :)

Arabian Knight: agaiiiin

How about testing the Intel 750 card in RAID 0?

Please add another card and try software RAID.

PaulBags: RE: Sequential Steady State, what is the percent scale in that graph? I assume one end is writes and one read, but which? Graph needs to be properly labeled.

PaulBags: Sequential steady state vs random steady state, switching between IOPS and MB/s, yay that's comparable. Bah, I'm done with this article. Bppppppt.

Blueberries: I'd take a 750 over the SM951 any day, you just can't beat the latency and random read/write performance. The SM951 is probably hands down the best SSD right now for the typical PC user, all things considered; having neck-and-neck or better performance at low queue depths and of course a much faster boot time.

Get the 750 if you're an enthusiast and you can afford it. Otherwise the SM951 is going to be the best performance you've experienced in your life.

Osiricat: Hi! Anything about temps? I read in earlier reviews that the SM951 warms up to 70-75ºC, and for some reason Intel added passive cooling over their memory chips!

CRamseyer: The industry standard is to measure random performance in IOPS and sequential performance in throughput. Why would you want to compare sequential IOPS to random IOPS or sequential throughput to random throughput?

The sequential steady state shows read percentage. 100% read to 0% read. It's mainly an enterprise test I imported a few years ago in my testing to see the bathtub curve of the devices under test.

As for RAID with these drives, I'm not sure if a RAID report is really needed. You can't boot from devices in Windows software RAID. If 5% of the market cares about these premium parts to start with, then RAID performance has to amount to 5 to 10% of those readers. I don't think there are enough readers to justify the time and expense for that level of testing. If Intel wants to provide the parts, I don't mind testing and writing the article.

Chris

PaulBags:

> CRamseyer said: The industry standard is to measure random performance in IOPS and sequential performance in throughput. Why would you want to compare sequential IOPS to random IOPS or sequential throughput to random throughput?
>
> ...
>
> Chris

So that I can see the difference? How much of a performance drop is there with random vs sequential? Yes, I don't get to decide how my data travels, but is random throughput significantly above the SATA 6Gb/s ceiling, or does interface not _really_ matter yet unless you have a lot of sequential reads/writes to do? Additionally, what kind of performance is there when there is mixed sequential and random; somewhere in between the two, or would comparing the two be completely irrelevant?

CRamseyer: Random performance is never higher than the limits of SATA 6Gbps unless you have a product like the Memblaze or P320H that can deliver full PCIe bandwidth random 4K reads and writes. Those are both enterprise products that cost more than a used Honda.

Measuring 4K data in throughput is like telling someone the length of a dollar bill in miles.
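For readers following the IOPS-versus-throughput exchange above, the two units are related by the transfer size: throughput is IOPS multiplied by bytes per operation. The sketch below shows that conversion for 4K transfers. The function names and the 100,000 IOPS figure are hypothetical, chosen only for illustration and not taken from this review; the 600 MB/s ceiling assumes SATA 6Gbps with 8b/10b encoding.

```python
BLOCK_4K = 4096            # bytes per 4K random transfer
SATA_CEILING_MB_S = 600.0  # assumed usable SATA 6Gbps ceiling (8b/10b encoding)


def iops_to_mb_per_s(iops: float, block_bytes: int = BLOCK_4K) -> float:
    """Convert operations per second to MB/s (1 MB = 10**6 bytes)."""
    return iops * block_bytes / 1e6


def mb_per_s_to_iops(mb_per_s: float, block_bytes: int = BLOCK_4K) -> float:
    """Convert MB/s to operations per second at a given transfer size."""
    return mb_per_s * 1e6 / block_bytes


# Hypothetical drive delivering 100,000 random 4K IOPS (not a measured result).
example_iops = 100_000
example_mb_s = iops_to_mb_per_s(example_iops)

# 4K IOPS a drive would need to sustain to fill the assumed SATA ceiling.
iops_to_fill_sata = mb_per_s_to_iops(SATA_CEILING_MB_S)

print(f"{example_iops} IOPS at 4K = {example_mb_s:.1f} MB/s")
print(f"4K IOPS needed to saturate SATA: {iops_to_fill_sata:.0f}")
```

Under these assumptions, a drive has to sustain well over 100,000 random 4K IOPS before the SATA link itself becomes the bottleneck, which is consistent with the point that random performance rarely exceeds the interface limit.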