Intel Cascade Lake Xeon Platinum 8280, 8268, and Gold 6230 Review: Taking The Fight to EPYC

Test Platforms and How We Test

The Roster

We're testing a wide range of Intel CPUs that we have on hand, along with the 32C/64T AMD EPYC 7601, all in dual-socket server configurations.

| Processor | Cores / Threads | Base / Boost (GHz) | L3 Cache | TDP | Memory Support | Price |
|---|---|---|---|---|---|---|
| Cascade Lake Platinum 8280 | 28 / 56 | 2.7 / 4.0 | 38.5MB | 205W | 6-Channel DDR4-2933 | $10,009 |
| AMD EPYC Naples 7601 | 32 / 64 | 2.2 / 3.2 | 64MB | 180W | 8-Channel DDR4-2666 | $4,500 |
| Cascade Lake Platinum 8268 | 24 / 48 | 2.9 / 3.9 | 35.75MB | 205W | 6-Channel DDR4-2933 | $6,302 |
| Cascade Lake Gold 6230 | 20 / 40 | 2.1 / 3.9 | 28MB | 125W | 6-Channel DDR4-2933 | $1,894 |
| Skylake-SP Platinum 8176 | 28 / 56 | 2.1 / 3.8 | 38.5MB | 165W | 6-Channel DDR4-2666 | $8,719 |
| Skylake-SP Gold 6152 | 22 / 44 | 2.1 / 3.7 | 30.25MB | 140W | 6-Channel DDR4-2666 | $3,661 |
| Skylake-SP Gold 6154 | 18 / 36 | 3.0 / 3.7 | 24.75MB | 200W | 6-Channel DDR4-2666 | $3,543 |
| Broadwell Xeon E5-2697 v4 | 18 / 36 | 2.3 / 3.6 | 45MB | 145W | 4-Channel DDR4-2400 | $2,706 |
| Haswell Xeon E5-2699 v3 | 18 / 36 | 2.3 / 3.6 | 45MB | 145W | 4-Channel DDR4-2133 | $4,115 |
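
One rough way to compare the roster is price per core. The sketch below is illustrative only: it copies the core counts and prices from the table above, and the `ROSTER` list and `price_per_core` helper are names made up for this example. It says nothing about performance, which the benchmarks on the following pages measure.

```python
# Cores and per-CPU prices (USD) copied from the roster table above.
# ROSTER and price_per_core are hypothetical names used only for this example.
ROSTER = [
    ("Cascade Lake Platinum 8280", 28, 10009),
    ("AMD EPYC Naples 7601", 32, 4500),
    ("Cascade Lake Platinum 8268", 24, 6302),
    ("Cascade Lake Gold 6230", 20, 1894),
    ("Skylake-SP Platinum 8176", 28, 8719),
    ("Skylake-SP Gold 6152", 22, 3661),
    ("Skylake-SP Gold 6154", 18, 3543),
    ("Broadwell Xeon E5-2697 v4", 18, 2706),
    ("Haswell Xeon E5-2699 v3", 18, 4115),
]


def price_per_core(cores: int, price_usd: float) -> float:
    """Return the price in USD divided by the physical core count."""
    return price_usd / cores


# Print the roster sorted from cheapest to most expensive per core.
for name, cores, price in sorted(ROSTER, key=lambda r: price_per_core(r[1], r[2])):
    print(f"{name:<28} ${price_per_core(cores, price):7.2f} per core")
```

By this measure the Gold 6230 is the cheapest chip in the roster at roughly $95 per core, the EPYC 7601 lands at about $141, and the Platinum 8280 tops the list at about $357.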

The Test Platforms

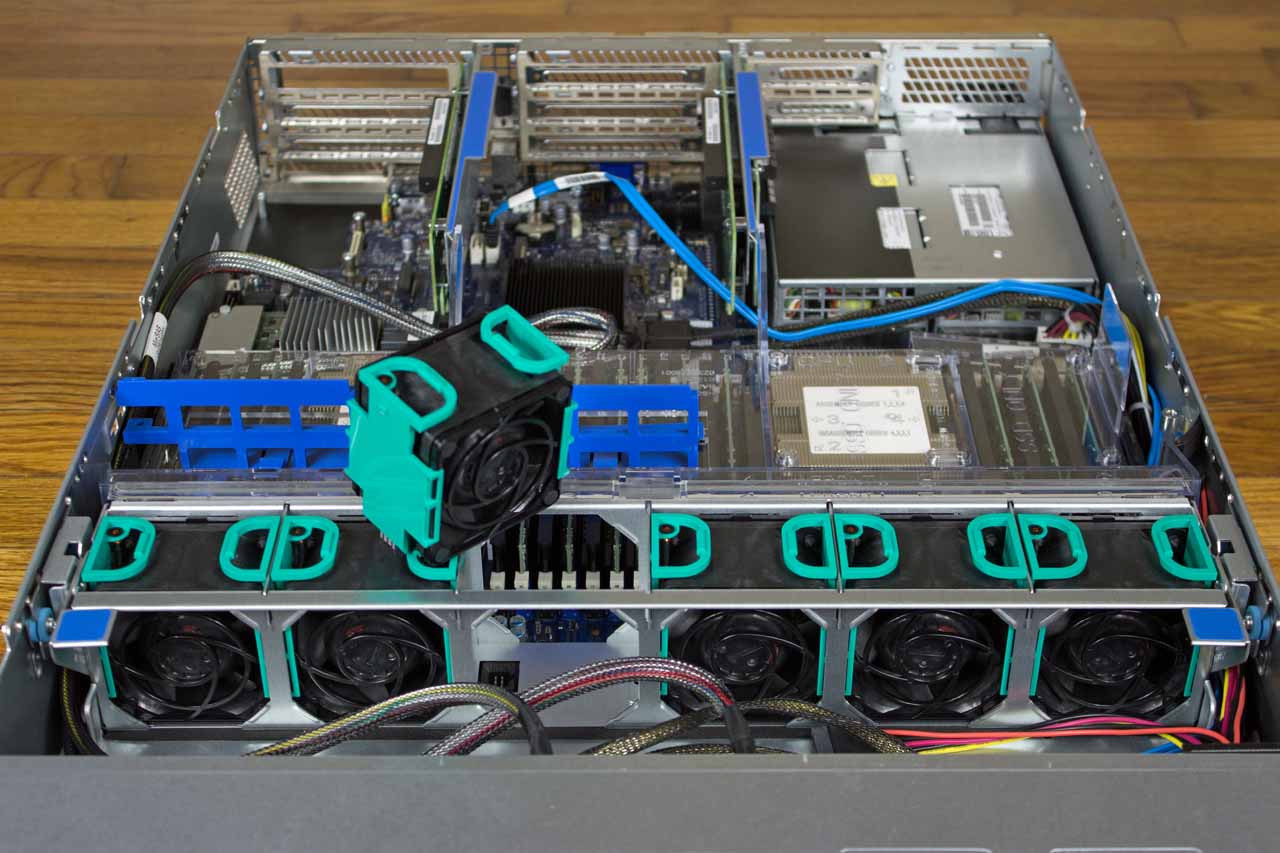

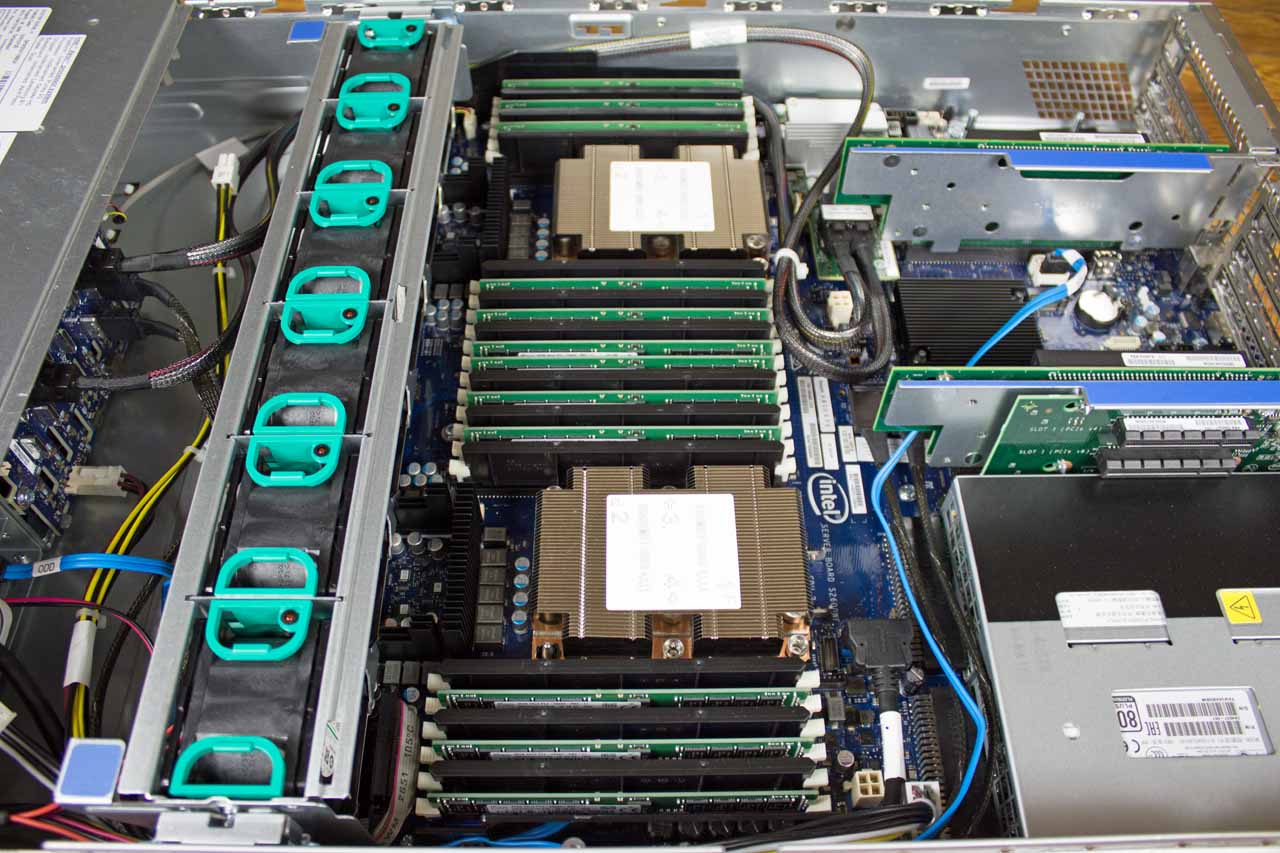

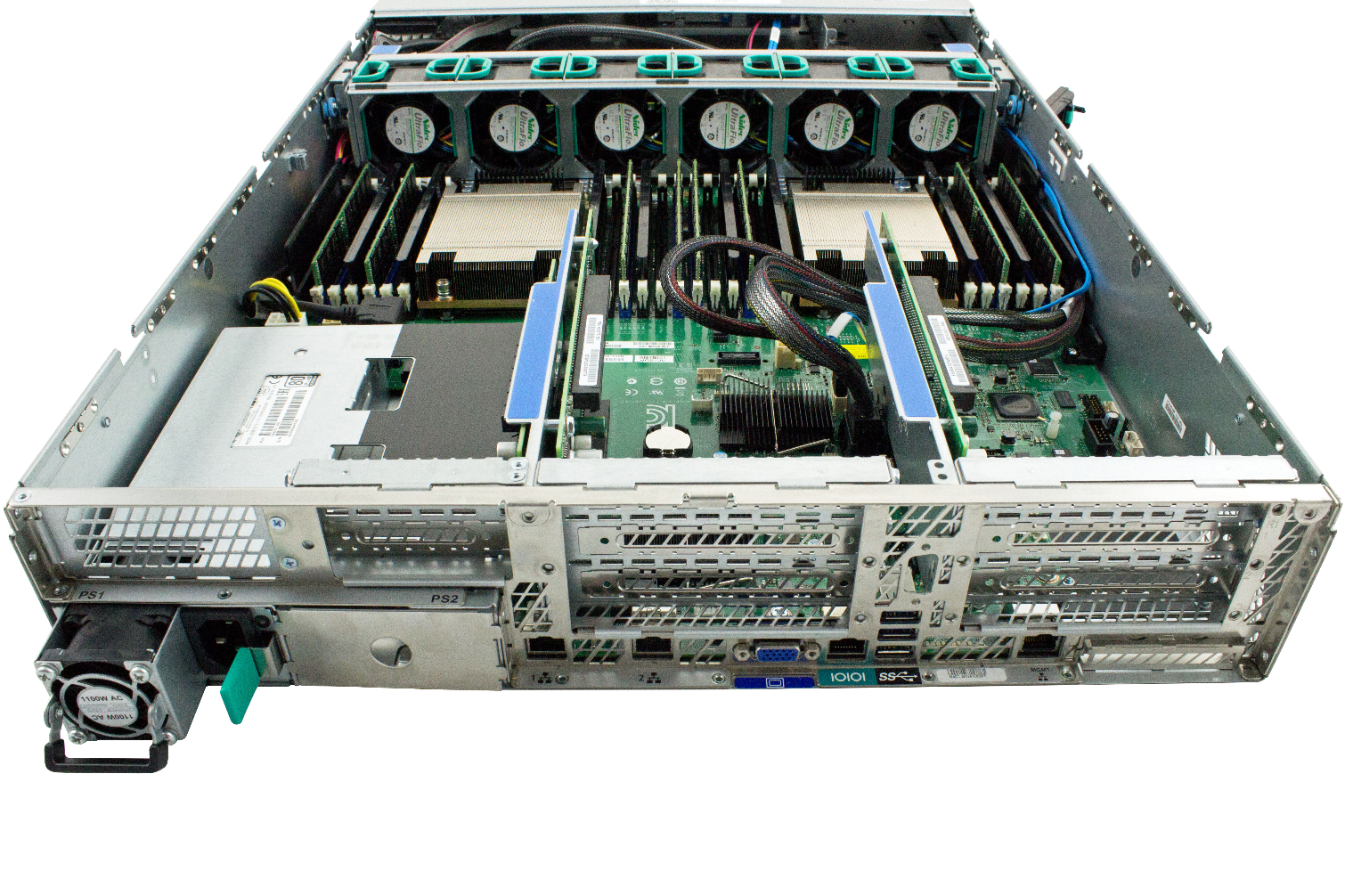

Intel Purley S2P2SY3Q Server

For our first-gen Xeon Scalable review, Intel sent a Server System S2P2SY3Q platform powered by a dual-socket Intel Server Board 2600WF. We pressed this machine back into service to test the second-gen CPUs, highlighting that these chips are backward-compatible with previous-gen LGA 3647-equipped servers.

This system originally came equipped with 12x 32GB SK hynix DDR4-2666 DIMMs, which we swapped out for 12x 32GB SK hynix DDR4-2933 DIMMs to tap into the second-gen Cascade Lake design's increased memory bandwidth. We tested all first- and second-gen Xeon Scalable processors (Skylake and Cascade Lake) with these modules, which clocked down to DDR4-2666 on the Skylake chips.
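
To put the memory swap in perspective, here is a back-of-the-envelope calculation of theoretical peak bandwidth per socket. It assumes the standard 64-bit (8-byte) DDR4 channel width and the six channels listed in the roster table. These are ceiling figures derived from the spec, not measurements from our testing, and the function name is made up for this sketch.

```python
BYTES_PER_TRANSFER = 8  # one DDR4 channel has a 64-bit (8-byte) data bus


def peak_bandwidth_gbs(channels: int, mega_transfers_per_s: int) -> float:
    """Theoretical peak bandwidth per socket in GB/s (1 GB = 10**9 bytes)."""
    # channels * MT/s * bytes per transfer gives MB/s; divide by 1000 for GB/s.
    return channels * mega_transfers_per_s * BYTES_PER_TRANSFER / 1000


cascade_lake = peak_bandwidth_gbs(6, 2933)  # six channels of DDR4-2933
skylake = peak_bandwidth_gbs(6, 2666)       # same DIMMs clocked down to DDR4-2666

print(f"Cascade Lake: {cascade_lake:.1f} GB/s per socket")
print(f"Skylake-SP:   {skylake:.1f} GB/s per socket")
print(f"Uplift:       {(cascade_lake / skylake - 1) * 100:.1f}%")
```

On paper that works out to roughly 140.8 GB/s versus 128.0 GB/s per socket, or about a 10% increase in peak bandwidth from the faster memory speed alone.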

The Software Development Platform includes two redundant 80 PLUS 1100W power supplies. The PSUs, like the fans, are hot-pluggable to avoid downtime issues due to component failure.

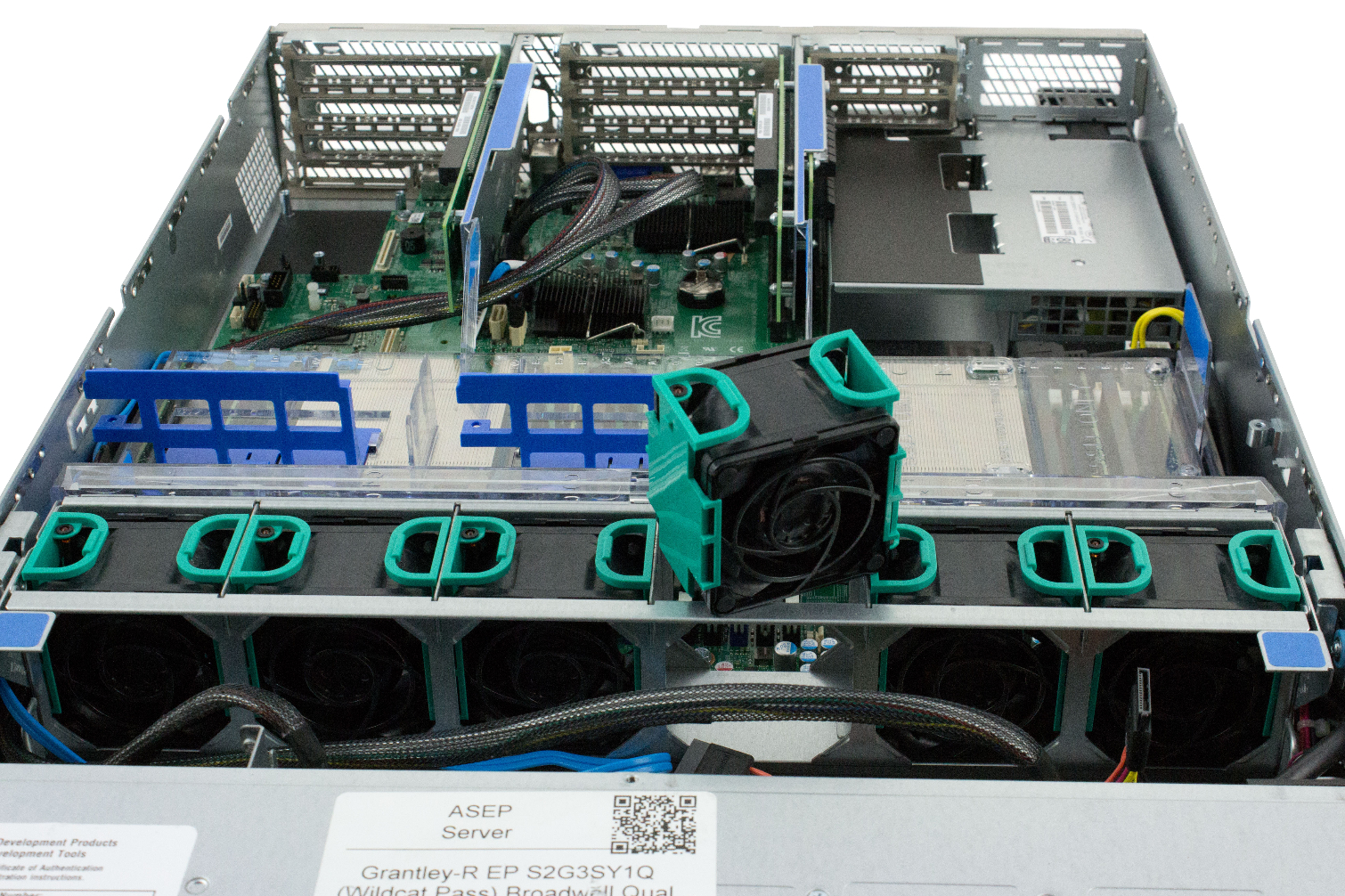

Intel Wildcat Pass S2G3SY1Q Server

We tested the Broadwell-EP-based Xeon E5-2697 v4 and the Haswell-EP-based Xeon E5-2699 v3 on an Intel Software Development Platform server. The pre-production Grantley-R EP S2G3SY1Q (Wildcat Pass) Broadwell Qualification 2U test bed originally came with two Xeon E5-2697 v4 CPUs sporting 18 Hyper-Threaded cores and 45MB of shared cache apiece.

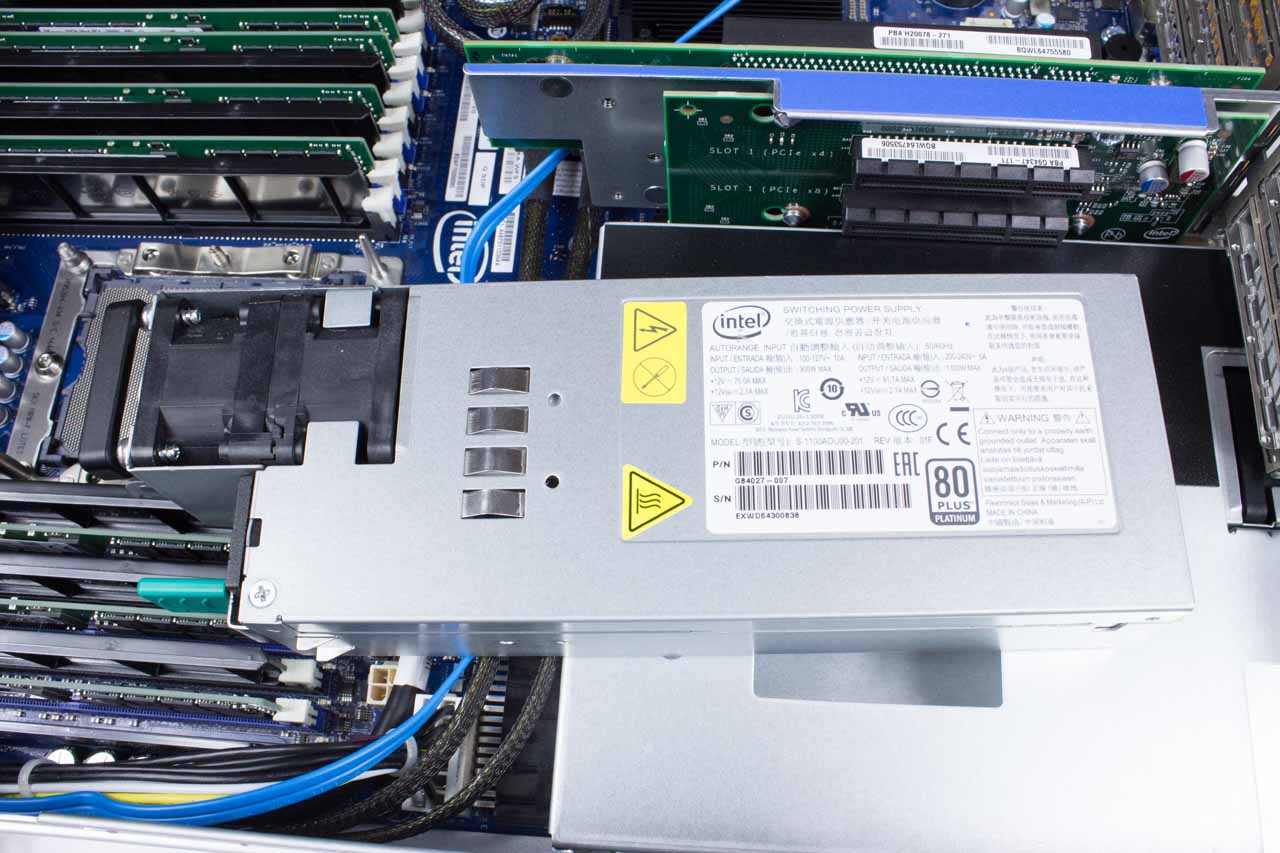

The test platform features Intel's C610 chipset and includes eight 32GB SK hynix DDR4-2400 DIMMs (HMA84GL7AMR4N-UH). Intel provided this server for use as a software development platform; it's not designed for use in a production environment. As such, it lacks some of the features that facilitate redundancy, such as dual PSUs. One of the PSU bays is covered, while the other houses a single 900W power supply.

AMD Speedway-AMG-16 Server

We tested the EPYC Naples 7601 CPUs, delivering a combined 64 cores and 128 threads, on the Speedway-AMG-16 server. This system came with 16x 16GB Samsung DDR4-2666 DIMMs, totaling 256GB of capacity. It also included redundant hot-swappable 1200W power supplies and four hot-swappable fans.
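
The three platforms do not carry the same amount of memory, which is worth keeping in mind when comparing results. The sketch below tallies the DIMM configurations described above. The DIMMs-per-channel figure is an assumption: it presumes the modules are spread evenly across both sockets and every channel listed in the roster table, which the platform descriptions do not state explicitly. All names in the code are made up for this example.

```python
SOCKETS = 2  # every test platform is a dual-socket server

# (platform, DIMM count, GB per DIMM, memory channels per socket)
PLATFORMS = [
    ("Intel S2P2SY3Q (Xeon Scalable)", 12, 32, 6),
    ("Intel S2G3SY1Q (Xeon E5 v3/v4)", 8, 32, 4),
    ("AMD Speedway-AMG-16 (EPYC 7601)", 16, 16, 8),
]


def total_capacity_gb(dimm_count: int, gb_per_dimm: int) -> int:
    """Total installed memory in GB."""
    return dimm_count * gb_per_dimm


def dimms_per_channel(dimm_count: int, channels_per_socket: int,
                      sockets: int = SOCKETS) -> float:
    """DIMMs per channel, ASSUMING an even spread across all channels."""
    return dimm_count / (channels_per_socket * sockets)


for name, count, size_gb, channels in PLATFORMS:
    capacity = total_capacity_gb(count, size_gb)
    dpc = dimms_per_channel(count, channels)
    print(f"{name:<32} {capacity:>4} GB total, {dpc:.0f} DIMM(s) per channel")
```

Under that assumption every platform runs one DIMM per channel, but the Xeon Scalable server carries 384GB of memory while the other two carry 256GB each.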

How We Test

We benchmarked the servers with the open source Linux-Bench script, which is available on Linux-Bench.com and GitHub. ServeTheHome and others in the open source community maintain it. We tested with Ubuntu Server 18.04.2 LTS. The script installs dependencies and runs several well-known independent open source benchmarks in a Docker container. The test suite also leverages the standard GCC compiler, which is used across AMD, Intel, ARM, and Power architectures. Naturally, AMD's and Intel's own compilers can, in many cases, unlock more performance on their respective architectures.

Most enterprise deployments are built for specific needs and workloads, and as tempting as application testing is, there are far too many variables to make the results applicable to all but a small subset of users. The benchmarks in this article encompass several industry-standard tools that quantify performance trends. Bear in mind, though, that deployments optimized for a specific workload could unlock even more performance.

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

Murissokah In the first page there's a paragraph stating "Like the previous-gen Xeon Scalable processors, Intel's Cascade Lake models drop into an LGA 4637 interface (Socket P) on platforms with C610 (Lewisburg) platform controller hubs, and the processors are compatible with existing server boards."

Wouldn't that be LGA 3647? And shouldn't it read C620-family chipset for Lewisburg?

JamesSneed It just hit me how big of a deal EPYC will be. AMD is already pulling lower power numbers with the 32 core 7601 however AMD will have a 64 core version with Zen2 and has stated the power draw will be about the same. If the power draw truly is the same, AMD's 64 core Zen2 parts will be pulling less power than Intel's 28 core 8280. That is rather insane.

Amdlova lol 16x16gb 2666 for amd

8x32gb intel 2400

12x32gb intel 2933

How to increase power consumption to another level. add another 30w in memory for the amd server and its done.

Mpablo87 The promise or reality

Cascade Lake Xeons employ the same microarchitecture as their predecessors, but, we can hope, it is promising!)

The Doodle Slight problem this author fails to tell you in this article and that's OEM's can only buy the Platinum 9000 processor pre-mounted on Intel's motherboards. That's correct. None of the OEM's are likely to ship a solution based on this beast because of this fact. So at best you will be able to buy it from an Intel reseller. So forget seeing it from Dell, HPE, Lenovo, Supermicro and others. You're in white box territory.

Besides, why would they? A 64C 225W AMD Rome will run rings around this space heater and cost a whole lot less.