The Intel Z68 Express Review: A Real Enthusiast Chipset

SSD Caching Benchmarks: PCMark Vantage

By default, Intel uses a write-through strategy to keep data synchronized between the SSD and the hard drive. If you lose power or experience an SSD failure, no data is lost. This is what Intel calls Enhanced mode in its RST software. When you write to your hard drive, you write simultaneously to the SSD.

In comparison, Maximized mode gets a performance benefit from write-back caching. However, this involves synchronizing data between the SSD and the hard drive at intervals rather than on every write. If you choose that caching policy, a power loss means that any information not yet synchronized can be compromised. Intel claims that a pre-boot recovery process will automatically restore the correct cache state, and that the risk is similar to a mechanical hard disk running with its internal write cache enabled.
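To make the difference between the two policies concrete, here is a minimal Python sketch. It is a toy model, not Intel's implementation: the `CachedDisk` class, its method names, and the use of dictionaries to stand in for the SSD and hard drive are all assumptions made for illustration. Only the Enhanced (write-through) and Maximized (write-back) behavior it imitates comes from the description above.

```python
class CachedDisk:
    """Toy model of an SSD cache in front of a hard drive (both are dicts)."""

    def __init__(self, write_back=False):
        self.write_back = write_back
        self.ssd = {}        # cache contents: block -> data
        self.hdd = {}        # backing store: block -> data
        self.dirty = set()   # blocks on the SSD not yet copied to the HDD

    def write(self, block, data):
        self.ssd[block] = data
        if self.write_back:
            self.dirty.add(block)      # the HDD copy is updated later
        else:
            self.hdd[block] = data     # write-through: both copies updated now

    def flush(self):
        """Periodic synchronization used by the write-back policy."""
        for block in sorted(self.dirty):
            self.hdd[block] = self.ssd[block]
        self.dirty.clear()

    def unsynced_blocks(self):
        """Blocks whose HDD copy would be stale if power failed right now."""
        return sorted(self.dirty)


enhanced = CachedDisk(write_back=False)   # stands in for Enhanced mode
maximized = CachedDisk(write_back=True)   # stands in for Maximized mode
for disk in (enhanced, maximized):
    disk.write(0, "boot data")
    disk.write(1, "user file")

print(enhanced.unsynced_blocks())   # []
print(maximized.unsynced_blocks())  # [0, 1]
maximized.flush()
print(maximized.unsynced_blocks())  # []
```

In this model, the window between a write and the next `flush()` is where the write-back policy puts data at risk, and it is also where its speed advantage comes from, since the write does not wait on the slower drive.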

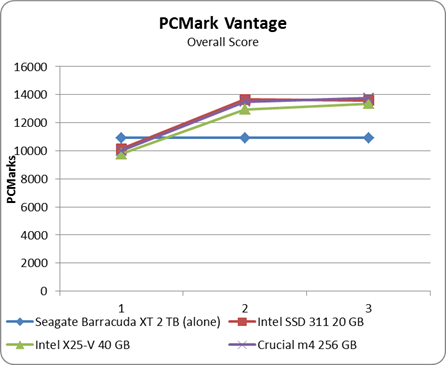

You'll notice that we're testing with three different SSDs. The idea, of course, is to figure out how much performance you need from your solid-state device to get maximum benefit from Smart Response Technology.

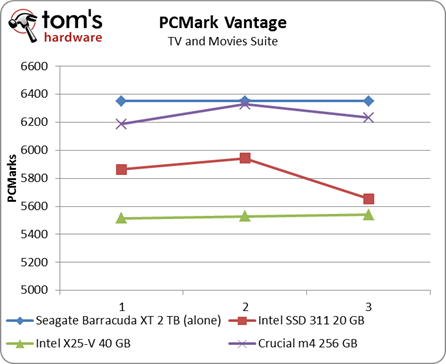

PCMark Vantage paints a rosy picture of better performance with SSD caching enabled. But once you look at the individual test suites, you understand why. Remember that Intel's algorithm is "smart." It deliberately tries to avoid caching large chunks of data read sequentially, assuming that sort of usage pattern is only going to be touched once by the user. That's our explanation as to why caching doesn't help improve performance in the TV and Movies suite.
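Intel does not publish the details of its caching algorithm, so the following Python sketch only illustrates the general idea of skipping long sequential reads. The function name `blocks_to_cache`, the `SEQUENTIAL_LIMIT` threshold of eight blocks, and the block numbers in the example are all hypothetical.

```python
SEQUENTIAL_LIMIT = 8  # hypothetical threshold, in blocks; not an Intel figure


def blocks_to_cache(accesses, limit=SEQUENTIAL_LIMIT):
    """Return the blocks a toy cache would admit, skipping long sequential runs.

    A run is a series of reads where each block number is exactly one higher
    than the previous one. Runs longer than `limit` blocks are treated as
    streaming data and are not cached.
    """
    admitted = []
    run = []
    for block in accesses:
        if run and block == run[-1] + 1:
            run.append(block)          # still reading sequentially
        else:
            if len(run) <= limit:
                admitted.extend(run)   # previous run was short: cache it
            run = [block]
    if len(run) <= limit:
        admitted.extend(run)           # handle the final run
    return admitted


movie = list(range(1000, 1100))      # 100 blocks read front to back
photos = [40, 41, 42, 907, 908, 15]  # short, scattered reads
print(blocks_to_cache(movie))   # []
print(blocks_to_cache(photos))  # [40, 41, 42, 907, 908, 15]
```

Under these assumptions, a video file streamed from start to finish never enters the cache, while small scattered reads do, which matches the pattern we see across the individual suites.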

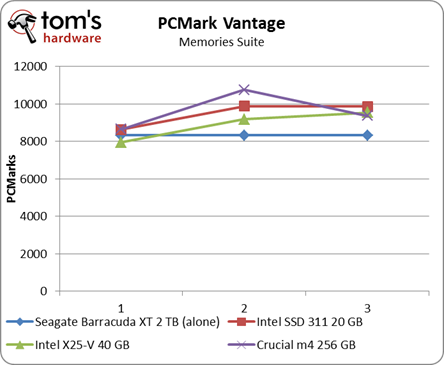

The current cache strategy specifically places a priority on application, user, and boot data. Photos are smaller, do get cached, and the Memories suite benefits as a result.

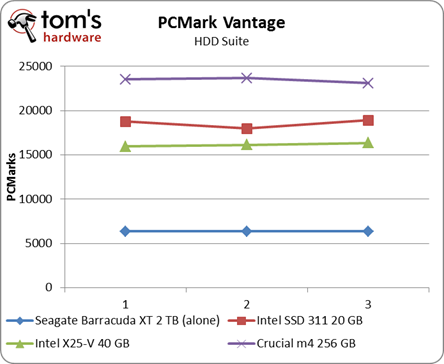

If you read our review of Crucial's m4, then you know the drive should achieve an HDD suite score above 50,000 PCMarks. This graph helps illustrate that caching with an SSD is in no way equivalent to letting the SSD run unfettered. At the same time, Crucial's m4 has a profound impact on performance compared to Seagate's Barracuda XT on its own. The hard drive's maximum outside-diameter data rate of 138 MB/s is still a far cry from the m4's 260 MB/s sequential write speed.


LuckyDucky7 The Intel 311 might be one of the weirdest products I've seen for a while.

It doesn't have an impact on games and apps which are too large to be cached and 60 GB drives that blow the 311 out of the water can be had for 20 bucks more.

And as far as getting QuickSync, it's about time. Should have been done in P67 (along with USB 3.0 support and 6 x SATA III ports) is all I can say.

acku In an ideal world, that's what we should have seen, but Lucidlogix's Virtu really makes Z68 worth it.

ghnader hsmithot Sir and madam working at intel. You make us customers look retarded. Thank you.

Olle P mayankleoboy1: "is this realy the platform for enthusiasts? with almost daily news of lga2011 ... its a little bit hard to get too happy with this"

Yes it is!

I am going to buy myself a Z68 mobo and a Core i5-2500K within a few weeks.

If you buy yourself an LGA2011 based platform we can get together a month from now and compare the results!

... or rather not, since it will take at least half a year for the 2011 to become available.

Let's face it. For at least a full month from now the Z68 will be the enthusiast platform.

Then AMD's competition will arrive, and we'll see how much of an option that is.

acku hmp_goose: "What is this 'QuickSync'? My people do not have this word …"

http://www.tomshardware.com/reviews/sandy-bridge-core-i7-2600k-core-i5-2500k,2833-4.html

ta152h A good comparison would have been striping hard disks to compare against caching with EEPROMs. You'd have more capacity, a lot more, and wouldn't have a technology that dies after a certain amount of writes, which is dubious to use for something that's being used as a cache, and written on rather consistently.

Performance of RAID 0 would be higher than a single disk, and you'd be increasing performance without a loss in capacity (per dollar). Or, if you wanted the same capacity, you could get higher performance disks, and compare them that way.

If I want to spend an extra $100 to make my computer faster, will it? Duh, of course. That's all this article is saying. Is it the best way to spend that $100? Well, that much isn't clear at all. It wasn't compared with much of anything else. Two high capacity disks striped, and two higher performance disks (but lower capacity) striped, versus one disk and EEPROMs. All should be the same cost. It's more useful information. You'd have three fundamental choices - huge capacity, high "Winchester" performance, and low capacity with EEPROM caching. You could do a search on the capacity trade-offs pretty easily, but the performance difference between this caching and a high performance magnetic disk in RAID 0 is much less clear. Obviously, the hard disks would win a lot of tests, and could be a better buy for a lot of people.

It is worth looking at.

Olle P Another little detail:

Larsen Creek was the work name for Intel's SSD.

The final name now in use is Larson Creek, as can be easily read in the picture.

flong Hey, did I read this right, the theoretical maximum of the 2600K and 2500k chips is 5.7 ghtz???? Has anyone ever got a cpu that high? The most Ive read about is 5.0 ghtz and that was with water cooling. So does 5.7 ghtz exist?

My GoD!

Intels output is capped at 1920x1200? Below my native res! I've been forced to put my buy on hold...

What were they thinking?