The Intel Z68 Express Review: A Real Enthusiast Chipset

Smart Response Technology: Improving Storage Performance, Transparently

We already explained the concept behind SSD caching in our Z68 preview, but for those unfamiliar, the idea is simple. You want to keep as much data as possible as close to your processor as possible. Of course, as you get closer, the storage space gets more expensive. That's why CPUs have kilobytes of L1 cache and megabytes of L3 cache, system memory is composed of gigabytes, and hard drives store terabytes of data. But, ew; hard drives are so comparatively slow. What if you could insert lots of faster storage between the disk and RAM? That's where SSD caching comes into play.

Smart Response Technology: Smart Caching

In essence, the technology operates on blocks of data (as opposed to entire files). This helps maximize the efficiency of the cache capacity. The caching mechanism itself exploits the principle of locality, which is at the heart of every hierarchical memory architecture. CPU cache, for example, depends on temporal locality; it holds recently-referenced data expected to be used again. If it is, the system doesn't have to retrieve information from a slower class of storage. Rather, it can simply pull it from cache and realize a speed-up. The same holds true for the SSD cache. Intel's algorithms try to keep the most frequently-accessed data on the solid-state storage.

Cached information is non-volatile, meaning that your accessed data continues to live on the SSD, even between reboots. Of course, the only way you can get a read cached on the SSD is to access it from the hard drive at least once. It goes without saying, then, that a first read with the cache enabled won't be any faster than the hard drive running on its own. The second and third reads are where you'll notice the speed-up attributable to solid-state storage. Intel says a write can be cached immediately. However, it's important to understand that caching improves the performance of read-based operations. Writes might get cached, but write-heavy tasks don't get sped up because maintaining data coherency requires a write-through operation that puts information in cache and on the hard drive simultaneously. Basically, you're still working at the speed of the disk.
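To make the read-versus-write behavior concrete, here is a minimal sketch of a write-through block cache. It is a toy model, not Intel's implementation: the class name, the dictionary standing in for the hard drive, and the least-recently-used eviction rule are all assumptions made for illustration.

```python
from collections import OrderedDict


class WriteThroughBlockCache:
    """Toy block-level cache in front of a slow disk (illustrative only)."""

    def __init__(self, disk, capacity_blocks):
        self.disk = disk                # dict: block number -> bytes (stands in for the hard drive)
        self.capacity = capacity_blocks
        self.cache = OrderedDict()      # block number -> bytes, oldest entry first
        self.disk_reads = 0
        self.disk_writes = 0

    def _insert(self, block, data):
        self.cache[block] = data
        self.cache.move_to_end(block)
        if len(self.cache) > self.capacity:
            # Assumed eviction rule: drop the least recently used block.
            self.cache.popitem(last=False)

    def read(self, block):
        if block in self.cache:
            # Cache hit: no trip to the disk.
            self.cache.move_to_end(block)
            return self.cache[block]
        # Cache miss: the first read always pays the disk cost.
        self.disk_reads += 1
        data = self.disk[block]
        self._insert(block, data)
        return data

    def write(self, block, data):
        # Write-through: the disk is always updated, so a write
        # completes no faster than the disk allows.
        self.disk[block] = data
        self.disk_writes += 1
        self._insert(block, data)
```

Reading the same block three times touches the disk only once, while every write still lands on the disk, which is why read-heavy workloads benefit and write-heavy ones do not.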

Now, up until this point, we've explained caching in a fairly basic way. Read data is cached after one access, and information written can be inserted into cache right away. That's not a very efficient approach, though. See, Intel's algorithm intelligently distinguishes between what it considers high-value blocks representing application, user, and boot data, and low-value data. Anything read in from a virus scanner, for instance, is low-value. Similarly, purely sequential streams that manifest as playing a movie or copying large files are considered one-touch data and not cached. After all, how often do you watch a movie and then watch the same movie two days later, justifying it occupying space on an SSD? Intel is specifically going after frequently-read blocks that can be accessed more quickly from the SSD a second or third time around.
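Intel has not published how its algorithm classifies blocks, so the following is only a sketch of one way a cache could refuse purely sequential streams. The class name, the `sequential_run_limit` parameter, and its default of 8 blocks are hypothetical, and the sketch does not attempt the virus-scanner case.

```python
class AdmissionFilter:
    """Hypothetical filter that stops caching once a read looks like a long sequential stream."""

    def __init__(self, sequential_run_limit=8):
        self.run_limit = sequential_run_limit  # assumed threshold, not an Intel figure
        self.last_block = None
        self.run_length = 0

    def should_cache(self, block):
        # Extend the current run if this block directly follows the previous one.
        if self.last_block is not None and block == self.last_block + 1:
            self.run_length += 1
        else:
            self.run_length = 1
        self.last_block = block
        # Short runs and scattered reads are admitted; long runs are treated as one-touch data.
        return self.run_length < self.run_limit
```

With these assumptions, scattered reads are always admitted, while a long file copy is cut off after its first few blocks and stops consuming cache space.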

It’s important to point out that the mobile HM67 and QM67 chipsets are also capable of enabling SSD caching. However, the caching policy on AC is different from the caching policy when the system is running on DC power. According to Intel, this is mainly a power management issue. We have to imagine that it's throttling back on caching, though, to prevent both storage devices from sucking down battery life.

Is Caching Right For Me?


This is an important question because dedicating an SSD to caching means you can't manually control what data lives on flash-based storage. That's handled silently by Intel's software. And as a result, you don't benefit from the better write speed of an SSD, either.

| $200 Budget | Option 1 | Option 2 |
|---|---|---|
| Priority | Speed | Capacity |
| Hard Drive | 1 x 320 GB Seagate Barracuda 7200.12 | 1 x 2 TB WD Caviar Green, 1 x 250 GB WD Caviar Blue |
| SSD | 1 x 90 GB OCZ Vertex 2 | 1 x 32 GB Corsair Nova |
| Total Price | $199.98 | $198.97 |

Let's say you have a storage budget of $200. You have a couple of different options:

- Sink a majority of the cash into a "large" SSD and spend what's left on a relatively modest hard drive.

- Spend more on magnetic storage to maximize capacity, then get one of the least-expensive SSDs you can find.

Naturally, running all of your applications from a 90 GB SSD yields the best performance. But a 64-bit install of Windows 7 consumes up to 20 GB. Once we install Office 2010, Photoshop CS5, WinRAR, Adobe Acrobat, Crysis 2, World of Warcraft: Cataclysm, and Call of Duty: Modern Warfare 2, we're pushing 90 GiB of data (more than our 90 GB drive can hold). Practically, an enthusiast with lots of music, movies, and user data simply cannot afford to give up hundreds of gigabytes of space for a few gigs of flash-based storage at the same budget point.

Caching lets you put the emphasis back on copious repositories by limiting the solid-state device to something very small, and controlling its capacity automatically.

So really, the question is: can I afford to spend more money on an 80+ GB SSD, plus a nice big hard drive for my user data, or do I really need to stick to that budget and use caching to help performance a little bit without compromising space for my files?

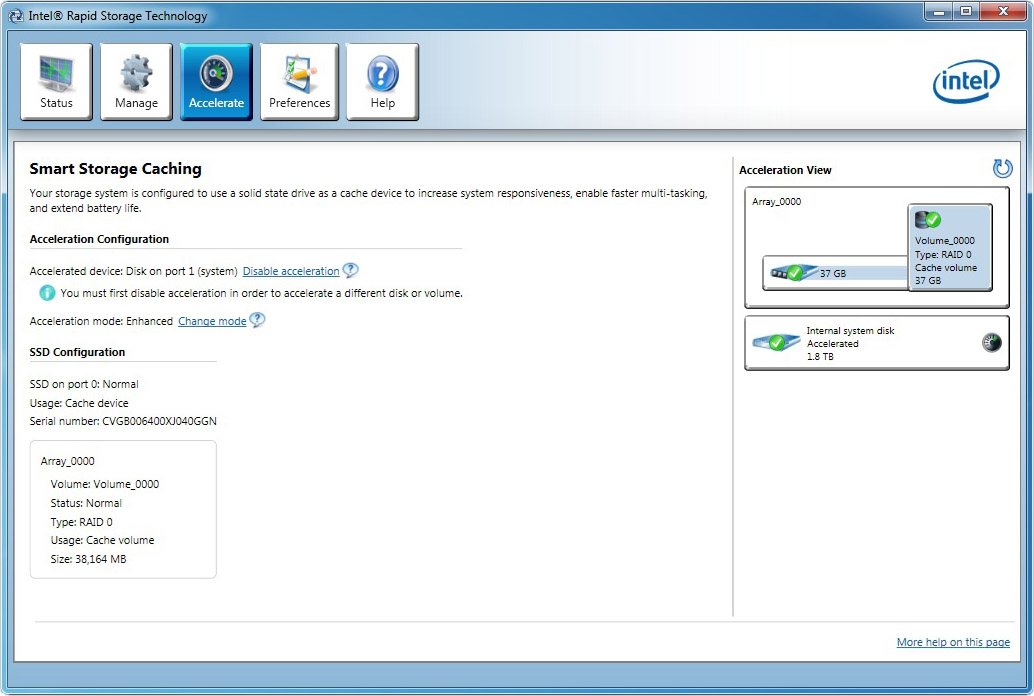

Enabling SSD Caching

Let's say you've made the decision to jump into Sandy Bridge with a Z68-based platform and want to use Smart Response Technology to help improve storage performance without sinking a ton of money into a large SSD. You have to satisfy a handful of requirements before exploiting the feature:

- The SATA controller must be set to RAID mode in the BIOS.

- You must install Windows Vista, 7, or 2008 while in RAID mode.

- You need a SATA-based SSD with at least 18.6 GB of free space.

- There must be no RAID recovery volume.

- You must have Intel’s Rapid Storage Technology software version 10.5 or later.

In addition to those requirements, it's important to note that the cache can't be any larger than 64 GB. But beyond a certain drive capacity, you’re better off using your SSD as a system drive. You’re going to get faster application loading from a 120 GB Vertex 2, for example, than any combination of SSD caching. With that said, you could still use a 120 GB SSD to cache data. However, Intel leaves everything beyond 64 GB as a separate user-accessible partition.
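The capacity limits can be expressed as a short calculation. This sketch assumes the whole drive, up to the 64 GB cap, is handed to the cache; the function and constant names are illustrative and not part of Intel's software.

```python
MIN_CACHE_GB = 18.6   # minimum free space quoted in the requirements above
MAX_CACHE_GB = 64.0   # largest cache size allowed


def split_ssd(ssd_capacity_gb):
    """Return (cache_gb, leftover_gb) for an SSD dedicated to caching."""
    if ssd_capacity_gb < MIN_CACHE_GB:
        raise ValueError("SSD is too small to be used as a cache")
    cache_gb = min(ssd_capacity_gb, MAX_CACHE_GB)
    # Anything beyond the cap is left as a separate user-accessible partition.
    return cache_gb, ssd_capacity_gb - cache_gb
```

Under that assumption, a 120 GB drive yields a 64 GB cache plus a 56 GB partition, while a 32 GB drive is consumed entirely by the cache.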

Setup hasn’t changed since we first described it in our Z68 preview. You just need to install Windows on your hard drive, add your SSD, and enable acceleration. It’s as simple as that. The only hiccup is that several motherboard vendors seem to be shipping Z68 driver CDs with RST version 10.1, and you need version 10.5 or later to enable SSD caching.


LuckyDucky7 The Intel 311 might be one of the weirdest products I've seen for a while.

It doesn't have an impact on games and apps which are too large to be cached and 60 GB drives that blow the 311 out of the water can be had for 20 bucks more.

And as far as getting QuickSync, it's about time. Should have been done in P67 (along with USB 3.0 support and 6 x SATA III ports) is all I can say.

acku In an ideal world, that's what we should have seen, but Lucidlogix's Virtu really makes Z68 worth it.

ghnader hsmithot Sir and madam working at intel. You make us customers look retarded. Thank you.

Olle P, quoting mayankleoboy1 ("is this realy the platform for enthusiasts? with almost daily news of lga2011 ... its a little bit hard to get too happy with this"): Yes it is!

I am going to buy myself a Z68 mobo and a Core i5-2500K within a few weeks.

If you buy yourself an LGA2011 based platform we can get together a month from now and compare the results!

... or rather not, since it will take at least half a year for the 2011 to become available.

Let's face it. For at least a full month from now the Z68 will be the enthusiast platform.

Then AMD's competition will arrive, and we'll see how much of an option that is.

acku (in reply to hmp_goose: What is this "QuickSync"? My people do not have this word …)

http://www.tomshardware.com/reviews/sandy-bridge-core-i7-2600k-core-i5-2500k,2833-4.html

ta152h A good comparison would have been striping hard disks to compare against caching with EEPROMs. You'd have more capacity, a lot more, and wouldn't have a technology that dies after a certain amount of writes, which is dubious to use for something that's being used as a cache, and written on rather consistently.

Performance of Raid 0 would be higher than a single disk, and you'd be increasing performance without a loss in capacity (per dollar). Or, if you wanted the same capacity. you could get higher performance disks, and compare them that way.

If I want to spend an extra $100 to make my computer faster, will it? Duh, of course. That's all this article is saying. Is it the best way to spend that $100? Well, that much isn't clear at all. It wasn't compared with much of anything else. Two high capacity disks striped, and two higher performance disks (but lower capacity) striped, versus one disk and EEPROMs. All should be the same cost. It's more useful information. You'd have three fundamental choices - huge capacity, high "Winchester" performance, and low capacity with EEPROM caching. You could do a search on the capacity trade-offs pretty easily, but the performance difference between this caching and a high performance magnetic disk in RAID 0 is much less clear. Obviously, the hard disks would win a lot of tests, and could be a better buy for a lot of people.

It is worth looking at.

Olle P Another little detail:

Larsen Creek was the work name for Intel's SSD.

The final name now in use is Larson Creek, as can be easily read in the picture.

flong Hey, did I read this right, the theoretical maximum of the 2600K and 2500k chips is 5.7 ghtz???? Has anyone ever got a cpu that high? The most Ive read about is 5.0 ghtz and that was with water cooling. So does 5.7 ghtz exist?

My GoD!

Intels output is capped at 1920x1200? Below my native res! I've been forced to put my buy on hold...

What were they thinking?