Toshiba's $7000+ 400 GB SSD: SAS 6Gb/s, SLC Flash, And Big Endurance

Benchmarking For The Enterprise: A Whole New World

Just as server- and workstation-oriented processors have to be tested differently from desktop CPUs, enterprise-oriented storage needs to be evaluated in its own way.

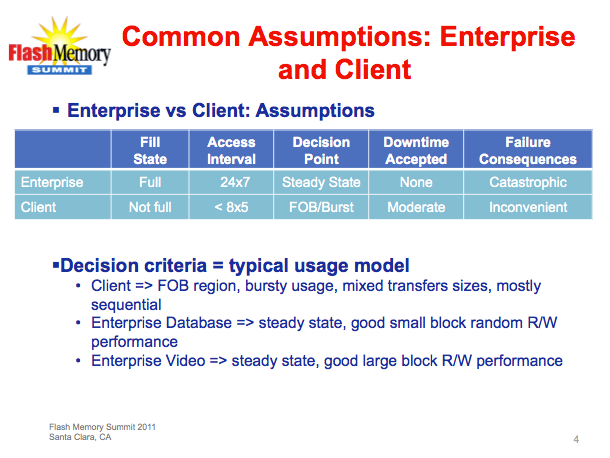

The slide above comes from last year's Flash Memory Summit in Santa Clara, CA. Enterprise storage is assumed to be mostly full and often accessed around the clock. As a result, fresh-out-of-box/burst performance is largely irrelevant. There is little to no idle time for background garbage collection and TRIM to recover performance, so an SSD in such a taxing environment reaches steady state and stays there.

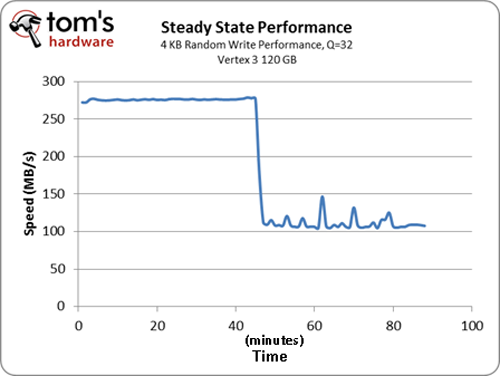

We therefore need a different methodology: one that first drives each SSD into steady state and then measures it there. The goal is to benchmark at the point where an SSD's performance no longer changes over time, which takes sustained writes to reach. The chart above illustrates how, after some period of use, an SSD drops from its out-of-box performance level to a lower, sustainable steady-state level.
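One common way to decide when performance "no longer changes over time" is the steady-state test from SNIA's Solid State Storage Performance Test Specification (PTS). Over a window of five consecutive test rounds, the spread between the best and worst result must stay within 20% of the window average, and the best-fit line across the window must rise or fall by no more than 10% of that average. The article does not say which criterion Tom's Hardware applies, so treat this as a sketch under that assumption. The function names are hypothetical, and the IOPS samples are made-up numbers, not measurements of this drive.

```python
def linear_fit_slope(ys):
    """Least-squares slope of ys plotted against round index 0..n-1."""
    n = len(ys)
    xs = range(n)
    mean_x = (n - 1) / 2
    mean_y = sum(ys) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    den = sum((x - mean_x) ** 2 for x in xs)
    return num / den


def is_steady_state(samples, window=5, max_excursion=0.20, max_slope_excursion=0.10):
    """Return True if the last `window` per-round results (e.g. IOPS) look steady.

    Assumed PTS-style criteria:
      * max - min inside the window is within 20% of the window average, and
      * the best-fit line changes by at most 10% of the average across the window.
    """
    if len(samples) < window:
        return False  # not enough rounds yet to judge
    w = samples[-window:]
    avg = sum(w) / window
    if avg <= 0:
        return False
    data_excursion = max(w) - min(w)
    # Rise/fall of the fitted line from the first to the last round of the window.
    slope_excursion = abs(linear_fit_slope(w)) * (window - 1)
    return (data_excursion <= max_excursion * avg
            and slope_excursion <= max_slope_excursion * avg)


# Hypothetical per-round 4 KB random-write IOPS: fast when fresh, then leveling off.
samples = [90000, 60000, 42000, 31000, 30500, 30200, 30100, 29900, 30050]
print(is_steady_state(samples))      # True
print(is_steady_state(samples[:5]))  # False
```

In a real run, you would keep executing rounds of the workload and only start recording results once a check like this passes.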

To reach the steady-state level shown in the chart, we precondition our SSDs before running our enterprise benchmarks. Because every drive reaches steady state at a different point (and because each workload has its own steady state), we use two types of preconditioning:

- For our 4 KB random, database, file server, and Web server tests, we write three times the drive's full capacity using random writes.

- For our 128 KB sequential tests, we write three times the drive's full capacity sequentially.
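The two preconditioning passes can be sketched as generators of write offsets. This is a minimal illustration, not Tom's Hardware's actual tooling: the function names are hypothetical, "4 KB" and "128 KB" are assumed to mean 4,096 and 131,072 bytes, and in practice an I/O tool such as fio would issue the writes themselves. Note that uniformly random writes totaling 3x capacity do not guarantee that every block gets overwritten.

```python
import random


def precondition_bytes(capacity_bytes, passes=3):
    """Total bytes written during preconditioning (the article uses 3x capacity)."""
    return capacity_bytes * passes


def random_write_offsets(capacity_bytes, block_bytes, passes=3, seed=0):
    """Yield block-aligned offsets for `passes` x capacity of uniformly random writes."""
    rng = random.Random(seed)  # seeded so a run can be repeated exactly
    n_blocks = capacity_bytes // block_bytes
    for _ in range(n_blocks * passes):
        yield rng.randrange(n_blocks) * block_bytes


def sequential_write_offsets(capacity_bytes, block_bytes, passes=3):
    """Yield offsets that sweep the drive front to back, `passes` times."""
    n_blocks = capacity_bytes // block_bytes
    for _ in range(passes):
        for i in range(n_blocks):
            yield i * block_bytes


if __name__ == "__main__":
    capacity = 400 * 10**9  # 400 GB, decimal as in drive specifications
    print(precondition_bytes(capacity))  # 1200000000000 (1.2 TB)
    print(capacity // 4096 * 3)          # 292968750 random 4 KB writes
```

For a 400 GB drive, the random pass alone amounts to roughly 293 million 4 KB writes, which helps explain why preconditioning can take hours.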

compton: Good job, Mr. Ku.

Perhaps the Enterprise SSD Fairy will bring you a Hitachi Ultrastar with Intel's 6Gb/s controller. I'd be eager to see how it compares.

There is no substitute for SLC, though.

bennaye, quoting nebun: "$7000? Any company willing to pay this much for an SSD is fullish."

...fullish of cash? Definitely. Foolish? Probably not.

nebun, quoting bennaye: "...fullish of cash? Definitely. Foolish? Probably not."

Damn the English language... there are way too many words that sound alike.

nitrium: Why is the 4KB random read/write performance shown in IOPS, but 128KB and 2MB performance in MB/sec? What speed (in MB/sec) does this drive achieve in 4KB? I guess I could calculate it from (IOPS * 4KB) / 1024 (I think that's right), but why should I have to?
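nitrium's formula holds if "4KB" means 4 KiB (4,096 bytes): IOPS × 4 KiB gives KiB/s, and dividing by 1,024 gives MiB/s. Storage specs often quote decimal MB/s instead, and the two figures differ by about 5%. Here is a minimal sketch of both conversions. The function name is hypothetical, and the 10,000 IOPS input is a made-up figure, not a measurement of the Toshiba drive.

```python
def iops_to_throughput(iops, block_bytes=4096):
    """Convert IOPS at a fixed transfer size into (decimal MB/s, binary MiB/s)."""
    bytes_per_sec = iops * block_bytes
    return bytes_per_sec / 10**6, bytes_per_sec / 2**20


mb_s, mib_s = iops_to_throughput(10_000)  # hypothetical 10,000 IOPS at 4 KiB
print(mb_s, mib_s)  # 40.96 39.0625
```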

spazoid, quoting amdfreak: "It is too expensive for the performance it offers. You can get a RAID array of many Intel SSDs beating Toshiba in every segment."

You've clearly not understood the purpose of this article. Stick to commenting on the desktop drive reviews in the future, please.

Thank you for this review, and especially for your estimates of the drive's endurance. That's something that's damn near impossible for us IT professionals to estimate accurately in the real world. For some reason, bosses tend to want expensive hardware put to use instead of thoroughly tested.

More of these types of articles, please! :]

@spazoid, so you are telling me that you are willing to pay 10x the price for 3x the endurance of the Intel 520 SSD?

Even when the Intel SSD already has an endurance longer than the refresh cycle of your tech stack?

EJ257, quoting frozonic: "LOL, I can just imagine myself in ten years telling my kids that we had to pay $7000 for a 400 GB SSD... by that time we're gonna have 400+ TB SSDs."

"Back in my day, storage drives used to have moving parts. Now it's all solid state."

jaquith: I own a small data center and thankfully have access to a 'major' financial institution's test data, and I agree with your conclusions, especially regarding deployment into production. A $7K SSD is a tough call with a 5-year warranty, but if it were 7 to 10 years, it would probably be an easy call.

Since I'm not a super-sized enterprise, the cost/benefit calculation would be difficult for me. I know firsthand the money that, e.g., financial institutions push into their data centers, and for those folks $7K isn't out of the question.

Interesting SSD, and if the price comes down and the warranty is extended, then IMO it would be something to consider and compare against Intel's products.