ATI Radeon HD 5450: Eyefinity And HTPCs For Everyone?

Test Setup And Benchmarks

We decided to test the Radeon HD 5450 against its primary competition in the $40 to $50 price segment. That means its predecessors, the Radeon HD 4550 and 4650, plus the GeForce 210 and the DDR2-based GeForce 9500 GT.

First, we must note that the Radeon HD 5450 sample we received was set to a 900 MHz memory clock, which is 100 MHz faster than the reference specification. Since the beta driver this card requires doesn't support Overdrive (and our overclocking tools couldn't properly identify the card), we were stuck with the overclock. An AMD representative offered to let us retest with a new card, but the offer came just hours before the embargo lift, leaving too little time for new numbers.

Getting the GeForce cards to do what we wanted proved to be a little more complicated, as both are factory-overclocked models and the newest 196.21 driver broke all of our overclocking tools. RivaTuner, MSI Afterburner, and Gigabyte's Gamer HUD Lite were unusable on our test system. In addition, the GeForce 9500 GT test sample we had was a DDR3-based model that is priced too high to compete fairly.

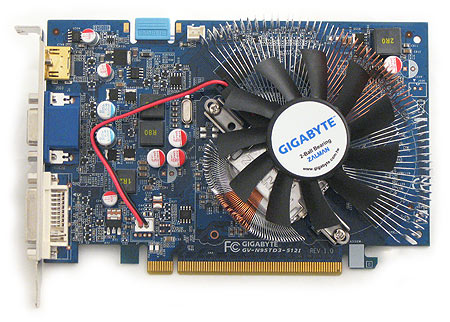

Our solution for the 9500 GT, a factory-overclocked Gigabyte GV-N95TD3-512I, was to use the older 195.62 driver to enable overclocking tools and then underclock the core to 550 MHz and the memory to 450 MHz. This should give us results comparable to a reference GeForce 9500 GT. We allowed the 50 MHz memory speed advantage versus the reference DDR2 model in an attempt to negate the notable latency disadvantage that the DDR3 memory would suffer.
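The underclock described above is simple arithmetic, and a short Python sketch makes the proportions explicit. The clock figures come from this page's test-system table; the dictionary and function names are ours for illustration, and the 400 MHz reference DDR2 memory clock is inferred from the 50 MHz advantage mentioned in the text rather than taken from a spec sheet.

```python
# Gigabyte GV-N95TD3-512I clocks in MHz, as listed in the test-system table.
FACTORY = {"core": 650, "shaders": 1625, "memory": 800}
UNDERCLOCKED = {"core": 550, "shaders": 1375, "memory": 450}

# Assumption: inferred from the text's "50 MHz memory speed advantage"
# over the reference DDR2 model, not read from a specification sheet.
REFERENCE_DDR2_MEMORY = UNDERCLOCKED["memory"] - 50


def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


def shader_to_core_ratio(clocks: dict) -> float:
    """Shader clock divided by core clock for one clock profile."""
    return clocks["shaders"] / clocks["core"]


if __name__ == "__main__":
    for domain, stock in FACTORY.items():
        tuned = UNDERCLOCKED[domain]
        change = percent_change(stock, tuned)
        print(f"{domain}: {stock} -> {tuned} MHz ({change:+.1f}%)")
    print("shader/core ratio, factory:", shader_to_core_ratio(FACTORY))
    print("shader/core ratio, underclocked:", shader_to_core_ratio(UNDERCLOCKED))
    print("implied reference DDR2 memory clock:", REFERENCE_DDR2_MEMORY, "MHz")
```

Both profiles keep the same 2.5:1 shader-to-core ratio, so the underclock scales the core and shaders together while cutting the memory clock far more steeply.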

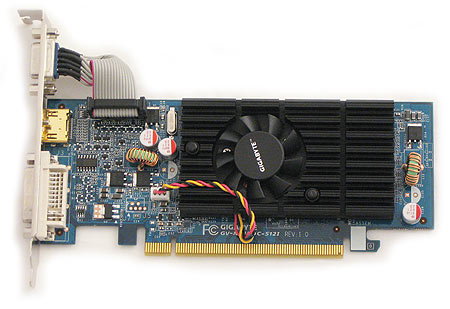

As for the GeForce 210, we left the clock speeds at the factory setting and used the newest 196.21 driver. Why? Most importantly, the overclocked Gigabyte card can be purchased for $42 online, which is notably below the Radeon HD 5450's $50 price tag.

While the Radeon HD 5450 is admittedly intended for low-impact gaming scenarios, we will put it through a gamut of game tests at lower settings to see if the card is able to slog through some of the titles we enjoy.

|  | Graphics Test System |
|---|---|
| CPU | Intel Core i7-920 (Nehalem), 2.67 GHz, QPI-4200, 8MB Shared L3 Cache; overclocked to 3.06 GHz @ 153 MHz BCLK |
| Motherboard | ASRock X58 SuperComputer, Intel X58, BIOS P1.90 |
| Networking | Onboard Realtek Gigabit LAN controller |
| Memory | Kingston PC3-10700, 3 x 1,024MB, DDR3-1225, CL 9-9-9-22-1T |
| Graphics | ATI Radeon HD 5450: 650 MHz Core, 900 MHz Memory, 512MB DDR3 (memory factory overclocked 100 MHz above reference); Sapphire Radeon HD 4650: 600 MHz Core, 400 MHz Memory, 512MB DDR2; Diamond Radeon HD 4550: 600 MHz Core, 800 MHz Memory, 512MB DDR3; Gigabyte GeForce 9500 GT: 650 MHz Core, 1,625 MHz Shaders, 800 MHz Memory, 512MB DDR3 (underclocked to 550 MHz core, 1,375 MHz shaders, 450 MHz memory to simulate reference DDR2 9500 GT); Gigabyte GeForce 210: 650 MHz Core, 1,547 MHz Shaders, 400 MHz Memory, 512MB DDR2 |
| Hard Drive | Western Digital Caviar WD5000AAJS-00YFA, 500GB, 7,200 RPM, 8MB cache, SATA 3.0 Gb/s |
| Power | Thermaltake Toughpower 1,200W: 1,200 W, ATX 12V 2.2, EPS 12V 2.91 |
| Software and Drivers | |
| Operating System | Microsoft Windows Vista Ultimate 64-bit 6.0.6001, SP1 |
| DirectX version | DirectX 10 |
| Graphics Drivers | AMD Catalyst 10.1, Nvidia GeForce 195.62 (GeForce 9500 GT), 196.21 (GeForce 210) |

| Benchmark Configuration | |
|---|---|
| 3D Games | |
| Crysis | Patch 1.2.1, DirectX 9, 64-bit executable, benchmark tool; Low Quality, Medium Textures, Physics, and Sound, No AA |
| Far Cry 2 | Patch 1.02, in-game benchmark; Medium Quality, No AA |
| DiRT 2 | Version 1.0.0, Custom THG Benchmark; Run 1: Medium Settings, No AA, DirectX 9; Run 2: Medium Settings, No AA, DirectX 11 |
| World In Conflict | Patch 1009, DirectX 9, timedemo; Medium Details, No AA/No AF |
| Tom Clancy's H.A.W.X. | Patch 1.02, DirectX 10 & 10.1, in-game benchmark; Low: Shadows, Sun Shafts; Medium: View Distance, Environment, SSAO; High: Forest, Textures; HDR, Engine Heat, and DOF On, No AA |
| Left 4 Dead | Version 1.0.1.5, Custom THG Benchmark; High Settings, Medium Shaders, 4xAA, 8xAF |
| Synthetic Benchmarks and Settings | |
| 3DMark Vantage | Version: 1.02, PhysX Off, 3DMark scores |
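
The overclocked CPU and memory speeds listed for the test system follow from the base clock. As a rough sketch in Python: the 153 MHz BCLK comes from the table, while the 20x CPU multiplier (the Core i7-920's stock value, 2.67 GHz at a 133 MHz base clock) and the 8x memory multiplier (inferred from DDR3-1225 divided by 153 MHz) are our assumptions, not settings the test notes spell out.

```python
BCLK_MHZ = 153          # base clock, from the test-system table
CPU_MULTIPLIER = 20     # assumption: stock Core i7-920 multiplier
MEMORY_MULTIPLIER = 8   # assumption: inferred from DDR3-1225 / 153 MHz


def derived_clock_mhz(bclk_mhz: int, multiplier: int) -> int:
    """Clock speed produced by a base clock and a multiplier, in MHz."""
    return bclk_mhz * multiplier


cpu_clock_mhz = derived_clock_mhz(BCLK_MHZ, CPU_MULTIPLIER)
memory_rate = derived_clock_mhz(BCLK_MHZ, MEMORY_MULTIPLIER)

if __name__ == "__main__":
    print(f"CPU: {cpu_clock_mhz / 1000:.2f} GHz")
    print(f"Memory: DDR3-{memory_rate}")
```

Under these assumptions the CPU lands at 3.06 GHz, matching the table, and the memory rate comes out within 1 of the DDR3-1225 figure listed.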

Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

- popaholic: For all the idiots out there, yes, it can run Crysis, slightly. What's the point of releasing a new graphics card that's worse than older cards? It runs DX11, but there's no way it could even run a supported game.
- serokichim: The links to the article pages are either missing or directed wrongly. For example, the "Power and Temperature Benchmarks" and "Conclusion" pages are missing or directed wrongly.
- cangelini (replying to serokichim): Try refreshing the page. Should be working correctly now!
- acasel: A CrossFire config with this video card plus an overclock would make this article much better from a gamer's point of view...
- cleeve (replying to acasel): Not really, look at the specs. In CrossFire these cards would cost $100 for a total of 160 shader cores. They still wouldn't hold a candle to a single $100 5670 when gaming, which has 400 shader cores all by itself. CrossFiring the 5450 would be a total waste.
- masterjaw: Passively-cooled 5450 in CrossFire = fail. How do you expect it to handle the increase in temps? Even if you've got some good airflow inside the case, that won't be sufficient.
- skora: How selfish you all are, thinking THG only does gaming cards!!!! When ATI cuts the hardware (shaders/ROPs) to the bone, it's not about gaming. It's for the HTPC and multi-monitor office crowd, and that's it. It's a niche card and looks to do that admirably.
- shubham1401: Lol... They needed an i7 and a 1200W PSU to test this card... :) Useless... Either get a good card or stick with integrated.