ATI Radeon HD 5450: Eyefinity And HTPCs For Everyone?

Power And Temperature Benchmarks

Let's shift our perspective from games to power usage.

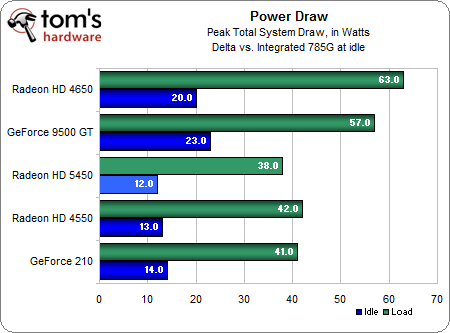

We can see the Radeon HD 5450 uses a bit less power than the Radeon HD 4550 and slightly less than the GeForce 210, despite the new card's vastly superior gaming performance. We also see that a higher power draw is the price that the Radeon HD 4650 demands for its gaming performance. But in the grand scheme of things, a 43W increase under load isn't bad at all.

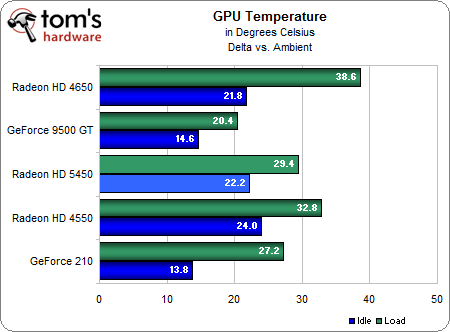

All of these temperatures are acceptable, but the new Radeon HD 5450 fares particularly well for a passively-cooled card. It's notable that the GeForce 9500 GT is a Gigabyte model fitted with a beefy aftermarket cooler, and this explains its ability to keep load temperatures so very low. The GeForce 210 makes a great showing here, but it is the only card in the bottom three that sports an active fan cooler instead of a passive unit.


Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

-

popaholic: For all the idiots out there, yes it can run Crysis, slightly.

What's the point of releasing a new graphics card that's worse than older cards? It runs DX11 but there's no way it could even run a supported game.

-

serokichim: The links to the article pages are either missing or directed wrongly. For example, the "Power and Temperature Benchmarks" and "Conclusion" pages are missing or directed wrongly.

-

cangelini, replying to serokichim: Try refreshing the page. Should be working correctly now!

-

acasel: a crossfire config with this video card + overclock will make this article much better from a gamer's point of view...

-

cleeve, replying to acasel: Not really, look at the specs. In CrossFire these cards would cost $100 for a total of 160 shader cores. They still wouldn't hold a candle to a single $100 5670 when gaming, which has 400 shader cores all by itself.

CrossFiring the 5450 would be a total waste.

-

masterjaw: Passively-cooled 5450 in crossfire = fail

How do you expect it to handle the increase in temps? Even if you got some good airflow inside the case, that won't be sufficient.

-

skora: How selfish you all are thinking THG only does gaming cards!!!! When ATI cuts the hardware (shaders/ROPs) to the bone, it's not about gaming. It's for the HTPC and multi-monitor office crowd and that's it. It's a niche card and looks to do that admirably.

-

shubham1401: Lol...

They needed an i7 and 1200W PSU to test this card... :)

Useless... Either get a good card or stick with integrated.