Investigation: Is Your SSD More Reliable Than A Hard Drive?

SSD Reliability: Is Your Data Really Safe?

Back in 2008, Intel made a case to us about storage bottlenecking its Nehalem architecture. We were at IDF in San Francisco, the company was introducing its first solid-state drives, and its representatives stood on stage, describing the ways in which a conventional hard drive slowed down a Core i7 processor. Three years later, we've seen over and over in benchmarks that SSDs are legitimate performance-adders, changing the computing experience fairly dramatically.

With that said, performance isn’t everything. When it comes to your data, all of the speed in the world means little if you can't trust the device holding that important information. After all, when you read about Corsair's Force 3 recall, OCZ's firmware updates to prevent BSODs, Crucial's link power management issues, and Intel's SSD 320 that loses capacity after a power failure, all within a two-month period, you have to acknowledge that we're dealing with a technology that's simply a lot newer (and consequently less mature) than mechanical storage.

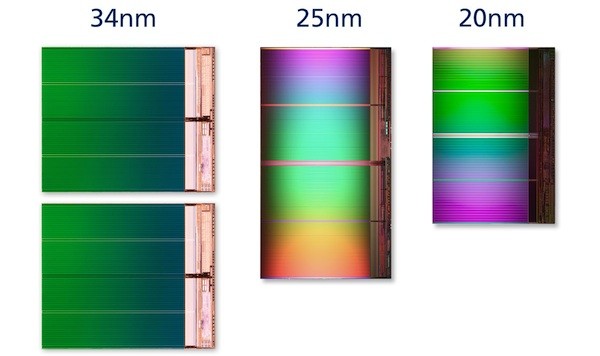

This topic is even more relevant now, in the wake of a swift shift from 3x nm NAND to flash memory manufactured at 25 nm. We've talked to some very bright minds in solid-state drive design, and the theme is consistent: it's more difficult to overcome the challenges presented by flash manufactured at 25 nm than it was at 34 nm. But today's buyers should still expect better performance and reliability compared to previous-generation products. In short, concern over the lower number of program/erase cycles inherent to NAND cells created using smaller geometry continues to be overblown.

| Drive | P/E Cycles | Total Terabytes Written (JEDEC formula) | Years until Write Exhaustion (10 GB/day, WA = 1.75) |
|---|---|---|---|
| 25 nm, 80 GB SSD | 3000 | 68.5 TBW | 18.7 years |
| 25 nm, 160 GB SSD | 3000 | 137.1 TBW | 37.5 years |
| 34 nm, 80 GB SSD | 5000 | 114.2 TBW | 31.3 years |
| 34 nm, 160 GB SSD | 5000 | 228.5 TBW | 62.6 years |

You shouldn’t have to worry about the number of P/E cycles that your SSD can sustain. The previous generation of consumer-oriented SSDs used 3x nm MLC NAND generally rated for 5000 cycles. In other words, you could write to and then erase data 5000 times before the NAND cells started losing their ability to retain data. On an 80 GB drive, that translated into writing 114 TB before conceivably starting to experience the effects of write exhaustion. Considering that the average desktop user writes, at most, 10 GB a day, it would take about 31 years to completely wear the drive out. With 25 nm NAND, this figure drops to about 18.7 years. Of course, we're oversimplifying a complex calculation. Issues like write amplification, compression, and garbage collection can affect those estimates. But overall, there is no reason you should have to monitor write endurance like some sort of doomsday clock on your desktop.
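For readers who want to plug in their own numbers, the years-to-exhaustion arithmetic can be sketched in a few lines of Python. This is only a rough illustration, not the method used to build the table: the function name and constants are our own, it assumes decimal units (1 TB = 1000 GB) and a 365-day year, and it takes the TBW figures from the table as given rather than re-deriving them from P/E cycles.

```python
# Rough sketch: convert a rated write endurance (TBW) into years of use.
# Assumptions (not from the article): 1 TB = 1000 GB, 365 days per year,
# and a constant daily write volume.

GB_PER_TB = 1000
DAYS_PER_YEAR = 365


def years_until_write_exhaustion(tbw: float, gb_written_per_day: float = 10.0) -> float:
    """Return the years needed to write `tbw` terabytes at a steady daily rate."""
    if gb_written_per_day <= 0:
        raise ValueError("gb_written_per_day must be positive")
    return tbw * GB_PER_TB / gb_written_per_day / DAYS_PER_YEAR


# TBW values copied from the table above.
RATED_TBW = {
    "25 nm, 80 GB SSD": 68.5,
    "25 nm, 160 GB SSD": 137.1,
    "34 nm, 80 GB SSD": 114.2,
    "34 nm, 160 GB SSD": 228.5,
}

if __name__ == "__main__":
    for drive, tbw in RATED_TBW.items():
        print(f"{drive}: {years_until_write_exhaustion(tbw):.1f} years")
```

Doubling the daily write volume halves the estimate, so even a heavy user writing 20 GB a day to the 25 nm, 80 GB drive would still be looking at roughly nine years under these assumptions.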

Obviously, we know that SSDs still fail, though. All it takes is 10 minutes of flipping through customer reviews on Newegg's listings. But write-cycle exhaustion isn't the problem. Sometimes firmware is to blame. We know this because of the firmware updates vendors issue specifically targeting a documented problem. Other failures are electronic in nature. A capacitor or memory IC might go out, taking the SSD with it. Of course, we'd expect fewer issues with SSDs than hard drives, which have moving parts that invariably wear out over time. Does solid-state drives' lack of moving parts translate into higher reliability? Is the data on your SSD any safer than it would be on a hard drive?

With that question weighing on an increasing number of enthusiasts' and IT professionals' minds, we set out to investigate SSD reliability and sort the facts from the fiction.


hardcore_gamer: Endurance of floating gate transistor used in flash memories is low. The gate oxide wears out due to the tunnelling of electrons across it. Hopefully phase change memory can change things around since it offers 10^6 times more endurance for technology nodes

acku, quoting hardcore_gamer: "Endurance of floating gate transistor used in flash memories is low. The gate oxide wears out due to the tunnelling of electrons across it. Hopefully phase change memory can change things around since it offers 10^6 times more endurance for technology nodes"

As we explained in the article, write endurance is a spec'ed failure. That won't happen in the first year, even at enterprise level use. That has nothing to do with our data. We're interested in random failures. The stuff people have been complaining about... BSODs with OCZ drives, LPM stuff with m4s, the SSD 320 problem that makes capacity disappear... etc... Mostly "soft" errors. Any hard error that occurs is subject to the "defective parts per million" problem that any electrical component also suffers from.

Cheers,

Andrew Ku

TomsHardware.com

slicedtoad: hacker groups like lulsec should do something useful and get this kind of internal data from major companies.

jobz000: Great article. Personally, I find myself spending more and more time on a smartphone and/or tablet, so I feel ambivalent about spending so much on a ssd so I can boot 1 sec faster.

gpm23: You guys do the most comprehensive research I have ever seen. If I ever have a question about anything computer related, this is the first place I go to. Without a doubt the most knowledgeable site out there. Excellent article and keep up the good work.

acku, quoting slicedtoad: "hacker groups like lulsec should do something useful and get this kind of internal data from major companies."

All of the data is so fragmented... I doubt that would help. You still need to take a fine-tooth comb to figure out how the numbers were calculated.

acku, quoting gpm23: "You guys do the most comprehensive research I have ever seen. If I ever have a question about anything computer related, this is the first place I go to. Without a doubt the most knowledgeable site out there. Excellent article and keep up the good work."

Thank you. I personally love these types of articles... very reminiscent of academia. :)

Cheers,

Andrew Ku

TomsHardware.com

K-zon: I will say that i didn't read the article word for word. But of it seems that when someone would change over from hard drive to SSD, those numbers might be of interest.

Of the sealed issue of return, if by the time you check that you had been using something different and something said something else different, what you bought that was different might not be of useful use of the same thing.

Otherwise just ideas of working with more are hard said for what not to be using that was used before. Yes?

But for alot of interest into it maybe is still that of rather for the performance is there anything of actual use of it, yes?

To say the smaller amounts of information lost to say for the use of SSDs if so, makes a difference as probably are found. But of Writing order in which i think they might work with at times given them the benefit of use for it. Since they seem to be faster. Or are.

Temperature doesn't seem to be much help for many things are times for some reason. For ideas of SSDs, finding probably ones that are of use that reduce the issues is hard from what was in use before.

When things get better for use of products is hard placed maybe.

But to say there are issues is speculative, yes? Especially me not reading the whole article.

But of investments and use of say "means" an idea of waste and less use for it, even if its on lesser note , is waste. In many senses to say of it though.

Otherwise some ideas, within computing may be better of use with the drives to say. Of what, who knows...

Otherwise again, it will be more of operation place of instances of use. Which i think will fall into order of access with storage, rather information is grouped or not grouped to say as well.

But still. they should be usually useful without too many issues, but still maybe ideas of timing without some places not used as much in some ways.

cangelini: To the contrary! We noticed that readers were looking to see OWC's drives in our round-ups. I made sure they were invited to our most recent 120 GB SF-2200-based story, and they chose not to participate (this after their rep jumped on the public forums to ask why OWC wasn't being covered; go figure).

They will continue to receive invites for our stories, and hopefully we can do more with OWC in the future!

Best,

Chris Angelini

ikyung: Once you go SSD, you can't go back. I jumped on the SSD wagon about a year ago and I just can't seem to go back to HDD computers.