Microsoft Posts In-Depth AMD EPYC Milan-X Benchmarks

AMD officially announced its latest EPYC Milan-X processors with 3D V-Cache, alongside other news such as the Instinct MI200 accelerators and a Zen 4 roadmap. The chipmaker didn't list specifications for the cache-stacked chips, but Microsoft has shared Milan-X benchmarks that show the performance uplift 3D V-Cache brings to the table.

Microsoft will tap Milan-X to power its new Azure HBv3-series VMs, which are based on a pair of EPYC 7V73X processors. Each processor offers up to 64 Zen 3 cores, for a total of 128 cores per server. However, eight cores per server are reserved for the Azure hypervisor and other orchestration tasks. As a result, Microsoft offers customers five configurations with different core counts: 120, 96, 64, 32, and 16 cores. The EPYC 7V73X has a peak clock speed of up to 3.5 GHz.

Milan-X features up to 768MB of L3 cache (standard L3 plus 3D V-Cache) per chip, so a dual-socket configuration delivers up to 1.5GB of L3 cache per system or, in Microsoft's case, per VM. Naturally, the L3 cache available to each core depends on the VM size. For example, the 16-core VM has access to 96MB per core, whereas the 32-core VM drops to 48MB per core. At any rate, Milan-X's L3 capacity represents a 3x upgrade over current Milan chips, or a 6x improvement over the previous Rome processors.

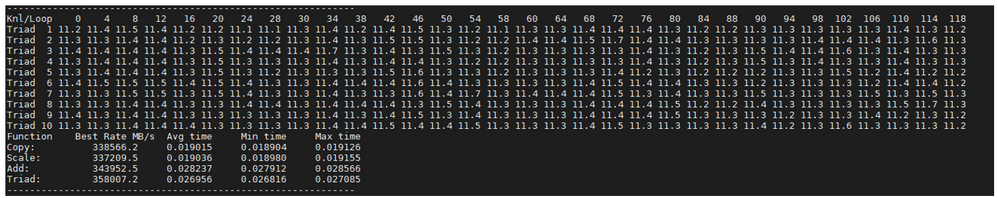

The rest of the Azure HBv3 hardware is unchanged. There's still 448GB of memory with 350 GBps of bandwidth (measured with STREAM TRIAD). In addition, two 960GB NVMe SSDs provide high-speed storage with read and write speeds of up to 6.9 GBps and 2.9 GBps, respectively, and a Mellanox ConnectX-6 NIC provides 200 Gbps HDR InfiniBand connectivity.

Microsoft Azure HBv3 Specifications

| VM Size | 120 CPU cores | 96 CPU cores | 64 CPU cores | 32 CPU cores | 16 CPU cores |
|---|---|---|---|---|---|
| VM Name | standard_HB120rs_v3 | standard_HB120-96rs_v3 | standard_HB120-64rs_v3 | standard_HB120-32rs_v3 | standard_HB120-16rs_v3 |
| InfiniBand | 200 Gb/s HDR | 200 Gb/s HDR | 200 Gb/s HDR | 200 Gb/s HDR | 200 Gb/s HDR |
| Peak CPU Frequency | 3.5 GHz | 3.5 GHz | 3.5 GHz | 3.5 GHz | 3.5 GHz |
| RAM per VM | 448 GB | 448 GB | 448 GB | 448 GB | 448 GB |
| RAM per core | 3.75 GB | 4.67 GB | 7 GB | 14 GB | 28 GB |
| Memory B/W per VM | 350 GB/s | 350 GB/s | 350 GB/s | 350 GB/s | 350 GB/s |
| Memory B/W per core | 2.91 GB/s | 3.65 GB/s | 5.46 GB/s | 10.9 GB/s | 21.9 GB/s |
| L3 Cache per VM | 1.5 GB | 1.5 GB | 1.5 GB | 1.5 GB | 1.5 GB |
| L3 Cache per core | 12.8 MB | 16 MB | 24 MB | 48 MB | 96 MB |
| SSD Perf per VM | 2 * 960GB NVMe - 6.9 GB/s (Read) / 2.9 GB/s (Write), 200K IOPS (Read) / 190K IOPS (Write) | 2 * 960GB NVMe - 6.9 GB/s (Read) / 2.9 GB/s (Write), 200K IOPS (Read) / 190K IOPS (Write) | 2 * 960GB NVMe - 6.9 GB/s (Read) / 2.9 GB/s (Write), 200K IOPS (Read) / 190K IOPS (Write) | 2 * 960GB NVMe - 6.9 GB/s (Read) / 2.9 GB/s (Write), 200K IOPS (Read) / 190K IOPS (Write) | 2 * 960GB NVMe - 6.9 GB/s (Read) / 2.9 GB/s (Write), 200K IOPS (Read) / 190K IOPS (Write) |
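The per-core rows in the table are simply the per-VM totals divided by each VM's core count. The minimal Python sketch below assumes 1.5 GB of L3 means 2 × 768 MB = 1,536 MB and uses the per-VM RAM and bandwidth figures listed above. It reproduces the per-core rows to within a few hundredths of a unit, since the table rounds some values.

```python
# Per-VM totals from the HBv3 specification table (identical for every VM size).
RAM_GB = 448
MEM_BW_GBPS = 350
L3_MB = 2 * 768  # two EPYC 7V73X sockets, 768 MB of L3 each -> 1,536 MB (1.5 GB)

CORE_COUNTS = [120, 96, 64, 32, 16]


def per_core(cores: int) -> dict:
    """Divide each per-VM resource evenly across the VM's cores."""
    return {
        "ram_gb": RAM_GB / cores,
        "mem_bw_gbps": MEM_BW_GBPS / cores,
        "l3_mb": L3_MB / cores,
    }


if __name__ == "__main__":
    for cores in CORE_COUNTS:
        r = per_core(cores)
        print(f"{cores:>3} cores: {r['ram_gb']:.2f} GB RAM, "
              f"{r['mem_bw_gbps']:.2f} GB/s, {r['l3_mb']:.1f} MB L3 per core")
```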

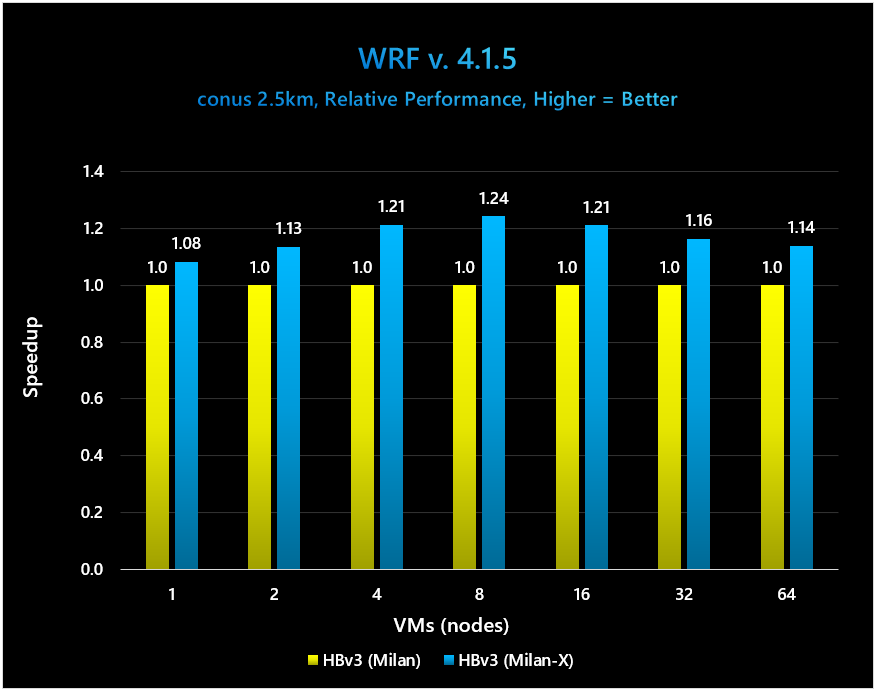

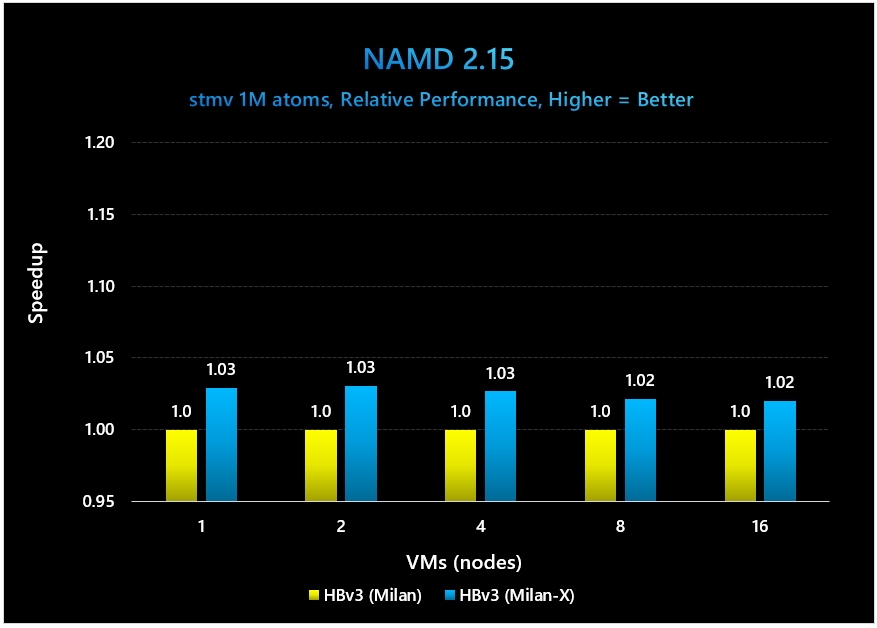

Microsoft noted that a large cache boosts effective memory bandwidth and lowers effective memory latency. Workloads such as computational fluid dynamics (CFD), explicit finite element analysis (FEA), weather simulation, and EDA RTL simulation will benefit from Milan-X's generous helping of L3 cache. Conversely, workloads that depend on peak FLOPS, clock speed, or memory capacity gain little from a large L3 cache. These include molecular dynamics, EDA full-chip design, EDA parasitic extraction, and implicit finite element analysis.

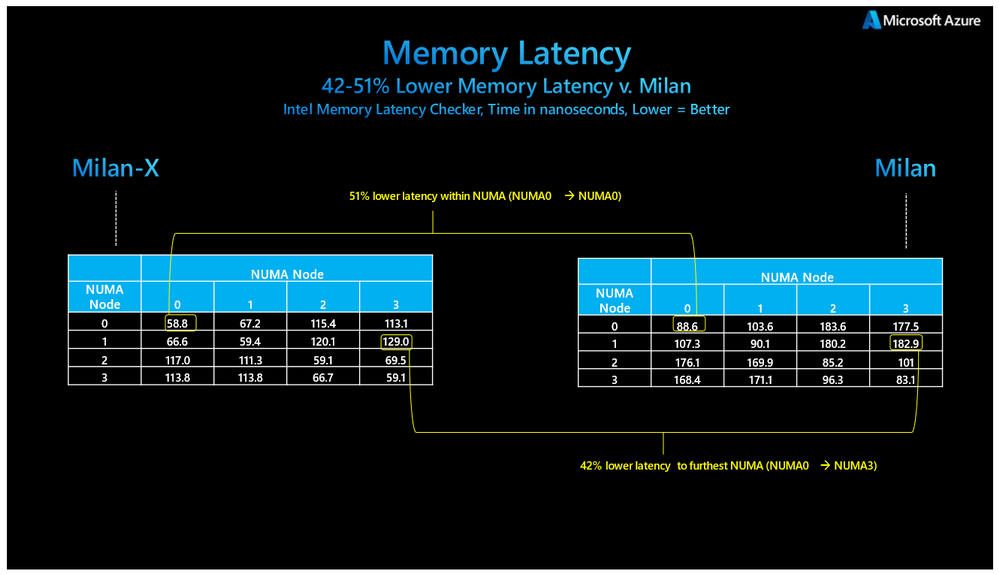

The results revealed that Milan-X (EPYC 7V73X) had between 42% and 50% lower memory latency than the current-gen Milan (EPYC 7V13). That is one of the biggest relative improvements in memory latency since memory controllers moved onto the processor. It's important to note, however, that Microsoft's results don't indicate that Milan-X has improved the latency of DRAM accesses themselves.

According to Microsoft, a larger cache raises the cache hit rate, so the effective latency that applications see becomes a blend of L3 and DRAM latencies that leans more heavily on the faster L3. Because of the way AMD stacks the additional L3 cache, the L3 latency distribution has widened. Nonetheless, Microsoft believes that Milan-X's L3 latency should be in the same ballpark as Milan's. In the worst case, Milan-X's L3 latency may be slightly higher.
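Microsoft's explanation amounts to a hit-rate-weighted average of L3 and DRAM latency. The Python sketch below models that average. The latency and hit-rate values are hypothetical placeholders chosen for illustration, not Microsoft's measurements. The sketch shows how raising the L3 hit rate lowers effective latency even though DRAM latency itself stays the same.

```python
def effective_latency_ns(hit_rate: float, l3_ns: float, dram_ns: float) -> float:
    """Average latency when a fraction `hit_rate` of accesses is served by L3
    and the rest miss to DRAM. `dram_ns` is the full latency of an L3 miss.
    This simple two-level model ignores L1/L2 and queuing effects."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * l3_ns + (1.0 - hit_rate) * dram_ns


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    L3_NS, DRAM_NS = 10.0, 100.0
    smaller_cache = effective_latency_ns(0.50, L3_NS, DRAM_NS)  # 55 ns
    larger_cache = effective_latency_ns(0.80, L3_NS, DRAM_NS)   # about 28 ns
    reduction = 1 - larger_cache / smaller_cache
    print(f"{smaller_cache:.0f} ns -> {larger_cache:.0f} ns "
          f"({reduction:.0%} lower), with DRAM latency unchanged")
```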


Memory bandwidth on Milan-X tells a similar story. Milan-X puts up around 358 GB/s of throughput in the STREAM TRIAD benchmark, which is essentially identical to a conventional dual-socket Milan server with DDR4-3200 memory in a one-DIMM-per-channel setup.
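For context, you can work out the theoretical peak DRAM bandwidth of such a system from its memory configuration. The minimal sketch below assumes eight 64-bit DDR4 channels per EPYC socket (the standard Milan configuration) and counts decimal gigabytes. Under those assumptions, a dual-socket DDR4-3200 system peaks at 409.6 GB/s, so a 358 GB/s STREAM TRIAD result corresponds to roughly 87% of peak.

```python
def peak_dram_bandwidth_gbps(mt_per_s: int, channels_per_socket: int,
                             sockets: int, bus_bytes: int = 8) -> float:
    """Theoretical peak: transfers/s x bytes per transfer x channels x sockets.
    A DDR4 channel is 64 bits (8 bytes) wide; the result is in decimal GB/s."""
    return mt_per_s * 1e6 * bus_bytes * channels_per_socket * sockets / 1e9


if __name__ == "__main__":
    peak = peak_dram_bandwidth_gbps(3200, channels_per_socket=8, sockets=2)
    measured = 358.0  # STREAM TRIAD result reported for Milan-X
    print(f"peak {peak:.1f} GB/s, STREAM TRIAD efficiency {measured / peak:.0%}")
```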

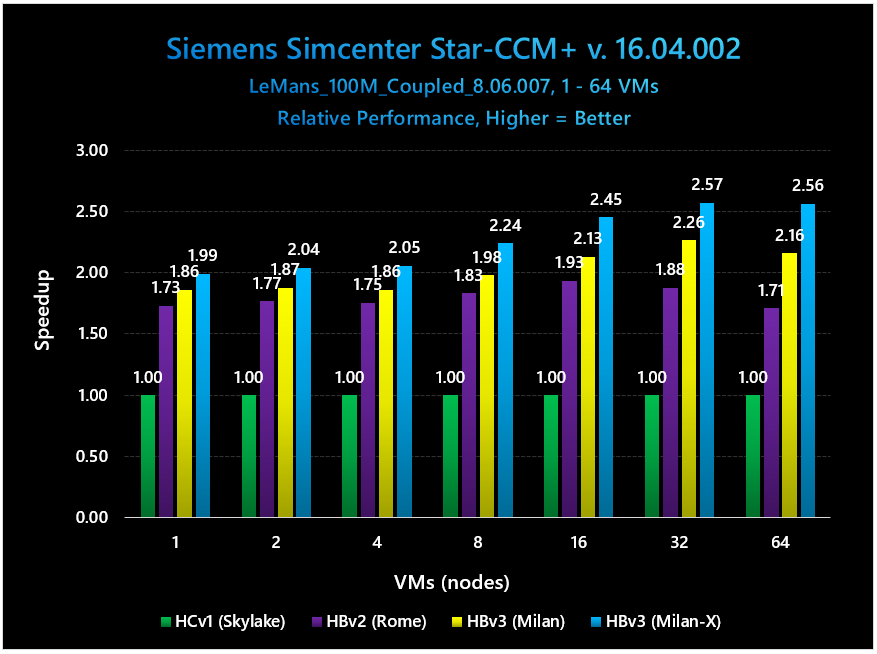

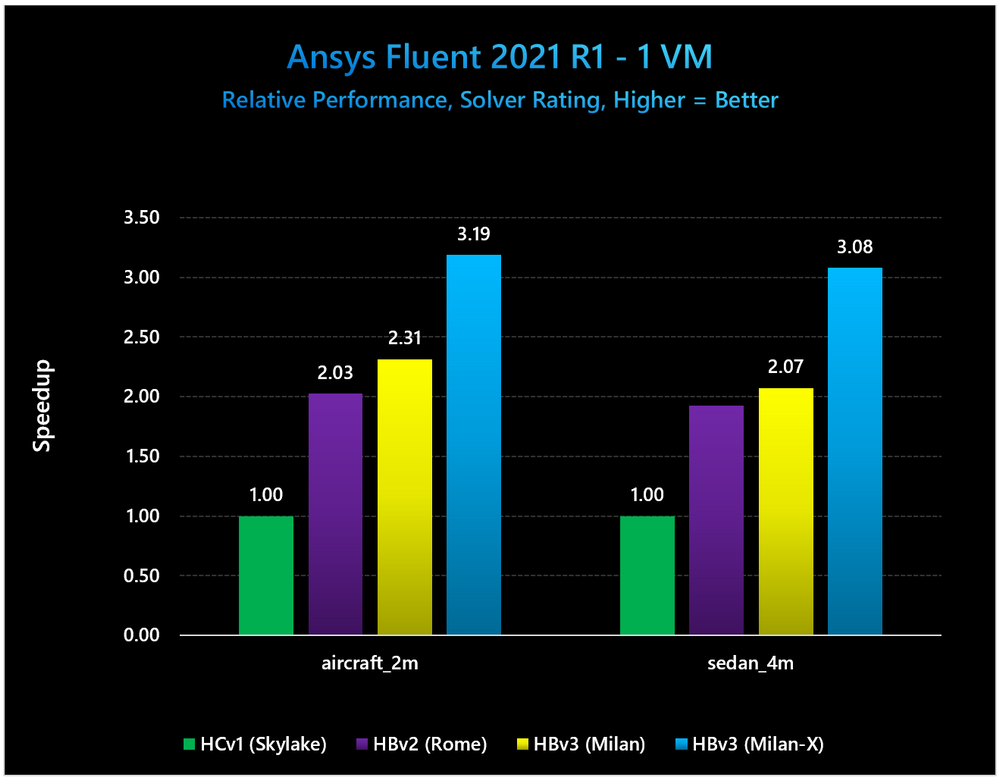

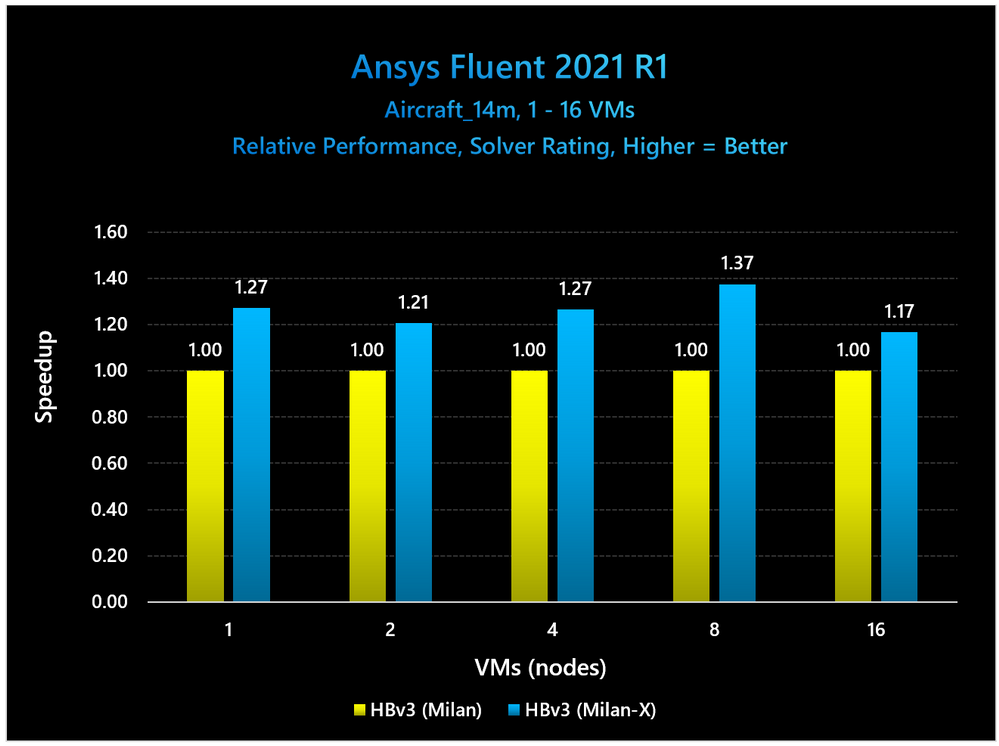

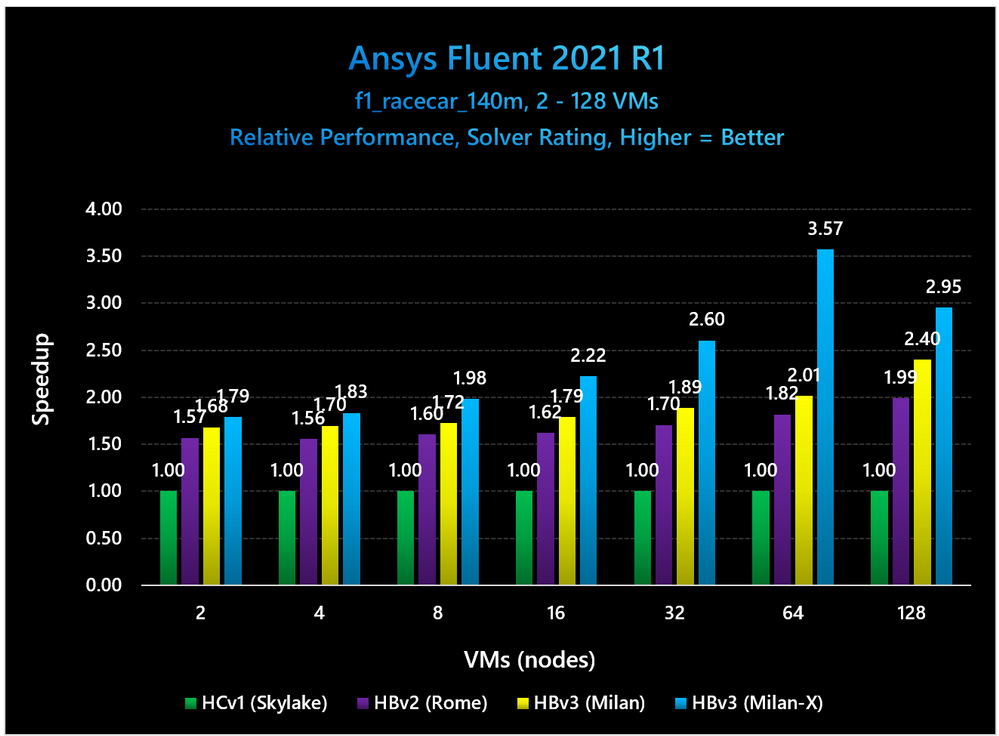

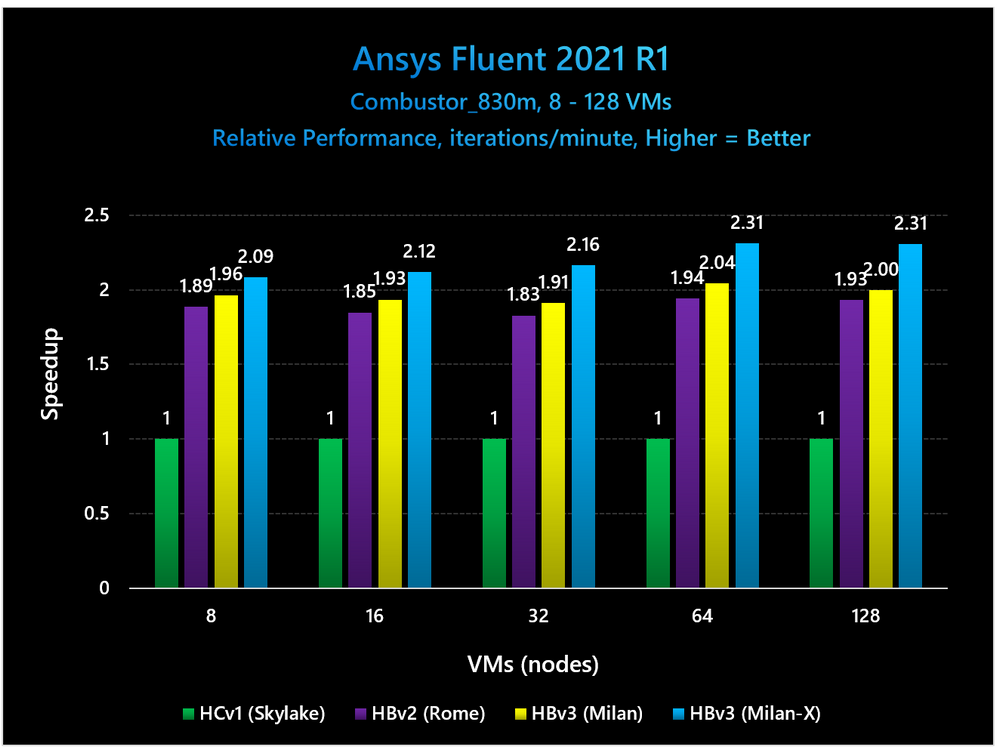

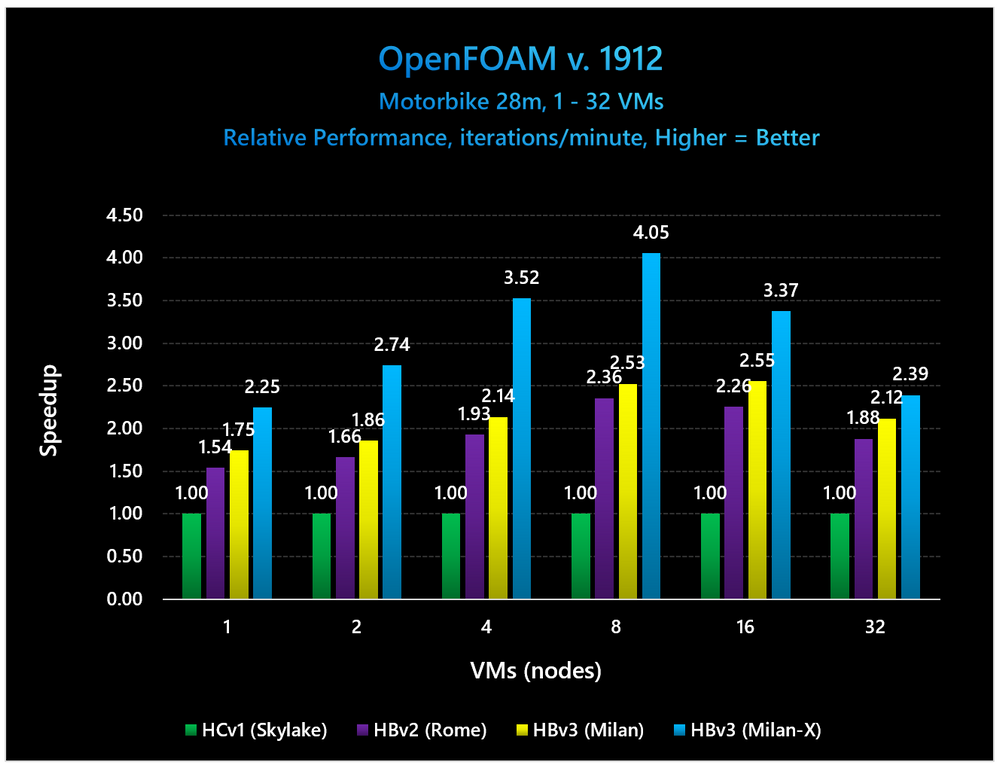

Microsoft put the EPYC 7V73X through its paces and compared the Milan-X chip against existing Azure VMs based on EPYC Milan (the original HBv3), EPYC Rome, and Xeon Platinum (Skylake) processors. Needless to say, Milan-X's performance is nothing short of impressive.

With 64 VMs, Milan-X delivered up to 77% higher performance than Milan and was up to 257% faster than Skylake with the f1_racecar_140 model in Ansys Fluent 2021 R1. With the combustor_830m model at 128 VMs, Milan-X posted 16% and 131% higher performance than Milan and Skylake, respectively.

In the OpenFOAM Motorbike benchmark, Milan-X was up to 60% faster than Milan and 305% faster than Skylake with 8 VMs. The trend was clear: Milan-X delivered double-digit gains over its predecessor and triple-digit gains over Skylake.
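Figures like "257% faster" are easier to compare once converted to speedup ratios (1 + percent/100). The sketch below assumes that convention. It also assumes that the two "up to" f1_racecar_140 figures at 64 VMs come from the same run. Under those assumptions, the numbers imply that the Milan-based VMs were themselves roughly 2x faster than Skylake on that model.

```python
def speedup_from_percent(percent_faster: float) -> float:
    """Convert an 'X% faster' claim into a throughput ratio (e.g. 77% -> 1.77x)."""
    return 1.0 + percent_faster / 100.0


def implied_ratio(a_over_c_pct: float, a_over_b_pct: float) -> float:
    """Given A vs C and A vs B as '% faster', return the implied B-vs-C ratio."""
    return speedup_from_percent(a_over_c_pct) / speedup_from_percent(a_over_b_pct)


if __name__ == "__main__":
    # Ansys Fluent f1_racecar_140 at 64 VMs, from the figures above.
    milanx_vs_skylake = speedup_from_percent(257)  # 3.57x
    milanx_vs_milan = speedup_from_percent(77)     # 1.77x
    milan_vs_skylake = implied_ratio(257, 77)      # about 2.02x
    print(f"Milan-X: {milanx_vs_skylake:.2f}x Skylake, {milanx_vs_milan:.2f}x Milan; "
          f"implied Milan vs Skylake: {milan_vs_skylake:.2f}x")
```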

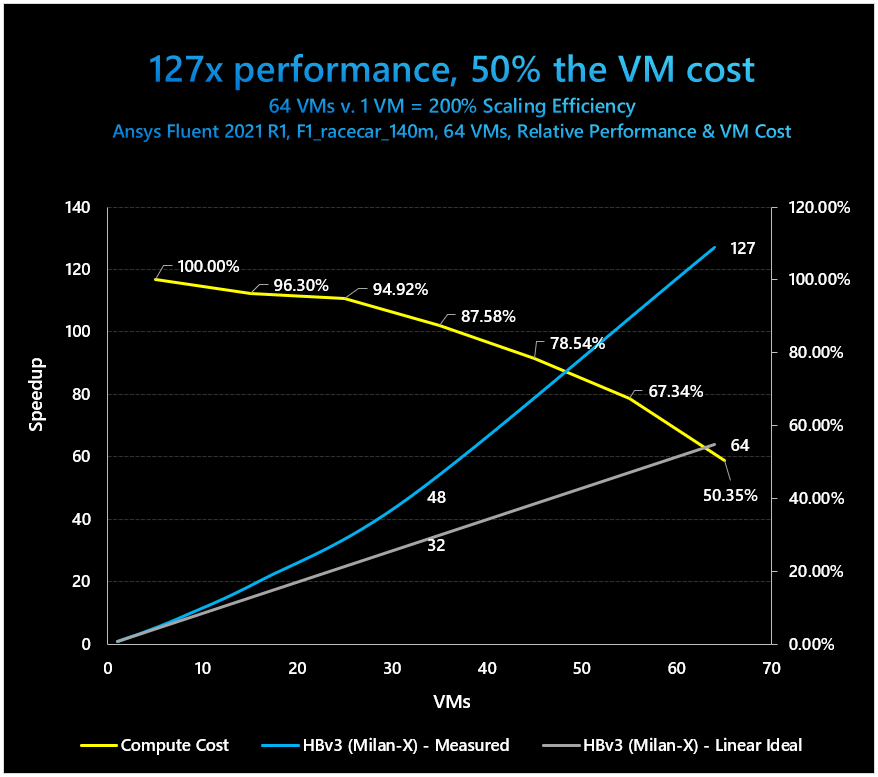

Thanks to AMD's 3D V-Cache, Milan-X's scaling efficiency was off the charts. Using the Ansys Fluent 2021 R1 benchmark with the f1_racecar_140 model as a point of reference, Milan-X demonstrated a scaling efficiency of up to roughly 200% when comparing 64 VMs to one VM. In other words, 64 HBv3 VMs with Milan-X finish the job about 127 times faster than a single HBv3 instance, nearly double the 64x speedup that linear scaling would deliver. Because each of the 64 VMs runs for only about 1/127 of the single-VM time, the job consumes roughly half the total VM hours. At the end of the day, customers benefit from a roughly 50% reduction in VM costs while getting a 127x faster time to solution.

Microsoft has always prided itself on offering its customers linear performance scaling. Linear efficiency, which is considered the gold standard in HPC, means that performance increases in proportion to cost relative to one VM (or to the minimum number of VMs needed to solve a problem). Because Milan-X scales better than linearly, Microsoft's customers can enjoy substantially faster solution times and lower VM costs.
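The scaling figures above follow from two ratios. Parallel efficiency is the speedup divided by the number of VMs. Relative cost is the number of VMs divided by the speedup, which compares total VM-hours with those of a single VM. The minimal sketch below assumes cost is proportional to VM-hours and uses the 127x speedup at 64 VMs quoted for f1_racecar_140.

```python
def scaling_efficiency(speedup: float, n_vms: int) -> float:
    """Parallel efficiency relative to one VM: 1.0 is linear, >1.0 superlinear."""
    return speedup / n_vms


def relative_cost(speedup: float, n_vms: int) -> float:
    """Total VM-hours versus a single VM, assuming cost scales with VM-hours.
    n_vms VMs each run for (1 / speedup) of the single-VM time."""
    return n_vms / speedup


if __name__ == "__main__":
    speedup, n_vms = 127.0, 64  # f1_racecar_140, 64 VMs vs 1 VM
    print(f"efficiency {scaling_efficiency(speedup, n_vms):.0%}, "
          f"cost {relative_cost(speedup, n_vms):.0%} of one VM")
```

With linear scaling (a 64x speedup on 64 VMs), both functions return 1.0. Any speedup beyond the VM count pushes efficiency above 100% and cost below that of a single VM.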

Zhiye Liu is a news editor, memory reviewer, and SSD tester at Tom’s Hardware. Although he loves everything that’s hardware, he has a soft spot for CPUs, GPUs, and RAM.

-

Alex/AT

popatim said: Makes me wonder how much lag is introduced if the cache needs to be flushed.

For 1P systems, these are very rare cases, like suspend/resume, where latency doesn't matter anyway.

It would probably be on the order of one to a few seconds for a completely dirty L3 cache (which is rare as well).

For xP SMP systems, cross-node memory access will prevent the need to flush L3 as well.

Memory shared with and accessible by devices is usually set up as write-through or uncached, and flushed manually or in ranges as required.

All in all, I don't see any reasonable use case where flushing the whole L3 is a necessity, or would be of any concern, actually :)

helper800

Just curious, what is your background with tech? Are you an engineer or a computer science guy?

Alex/AT

Well, I'm not a hardware person per se, meaning I'm not involved in any hardware development. I do understand how hardware works, though, on a logical level rather than an electrical one.

But I have 20+ years of ISP/TSP/CSP systems and services background, including PC and server building/assessment/experience, virtualization, Linux/OSS, kernel tuning, software development and debugging, heavy networking, heavy VoIP, SQL, bits of x86 and ARM assembly programming, etc. :)

So, in short, just a broad-range systems engineer.

waltc3

AMD will roll over everyone next year; that seems clear. It shows the benefit of having aggressive roadmaps over a multi-year period that you can actually keep! I don't see much daylight for Intel next year at this point.