Nvidia Drops Its First PCIe Gen 4 GPU, But It's Not for Gamers

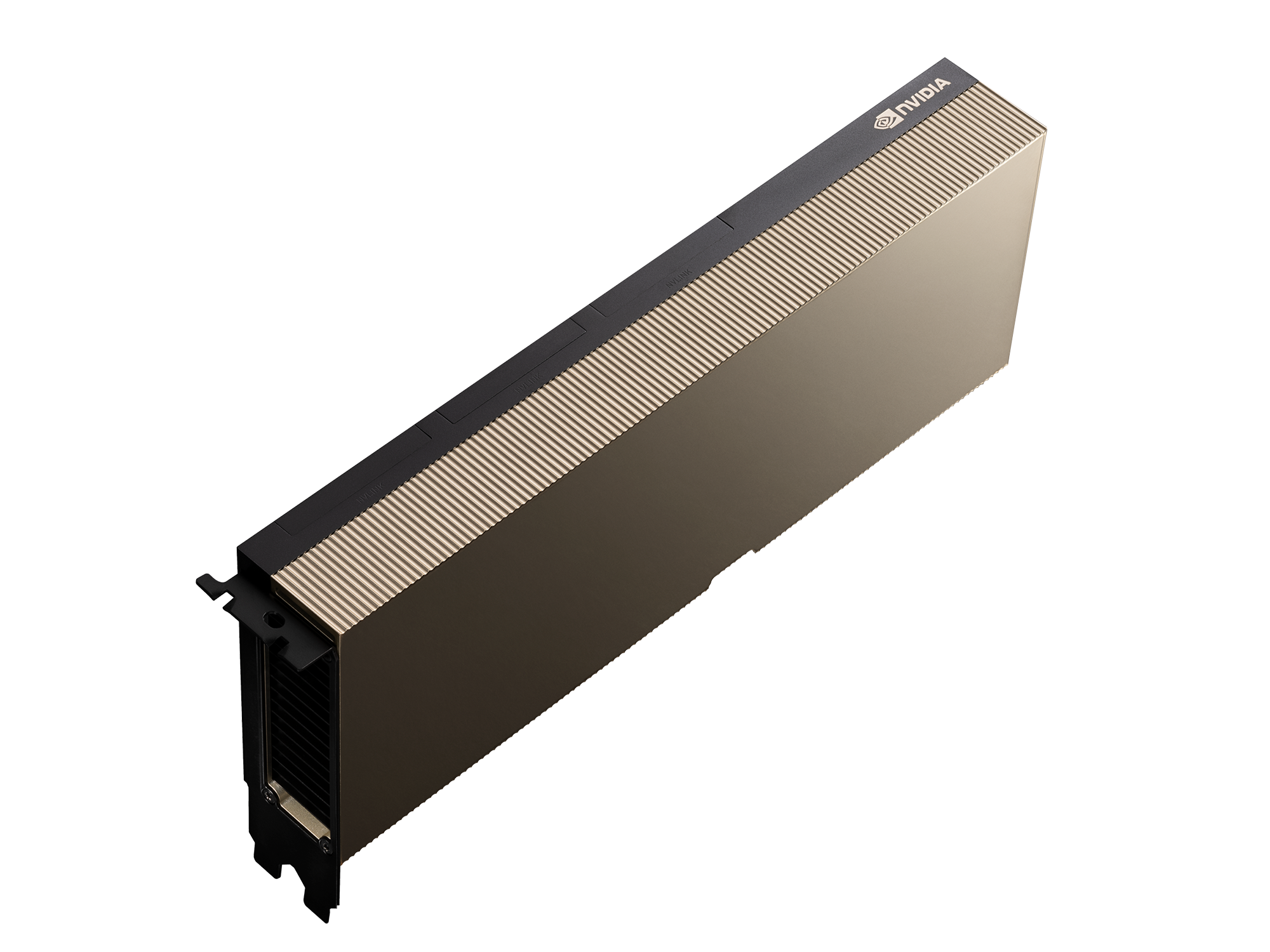

Meet the A100 in its PCI-Express 4.0 flavor

Nvidia announced its next-generation Ampere A100 GPU last month, but that was the SXM4 version of the card, aimed at scientific and data center applications. Now, Nvidia has announced the PCI-Express 4.0 variant of the data center GPU. It comes with the same GPU and memory, but that's where the similarities end: the two versions differ in connectivity and power.

Of course, the most notable difference is connectivity. The PCIe 4.0 interface makes this card much more flexible than the SXM variant, because it can be slotted into most PCs rather than only purpose-built systems. This makes it easy to upgrade existing server infrastructure or to install the card in workstations for scientific use. It also lets many system integrators easily build the A100 into their systems.

“Adoption of NVIDIA A100 GPUs into leading server manufacturers’ offerings is outpacing anything we’ve previously seen,” said Ian Buck, VP and GM of Accelerated Computing at NVIDIA. “The sheer breadth of NVIDIA A100 servers coming from our partners ensures that customers can choose the very best options to accelerate their data centers for high utilization and low total cost of ownership.”

But this flexibility comes at a cost. The PCIe 4.0 variant of the Ampere A100 has a significantly lower TDP of 250W, versus 400W for the SXM4 version. Nvidia says this leads to a 10% performance penalty in single-GPU workloads. In addition, multi-GPU scaling is limited to just two GPUs via NVLink, whereas NVSwitch supports up to eight GPUs. For distributed workloads, Nvidia says the performance penalty (looking at eight GPUs) is up to 33%.

Judging by the 10% performance difference for a single GPU, it appears that much of the SXM variant's additional TDP goes to inter-GPU communication. The 400W figure is also a maximum power limit, so the GPU may not actually draw that much in some workloads. Note that power delivery is also much easier with SXM, as the power comes through the mezzanine connector. The PCIe models, by contrast, get only up to 75W from the x16 slot, with additional power coming via 8-pin or 6-pin PEG connectors.
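The power-delivery point above comes down to simple addition. The sketch below tallies the nominal budget for a card fed by its x16 slot plus auxiliary PEG connectors. The 75W (6-pin) and 150W (8-pin) connector ratings are assumed standard PCIe nominal values, not figures from Nvidia, and the connector layouts tried here are hypothetical; the article does not say which connectors the A100 PCIe actually uses.

```python
# Assumed nominal PCIe power limits (not from the article):
# x16 graphics slot 75 W, 6-pin PEG connector 75 W, 8-pin PEG connector 150 W.
SLOT_X16_W = 75
PEG_CONNECTOR_W = {"6-pin": 75, "8-pin": 150}


def board_power_budget_w(connectors: list[str]) -> int:
    """Nominal power available to a card: slot power plus each aux connector."""
    return SLOT_X16_W + sum(PEG_CONNECTOR_W[c] for c in connectors)


def can_feed(tdp_w: int, connectors: list[str]) -> bool:
    """True if the nominal budget is at least the given TDP."""
    return board_power_budget_w(connectors) >= tdp_w


# Hypothetical connector layouts, checked against the 250 W TDP quoted above.
for layout in ([], ["6-pin"], ["8-pin"], ["8-pin", "6-pin"]):
    budget = board_power_budget_w(layout)
    print(f"slot + {layout or 'nothing'}: {budget} W, covers 250 W: {can_feed(250, layout)}")
```

Under these nominal PEG ratings, the slot alone (75W) or the slot plus one 8-pin (225W) would fall short of 250W, which is why the auxiliary connectors matter on the PCIe card.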

Power and TDP aside, the two cards are nearly identical. Both use the same Ampere GPU with 6912 CUDA cores, which measures an impressive 826 mm² despite being manufactured on a 7nm process. The GPU is also still flanked by six HBM2 stacks that offer a whopping 40 GB of ultra-fast memory.

How Long Do Gamers Have to Wait?

For the gamers among us, we'll have to wait a little longer for Nvidia's PCI-Express 4.0 goodness. We do expect the consumer Ampere graphics cards to support the new connectivity standard, but don't expect to see Ampere GeForce RTX 3000 cards until around September. Given that leaks are rolling in faster than we can write about them, we'll surely be seeing new GPUs by the end of the year.


Niels Broekhuijsen is a Contributing Writer for Tom's Hardware US. He reviews cases, water cooling and PC builds.


Phaaze88

Gen 4 only benefits multi-GPU setups anyway, as far as GPUs are concerned. Gen 3 is still fine, except if one has a 2080Ti or better in SLI.

Deicidium369

Phaaze88 said: "Gen 4 only benefits multi-GPU setups anyway, as far as GPUs are concerned. Gen 3 is still fine, except if one has a 2080Ti or better in SLI."

I run dual 2080Tis NVLinked together - not sure even the 2080Ti is using all 16 PCIe 3.0 lanes.

Deicidium369

chalabam said: "But can it play Crysis?"

NVSwitch supports up to 16 GPUs in the DGX. HGX is limited to 8.

Phaaze88

Deicidium369 said: "I run dual 2080Tis NVLinked together - not sure even the 2080Ti is using all 16 PCIe 3.0 lanes."

Are you running an i9 on X299 or Threadripper?

If neither, then those 2080Tis are running in x8 mode. PCIe 3.0 x8 has a theoretical max bandwidth of 7880MB/s.

The 2080Ti just manages to exceed that limit. Albeit minor, there is some performance loss.

The reason for my initial question is that those 2 platforms can run SLI in x16/x16 mode.

Just for reference:

https://www.techpowerup.com/review/nvidia-geforce-rtx-2080-ti-pci-express-scaling

https://www.gamersnexus.net/guides/3366-nvlink-benchmark-rtx-2080-ti-pcie-bandwidth-x16-vs-x8
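The 7880MB/s figure quoted above for PCIe 3.0 x8 can be reproduced from the per-lane transfer rate. The sketch below assumes PCIe 3.0 runs at 8 GT/s and PCIe 4.0 at 16 GT/s per lane, both with 128b/130b line encoding, and reports theoretical one-direction bandwidth before packet and protocol overhead, so real-world throughput is lower. The function name is illustrative only.

```python
# Assumed per-lane transfer rates; both generations use 128b/130b encoding.
TRANSFER_RATE_GT_S = {3: 8.0, 4: 16.0}


def pcie_bandwidth_mb_s(gen: int, lanes: int) -> float:
    """Theoretical one-direction bandwidth in MB/s (1 MB = 10**6 bytes)."""
    bits_per_s = TRANSFER_RATE_GT_S[gen] * 1e9  # one bit per transfer per lane
    payload_bits = bits_per_s * 128 / 130       # remove 128b/130b encoding overhead
    return payload_bits * lanes / 8 / 1e6       # all lanes, bits -> bytes -> MB


for gen, lanes in [(3, 8), (3, 16), (4, 8), (4, 16)]:
    print(f"PCIe {gen}.0 x{lanes}: {pcie_bandwidth_mb_s(gen, lanes):,.0f} MB/s")
```

For PCIe 3.0 x8 this gives about 7,877 MB/s, matching the ~7880MB/s quoted. Doubling either the lane count or the generation doubles the figure, which is why a PCIe 4.0 x8 link offers the same theoretical bandwidth as PCIe 3.0 x16.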

Deicidium369

Phaaze88 said: "Are you running an i9 on X299 or Threadripper? If neither, then those 2080Tis are running in x8 mode. [...]"

Doesn't matter - the NVLink is key to dual 2080Ti performance. Current iteration is a Gigabyte Z390, i9-9900K at 5GHz, 32 GB DDR4-4000 (4133 XMP, never was able to get the full 4133), Samsung M.2 NVMe, Acer 4K 120/144Hz G-Sync, NH-D15 SSO, Seasonic 1000W Prime Ti.

I have the same Gigabyte 1080Ti in my old system - wasn't the VG248QE the first monitor with hardware G-Sync? I had one back in that era - it got destroyed in a move. I have a SteelSeries mouse - a Sensei from 5 or 6 years ago, and it will not die. I've been through several Razers and Logitechs, and the Sensei keeps truckin'... I haven't run a sound card in ages.

On Gamers Nexus, the difference is minimal - I'm pretty happy with the performance I get. It will be placed next to the 8700K with their dual 1080Tis soon enough. I tend to turn shadows down a bit - I drop them until I notice a difference, then go back up. Not looking for any records. Other than the minor tweak for 5GHz on all cores, I do not overclock - even the Gigabyte OC Windforce 2080s are just at Gigabyte's OC. The only metric I care about is that it does what I want it to do. With dual 1080s I wanted at least 45fps at 4K, and with dual 2080Tis I wanted something north of 100fps.

Phaaze88

Deicidium369 said: "Doesn't matter - the NVLink is key to dual 2080Ti performance. [...]"

Ok, but you weren't sure if the 2080Ti uses all 16 lanes of PCIe 3.0. I went in a rather roundabout way of answering that with a "Well no (x16), but actually yes (x8)."

Sorry if I said more than necessary.

chalabam

Deicidium369 said: "NVSwitch supports up to 16 GPUs in the DGX. HGX is limited to 8."

It was an AI joke.

Deicidium369

chalabam said: "It was an AI joke"

Looking back, I may have responded to the wrong message - I get the Crysis comment, it's an extremely old quip.