Killer Wireless-N 1103 Review: Can Qualcomm Take On Centrino?

What And How We Tested

Here are the specifications for the aforementioned Dell/Alienware systems submitted by Qualcomm. Note that the memory and hard drive options are lower-end than Dell's original spec. However, in the context of these networking performance tests, we see no cause for concern.

| Model | M17x-R3 |
|---|---|
| Processor | Intel Core i7-2630QM @ 2.00 GHz |
| Chipset | HM67 (Sandy Bridge) |
| Memory | 8 GB Dual-Channel DDR3-1333 |
| Integrated Graphics | Mobile Intel HD Graphics |
| Discrete Graphics | AMD Radeon HD 6870M |
| Storage | Western Digital 320 GB WD3200BEKT |
| WLAN 1 | Intel Centrino Ultimate-N 6300 (633ANHMW) |
| WLAN 2 | Killer Wireless-N 1103 |
| Operating System | Microsoft Windows 7 |

We also grabbed a Linksys AE2500 Dual-Band Wireless-N USB adapter. We used this on the notebook equipped with Intel's Centrino Ultimate-N 6300, disabling the internal wireless adapter whenever the USB-based controller was inserted. Our supposition going in was that the USB adapter would yield poorer performance than both internally-mounted contestants since the Qualcomm and Intel devices had the benefit of larger antennas running around the notebooks’ screens. But you never know. Linksys' offering might yield some surprises.

The specifics of our server system are fairly irrelevant, as the network connection is easily the main bottleneck. Suffice it to say that we used a 3.4 GHz Core i7-2600K on an Intel DP67BG motherboard (including a gigabit Ethernet port) with 8 GB of Corsair Vengeance DDR3-1600 and a 240 GB Patriot Wildfire SSD loaded with Windows 7 Professional 64-bit.

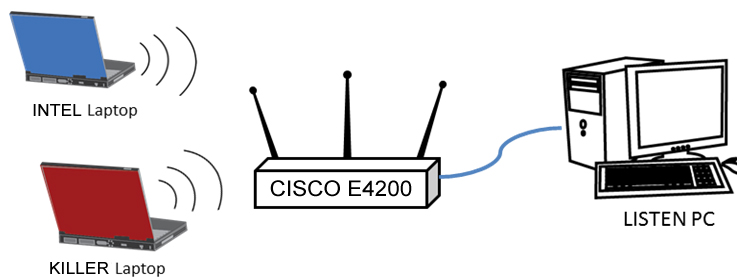

We then made a direct Ethernet connection between the server and a Cisco Linksys E4200 router. We chose this router primarily based on our own positive experiences with the E-series in prior networking stories, but we also heard from Qualcomm that it has run tests with the same unit in its own facilities. Simply, our arrangement resembled this:

With this configuration, we ran through three different tests in three locations within the author’s home.

Location 1: Ten feet separated the router and clients with direct line of sight between them. This was across opposite sides of the same room. Note that this room also had a set of active Logitech wireless speakers, as well as an unassociated Actiontec 802.11b/g router, just to make the environment good and noisy. There were also six to eight other WLANs detected by the clients at any given time. We’re not interested in how the adapters work under ideal conditions, only in real life.

Location 2: Twenty feet separated the router and clients, with one wall between them. We moved the notebooks into an adjacent room.


Location 3: Approximately sixty feet separated the router and clients. The router was located in one upstairs corner of the house while the notebooks were in the home’s opposite downstairs corner. This location is known for its sub-par reception and represents a sort of worst-case space within the house.

Our three benchmarks were:

File transfer tests: We used two file sets here. The first was simply a single 2 GB ZIP archive. The second was a folder containing several hundred data files and documents totaling 200 MB. The purpose of the first was to ascertain a sustained throughput in order to even out any fleeting environmental RF fluctuations, while the second aimed to give a better look at the overhead impact from having to pass many files rather than only one.
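For readers who want to turn their own timed file copies into a comparable figure, here is a minimal sketch of the arithmetic. It assumes decimal units (1 GB = 1,000,000,000 bytes) and a stopwatch-style elapsed time. The function name and the sample timing are illustrative assumptions, not measurements from this review.

```python
def throughput_mbps(total_bytes: int, elapsed_seconds: float) -> float:
    """Return average throughput in megabits per second (decimal units)."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    # bytes -> bits, divide by time, then scale to megabits
    return total_bytes * 8 / elapsed_seconds / 1_000_000


# Hypothetical timing, for illustration only: a 2 GB archive copied in 160 s.
example = throughput_mbps(2_000_000_000, 160.0)
print(f"{example:.1f} Mb/s")
```

Applying the same function to the 200 MB folder gives a directly comparable number, so any gap between the two results reflects per-file overhead rather than raw link speed.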

PassMark PerformanceTest 7: While many people have yet to try out this thorough benchmarking suite, it’s quickly becoming one of our favorite testing Swiss Army knives. Specifically, we used the suite’s Advanced Network Test.

We ran each PerformanceTest run for 180 seconds, examining both TCP and UDP throughput. Moreover, we tested each case with both 4 KB and 16 KB block sizes to better assess the impact of varying data sizes on network performance.
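To make the block-size idea concrete, the sketch below pushes fixed-size blocks over a TCP connection and reports the rate received. This is not how PerformanceTest works internally. It is a simplified stand-in that runs over the loopback interface with a short default duration, and the function name and parameters are our own assumptions.

```python
import socket
import threading
import time


def measure_tcp_throughput(block_size: int, duration: float = 1.0) -> float:
    """Send fixed-size blocks over loopback TCP; return Mb/s received."""
    server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    server.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server.listen(1)
    port = server.getsockname()[1]
    received = [0]  # mutable counter shared with the receiver thread

    def sink() -> None:
        conn, _ = server.accept()
        with conn:
            while True:
                chunk = conn.recv(65536)
                if not chunk:  # sender closed the connection
                    break
                received[0] += len(chunk)

    receiver = threading.Thread(target=sink)
    receiver.start()

    payload = b"\x00" * block_size
    start = time.perf_counter()
    with socket.create_connection(("127.0.0.1", port)) as client:
        while time.perf_counter() - start < duration:
            client.sendall(payload)
    receiver.join()  # wait until every byte has been counted
    elapsed = time.perf_counter() - start
    server.close()
    return received[0] * 8 / elapsed / 1_000_000


if __name__ == "__main__":
    for size in (4 * 1024, 16 * 1024):  # the two block sizes named above
        print(f"{size // 1024} KB blocks: {measure_tcp_throughput(size):.0f} Mb/s")
```

Pointing the client at a second machine instead of 127.0.0.1 would exercise a real wireless link, though the receiver would then need to run on that machine.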

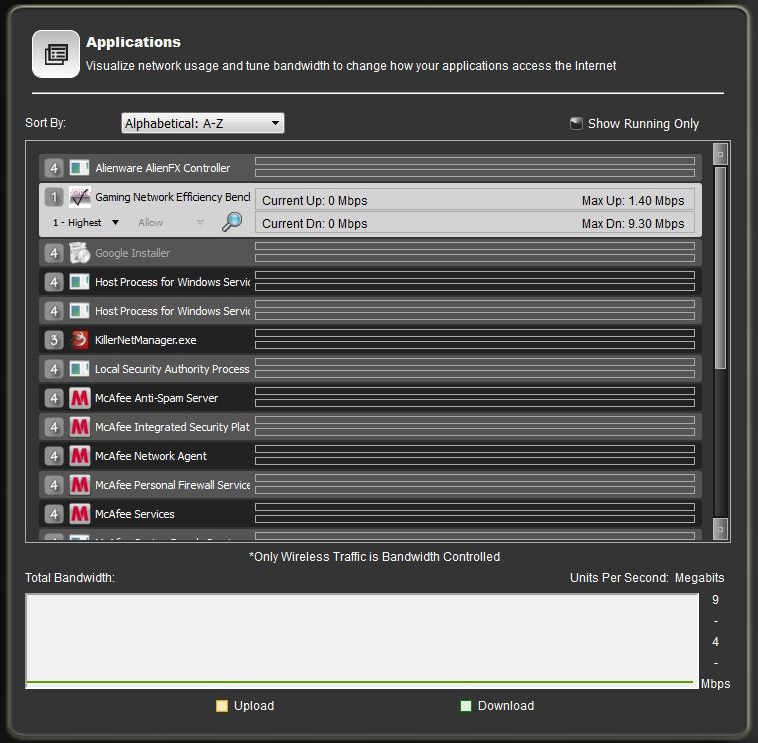

Gaming Network Efficiency (GaNE): This benchmark was both designed and supplied by Qualcomm/Bigfoot Networks as a quick but effective way to test ping times and jitter. We were especially drawn to its easy graphing capabilities. As usual with vendor-supplied software, we took a hard look at this tool, wanting to make sure it wasn’t playing favorites. After considerable hours of testing, we’re confident that GaNE is above board, in part because our early tests showed the Qualcomm adapter underperforming, suffering massive latencies. As happens all too often when we communicate with a vendor during the testing process, Qualcomm followed up with a driver update that greatly reduced these problems...and forced us to start our testing from scratch.

Speaking of drivers, know that we set the aforementioned Killer Network Manager app to give GaNE the “highest” priority. We debated about this, feeling that this might be giving an unfair advantage. Finally, we decided that the Killer software is just as much a part of the product platform as the hardware, and there’s nothing keeping its competitors from offering their own software optimizations. So, we let it run.
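We don't know exactly how GaNE derives its ping and jitter figures, but the underlying ideas are simple to sketch. The example below assumes one common definition of jitter, the mean absolute difference between consecutive round-trip times. The function name and sample values are hypothetical.

```python
from statistics import mean


def summarize_latency(samples_ms: list[float]) -> dict[str, float]:
    """Summarize round-trip times: average, worst case, and jitter."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples to compute jitter")
    # Jitter here is the mean absolute change between consecutive samples
    # (an assumed definition; GaNE may calculate it differently).
    deltas = [abs(b - a) for a, b in zip(samples_ms, samples_ms[1:])]
    return {
        "mean_ms": mean(samples_ms),
        "max_ms": max(samples_ms),
        "jitter_ms": mean(deltas),
    }


# Made-up ping samples in milliseconds, for illustration only.
print(summarize_latency([10.0, 12.0, 10.0, 12.0]))
```

A connection can post a low average ping and still feel rough in games if the jitter figure is high, which is why both numbers are worth tracking.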


phamhlam I wish they would build better PCI-Express WiFi Adapter. Some of us can't have a cable going through our house or have our computer sit next to the router.

KelvinTy I think if you have the lowest latency at your end and leave everything on the server and internet end. Then it would be a lot better, especially there is input lag from everything, monitor, mouse, keyboard, wireless card, router and internet...

reghir There are 2 versions of the E4200 did you use version 1 or 2 as version 2 increases to 450Mbps on both bands and full spatial on its 3X3 streams?

MKBL I hope TH will review on powerline Ethernet adapter against typical RJ45 and wifi. For the same reason as phamhlam, my desktop is connected to router by a long cable running across floor, which bothers me and my family sometimes. I've been considering powerline ethernet, but I can't make decision between that and wireless-N, because I have no idea which one has better performance/price.

CaedenV Great article! I learned quite a few things from it.

I still think I will be waiting for 802.11ac before upgrading from G though.

jaylimo84 M. Van Winkle,

Thanks for this nice article.

I own an Alienware M17xR3, with the Killer 1103.

Upon installation, the driver was causing me issues (nothing big tho), and I decided to follow a forum recommendation and install the Atheros Osprey driver instead of Killer's.

It seems the two card are identical apart from the name on it. (Maybe I am misleaded)

It could be interesting to see if the Killer 1103 gets any improvement using the Killer driver vs. the vanilla Atheros drivers, and see if "years of working with the windows tcp stack" pays off. Or if your performance improvement is due to a good, but still normal card.

CaedenV (in reply to MKBL) Indeed, it is an issue. I ended up wiring the house through the HVAC ducts, which is a terrible idea (breaks all sorts of building codes), but better than drilling holes all throughout the house only to move to wireless within the next 5-10 years.

XmortisX I would like to try this out. If they can make a good pci-e/pci version of this card then definitely would try to push it with my clients. Even though we may get more labor hours for running wires the convenience and idea of avoiding HVAC ducts building codes makes this appealing.