Intel Core i7 (Nehalem): Architecture By AMD?

The Return Of Hyper-Threading

So, the front end hasn’t been profoundly overhauled, and neither has the back end. The back end has exactly the same execution units as the most recent Core processors, but here again the engineers have worked on using them more efficiently.

With Nehalem, Hyper-Threading makes its great comeback. Introduced with the Northwood version of Intel’s NetBurst architecture, Hyper-Threading—also known outside the world of Intel as Simultaneous Multi-Threading (SMT)—is a means of exploiting thread parallelism to improve the use of a core’s execution units, making the core appear to be two cores at the application level.
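The "appears to be two cores" effect can be observed from software. The following is a minimal sketch, assuming a Python interpreter is available; the helper name `logical_cpu_count` is hypothetical. It only reports the number of logical processors the operating system exposes. That count includes SMT threads, so a four-core processor with SMT enabled would typically be reported as eight logical processors.

```python
import os


def logical_cpu_count() -> int:
    """Return the number of logical processors the OS reports (at least 1)."""
    # os.cpu_count() may return None when the count cannot be determined,
    # so fall back to 1 in that case.
    return os.cpu_count() or 1


if __name__ == "__main__":
    print(f"Logical processors visible to this program: {logical_cpu_count()}")
```

Note that this value alone does not distinguish physical cores from SMT threads; that requires platform-specific queries not shown here.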

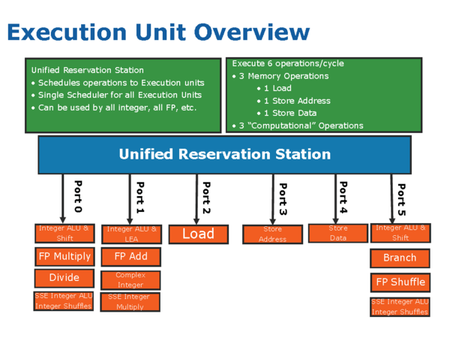

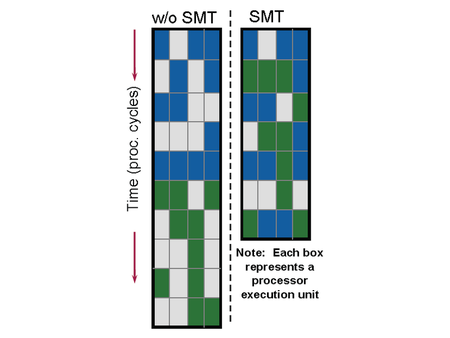

To run two threads in parallel, certain resources, such as registers, must be duplicated. Other resources are shared by the two threads, including the out-of-order execution logic (the instruction reorder buffer and the execution units) and cache memory.

A simple observation led to the introduction of SMT: the “wider” (more execution units) and “deeper” (more pipeline stages) processors become, the harder it is to extract enough parallelism to keep all the execution units busy in every cycle. Where the Pentium 4 was very deep, with a pipeline of more than 20 stages, Nehalem is very wide. It has six execution units, capable of executing three memory operations and three calculation operations per cycle. If the execution engine can’t find enough instruction-level parallelism to take advantage of them all, “bubbles” (lost cycles) occur in the pipeline.

To remedy that situation, SMT looks for instruction parallelism in two threads instead of just one, with the goal of leaving as few units idle as possible. This approach can be extremely effective when the two threads are executing tasks that stress different resources. On the other hand, two threads that both perform intensive calculation, for example, will only increase the pressure on the same calculation units and compete with each other for access to the cache. SMT offers no benefit in this type of situation, and can even hurt performance.
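The trade-off can be illustrated with a deliberately simplified toy model. It is not a description of Nehalem's actual scheduler. The sketch below assumes three memory ports and three calculation ports per cycle, as stated in the text. The workload profiles (`mem_heavy`, `alu_heavy`) and function names are hypothetical, and operations that cannot issue in a cycle are simply dropped rather than queued.

```python
MEM_PORTS = 3  # memory operations per cycle, as described in the text
ALU_PORTS = 3  # calculation operations per cycle, as described in the text


def issued_in_cycle(ready):
    """Count operations issued in one cycle.

    ready: list of (mem_ready, alu_ready) pairs, one pair per hardware thread.
    Each class of operation is capped by the number of ports of that class.
    """
    mem = min(MEM_PORTS, sum(m for m, _ in ready))
    alu = min(ALU_PORTS, sum(a for _, a in ready))
    return mem + alu


def utilization(*threads):
    """Fraction of issue slots used when the given threads share one core."""
    cycles = len(threads[0])
    issued = sum(issued_in_cycle([t[c] for t in threads]) for c in range(cycles))
    return issued / (cycles * (MEM_PORTS + ALU_PORTS))


# Hypothetical workloads: operations each thread has ready in every cycle.
mem_heavy = [(3, 0)] * 4  # only memory operations
alu_heavy = [(0, 3)] * 4  # only calculation operations

if __name__ == "__main__":
    print(utilization(mem_heavy))             # one thread alone: 0.5
    print(utilization(mem_heavy, alu_heavy))  # complementary threads: 1.0
    print(utilization(alu_heavy, alu_heavy))  # competing threads: 0.5
```

In this model, a single thread leaves half the ports idle, two complementary threads fill every slot, and two calculation-heavy threads gain nothing overall because they contend for the same three ports.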


cl_spdhax1: Good write-up, can't wait for the new architecture. Plus the "older" chips are going to become cheaper/affordable. Big plus.

neiroatopelcc: No explanation as to why you can't use performance modules with higher voltage, though.

neiroatopelcc, quoting AuDioFreaK39 ("TomsHardware is just now getting around to posting this? Not to mention it being almost a direct copy/paste from other articles I've seen written about Nehalem architecture."): I regard being late as a quality seal, really. No point being first if your info is only as credible as stuff on the Inquirer. Better to be last, but be sure what you write is correct.

cangelini, replying to the same AuDioFreaK39 comment: Perhaps, if you count being translated from French.

randomizer: Yeah, 13 pages is quite a lot to translate. You could always use Google translation if you want it done fast :kaola:

Duncan NZ: Speaking of French... That link on page 3 goes to a French article that I found fascinating. It would be even better if there was an English version though, because then I could actually read it. Any chance of that? Nice article, good depth, well written.

neiroatopelcc, quoting randomizer ("Yea, 13 pages is quite alot to translate. You could always use google translation if you want it done fast"): I don't know French, so no idea if it actually works. But I've tried from English to German and Danish, and vice versa. Also tried from Danish to German, and the result is always the same: it's incomplete, and anything that is slightly technical in nature won't be translated properly. In short, if you want it done right, do it yourself.

neiroatopelcc: I don't think cangelini meant to say that no other English articles on the subject exist. You claimed the article on Tom's was a copy/paste from another article. He merely stated that the article here was based on a French version.

enewmen: Good article. I actually read the whole thing. I just don't get TLP when RAM is cheap and the Nehalem/Vista can address 128 gigs. Anyway, things have changed a lot since running Win NT with 16 megs of RAM and constant memory swapping.

cangelini: I can't speak for the author, but I imagine neiro's guess is fairly accurate. Written in French, translated to English, and then edited. I'm fairly confident they're different stories ;)