Intel Core i7 (Nehalem): Architecture By AMD?

Introduction

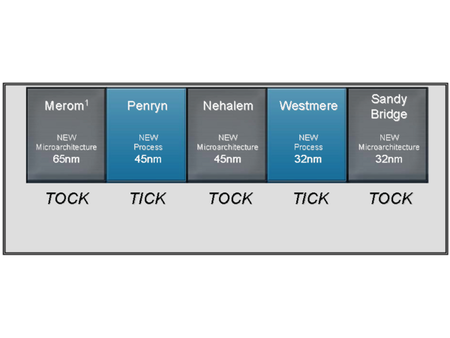

Two years ago, Intel pulled off a coup with the introduction of its Conroe architecture, which surfaced as the Core 2 Duo and Core 2 Quad. With this move, the company won back the performance crown after losing a bit of favor in the debacle that was its Pentium 4 "Prescott" design. At that time, Intel announced an ambitious plan to return to evolving its processor architectures at a rapid pace, as it had done in the mid-1990s. The first phase of the plan was the release of a “refresh” of the architecture 12 months after its introduction, to take advantage of progress in fabrication processes. That was done with Penryn. Then a whole new architecture, code-named Nehalem, was set to arrive 24 months after Conroe. That new architecture is the subject of this article.

The Conroe architecture offered first-rate performance and very reasonable power consumption, but it was far from perfect. Admittedly, the conditions under which it was developed weren’t ideal. When Intel realized its Pentium 4 was a dead end, it had to reinvent an architecture in a hurry, something that’s far from easy for a company the size of Intel. The team of engineers in Haifa, Israel, which until then had been responsible for mobile architectures, was suddenly charged with providing a design that would power the entire new line of Intel processors. It was a challenging task for the team, which now bore Intel’s future on its shoulders. Given those conditions, with the tight schedule they had to stick to and the pressure they were under, the results that the Intel engineers achieved are remarkable. The situation also explains why the team had to make some compromises.

Although it was a serious reworking of the Pentium M, the Conroe architecture still sometimes betrayed its mobile roots. For one thing, the architecture wasn’t really modular. It had to cover the entire Intel range, from notebooks to servers, but in practice it was nearly the same chip in each case; the only room for variation was the L2 cache. The architecture was also clearly designed to be dual-core, and moving to a quad-core version required the same kind of trick that Intel had resorted to for its first dual-core processors: two dies in a single package. The presence of the FSB also hampered the development of multi-processor configurations, since it was a bottleneck for memory access. And a final little giveaway: macro-ops fusion, one of the new features introduced with the Conroe architecture, which combines two x86 instructions into a single one, didn’t work in 64-bit mode, the standard operating mode for servers.
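To make the macro-ops fusion point concrete, here is a toy Python sketch of the idea. It is not Intel's decoder logic. It assumes, going beyond what the text above states, that the fusible pairs are a `cmp` or `test` followed directly by a conditional jump, and the names `fuse_macro_ops` and `long_mode` are purely illustrative.

```python
# Toy model of macro-op fusion; an illustration only, not Intel's decoder.
# Assumption: a cmp/test directly followed by a conditional jump can fuse.
FUSIBLE_FIRST = {"cmp", "test"}


def is_conditional_jump(mnemonic):
    # Simplification: any j* mnemonic except the unconditional jmp.
    return mnemonic.startswith("j") and mnemonic != "jmp"


def fuse_macro_ops(instructions, long_mode=False):
    """Return the decoded stream with fusible pairs merged into one entry."""
    if long_mode:
        # Conroe-style restriction described in the text:
        # no fusion at all when running in 64-bit mode.
        return list(instructions)
    fused = []
    i = 0
    while i < len(instructions):
        current = instructions[i]
        nxt = instructions[i + 1] if i + 1 < len(instructions) else None
        if current in FUSIBLE_FIRST and nxt is not None and is_conditional_jump(nxt):
            fused.append(current + "+" + nxt)  # two instructions, one slot
            i += 2
        else:
            fused.append(current)
            i += 1
    return fused


stream = ["mov", "cmp", "jne", "add", "test", "jz", "jmp"]
print(len(fuse_macro_ops(stream)))                  # fused stream length
print(len(fuse_macro_ops(stream, long_mode=True)))  # unfused stream length
```

In this model the same seven-instruction stream occupies fewer decode slots in 32-bit mode than in 64-bit mode, which is the handicap a server-oriented design would want to remove.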

These compromises were understandable two years ago, but today Intel can no longer justify them, especially when faced with its rival AMD, whose Opteron processor is still a compelling play for enterprise environments. With Nehalem, Intel needed to remedy its last weaknesses by designing a modular architecture that could adapt to the differing needs of all three major markets: mobile, desktop, and server.

cl_spdhax1 good write-up, cant wait for the new architecture, plus the "older" chips are going to become cheaper/affordable. big plus.

neiroatopelcc No explaination as to why you can't use performance modules with higher voltage though.

neiroatopelcc

> AuDioFreaK39: TomsHardware is just now getting around to posting this? Not to mention it being almost a direct copy/paste from other articles I've seen written about Nehalem architecture.

I regard being late as a quality seal really. No point being first, if your info is only as credible as stuff on inquirer. Better be last, but be sure what you write is correct.

cangelini

> AuDioFreaK39: TomsHardware is just now getting around to posting this? Not to mention it being almost a direct copy/paste from other articles I've seen written about Nehalem architecture.

Perhaps, if you count being translated from French.

randomizer Yea, 13 pages is quite alot to translate. You could always use google translation if you want it done fast :kaola:

Duncan NZ Speaking of french... That link on page 3 goes to a French article that I found fascinating... Would be even better if there was an English version though, cause then I could actually read it. Any chance of that?

Nice article, good depth, well written

neiroatopelcc

> randomizer: Yea, 13 pages is quite alot to translate. You could always use google translation if you want it done fast

I don't know french, so no idea if it actually works. But I've tried from english to germany and danish, and viseversa. Also tried from danish to german, and the result is always the same - it's incomplete, and anything that is slighty technical in nature won't be translated properly. In short - want it done right, do it yourself.

neiroatopelcc I don't think cangelini meant to say, that no other english articles on the subject exist.

You claimed the article on toms was a copy paste from another article. He merely stated that the article here was based on a french version.

enewmen Good article.

I actually read the whole thing.

I just don't get TLP when RAM is cheap and the Nehalem/Vista can address 128gigs. Anyway, things have changed a lot since running Win NT with 16megs RAM and constant memory swapping.

cangelini I can't speak for the author, but I imagine neiro's guess is fairly accurate. Written in French, translated to English, and then edited--I'm fairly confident they're different stories ;)