G-Sync Technology Preview: Quite Literally A Game Changer

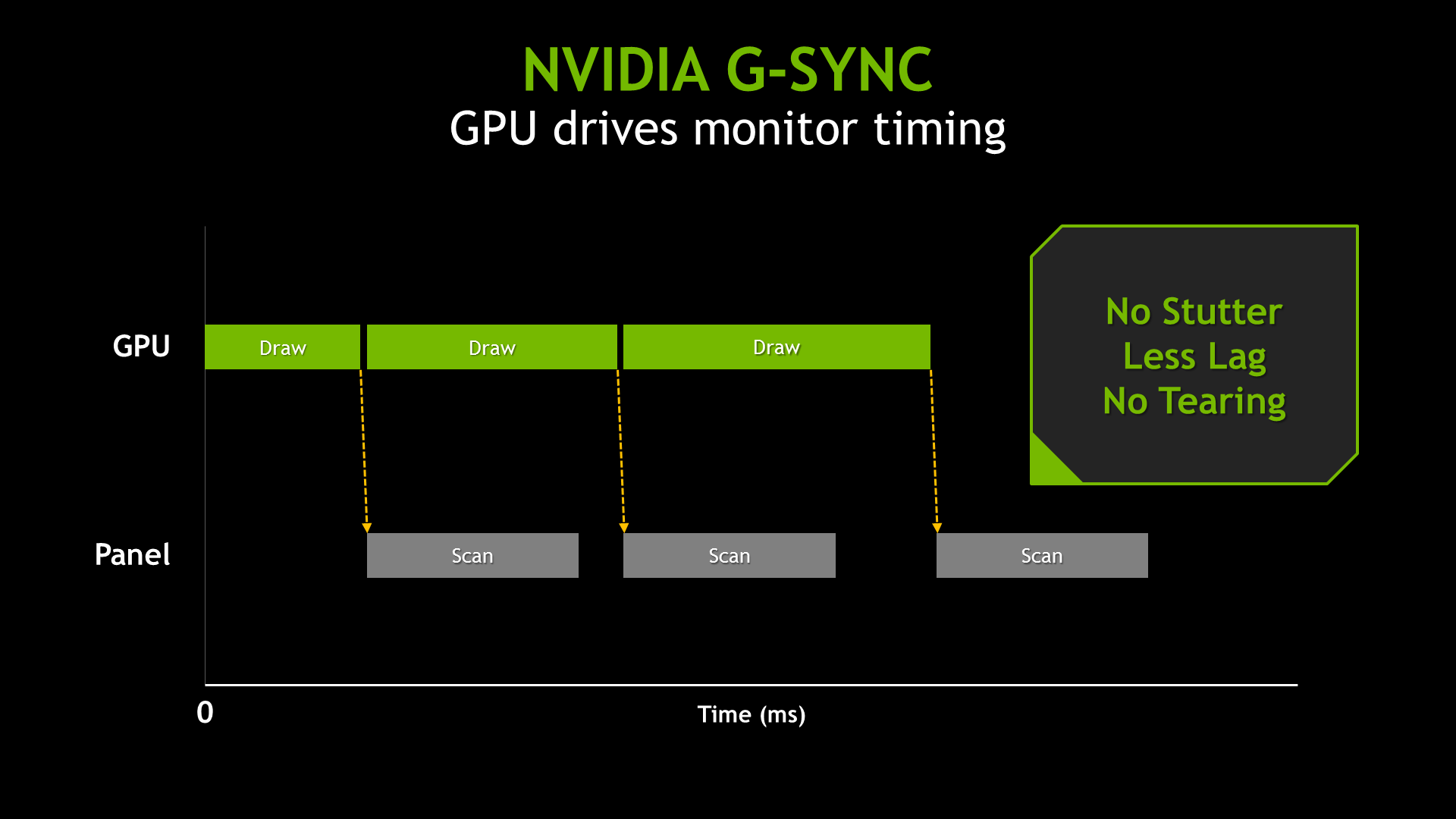

You've always faced this dilemma: disable V-sync and live with image tearing, or turn V-sync on and tolerate the annoying stutter and lag. Nvidia promises to make that choice obsolete with a variable refresh rate technology we're previewing today.

To Synchronize Or Not To Synchronize, That Is (No Longer) The Question

A Brief History of Fixed Refresh Rates

A long time ago, PC monitors were big heavy items that contained curiously-named components like cathode ray tubes and electron guns. Back then, the electron guns shot at the screen to illuminate the colorful dots we call pixels. They did this one pixel at a time in a left-to-right scanning pattern for each line, working from the top to the bottom of the screen. Varying the electron guns' speed from one complete refresh to the next wasn't very practical, and there was no real need since 3D games were still decades away. So, CRTs and the associated analog video standards were designed with fixed refresh rates in mind.

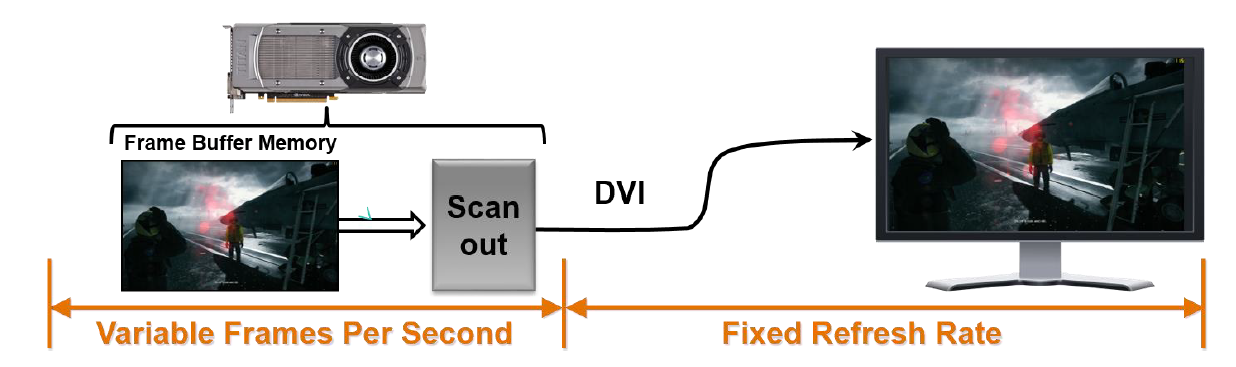

LCDs eventually replaced CRTs, and digital connections (DVI, HDMI, and DisplayPort) replaced the analog ones (VGA). But the bodies responsible for setting video signal standards (VESA chief among them) haven't moved away from fixed refresh rates. Movies and television, after all, still rely on an input signal with a constant frame rate. Again, the need for a variable refresh rate didn’t seem so important.

Variable Frame Rates and Fixed Refresh Rates Don’t Match

Until the advent of advanced 3D graphics, a fixed display refresh rate was never a problem. However, one surfaced as we started getting our hands on powerful graphics processors: the rate at which GPUs render individual frames (what we refer to as their frame rate, commonly expressed in FPS or frames per second) isn’t constant. Rather, it varies over time. A given card might be able to generate 30 frames per second in a particularly taxing scene and then 60 FPS moments later when you look up into an empty sky.
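Because frame rate is simply the inverse of how long each frame takes to render, the swing described above is easier to picture in milliseconds. Here is a minimal Python sketch; the helper name `frame_time_ms` is our own, used purely for illustration.

```python
def frame_time_ms(fps: float) -> float:
    """Convert a frame rate (frames per second) into time per frame in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# The taxing scene and the empty sky from the example above.
for fps in (30, 60):
    print(f"{fps} FPS -> {frame_time_ms(fps):.1f} ms per frame")
```

So the same card may spend roughly 33.3 ms on one frame and 16.7 ms on a frame moments later, while a 60 Hz display keeps refreshing every 16.7 ms no matter what.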

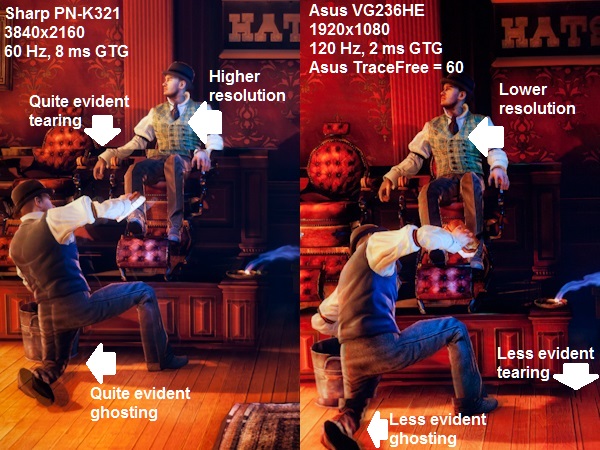

As it turns out, variable frame rates coming from a graphics card and fixed refresh rates on an LCD don't work particularly well together. In such a configuration, you end up with an on-screen artifact that we call tearing. This happens when parts of two or more frames are displayed during a single monitor refresh cycle. Because the scene has moved between those frames, the parts are typically misaligned, producing a very distracting effect whenever there is motion on screen.
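To see why the pieces don't line up, it helps to model scanout. With V-sync off, the panel draws the current buffer from top to bottom over one refresh period; if the GPU swaps in a new frame partway through, everything below that point comes from the newer frame. The sketch below is a simplified model built on our own assumptions (constant scanout speed, vertical blanking interval ignored, and a hypothetical helper called `tear_lines`). It estimates the scanline at which each tear appears.

```python
def tear_lines(frame_done_ms, refresh_hz=60.0, visible_lines=1080):
    """Simplified model of V-sync-off presentation.

    For each time (in ms) at which the GPU swaps in a finished frame, return
    (refresh_index, scanline): the refresh cycle the swap lands in and the
    scanline where the old frame stops and the new one begins.
    Assumes a constant scanout speed and ignores the blanking interval.
    """
    period_ms = 1000.0 / refresh_hz
    tears = []
    for t in frame_done_ms:
        refresh_index = int(t // period_ms)
        # Fraction of the current scanout already drawn: 0.0 = top, 1.0 = bottom.
        progress = (t - refresh_index * period_ms) / period_ms
        line = round(progress * visible_lines)
        # A swap right at the top or bottom of a scanout leaves no visible tear.
        if 0 < line < visible_lines:
            tears.append((refresh_index, line))
    return tears


# A GPU finishing a frame every 25 ms (40 FPS) on a 60 Hz, 1080-line panel.
frames = [25.0 * i for i in range(1, 5)]
for refresh_index, line in tear_lines(frames):
    print(f"refresh {refresh_index}: tear at scanline {line}")
```

In this example, the swaps at 25 ms and 75 ms land halfway through a scanout, so the tear sits near the middle of the screen, while the swaps at 50 ms and 100 ms coincide with refresh boundaries and leave no visible tear. With real, fluctuating frame times the tear wanders up and down the screen from one refresh to the next.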

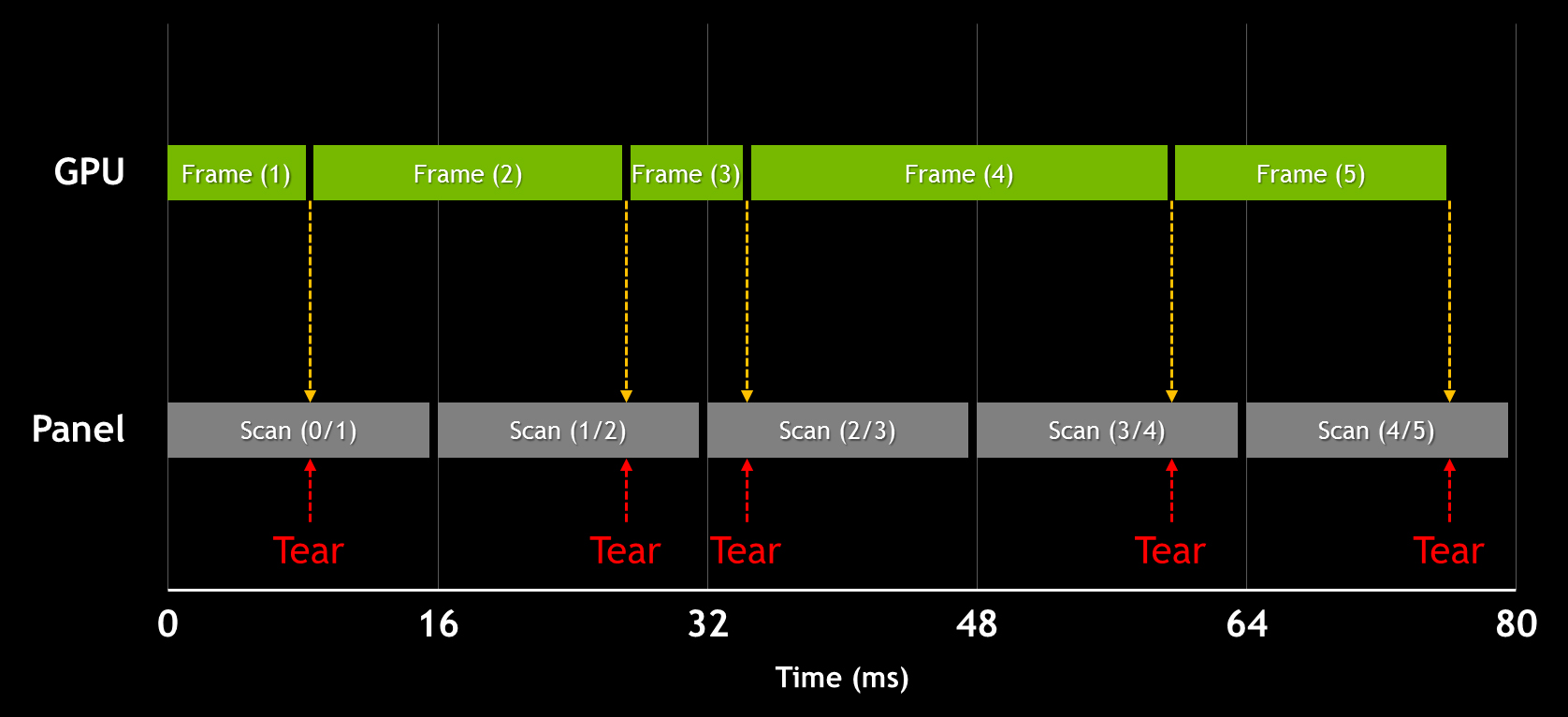

The image above shows two well-known artifacts that are commonly seen but often difficult to document. Because these are display artifacts, they don't show up in regular in-game screenshots; they only exist in the image you actually see on the monitor. You need a fast camera to capture them accurately. Alternatively, if you have access to a capture card, which is what we use for our FCAT-based benchmarking, you can record an uncompressed video stream from the DVI port and clearly see the transition from one frame to another. At the end of the day, though, the best way to see these effects is with your own eyes.

You can see the tearing effect in both images above, taken with a camera, and in the one below, captured through a card. Each picture is cut horizontally, and the pieces appear misaligned. In the first shot, we have a 60 Hz Sharp screen on the left and a 120 Hz Asus display on the right. Tearing at 120 Hz is naturally less pronounced since the refresh rate is twice as high. However, it's still noticeable in similar ways. This type of visual artifact is the clearest indicator that the pictures were taken with V-sync disabled.

The other issue we see in the BioShock: Infinite comparison shot is called ghosting, and it's particularly apparent at the bottom of the left side. This is caused by the panel's pixel response time. To make a long story short, individual pixels don't change color quickly enough, leaving this kind of afterglow behind moving objects. The in-game effect is far more dramatic than a still image can convey. A panel with an 8 ms gray-to-gray response time, such as the Sharp, will appear blurry whenever there's fast movement on-screen. That's why those displays typically aren't recommended for first-person shooters.


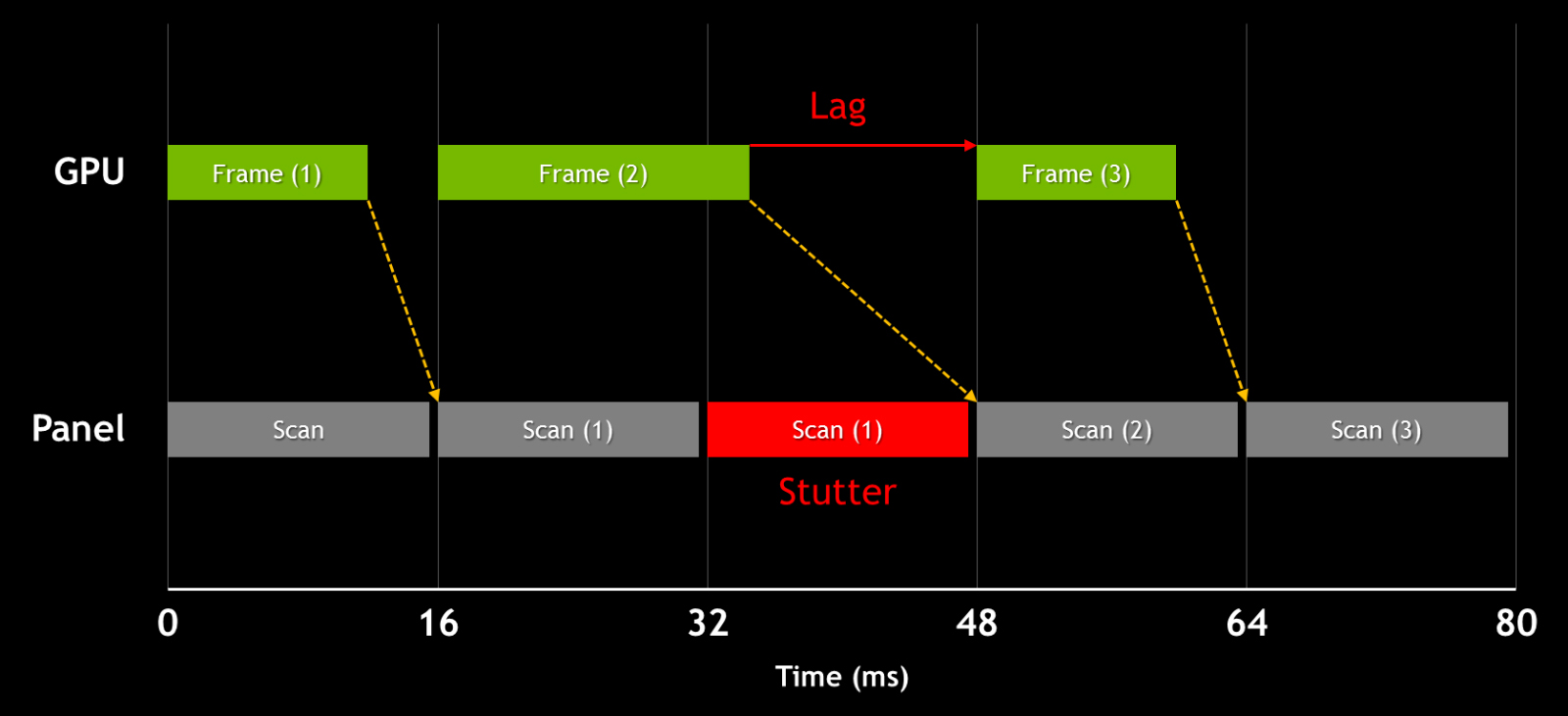

V-sync: Trading One Problem For Another

Vertical synchronization, or V-sync, is a very old solution to the tearing problem. Enabling V-sync essentially tells the video card to wait for the screen's refresh before presenting a new frame, eliminating tearing entirely. The downside is that if your video card cannot keep up and the frame rate dips below 60 FPS (on a 60 Hz display), the effective frame rate bounces back and forth among integer fractions of the screen's refresh rate (60, 30, 20, 15 FPS, and so on), which in turn causes perceived stuttering.
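The stepping follows from a simple rule in the double-buffered case: a finished frame can only be shown at the next refresh, and the GPU waits for that swap before starting the next frame, so every frame occupies a whole number of refresh intervals. The minimal sketch below assumes a constant render time and double buffering; `vsync_fps` is a hypothetical helper name. Triple buffering lets the GPU keep rendering during the wait, so real frame rates with it are less strictly quantized than this model suggests.

```python
import math


def vsync_fps(render_ms: float, refresh_hz: float = 60.0) -> float:
    """Effective frame rate with double-buffered V-sync.

    Assumes a constant render time and that the GPU stalls until the
    next refresh after each frame, so each frame spans a whole number
    of refresh intervals.
    """
    period_ms = 1000.0 / refresh_hz
    refreshes_per_frame = max(1, math.ceil(render_ms / period_ms))
    return refresh_hz / refreshes_per_frame


# On a 60 Hz display this prints 60, 30, 20 and 15 FPS respectively.
for render_ms in (15, 20, 40, 60):
    print(f"{render_ms} ms/frame -> {vsync_fps(render_ms):.0f} FPS")
```

Note how a card that needs 20 ms per frame (50 FPS unsynchronized) is pushed all the way down to 30 FPS, and small swings in render time around the 16.7 ms boundary make the output jump between 60 and 30 FPS.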

Furthermore, because it forces the video card to wait and sometimes relies on a third back buffer, V-sync can introduce additional input lag in the chain. Thus, V-sync can be both a blessing and a curse, trading one compromise for another set of compromises. An informal survey around the office suggests that most gamers keep V-sync off as a general rule, turning it on only when the tearing artifacts become unbearable.

Getting Creative: Nvidia Introduces G-Sync

With the launch of its GeForce GTX 680, Nvidia enabled a driver mode called Adaptive V-sync, which attempted to mitigate the issues with V-sync by turning it on at frame rates above the monitor's refresh rate, and then quickly switching it off if instantaneous performance dropped below the refresh rate. Although this technology did its job well, it was really more of a workaround, and it did not prevent tearing when the frame rate dropped below the display's refresh rate.
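The policy described above boils down to one comparison per frame-rate sample. What follows is a hypothetical sketch of that decision, not Nvidia's driver logic; the names `Sample` and `adaptive_vsync`, and the idea of feeding it instantaneous frame-rate samples, are our own simplifications.

```python
from typing import List, NamedTuple


class Sample(NamedTuple):
    gpu_fps: float    # frame rate the GPU could deliver at this moment
    vsync_on: bool    # whether the policy enables V-sync
    shown_fps: float  # frame rate reaching the display
    can_tear: bool    # whether tearing is possible


def adaptive_vsync(fps_samples: List[float], refresh_hz: float = 60.0) -> List[Sample]:
    """Hypothetical Adaptive V-sync policy: sync while the GPU keeps up,
    run unsynchronized when it falls below the refresh rate."""
    samples = []
    for fps in fps_samples:
        if fps >= refresh_hz:
            # Keeping up: cap output at the refresh rate, no tearing.
            samples.append(Sample(fps, True, refresh_hz, False))
        else:
            # Falling behind: skip V-sync's step down to the next integer
            # fraction of the refresh rate, but accept tearing.
            samples.append(Sample(fps, False, fps, True))
    return samples


for s in adaptive_vsync([75, 58, 45, 62]):
    print(s)
```

The 58 and 45 FPS samples show the trade-off in miniature: they avoid being knocked down to 30 FPS, but tearing returns exactly when performance dips.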

The introduction of G-Sync is much more interesting. Nvidia is basically showing that, instead of forcing video cards to display games on monitors with a fixed refresh, we can make the latest screens work at variable refresh rates.

DisplayPort’s packet-based data transfer mechanism provided a window of opportunity. By using variable blanking intervals in the DisplayPort video signal, and replacing the monitor's scaler with a module that works with a variable blanking signal, an LCD can be driven at a variable refresh rate aligned to whichever frame rate the video card is putting out (up to the screen's maximum refresh rate, of course). In practice, Nvidia is creatively leveraging specific capabilities of DisplayPort to kill two birds with one stone: tearing on one side, and V-sync's stutter and lag on the other.
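A rough way to see what a variable refresh buys is to compare when frames actually reach the screen. The sketch below is our own idealized model, not a description of how the G-Sync module works internally. With a fixed 60 Hz refresh and V-sync on, a finished frame waits for the next refresh boundary (assuming, for simplicity, that the GPU never stalls and no frame is dropped, roughly what triple buffering allows). With a variable refresh, the panel refreshes as soon as the frame arrives, but no faster than its maximum rate (144 Hz here, matching the VG248QE). All function names are hypothetical.

```python
import math


def fixed_refresh_times(frame_done_ms, refresh_hz=60.0):
    """Fixed refresh with V-sync: each frame waits for the next refresh boundary.
    Assumes the GPU never stalls and no frame is dropped."""
    period_ms = 1000.0 / refresh_hz
    return [math.ceil(t / period_ms) * period_ms for t in frame_done_ms]


def variable_refresh_times(frame_done_ms, max_hz=144.0):
    """Variable refresh: the panel refreshes as soon as a frame is ready,
    but never sooner than its minimum refresh interval allows."""
    min_interval_ms = 1000.0 / max_hz
    shown, last = [], -math.inf
    for t in frame_done_ms:
        last = max(t, last + min_interval_ms)
        shown.append(last)
    return shown


def gaps(times):
    """Time between consecutive on-screen frames, rounded to 0.1 ms."""
    return [round(b - a, 1) for a, b in zip(times, times[1:])]


frames = [20.0 * i for i in range(1, 8)]  # a GPU rendering a steady 50 FPS
print("fixed 60 Hz:", gaps(fixed_refresh_times(frames)))
print("variable:   ", gaps(variable_refresh_times(frames)))
```

For a steady 50 FPS, the fixed-refresh gaps come out as 16.7 ms with a periodic 33.3 ms hitch (five frames spread over six refreshes), which is the uneven pacing perceived as stutter. The variable-refresh gaps stay at an even 20 ms, and each frame appears the moment it is finished instead of waiting for the next refresh, which is where the latency benefit comes from.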

Even before we jump into the hands-on testing, we have to commend the creative approach to solving a very real problem affecting gaming on the PC. This is innovation at its finest. But how well does G-Sync work in practice?

Nvidia sent over an engineering sample of Asus' VG248QE with its scaler replaced by a G-Sync module. We're already plenty familiar with this specific display; we reviewed it in Asus VG248QE: A 24-Inch, 144 Hz Gaming Monitor Under $300 and it earned a prestigious Tom's Hardware Smart Buy award. Now it's time to preview how Nvidia's newest technology affects our favorite games.



gamerk316 I consider Gsync to be the most important gaming innovation since DX7. It's going to be one of those "How the HELL did we live without this before?" technologies.

monsta Totally agree, G Sync is really impressive and the technology we have been waiting for.

What the hell is Mantle?

wurkfur I personally have a setup that handles 60+ fps in most games and just leave V-Sync on. For me 60 fps is perfectly acceptable and even when I went to my friends house where he had a 120hz monitor with SLI, I couldn't hardly see much difference.

I applaud the advancement, but I have a perfectly functional 26 inch monitor and don't want to have to buy another one AND a compatible GPU just to stop tearing.

At that point I'm looking at $400 to $600 for a relatively paltry gain. If it comes standard on every monitor, I'll reconsider.

expl0itfinder Competition, competition. Anybody who is flaming over who is better: AMD or nVidia, is clearly missing the point. With nVidia's G-Sync, and AMD's Mantle, we have, for the first time in a while, real market competition in the GPU space. What does that mean for consumers? Lower prices, better products.

This needs to be not so proprietary for it to become a game changer. As it is, requiring a specific GPU and specific monitor with an additional price premium just isn't compelling and won't reach a wide demographic.

Is it great for those who already happen to fall within the requirements? Sure, but unless Nvidia opens this up or competitors make similar solutions, I feel like this is doomed to be as niche as lightboost, Physx, and, I suspect, Mantle.

ubercake I'm on page 4, and I can't even contain myself.

Tearing and input lag at 60Hz on a 2560x1440 or 2560x1600 has been the only reason I won't game on one. G-sync will get me there.

This is awesome, outside-of-the-box thinking tech.

I do think Nvidia is making a huge mistake by keeping this to themselves though. This should be a technology implemented with every panel sold and become part of an industry standard for HDTVs, monitors or other viewing solutions! Why not get a licensing payment for all monitors sold with this tech? Or all video cards implementing this tech? It just makes sense.


rickard Could the Skyrim stuttering at 60hz w/ Gsync be because the engine operates internally at 64hz? All those Bethesda tech games drop 4 frames every second when vsync'd to 60hz which cause that severe microstutter you see on nearby floors and walls when moving and strafing. Same thing happened in Oblivion, Fallout 3, and New Vegas on PC. You had to use stutter removal mods in conjunction with the script extenders to actually force the game to operate at 60hz and smooth it out with vsync on.

You mention it being smooth when set to 144hz with Gsync, is there any way you cap the display at 64hz and try it with Gsync alone (iPresentinterval=0) and see what happens then? Just wondering if the game is at fault here and if that specific issue is still there in their latest version of the engine.

Alternatively I suppose you could load up Fallout 3 or NV instead and see if the Gsync results match Skyrim.

Old_Fogie_Late_Bloomer I would be excited for this if it weren't for Oculus Rift. I don't mean to be dismissive, this looks awesome...but it isn't Oculus Rift.

hysteria357 Am I the only one who has never experienced screen tearing? Most of my games run past my refresh rate too....