G-Sync Technology Preview: Quite Literally A Game Changer

You've forever faced this dilemma: disable V-sync and live with image tearing, or turn V-sync on and tolerate the annoying stutter and lag? Nvidia promises to make that question obsolete with a variable refresh rate technology we're previewing today.

3D LightBoost, On-Board Memory, Standards, And 4K

As we were going through Nvidia's press material, we found ourselves asking a number of questions about G-Sync as a technology today, along with its role in the future. During a recent trip to the company's headquarters in Santa Clara, we were able to get some answers.

G-Sync And 3D LightBoost

The first thing we noticed was that Nvidia was sending out the Asus VG248QE monitor, modified to support G-Sync. That monitor also supports what Nvidia currently calls 3D LightBoost technology, which was originally introduced to improve brightness in 3D displays, but has long been unofficially used in 2D mode as well, using its panel-pulsing backlight to reduce the ghosting (or motion blur) artifact we mentioned on page one. Naturally, we wanted to know if that could be used with G-Sync.

Nvidia's answer was no. Using both technologies at the same time would be ideal, but strobing the backlight at a variable refresh rate currently results in flicker and brightness issues. Solving them is incredibly complex, since you have to adjust luminance and keep track of pulses. As a result, you have to choose between the two technologies for now, although the company is working on a way to use them together in the future.
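To see why a strobed backlight and a variable refresh rate clash, it helps to look at the arithmetic. The sketch below is our own simplified illustration, not Nvidia's description of the problem: it assumes one backlight pulse per refresh and treats perceived brightness as the fraction of time the backlight is lit. The function names and the 2 ms pulse width are hypothetical.

```python
def average_brightness(pulse_ms, frame_interval_ms, peak=1.0):
    """Time-averaged luminance with one backlight pulse per refresh.

    Simplifying assumption: perceived brightness is proportional to the
    fraction of each refresh interval during which the backlight is lit.
    """
    return peak * pulse_ms / frame_interval_ms


def pulse_for_target(target, frame_interval_ms, peak=1.0):
    """Pulse width needed to hold a target average brightness.

    Note that this needs the length of the refresh interval, which a
    variable-refresh display only learns once the next frame arrives.
    """
    return target * frame_interval_ms / peak


PULSE_MS = 2.0  # hypothetical fixed pulse width, chosen for illustration

for hz in (120, 60, 40):
    interval_ms = 1000.0 / hz
    level = average_brightness(PULSE_MS, interval_ms)
    print(f"{hz} Hz: {level:.0%} of peak brightness")
```

Under these assumptions, a fixed 2 ms pulse gives about 24% of peak brightness at 120 Hz but only 8% at 40 Hz, so the picture would dim and brighten as the frame rate moves around. Compensating means changing the pulse width or intensity from one refresh to the next, which is consistent with Nvidia's remark about adjusting luminance and keeping track of pulses.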

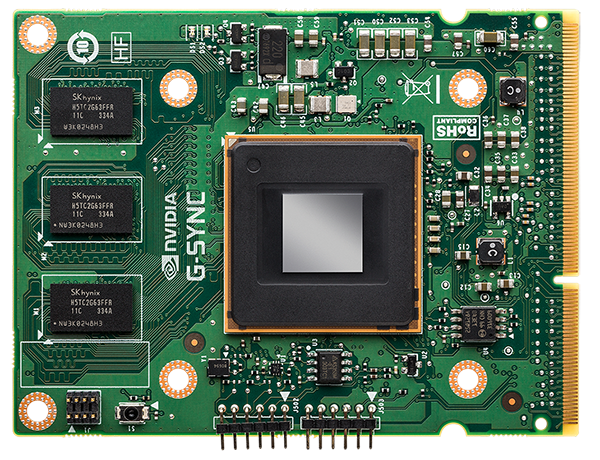

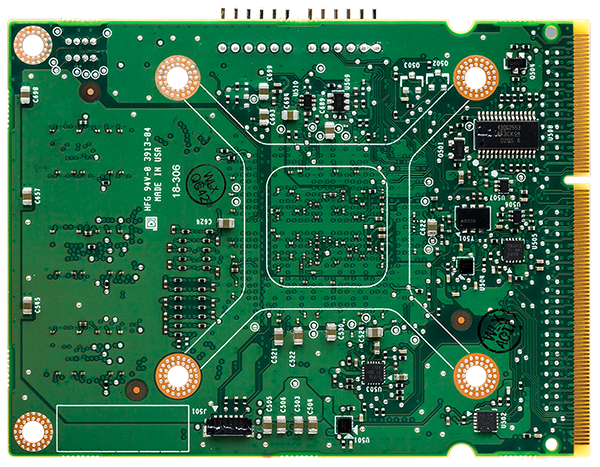

The G-Sync Module's On-Board Memory

As we already know, G-Sync eliminates the incremental input lag associated with V-sync, since there's no longer a need to wait for the panel to scan. However, we noticed that the G-Sync module has on-board memory. Could the module be buffering frames itself? If so, how much time would it take for a frame to make its way through the new pipeline?

According to Nvidia, frames are never buffered in the module's memory. As data comes in, it's displayed on the screen; the memory performs several other roles. The processing time related to G-Sync is well under one millisecond. In fact, overall latency is roughly the same as with V-sync off, and what remains comes from the game, the graphics driver, the mouse, and so on.
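The difference between waiting for the panel and not waiting can be put into numbers. The following is a minimal sketch under our own assumptions: it ignores scan-out time and driver queues, models V-sync as holding a finished frame until the next tick of a fixed refresh clock, and models variable refresh as showing the frame as soon as it is ready, limited only by the panel's maximum rate. The function names and the 144 Hz ceiling are illustrative choices, not part of any Nvidia API.

```python
import math


def vsync_display_time(ready_s, refresh_hz=60.0):
    """When a finished frame appears if the panel refreshes on a fixed clock."""
    # The frame waits for the next refresh tick at or after ready_s.
    return math.ceil(ready_s * refresh_hz) / refresh_hz


def variable_refresh_display_time(ready_s, last_refresh_s, max_refresh_hz=144.0):
    """When a finished frame appears if the panel refreshes on demand."""
    # The only wait is the minimum spacing imposed by the panel's top rate.
    min_interval_s = 1.0 / max_refresh_hz
    return max(ready_s, last_refresh_s + min_interval_s)


ready = 0.020  # frame finishes rendering 20 ms after the previous refresh at t=0

wait_vsync = vsync_display_time(ready) - ready
wait_variable = variable_refresh_display_time(ready, last_refresh_s=0.0) - ready

print(f"V-sync at 60 Hz adds {wait_vsync * 1000:.1f} ms of waiting")
print(f"Variable refresh adds {wait_variable * 1000:.1f} ms of waiting")
```

In this toy case the V-synced frame sits for roughly 13 ms until the next 60 Hz tick, while the variable-refresh panel shows it immediately. That waiting time is the incremental lag the section refers to.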

Will G-Sync Ever Be Standardized?

This came up during a recent AMA with AMD when a reader wanted to get the company's reaction to G-Sync. However, we also wanted to follow up with Nvidia directly to see if the company had any plans to push its technology as an industry standard. In theory, it could propose G-Sync as an update to the DisplayPort standard, exposing variable refresh rates. Nvidia is a member of VESA, the industry's main standard-setting board, after all.

Put simply, there are no plans to introduce a new spec to DisplayPort, HDMI, or DVI. G-Sync is already working with DisplayPort 1.2, meaning there's no need for a standards change.


As mentioned, Nvidia is working to make G-Sync compatible with what it currently refers to as 3D LightBoost (and will be called something else soon). It's also trying to bring the module's cost down to make G-Sync more accessible.

G-Sync At Ultra HD Resolutions

Nvidia's online FAQ promises G-Sync-capable monitors with resolutions as high as 3840x2160. However, the Asus model we're previewing today maxes out at 1920x1080. Currently, the only Ultra HD monitors available employ STMicro's Athena controller, which uses two scalers to create a tiled display. We were curious, then: does the G-Sync module support an MST configuration?

In truth, we'll be waiting a while before 4K displays show up with variable refresh rates. Today, there is no single scaler able to support 4K resolutions, and the soonest that's expected to arrive is Q1 of 2014, with monitors including it showing up in Q2. Because the G-Sync module replaces the scaler, compatible panels would only start surfacing sometime after that point. Fortunately, the module does natively support Ultra HD.

Keeping Up: What Happens Under 30 Hz?

The variable refresh enabled by G-Sync works down to 30 Hz. The explanation for this is that, at very low refresh rates, an image on an LCD starts to decay after a while and you end up with visual artifacts. If your source drops below 30 FPS, the module knows to refresh the panel automatically, avoiding those issues. That might mean displaying the same image more than once, but the threshold is set to 30 Hz to maintain the best-looking experience possible.
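The behavior described above can be sketched as a small scheduling loop. This is a simplified model of our own, not Nvidia's implementation: it assumes the module simply repeats the last image whenever more than 1/30 of a second has passed without a new frame, and it ignores the time the panel needs to scan out each refresh. The function name and the event labels are hypothetical.

```python
MIN_REFRESH_HZ = 30.0              # threshold stated in the article
MAX_HOLD_S = 1.0 / MIN_REFRESH_HZ  # longest time the panel may hold one image


def refresh_events(frame_times_s, max_hold_s=MAX_HOLD_S):
    """Return (time, kind) panel refreshes for a list of frame arrival times.

    kind is "new" when a fresh frame is shown and "repeat" when the previous
    image is redrawn because the source fell below the minimum rate.
    """
    events = []
    last_refresh = None
    for t in frame_times_s:
        if last_refresh is not None:
            # Redraw the old image each time the hold limit would be exceeded.
            while t - last_refresh > max_hold_s + 1e-12:
                last_refresh += max_hold_s
                events.append((last_refresh, "repeat"))
        events.append((t, "new"))
        last_refresh = t
    return events


# Frames arrive at 0 ms, 20 ms, and then not again until 100 ms.
for time_s, kind in refresh_events([0.0, 0.020, 0.100]):
    print(f"{time_s * 1000:6.1f} ms  {kind}")
```

With the 80 ms gap in this example, the model inserts two repeated refreshes before the third frame arrives, so the panel never goes longer than about 33 ms without being redrawn.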



gamerk316 I consider Gsync to be the most important gaming innovation since DX7. It's going to be one of those "How the HELL did we live without this before?" technologies.

monsta Totally agree, G Sync is really impressive and the technology we have been waiting for.

What the hell is Mantle?

wurkfur I personally have a setup that handles 60+ fps in most games and just leave V-Sync on. For me 60 fps is perfectly acceptable and even when I went to my friends house where he had a 120hz monitor with SLI, I couldn't hardly see much difference.

I applaud the advancement, but I have a perfectly functional 26 inch monitor and don't want to have to buy another one AND a compatible GPU just to stop tearing.

At that point I'm looking at $400 to $600 for a relatively paltry gain. If it comes standard on every monitor, I'll reconsider.

expl0itfinder Competition, competition. Anybody who is flaming over who is better: AMD or nVidia, is clearly missing the point. With nVidia's G-Sync, and AMD's Mantle, we have, for the first time in a while, real market competition in the GPU space. What does that mean for consumers? Lower prices, better products.

This needs to be not so proprietary for it to become a game changer. As it is, requiring a specific GPU and specific monitor with an additional price premium just isn't compelling and won't reach a wide demographic.

Is it great for those who already happen to fall within the requirements? Sure, but unless Nvidia opens this up or competitors make similar solutions, I feel like this is doomed to be as niche as lightboost, Physx, and, I suspect, Mantle.

ubercake I'm on page 4, and I can't even contain myself.

Tearing and input lag at 60Hz on a 2560x1440 or 2560x1600 has been the only reason I won't game on one. G-sync will get me there.

This is awesome, outside-of-the-box thinking tech.

I do think Nvidia is making a huge mistake by keeping this to themselves though. This should be a technology implemented with every panel sold and become part of an industry standard for HDTVs, monitors or other viewing solutions! Why not get a licensing payment for all monitors sold with this tech? Or all video cards implementing this tech? It just makes sense.


rickard Could the Skyrim stuttering at 60hz w/ Gsync be because the engine operates internally at 64hz? All those Bethesda tech games drop 4 frames every second when vsync'd to 60hz which cause that severe microstutter you see on nearby floors and walls when moving and strafing. Same thing happened in Oblivion, Fallout 3, and New Vegas on PC. You had to use stutter removal mods in conjunction with the script extenders to actually force the game to operate at 60hz and smooth it out with vsync on.

You mention it being smooth when set to 144hz with Gsync, is there any way you cap the display at 64hz and try it with Gsync alone (iPresentinterval=0) and see what happens then? Just wondering if the game is at fault here and if that specific issue is still there in their latest version of the engine.

Alternatively I suppose you could load up Fallout 3 or NV instead and see if the Gsync results match Skyrim.

Old_Fogie_Late_Bloomer I would be excited for this if it werent for Oculus Rift. I don't mean to be dismissive, this looks awesome...but it isn't Oculus Rift.

hysteria357 Am I the only one who has never experienced screen tearing? Most of my games run past my refresh rate too....