RAM Wars: Return of the JEDEC

SDRAM, Continued

SDRAM was initially introduced as the answer to all performance problems; however, it quickly became apparent that there was little actual performance benefit and a lot of compatibility problems. The first SDRAM modules contained only two clock lines, which was soon found to be insufficient. This led to two different module designs (2-clock and 4-clock), and you needed to know which one your motherboard required. Though the timings were theoretically supposed to be 5-1-1-1 at 66 MHz, many of the original SDRAM modules would only run at 6-2-2-2 when used in pairs, mostly because the chipsets (i430VX, SiS5571) had trouble with the speed and with coordinating accesses between modules. The i430TX chipset and later non-Intel chipsets improved upon this, and the SPD (serial presence detect) chip was added to the standard so chipsets could read the timings from the module. Unfortunately, for quite some time the SPD EEPROM was either missing from many modules or not read by the motherboards.
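To put the gap between 5-1-1-1 and 6-2-2-2 in perspective, here is a rough sketch that adds up the clock cycles in each timing string and converts the total to nanoseconds at a 66 MHz bus. It assumes the usual reading of the notation (clocks for the first transfer, then clocks for each of the following three transfers in a four-transfer burst); the function name `burst_time_ns` is made up for this illustration.

```python
def burst_time_ns(timings, bus_mhz):
    """Return (total_clocks, total_ns) for a burst timing string such as '5-1-1-1'.

    Assumes each number is the clock count for one transfer in the burst.
    """
    # Sum the per-transfer clock counts, e.g. 5 + 1 + 1 + 1
    clocks = sum(int(n) for n in timings.split("-"))
    # One clock period in ns is 1000 / MHz, so total time is clocks * 1000 / MHz
    return clocks, clocks * 1000.0 / bus_mhz


for timings in ("5-1-1-1", "6-2-2-2"):
    clocks, ns = burst_time_ns(timings, 66)
    print(f"{timings} @ 66 MHz: {clocks} clocks, about {ns:.0f} ns")
```

Under that assumption, the theoretical timing needs 8 clocks per burst while the 6-2-2-2 fallback needs 12, so the slower setting takes half again as long to deliver the same four transfers.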

SDRAM chips are officially rated in MHz, rather than nanoseconds (ns), so that there is a common denominator between the bus speed and the chip speed. The equivalent nanosecond rating is found by dividing 1 second (1 billion ns) by the chip's clock frequency in Hz. For example, a 67 MHz SDRAM chip is rated as 15ns. Note that this nanosecond rating does not measure the same timing as the rating on an asynchronous DRAM chip. Remember, internally, all DRAM operates in a very similar manner, and most performance gains are achieved by 'hiding' the internal operations in various ways.
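The MHz-to-nanosecond conversion is simple enough to check by hand, but a short sketch makes the arithmetic explicit. The helper name `cycle_time_ns` is only an illustration, and the result is the clock period, not an asynchronous-DRAM-style access time.

```python
def cycle_time_ns(clock_mhz):
    """Return the clock period in nanoseconds for a clock rate given in MHz."""
    # 1 second is 1e9 ns and 1 MHz is 1e6 cycles per second,
    # so the period is 1e9 / (clock_mhz * 1e6) = 1000 / clock_mhz
    return 1000.0 / clock_mhz


for mhz in (67, 83, 100):
    print(f"{mhz} MHz -> {cycle_time_ns(mhz):.1f} ns")
```

The 67 MHz case works out to just under 15 ns, which is why such chips carry the rounded 15ns rating.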

The original SDRAM modules used either 83 MHz chips (12ns) or 100 MHz chips (10ns); however, these were only rated for 66 MHz bus operation. Because of delays introduced by signal synchronization, a module built from 100 MHz chips will, in many cases, operate reliably at about 83 MHz. These SDRAM modules are now called PC66, to differentiate them from those conforming to Intel's PC100 specification.

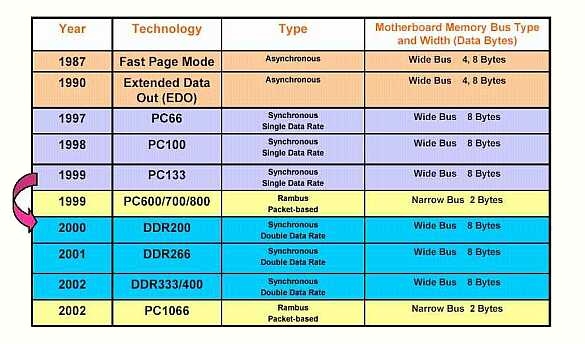

The history of PC RAM as portrayed by Kingston Technology shows how SDRAM has been a long time in the making.
