8K Going Mainstream? What YouTube for Android TV Support Means for Next-Gen High-Res

8K content still faces many hurdles.

Google has added "limited" 8K resolution (7680 x 4320) support to its YouTube app for Android TV, its operating system targeting smart TVs, set-top boxes and the like. Previously, playing 8K YouTube videos required a properly specced PC or console, but this recent addition is a key development in the industry's adoption of 8K.

The Xbox Series X/S and PlayStation 5 are already helping to set the stage for wider adoption of 8K in general. Some of the new best graphics cards also support 8K.

The latest update for the YouTube app for Android TV (version 2.12.08) came out last week, bringing support for 8K video playback on TVs running Android 10 or newer, reports PCWatch.

The announcement may seem odd to those who follow YouTube, since the service added support for 8K videos back in 2015 (only for PCs). However, this is still key because it brings 8K content to the living room without a console or PC. But how big of a breakthrough is it?

Monitors and televisions featuring 8K resolution are still expensive. Many are just getting into 4K, and it'll be a while before the industry has convinced shoppers that an 8K screen is necessary.

Also making 8K hardware seem less beneficial is the lack of 8K content actually available to enjoy. 8K content, be it broadcast, streaming video or discs, is still rare. This is partly due to cost and the complexities of distributing 8K content.

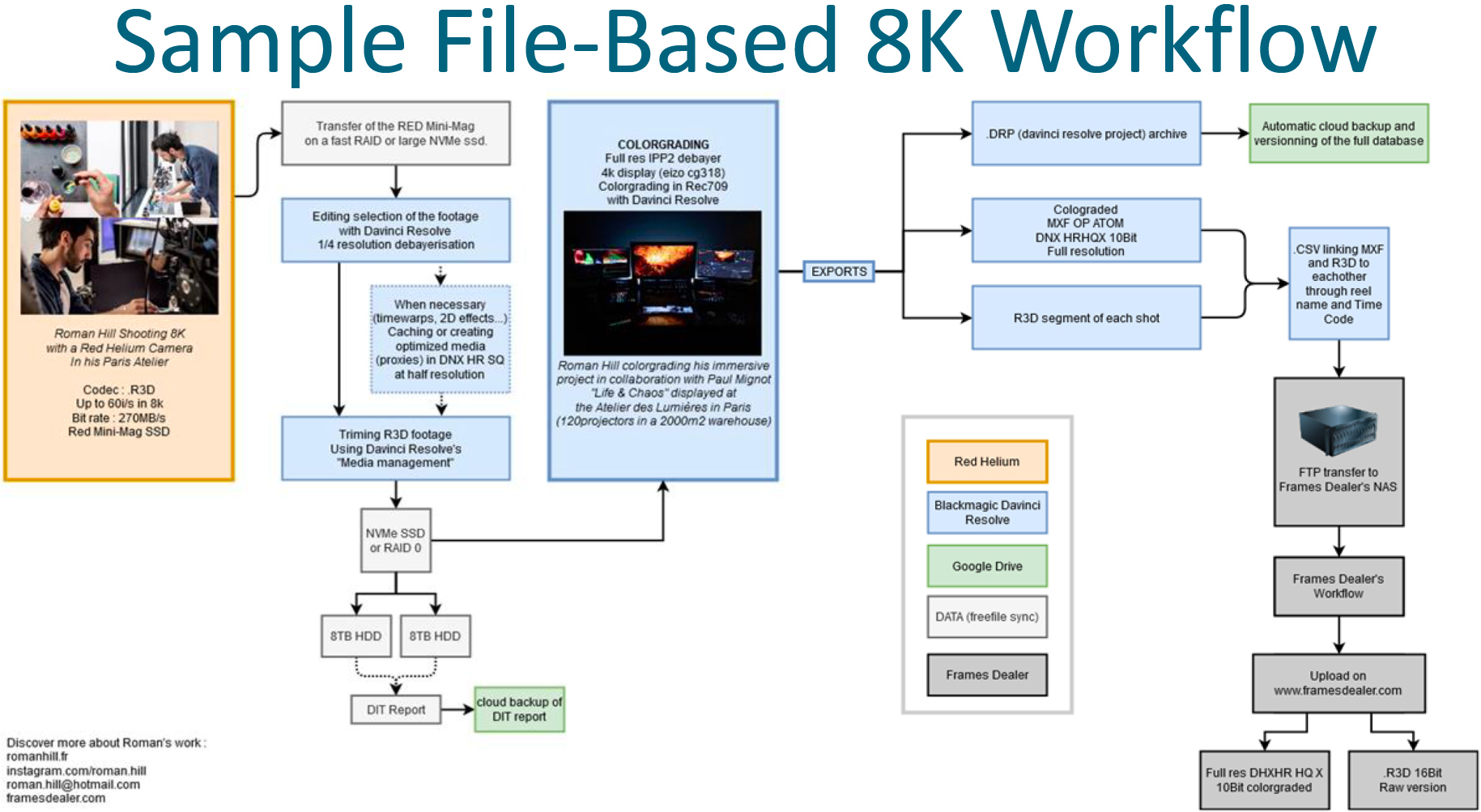

8K Production Costs

From a production point of view, everything is relatively straightforward. There are broadcast, cinema and even consumer-grade 8K digital cameras (and even smartphones with 8K capabilities) available from more than a dozen companies. Movies shot on film can also be scanned in 8K. There are 8K post-production hardware/software solutions and processes. Several major existing, as well as upcoming, projects were at least partly shot in 8K (Bloodshot, The New Mutants, Black Widow, Morbius), and there are already a bunch of movies shot in the ARRIRAW 6.5K format (Mulan, The Revenant, The Call of the Wild).


Note that the ongoing 8K rollout coincides with the rollout of the Rec. 2020/BT.2020 color space both for cinemas and televisions, which adds certain challenges to 8K post-production. Due to higher requirements for processing power, storage and memory, 8K/Rec. 2020 production equipment and post-production tools are more expensive than their 2K and 4K counterparts. Therefore, while beyond-4K hardware and software is available, decision-makers will have to invest in 8K production before 8K content can become widespread.

8K Distribution Challenges

Shooting a movie using a high-end camera and post-processing it in 6.5K or 8K resolution is a bit harder and more expensive than making a 2K or 4K film, but that's only half of the story. The distribution of 8K content faces numerous roadblocks.

Several 8K projectors are already available, but that doesn't mean they're flying off shelves. Cinemas that recently invested in 4K at 120 Hz Rec. 2020 projectors are having a particularly hard time making money off of them due to pandemic-related lockdowns and movie release delays. As a consequence, not a lot of cinemas are 8K-ready today.

8K TVs are more widespread, but getting actual 8K content on them is somewhat tricky. The 8K Association says that it is highly unlikely that another optical disc format for 8K movies will be introduced anytime soon. That makes streaming the main option, since satellite broadcasting isn't exactly widespread. In contrast, local IPTV services are available in select territories.

Distributing 8K content via the Internet is a bandwidth challenge for streaming services, ISPs and sometimes even end users. An 8K HEVC-encoded video stream can use 50-100 Mbps of bandwidth, which is expensive from both a bandwidth and a storage point of view.
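To put those bitrates in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes a constant bitrate and decimal units (1 GB = 1,000,000,000 bytes); the function name is ours for illustration, and real streams vary their bitrate from scene to scene.

```python
def gigabytes_per_hour(bitrate_mbps: float) -> float:
    """Convert a constant stream bitrate in Mbps to decimal gigabytes per hour."""
    bits_per_hour = bitrate_mbps * 1_000_000 * 3600  # megabits -> bits, times seconds per hour
    bytes_per_hour = bits_per_hour / 8               # bits -> bytes
    return bytes_per_hour / 1_000_000_000            # bytes -> decimal gigabytes


# The 50-100 Mbps range quoted above for an 8K HEVC stream
for rate in (50, 100):
    print(f"{rate} Mbps is about {gigabytes_per_hour(rate):.1f} GB per hour")
```

At the quoted 50-100 Mbps, an hour of 8K HEVC video works out to roughly 22.5 to 45 GB that has to be stored by the service and delivered to every viewer.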

YouTube and Vimeo already have hundreds of 8K videos encoded using various codecs (AV1 or HEVC), but many of them come from TV manufacturers wanting to demonstrate the capabilities of their latest devices, travel bloggers, indie producers or even end users. This kind of content isn't likely to become widely popular, but if more people get 8K TVs, and popular 8K content is released, bandwidth might become a problem.

'Smart Streaming' Might Help

On the codec side of matters, there are AV1, HEVC and LCEVC encoders available for 8K today. They use quite a lot of bandwidth for 8K videos. In the longer term, more advanced AVS3 (finalized in 2020), EVC (feature freeze as of August 2020) and VVC/H.266 (finalized in 2020) codecs will be used. These codecs significantly increase the complexity of decoders (about twice that of HEVC), so their adoption will take some time. Furthermore, new hardware, like SoCs and TVs, will be required to take advantage of the new compression formats.

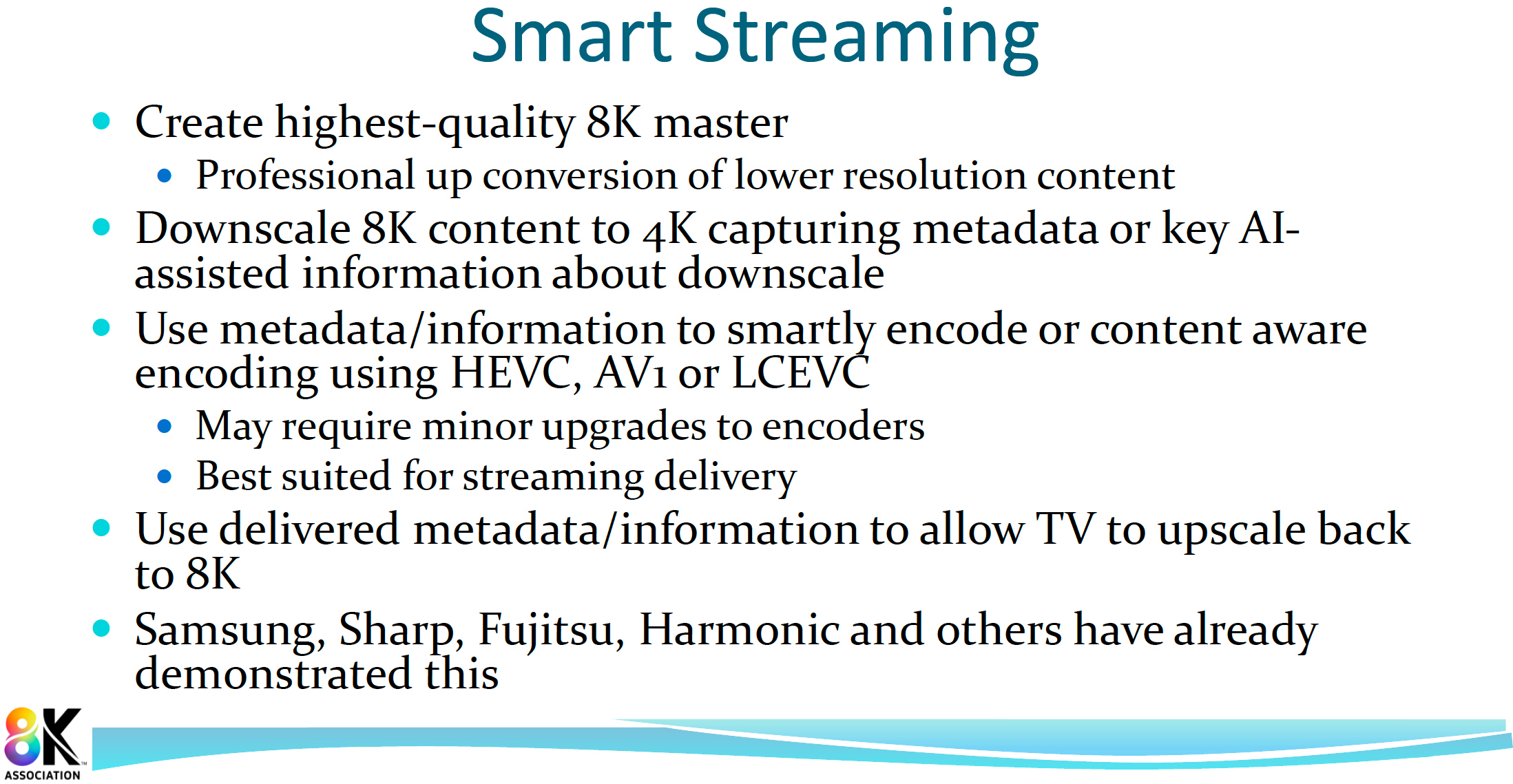

Currently, streaming services and hardware makers are trying to find a way to reduce bitrates. One such method is dubbed Smart Streaming.

Smart Streaming is an umbrella term that describes proprietary approaches to reducing the bandwidth required for 8K streaming. Several hardware manufacturers (Samsung, Sharp and Fujitsu, just to name a few) are considering Smart Streaming, in combination with existing codecs, as the most practical near-term option for 8K streaming.

Samsung is looking to provide content creators with its AI upscaler algorithm to downscale their 8K master while capturing downscaling metadata and embedding it into the HEVC stream. According to Samsung, its approach reduces bandwidth requirements from 50 Mbps to 15 Mbps. This is a proprietary solution for Samsung TVs, but technically it could work with live TV. According to the 8K Association, Amazon is experimenting with the technology.
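For a sense of scale, the short Python sketch below works through Samsung's quoted figures. The 100 Mbps household connection is our own hypothetical assumption for illustration, not a number from Samsung or the 8K Association, and the function names are ours as well.

```python
def bandwidth_saving(original_mbps: float, reduced_mbps: float) -> float:
    """Fraction of bandwidth saved when going from the original to the reduced bitrate."""
    return (original_mbps - reduced_mbps) / original_mbps


def streams_per_link(link_mbps: float, stream_mbps: float) -> int:
    """Whole number of simultaneous streams that fit on a link, ignoring overhead."""
    return int(link_mbps // stream_mbps)


ORIGINAL_MBPS = 50  # Samsung's quoted bitrate without its approach
REDUCED_MBPS = 15   # Samsung's quoted bitrate with its approach
LINK_MBPS = 100     # hypothetical household connection (our assumption)

saving = bandwidth_saving(ORIGINAL_MBPS, REDUCED_MBPS)
print(f"Bandwidth saved: {saving:.0%}")
print(f"Streams at {ORIGINAL_MBPS} Mbps: {streams_per_link(LINK_MBPS, ORIGINAL_MBPS)}")
print(f"Streams at {REDUCED_MBPS} Mbps: {streams_per_link(LINK_MBPS, REDUCED_MBPS)}")
```

If the claim holds, that is a 70% reduction, and the assumed 100 Mbps connection could carry six such streams instead of two.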

Harmonic, a developer of video streaming and cable access solutions, proposes to use content-adaptive encoding (CAE), along with existing codecs. This solution might work on a broad range of hardware but is unlikely to work for live TV.

Since Smart Streaming is not a universal standard, it remains to be seen whether it'll take off. In any case, it always takes a while before such standards are finalized.

Final Thoughts

Google deserves some kudos for adding 8K playback to its YouTube for Android TV app. It's possible that Microsoft and Sony's latest game consoles nudged the company in this direction, but it's still a big step for mainstream 8K.

8K streaming support by Android TVs, the Xbox Series X/S and PlayStation 5 sets the stage for wider adoption of 8K in general and particularly 8K streaming.

Major Hollywood studios have produced numerous 6.5K and 8K movies already with more to come. Without a new optical disc format, they are poised to distribute these movies using various streaming platforms. While we don't expect blockbuster 8K movies from the Big Five to debut on YouTube, the latter might develop original 8K content.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


N0Spin While I and many others with a specific interest in cameras and video processing will most likely find news and articles like this forward looking and therefore interesting, I believe it might make real sense to ground such articles with further qualification and discussion about exactly how and where a user might actually be able to perceive a true benefit from 8K video.

I know there were some basic concepts in the past about requiring at least a 40-50" screen (I believe?), before many users could even truly recognize much of a difference between a 1080p and 4K video, let alone between 2k and 4K.

Now for cinemas that want to push the envelope, etc., I completely understand where this can and will have its place, but I truly wonder just how large a TV or home projection screen would be required or recommended to best utilize 8K, let alone what consumer level processing hardware is truly up to the task to properly deliver up such content at a meaningful level of quality.

voyteck Not sure about TV/Cinema since too much detail can spoil the experience (steal the viewer's focus) but I would really want to have 8K in my desktop display. It might be just enough to turn off font smoothing completely. Ultra HD on a 27" screen might seem sharp enough but it's not, even with subpixel rendering. It's not only about seemingly smooth edges because even in Ultra HD the thickness of letters changes, as well as blank space between them, which in turn affects more or less readability and understanding (depends on the font choice).

N0Spin I could definitely understand your point for graphics or print production professionals, though I can't say I've actually witnessed the impact myself.

Currently my main home PC has a decent couple year old 27" 2K display which unfortunately appears to have a single dead (dark) pixel at this point. One of the things which reinforces my view about the value (at least for me) on increasing resolutions is the fact that while sitting 1-2' from my screen, unless the image surrounding that one pixel happens to be a completely white field I can't even find it, and even then, it is so small I have to literally go looking for it.

As for movies and video content, I can see where 4K TVs make some sense, but even that (at least AFAIK) seemed to really require at least a minimum of around a 50" TV to truly appreciate it. Once again though the amount of real 4K content which is actually available over cable etc. appears to be somewhat limited as far as I've seen.

voyteck By the way, there is a simple test. Download IntelliJ and watch in a live view how different font smoothing settings change the content or the sample text. It's really startling. I'm not even sure 8K will be enough to get rid of smoothing entirely.

Even comparing laser print to screen reveals substantial difference at how fonts look. Obviously, the smaller the print, the bigger the difference. Everything might seem perfectly sharp from two feet away but some letters (lines) seem thicker than others even if they shouldn't. For example, m can have three different stems and i can swell either to the left or to the right. Which, as I said previously, changes the intended distance between letters, which, in turn, needs to be compensated somehow by the brain (provided the original font has been truly optimized for readability).

That's why proofreaders and copy editors have (or had?) always tended to read a printed copy at least once.