SanDisk A110 PCIe SSD: Armed With The New M.2 Edge Connector

We got our hands on an early sample of SanDisk's A110 SSD. So what? Big deal? Not a chance. This thing is PCI Express-attached and sports the new M.2 edge connector. Read on to learn more about the next generation of solid-state storage connectivity.


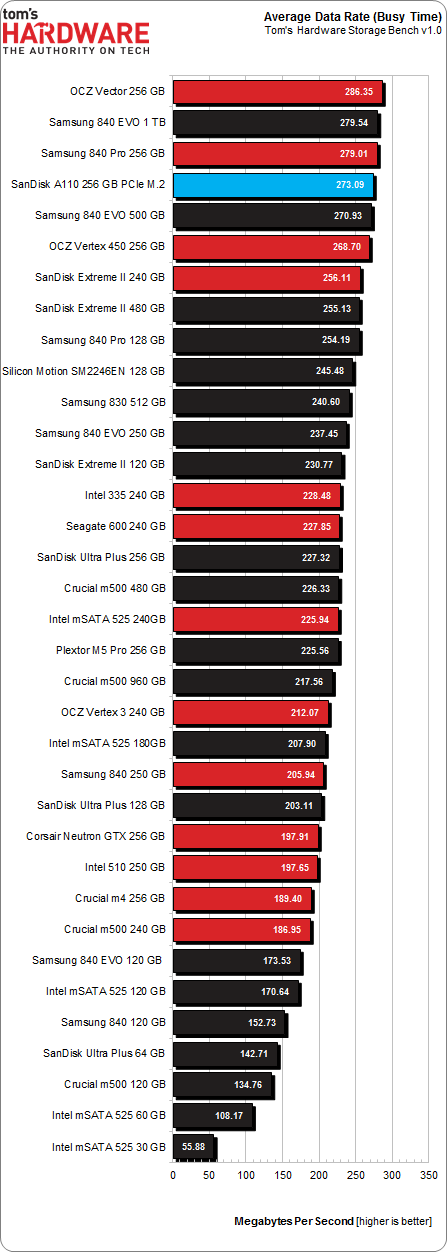

Results: Tom's Hardware Storage Bench v1.0

Storage Bench v1.0 (Background Info)

Our Storage Bench incorporates all of the I/O from a trace recorded over two weeks. Replaying this sequence to capture performance gives us a bunch of numbers that aren't really intuitive at first glance. Most idle time gets expunged, leaving only the time that each benchmarked drive was actually busy working on host commands. So, by dividing the amount of data exchanged during the trace by that busy time, we arrive at an average data rate (in MB/s) metric we can use to compare drives.
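To make the metric concrete, here is a minimal sketch of the calculation described above. The function name and the sample figures are hypothetical, chosen only for illustration; they are not taken from the actual Storage Bench tooling or results, and the sketch assumes decimal megabytes (1 MB = 1,000,000 bytes).

```python
def average_data_rate_mb_s(bytes_transferred: int, busy_seconds: float) -> float:
    """Average data rate in MB/s: data moved divided by busy (non-idle) time.

    Assumes decimal megabytes (1 MB = 1,000,000 bytes).
    """
    if busy_seconds <= 0:
        raise ValueError("busy_seconds must be positive")
    return (bytes_transferred / 1_000_000) / busy_seconds


# Hypothetical example: 226 GB exchanged while the drive was busy for 1,000 seconds.
rate = average_data_rate_mb_s(226_000_000_000, 1000.0)
print(f"{rate:.1f} MB/s")
```

Because idle time is excluded from the denominator, a drive that finishes the same work in less busy time scores a higher average data rate.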

It's not quite a perfect system. The original trace captures the TRIM command in transit, but since the trace is played on a drive without a file system, TRIM wouldn't work even if it were sent during the trace replay (which, sadly, it isn't). Still, trace testing is a great way to capture periods of actual storage activity, a great companion to synthetic testing like Iometer.

Incompressible Data and Storage Bench v1.0

Also worth noting is the fact that our trace testing pushes incompressible data through the system's buffers to the drive getting benchmarked. So, when the trace replay plays back write activity, it's writing largely incompressible data. If we run our storage bench on a SandForce-based SSD, we can monitor the SMART attributes for a bit more insight.

| Mushkin Chronos Deluxe 120 GB SMART Attributes | RAW Value Increase |
|---|---|
| #242 Host Reads (in GB) | 84 GB |
| #241 Host Writes (in GB) | 142 GB |
| #233 Compressed NAND Writes (in GB) | 149 GB |

Host reads are greatly outstripped by host writes, to be sure. That's all baked into the trace. But with SandForce's inline deduplication/compression, you'd expect that the amount of information written to flash would be less than the host writes (unless the data is mostly incompressible, of course). Instead, the drive wrote 149 GB to NAND against 142 GB of host writes: for every 1 GB the host asked to be written, Mushkin's drive is forced to write 1.05 GB.

If our trace replay were just writing easy-to-compress zeros out of the buffer, we'd see writes to NAND as a fraction of host writes. Using incompressible data instead puts the tested drives on a more equal footing, regardless of the controller's ability to compress data on the fly.
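The 1.05 GB figure follows directly from the SMART table above. As a quick sketch (the function name is ours, introduced only for illustration), the ratio of NAND writes to host writes can be computed like this:

```python
def nand_to_host_write_ratio(nand_writes_gb: float, host_writes_gb: float) -> float:
    """GB written to flash for every 1 GB the host asked to be written."""
    if host_writes_gb <= 0:
        raise ValueError("host_writes_gb must be positive")
    return nand_writes_gb / host_writes_gb


# Raw-value increases from the Mushkin Chronos Deluxe table:
# attribute #233 (compressed NAND writes) = 149 GB, attribute #241 (host writes) = 142 GB.
ratio = nand_to_host_write_ratio(149, 142)
print(round(ratio, 2))  # 1.05
```

A ratio below 1.0 would indicate that the controller's compression was shrinking the workload; a value slightly above 1.0, as here, is consistent with largely incompressible data.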

Average Data Rate

The Storage Bench trace generates more than 140 GB worth of writes during testing. Obviously, this tends to penalize drives smaller than 180 GB and reward those with more than 256 GB of capacity.

Despite a heady advantage in the sequential performance benchmarks, the little A110 doesn't quite secure a first-place finish (even if the difference between those top four finishers is negligible at best). The 256 GB Vector actually earns top honors, though that model is comparatively very expensive. SanDisk's PCIe-connected A110 is otherwise surrounded by Samsung's compelling SSDs.


Mike Friesen: Awesome new stuff. Can't wait to see if this drive actually uses the full potential of the M2, and if Samsung or OCZ can one-up them.

-

cryan: 11487924 said: Awesome new stuff. Can't wait to see if this drive actually uses the full potential of the M2, and if Samsung or OCZ can one-up them.

Samsung actually has some pretty awesome M.2 PCIe action going on. We're trying to get our hands on everything, so stay tuned.

Regards,

Christopher Ryan

-

It will be nice to see vendors implement the NVMe connectors in the desktop mobo's, which in turn will redefine case design, as less storage space will be required for storage. I am aware that the initial intent is to direct these at the mobile market, but desktops can benefit as well.

-

cryan: 11488018 said: It will be nice to see vendors implement the NVMe connectors in the desktop mobo's, which in turn will redefine case design, as less storage space will be required for storage. I am aware that the initial intent is to direct these at the mobile market, but desktops can benefit as well.

You'll really see NVMe take off on the desktop with the move towards SATA Express. A SSD on SATA Express will leverage NVMe and two PCIe Gen 3 lanes. Though some motherboards will (and already do) have M.2 connectors, M.2 really makes more sense in mobile applications. M.2 will only get traction on the desktop insofar as it will begin to replace mSATA. Tons of mainboards, especially smaller form factor products embrace mSATA, and moving to M.2 is a natural transition. However, M.2 drives are hard to find right now, and we really won't see a plethora of options until next year.

Regards,

Christopher Ryan

-

nekromobo: I got M.2 toshiba ssd in my Sony Vaio Pro 13.. review that?

and it should have samsung M.2 in some countries..

-

CaedenV: I may no longer have motivation to upgrade my system based on CPU specs, but with DDR4, M.2, new restive storage based SSDs, and better chipset features I will still have enough reason to upgrade in a year or two.

-

jimmysmitty: 11488122 said: 11488018 said: It will be nice to see vendors implement the NVMe connectors in the desktop mobo's, which in turn will redefine case design, as less storage space will be required for storage. I am aware that the initial intent is to direct these at the mobile market, but desktops can benefit as well.

You'll really see NVMe take off on the desktop with the move towards SATA Express. A SSD on SATA Express will leverage NVMe and two PCIe Gen 3 lanes. Though some motherboards will (and already do) have M.2 connectors, M.2 really makes more sense in mobile applications. M.2 will only get traction on the desktop insofar as it will begin to replace mSATA. Tons of mainboards, especially smaller form factor products embrace mSATA, and moving to M.2 is a natural transition. However, M.2 drives are hard to find right now, and we really won't see a plethora of options until next year.

Regards,

Christopher Ryan

That's what I was thinking. SATA Express is going to be fast enough for now as I have used PCIe SSDs before (OCZ Revo based drive) and compared to my 520 its hard to notice a difference, especially since there are other bottlenecks stopping it from being able to utilize that bandwidth.

This will be great for ultra portable systems though and ITX systems.

-

cryan: 11488367 said: I got M.2 toshiba ssd in my Sony Vaio Pro 13.. review that?

and it should have samsung M.2 in some countries..

Absolutely... just send it my way and consider it done.

Regards,

Christopher Ryan

-

mikeangs2004: will there be RAID or SLI/CFX for PCIe based SSD's?

I don't think so b/c it's already way above 6G limit.