ASRock's E350M1: AMD's Brazos Platform Hits The Desktop First

We had the opportunity to preview the Zacate APU late last year at AMD’s headquarters in Austin, Texas. Now we have the first retail motherboard based on the Brazos platform in ASRock’s E350M1. Today we’re asking: what can the Fusion initiative really do?

Transcode Performance: The APU, CUDA, Stream, And Software

Because Zacate is limited to decode acceleration, our expectations of what AMD’s APU can do in transcode-oriented workloads have to be tempered.

We’re testing each solution with a 10.5 Mb/s trailer of the movie Death Race from Apple’s Web site, encoded using H.264 video and AAC multi-channel audio.

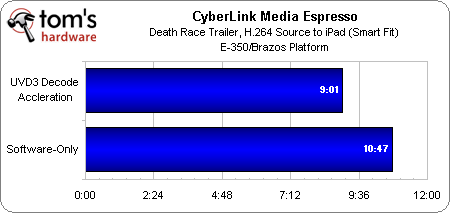

Because the E-350 doesn’t include hardware-accelerated encoding, we can only test it in software-only mode and with decode acceleration enabled. Using an optimized copy of CyberLink MediaEspresso 6.5 and converting to the canned iPad profile (Smart Fit, H.264, AAC), we cut what would have been a nearly 11-minute transcode down to just over nine minutes, an almost-20% speed-up.
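A speed-up figure like "almost 20%" can be computed two ways: as the share of time saved or as the increase in throughput. The minimal Python sketch below shows both, assuming round figures of 11 minutes (software only) and 9 minutes (decode acceleration on); the article only gives "nearly 11" and "just over nine," so these inputs are approximations, and the function name `transcode_gain` is hypothetical.

```python
def transcode_gain(software_s: float, accelerated_s: float) -> tuple[float, float]:
    """Return (percent of time saved, percent throughput increase)."""
    # Share of the original run time that acceleration removes
    time_saved = (software_s - accelerated_s) / software_s * 100
    # How much more work per unit time the accelerated run completes
    throughput_gain = (software_s / accelerated_s - 1) * 100
    return time_saved, throughput_gain

# Assumed round figures: ~11 minutes software-only, ~9 minutes with decode acceleration
saved, faster = transcode_gain(11 * 60, 9 * 60)
print(f"time saved: {saved:.1f}%, throughput gain: {faster:.1f}%")
```

With these assumed inputs, the time saved works out to about 18% and the throughput gain to about 22%, so "almost 20%" sits between the two conventions.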

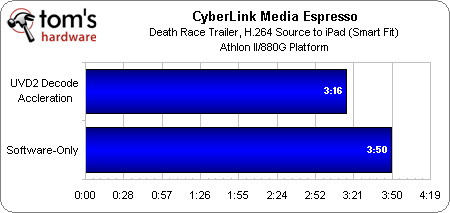

AMD’s 880G chipset, armed with Radeon HD 4250 graphics (40 stream processors), is similarly too anemic to handle encode acceleration, so that task rests on the low-power Athlon II X2 240e running at 2.8 GHz. As a result, we can only compare software-only transcoding against transcoding with the chipset’s decode acceleration enabled. Because the desktop-class CPU is so much faster than Zacate, even the software-only test blazes by in less than four minutes. Decode acceleration still gets the job done 17% faster, though.

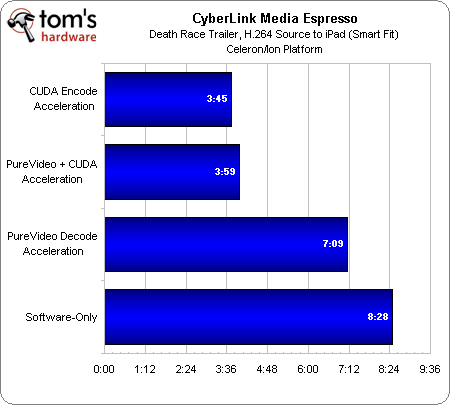

In software-only mode, Intel’s 10 W Celeron SU2300 takes more than eight minutes to complete the transcode. Flipping the switch on Nvidia’s Ion chipset to enable PureVideo decode support cuts that to just over seven minutes. Adding hardware-accelerated encoding has an even more dramatic impact, cutting the transcode to less than four minutes. All told, turning on hardware-accelerated encode and decode delivers 212% of the software-only performance, meaning the job runs roughly 2.1 times as fast.

Now, the most interesting result surfaces when you switch off decode acceleration while leaving accelerated encoding enabled. The job drops to 3:45, 14 seconds faster than with both decode and encode acceleration enabled. Why? As it turns out, when you ask Ion to handle both encode and decode, the decode side of that equation slows down, so the fully accelerated configuration takes longer than it does when the CPU handles decoding on its own.
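The Ion numbers can be cross-checked with a little time arithmetic. The sketch below, a minimal Python example, derives the fully accelerated time from the 3:45 encode-only result plus 14 seconds, then works backward to the software-only time implied by the 212% figure. It assumes "212% performance" means the software-only time divided by the fully accelerated time; that reading is an interpretation, not something the benchmark data states directly. The helper names `to_seconds` and `to_mmss` are hypothetical.

```python
def to_seconds(mmss: str) -> int:
    """Convert an "m:ss" string to a number of seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)

def to_mmss(total: float) -> str:
    """Convert seconds to an "m:ss" string, rounding to the nearest second."""
    minutes, seconds = divmod(round(total), 60)
    return f"{minutes}:{seconds:02d}"

encode_only = to_seconds("3:45")   # Ion encode on, PureVideo decode off
full_accel = encode_only + 14      # encode + decode was 14 s slower
print(to_mmss(full_accel))         # 3:59

# Assumption: 212% = software-only time / fully accelerated time
implied_software = 2.12 * full_accel
print(to_mmss(implied_software))   # 8:27
```

An implied software-only time of about 8:27 fits the "more than eight minutes" result. Reading 212% as a 212% *increase* (3.12x) would instead imply a software-only run of roughly 12.4 minutes, which the measured numbers don't support.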

If you’re using a mobile system, you’ll still want to leave both hardware options turned on, though: full acceleration reduces host processor load and, consequently, extends battery life.


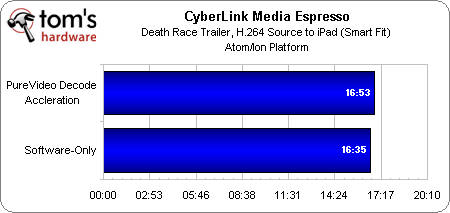

Despite its Ion chipset, the Atom-based platform does not expose CUDA acceleration as an option in MediaEspresso, so we could only select PureVideo decode acceleration. As the results demonstrate, that doesn't help our transcode job at all. In fact, the overhead of transferring data from the graphics processor back to the CPU is enough to slow the workload down compared with a software-only run.


iam2thecrowe now they need some devs to take advantage of that apu to see its full potential as a processor.

Reynod This is an awesome processor ...

Chris ... did you manage to overclock it at all?

Give it your best shot ... call crashman in with the liquid nitrogen if you need to, mate!!

Really impressive stats for such a small piece of silicon.

dogman_1234 So the Brazos is great for media and hard processing, I assume. If someone came to me and asked for a good platform to watch Blu-ray... I would say get the Brazos APU for them, right?

sparky2010 Nice, things are starting to look good for AMD, and i hope it stays that way as they start unveiling their mainstream and highend processors, because i'm really fed up with intel dictating crazy prices.....

cangelini Didn't get a chance to mess with overclocking. If this is something you guys want to see, I might try to push it a little harder over the weekend.

joytech22 Yeah, that would be much appreciated. These little chips are so much faster than Atom; let's see if you can get them to perform similarly to a dual-core CPU at 1.8 GHz.

cangelini Alright, I'll see what I can do. A shiny new video card landed this afternoon, so that's going to monopolize the bench for much of the weekend ;)

dEAne Yes, integration is the key to higher performance, lower power consumption, and lower price (affordability is what people really wanted).

haplo602 can you also run gaming benchmarks with a 5670 or similar plugged into the PCIe slot? Just to have a look at how much the limited memory interface will bottleneck it...

also what happens with the integrated graphics core when you plug in a discrete GPU? you gave so much detail about this in the Sandy Bridge review but totally skip it for Fusion...

the board got me interested. I am trying to buy a small "workstation terminal" ... something to code OpenGL/OpenCL on a budget. Seems this is what I am looking for.