Call Of Duty: Ghosts Graphics Performance: 17 Cards, Tested

It's already a commercial blockbuster. But does Call of Duty: Ghosts improve the first-person shooter genre, or simply rehash it? We look at this series' newest installment and test to see what kind of hardware you'll need for smooth play on the PC.

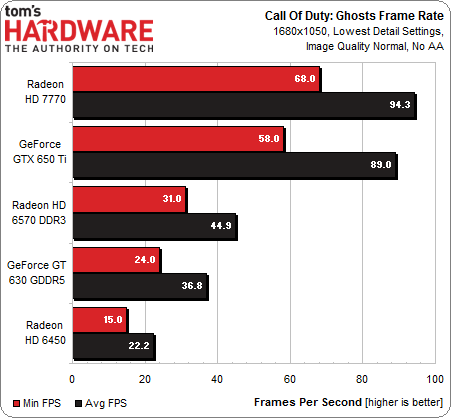

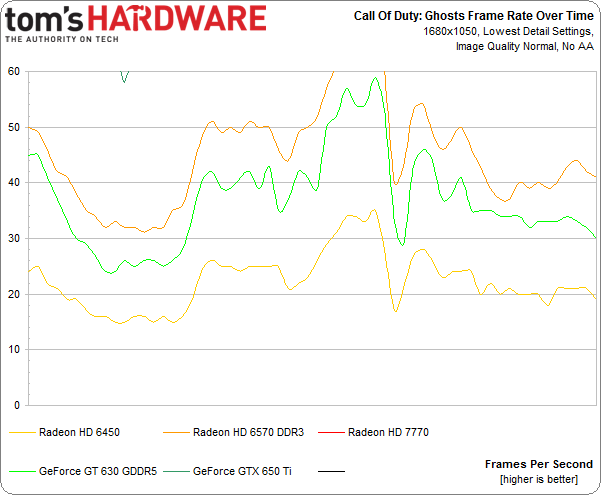

Results: Low Quality, 1680x1050

Using the same settings as last time, we kick the resolution up to 1680x1050. The Normal image quality setting is still upscaling what we see on-screen from a lower render target, though. Because of this, average frames per second are barely any lower than the 1280x720 results.
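As a rough illustration of why that matters, the sketch below compares the two output resolutions by pixel count. Moving from 1280x720 to 1680x1050 nearly doubles the number of pixels on screen, so a game that shaded every output pixel would normally slow down noticeably. If the scene is rendered to a smaller internal target and scaled up, the shaded pixel count stays roughly constant. The article does not state the internal render target size, so only the output resolutions are compared here, and the helper name `pixel_count` is purely illustrative.

```python
# Compare the two output resolutions named in the article by pixel count.
# The internal (pre-upscale) render target size is not given in the article,
# so it is deliberately left out of this sketch.

def pixel_count(width: int, height: int) -> int:
    """Return the number of pixels in a width x height frame."""
    return width * height

low = pixel_count(1280, 720)
high = pixel_count(1680, 1050)
ratio = high / low  # how many times more output pixels at the higher resolution

print(f"1280x720:  {low:,} pixels")
print(f"1680x1050: {high:,} pixels ({ratio:.2f}x as many)")
```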

Once again, AMD's Radeon HD 6570 DDR3 is the lowest you'd want to go for playability.

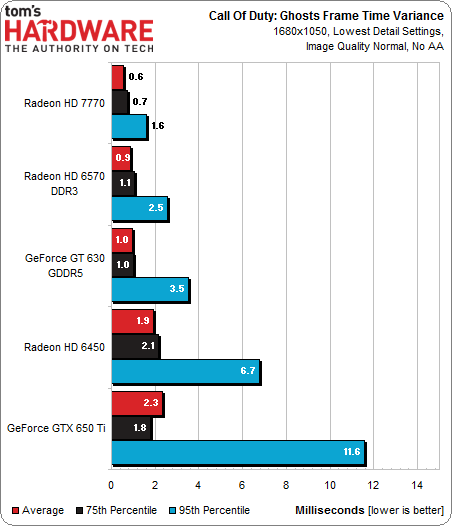

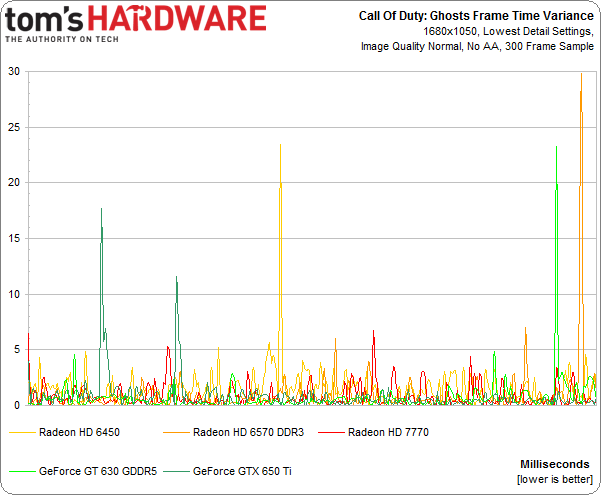

The first frame time variance chart shows us that latency typically isn't an issue, though graphing 300 frames shows some spikes on a few of the cards we're testing.
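For readers who want to run a similar analysis on their own frame time logs, here is a minimal sketch. It assumes "frame time variance" means the absolute difference between consecutive frame times, summarized as an average plus 75th and 95th percentiles; this page does not spell out the exact calculation, so treat that definition as an assumption. The sample data is made up, and the function names are hypothetical.

```python
from statistics import mean, quantiles

def consecutive_frame_deltas(frame_times_ms):
    """Absolute difference between each frame time and the one before it."""
    return [abs(b - a) for a, b in zip(frame_times_ms, frame_times_ms[1:])]

def summarize(frame_times_ms):
    """Average, 75th and 95th percentile of consecutive frame time deltas."""
    deltas = consecutive_frame_deltas(frame_times_ms)
    # quantiles(n=100) returns 99 cut points; index 74 is the 75th percentile.
    pct = quantiles(deltas, n=100, method="inclusive")
    return {"average": mean(deltas), "p75": pct[74], "p95": pct[94]}

# Made-up frame times in milliseconds, with one spike (33.4 ms).
sample = [16.7, 16.9, 16.5, 33.4, 16.8, 16.6, 17.0, 16.7]
summary = summarize(sample)
print(summary)
```

A single long frame shows up as two large deltas (into and out of the spike), which is why a handful of spikes can dominate the upper percentiles even when the average stays low.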


Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.


jimmysmitty: "I think it's safe to say that Call of Duty defined, and then refined, the console-based first-person shooter experience"

It is funny to see this as CoD1 and CoD2 were originally PC games. CoD2 was the first to be ported to the 360 but CoD3 was the first multi-console one of the series, with no release on the PC.

I loved 1 and 2 and 4 was pretty good but now CoD is just the same thing every year. It's just a cash cow currently with no innovation while 1 & 2 were very innovative (CoD1 was the first to have real recorded sounds for every gun used in the game).

I haven't done a CoD since 2. It's too bad as it could have been a great series if it didn't become console and money centric.

Also, on page 9 the chart for the FPS says Battlefield 4......

lunyone: If you have a PhII x4 965 BE, you can just OC it to get a bit more FPS if you like, so there is that option. Obviously you want more CPU, but not all of us have the $ to do so.

Cons29: My last CoD was MW2, which I stopped playing due to the lack of dedicated servers. The last one I enjoyed was CoD4.

BF is much better (personal opinion): 64 players on a huge map with vehicles and destruction, better than CoD.

Frank Zigfreed: Loving these game graphics performance reviews!!! Keep them coming, tomshardware!!


animeman59: Been playing this game on PC ever since its release, and I gotta say, this is probably one of the worst performing games that I've ever seen. I'm running an FX-8350, a GTX 780, and 32GB of RAM, and this game will still dip below 45fps. I don't care what anyone says, but CoD and IW6 should be running with no issues on a rig like that. It's a little suspicious when I can get a consistent 60fps in a game like Battlefield 4 with max settings, but CoD:Ghosts stutters like Porky Pig. Even Metro: Last Light runs better than CoD:Ghosts!

This game is horribly optimized and buggy. People on Steam forums have been complaining about game-breaking bugs from day one, and there's still issues that haven't been answered for, yet. Like the one in Squad Mode where you can't use any of your squad members in a game, except for the first one. Or the earlier bug where people couldn't even create their first soldier, because they didn't have 3 squad points to unlock it, hence locking them out of multiplayer.

Skip out on this game. Infinity Ward obviously doesn't care about the PC market, and their horrible release just further solidifies that fact. Spend your money on a MP shooter that doesn't insult its audience.

lunyone, quoting animeman59: "Spend your money on a MP shooter that doesn't insult its audience."

Quake or Unreal Tournament, anyone?

oxiide, quoting post 12095151: "LOL @ NVidia frame variance"

I get that you're trying to phrase that as an AMD fanboy taking a shot at Nvidia, but frame variance is all over the place in this review. There's AMD hardware all over those charts too, not just clustered at the low end.

These frame variance numbers often aren't even logical: the HD 7990, with lower frame variance than a single HD 7950? A GTX 690 doing better than a single 670? I think it's clear that the quality of Infinity Ward's PC port is a factor here, and maybe that's more important than pouncing on Nvidia's mistakes.

bemused_fred, quoting animeman59: "I'm running an FX-8350, a GTX 780, and 32GB of RAM"

A mediocre CPU with a top-end GPU and too much RAM? I FOUND YOUR PROBLEM!