
Can I just say how excited I am to have our 'proper' graphics card power consumption equipment and software finally in place? Trusting what the AMD or Nvidia drivers tell you in regards to power (e.g., via GPU-Z) is just asking a bit too much, I think. With the proper equipment, we can also definitively state how much power the various graphics cards are actually using. Reporting 'GPU-only' power (AMD) is at best deceptive, especially when the competition (Nvidia) reports total board power use.

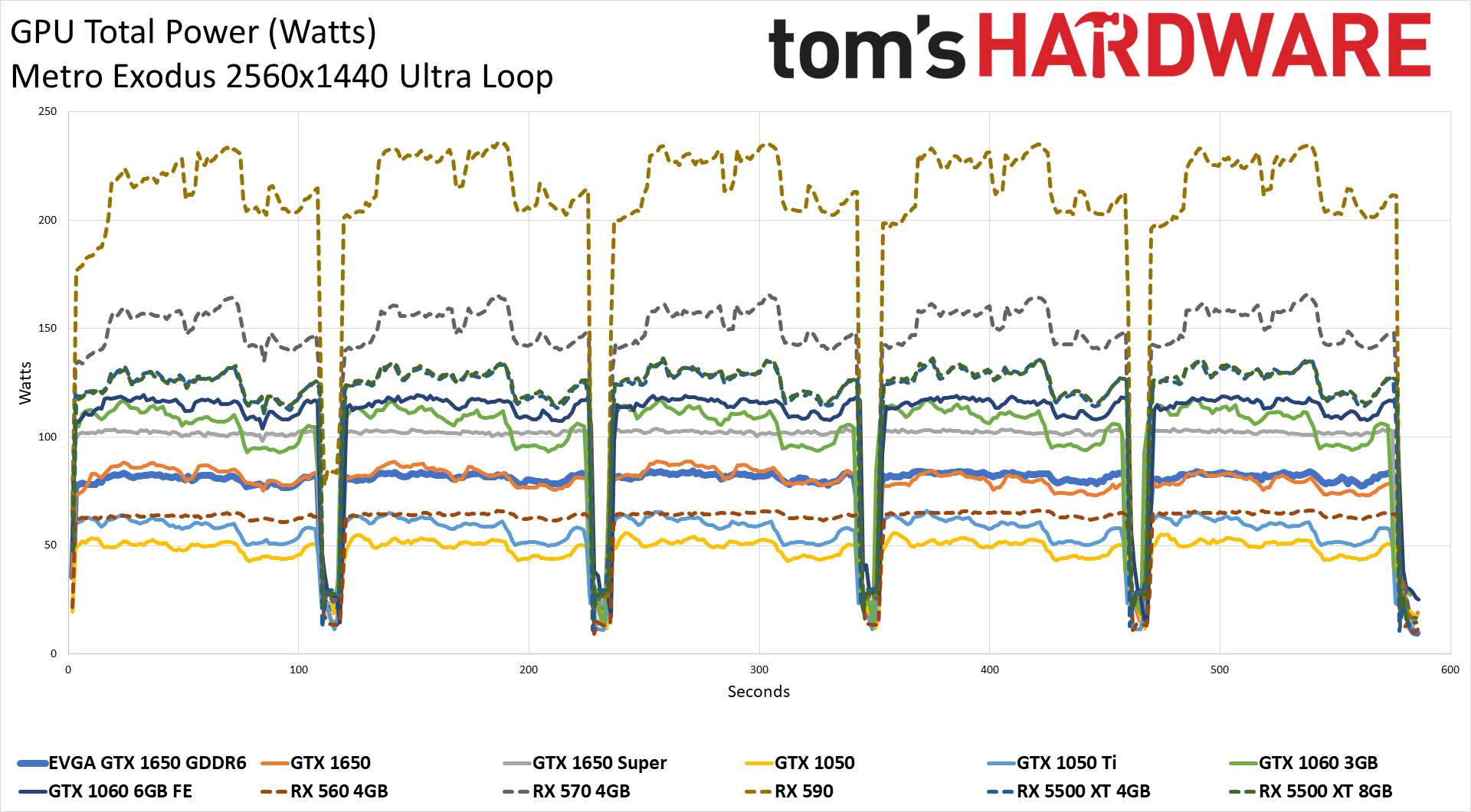

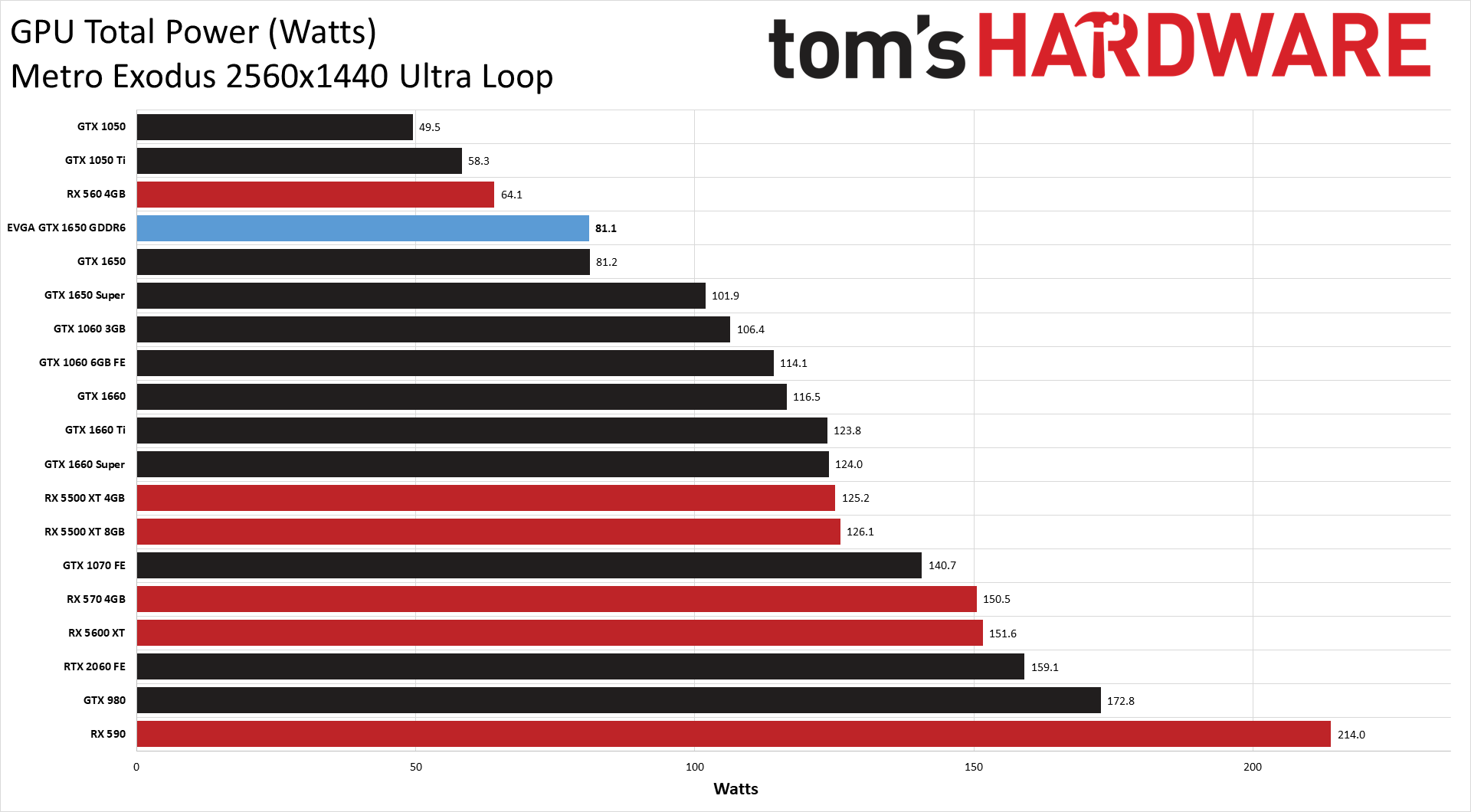

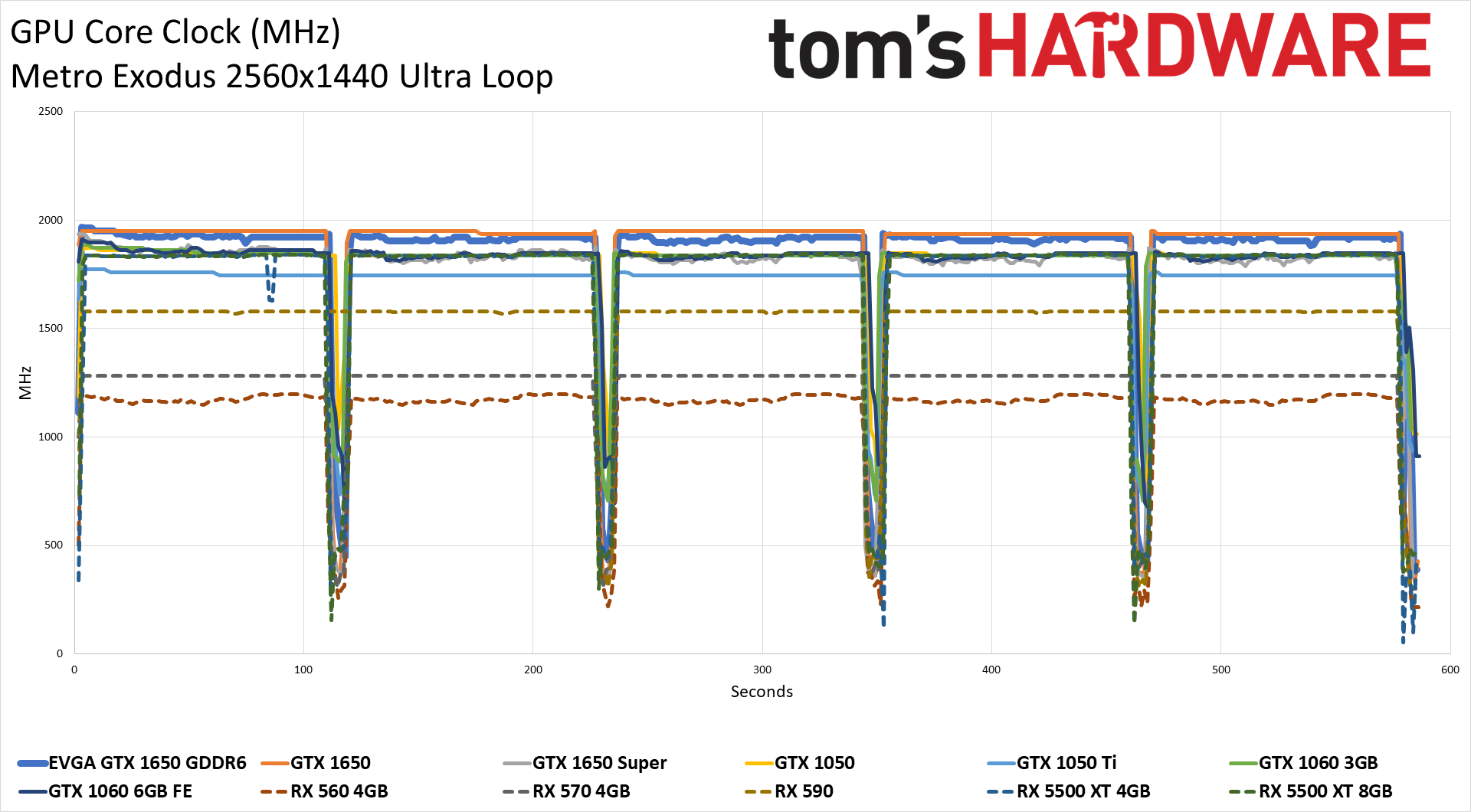

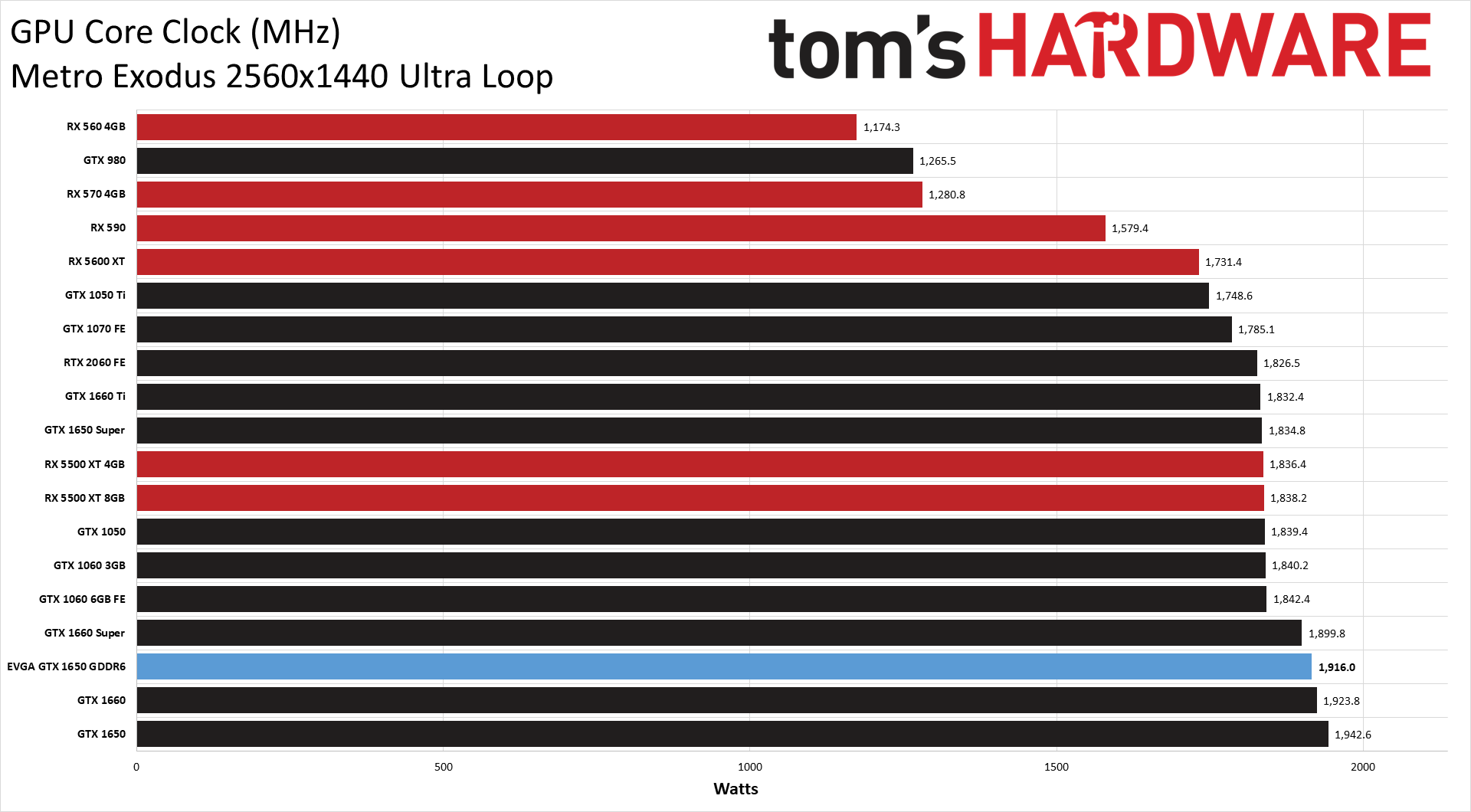

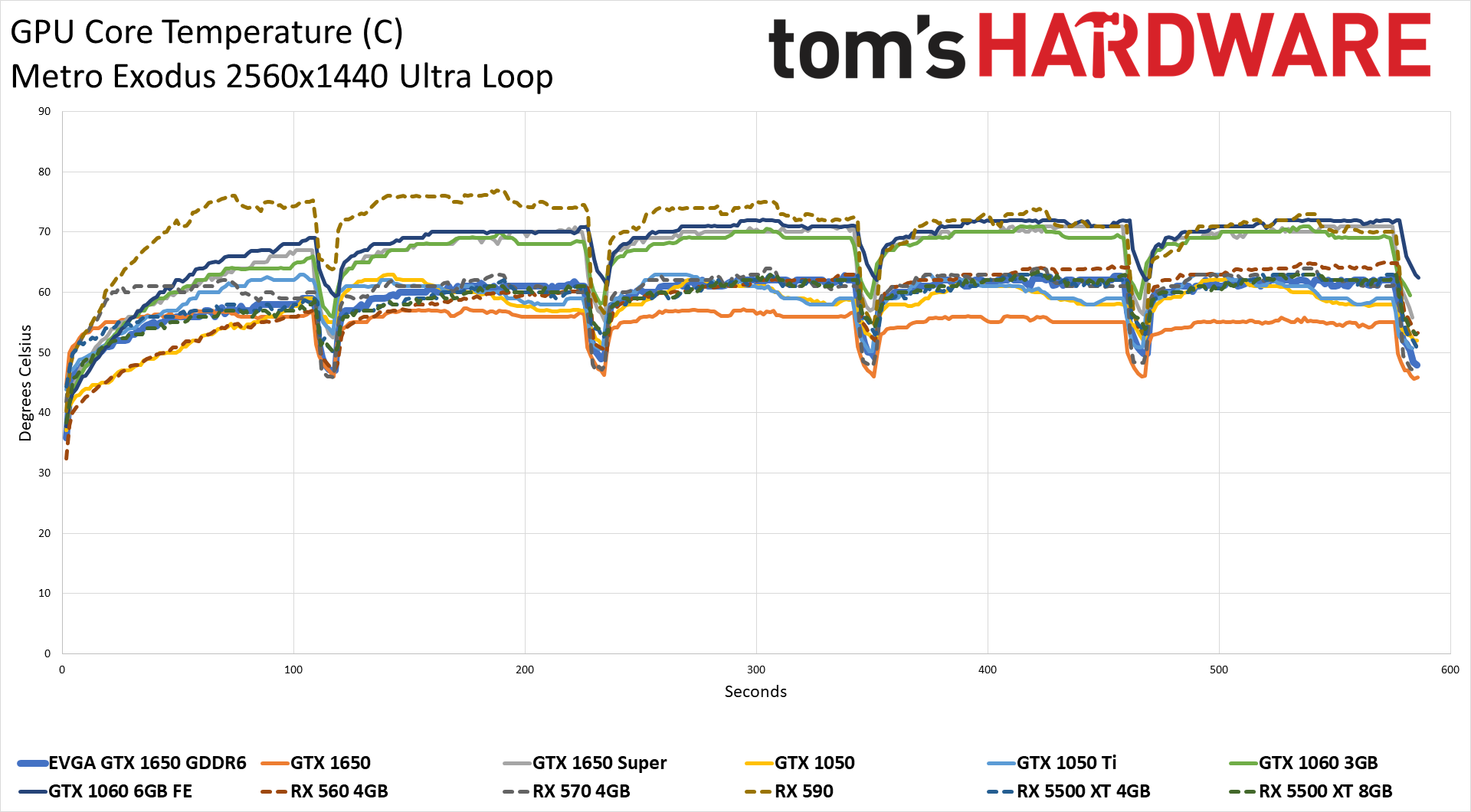

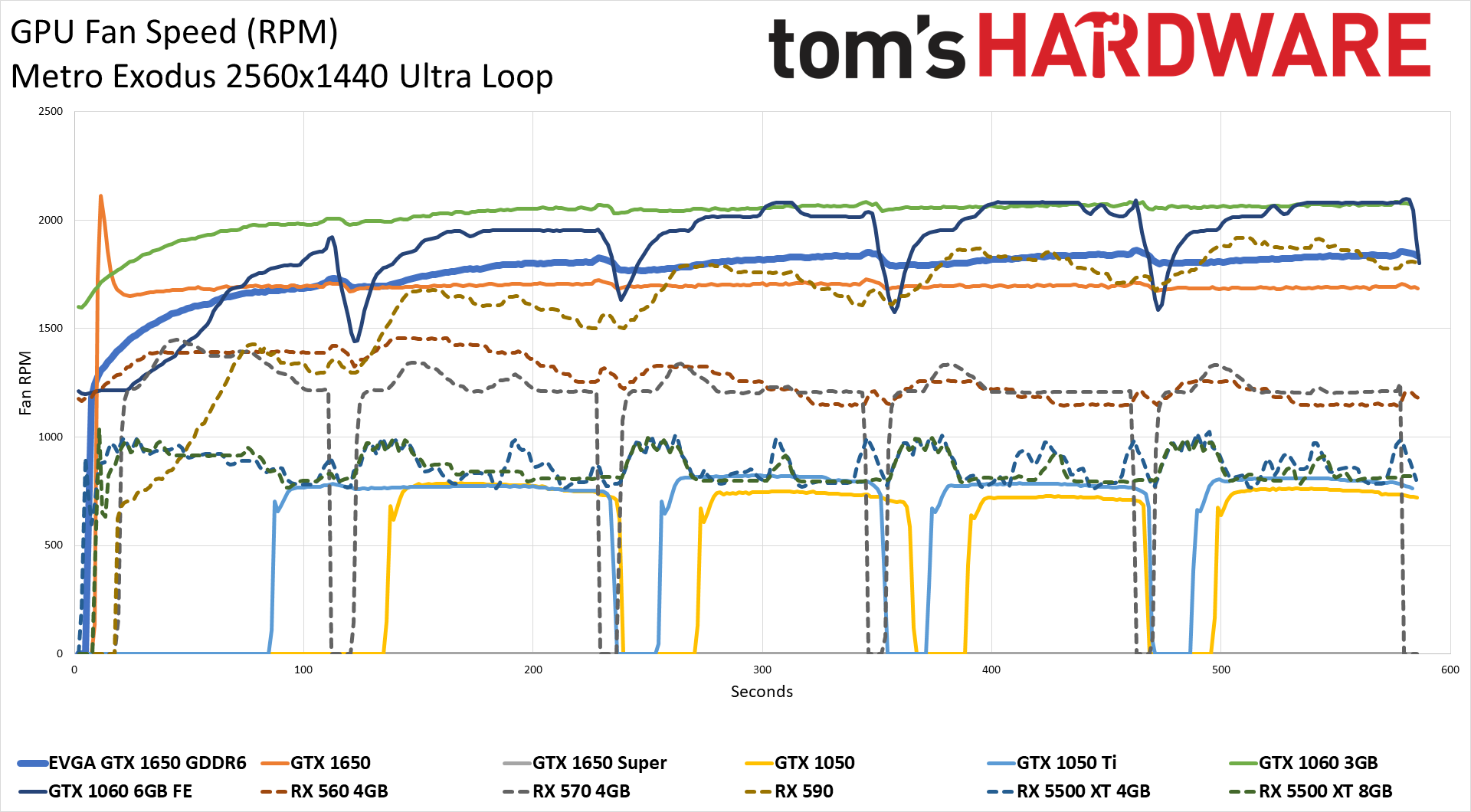

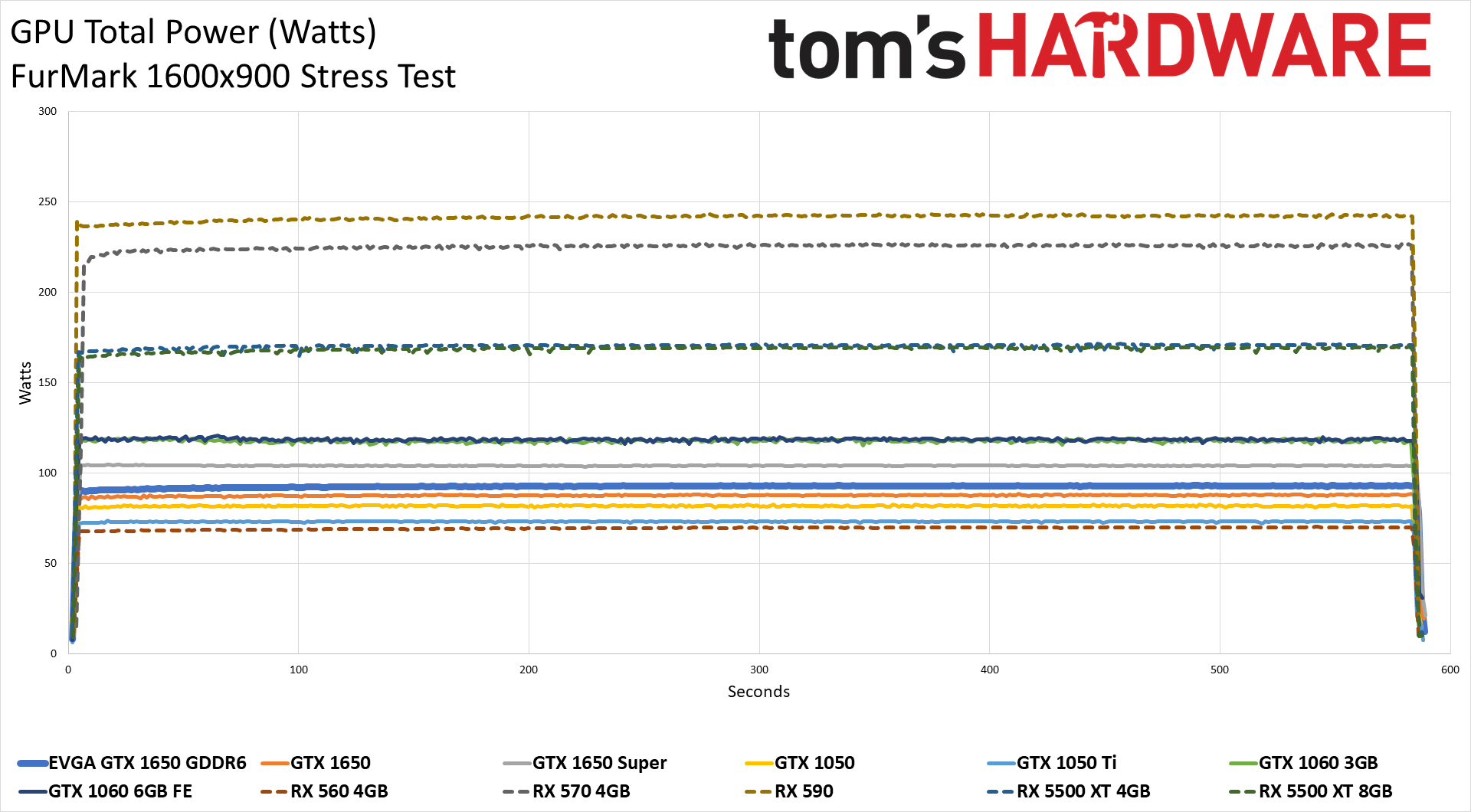

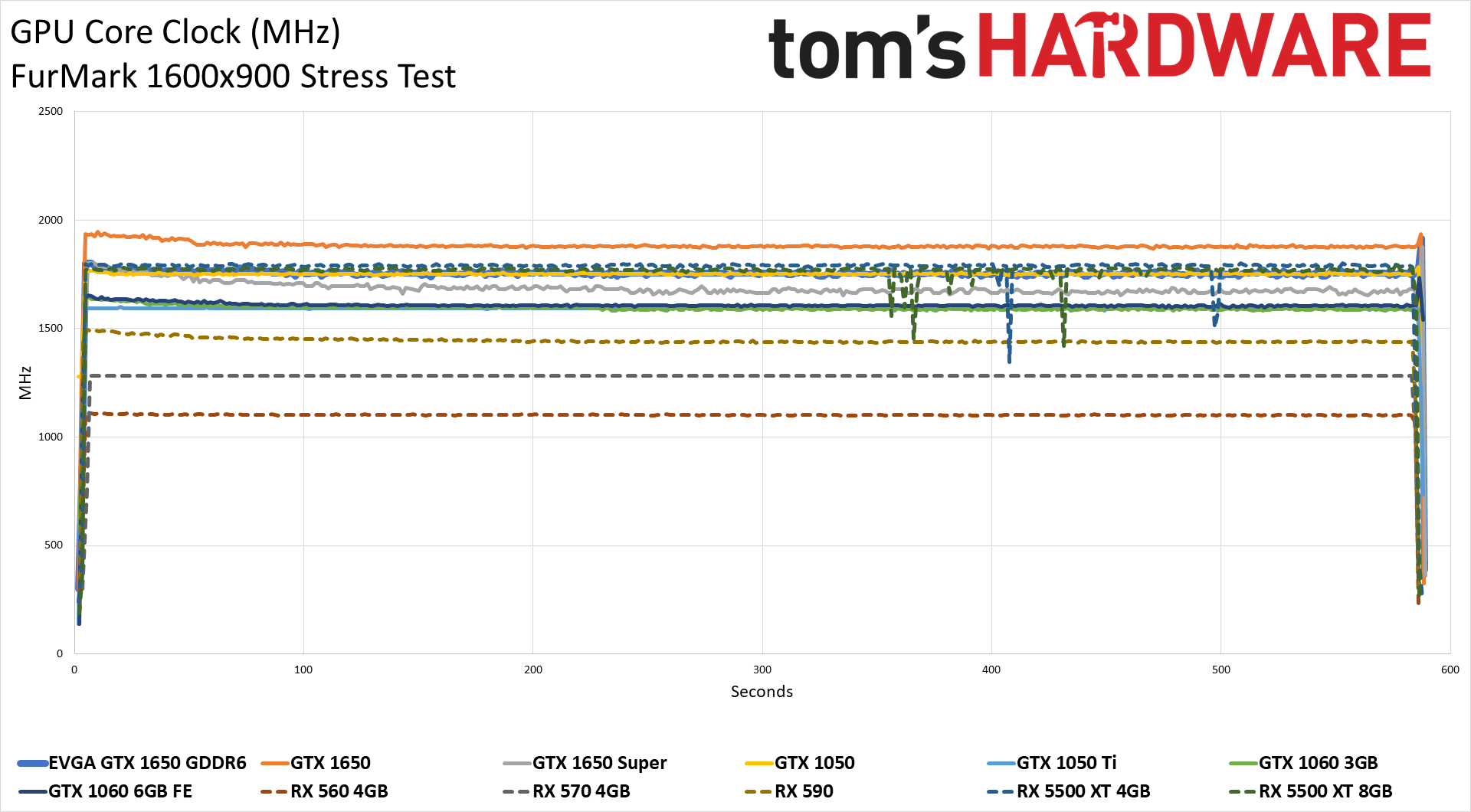

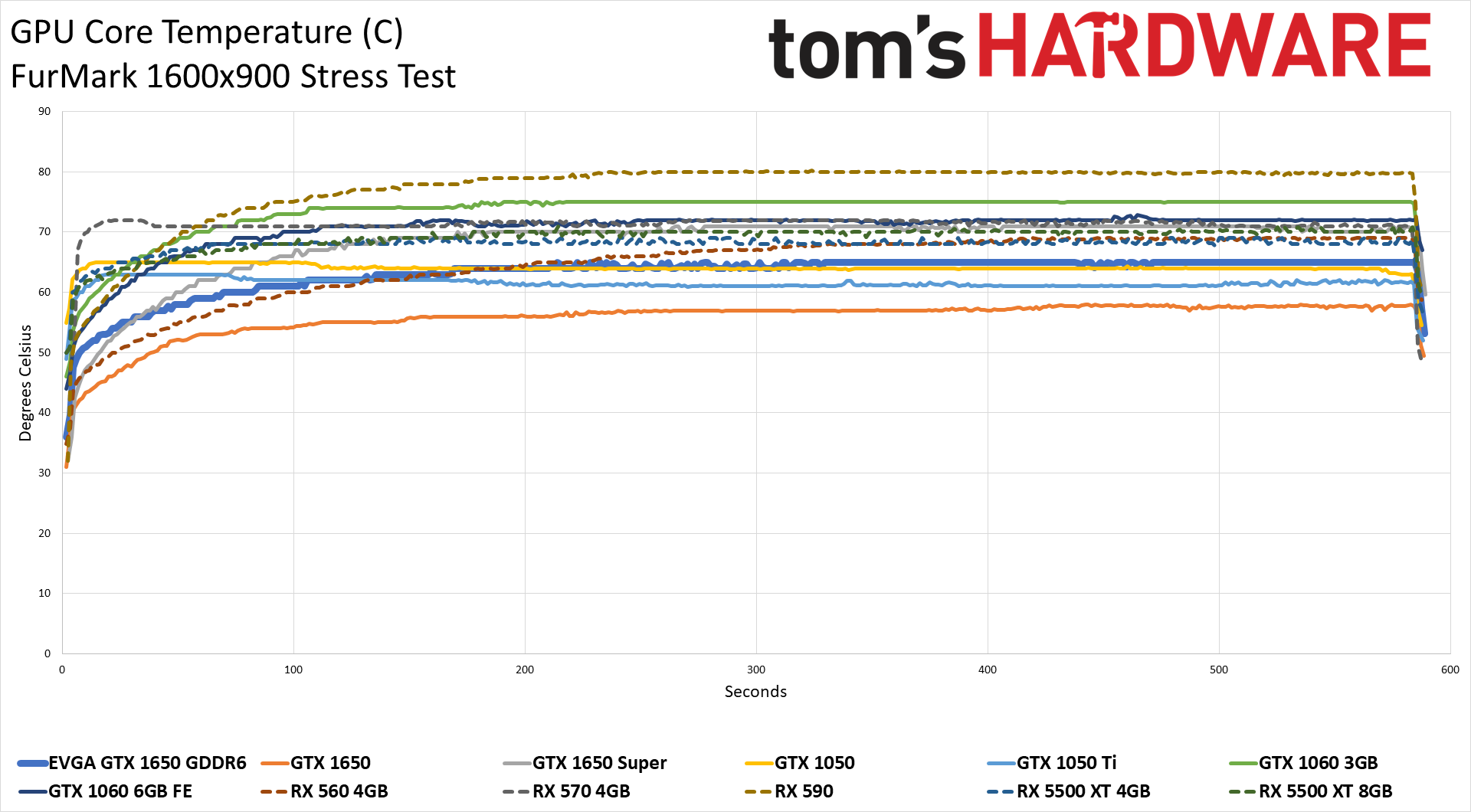

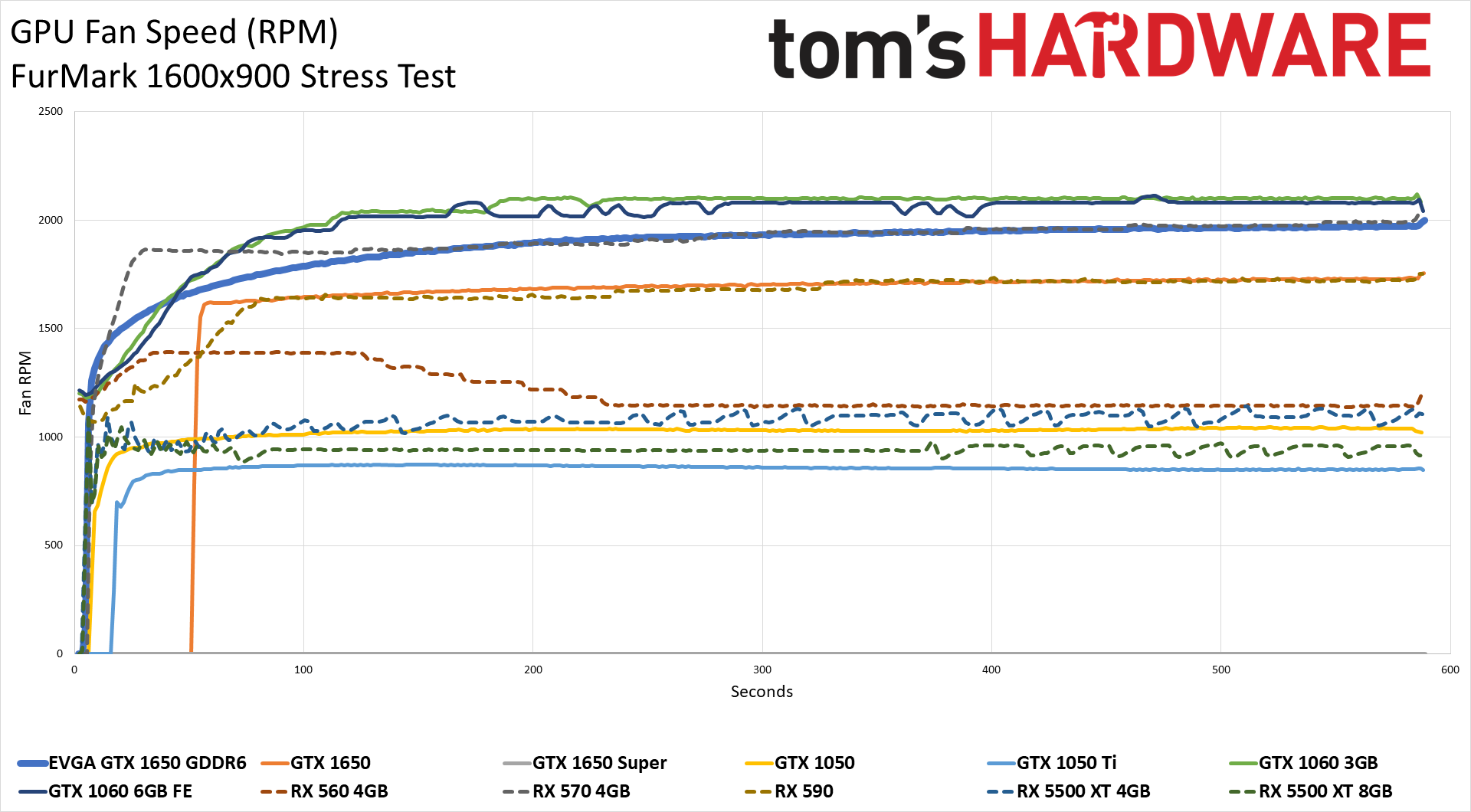

But let's hit the charts — I've reworked everything so that we can hopefully present up to 12 GPUs in a chart while still keeping things legible. AMD GPUs are dotted lines, Nvidia GPUs are solid lines, and the card we're reviewing — the EVGA GTX 1650 GDDR6 SC Ultra in this case — is indicated with an extra thick line. Let's start with a look at gaming, using Metro Exodus, and then we'll look at the worst-case results with FurMark.

EVGA GTX 1650 GDDR6: Metro Exodus Power, Temps, Clocks, and Fan Speed

Looking at the average power use chart, the EVGA GTX 1650 GDDR6 ended up tied with the 1650 GDDR5 model at 81W. The EVGA card sips power compared to mainstream GPUs, but its efficiency isn't substantially different from the GTX 1050 Ti's. By that, we mean that power use increased right alongside performance. The previous generation GTX 1050 Ti averaged 58W in the same test, so Nvidia created a more powerful chip without massive efficiency changes. That's perhaps expected, given that the transition from 16nm to 12nm TSMC nodes didn't change as much as the numbers would suggest (i.e., it wasn't a 25% smaller process).

Overall, ignoring the outliers like the RX 590 and RX 570, the GTX 1650 delivered about 18% lower performance than AMD's RX 5500 XT 4GB while using 35% (44W) less power. And that is why we're using Powenetics again rather than GPU-Z's power figures. We're not showing the charts for the PCIe slot or PEG connector, but the EVGA card easily stayed in spec. It drew peak power of 45.8W from the x16 slot and 39.3W peak power from the PEG connector. None of the cards shown here exceeded spec while running the Metro Exodus benchmark, if you're wondering.
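If you want to check the arithmetic behind those figures, here's a rough Python sketch. The 81W, 44W, 18%, 45.8W, and 39.3W values come from the text above. The RX 5500 XT 4GB's power draw is inferred as 81W + 44W rather than quoted directly, the function names are made up for illustration, and the 75W limits for the x16 slot and the 6-pin PEG connector are our assumption about the PCIe spec rather than something measured here.

```python
# Figures quoted in the review text.
GTX_1650_GDDR6_WATTS = 81.0   # average power in Metro Exodus
POWER_SAVING_WATTS = 44.0     # "44W less" than the RX 5500 XT 4GB
RELATIVE_PERFORMANCE = 0.82   # "about 18% lower performance"
PEAK_SLOT_WATTS = 45.8        # peak draw from the x16 slot
PEAK_PEG_WATTS = 39.3         # peak draw from the PEG connector

# Assumed connector limits (not stated in the review).
SLOT_LIMIT_WATTS = 75.0
SIX_PIN_LIMIT_WATTS = 75.0


def implied_rival_power(card_watts: float, saving_watts: float) -> float:
    """Power of the comparison card, inferred from the quoted saving."""
    return card_watts + saving_watts


def power_saving_fraction(card_watts: float, rival_watts: float) -> float:
    """Fraction of the rival's power that the card saves."""
    return (rival_watts - card_watts) / rival_watts


def relative_perf_per_watt(rel_perf: float, card_watts: float,
                           rival_watts: float) -> float:
    """Performance per watt of the card, relative to the rival (1.0 = equal)."""
    return rel_perf / (card_watts / rival_watts)


def within_spec(peak_watts: float, limit_watts: float) -> bool:
    """True if the measured peak stays at or below the assumed limit."""
    return peak_watts <= limit_watts


rival_watts = implied_rival_power(GTX_1650_GDDR6_WATTS, POWER_SAVING_WATTS)
saving = power_saving_fraction(GTX_1650_GDDR6_WATTS, rival_watts)
efficiency = relative_perf_per_watt(
    RELATIVE_PERFORMANCE, GTX_1650_GDDR6_WATTS, rival_watts
)

print(f"Implied RX 5500 XT 4GB power: {rival_watts:.0f}W")
print(f"Power saving: {saving:.1%}")
print(f"Relative performance per watt: {efficiency:.2f}x")
print(f"Slot in spec: {within_spec(PEAK_SLOT_WATTS, SLOT_LIMIT_WATTS)}")
print(f"PEG in spec: {within_spec(PEAK_PEG_WATTS, SIX_PIN_LIMIT_WATTS)}")
```

By this estimate, the GTX 1650 GDDR6 delivers roughly 1.27x the performance per watt of the RX 5500 XT 4GB in Metro Exodus, with the caveat that both inputs are rounded figures.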

The EVGA GTX 1650 GDDR6 GPU core clocks came in way above the listed 1710 MHz boost clock: about 200 MHz higher, in fact. Throughout the gaming portion of the benchmark (omitting the loading at the end of each run), the EVGA card pushed 1916 MHz clocks on average. It might run at lower clocks in some other games, and the Gigabyte GTX 1650 card and Zotac GTX 1660 card do post slightly higher average clocks, but this is about as good as it gets for Nvidia's Turing GPUs. AMD's RX 5500 XT 4GB averaged 1836 MHz, just a hair below the 'maximum' 1845 MHz rated boost clock.

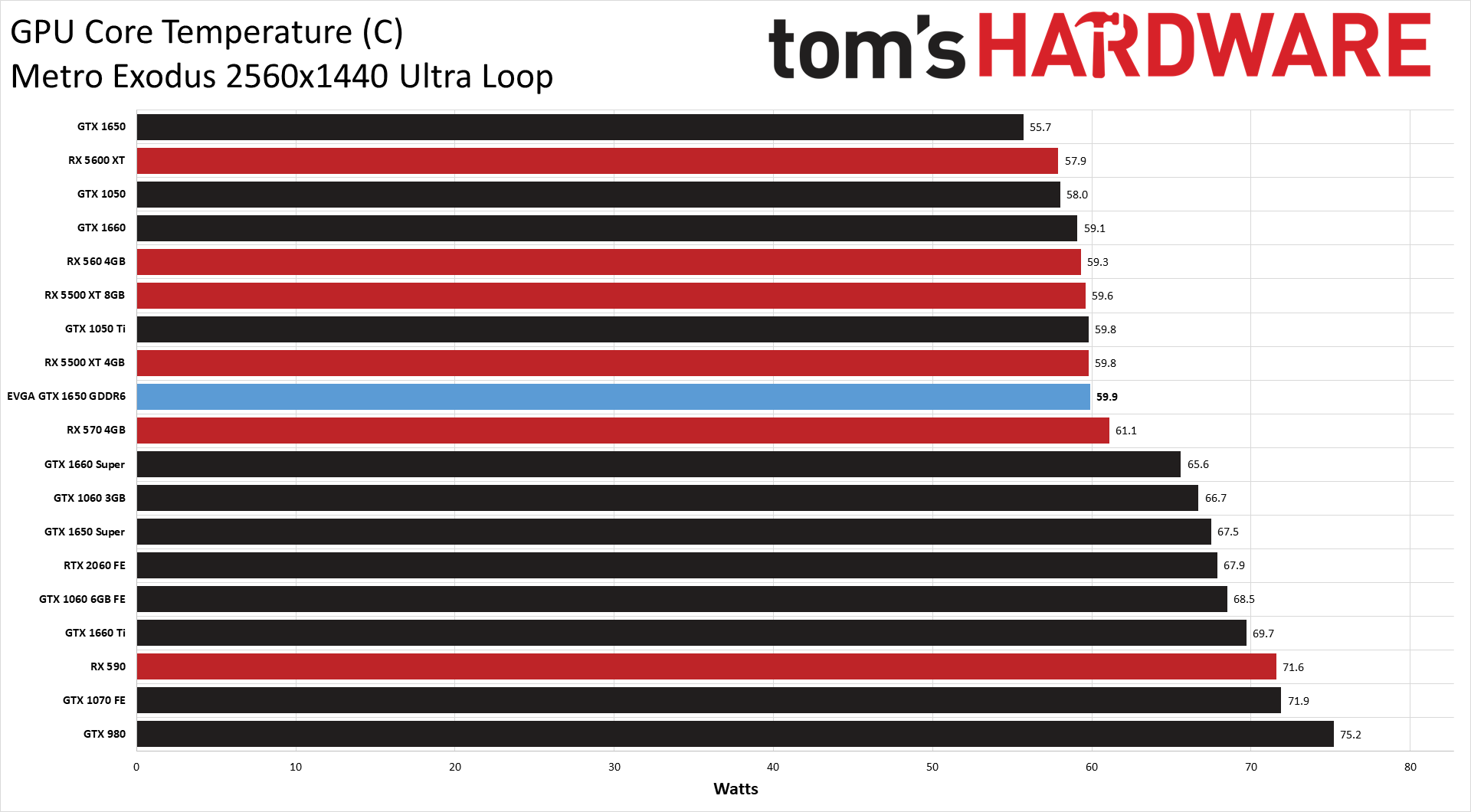

GPU temperature is inversely related to fan speed, which we'll look at next: the higher your fan speed, the lower your temperature. Heatsink size and design are also factors, as well as the power use of the chip. Since the EVGA GTX 1650 GDDR6 uses a relatively low power chip, even with the high clock speeds it runs at, temperatures peaked at 62.8C and averaged just 59.9C throughout the gaming test. Basically, anything below 70C is more than fine on modern chips, and the EVGA cooler is certainly doing its job.

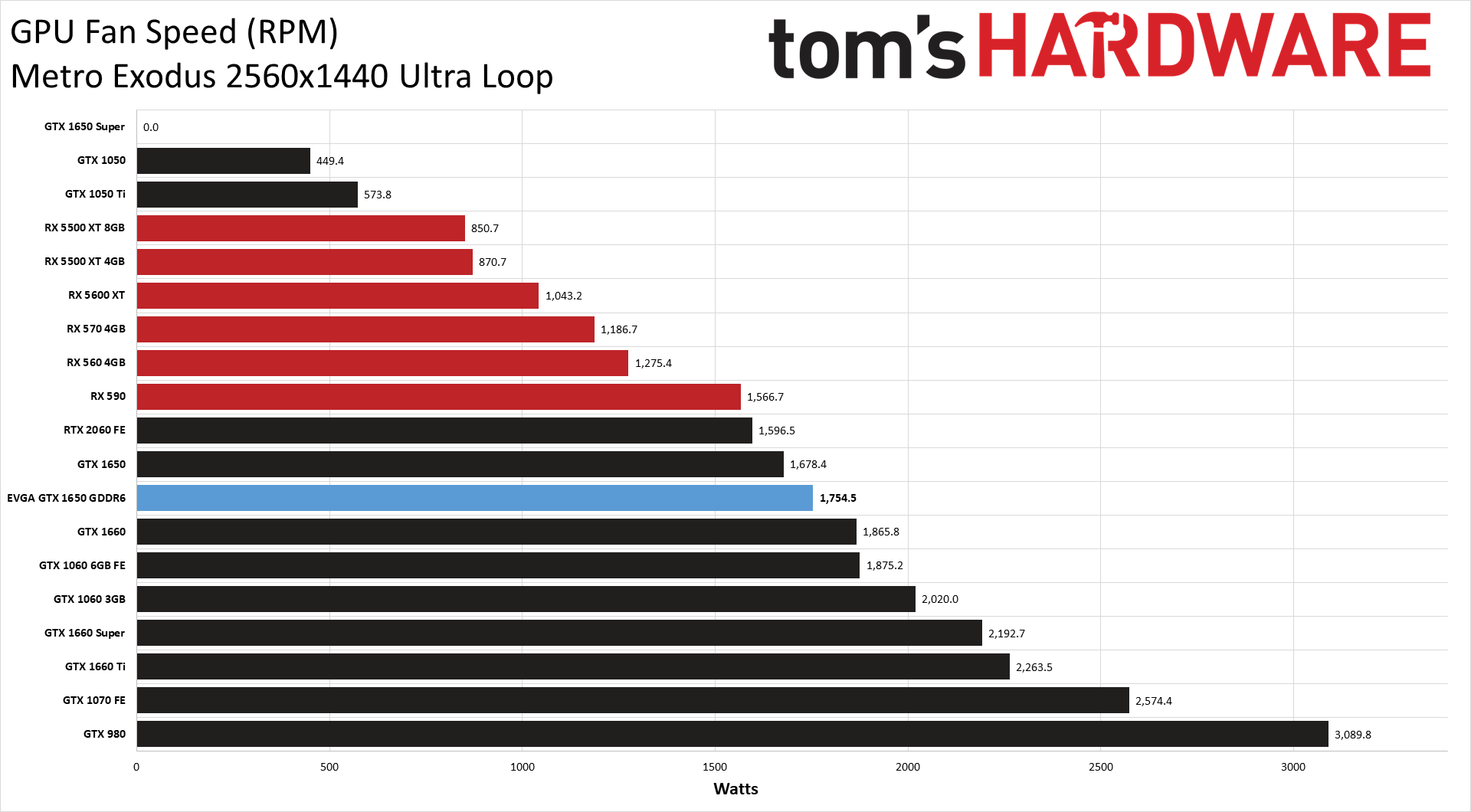

Last up before we kick things into high gear with FurMark, fan speeds are also fine. You can see in the charts that some of the 'hotter' GPUs in the temperature chart correspond to cards that also have lower fan speeds. Basically, manufacturers typically design the fan speed curve around temperature rather than the reverse, so if EVGA, in this instance, tries to keep temperatures below 60C, there will be a ramp-up in fan speed to do that. (Note: the 1650 Super didn't log fan speeds properly — ignore the "0" result.)


Interestingly, though EVGA doesn't mention this in its product information, the GTX 1650 GDDR6 can shut its fan off. When the GPU hits 45C or higher, or perhaps under any significant GPU load, the fan starts to spin at 40% or about 1250 RPM. Maximum fan speed during our gaming test hit 1850 RPM. It's higher than many of the other GPUs in our chart, but the dual 85mm fans are not particularly loud.
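To make the fan behavior a bit more concrete, here's a minimal Python sketch of a zero-RPM fan curve. Only three numbers come from our testing: the 45C start point, the 40% starting duty, and the roughly 1250 RPM that goes with it. Everything else is an assumption for illustration: we assume RPM scales linearly with duty cycle, and the 85C full-speed point and the linear ramp are hypothetical, not EVGA's actual firmware curve (which may also key off GPU load and use hysteresis).

```python
# Values observed in testing.
FAN_START_TEMP_C = 45.0   # fan starts spinning at 45C or higher
FAN_START_DUTY = 0.40     # fan starts at 40% duty
FAN_START_RPM = 1250.0    # about 1250 RPM at 40% duty

# Hypothetical: temperature at which the fan would reach 100% duty.
HYPOTHETICAL_FULL_SPEED_TEMP_C = 85.0


def rpm_from_duty(duty: float) -> float:
    """Convert duty cycle (0.0-1.0) to RPM, assuming a linear relationship."""
    return duty * FAN_START_RPM / FAN_START_DUTY


def duty_from_rpm(rpm: float) -> float:
    """Convert RPM back to duty cycle under the same linear assumption."""
    return rpm * FAN_START_DUTY / FAN_START_RPM


def fan_duty(temp_c: float) -> float:
    """Return the fan duty cycle for a GPU temperature.

    Below the start temperature the fan is off. At and above it, duty
    ramps linearly from the starting duty up to 100% at the hypothetical
    full-speed temperature.
    """
    if temp_c < FAN_START_TEMP_C:
        return 0.0
    span = HYPOTHETICAL_FULL_SPEED_TEMP_C - FAN_START_TEMP_C
    ramp = (temp_c - FAN_START_TEMP_C) / span
    return min(1.0, FAN_START_DUTY + ramp * (1.0 - FAN_START_DUTY))


for temp in (40.0, 45.0, 60.0, 85.0):
    duty = fan_duty(temp)
    print(f"{temp:.0f}C -> {duty:.0%} duty, ~{rpm_from_duty(duty):.0f} RPM")

print(f"1850 RPM is ~{duty_from_rpm(1850.0):.0%} duty if the scaling is linear")
```

If the linear RPM assumption holds, the 1850 RPM maximum we saw while gaming works out to a bit under 60% duty, which would leave a fair amount of headroom.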

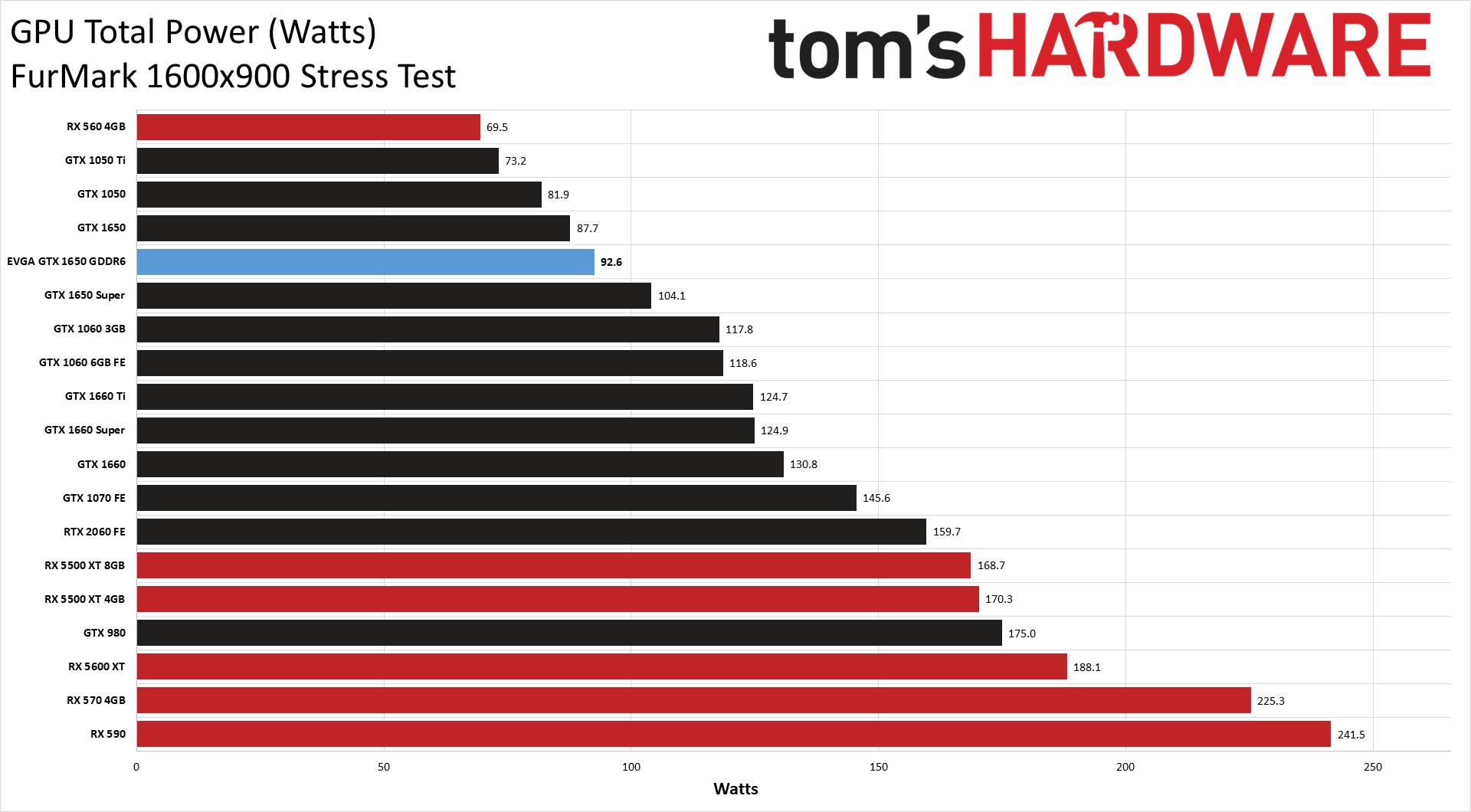

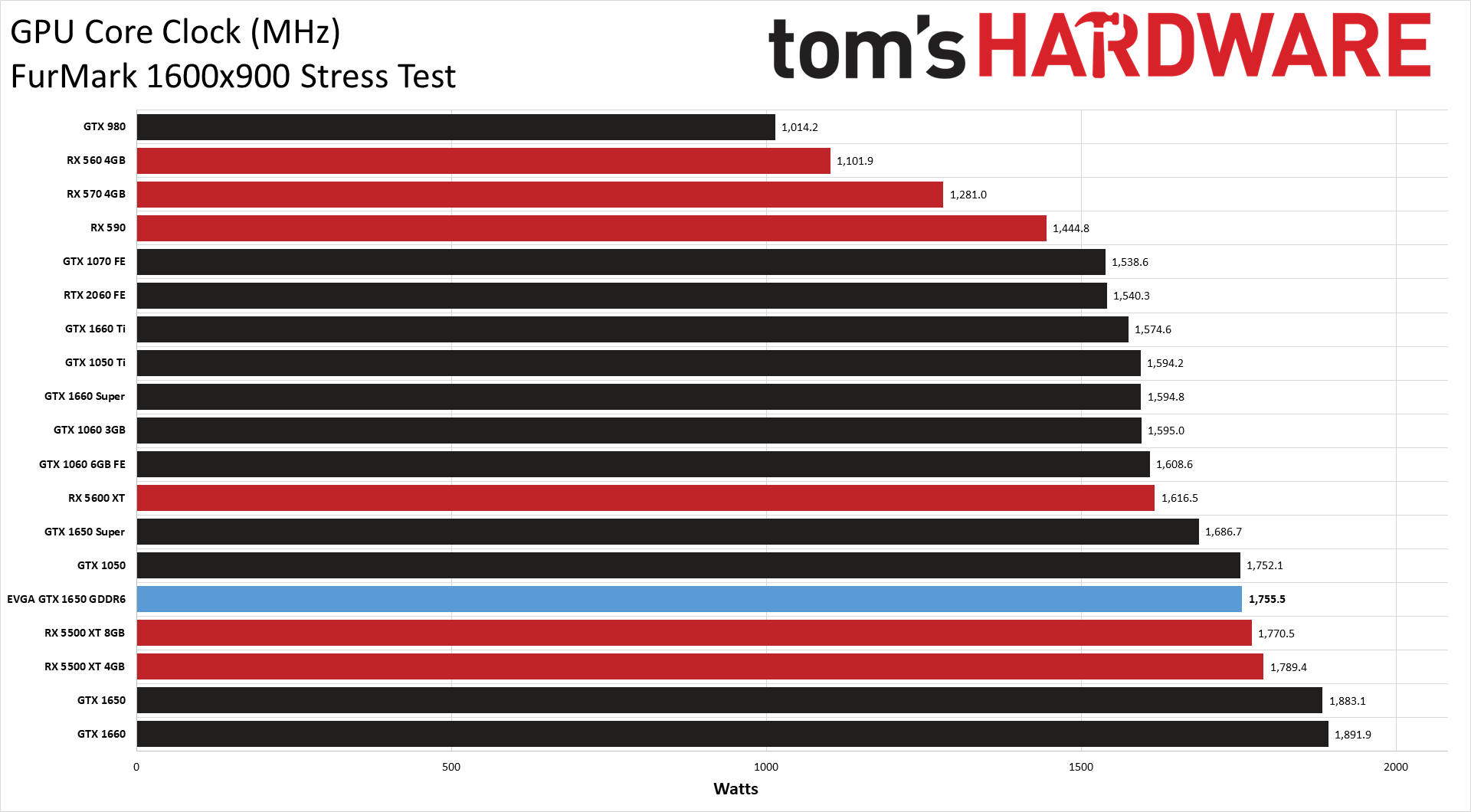

EVGA GTX 1650 GDDR6: FurMark Power, Temps, Clocks, and Fan Speed

As usual, FurMark shakes things up quite a bit. Average power use during the stress test climbed to 92.6W for the EVGA GTX 1650 GDDR6 and peaked at 93.3W, a jump of about 11.5W over the Metro Exodus average. That might seem big, but looking at other GPUs, it's not too bad. AMD's RX 5500 XT cards, for example, used about 45W more power in FurMark. This is not a 'normal' GPU workload by any means, and while it might theoretically be possible for a game to push the GPUs to similar power levels, in practice it never happens. There's a lot more going on in a game than just rendering a fuzzy donut as fast as possible.

Along with higher power use, clock speeds were lower on all the GPUs with FurMark. The EVGA GTX 1650 GDDR6 averaged 1755 MHz, down about 160 MHz from the Metro test. And yeah, I realize the line is hard to find in the bunched up data, but it was pretty stable throughout the test period. It's interesting that the GTX 1650 GDDR5 model maintained higher clocks, which is likely thanks to its larger cooler that also keeps temperatures down.

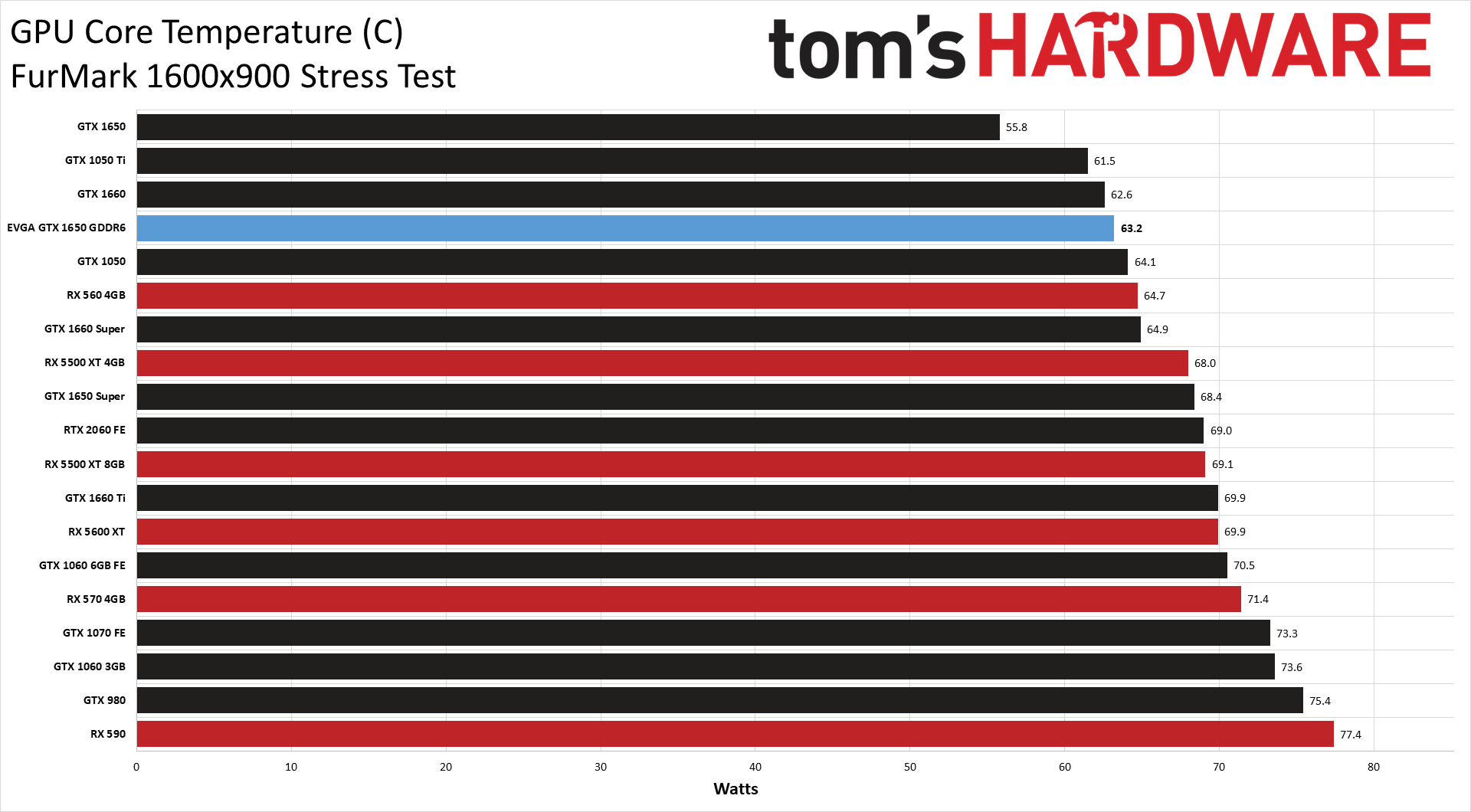

Temperatures were far more stable as well, partly because FurMark is a constant workload, unlike games. The EVGA GTX 1650 GDDR6 averaged 63.2C during the test run and peaked at 65C, which is only about 3C higher than in Metro Exodus. As with the power charts, AMD's GPUs didn't fare as well. The 5500 XT 4GB was 8C higher in FurMark, though all of the GPUs are still under 80C and shouldn't have any issues.

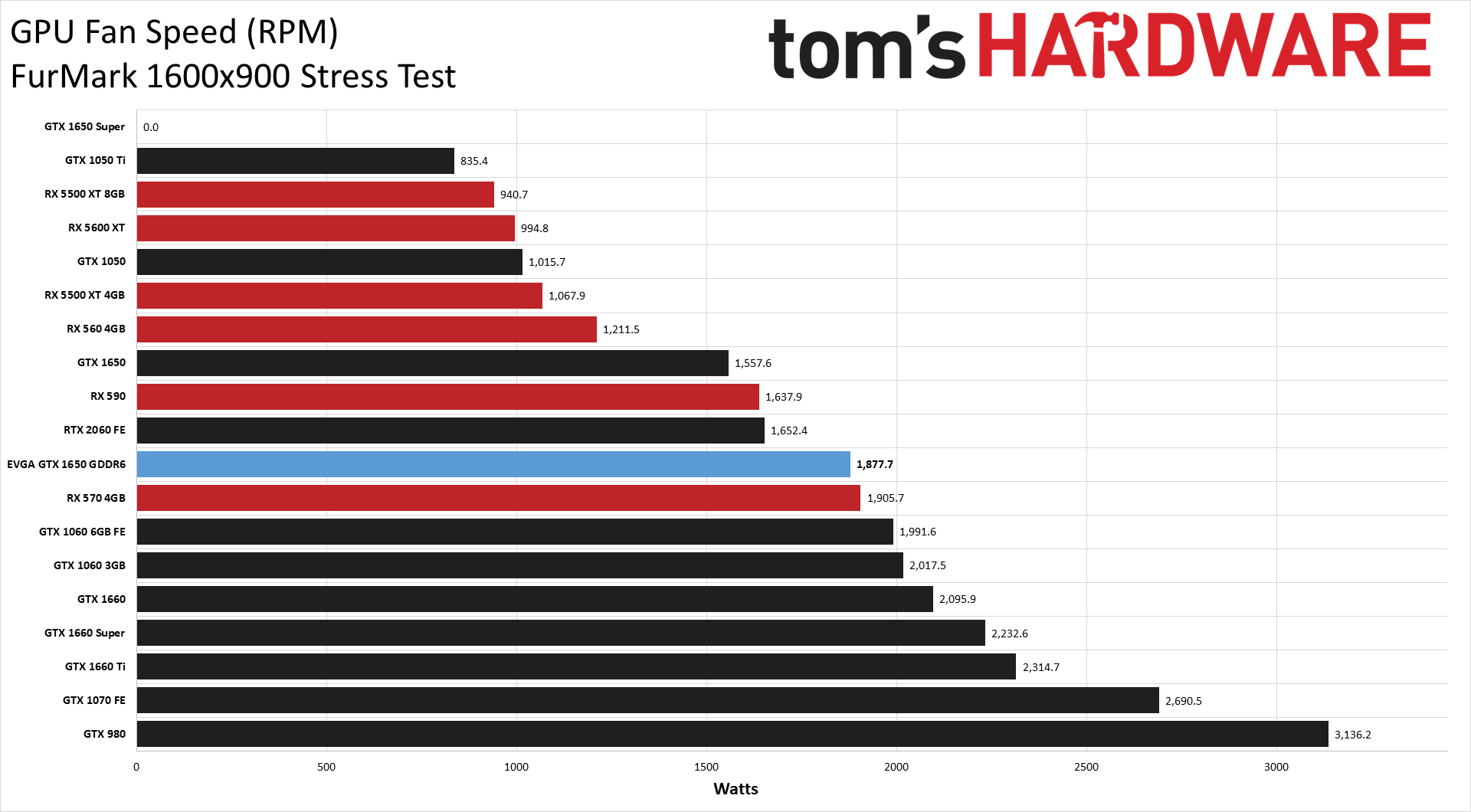

As before, fan speeds influence temperatures, and all the GPUs have to ratchet up the RPMs a bit for FurMark. Also, you should just ignore the fan speeds on the old GTX 980 and 1070 cards — both have been around the proverbial block and I had to manually adjust the fan speed curves to keep the GPUs stable during testing. It's about time to put the 980 out to pasture, I think.


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


NightHawkRMX

Cool, maybe Nvidia's new card is now only a few percent behind a 3-year-old RX570 that costs $40 less.

Hey at least it's not 20-25% slower and $40 more than the 3-year-old RX570 this time around.

Not impressed at all. My $80 used Sapphire Pulse RX570 4g would humiliate this card that costs double. So would my $89 Sapphire Nitro RX480 8g used.

King_V

Agreed - this is a little bit of a strange decision for Nvidia. I mean, maybe the switch to GDDR6 is just so they don't have to deal with GDDR5 anymore?

In any case, this really only slightly improves the poor standing of the 1650. It's still less capable than the RX 570 4GB. The only saving grace is significantly lower power draw. But "higher price and lower performance" tends to not be a strong selling point at this level, especially if a PCIe connector is going to be needed anyway.

EDIT: also, I didn't realize that the 5500 XT 8GB was as close to the 1660's performance as that. I had for some reason thought the gap between them was wider.

JarredWaltonGPU

> NightHawkRMX said: Cool, maybe Nvidia's new card is now only a few percent behind a 3-year-old RX570 that costs $40 less. Hey at least it's not 20-25% slower and $40 more than the 3-year-old RX570 this time around.
>
> Not impressed at all. My $80 used Sapphire Pulse RX570 4g would humiliate this card that costs double. So would my $89 Sapphire Nitro RX480 8g used.

Mostly agreed, though I have to say, I am super tired of the RX 570 4GB as well. It's not an efficient card, and the overall experience is underwhelming, but that's the case for just about every budget GPU. I'm not saying people should upgrade from 570 to a 1650 Super or whatever, but I wouldn't buy a 570 these days, unless it was under $100.

Really, for AMD GPUs, you want 8GB (or RX 5600 XT 6GB) -- stay away from 4GB cards. I generally recommend that same attitude for Nvidia, but Nvidia does a bit better with 4GB overall. Not with an underpowered GPU like TU117, though. Realistically, GTX 1650 GDDR5 should cost $120 now to warrant a recommendation, GTX 1650 GDDR6 for $140 would be fine, and GTX 1650 Super at $160 is good. But I'm not sure there's any margin left trying to sell 1650 at those prices.

> King_V said: Agreed - this is a little bit of a strange decision for Nvidia. I mean, maybe the switch to GDDR6 is just so they don't have to deal with GDDR5 anymore?
>
> In any case, this really only slightly improves the poor standing of the 1650. It's still less capable than the RX 570 4GB. The only saving grace is significantly lower power draw. But "higher price and lower performance" tends to not be a strong selling point at this level, especially if a PCIe connector is going to be needed anyway.
>
> EDIT: also, I didn't realize that the 5500 XT 8GB was as close to the 1660's performance as that. I had for some reason thought the gap between them was wider.

GTX 1660 is mostly tied with RX 590 (just a hair faster overall), which is also just a hair faster than the RX 5500 XT 8GB. It can vary by game -- Metro Exodus, RDR2, and Strange Brigade all seem to like the extra VRAM bandwidth of 590 more than other games -- but they're all very close. The 590 does use quite a bit more power, though.

King_V

> JarredWaltonGPU said: Mostly agreed, though I have to say, I am super tired of the RX 570 4GB as well. It's not an efficient card, and the overall experience is underwhelming, but that's the case for just about every budget GPU. I'm not saying people should upgrade from 570 to a 1650 Super or whatever, but I wouldn't buy a 570 these days, unless it was under $100.

Yeah, agreed there. Still, at one point a couple of months ago, a new RX 570 4GB was going for $99.99... considering that's with a full warranty, it was a pretty impressive deal. I haven't seen it less than $119.99 these days. Ironically, it's Nvidia's unreasonable 1650 pricing that's keeping the RX 570 viable. Though I suppose a GDDR5 version of the 1650 that has no need for a PCIe connector might get sales just based on that for smaller systems with smaller PSUs.

> JarredWaltonGPU said: GTX 1660 is mostly tied with RX 590 (just a hair faster overall), which is also just a hair faster than the RX 5500 XT 8GB. It can vary by game -- Metro Exodus, RDR2, and Strange Brigade all seem to like the extra VRAM bandwidth of 590 more than other games -- but they're all very close. The 590 does use quite a bit more power, though.

Yep - and I'd have to say that, assuming the same price as a 5500XT 8GB, the RX 590 is a really hard sell given that high level (officially 225W) of draw.

King_V

> NightHawkRMX said: AMD's older cards are competing with their own cards like 5500xt.

I wholeheartedly agree, and that is a problem that AMD brought on themselves. But, at least for AMD, they're getting a sale either way.

Nvidia is almost driving people away from the 1650 and toward the AMD Polaris cards. It seems like they don't HAVE to lose the low-end, but are choosing to do so.

The saving grace for them is the 1650 Super, which both cannibalizes the 1650/GDDR5 and 1650/GDDR6, along with giving a better price/performance than the Polaris cards and direct competition against the 5500XT.

Also, the 1660 (when discounted). Though, that might be considered straddling between low-and-mid range.

yeti_yeti

It's honestly not that bad of a card, however I feel like that 6-Pin power connector kind of ruins the entire purpose of the 1650, which is to be a power-efficient, plug-and-play gpu that could be used for upgrading older pcs with sub-par power supplies, that would otherwise be unable to handle a card like rx 570, that requires a power connector. That said, the price could have also been a bit more generous (something like $115-120 would seem pretty reasonable to me).

JarredWaltonGPU

> King_V said: The saving grace for them is the 1650 Super, which both cannibalizes the 1650/GDDR5 and 1650/GDDR6, along with giving a better price/performance than the Polaris cards and direct competition against the 5500XT
>
> Also, the 1660 (when discounted). Though, that might be considered straddling between low-and-mid range.

Yeah, 1650 Super is currently my top budget pick, but it's still in a precarious position. The 1660 can be found for under $200 at times and it's 15% faster, but then the proximity to the 1660 Super ($230 and another 15% faster) makes that questionable as well... but then 5600 XT is 23% faster and another $40. And then you hit diminishing returns.

5700 is only 10% faster than the 5600 XT (and costs 13% more, so still reasonable). 2060 is 4% slower but costs 11% more. And it only gets worse from there. 5700 XT is probably the next best step, and it's 10% faster than the 5700 but costs 21% more. 2060 Super costs 5% more than 5700 XT and is about 11% slower. Or 2070 Super is 5% faster than the 5700 XT but costs 36% more!

Honestly, right now it's hard to get excited about anything below the RX 5600 XT -- it offers a tremendous value proposition at the latest prices. You can make a case for just about any GPU with the right price, but weighing performance and price combined with efficiency, I'd definitely consider the 5600 XT, especially if you can find it on sale for $250 or so. Same with RX 5700. Of course, right now I'd also just wait and see what Ampere and RDNA2 bring to the party.
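The step-by-step comparison in the post above boils down to dividing relative performance by relative price. Here's a minimal Python sketch of that arithmetic, using only the pairs where the post names the baseline explicitly (the RTX 2060 figure is left out because its baseline isn't stated). The `Step` type and `value_ratio` helper are hypothetical names for illustration, and the inputs are the rounded percentages from the post, so treat the results as ballpark figures.

```python
from typing import NamedTuple


class Step(NamedTuple):
    card: str           # the card being considered
    baseline: str       # the card it is compared against
    perf_ratio: float   # performance relative to the baseline (1.10 = 10% faster)
    price_ratio: float  # price relative to the baseline (1.13 = 13% more)


# Percentages as quoted in the post, converted to ratios.
STEPS = [
    Step("RX 5700", "RX 5600 XT", 1.10, 1.13),
    Step("RX 5700 XT", "RX 5700", 1.10, 1.21),
    Step("RTX 2060 Super", "RX 5700 XT", 0.89, 1.05),
    Step("RTX 2070 Super", "RX 5700 XT", 1.05, 1.36),
]


def value_ratio(step: Step) -> float:
    """Performance per dollar relative to the baseline (1.0 = same value)."""
    return step.perf_ratio / step.price_ratio


for step in STEPS:
    print(f"{step.card} vs {step.baseline}: "
          f"{value_ratio(step):.2f}x performance per dollar")
```

Anything below 1.0 means you get less performance per dollar than the baseline card, which is the diminishing-returns pattern the post describes.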

srfdude44

None of these can top the GTX1070. When I can afford a 2080, then I can upgrade, by then the 2080 will be where the 1070 is....sigh.

JarredWaltonGPU

> srfdude44 said: None of these can top the GTX1070. When I can afford a 2080, then I can upgrade, by then the 2080 will be where the 1070 is....sigh.

Yeah, if you have a 1070, your only real upgrade path is to move to at least a 2070, probably faster. Best bet is to wait and see what the RTX 3060 and AMD equivalent (RX 6600?) can offer in performance and price. Hopefully by next year you'll be able to get 2080 levels of performance for under $300. Maybe.