Gigabyte's 3D1: Are Two Engines Better Than One?

History Of Multi-Core Cards, Continued

In the more recent past, the comparatively young graphics chip maker XGI also failed with its own dual-core concept. XGI had hoped to put the two leading graphics chip makers, ATi and NVIDIA, under pressure with this concept, but it simply overreached. In the end, the Volari V5 Duo and Volari V8 Duo were much too expensive for the graphics performance they offered, and the market responded poorly. Beyond that, the cards were plagued by driver and image quality problems. As a result, XGI practically withdrew the cards from the marketplace. Nonetheless, a very limited number of these cards made their way to Europe and onto store shelves.

When XGI announced its Volari V8 Duo card, expectations were very high. However, the card had some serious teething troubles and was also plagued by poor drivers. Considering its price, the performance was far too low.

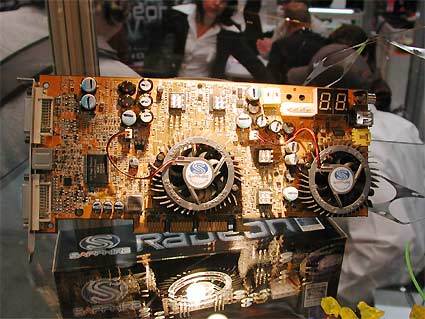

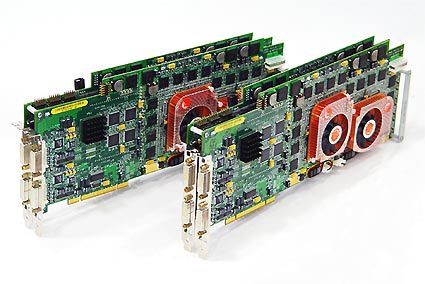

Although the above examples are certainly the best known, they aren't the only ones. In the summer of 2003, for example, graphics card maker Sapphire displayed an interesting card at several trade shows. This was an engineering sample sporting two ATi Radeon 9800 Pro chips, alias R350, which shared the 3D workload. Evans & Sutherland, a company specializing in professional simulators, also proves that using several ATi Radeon cores in parallel has been possible for quite some time. In the current simFUSION 6500 workstation, either two or four Radeon 9800XT cores, also known as R360, render the 3D scene together.

In 2003, Sapphire showed a prototype using two Radeon 9800 graphics processors, alias R350, at several trade shows.

Multi-core rendering with ATi chips: The Evans & Sutherland simFUSION 6000 workstation uses up to four Radeon 9800 graphics processors. The most recent model, the 6500, uses up to four Radeon 9800XT cores.


Shankovich: So funny to look back now and see how abstract the concept of dual GPU design was back then. Happy to see my favorite mobo company be one of the pioneers.