Hot Vega: Gigabyte Radeon RX Vega 56 Gaming OC 8G Review

Power Consumption

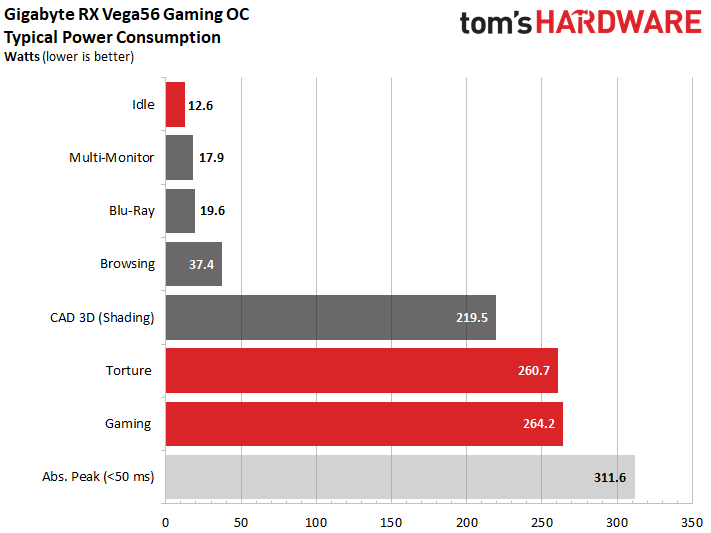

Power Consumption At Different Loads

We measured about 264W during our gaming loop using the driver's Balanced power profile. That's far higher than AMD's reference design, which needed ~223W using the default BIOS. Gigabyte's board even exceeds our 260W result with the reference card's Turbo profile.
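To put those gaps in perspective, the short sketch below computes the absolute and relative differences between the three figures quoted above. The constant and function names are illustrative only, and the inputs are the rounded values from the text, so the percentages are approximate.

```python
# Power figures quoted in the text, in watts (rounded measurements).
GIGABYTE_BALANCED_W = 264   # Gigabyte card, Balanced profile, gaming loop
REFERENCE_DEFAULT_W = 223   # AMD reference card, default BIOS
REFERENCE_TURBO_W = 260     # AMD reference card, Turbo profile


def percent_over(value_w, baseline_w):
    """Return how far value_w exceeds baseline_w, as a percentage of baseline_w."""
    return (value_w - baseline_w) / baseline_w * 100.0


delta_default_w = GIGABYTE_BALANCED_W - REFERENCE_DEFAULT_W
delta_turbo_w = GIGABYTE_BALANCED_W - REFERENCE_TURBO_W

print(f"vs. reference (default BIOS): +{delta_default_w} W "
      f"({percent_over(GIGABYTE_BALANCED_W, REFERENCE_DEFAULT_W):.1f}%)")
print(f"vs. reference (Turbo):        +{delta_turbo_w} W "
      f"({percent_over(GIGABYTE_BALANCED_W, REFERENCE_TURBO_W):.1f}%)")
```

In other words, Gigabyte's Balanced profile draws roughly 41W more than the reference card at its defaults, and a few watts more than the reference card's Turbo profile.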

Engaging the Turbo profile on Gigabyte's Radeon RX Vega 56 Gaming OC 8G with a 50%-higher power limit pushes our meter above 325W, at which point the thermal solution is almost completely overwhelmed. As a result, we decided not to get any more aggressive with our overclocking. Rather, we stuck with the driver's Balanced power profile for testing.

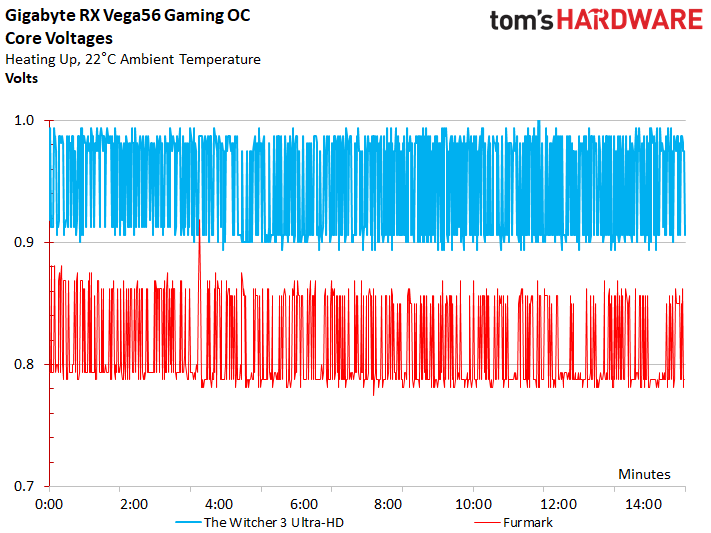

The corresponding voltages for our gaming loop and stress test at Gigabyte's stock settings are plotted in the following graph:

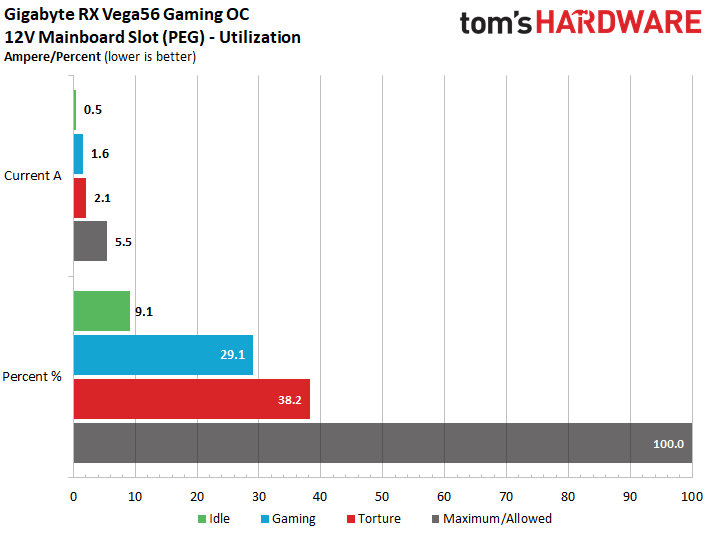

Load On The Motherboard Slot

At a peak of 2.1A through our stress test, Gigabyte's Radeon RX Vega 56 Gaming OC 8G falls significantly below the 5.5A ceiling defined by the PCI-SIG for a motherboard's 12V rail. A mere 1.6A during the gaming loop is even more conservative. Overall, balancing is well-implemented, and the motherboard slot hardly ever experiences serious loads.

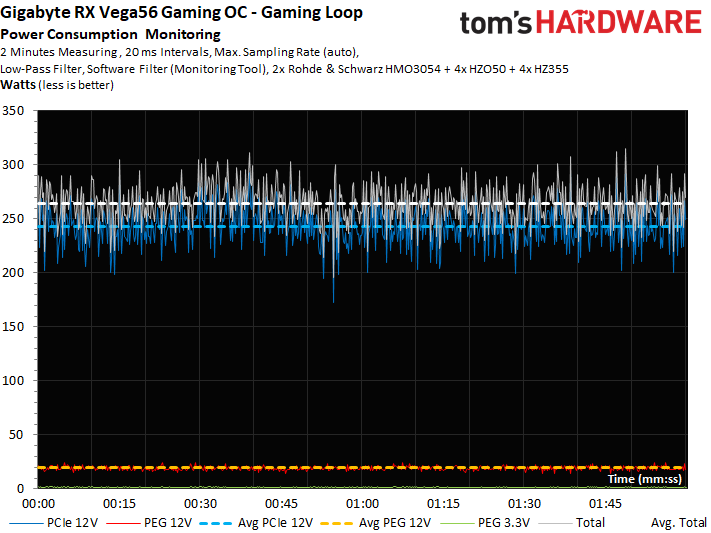
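As a rough sanity check on the slot figures, the sketch below converts the quoted currents into watts and into a share of the 5.5A ceiling. It assumes a nominal 12.0V rail (the real rail voltage drifts slightly under load), and the names used are illustrative only.

```python
SLOT_12V_LIMIT_A = 5.5   # PCI-SIG ceiling for the slot's 12V rail, as cited in the text
RAIL_VOLTAGE_V = 12.0    # assumption: nominal voltage, not a measured value

# Slot currents quoted in the text, in amperes.
MEASURED_A = {"gaming loop": 1.6, "stress test (peak)": 2.1}


def slot_load(current_a, limit_a=SLOT_12V_LIMIT_A, volts=RAIL_VOLTAGE_V):
    """Return (watts drawn, percent of the current ceiling used)."""
    watts = current_a * volts
    percent_of_limit = current_a / limit_a * 100.0
    return watts, percent_of_limit


for name, amps in MEASURED_A.items():
    watts, pct = slot_load(amps)
    print(f"{name}: {amps} A = {watts:.1f} W, "
          f"{pct:.0f}% of the {SLOT_12V_LIMIT_A} A ceiling")
```

Under that assumption, even the stress-test peak uses well under half of what the slot is allowed to deliver.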

Power Consumption In Detail

The graphs below plot detailed power consumption and current readings in order to illustrate our findings.

Naturally, peaks in power consumption are highest during gaming. But spikes of up to 312W are still acceptable, since they're far too brief to cause a problem.

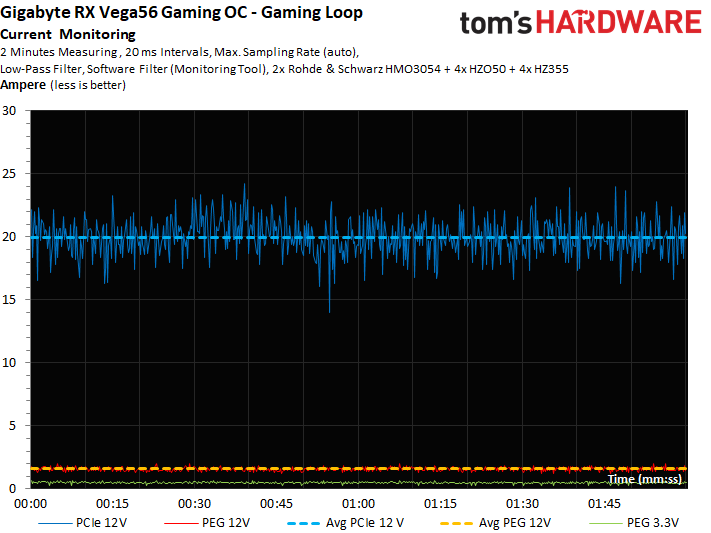

The same goes for the corresponding current measurements:

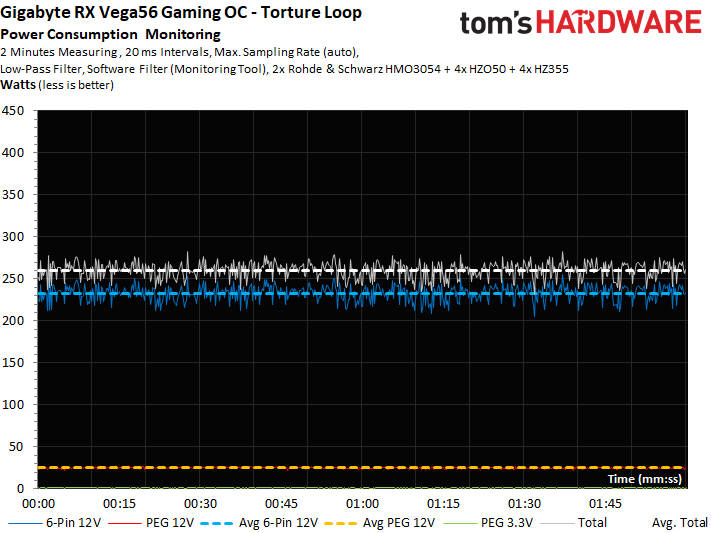

During our stress test, the short-term peaks are significantly less pronounced (even if the power consumption is slightly higher than during gaming workloads).

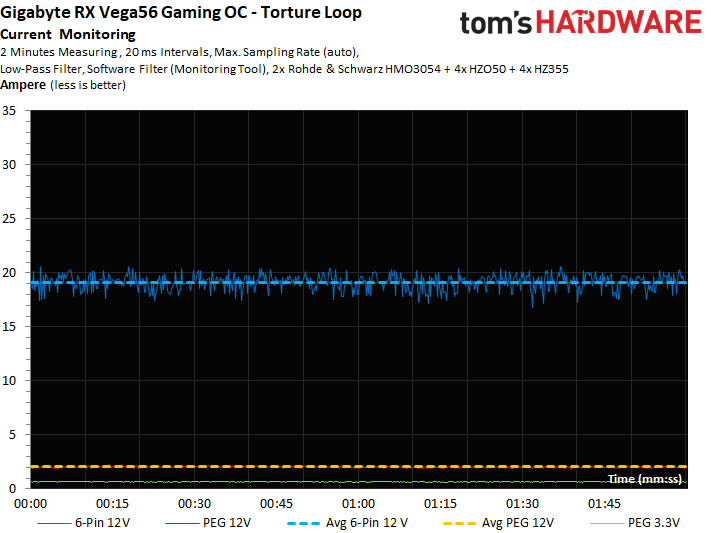

Again, our current readings track the power consumption graph closely and show no abnormalities.

Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.

marcelo_vidal With the prices of those GPUs :) I will get a 2400G and play at 720p. Maybe with a little tweaking I can boost to 1920x1080.

Sakkura This thing about board partners only getting a few thousand Vega 10 GPUs goes back many months now. Has AMD just not been making any more? What the heck is going on?

Seems like Gigabyte did a really nice job making an affordable yet effective cooling solution for Vega 56. It's really a shame it goes to waste because there just aren't any chips available.

CaptainTom To those complaining about the low supply (and resulting high prices) of AIB cards:

It's because the reference cards are still selling very well (at least for their supply). If vendors can sell the $500 Vega 64 for $600 and sell out, why would they bother wasting time on any other model?

g-unit1111, quoting CaptainTom: "To those complaining about the low supply (and resulting high prices) of AIB cards: It's because the reference cards are still selling very well (at least for their supply). If vendors can sell the $500 Vega 64 for $600 and sell out, why would they bother wasting time on any other model?"

That's because miners are the ones buying the cards as fast as they come in stock. It's us gamers and enthusiasts that are waiting for the high performance models. Bad thing is, we don't matter to the bottom line. All they see and want is our precious money, and they don't care what model they sell to us.

aelazadne Because the vendors making money doesn't equal AMD making money. AMD is losing market share in the GPU scene. With Vega unable to keep up with demand, AMD is losing customers who would have bought Radeons but instead go with Nvidia due to availability. The lack of availability stemming from August, and the fact that even now in early 2018 the Vegas are overpriced and hard to find, ruins customer confidence. In fact, this situation is so bad that the only people benefitting are the people gouging on both Nvidia cards and Radeon cards, because at this point there is NO COMPETITION.

Also, just because you are gouging doesn't mean you are making money. AMD has to make money, and they need to sell these things in a certain volume. In their contracts with vendors, they will require their vendors to sell a certain amount of Vegas in order to order more. Due to scarcity, the only companies making money are retailers. AMD is going to have to address this issue, otherwise their investors will begin to come after them for bungling so badly that their market share dropped so far. Literally, the Intel screw-up plus Ryzen being good has been a godsend for AMD. They do not need a declining GPU market share sparking a debate with investors over whether AMD should get out and play the Intel game.

bit_user I really appreciate the thorough review.

The superimposed heatpipes vs. GPU picture was a very nice touch. For any of you who missed it, check out page 6 (Cooling & Noise) about 1/3 or 1/2 of the way down.

bit_user, quoting CaptainTom: "It's because the reference cards are still selling very well (at least for their supply). If vendors can sell the $500 Vega 64 for $600 and sell out, why would they bother wasting time on any other model?"

I think you're too cynical. It's an ASIC supply problem. The AIB partners would probably spend the time if they could get enough GPUs to sell custom boards in enough volume to offset the overhead of doing the extra design work.

The only real way out of this is for AMD to design a more cost-effective chip with the graphics units removed. That will divert miners' interest away from their graphics products.

bit_user Almost as surprising to me as how much more oomph they got out of Vega 56 is how well the stock Vega 64 is holding up against stock GTX 1080. Is it just me, or did AMD really gain some ground since launch?