Q&A: Tom's Hardware And Kingston On SSD Technology


A Question Of Endurance

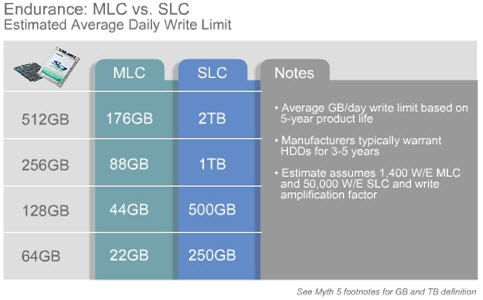

TH: So the risk, then, is that I won't be able to write to it. But there’s a linear relationship between endurance and capacity, right? As you double the capacity, you double its expected lifespan?

TC: Yes. Data endurance numbers double for the same amount of data read and written per day.

TH: Given that, how many years are we expecting from the current crop of drives?

LK: Well, that's the hard part. You almost have a sliding scale if we’re talking about client usage models to server usage models. They’re very different. The worst kind of writes that you can apply to an SSD are random. You will wear a drive out quicker that way. If all the writes are sequential, that's the best case scenario for an SSD. A typical client workload is probably a mixture of those—not all random, not all sequential.

TC: For example, with our new V+ SSD, the expected life works out like this: usually, we say the effective read/write duty is about 20% of the power-on hours. With this, normal operation is 8,760 hours per year, and this allows you to read and write 20 gigabytes per day in operation. With these numbers, we know that an SSD’s expected product life is actually much better than a traditional hard drive.
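The arithmetic behind these figures can be sketched roughly as follows. Only the 20% duty cycle, the 8,760 hours per year, and the 20 GB per day come from the interview. The capacity, program/erase cycle count, and write amplification factor are hypothetical placeholders, not Kingston specifications, and the sketch conservatively treats the whole 20 GB as writes.

```python
HOURS_PER_YEAR = 24 * 365   # 8,760 power-on hours, as quoted in the interview
DUTY_CYCLE = 0.20           # share of power-on hours spent on reads/writes (interview)
WRITES_GB_PER_DAY = 20      # daily volume quoted in the interview, assumed all writes


def active_hours_per_year(hours_per_year=HOURS_PER_YEAR, duty_cycle=DUTY_CYCLE):
    """Hours per year the drive actually spends reading or writing."""
    return hours_per_year * duty_cycle


def estimated_life_years(capacity_gb, pe_cycles, write_amplification,
                         writes_gb_per_day=WRITES_GB_PER_DAY):
    """Rough lifespan estimate under ideal wear leveling.

    capacity_gb, pe_cycles and write_amplification are hypothetical inputs:
    - pe_cycles: program/erase cycles each cell is assumed to tolerate
    - write_amplification: NAND bytes written per host byte written
      (higher for random workloads, closer to 1 for sequential ones)
    """
    total_host_writes_gb = capacity_gb * pe_cycles / write_amplification
    return total_host_writes_gb / (writes_gb_per_day * 365)


# Hypothetical example values, chosen only to illustrate the scaling.
small = estimated_life_years(capacity_gb=64, pe_cycles=5000, write_amplification=10)
large = estimated_life_years(capacity_gb=128, pe_cycles=5000, write_amplification=10)

print(f"Active hours per year: {active_hours_per_year():.0f}")
print(f"64 GB drive:  {small:.1f} years")
print(f"128 GB drive: {large:.1f} years")
```

With these placeholder inputs, the 64 GB case comes to roughly 4.4 years and the 128 GB case to roughly 8.8, which reflects the linear capacity-to-endurance relationship discussed above. Raising the write amplification factor, as a heavily random workload would, shortens the estimate proportionally.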

TH: Let’s circle back to that difference in the effect on endurance of sequential versus random writes. If the drive controller dictates how every bit gets written to the drive and wears the memory evenly, why is there a difference between sequential and random?

TC: Let's put it this way. Most of the time with your hard drive, you need an operating system. The random reads and sequential reads have a major effect on the system’s behavior. When you boot up your computer, you are doing a sequential read. Same with hibernating and application loads. But a lot of times, you also need to access your information, the user data, and that's a random read because your data is spread out everywhere. Now, with a hard drive, the arm has to move. With an SSD, there are no moving parts, so no chance of mechanical failure. So, compared to the hard drive, an SSD would provide better performance.


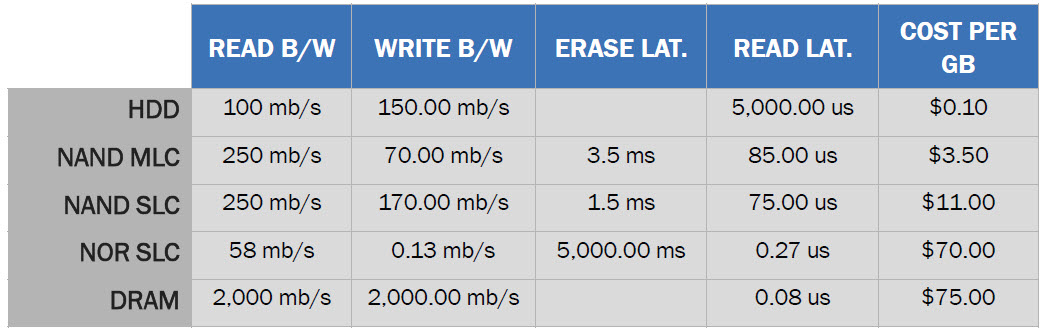

TH: Does multi-bit MLC enter into this discussion? Is having three—or later, four—bits per cell going to change the endurance dynamic, particularly when weighed against SLC?

TC: Three bits per cell is already in the market, but you also have to look at density—32nm or 25nm. That provides the density for more stack. The use of two or three bits per cell is just a current trend. The NAND semiconductor industry is more interested in how it can provide maximum data per square inch. Typically, we are looking for more cell density than bits on this point.

TH: But will 3-bit have an impact on endurance? With more electrons being pushed through each floating gate, does that erode the oxide layer more quickly?

TC: No, actually, because right now all NAND has ECC correction. You’re talking about losing all data endurance, so you cannot recover it. You’re talking about the voltage converting in the cell.

LT: But Tony, if we were to implement today's 3-level cell NAND on an SSD, would the endurance of that be less compared to a current MLC product?

TC: I would say that would be partially true. With data endurance there are two different issues. One is how long it will last, and one is how to correct errors if they appear.


nonxcarbonx Kingston's mitigation software is the best I've seen. On another note, is there a link to the destruction video?

pink315 "Now, with a hard drive, the arm has to move. Now, with a hard drive, the arm has to move."

I'm not sure if you were trying to be dramatic, or if you just accidentally wrote the same thought twice. Just pointing it out.

ta152h One way to preserve some of the life of any hard drive is to shut off virtual memory. Most computers don't need it, and if you do, then you're probably better off getting more memory anyway.

The ideal thing for booting up fast would be to go back to using core memory :-P. RAM that doesn't lose power when you turn it off is pretty cool. Low power, low heat, and would impress people when you say "Oh, that? It's my core memory array.". You'd get dates for sure. Can't say what they'd look like, or if they'd be sane. Or even female :( .

Still, I'd buy it. Cache handles most reads anyway, and I'm too old fashioned to feel something is a computer without some form of magnetic storage in it.

outlw6669 Fun read but nothing really new...

I like how good they are at dodging the tough questions.

What value is there in Kingstons Intel based SSD's vs Intel original?

Well, they helped Kingston launch a very strong product :P


neiroatopelcc Maybe it's just me, but I don't feel they properly answered the question of why there's a wear difference between sequential and random ...

mitch074 I solved my netbook's boot times...

It runs Linux, with a compressed kernel image.

Looks like real mode disk access, registry hives, antivirus and such do slow Windows boot times.

vvhocare5 I guess I'm not a fan of these types of interviews. The interviewee is really just trying to get advertising for their product and they only say good things and gloss over the negatives. They also have some good one liners they toss out, but that's about it.

I would prefer to see the product benchmarked and compared on price... and then let us decide how we are going to spend our money.

anamaniac Interesting interview.

Keep them coming. =)

Now I have the urge to go buy a 256GB SLC drive and play flaming baseball with it... I probably shouldn't...

El_Capitan I like how they say, "The worst kind of writes that you can apply to an SSD are random. You will wear a drive out quicker that way". However, Kingston and Intel put all their advertising efforts into promoting the speed of their IOPS for their SSD's for server environments. That means they want you to buy their product to use it so it wears out quicker... which means you need to buy another one to replace it. Now that's a wicket smart business strategy.