Nvidia GeForce GTX 1070 Graphics Card Roundup

Gigabyte GTX 1070 Mini ITX OC


Even the longest cards can fall short when it comes to mini-ITX enclosures. Suddenly there are other attributes that matter more than just performance, like length, power, and where all of that hot air goes after it's blown off the GPU.

With its GeForce GTX 1070 Mini ITX OC, Gigabyte adapts well to the limitations of small cases, creating a card that's short and still wields Nvidia's powerful GP104 processor. That leaves us with just two questions: how well can the chip be cooled, and how well does Gigabyte manage the trade-off between less surface area and noise output?

This board's price makes it an interesting offering, since Gigabyte deliberately avoids gimmicks and concentrates on the qualities that matter.

Technical Specifications


Exterior & Interfaces

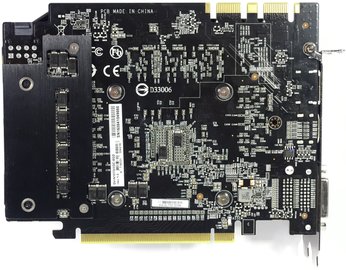

The cooler cover is made of black plastic with orange lacquered highlights. Weighing just 605g, this card is a featherweight among the other 1070s. A length of just 6 3/4 in (17.2 cm) also makes the GeForce GTX 1070 Mini ITX OC conveniently short. The height (12.5cm from the upper edge of the motherboard) and depth (3.5cm, the same as most dual-slot cards) are perfectly acceptable.

Gigabyte deliberately doesn't use a continuous single-piece backplate; in ITX projects, thicker structures attached to the back of a card often cause collisions with CPU coolers or memory. The smaller plate at the end of the card shouldn't pose any problems, and it does serve a real purpose.

The top of the card bears an unlit Gigabyte logo, and the eight-pin auxiliary power connector sits at the top's back edge. We can appreciate the simple, yet functional design. Gigabyte even held off on eye-catching lighting, since few mini-ITX enclosures are windowed.

Horizontally-oriented fins direct heated air to the back and front of the card. Accordingly, the slot plate has some openings for ventilation.

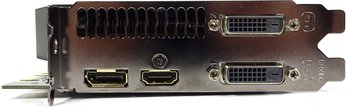

You get a total of four display outputs, all of which can be used simultaneously in a multi-monitor setup. In addition to the two dual-link DVI-D connectors, there is also one full-sized HDMI 2.0b port and a DisplayPort 1.4-capable interface.

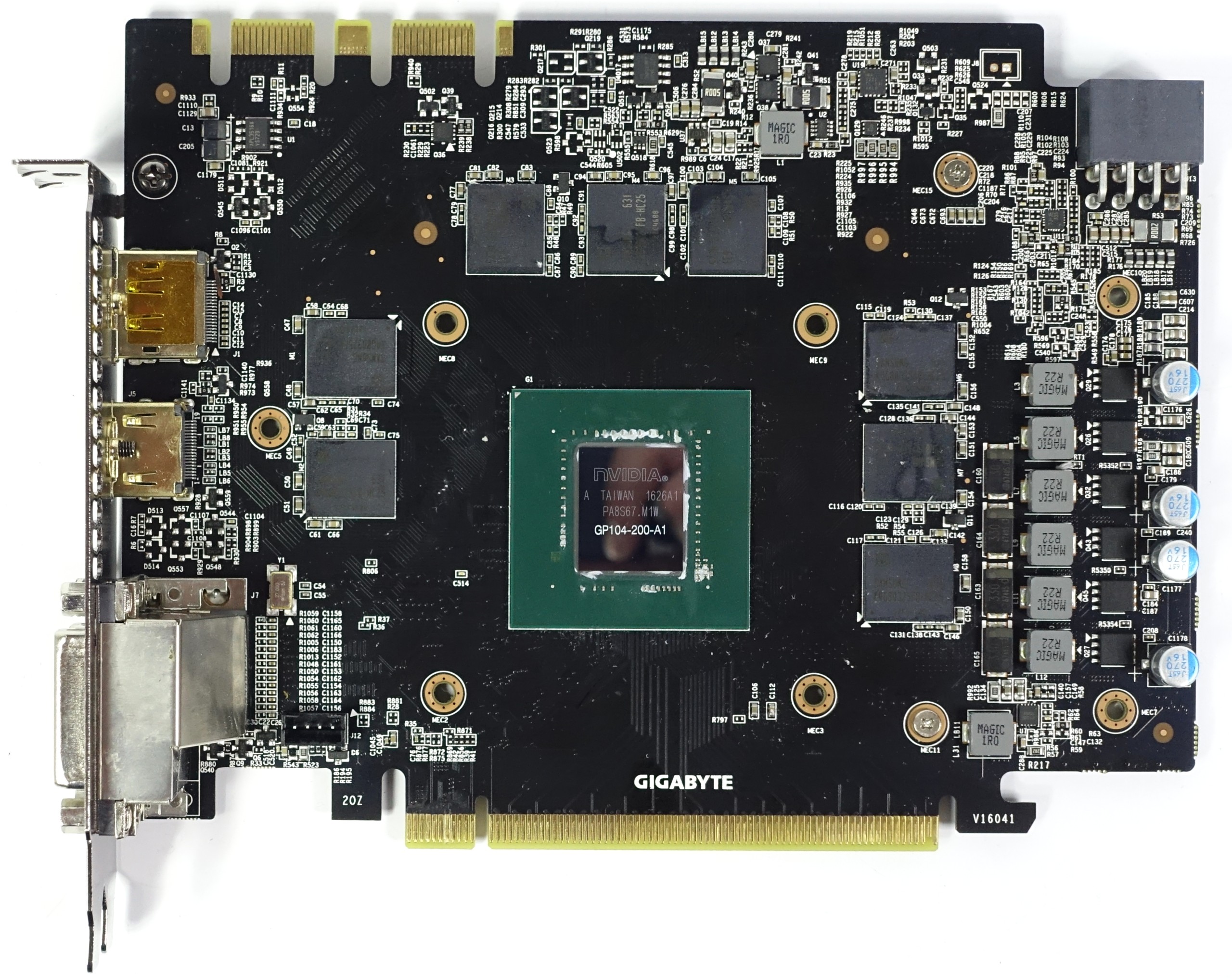

Board & Components

Gigabyte designed an extra-short PCB for this card. The true art is finding a configuration in which the VRMs are arranged in such a way that potential hot-spots are elegantly avoided, despite the lack of space. In practice, Gigabyte's implementation works well.

Like Nvidia's GeForce GTX 1070 Founders Edition, this card uses eight Samsung K4G80325FB-HC25 modules, each able to store up to 8Gb (32x 256Mb).
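As a quick sanity check on total capacity, the module count and per-module density above can be multiplied out. This is only a minimal sketch; the constant and variable names are our own and purely illustrative.

```python
# Total graphics memory from the module count and per-module density.
MODULES = 8            # eight Samsung memory packages on the board
DENSITY_GBIT = 8       # each package stores 8 Gb (gigabits)
BITS_PER_BYTE = 8

total_gbit = MODULES * DENSITY_GBIT
total_gbyte = total_gbit / BITS_PER_BYTE

print(f"{total_gbit} Gb = {total_gbyte:.0f} GB")
```

Eight 8Gb packages add up to 64Gb, which is the card's 8GB of graphics memory.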

Such a short card presents an issue with memory placement. On one hand, the distance to the GPU cannot be reduced. On the other, the modules must be kept as far away as possible from the voltage regulation circuitry, or else they overheat.

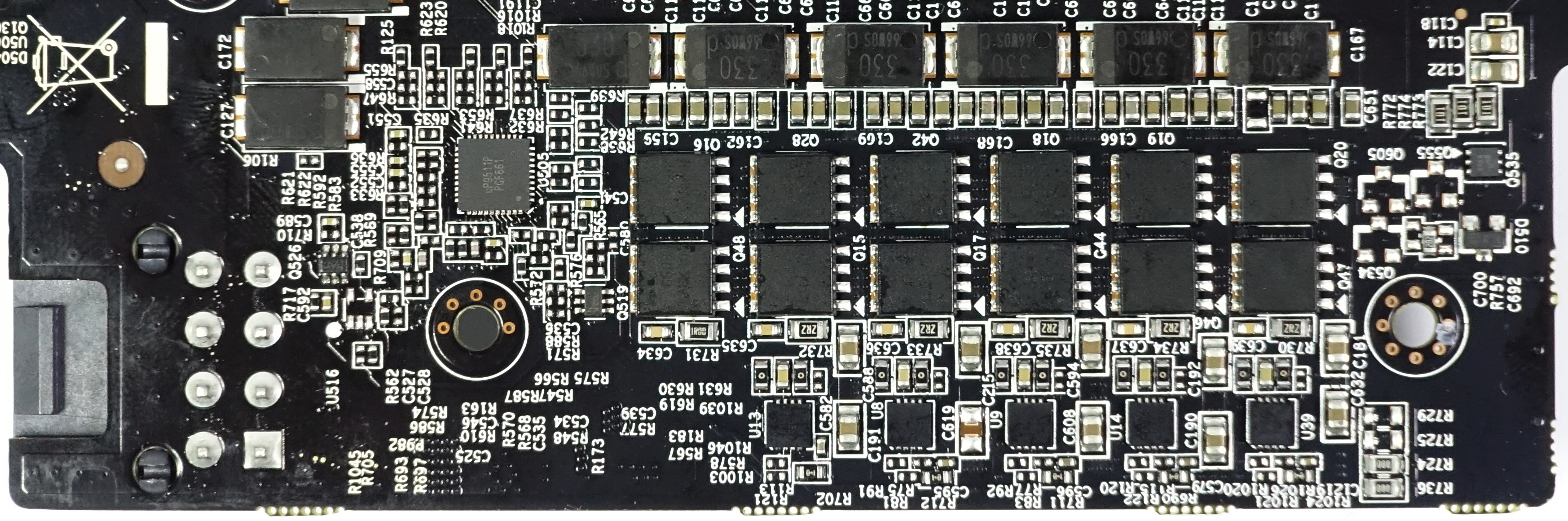

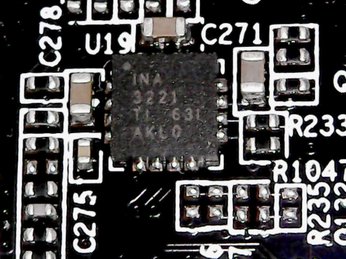

The 4+1-phase system relies on the same uP9511P for PWM control as Nvidia's reference card. Gigabyte places this chip on the back of its PCB, though. The memory's one power phase is controlled by a uP1728 on the top side of the board. The converter rails are doubled, a trick that allows each of them to be advertised as two phases, rather than one. Of course, Gigabyte uses an obligatory INA3221 for monitoring current, too.

Gigabyte implemented a voltage regulation solution that allows it to keep the card short without risking thermal issues. Foxconn coils and 6414 high-side MOSFETs are placed on the front of the PCB, while the five gate drivers responsible for controlling the individual phases are banished to the back.

This is also where the 6508 low-side MOSFETs are found. Two MOSFETs are used for each converter circuit, halving the already low internal resistance of the circuits a second time.

The advantage of this setup is that the high-side MOSFETs can be cooled from above by the cooler, while the low-side chips benefit from increased surface area due to the doubling of MOSFETs. This allows them to be cooled by a small screwed-on plate, preventing problematic hot-spots.
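The halving of resistance from paired low-side MOSFETs follows from the usual rule for parallel resistances. The sketch below illustrates it with a made-up on-resistance; the 4 mOhm figure is an assumption for illustration, not a datasheet value for the parts on this board.

```python
def parallel_resistance(*resistances_ohm):
    """Equivalent resistance of resistors (or MOSFET on-resistances) in parallel."""
    return 1.0 / sum(1.0 / r for r in resistances_ohm)

# Hypothetical on-resistance for one low-side MOSFET; not a datasheet value.
R_DS_ON_OHM = 0.004

single = R_DS_ON_OHM
paired = parallel_resistance(R_DS_ON_OHM, R_DS_ON_OHM)

print(f"single: {single * 1000:.1f} mOhm, paired: {paired * 1000:.1f} mOhm")
```

With two identical devices sharing the current, the equivalent resistance is half that of one device, so conduction losses for a given current drop accordingly and are spread over two packages.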

Furthermore, two capacitors are installed right below the GPU to absorb and equalize peaks in voltage.

Power Results

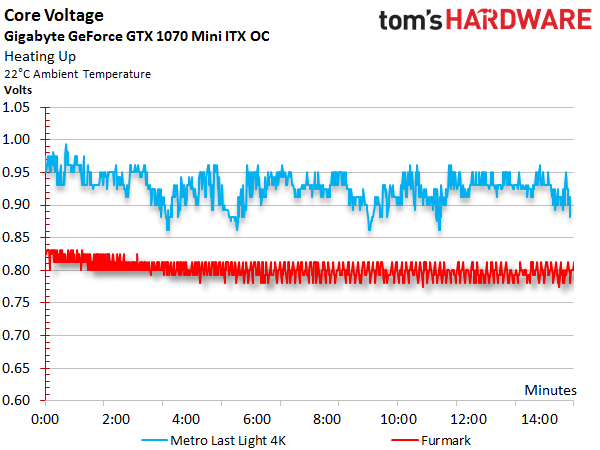

Before we look at power consumption, we should talk about the correlation between GPU Boost frequency and core voltage, which are so similar that we decided to put their graphs one on top of the other. This also shows that both curves drop as the GPU's temperature rises.

After warming up in our gaming workload, the GPU Boost frequency fluctuates between 1778 and 1860 MHz. Under a more constant and taxing load, the clock rates drop significantly to a range between 1584 and 1607 MHz.

The voltage measurements respond similarly. While we observe ~0.975V in the beginning, this value later drops as low as 0.893V. It is easy to tell that Gigabyte had to put clock rates on a diet in order to manage power consumption and thermal output.

Combining the measured voltages and currents allows us to derive a total power consumption we can easily confirm with our instrumentation by taking readings at the card's power connectors.
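Deriving total power from voltage and current amounts to summing V x I over every supply rail. Here is a minimal sketch of that arithmetic; the rail names and sample values are hypothetical placeholders, not readings from our test equipment.

```python
# Hypothetical per-rail samples as (volts, amps); not measurements from this review.
rails = {
    "slot 12V": (12.0, 3.0),
    "slot 3.3V": (3.3, 0.5),
    "8-pin 12V": (12.0, 9.5),
}

def total_power_watts(samples):
    """Sum V * I over every supply rail."""
    return sum(volts * amps for volts, amps in samples.values())

print(f"{total_power_watts(rails):.1f} W")
```

In practice, each rail is sampled many times per second and the products are averaged over the measurement interval, but the per-sample calculation is the same.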

As a result of restrictions imposed by Nvidia, whereby the lowest attainable frequencies are sacrificed to hit higher GPU Boost clock rates, the power consumption of many factory-overclocked cards is disproportionately high when they're idle. Gigabyte achieves a good compromise, though. Its lowest frequency is 164 MHz, only slightly above Nvidia's reference.

| Workload | Power Consumption |
|---|---|
| Idle | 12W |
| Idle Multi-Monitor | 14W |
| Blu-ray | 12W |
| Browser Games | 103-114W |
| Gaming (Metro Last Light 4K) | 155W |
| Torture (FurMark) | 155W |

These charts go into more detail on power consumption at idle, during 4K gaming, and under the effects of our stress test. The graphs show how load is distributed between each voltage and supply rail, providing a bird's eye view of load variations and peaks.

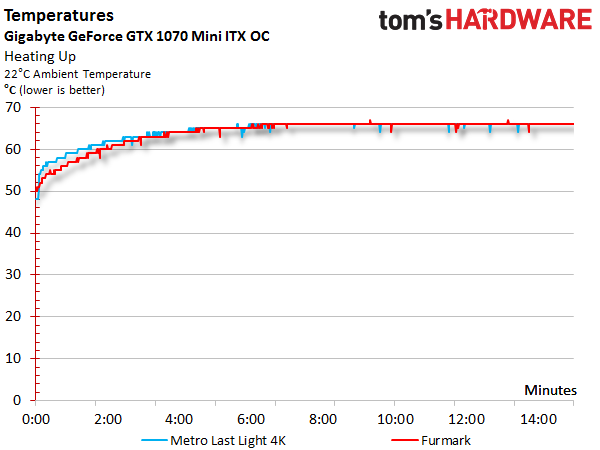

Temperature Results

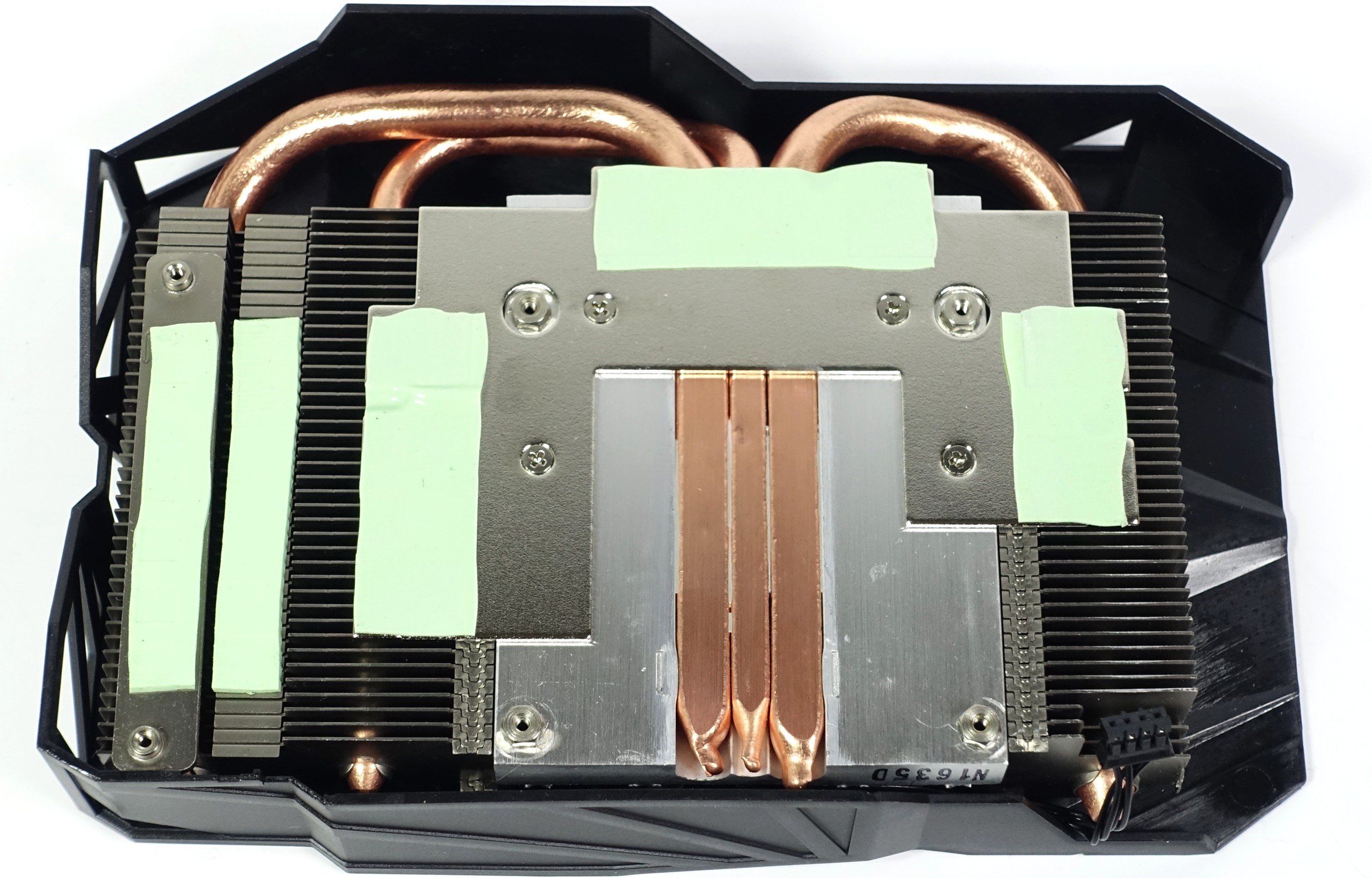

Naturally, power consumption directly affects temperatures. The question of how well Gigabyte's compact GeForce GTX 1070 Mini ITX OC copes with thermal energy can only be answered by looking closely at its cooling solution. The company employs two 1/3-inch (8mm) and one 1/4-inch (6mm) heat pipes made of copper-composite material. They make direct contact with the GPU, accelerating dissipation out through the heat sink's fins.

The small plate for cooling the memory modules is seated on an aluminum heat sink. Three heat pipes are embedded in it, and they're directly connected to the finned cooler.

Gigabyte actively cools the six high-side MOSFETs using the cooler's integrated heat sink. It also draws heat from the coils with thermal pads, and applies slight pressure through them to combat vibration, which we've come to know as coil noise. This solution kills two birds with one stone; it eliminates hot-spots and noise simultaneously.

The smaller cooling plate on the back covers all 12 low-side MOSFETs and the gate drivers, dissipating excess heat through the back of the card. As you might imagine, this helps with the small board's thermal challenges quite a bit. Registering 151°F (66°C) on an open test bench, and 154°F (68°C) to 156°F (69°C) in a closed case, the GPU's temperatures are completely acceptable.

A relatively high idle temperature between 118°F (48°C) and 122°F (50°C) is attributable to the card's passive mode. Gigabyte's small cooler doesn't really seem suitable for that purpose. In a compact enclosure, we'd suggest keeping the fan spinning at all times, even slowly. Fortunately, this behavior can be adjusted manually through software.

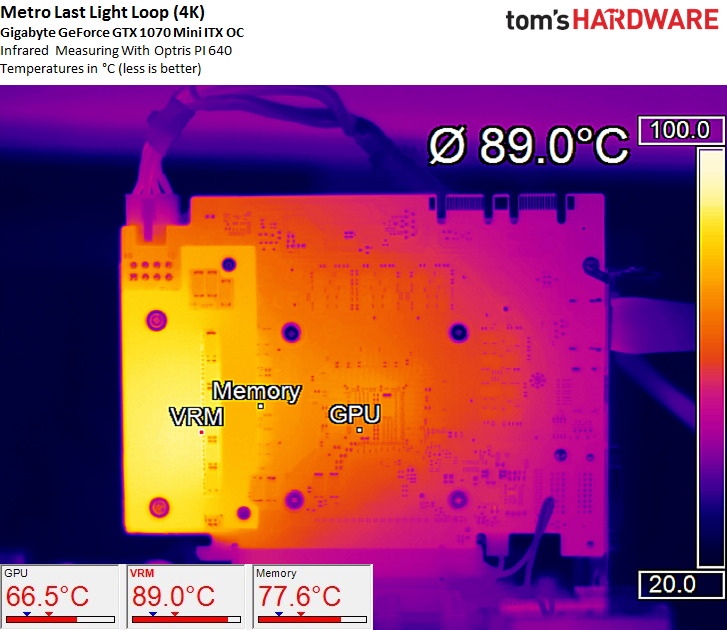

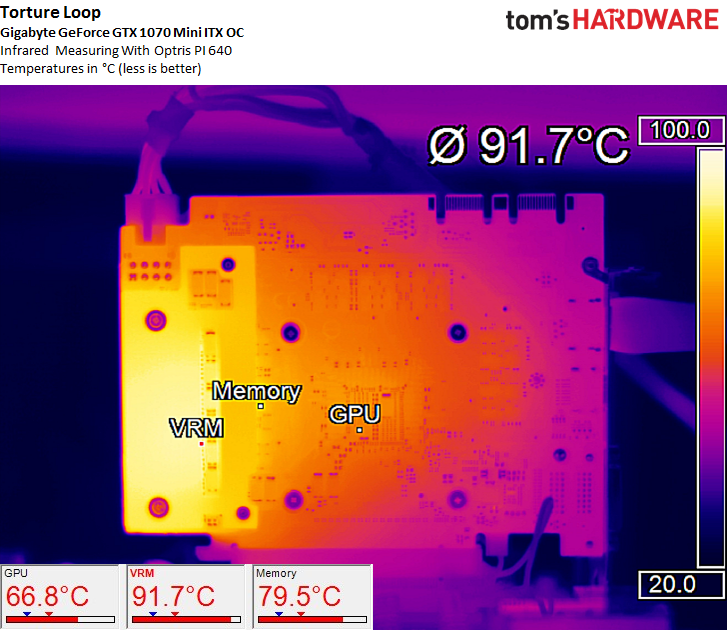

The infrared images reveal no troublesome hot-spots. Nvidia's GPU, Samsung's memory, and the voltage regulators are cooled well, particularly given a dense PCB.

Temperatures rise a bit during our stress test, but they're not high enough to cause concern. The bottom line is that Gigabyte's concept works.

Sound Results

Since the temperatures during our gaming and torture workloads are similar, the observed fan speeds are about the same as well.

There is no start-up peak in the graph, which would normally indicate the jump from passive to active mode. The installed 90mm fan tops out at 3300 RPM and is stable at speeds as low as 400 RPM. As a result, no tricks are necessary to get it up and running. Gigabyte keeps a good grip on hysteresis as well, and that might be helped by the fact that the fan is activated early, just below 133°F (56°C).
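Hysteresis here means the fan switches on at one temperature and off at a lower one, so it doesn't flutter around a single threshold. The sketch below shows the general idea only. It is not Gigabyte's firmware logic; the switch-on value is taken from the roughly 56°C we observed, and the switch-off value is a made-up number for illustration.

```python
def next_fan_state(fan_on, temp_c, on_at_c=56.0, off_at_c=46.0):
    """Return whether the fan should spin, using two thresholds (hysteresis).

    on_at_c reflects the roughly 56 degrees C activation point noted above;
    off_at_c is a hypothetical value chosen for illustration.
    """
    if not fan_on and temp_c >= on_at_c:
        return True
    if fan_on and temp_c <= off_at_c:
        return False
    return fan_on  # inside the band: keep the current state

state = False
history = []
for temp in [48, 55, 57, 52, 47, 45, 50]:
    state = next_fan_state(state, temp)
    history.append(state)

print(history)
```

Once the fan starts at 57°C in this example, it keeps spinning through 52°C and 47°C and only stops at 45°C. That gap between the two thresholds is what prevents repeated start-stop cycles.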

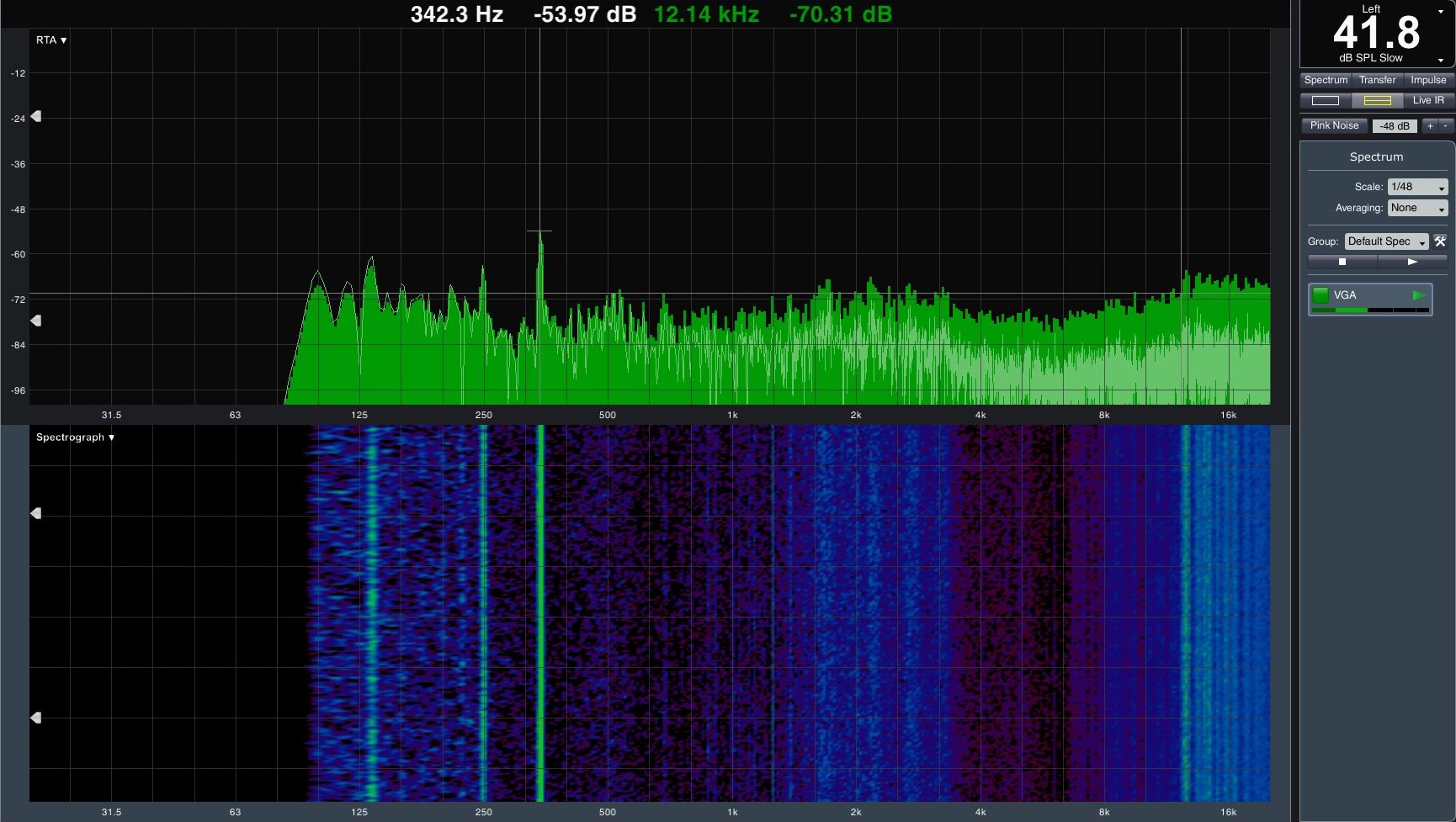

Although the 41.8 dB(A) we measure after a prolonged workload isn't particularly quiet, it's also not loud enough to bother us. Even Nvidia's GeForce GTX 1070 Founders Edition is significantly louder. The high-frequency parts of the VRM still generate quantifiable noise, but they're barely audible. Low-frequency buzzing is rather moderate, and the part that actually is measurable is due to airflow.

Gigabyte does a good job with this small card's cooling; it'd be hard for us to suggest significant improvements.


Igor Wallossek wrote a wide variety of hardware articles for Tom's Hardware, with a strong focus on technical analysis and in-depth reviews. His contributions have spanned a broad spectrum of PC components, including GPUs, CPUs, workstations, and PC builds. His insightful articles provide readers with detailed knowledge to make informed decisions in the ever-evolving tech landscape.


adamovera Archived comments are found here: http://www.tomshardware.com/forum/id-3283067/nvidia-geforce-gtx-1070-graphics-card-roundup.html

TheRev MasterOne Asus ROG Strix GeForce GTX 1070 and Gigabyte GeForce GTX 1070 G1 Gaming pics are switched! Did I win something? a job?

eglass Disagree entirely about the 1070 being good value. It's the worst value in the 10-series lineup. $400 for a 1070 is objectively a bad value when $500 gets you into a 1080.

adamovera 19761809 said: "Asus ROG Strix GeForce GTX 1070 and Gigabyte GeForce GTX 1070 G1 Gaming pics are switched ! Did I win something? a job?"

The filenames of the images are actually swapped as well, weird - fixed now, thanks!

barryv88 Are you guys serious!?? You recommend a 1070 card that costs $530 which isn't even available in the U.S? That sorta cash gets you a much quicker GTX 1080! The controversy on this site is just non stop. If your BEST CPU's list wasn't enough already...

bloodroses I wonder how the Gigabyte 1070 mini compares to the other mini cards like the Zotac and MSI unit?

adamovera 19761907 said: "Are you guys serious!?? You recommend a 1070 card that costs $530 which isn't even available in the U.S? That sorta cash gets you a much quicker GTX 1080! The controversy on this site is just non stop. If your BEST CPU's list wasn't enough already..."

This is a roundup of all the 1070's we've tested. The graphics card roundups originate with our German bureau and are re-posted in the UK, so they'll sometimes include EU-only products - I'm guessing they're appropriately priced to the competition in their intended markets.

The Palit received the lowest level award - the Asus, the MSI, and one of the Gigabyte boards are better options.

JackNaylorPE 19761822 said: "Disagree entirely about the 1070 being good value. It's the worst value in the 10-series lineup. $400 for a 1070 is objectively a bad value when $500 gets you into a 1080."

The 1080, like its predecessors (780 and 980), has consistently been the red-headed stepchild of the nVidia lineup. So much so that nVidia even intentionally nerfed the performance of the x70 series because its performance was so close to the x80.

The 1080 has dropped in price because, sitting as it does between the 1080 Ti and the 1070... it doesn't exactly stand out. When the 780 Ti came out, the price of the 780 dropped $160 overnight, so much so that I immediately bought two of them and the two sets of game coupons knocked $360 off my XMas shopping list. At a net $650, it was a good buy.

Using the 1070 FE as a reference and the relative performance data published by techpowerup, for example... (I used the MSI Gaming models since that is one model line where TPU reviewed all 3 cards.)

The $404 MSI 1070 Gaming X is 104.2% as fast as the 1070 FE

The $550 MSI 1080 Gaming X is 128.2% as fast as the 1070 FE

The $740 MSI 1080 Ti Gaming X is 169.5% as fast as the 1070 FE

So the performance per dollar for comparable quality designs is:

MSI 1070 Gaming X = 104.2 / $404 = 0.258

MSI 1080 Gaming X = 128.2 / $550 = 0.233

MSI 1080 Ti Gaming X = 169.5 / $740 = 0.229

Even at $500 .. the 1080 only comes in 2nd place at 0.256, so no, the better value argument doesn't hold, even assuming we were getting an equal quality card.
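The performance-per-dollar figures quoted in this comment can be reproduced with a few lines. This is just a sketch of the arithmetic using the prices and relative-performance numbers exactly as given above; the function and variable names are illustrative.

```python
# Relative performance (1070 FE = 100) and prices as quoted in the comment above.
cards = {
    "MSI 1070 Gaming X": (104.2, 404),
    "MSI 1080 Gaming X": (128.2, 550),
    "MSI 1080 Ti Gaming X": (169.5, 740),
}

def perf_per_dollar(relative_perf, price_usd):
    """Relative performance points bought per dollar spent."""
    return relative_perf / price_usd

for name, (perf, price) in cards.items():
    print(f"{name}: {perf_per_dollar(perf, price):.3f}")

# The $500 scenario for the 1080 discussed above.
print(f"1080 at $500: {perf_per_dollar(128.2, 500):.3f}")
```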

Looked at other comparable models as a means of comparison and they are for the most part equal or higher ....

Strix at $420, $550 and $780

AMP at $435, $534 and $750

Now with any technology, eking those last bits of performance out of anything always comes at an increased cost. You pay more of a cost premium going from Gold to Platinum rating on a PSU than you do from Bronze to Silver or even Gold. It's simply another example of the Law of Diminishing Returns. So we should expect to pay more for each performance gain with each incremental increase, and that holds here. You'd expect that for each increase in performance the % increase in price per dollar would get bigger. But the x80 is quite an aberration.

We get a whopping 10.7% drop of 0.025 from the 1070 to the 1080

We get a rather teeny 1.7% drop of 0.004 from the 1080 to the 1080 Ti

Therefore, logically.... you are paying a 10.7% cost penalty for the increased performance to move up from the 1070 to the 1080 ... whereas the cost penalty for the increased performance to move up from the 1080 to the 1080 Ti is only 1.7%. This is why each time the Ti has been introduced, 1080 sales have tanked.

Another way to look at it...

1070 => 1080 = 23% performance increase for $146 ROI = 15.8%

1080 => 1080 Ti = 32% performance increase for $190 ROI = 16.8 %
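The two step-up figures above can be checked the same way. In this sketch the "ROI" values are read as the whole-percent performance gain divided by the extra dollars spent, which gives percentage points per dollar (0.158 and 0.168) rather than a true percentage; that reading is an assumption about how the comment arrived at 15.8 and 16.8.

```python
def step_up(perf_from, price_from, perf_to, price_to):
    """Return (whole-percent gain, extra dollars, gain per extra dollar)."""
    gain_pct = round((perf_to / perf_from - 1) * 100)  # rounded, as in the comment
    extra_usd = price_to - price_from
    return gain_pct, extra_usd, gain_pct / extra_usd

steps = {
    "1070 => 1080": (104.2, 404, 128.2, 550),
    "1080 => 1080 Ti": (128.2, 550, 169.5, 740),
}

for label, figures in steps.items():
    gain, extra, per_dollar = step_up(*figures)
    print(f"{label}: {gain}% for ${extra}, {per_dollar:.3f} points per dollar")
```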

It's not a matter of **if** you can get **a** 1080 at $500, it's whether you can get the one you want. How is it that the $550 models have more sales than the less expensive ones? Some folks don't care about noise, some folks don't OC, some folks hope they will be able to get the full performance available to us **if** someone ever comes out with a BIOS editor. And yes, there will be cards that are heavily discounted for any number of reasons ... low factory clock, noise or heat concerns, some have taken some hits from bad reviews or are discounted simply because sales are poor .... but if a card is selling well below the average price it is because it's not as well made or just isn't selling for real or imagined issues (an example being EVGA: the SC / FTW ACX designs are now fixed, but EVGA still has a black eye from the earlier cooler problems, and if buying EVGA, peeps want iCX). Finally, the 1080 bears the burden of being compared with the 780 and 980, which again got lost between the higher / lower cards.

Given the above ROI numbers, I am surprised that all the 1080s have not dropped below $500. But to my eyes, the 1080 only starts to make sense when the cost is below $520 and **the ones I'd buy** just aren't there yet.


Adroid Yea I refuse to buy a 1070 because they are overpriced, period. I almost bought a 1080 but judging from the performance difference it simply wasn't worth it, either. There is not much a 1080 will do that a 1070 won't. What I mean is - a 1060 will run 1080p fine. With that in mind, a 1080 gives very marginal benefit at 2k, and neither one will run 4k smoothly - so what's the point.

If the 1080 was a 350$ card, I might have bit, but as it stands now I'll be waiting for the 1080ti to drop a bit, which can run most games in 2k over 120fps - and justifying an upgrade from a GTX 700 series card. I'm not going to pay over $400 for a card that won't smoke my GTX 770... I can play all games on moderate settings now, so I want ultra settings at 2k that make use of a 144hz monitor - or bust.

tyr8338 I'm using gigabyte 2 fan 1070 for over a year now and it's really good, it's good overclocker and is running at 1974 mhz overclock 24/7 and 8600 on ram, probably it would be able to go even higher but it's fine for me :) It's quiet but at around 50% fan speed it produces some strange vibration sound sometimes, it doesn't bother me all that much tbh but it can be a little annoying.