Nvidia GeForce GTX 970 And 980 Review: Maximum Maxwell

New Features

Nvidia's GM204 includes a smorgasbord of new functionality, and it's been a while since I've reviewed a GPU that brings so many features to the table. Some of these features will provide an immediate benefit to users right out of the box, while other functionality is definitely more forward-looking in scope and implementation. Let's start with the immediate benefits and work our way outward.

Dynamic Super Resolution

Dynamic Super Resolution (DSR) is a feature that has the potential to reduce the jagged, stair-stepped edges on rendered objects, an effect known as 'aliasing'. Put simply, DSR is a form of anti-aliasing.

Those of you familiar with anti-aliasing may be aware of the oldest, most visually appealing, and probably the most demanding anti-aliasing technique available: SuperSampling Anti-Aliasing, or SSAA. SSAA renders a game at a higher resolution than the game is set to display, and downsamples the result to the target resolution. As a result, aliasing artifacts are greatly reduced. The biggest problem with SSAA is that it is so demanding on hardware resources that most game engines ignore it entirely. It can sometimes be forced through the driver, but this can be unreliable.

What Nvidia offers with DSR is a way to deliver supersampling anti-aliasing while bypassing the game's built-in anti-aliasing limitations completely. With DSR, the GeForce card fools the graphics engine into believing that the monitor can display higher resolutions than it actually can. The GeForce GTX 970/980 takes that high-resolution output and downsamples it to the monitor's native resolution in real time. This provides the anti-aliasing benefit of SSAA without the need to program support into the software. Nvidia claims that as long as a game engine is capable of rendering to higher resolutions, DSR will work.
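To make the downsampling step concrete, here is a minimal Python sketch of the idea: render at a higher resolution, then average blocks of pixels down to the native grid. The function name and the simple box filter are assumptions for illustration only. Nvidia has not told us what filter DSR actually uses, beyond the fact that its sharpness is adjustable.

```python
def downsample_box(image, factor):
    """Average each factor x factor block of a high-resolution image.

    `image` is a list of rows of grayscale values. The box filter is an
    assumption made for this sketch; it is not Nvidia's actual DSR filter.
    """
    height, width = len(image), len(image[0])
    if height % factor or width % factor:
        raise ValueError("image size must be a multiple of factor")
    out = []
    for y in range(0, height, factor):
        row = []
        for x in range(0, width, factor):
            # Collect every high-resolution pixel that lands in this
            # native pixel and blend them into a single value.
            block = [image[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) / len(block))
        out.append(row)
    return out


# A hard diagonal edge rendered at 4x4, shown on a 2x2 "monitor".
hi_res = [
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
native = downsample_box(hi_res, 2)
```

The two native pixels that straddle the edge end up with intermediate values instead of a hard 0 or 1, which is the softened edge that supersampling is after.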

What's the downside? Just as SSAA is a performance killer, so is DSR. Selecting a virtual 4K resolution in-game to view at 1080p will saddle you with the same performance penalty you'd get by actually rendering to a 4K monitor. There might even be a slight extra latency added during the downsampling step, and we'll test for that later. Because of this, DSR is most promising for use with light game engines or older titles. If you're getting 120 FPS in Counter-Strike at maximum detail settings, for instance, DSR might be an excellent option to squeeze more visual fidelity out of the game without dropping the frame rate too much.

The other limiting factor for DSR is interface scalability. Not all games that can technically handle a 4K display will be coded to support it from a user interface perspective. Many modern titles have flexible and even user-selectable user interface scaling, but older games that would otherwise benefit from DSR may prove to be practically unusable thanks to on-screen buttons and indicators that are too small to see at 3840x2160. Of course, DSR isn't limited to a particular resolution, so users could opt for other resolutions such as 2560x1440, although it would not be as effective from an anti-aliasing perspective.
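A quick bit of arithmetic shows why the choice of virtual resolution matters so much. The sketch below simply compares pixel counts; the helper name is ours, and treating pixel count as a stand-in for rendering cost is a rough assumption, since real frame rates depend on far more than resolution.

```python
def pixel_ratio(render_res, native_res):
    """Return how many rendered pixels there are per native pixel."""
    render_w, render_h = render_res
    native_w, native_h = native_res
    return (render_w * render_h) / (native_w * native_h)


# Virtual 4K and virtual 1440p, both displayed on a 1080p monitor.
ratio_4k = pixel_ratio((3840, 2160), (1920, 1080))
ratio_1440p = pixel_ratio((2560, 1440), (1920, 1080))
```

Virtual 4K gives every 1080p pixel four rendered samples to average, while 2560x1440 gives it fewer than two. That is the reason the lower setting is cheaper to run but smooths edges less.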

DSR is enabled through the GeForce Experience software, and the driver panel provides controls for tweaking the sharpness of the downsampling filter. While this feature will launch on the GeForce GTX 970 and 980, it will be rolled out to the rest of the GeForce product line over time.

We think this is a great technique that could bring much-needed visual improvement to many less visually advanced game titles. It's especially compelling if it turns out to be as backward-compatible as we're told it is.

Multi-Frame Sampled Anti-Aliasing

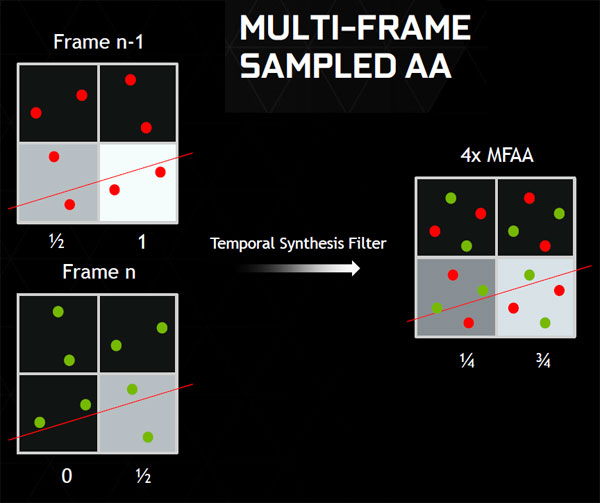

Multi-Frame Sampled Anti-Aliasing (MFAA) is yet another method to reduce pixel stepping artifacts, also introduced with the GeForce GTX 970 and 980. Like DSR, it is based on an older technique, and part of its appeal is how Nvidia has implemented it in a way that is transparent to the game engine to ensure compatibility. The key difference is that DSR is designed to increase fidelity in games that are graphically maxed out, at the cost of performance. MFAA, on the other hand, is intended to increase performance without sacrificing visual fidelity.

At its core MFAA is very similar to an anti-aliasing mode introduced with the Radeon X800 called Temporal MSAA. AMD has since abandoned this mode as its implementation had flaws, but Nvidia appears to have resurrected the technique with a simple improvement that might make all the difference: a temporal synthesis filter.

If you're not familiar with Temporal MSAA, it's a method to increase the number of apparent sample positions by switching the MSAA filter pattern every alternating frame. Thanks to visual persistence in the human eye, the result looks similar to higher numbers of filter samples. For example, 2x sample Temporal MSAA can appear similar to 4x MSAA, which requires twice the number of filter positions per pixel and results in a lower frame rate. The problem with Temporal MSAA as it was implemented in Radeon cards, though, is that visual artifacts can occur during subtle movement. In addition, high frame rates are a must. When performance drops below 60 FPS, the effect is turned off.
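The alternating-pattern idea is easy to demonstrate with a toy example. The Python sketch below measures how much of a pixel a vertical edge covers, first with four samples in one frame, then with two samples per frame using a different pair on even and odd frames. The sample positions are a rotated-grid layout we picked for illustration and are not taken from any AMD or Nvidia hardware.

```python
# Four sample positions inside a unit pixel (an illustrative rotated-grid
# layout; real hardware patterns may differ).
PATTERN_4X = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]
PATTERN_EVEN = [PATTERN_4X[0], PATTERN_4X[3]]  # used on even frames
PATTERN_ODD = [PATTERN_4X[1], PATTERN_4X[2]]   # used on odd frames


def coverage(samples, edge_x):
    """Fraction of samples that fall left of a vertical edge at x = edge_x."""
    return sum(1 for x, _ in samples if x < edge_x) / len(samples)


def temporal_coverage(edge_x):
    """Average two consecutive 2x frames that use alternating patterns."""
    even = coverage(PATTERN_EVEN, edge_x)
    odd = coverage(PATTERN_ODD, edge_x)
    return (even + odd) / 2


edge = 0.3
full_4x = coverage(PATTERN_4X, edge)
two_frames = temporal_coverage(edge)
```

For a static scene the two-frame average matches the 4x result exactly, because the two 2x patterns together cover the same four positions. The match breaks as soon as the edge moves between frames, which is where the artifacts described above come from.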

Nvidia may have solved both of these problems with the use of a temporal synthesis filter that takes into account both pixel samples over time and movement in the scene, and then averages out a result. This allows MFAA to work at frame rates below 60 FPS, and should minimize artifacts during camera movement.

Of course when the user's camera moves very quickly MFAA won't be able to keep up, but the beauty of this scenario is that aliasing artifacts are not something that users can really experience when the camera is moving that fast. Put simply, when MFAA breaks down due to a lot of movement, it probably doesn't matter because you won't be able to notice. If it works like Nvidia says it does, MFAA can provide 4x MSAA quality at the performance cost of 2x MSAA.

While the company told us to expect it soon, MFAA is not enabled for the GeForce GTX 970 and 980 launch. We have to wait for more details on the time frame and implementation.

VR Direct

With DSR and MFAA explained, we'll begin to consider features that are a work in progress and probably won't see availability for at least a few months. The first of these features is VR Direct, Nvidia's blanket of technologies designed to enhance the experience with virtual reality (VR) head-mounted displays (HMDs) like the Oculus Rift.

If you're a VR enthusiast, you may have already considered the tremendous potential benefit that DSR could bring to the resolution-challenged Oculus Rift. With a single 1080p screen split to 960x1080 resolution for each eye, the potential to minimize aliasing at this relatively low resolution is obvious. MFAA is also an anti-aliasing option that might make sense for use with an HMD, as the light computational load of 2x MFAA has a lower latency cost compared to true 4x MSAA.

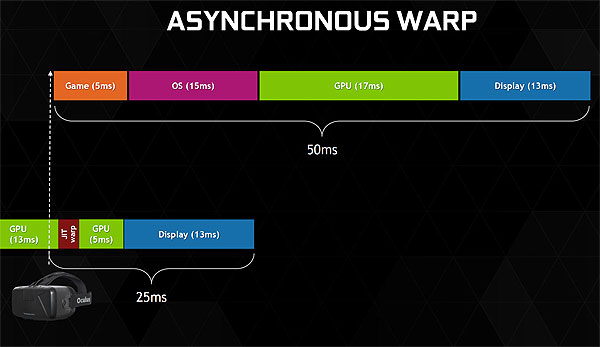

Speaking of latency, Nvidia has already been working to eliminate this problem on the VR graphics pipeline. Latency can be a crippling factor when it comes to HMDs, decoupling the user from their movement and pulling them out of the experience. Asynchronous Warp is a technology that Nvidia designed to combat HMD latency by doing as much of the graphics work as possible ahead of time, re-checking the HMD position closer to render time, and adjusting the frame to better sync with the user's orientation as soon as possible.
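As a rough illustration of the late adjustment step, the Python sketch below estimates how far a finished frame would need to slide sideways to match a head orientation sampled after rendering began. Everything here is a simplifying assumption on our part: the function name, the 90-degree field of view, and the flat pixels-per-degree mapping. Nvidia has not described how Asynchronous Warp is actually implemented.

```python
def reproject_shift_px(rendered_yaw_deg, latest_yaw_deg, fov_deg, width_px):
    """Horizontal offset, in pixels, between the rendered and latest pose.

    Assumes a small rotation and a uniform pixels-per-degree mapping. A real
    warp would reproject through the headset's lens model, and whether the
    image moves left or right depends on the chosen sign convention.
    """
    pixels_per_degree = width_px / fov_deg
    return (latest_yaw_deg - rendered_yaw_deg) * pixels_per_degree


# Frame rendered for a 10.0 degree yaw; the head is at 10.5 degrees by the
# time the frame is ready. 960 pixels is the per-eye width of the Rift.
shift = reproject_shift_px(10.0, 10.5, fov_deg=90.0, width_px=960)
```

Even half a degree of head movement works out to several pixels at this resolution, which gives a sense of why correcting the frame as late as possible matters for an HMD.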

In addition, the company mentioned the possibility of an auto stereo driver. One of the first things I thought when I saw the Oculus Rift was how great it would be if Nvidia coded support for the HMD into the 3D Vision driver, allowing users to experience virtual reality in existing game titles that don't have native support. Clearly someone at Nvidia had the same idea, and I look forward to seeing what the company comes up with.

DirectX 12

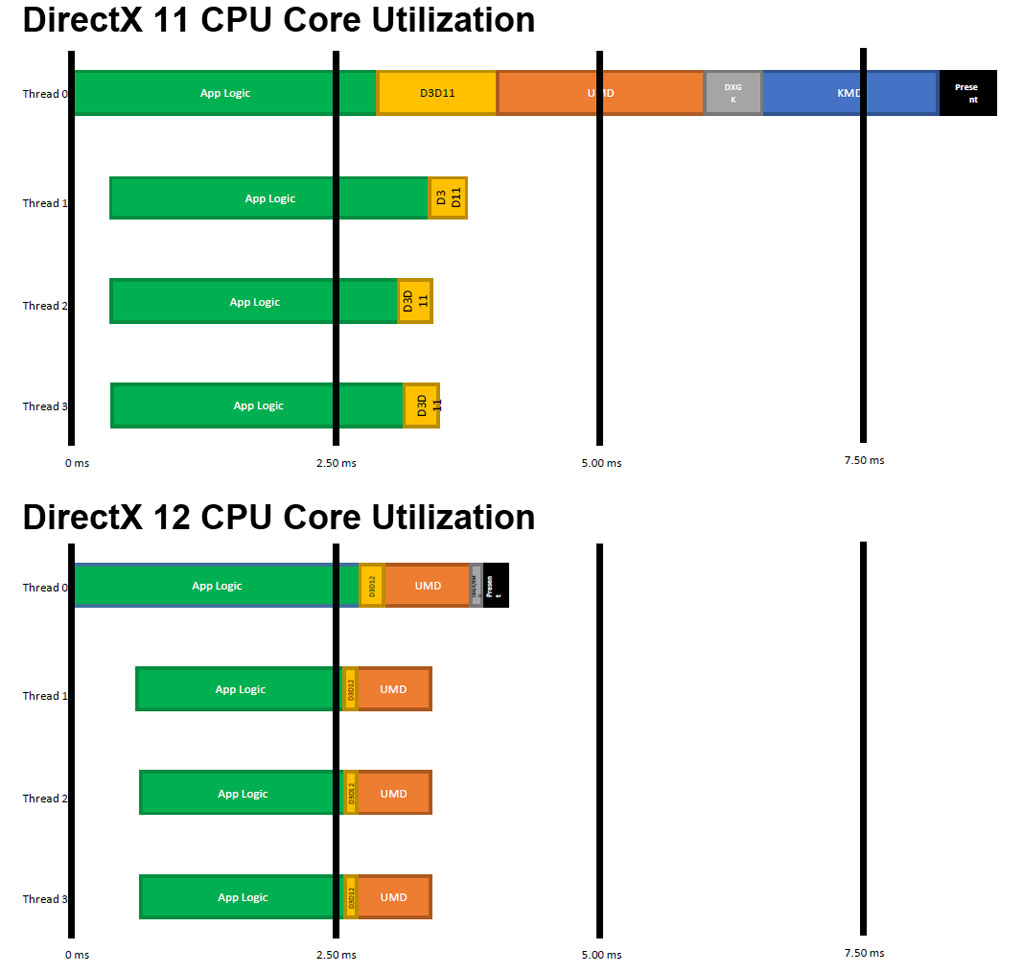

Microsoft has committed to shipping its new graphics API in time for the 2015 holiday season, and developer access to the current build is already available. Full implementation might be more than a year away, but it's somewhat relevant because Nvidia claims that the GeForce GTX 970 and 980 are DirectX 12 compatible.

If you've heard anything about this API update, you probably know that one of the core features of DirectX 12 is reduced CPU overhead by giving developers greater control over resources. This includes features such as pipeline state simplification and command multithreading that allow for greatly improved processing allocation across multiple CPU cores.
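The general shape of command multithreading can be sketched in a few lines of Python: several worker threads each record their own list of commands, and the lists are then submitted in a fixed order. This is a conceptual illustration only. The function names are hypothetical and none of this is the DirectX 12 API.

```python
from concurrent.futures import ThreadPoolExecutor


def record_command_list(chunk):
    """Record draw commands for one chunk of the scene on a worker thread."""
    return [f"draw {name}" for name in chunk]


def build_frame(scene_chunks, workers=4):
    """Record command lists in parallel, then submit them in a fixed order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # map() returns results in input order, so the submission order is
        # deterministic even though recording runs concurrently.
        command_lists = list(pool.map(record_command_list, scene_chunks))
    submitted = []
    for commands in command_lists:
        submitted.extend(commands)
    return submitted


frame = build_frame([["terrain", "sky"], ["player"], ["particles", "ui"]])
```

Python threads won't show a real speedup here; the point is the structure, where recording is spread across cores instead of being funneled through a single thread.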

DirectX 12 isn't just about leveraging CPU resources more efficiently, though. It's also about new and more efficient rendering features and techniques for developers. These include rasterizer ordered views, which allow for correct and consistent ordering when rendering overlapping semi-transparent objects for the first time; tiled resources, which allow the 'megatexture' technique to reduce memory use for textured tiles or sparse volumes; and conservative rasterization, a method for testing the contents of an entire pixel instead of just a single point sample, which can be useful for scenarios such as collision detection.
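The difference between a single point sample and a conservative test is easiest to see with a triangle smaller than a pixel. The Python sketch below is our own illustration, with hypothetical function names, and uses a standard separating-axis test for the conservative case. It is not how any particular GPU implements the feature.

```python
def edge_fn(a, b, p):
    """Signed area term: positive when p lies to the left of edge a->b."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def covers_center(tri, px, py):
    """Standard rasterization: test only the center of pixel (px, py)."""
    p = (px + 0.5, py + 0.5)
    signs = [edge_fn(tri[i], tri[(i + 1) % 3], p) for i in range(3)]
    return all(s >= 0 for s in signs) or all(s <= 0 for s in signs)


def overlaps_pixel(tri, px, py):
    """Conservative test: does the triangle touch any part of the pixel?

    Uses a separating-axis test between the triangle and the pixel square.
    """
    corners = [(px, py), (px + 1, py), (px, py + 1), (px + 1, py + 1)]
    axes = [(1.0, 0.0), (0.0, 1.0)]  # the pixel square's own axes
    for i in range(3):
        a, b = tri[i], tri[(i + 1) % 3]
        axes.append((-(b[1] - a[1]), b[0] - a[0]))  # triangle edge normal
    for ax, ay in axes:
        tri_proj = [v[0] * ax + v[1] * ay for v in tri]
        box_proj = [c[0] * ax + c[1] * ay for c in corners]
        if max(tri_proj) < min(box_proj) or max(box_proj) < min(tri_proj):
            return False  # found an axis that separates the two shapes
    return True


# A triangle tucked into one corner of pixel (0, 0).
small_tri = [(0.1, 0.1), (0.4, 0.1), (0.1, 0.4)]
```

The point sample at the pixel center misses this triangle entirely, while the conservative test reports it. That guarantee that nothing slips between sample points is what makes the technique attractive for jobs like collision detection.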

There's a lot of interesting technical data regarding these features, but considering the compressed GeForce GTX 970 and 980 launch we don't have a lot of time to dig into the specifics. We'd rather concentrate on Nvidia's new graphics cards and revisit DirectX 12 as we get closer to its launch. By the way, many of DirectX 12's features will be released in a DirectX 11.3 update in order to bring them to Windows 7, too.

Voxel Global Illumination

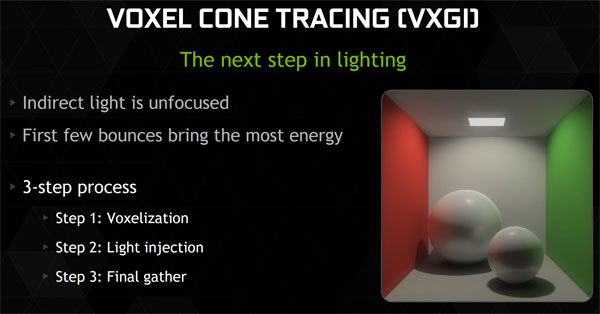

Nvidia's Voxel Global Illumination (VXGI) is perhaps the most ambitious feature included in the GM204 GPU (it wasn't included in the GM107 used in the GeForce GTX 750 series). VXGI represents a wholesale rethinking of the way games are made, a transition to a realistic global illumination lighting model that players have never experienced in real time before, and a tremendous computational load.

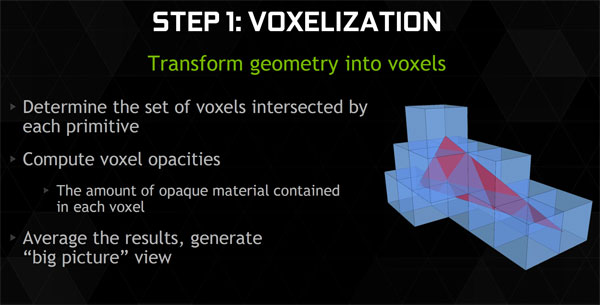

VXGI uses voxel space and voxel cone tracing to deliver an approximation of realistic lighting, one that is much faster to compute when compared to standard 3D ray tracing methods. Let's start by defining some of those terms.

'Voxel' is a combination of the words 'volume' and 'pixel', and represents a volume in a three-dimensional grid in space. Imagine the room you're currently in was completely filled with invisible boxes stacked tightly together all the way to the ceiling. Each of those boxes represents a voxel, a volume in 3D space. The voxel grid is used as a data structure to store light emission and opacity data.
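As a data structure, the idea looks something like the Python sketch below: a world-space position is snapped to a box in the grid, and each box holds an emission color and an opacity value. The class names and the sparse dictionary storage are assumptions made for illustration, and Nvidia's actual VXGI storage layout may look quite different.

```python
from dataclasses import dataclass
import math


@dataclass
class Voxel:
    emission: tuple = (0.0, 0.0, 0.0)  # RGB light leaving this volume
    opacity: float = 0.0               # 0 = empty, 1 = fully blocking


class VoxelGrid:
    """Sparse grid: only voxels that hold data are stored."""

    def __init__(self, voxel_size):
        self.voxel_size = voxel_size
        self.cells = {}

    def index_of(self, position):
        """Map a world-space (x, y, z) point to integer voxel coordinates."""
        return tuple(math.floor(c / self.voxel_size) for c in position)

    def store(self, position, emission, opacity):
        self.cells[self.index_of(position)] = Voxel(emission, opacity)

    def lookup(self, position):
        # Positions with no stored data read back as an empty voxel.
        return self.cells.get(self.index_of(position), Voxel())


grid = VoxelGrid(voxel_size=0.5)
grid.store((1.2, 0.1, 2.9), emission=(1.0, 0.9, 0.8), opacity=1.0)
nearby = grid.lookup((1.4, 0.4, 2.6))  # a different point in the same box
```

Any two points that fall inside the same box share the same stored lighting data, which is the trade-off a voxel grid makes: coarser detail in exchange for a structure that is quick to query.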

Cone tracing is a technique that uses different resolutions of voxels along a light path to store data about how the light affects objects in its path. This is computationally efficient as the size of the voxels grows (the resolution drops) as the light cone travels further from its last bounce.
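Here is a minimal Python sketch of that marching loop, under our own assumptions: the cone's width at each step picks a coarser voxel level, the step size grows with it, and samples are accumulated front to back. The `sample` callback, the parameter names, and the 0.99 cutoff are hypothetical and are not taken from Nvidia's implementation.

```python
import math


def trace_cone(sample, half_angle_deg, voxel_size, max_distance):
    """March one cone through a voxel hierarchy, front to back.

    `sample(distance, level)` is a hypothetical callback returning
    (radiance, opacity) from the voxel level whose cell size is
    voxel_size * 2**level.
    """
    radiance, alpha = 0.0, 0.0
    levels = []
    distance = voxel_size  # start one voxel away to avoid self-sampling
    tan_half = math.tan(math.radians(half_angle_deg))
    while distance < max_distance and alpha < 0.99:
        diameter = max(voxel_size, 2.0 * distance * tan_half)
        level = math.log2(diameter / voxel_size)  # coarser as the cone widens
        levels.append(level)
        s_radiance, s_opacity = sample(distance, level)
        # Light from this sample is dimmed by everything in front of it.
        radiance += (1.0 - alpha) * s_opacity * s_radiance
        alpha += (1.0 - alpha) * s_opacity
        distance += diameter * 0.5  # bigger steps through bigger voxels
    return radiance, alpha, levels


# Hypothetical scene: uniform thin fog that emits a little light.
result, occlusion, used_levels = trace_cone(
    lambda d, lvl: (1.0, 0.1), half_angle_deg=30.0,
    voxel_size=0.25, max_distance=10.0)
```

Because the step size grows along with the voxel size, a cone covers a long distance in only a handful of samples, which is where the efficiency comes from.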

Rendering is computed in three steps: the voxelization of the scene, the injection of light, and finally, the final gather. During the final step the geometry is rendered, light is evaluated, and indirect light is gathered to produce a photorealistic result.

Nvidia demonstrated VXGI with the use of a moon landing demo that realistically recreates a famous picture of the original moon landing. It looks absolutely authentic for a real-time lighting demo. Having said that, it was demonstrated on a system running two GeForce GTX 980 cards in SLI, even though the scene didn't look very complex and featured only a single light source. The potential computational requirements to run VXGI in a full-fledged game give us pause.

In many ways, VXGI is a technology that is more game-developer oriented than consumer oriented at this point. It potentially simplifies the way games are created: with current game engines, lighting effects need to be baked into the textures in a scene. With VXGI, everything would be rendered for the player in real time, removing significant steps in the creation process and allowing developers to make changes to a game level without adding workloads. VXGI is attractive as a development kit for game creators to play with this new lighting model.

I think global illumination and path tracing represent the inevitable future of real-time photorealistic game graphics. From a consumer standpoint, though, VXGI is in the early chicken-or-egg scenario that every disruptive technology suffers. I'm somewhat skeptical that the GeForce GTX 970 and 980 are fast enough to perform the tremendous number of calculations required by voxel global illumination. Having said that, perhaps VXGI can be used to augment current lighting models for more realism without completely replacing them. I really look forward to seeing how this technology will be integrated into Unreal Engine 4, which Nvidia told us will happen by Q4 of this year, and I'm anxious to see the effect it has on visual fidelity and frames-per-second performance.

Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

lancear15 I was waiting for Tom's review to make my final decision, the 980 is definitely going into my current 5960x build! I cant wait.

HKILLER so how long before you do a round up?i mean this time i've seen some pretty crazy looking cards (Zotac's Extreme AMP! edition looks crazy and the Inno3D too)and EVGA has shown off ACX 2.0 which they claim to be the most efficient GPU air cooler in the world...so many to choose from also EVGA FTW has been nicely overclocked i've seen it performing almost on par with 980

realibrad byt he way... Last page 2nd sentence after the graph of Avg game performance.

I was hoping for more performance but the efficiency is quite nice. They just put pressure on the top end and gave us a price reduction, instead of overall performance gains.

balister Very nice, but I still want to see what the power consumption along with what might be possible with the drop to 20nm (since this is still 28nm).

Likely, we're going to see a Maxwell Titan equivalent come in the next year or so as these are a x04 much like Kepler with the 670/80s were and we're still going to be waiting to see what the x10 will be with the Maxwell architecture.

MANOFKRYPTONAK Why didn't you include an overclocking comparison? Why didn't you include the 780, but included the 770? Doesn't make much sense...

vertexx 970 is the real story until the 980ti comes out - what a value proposition with the 970!

Good stuff here - but you guys were a bit slow on this one. Tom's Hardware is the first site I visit every morning. But with the delay of this article, I've been all over the net this morning on other sites that got their stuff out sooner.

daveys93 Will there be a follow-up article about overclocking these cards? Other sites are showing results that both of the new cards are capable of 1500+ MHz on air (aftermarket coolers and even a few with stock coolers), which is a massive overclock. Looks like NVIDIA left the door open for some decent voltage increases, but many results have been in the 1450-1500 MHz range at stock voltage. I am a big fan of the thoroughness of Tom's articles so I am very interested in seeing overclocking results and analysis from this site.