ATI Radeon HD 4850: Smarter by Design?

Geometry Performance, PowerPlay

AMD hasn’t only shored up its architecture’s weaknesses; the engineers have also built on its existing strengths. Geometry Shader performance has been improved, which isn’t surprising: this type of shader is still very recent, and the preceding architecture was the first implementation from either AMD or Nvidia. Both have now had time to refine their first attempts. Like Nvidia, AMD has increased the size of the Geometry Shader output buffer in order to keep more data on the GPU. The number of Geometry Shader threads processed at one time has been multiplied by four. Let’s look at the practical results of these improvements:

Though the RV770 wasn’t very impressive on the Galaxy benchmark (a test where, notably, the GT200 shows only a very limited gain over the G92, and which seems not to be influenced much by the size of the buffer in any case), it really showed its capabilities with Hyperlight, where it placed second, just behind the GTX 280.

Let’s continue our tests with the focus still on geometry, this time with vertex shading:

Again not surprisingly, the AMD architecture still holds sway. But again there’s some disappointment, since you’d expect an architecture with 800 ALUs to score much better. In practice, though, all current GPUs are limited by the throughput of the setup engine, which holds them to one triangle per cycle at best. The Vertex Shader 3.0 test simply refused to run on the RV770.
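To put the setup limit in numbers: at one triangle per cycle, the peak triangle rate equals the core clock, however many ALUs sit behind it. The minimal sketch below assumes a 625 MHz core clock for the HD 4850 (a figure not stated on this page) and a hypothetical scene of 5 million triangles per frame; the function names are illustrative only.

```python
def peak_triangle_rate(core_clock_hz: float, triangles_per_cycle: float = 1.0) -> float:
    """Upper bound on triangles the setup engine can emit per second."""
    return core_clock_hz * triangles_per_cycle


def max_fps_from_setup(core_clock_hz: float, triangles_per_frame: float,
                       triangles_per_cycle: float = 1.0) -> float:
    """Frame-rate ceiling imposed by triangle setup alone (ignores shading, fill rate, etc.)."""
    return peak_triangle_rate(core_clock_hz, triangles_per_cycle) / triangles_per_frame


CORE_CLOCK_HZ = 625e6            # assumption: HD 4850 core clock
TRIANGLES_PER_FRAME = 5_000_000  # hypothetical geometry-heavy scene

rate = peak_triangle_rate(CORE_CLOCK_HZ)
fps_cap = max_fps_from_setup(CORE_CLOCK_HZ, TRIANGLES_PER_FRAME)
print(f"Peak setup rate: {rate / 1e6:.0f} M triangles/s")
print(f"Setup-bound frame-rate ceiling: {fps_cap:.0f} fps")
```

Doubling the ALU count leaves both numbers unchanged, which is why a purely geometric test rewards the shader array less than you might expect.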

Let’s stay with vertex shader performance, this time specifically targeting texture fetching, a useful technique, especially for displacement mapping. Nvidia kept the advantage by a nose on the Earth test, but on the Waves test AMD was far ahead, even leaving Nvidia’s hottest new series behind.

PowerPlay

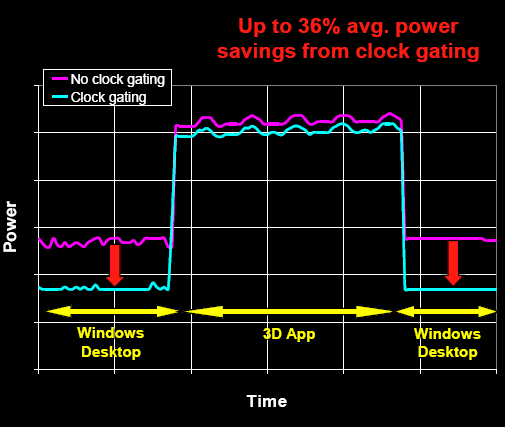

AMD has also improved its GPU’s power management, in particular by introducing clock gating, which disables parts of the chip when they’re not in use. AMD has also fixed a power-management bug that showed up on the RV670 when it was paired with a midrange or low-end CPU. With such CPUs, the RV670 was sometimes underused and so shifted to low-power mode; when the CPU finished processing its data and suddenly sent it in a burst, the GPU had to move back into high-performance mode, which took several cycles and could cause micro-stuttering.


The GPU also has a microcontroller that takes readings:

- of the temperature, from sensors distributed around the GPU;

- of the activity of the different GPU areas.

This microcontroller is what controls clock gating and the frequency of the GPU as a function of these readings, which keeps the overhead on the driver to a minimum.
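The control loop described above can be pictured with a toy model. This is only a sketch under stated assumptions: the class name, block names, clock values, and thresholds are all hypothetical, and the article does not say how AMD fixed the RV670 stutter. The idle-streak delay used here is one plausible way to avoid dropping to low power during a brief lull before a burst of work, not AMD’s documented method.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass
class Governor:
    """Toy on-chip power controller. Every number here is a hypothetical placeholder."""
    low_clock_mhz: int = 160
    high_clock_mhz: int = 625
    busy_threshold: float = 0.30           # load above this counts as "busy"
    temp_limit_c: float = 100.0            # throttle at or above this temperature
    idle_samples_before_downclock: int = 8
    clock_mhz: int = 625
    idle_streak: int = 0

    def step(self, activity: Dict[str, float], temps_c: List[float]) -> Tuple[int, Set[str]]:
        """Take one set of readings; return (clock in MHz, set of blocks to clock-gate)."""
        # Clock gating: blocks reporting no activity at all are switched off.
        gated = {block for block, load in activity.items() if load == 0.0}
        peak_load = max(activity.values(), default=0.0)

        if max(temps_c, default=0.0) >= self.temp_limit_c:
            # Thermal protection takes priority over performance.
            self.clock_mhz = self.low_clock_mhz
        elif peak_load >= self.busy_threshold:
            self.idle_streak = 0
            self.clock_mhz = self.high_clock_mhz
        else:
            # Only downclock after a sustained idle period, so a short gap
            # between bursts does not trigger a slow mode switch.
            self.idle_streak += 1
            if self.idle_streak >= self.idle_samples_before_downclock:
                self.clock_mhz = self.low_clock_mhz
        return self.clock_mhz, gated


gov = Governor(idle_samples_before_downclock=3)
for sample in range(4):
    clock, gated = gov.step({"shader": 0.0, "rop": 0.1}, [50.0])
    print(sample, clock, sorted(gated))
```

Because the decision is made from local readings on the chip, the driver does not have to poll sensors or issue clock changes itself.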


Neog2: Wow $200 in Best Buy for a HD 4850,

$450 in Best Buy for a GTX 260.

And the 4850 is pretty close to the 280.

Ouu the 4870 is going to give Nvidia a run for their money

for the first time in a while.

Prodromaki: Oced Asus and 4850 instead of 4870 + too many games based on engines favoring nVidia...

P.S. +1000 -> 2222

For Mass Effect, the engine limits the maximum framerate to 62 FPS. You can change this in the BIOENGINE.INI file (in the Documents\BioWare\Mass Effect\Config\ folder on Vista) by changing the value:

MaxSmoothedFrameRate=62 in the Engine.GameEngine section
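For anyone who would rather script that change, here is a minimal sketch. It assumes the section appears in the file as `[Engine.GameEngine]` and that the key is written exactly as quoted above; the function name and the new cap of 120 are hypothetical. It works on the file’s text only, so reading the file, writing it back, and keeping a backup are left to you.

```python
def set_max_smoothed_frame_rate(ini_text: str, new_cap: int) -> str:
    """Return ini_text with MaxSmoothedFrameRate changed, but only inside [Engine.GameEngine]."""
    section = None
    out = []
    for line in ini_text.splitlines():
        stripped = line.strip()
        if stripped.startswith("[") and stripped.endswith("]"):
            # Track which section we are currently in.
            section = stripped[1:-1]
        elif (section == "Engine.GameEngine"
              and stripped.split("=", 1)[0].strip() == "MaxSmoothedFrameRate"):
            line = f"MaxSmoothedFrameRate={new_cap}"
        out.append(line)
    return "\n".join(out)


sample = "[Engine.GameEngine]\nMaxSmoothedFrameRate=62\n[Other]\nMaxSmoothedFrameRate=62"
print(set_max_smoothed_frame_rate(sample, 120))
```

Editing line by line rather than through a generic INI parser leaves every other line of the file untouched, including any repeated keys.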

puterpoweruser: I can't believe it took nVidia coming out with a new card again to have tom's make a review finally of the 4850.

"it was unavailable due to the sloppy handling of this launch"

Seriously? AMD can't control if their retail partners screwed the pooch on the release date, because they were so anxious to get people this great product. They made sure the product was readily available well before the launch date.

They should be praised for not having a paper launch, not told that it was a sloppy launch, very poor form saying that.

Hell i went to best buy and bought 2 4850's on sunday, when the cards weren't even supposed to be available yet, the guy told me "they have been in stock for over a month in the back, they aren't supposed to be available yet but i can get two for you." Were the AMD police supposed to come and smack best buy on it's hand and keep me from giving them profits?

Sorry if i'm ranting, just put the blame where it belongs.

Malovane: No offense, Fedy Abi-Chahla and Florian Charpentier, and thanks for the hard work, but I think the article should be revised a bit. First off, this should be a review of graphics cards.. not a burned out overclocked Asus motherboard. If you attribute your 4850 test crashing due to your motherboard.. why throw in results of 0 across the board for the 4850? You just corrupted your data and made the final fps averages meaningless, which is the thing people were generally interested in. Secondly, why in the world are you including tests that don't fit the definition of "playable" on any card in your test lineup (Crysis 2560x1600)? It just throws off averages, as people aren't going to run this game at 7fps! If there's no card in the lineup that gets close to 30fps in a certain test, just move on! Save it for the quad crossfire or triple sli tests or something. You're giving high weights to resolutions that only a fraction of a percentage point of dedicated gamers can utilize (and those wouldn't bother with a single GPU). Lastly, please get those annoying gigantonormous screenies out of the review. It makes the review look like it was done by kindergarteners.

puterpoweruser: I didn't finish reading the whole article yet but was the driver hotfix and the current 8.6 driver applied to the 4850?? It improved performance and stability greatly as i saw, it make the actual clock speed the card is set it run nicely and gives it great overhead to overclock through the CCC

draxssab: Who wants the Radeon 4800 full review? (including the 4870, that do better than the GTX 280 in some games!)

http://www.hardware.fr/articles/725-8/dossier-amd-radeon-hd-4870-4850.html

In French, but the graphs talk by themselves. Ho, and if you want a short translation = impressive and incredibly more efficient than Nvidia (if you compare the size of the GPU, yes it's A LOT more efficient)

spaztic7: These reviews are getting better! Although I have seen many benchmarks and tests of the 4850 before this, I still love seeing how the 48x0 line is doing against the green machine! Anandtech.com has a kill 4870 review!