AMD Radeon HD 7970 GHz Edition Review: Give Me Back That Crown!

Test Setup And Benchmarks

| Test Hardware | |
|---|---|
| Processors | Intel Core i7-3960X (Sandy Bridge-E) 3.3 GHz at 4.2 GHz (42 * 100 MHz), LGA 2011, 15 MB Shared L3, Hyper-Threading enabled, Power-savings enabled |
| Motherboard | Gigabyte X79-UD5 (LGA 2011) X79 Express Chipset, BIOS F10 |
| Memory | G.Skill 16 GB (4 x 4 GB) DDR3-1600, F3-12800CL9Q2-32GBZL @ 9-9-9-24 and 1.5 V |
| Hard Drive | Intel SSDSC2MH250A2 250 GB SATA 6Gb/s |
| Graphics | AMD Radeon HD 7970 GHz Edition 3 GB (@ 1000/1500 MHz) |
| | AMD Radeon HD 7970 3 GB (@ 925/1375 MHz) |
| | AMD Radeon HD 7950 3 GB |
| | Nvidia GeForce GTX 680 2 GB |
| | Nvidia GeForce GTX 670 2 GB |
| | Nvidia GeForce GTX 690 4 GB |
| Power Supply | Cooler Master UCP-1000 W |

| System Software And Drivers | |
|---|---|
| Operating System | Windows 7 Ultimate 64-bit |
| DirectX | DirectX 11 |
| Graphics Driver | AMD Catalyst 12.7 (Beta) For HD 7970 GHz Edition, 7970, and 7950 |
| | Nvidia GeForce Release 304.48 (Beta) For GTX 680 and 670 |
| | Nvidia GeForce Release 301.33 For GTX 690 |

You'll notice we're missing some comparison hardware that was included in our most recent GeForce GTX 670 review. That's by design. AMD's Catalyst 12.7 driver release purportedly includes a number of important improvements, so we felt it necessary to retest the Radeon HD 7970 and 7950. Time constraints meant leaving out the 6900-series boards. Similarly, Nvidia just this week made public its 304.48 driver, which was supposed to improve performance in a number of games as well. Unfortunately, it didn't really affect any of the titles we tested, so we wasted some cycles there. But at least we have the GTX 680 and 670 benchmarked anew using the latest from Nvidia, too.
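If you want to sanity-check the clocks in the hardware table, the arithmetic is simple. The short Python sketch below is purely illustrative: the figures are copied from the table, while the variable and function names are placeholders of our own. It multiplies out the CPU's 42 * 100 MHz setting and works out how much higher the GHz Edition's clocks are than the original 7970's.

```python
# Clock figures copied from the test hardware table (all in MHz).
# Names below are illustrative placeholders, not part of any tool.
BCLK_MHZ = 100
CPU_MULTIPLIER = 42

HD_7970 = {"core": 925, "memory": 1375}
HD_7970_GHZ_EDITION = {"core": 1000, "memory": 1500}


def uplift_percent(old: float, new: float) -> float:
    """Return the percentage increase going from old to new."""
    return (new - old) / old * 100.0


# Effective CPU clock is base clock times multiplier.
cpu_clock_ghz = BCLK_MHZ * CPU_MULTIPLIER / 1000

core_uplift = uplift_percent(HD_7970["core"], HD_7970_GHZ_EDITION["core"])
memory_uplift = uplift_percent(HD_7970["memory"], HD_7970_GHZ_EDITION["memory"])

print(f"CPU clock: {cpu_clock_ghz:.1f} GHz")
print(f"Core clock uplift: {core_uplift:.1f}%")
print(f"Memory clock uplift: {memory_uplift:.1f}%")
```

By this math, the GHz Edition's core runs roughly 8% faster and its memory roughly 9% faster than the original card's.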

| Games | |

|---|---|

| Battlefield 3 | Ultra Quality Settings, No AA / 16x AF, 4x MSAA / 16x AF, v-sync off, 1680x1050 / 1920x1080 / 2560x1600, DirectX 11, Going Hunting, 90-second playback, Fraps |

| Crysis 2 | DirectX 9 / DirectX 11, Ultra System Spec, v-sync off, 1680x1050 / 1920x1080 / 2560x1600, No AA / No AF, Central Park, High-Resolution Textures: On |

| Metro 2033 | High Quality Settings, AAA / 4x AF, 4x MSAA / 16x AF, 1680x1050 / 1920x1080 / 2560x1600, Built-in Benchmark, Depth of Field filter Disabled, Steam version |

| DiRT 3 | Ultra High Settings, No AA / No AF, 8x AA / No AF, 1680x1050 / 1920x1080 / 2560x1600, Steam version, Built-In Benchmark Sequence, DX 11 |

| The Elder Scrolls V: Skyrim | High Quality (8x AA / 8x AF) / Ultra Quality (8x AA, 16x AF) Settings, FXAA enabled, v-sync off, 1680x1050 / 1920x1080 / 2560x1600, 25-second playback, Fraps |

| 3DMark 11 | Version 1.03, Extreme Preset |

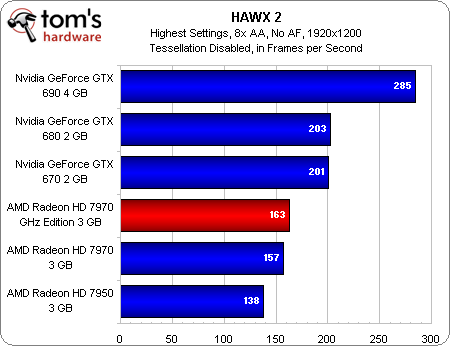

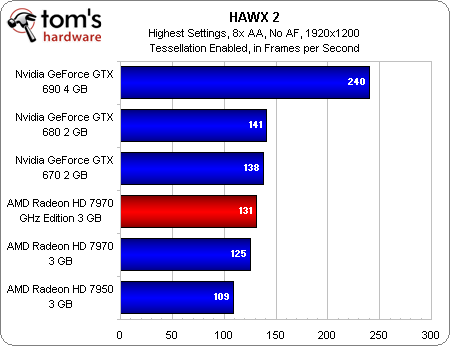

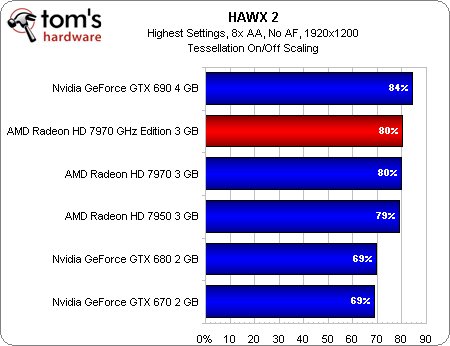

| HAWX 2 | Highest Quality Settings, 8x AA, 1920x1200, Retail Version, Built-in Benchmark, Tessellation on/off |

| World of Warcraft: Cataclysm | Ultra Quality Settings, No AA / 16x AF, 8x AA / 16x AF, From Crushblow to The Krazzworks, 1680x1050 / 1920x1080 / 2560x1600, Fraps, DirectX 11 Rendering, x64 Client |

| SiSoftware Sandra 2012 | Sandra Tech Support (Engineer) 2012.SP4, GP Processing and GP Bandwidth Modules |

| Arsoft MediaConverter 7.5 | 449 MB MPEG-2 1080i Video Sample to Apple iPad 2 Profile (1024x768), 148 MB H.264 1080i Video Sample to Apple iPad 2 Profile (1024x768) |

| LuxMark 2.0 | 64-bit Binary, Version 1.0, Classroom Scene |
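Several of the game tests above are timed Fraps playbacks. As a rough sketch of how a frame-time log can be boiled down to average and minimum frame rates, the Python below assumes a plain list of cumulative frame timestamps in milliseconds. The function name and the sample values are hypothetical and made up for illustration. They are not measured data, and this is not necessarily how Fraps or our charts compute their figures.

```python
from typing import List, Tuple


def fps_summary(timestamps_ms: List[float]) -> Tuple[float, float]:
    """Return (average FPS, minimum FPS) for one recorded run.

    timestamps_ms holds the cumulative time, in milliseconds, at which
    each frame was presented. The minimum here is based on the single
    slowest frame, which is an assumption of this sketch.
    """
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two frames")

    # Time taken by each frame is the gap between neighboring timestamps.
    frame_times = [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]
    if any(t <= 0 for t in frame_times):
        raise ValueError("timestamps must be strictly increasing")

    total_seconds = (timestamps_ms[-1] - timestamps_ms[0]) / 1000.0
    average_fps = len(frame_times) / total_seconds
    minimum_fps = 1000.0 / max(frame_times)
    return average_fps, minimum_fps


# Illustrative timestamps only, not taken from any benchmark run.
sample = [0.0, 10.0, 20.0, 40.0, 50.0]
average_fps, minimum_fps = fps_summary(sample)
print(f"average: {average_fps:.1f} FPS, minimum: {minimum_fps:.1f} FPS")
```

Using the slowest single frame makes the minimum sensitive to one-off hitches. Averaging over one-second windows instead would smooth those out, at the cost of hiding short stutters.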

If you're one of the folks who like to see our tessellation scaling results in HAWX 2, you'll find them below. In essence, though, the Radeon HD 7970 GHz Edition scales exactly like the 7970 that came before it.


Darkerson: My only complaint with the "new" card is the price. Otherwise it looks like a nice card. Better than the original version, at any rate, not that the original was a bad card to begin with.

wasabiman321: Great, I just ordered a GTX 670 FTW... Grrr, I hope performance gets better for Nvidia drivers too :D

mayankleoboy1: Nice show, AMD!

With WinZip that does not use the GPU, VCE that slows down video encoding, and a card that gives lower min FPS... EPIC FAIL.

Or, before releasing your products, try to ensure S/W compatibility.

vmem, replying to jrharbort:

> To me, increasing the memory speed was a pointless move. Nvidia realized that all of the bandwidth provided by GDDR5 and a 384-bit bus is almost never utilized. The drop back to a 256-bit bus on their GTX 680 allowed them to cut cost and power usage without causing a drop in performance. High-end AMD cards see the most improvement from an increased core clock. Memory... Not so much.
>
> Then again, Nvidia pretty much cheated on this generation as well. Cutting out nearly 80% of the GPGPU logic, something Nvidia had been trying to market for YEARS, allowed them to even further drop production costs and power usage. AMD now has the lead in this market, but at the cost of higher power consumption and production cost.
>
> This quick fix by AMD will work for now, but they obviously need to rethink their future designs a bit.

The issue is that them rethinking their future designs scares me... Nvidia has started a HORRIBLE trend in the business that I hope to dear god AMD does not follow suit. True, Nvidia is able to produce more gaming performance for less, but this is pushing anyone who wants GPU compute to get an overpriced professional card. Now, before you say "well, if you're making a living out of it, fork out the cash and go Quadro", let me remind you that a lot of innovators in various fields actually do use GPU compute to make progress (especially in academic sciences) and ultimately bring us better tech AND new directions in tech development... and I for one know a lot of government-funded labs that can't afford to buy a stack of Quadro cards.

DataGrave, quoting vmem:

> Nvidia has started a HORRIBLE trend in the business that I hope to dear god AMD does not follow suit.

100% acknowledged.

And for the gamers: take a look at the new UT4 engine! Without excellent GPGPU performance this will be a disaster for each graphics card. See you, Nvidia.

cangelini, quoting mayankleoboy1:

> Thanks for putting my name in the review, now if only you could bold it ;-)

Excellent tip. Told you I'd look into it!